High I/O throughput degrades query performance on ApsaraDB RDS for SQL Server instances. I/O performance is governed by two factors: IOPS and I/O throughput. In most cases, IOPS is unlikely to become a performance bottleneck — I/O throughput is more likely to cause issues after it reaches its upper limit. This topic explains how to identify the root cause and apply the appropriate fix.

I/O throughput limits

The maximum I/O throughput depends on the storage type attached to your instance.

Premium Local SSDs

RDS instances using Premium Local SSDs share the physical host's storage with other instances on that host. Each instance has a maximum IOPS limit, but I/O throughput is not capped per instance — throughput can exceed 1 GB/s. Because instances share the underlying storage, they may compete for I/O resources. For exclusive I/O resource allocation, use the dedicated host instance family. For more information, see Primary ApsaraDB RDS instance types.

Cloud disks

Cloud disks are dedicated to each instance, so instances do not compete for I/O resources. The maximum I/O throughput for instances using cloud disks depends on two factors:

-

Computing resources: Defined by the ECS g6 instance family specifications.

-

Storage type and capacity: RDS supports standard SSDs and ESSDs. Throughput varies by storage type and capacity.

Identify the I/O load type

The I/O load on an RDS instance has two major components:

-

Data file reads: Data page reads during query execution and backups.

-

Transaction log reads and writes: Log reads are mostly from backup operations; log writes come from DML (data manipulation language) and DDL (data definition language) operations.

To pinpoint which load type is causing high I/O throughput, monitor these metrics in the Performance Insight tab:

| Metric | I/O type | Description |

|---|---|---|

| Page_Reads | Read | Data pages read from data files per second that could not be served from the cache |

| Page_Write | Write | Data pages written to data files per second |

| Log_Bytes_Flushed/sec | Write | Bytes written to transaction log files per second |

| Backup_Restore_Throughput/sec | Read | Bytes read from or written to data files and transaction log files per second during backup and restore operations |

Each data page is 8 KB.

Use the table below to identify your root cause and jump to the relevant section:

| Elevated metric | Root cause | Go to |

|---|---|---|

| Page_Reads | Insufficient cache (data pages read from disk) | High I/O from data page reads |

| Page_Write, Log_Bytes_Flushed/sec | Heavy DML or DDL workload | High I/O from write operations |

| Backup_Restore_Throughput/sec | Backup activity | High I/O from backups |

View I/O throughput metrics

This procedure does not apply to instances running SQL Server 2008 R2 with cloud disks.

-

Go to the Instances page. In the top navigation bar, select the region where your instance resides. Find the instance and click its ID.

-

In the left-side navigation pane, choose Autonomy Services > Performance Optimization. On the page that appears, click the Performance Insight tab.

-

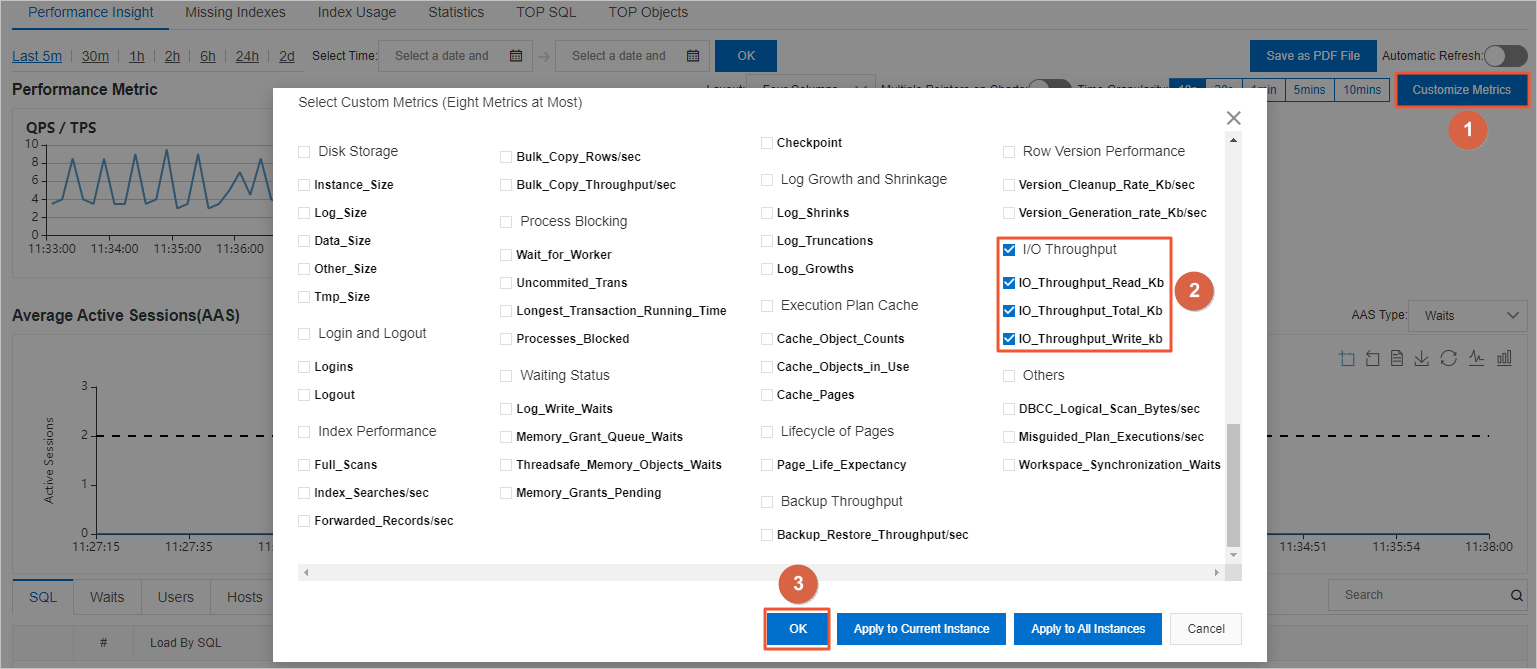

In the upper-right corner, click Custom metric. In the dialog box, select I/O Throughput and click OK. Selecting I/O Throughput adds the following metrics to the chart:

-

IO_Throughput_Read_Kb: I/O throughput per second for disk read operations.

-

IO_Throughput_Write_kb: I/O throughput per second for disk write operations.

-

IO_Throughput_Total_Kb: Combined read and write I/O throughput per second.

-

-

To drill into specific load types, click Custom metric again and add the metrics from the table in Identify the I/O load type.

Case analysis

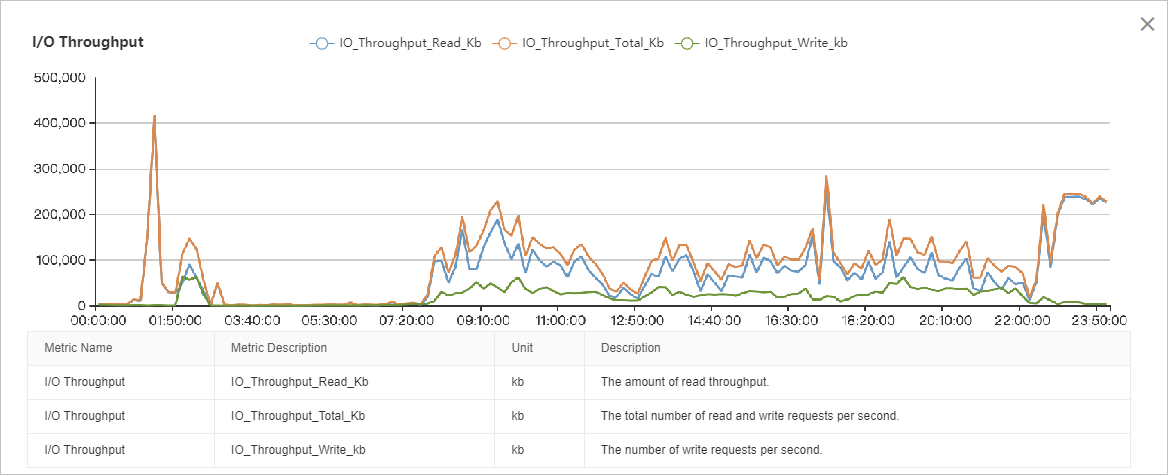

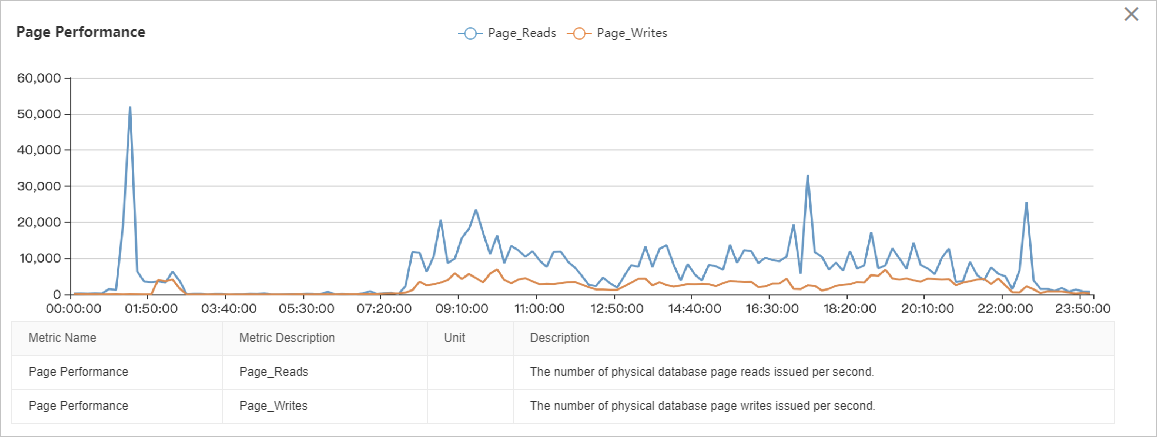

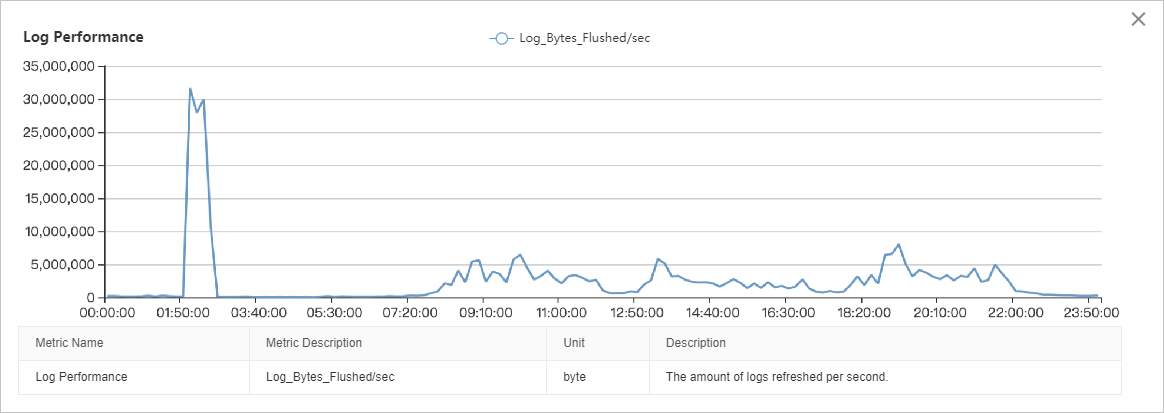

The following example shows how to interpret I/O throughput data across different time windows.

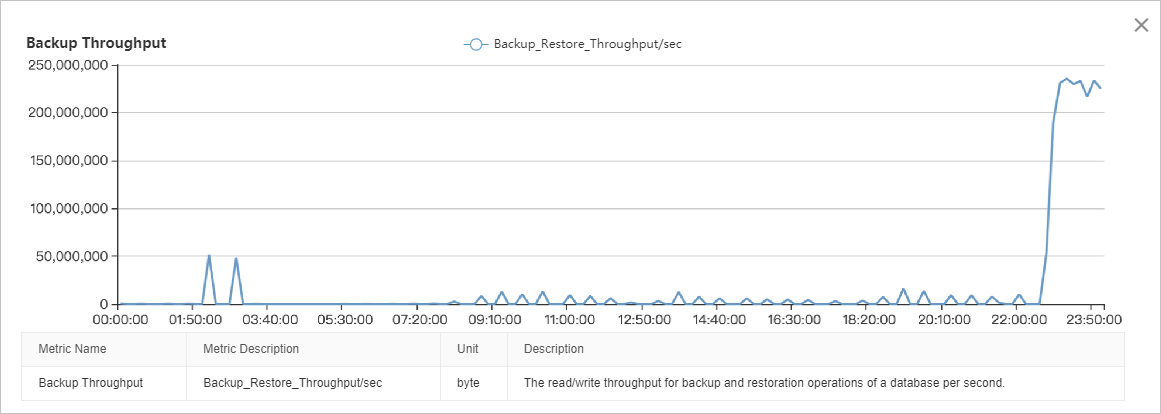

The overall read load exceeds the write load. Throughput is stable from 08:00 to 22:00, with peaks at 01:00–03:00 and 22:00–24:00.

Peak at ~01:00 — Data page reads spike to approximately 50,000 pages/second (~400 MB/s). This indicates cache pressure causing disk reads.

Peak at 02:00–03:00 — Four sources combine for a cumulative peak of ~150 MB/s:

| Source | Throughput |

|---|---|

| Data page reads | ~40 MB/s |

| Data page writes | ~40 MB/s |

| Transaction log writes | ~30 MB/s |

| Log backups | ~50 MB/s |

Steady state 08:00–22:00 — Three sources in descending order by proportion:

| Source | Throughput |

|---|---|

| Data page reads | 80–100 MB/s |

| Data page writes | ~30 MB/s |

| Transaction log writes | ~5 MB/s |

Peak at 22:00–24:00 — Backup activity only, sustained at over 220 MB/s.

Troubleshoot high I/O from data page reads

Excessive data page reads are the most common cause of high I/O on ApsaraDB RDS for SQL Server instances. When the buffer pool is too small to hold frequently accessed data, SQL Server must read data pages from disk on every cache miss.

Diagnose cache pressure using PLE

Page Life Expectancy (PLE) measures the average time, in seconds, that a data page stays in the buffer pool before being evicted. A declining PLE indicates growing cache pressure.

The minimum healthy PLE threshold is 300 seconds. For instances with more memory, calculate the recommended threshold as follows:

Recommended PLE threshold = (Buffer pool memory in GB / 4) × 300Example: An instance with 16 GB of RAM allocates up to 12 GB to the buffer pool.

Recommended PLE threshold = (12 / 4) × 300 = 900 secondsIf PLE consistently falls below your threshold, the buffer pool is undersized for the workload. For background on this metric, see Page Life Expectancy (PLE) in SQL Server.

Resolve high I/O from data page reads

The primary fix is to upgrade the memory specifications of the instance. Do not upgrade the disk performance level (PL) to address read-heavy I/O — a larger buffer pool reduces disk reads at the source.

To further reduce the total number of data pages that must be read:

-

Archive or delete historical data.

-

Enable data compression on large tables.

-

Delete low-value indexes.

-

Defragment indexes to reduce index fragmentation.

Troubleshoot high I/O from write operations

High write I/O is caused by DML or DDL operations. Use Autonomy Services to check which operations were running during the high-I/O period.

DML operations that generate write I/O include INSERT, DELETE, UPDATE, and MERGE. DDL operations include CREATE INDEX and ALTER INDEX.

High I/O from DML operations

First, determine whether the DML activity is a routine workload or a temporary task.

Non-routine workloads (for example, bulk data archiving or one-time data processing): Schedule these operations during off-peak hours to avoid competing with production traffic.

Routine workloads: Upgrade the disk performance level (PL). For example, upgrade an ESSD from PL1 to PL2. Also, review the index structure and remove nonclustered indexes that are no longer needed, as unnecessary indexes increase write amplification.

High I/O from DDL operations

DDL operations such as index creation and rebuilds are typically maintenance tasks. Schedule them during off-peak hours.

When running CREATE INDEX or ALTER INDEX, specify the maximum degree of parallelism (MAXDOP) in the SQL statement. Setting MAXDOP limits the number of parallel threads used, which reduces the peak I/O throughput generated by the operation — at the cost of longer execution time.

Troubleshoot high I/O from backups

ApsaraDB RDS for SQL Server runs backups only on the primary instance, which adds to the primary instance's I/O load. Full backups have the largest impact; log backups have the smallest.

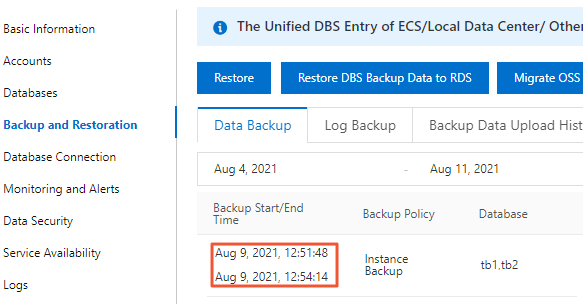

To view backup duration for your instance, go to the Backup and Restoration page in the ApsaraDB RDS console.

Schedule backups to avoid peak hours

Set the backup start time and backup cycle to minimize overlap with production peak hours. For detailed configuration steps, see Back up an ApsaraDB RDS for SQL Server instance.

Example 1: A full backup takes approximately 6 hours. Business peak hours run from 09:00 to 21:00, and a background processing job runs from 22:00 to 01:00. Set the backup start time to 01:00–02:00. Each backup finishes before 08:00. Set the backup cycle to every day of the week to keep the restore baseline recent, which speeds up point-in-time restoration.

Example 2: A full backup takes approximately 15 hours and disrupts weekday workloads on every run. Set the backup cycle to Saturday and Sunday only. Note that with less frequent full backups, point-in-time restoration may take longer because more log backups must be replayed.

When scheduling is not enough

If adjusting the backup schedule does not resolve the conflict with your workload:

-

Upgrade the disk performance level (PL): Higher disk PL provides more I/O throughput, giving both backups and the workload more headroom.

-

Split your data across multiple instances: Smaller datasets per instance reduce both backup duration and the I/O generated per backup run.