This solution uses Alibaba Cloud Managed Service for Prometheus to monitor metrics from applications that are instrumented with the OpenTelemetry SDK and deployed in Container Service for Kubernetes (ACK) clusters. Native OTel metrics, custom business instrumentation metrics, and metrics converted from trace spans are collected in a unified manner. Converting trace data into metrics before ingestion can also reduce the volume of data that is ingested.

Overview of OpenTelemetry

OpenTelemetry (OTel) is an open-source observability framework that provides unified APIs, SDKs, and tools for generating, collecting, and exporting telemetry data (including metrics, traces, and logs) from distributed systems. Its core goal is to solve the fragmentation problem of observability data between different tools and systems.

OpenTelemetry metrics are structured data that quantify system behavior and are used to monitor system performance and health. The metrics that are available depend on the SDK or agent instrumentation used for each language. For Java applications, metrics typically include standard JVM metrics, custom metrics, and metrics converted from trace data.

OpenTelemetry's metric design is compatible with Prometheus. Through OpenTelemetry Collector, metrics can be converted to Prometheus format, enabling seamless integration with Alibaba Cloud Managed Service for Prometheus.

Role of OpenTelemetry Collector

OpenTelemetry Collector is an extensible data processing pipeline responsible for collecting telemetry data from data sources (such as applications and services) and converting the data into formats required by target systems (such as Prometheus).

Data collection

Applications generate metric data through the OpenTelemetry SDK and send it to the Collector via OpenTelemetry Protocol (OTLP) or other protocols (such as native Prometheus protocol).

Data transformation

In the community Collector extensions, you can use the following two exporters to convert OTel metrics to Prometheus format.

Prometheus exporter converts OpenTelemetry metrics to Prometheus format and provides an endpoint for the Prometheus agent to scrape data.

Metric name mapping: converts OpenTelemetry metric names to Prometheus-compatible format.

Label processing: preserves or renames labels to comply with Prometheus naming rules.

Data type conversion:

gauge → Prometheus gauge

sum → Prometheus counter or gauge (based on the Monotonic property)

histogram → Prometheus histogram (through bucket and sum sub-metrics)
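The name mapping and type conversion rules can be sketched in a few lines of Python. This is a simplified illustration, not the exporter's actual implementation: the function names are hypothetical, and the real exporter also handles units, suffixes, and other cases that are omitted here.

```python
import re

# Characters outside this set are not valid in a Prometheus metric name.
_INVALID_CHARS = re.compile(r"[^a-zA-Z0-9_:]")


def to_prometheus_name(otel_name: str, namespace: str = "") -> str:
    """Map an OTel metric name such as 'http.server.duration' to a Prometheus-style name."""
    name = _INVALID_CHARS.sub("_", otel_name)
    if namespace:
        name = f"{namespace}_{name}"
    # Prometheus metric names must not begin with a digit.
    if name and name[0].isdigit():
        name = "_" + name
    return name


def to_prometheus_type(otel_type: str, monotonic: bool = False) -> str:
    """Map an OTel metric type to the Prometheus type listed above."""
    if otel_type == "gauge":
        return "gauge"
    if otel_type == "sum":
        # Monotonic sums only ever increase, so they behave like counters.
        return "counter" if monotonic else "gauge"
    if otel_type == "histogram":
        return "histogram"
    raise ValueError(f"unsupported metric type: {otel_type}")


print(to_prometheus_name("http.server.duration", namespace="acs"))
print(to_prometheus_type("sum", monotonic=True))
```

With the namespace set to acs, as in the sample configurations in this topic, the metric name http.server.duration becomes acs_http_server_duration.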

The following sample configuration exposes a metric scraping endpoint on port 1234.

    exporters:
      prometheus:
        endpoint: "0.0.0.0:1234"
        namespace: "acs"
        const_labels:
          label1: value1
        send_timestamps: true
        metric_expiration: 5m
        enable_open_metrics: true
        add_metric_suffixes: false
        resource_to_telemetry_conversion:
          enabled: true
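To illustrate what const_labels and resource_to_telemetry_conversion mean for the labels of each exported series, the following Python sketch merges the three label sources. The function name is hypothetical, and the precedence applied when two sources define the same label is an assumption made for this example, not documented exporter behavior.

```python
import re


def build_labels(resource_attrs, point_attrs, const_labels, convert_resource=True):
    """Combine label sources into one Prometheus label set (precedence is illustrative)."""
    labels = {}
    if convert_resource:
        # resource_to_telemetry_conversion: resource attributes become metric labels.
        labels.update(resource_attrs)
    labels.update(point_attrs)
    # const_labels are attached to every exported metric.
    labels.update(const_labels)
    # Label names may contain only letters, digits, and underscores.
    return {re.sub(r"[^a-zA-Z0-9_]", "_", key): str(value) for key, value in labels.items()}


labels = build_labels(
    resource_attrs={"service.name": "checkout"},
    point_attrs={"http.route": "/pay"},
    const_labels={"label1": "value1"},
)
print(labels)
```

If convert_resource is set to False, the service_name label is absent from the result, which corresponds to disabling resource_to_telemetry_conversion.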

Prometheus remote write exporter converts OpenTelemetry metrics to Prometheus format and writes directly to the target Prometheus service through the RemoteWrite protocol.

Like the Prometheus exporter, this exporter also performs data format conversion. Sample configuration:

    exporters:
      prometheusremotewrite:
        endpoint: http://<Prometheus Endpoint>/api/v1/write
        namespace: "acs"
        resource_to_telemetry_conversion:
          enabled: true
        timeout: 10s
        headers:
          Prometheus-Remote-Write-Version: "0.1.0"
        external_labels:
          data-mode: metrics

Best practices for applications deployed in ACK clusters

1. Make preparations in Managed Service for Prometheus

Cluster with Prometheus monitoring enabled

If Prometheus monitoring is enabled for your ACK cluster, the Prometheus instance already exists. You can log on to the CloudMonitor console, navigate to the page, and find the Prometheus instance with the same name as your ACK cluster.

Cluster without Prometheus monitoring enabled

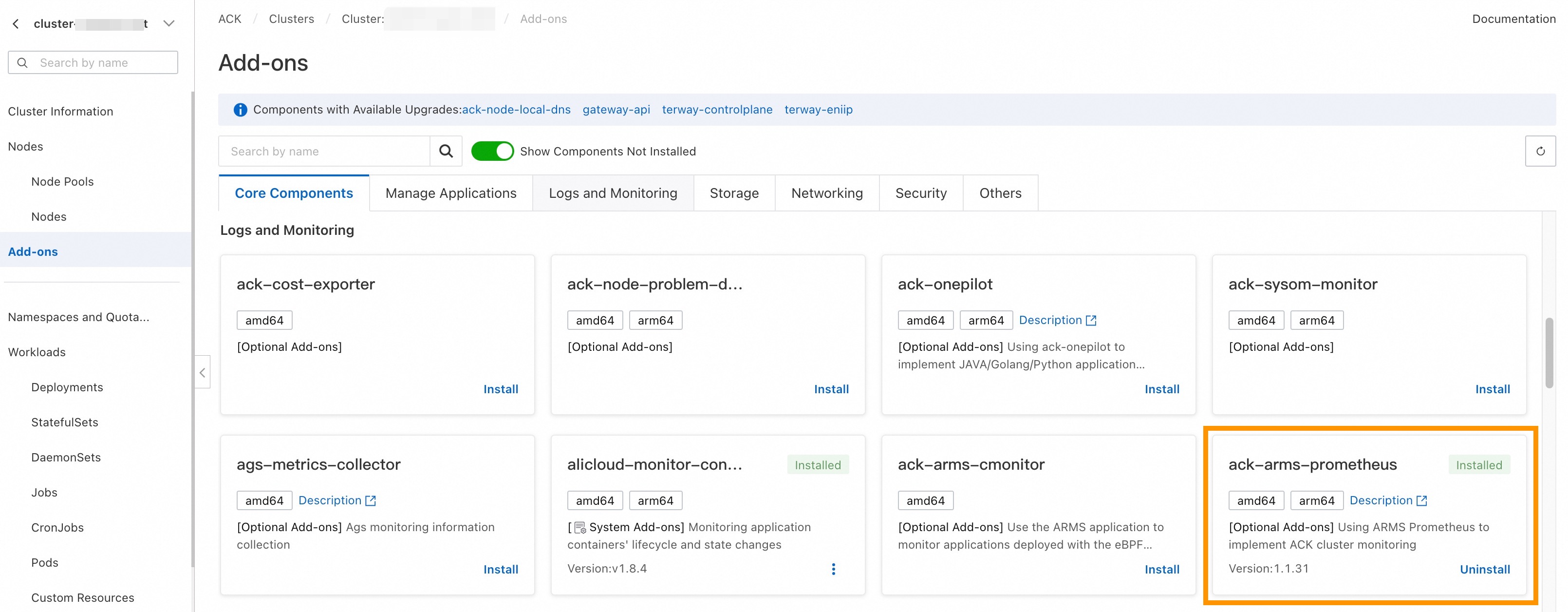

Log on to the ACK console, click the cluster name, navigate to the Add-ons page, and then install the ack-arms-prometheus component, which will automatically enable Prometheus monitoring for the cluster.

2. Deploy the Collector in SideCar mode

Aggregated metrics are calculated correctly only when all metrics and traces from the same pod instance reach the same Collector. In Gateway deployment mode, this requires load balancing that consistently routes the data of each pod to one Collector, which is relatively complex to configure. Therefore, we recommend that you deploy the Collector in SideCar mode.
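The following Python sketch illustrates the problem under simplified assumptions: spans from a single pod are distributed round-robin across Collector instances, and each instance counts calls per route independently. The data and the function name are hypothetical.

```python
from collections import Counter
from itertools import cycle


def count_calls(spans, collectors):
    """Route spans round-robin to `collectors` instances and count calls per route in each."""
    counts = [Counter() for _ in range(collectors)]
    targets = cycle(range(collectors))
    for span in spans:
        counts[next(targets)][span["route"]] += 1
    return counts


# Ten spans emitted by the same pod for the same route.
spans = [{"pod": "app-0", "route": "/pay"} for _ in range(10)]

sidecar = count_calls(spans, collectors=1)  # one Collector sees every span
gateway = count_calls(spans, collectors=2)  # spans are split across two Collectors
print(sidecar)
print(gateway)
```

With one Collector per pod, the call count is complete. With two Collectors behind a load balancer that is not pod-aware, each instance reports only part of the total under the same label set.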

Prometheus exporter mode

The advantage of this approach is that you do not need to handle authentication for the Prometheus write path, and you can adjust the metric collection interval by modifying the collection configuration.

Deployment architecture diagram

Deployment configuration reference

    # Kubernetes Deployment example
    apiVersion: apps/v1
    kind: Deployment
    spec:
      template:
        metadata:
          labels:
            # Add specific labels to the pod, typically named with application information for easier metric collection configuration
            observability: opentelemetry-collector
        spec:
          volumes:
            - name: otel-config-volume
              configMap:
                # This configuration is created using the Collector configuration reference below
                name: otel-config
          containers:
            - name: app
              image: your-app:latest
              env:
                - name: OTEL_EXPORTER_OTLP_ENDPOINT
                  value: http://localhost:4317
            - name: otel-collector
              # You can directly use the provided Collector image (which includes Prometheus-related extension plugins)
              # Replace regionId in the image name with the actual region ID
              image: registry-<regionId>.ack.aliyuncs.com/acs/otel-collector:v0.128.0-7436f91
              args: ["--config=/etc/otel/config/otel-config.yaml"]
              ports:
                - containerPort: 1234 # Prometheus endpoint
                  name: metrics
              volumeMounts:
                - name: otel-config-volume
                  mountPath: /etc/otel/config

Collector configuration reference

Configure the Collector's resource limits (CPU and memory) according to application request volume to ensure it can process all data properly.
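As a rough guide for sizing, the memory_limiter values used in the configuration below can be derived from the memory limit of the Collector container. The following Python sketch assumes a 2,048 MiB container limit and applies both percentages to that total, which reproduces the values 1536 and 512. The function name and the ratios are assumptions read from the comments in the configuration, not fixed rules.

```python
def memory_limiter_settings(container_limit_mib: int) -> dict:
    """Derive memory_limiter values from the container memory limit (assumed ratios)."""
    limit_mib = container_limit_mib * 75 // 100        # hard limit: 75% of container memory
    spike_limit_mib = container_limit_mib * 25 // 100  # headroom for spikes between checks
    return {
        "limit_mib": limit_mib,
        "spike_limit_mib": spike_limit_mib,
        # The processor starts refusing data at the soft limit: limit_mib - spike_limit_mib.
        "soft_limit_mib": limit_mib - spike_limit_mib,
    }


print(memory_limiter_settings(2048))
```

If you give the Collector container a different memory limit, recalculate limit_mib and spike_limit_mib in the same proportion.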

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: otel-config
      namespace: <app-namespace>
    data:
      otel-config.yaml: |
        extensions:
          zpages:
            endpoint: localhost:55679
        receivers:
          otlp:
            protocols:
              grpc:
                endpoint: 0.0.0.0:4317
              http:
                endpoint: 0.0.0.0:4318
        processors:
          batch:
          memory_limiter:
            # 75% of maximum memory up to 2G
            limit_mib: 1536
            # 25% of limit up to 2G
            spike_limit_mib: 512
            check_interval: 5s
          resource:
            attributes:
              - key: process.runtime.description
                action: delete
              - key: process.command_args
                action: delete
              - key: telemetry.distro.version
                action: delete
              - key: telemetry.sdk.name
                action: delete
              - key: telemetry.sdk.version
                action: delete
              - key: service.instance.id
                action: delete
              - key: process.runtime.name
                action: delete
              - key: process.runtime.description
                action: delete
              - key: process.pid
                action: delete
              - key: process.executable.path
                action: delete
              - key: process.command.args
                action: delete
              - key: os.description
                action: delete
              - key: instance
                action: delete
              - key: container.id
                action: delete
        connectors:
          spanmetrics:
            histogram:
              explicit:
                buckets: [0.001, 0.005, 0.01, 0.05, 0.1, 0.5, 1, 5, 10]
            dimensions:
              - name: http.method
                default: "GET"
              - name: http.response.status_code
              - name: http.route
              # Custom attribute
              - name: user.id
            metrics_flush_interval: 15s
            exclude_dimensions:
            metrics_expiration: 3m
            events:
              enabled: true
              dimensions:
                - name: default
                  default: "GET"
        exporters:
          debug:
            verbosity: detailed
          prometheus:
            endpoint: "0.0.0.0:1234"
            namespace: "acs"
            const_labels:
              label1: value1
            send_timestamps: true
            metric_expiration: 5m
            enable_open_metrics: true
            add_metric_suffixes: false
            resource_to_telemetry_conversion:
              enabled: true
        service:
          pipelines:
            logs:
              receivers: [otlp]
              exporters: [debug]
            traces:
              receivers: [otlp]
              processors: [resource]
              exporters: [spanmetrics]
            metrics:
              receivers: [otlp]
              processors: [memory_limiter, batch]
              exporters: [prometheus]
            metrics/2:
              receivers: [spanmetrics]
              exporters: [prometheus]
          extensions: [zpages]

This configuration processes incoming metrics and traces. It uses the resource processor to delete environment attributes that are typically not of interest, which prevents excessive metric data volume, and uses the spanmetrics connector to convert key span statistics into metrics.
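Conceptually, the span-to-metric conversion groups spans by the configured dimensions, and then counts calls and sorts durations into the configured buckets. The following Python sketch shows the idea with two of the dimensions. It is a simplification, not the connector's implementation: the function name and span structure are hypothetical, and the assumption that the bucket boundaries are in seconds is made only for this example.

```python
from bisect import bisect_left
from collections import defaultdict

# Upper bounds copied from the configuration; assumed here to be seconds.
BUCKETS = [0.001, 0.005, 0.01, 0.05, 0.1, 0.5, 1, 5, 10]


def spans_to_metrics(spans):
    """Aggregate spans into a call count and a duration histogram per dimension set."""
    calls = defaultdict(int)
    # One slot per bucket plus a final overflow (+Inf) slot; counts are not cumulative.
    histograms = defaultdict(lambda: [0] * (len(BUCKETS) + 1))
    for span in spans:
        attrs = span["attributes"]
        key = (
            attrs.get("http.method", "GET"),  # default value mirrors the configuration
            attrs.get("http.route"),
        )
        calls[key] += 1
        # bisect_left returns the first bucket whose upper bound is >= the duration.
        histograms[key][bisect_left(BUCKETS, span["duration_s"])] += 1
    return dict(calls), dict(histograms)


spans = [
    {"attributes": {"http.route": "/pay"}, "duration_s": 0.02},
    {"attributes": {"http.method": "GET", "http.route": "/pay"}, "duration_s": 0.3},
    {"attributes": {"http.method": "POST", "http.route": "/pay"}, "duration_s": 12},
]
calls, histograms = spans_to_metrics(spans)
print(calls)
print(histograms)
```

Each additional dimension multiplies the number of possible series, so a high-cardinality attribute such as user.id can increase metric data volume considerably.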

Configure a collection task

    apiVersion: monitoring.coreos.com/v1
    kind: PodMonitor
    metadata:
      name: opentelemetry-collector-podmonitor
      namespace: default
      annotations:
        arms.prometheus.io/discovery: "true"
    spec:
      selector:
        matchLabels:
          observability: opentelemetry-collector
      podMetricsEndpoints:
        - port: metrics
          interval: 15s
          scheme: http
          path: /metrics

Prometheus RemoteWrite exporter mode

This approach is suitable for scenarios in which data volumes are large and scrape-based collection is unstable. The Collector writes data directly to the Prometheus instance.

The configuration is relatively complex, and you must configure the data write path yourself.

Deployment architecture diagram

Prepare data write path

Because this method involves the Collector writing data directly to the Prometheus instance, you first need to obtain the Prometheus endpoint and authentication information.

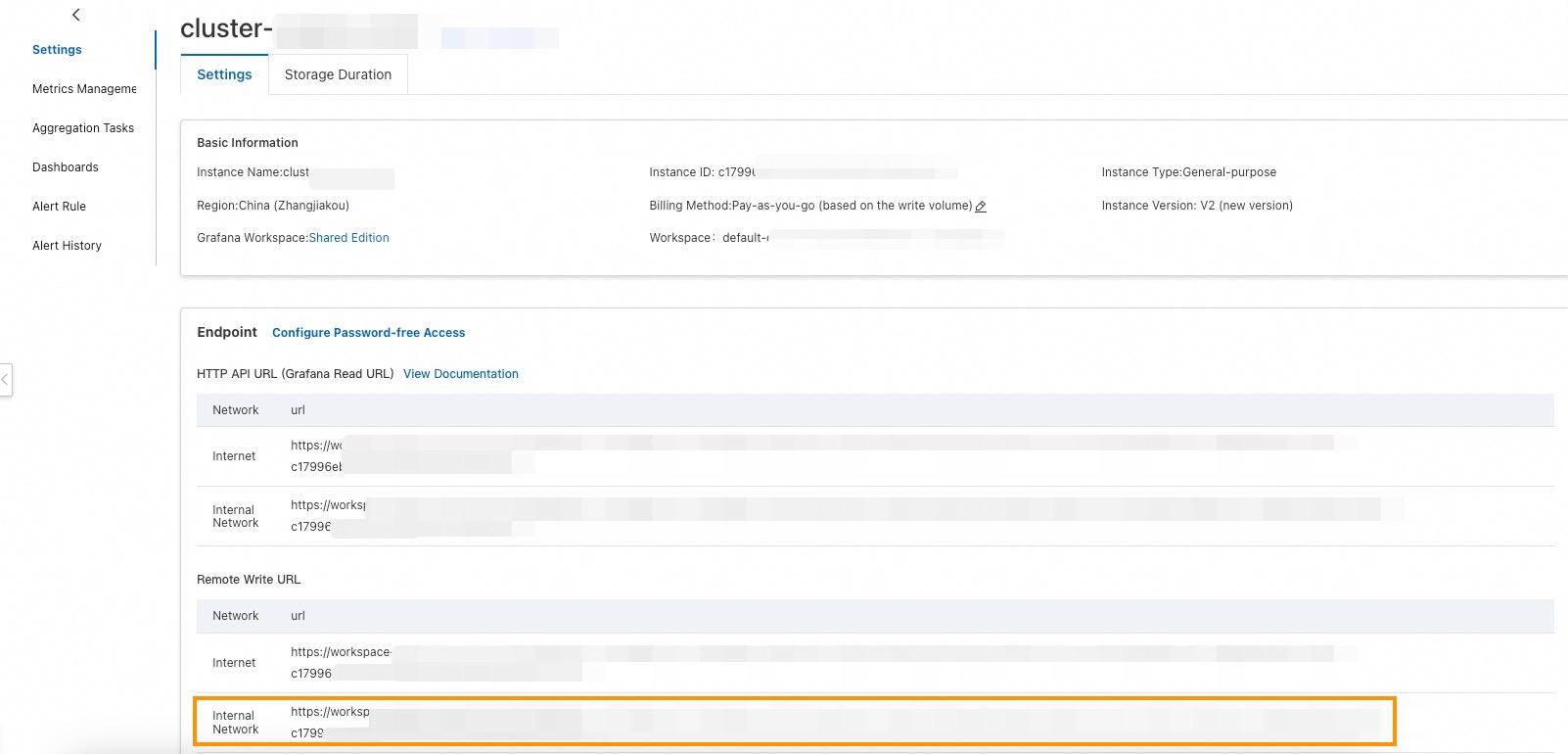

Obtain the endpoint

Log on to the CloudMonitor console, navigate to the page, and find the Prometheus instance that corresponds to your cluster. In most cases, the Prometheus instance ID matches the ACK cluster ID, and the instance name matches the container cluster name. Click the instance name. On the Settings page, find the Remote Write URL and record the internal network address for later use.

Obtain the authentication information

You have the following two options:

V2 Prometheus instances support configuring password-free policies, allowing password-free writing from within the cluster's current virtual private cloud (VPC).

Assign a Resource Access Management (RAM) user for metric data writing, assign the AliyunPrometheusMetricWriteAccess system policy, and then obtain the AccessKey pair of the RAM user to use as the username and password for writing.

Deployment configuration reference

The Collector deployment configuration is the same as that of the Prometheus exporter mode.

Collector configuration reference

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: otel-config
      namespace: <app-namespace>
    data:
      otel-config.yaml: |
        extensions:
          zpages:
            endpoint: localhost:55679
        receivers:
          otlp:
            protocols:
              grpc:
                endpoint: 0.0.0.0:4317
              http:
                endpoint: 0.0.0.0:4318
        processors:
          batch:
          memory_limiter:
            # 75% of maximum memory up to 2G
            limit_mib: 1536
            # 25% of limit up to 2G
            spike_limit_mib: 512
            check_interval: 5s
          resource:
            attributes:
              - key: process.runtime.description
                action: delete
              - key: process.command_args
                action: delete
              - key: telemetry.distro.version
                action: delete
              - key: telemetry.sdk.name
                action: delete
              - key: telemetry.sdk.version
                action: delete
              - key: service.instance.id
                action: delete
              - key: process.runtime.name
                action: delete
              - key: process.runtime.description
                action: delete
              - key: process.pid
                action: delete
              - key: process.executable.path
                action: delete
              - key: process.command.args
                action: delete
              - key: os.description
                action: delete
              - key: instance
                action: delete
              - key: container.id
                action: delete
        connectors:
          spanmetrics:
            histogram:
              explicit:
                buckets: [0.001, 0.005, 0.01, 0.05, 0.1, 0.5, 1, 5, 10]
            dimensions:
              - name: http.method
                default: "GET"
              - name: http.response.status_code
              - name: http.route
              # Custom attribute
              - name: user.id
            metrics_flush_interval: 15s
            exclude_dimensions:
            metrics_expiration: 3m
            events:
              enabled: true
              dimensions:
                - name: default
                  default: "GET"
        exporters:
          debug:
            verbosity: detailed
          prometheusremotewrite:
            # Replace with the Prometheus RemoteWrite internal network address
            endpoint: http://<Endpoint>/api/v3/write
            namespace: "acs"
            resource_to_telemetry_conversion:
              enabled: true
            timeout: 10s
            headers:
              Prometheus-Remote-Write-Version: "0.1.0"
              # This configuration is required if password-free mode is not enabled
              Authorization: Basic <base64-encoded-username-password>
            external_labels:
              data-mode: metrics
        service:
          pipelines:
            logs:
              receivers: [otlp]
              exporters: [debug]
            traces:
              receivers: [otlp]
              processors: [resource]
              exporters: [spanmetrics]
            metrics:
              receivers: [otlp]
              processors: [memory_limiter, batch]
              exporters: [prometheusremotewrite]
            metrics/2:
              receivers: [spanmetrics]
              exporters: [prometheusremotewrite]
          extensions: [zpages]

Replace the Prometheus RemoteWrite endpoint in the configuration with the address obtained earlier.

Run the following command to obtain the value of base64-encoded-username-password. Replace AK and SK with the AccessKey ID and AccessKey secret of the RAM user.

    echo -n 'AK:SK' | base64
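If you generate the configuration from a script, the same value can be computed in Python. This is a minimal sketch that assumes the AccessKey ID is used as the username and the AccessKey secret as the password. The function name is hypothetical, and AK and SK are placeholders, not real credentials.

```python
import base64


def basic_auth_header(access_key_id: str, access_key_secret: str) -> str:
    """Return the Authorization header value: 'Basic ' + base64('id:secret')."""
    credentials = f"{access_key_id}:{access_key_secret}".encode("utf-8")
    return "Basic " + base64.b64encode(credentials).decode("ascii")


# Placeholder credentials; substitute the AccessKey pair of the RAM user.
print(basic_auth_header("AK", "SK"))
```

The part after the Basic prefix is the same string that the echo command prints.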