This topic answers common questions about Data Definition Language (DDL) operations in PolarDB-X.

How do I increase the database limit in PolarDB-X 2.0?

PolarDB-X 2.0 allows a maximum of 32 logical databases by default. To increase this limit, connect using a high-privilege account and run:

SET GLOBAL MAX_LOGICAL_DB_COUNT=64;How do I identify abnormal DDL operations in PolarDB-X 2.0?

Run the following statements to check for stuck or abnormal DDL activity:

SHOW FULL DDL;

SHOW FULL physical_processlist WHERE info !='';How many records can I insert in a single batch operation?

The safe batch size depends on your row size and the max_allowed_packet setting.

Estimate your row size

Calculate the maximum bytes per row using these InnoDB storage sizes:

| Data type | Size |

|---|---|

| INT | 4 bytes |

| BIGINT | 8 bytes |

| DECIMAL | 4 bytes |

| DATETIME | 8 bytes |

| VARCHAR(N), UTF8 | 3 bytes per character |

| VARCHAR(N), UTF8MB4 | 4 bytes per character |

For TEXT, BLOB, LONGTEXT, and other large object types, evaluate each field separately based on actual maximum usage. For example, a Group_ID VARCHAR(1024) that only stores a single digit still counts as 1,024 bytes in your maximum row estimate.

Calculate the batch size

Using an example row of 1,913 bytes (8+8+8+8+8+8+255+255+255+1,024+4+64+8):

At 1 MB: (1,024 × 1,024) / 1,913 ≈ 548 records. Keep batches under 500 to avoid exceeding the limit based on the PolarDB-X CN computation layer's 16 MB batch insert method.

At

max_allowed_packet=16M: 500 × 16 = 8,000 records is a safe upper bound.

Parameter recommendations

Set

max_allowed_packetto its maximum value.Set

innodb_log_buffer_sizebetween 32M and 128M. Test different values to find the optimal response time for your workload — this prevents InnoDB from flushing logs to disk too frequently before transaction commits.

Other factors that affect insertion performance

InnoDB buffer pool size

Disk IOPS and network bandwidth (in distributed database deployments)

Redo log buffer flush behavior (

innodb_log_buffer_size)

Why does DDL fail with [ERR_REPARTITION_TABLE_WITH_GSI] can not alter table repartition when gsi table is not public?

The Global Secondary Index (GSI) on the table is in an unexpected state. DDL operations on the table are only allowed when the GSI is in PUBLIC or ABSENT state. Any other state means the GSI is still being created.

To resolve this:

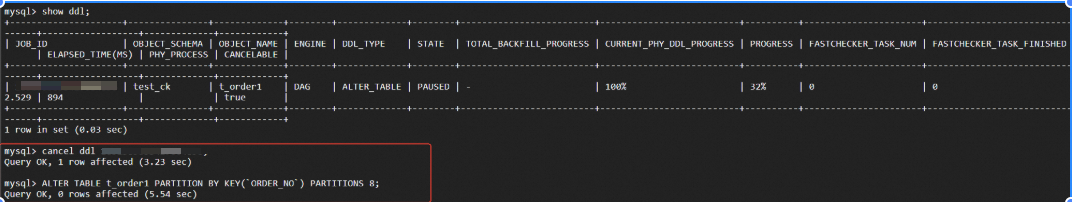

Run

SHOW DDL;to check for blocking DDL operations.If a DDL operation is blocking, wait for it to finish. To check the GSI state, run

SHOW GLOBAL INDEX FROM <table_name>;.If the GSI state is not PUBLIC or ABSENT, cancel the GSI creation and then re-run your DDL:

CANCEL DDL <JOB_ID>;

Why does DDL fail with find incorrect column / Out of range value for column / Data too Long for column?

These errors occur when existing data is incompatible with the new column definition. For example, if a column is DECIMAL(10, 0) and contains values ≥ 10, changing it to DECIMAL(5, 4) (which allows only 1 digit before the decimal point) will fail.

How to recover from a partial shard failure after ALTER TABLE MODIFY COLUMN or CHANGE COLUMN

In PolarDB-X, a MODIFY COLUMN DDL can succeed on some shards and fail on others when data in the failed shards violates the new column constraint. For example:

-- Create a table partitioned across 32 shards

CREATE TABLE `t1` (

`id` bigint(20) NOT NULL AUTO_INCREMENT,

`c2` varchar(16) DEFAULT NULL,

PRIMARY KEY (`id`)

) ENGINE = InnoDB DEFAULT CHARSET = utf8mb4

PARTITION BY KEY(`id`)

PARTITIONS 32;

INSERT INTO t1 VALUES (1, "1");

INSERT INTO t1 VALUES (2, "abc");

-- In strict SQL_MODE, this fails because "abc" is not a valid integer

SET sql_mode="strict_trans_tables";

ALTER TABLE t1 MODIFY COLUMN c2 INT;The error looks like:

...Not all physical DDLs have been executed successfully: 32 expected, 31 done, 1 failed...Incorrect integer value...PolarDB-X provides two recovery paths: forward execution (fix data and continue) and reverse execution (roll back the change).

Forward execution

Fix the invalid data in the failed shard, then continue the DDL.

Fix the invalid data:

UPDATE t1 SET c2=0 WHERE id=2;Get the JOB_ID of the failed DDL:

SHOW DDL;Look for the entry with state

PAUSED. For example,JOB_ID = 1661145277907759104.Continue the DDL:

CONTINUE DDL 1661145277907759104;

Reverse execution

Roll back the column change by reverting successfully modified shards to their original state.

Before you begin

Confirm that all of the following conditions apply before attempting reverse execution:

Your PolarDB-X instance is version 5.4.18 or later. Earlier versions do not support automatic rollback for this scenario.

The instance is PolarDB-X 2.0.

The ALTER statement only changes column types — renaming is not supported.

The DDL partially failed: the error message contains a pattern like

32 expected, 31 done, 1 failed, where the number of failures is greater than 0 and less than the expected number.The DDL did not use

ALGORITHM=OMC(Online Modify Column). OMC is only used when the DDL statement explicitly setsALGORITHM=OMC.

Steps

Get the JOB_ID of the failed DDL:

SHOW DDL;Look for the entry with state

PAUSED. For example,JOB_ID = 166113736846543257.Cancel the DDL task.

This step requires a high-privilege account.

DELETE FROM metadb.ddl_engine WHERE job_id=166113736846543257; DELETE FROM metadb.ddl_engine_task WHERE job_id=166113736846543257;Verify the task no longer exists:

SHOW DDL;Check the table state. At this point, some shards are inconsistent:

ImportantThe table is in an inconsistent state. Proceed immediately to the next step.

CHECK TABLE t1;Expected output showing inconsistency:

+----------------------+-------+----------+--------------------------------+ | TABLE | OP | MSG_TYPE | MSG_TEXT | +----------------------+-------+----------+--------------------------------+ |testdb.t1:Topology | check | Error | Table 'testdb_P00001_GROUP.t1_EZ5p_00010' find incorrect columns 'c2', please recreate table | |testdb.t1:Columns | check | Error | Table 'testdb_P00000_GROUP.t1_EZ5p_00000' find incorrect columns 'c2', please recreate table | +----------------------+-------+----------+--------------------------------+Run the reverse ALTER TABLE to restore consistency. You can restore to the original type or any compatible type:

You can also modify the column to a different type, not necessarily the original. For example, if reducing

varchar(16)tovarchar(12)originally failed, tryvarchar(14)instead.ALTER TABLE t1 MODIFY COLUMN c2 varchar(16);Verify the table is consistent:

CHECK TABLE t1;Expected output:

+--------------------+-------+----------+----------+ | TABLE | OP | MSG_TYPE | MSG_TEXT | +--------------------+-------+----------+----------+ | testdb.t1:Topology | CHECK | status | OK | | testdb.t1:Columns | CHECK | status | OK | +--------------------+-------+----------+----------+