WAL parallel replay accelerates write-ahead log (WAL) replay on read-only nodes and secondary databases in a PolarDB for PostgreSQL cluster. By distributing replay subtasks across multiple processes, the feature reduces crash recovery time, lowers replication lag on read-only nodes, and speeds up service recovery after restarts.

Applicability

WAL parallel replay is available for the following versions of PolarDB for PostgreSQL:

| PostgreSQL version | Minimum minor engine version |
|---|---|
| PostgreSQL 18 | 2.0.18.0.1.0 |
| PostgreSQL 17 | 2.0.17.2.1.0 |
| PostgreSQL 16 | 2.0.16.3.1.1 |
| PostgreSQL 15 | 2.0.15.7.1.1 |
| PostgreSQL 14 | 2.0.14.5.1.0 |
| PostgreSQL 11 | 2.0.11.9.17.0 |

You can view the minor engine version in the PolarDB console or by running SHOW polardb_version;. If your cluster's minor engine version does not meet the requirements, upgrade the minor engine version.

Use cases

| Scenario | How parallel replay helps |
|---|---|
| Crash recovery | Speeds up crash recovery for the primary database, read-only nodes, and secondary databases, reducing the time to restore service after an unexpected failure. |
| Read-only node replication lag | The LogIndex BGW process replays WAL records in parallel on read-only nodes, reducing the data lag between a read-only node and the primary database. |
| Secondary database restart recovery | The Startup process replays WAL records in parallel on a secondary database, accelerating service recovery after a planned restart or failover. |

How it works

Parallelizing WAL replay

A single WAL record may modify multiple data blocks. Define the smallest replay unit, a replay subtask, as Task[i,j] = LSN[i] → Block[i,j], which means replaying the *i*-th WAL record on the *j*-th data block it modifies, Block[i,j].

A WAL record that modifies m blocks produces m replay subtasks. Across multiple WAL records, not every subtask depends on the result of the preceding one—subtasks that touch different data blocks are independent and can run in parallel.

For example, given three WAL records with the following subtask sets:

Task[0,*] = [Task[0,0], Task[0,1], Task[0,2]]

Task[1,*] = [Task[1,0], Task[1,1]]

Task[2,*] = [Task[2,0]]

If Block[0,0] = Block[1,0], Block[0,1] = Block[1,1], and Block[0,2] = Block[2,0], then three groups can execute in parallel: [Task[0,0], Task[1,0]], [Task[0,1], Task[1,1]], and [Task[0,2], Task[2,0]].

PolarDB exploits this independence in its parallel task execution framework, applied directly to the WAL replay path.
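
The grouping in the example above can be sketched in a few lines of Python. This is an illustration only: the function name group_by_block and the block labels "A", "B", and "C" are hypothetical stand-ins, not PolarDB identifiers.

```python
from collections import defaultdict


def group_by_block(wal_records):
    """Group replay subtasks by the block they touch.

    wal_records[i] is the list of blocks modified by WAL record i,
    in LSN order. The result maps each block to its subtasks (i, j).
    Subtasks within one list must run in order; separate lists are
    independent of each other and could run in parallel.
    """
    groups = defaultdict(list)
    for i, blocks in enumerate(wal_records):
        for j, block in enumerate(blocks):
            groups[block].append((i, j))
    return dict(groups)


# The three WAL records from the example, with
# Block[0,0] = Block[1,0] = "A", Block[0,1] = Block[1,1] = "B",
# and Block[0,2] = Block[2,0] = "C".
records = [["A", "B", "C"], ["A", "B"], ["C"]]
groups = group_by_block(records)
```

Each value in groups is one serial chain, and the three chains correspond to the three groups listed in the example.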

Key concepts

| Term | Definition |
|---|---|
| Block | A data block. |
| WAL | Write-ahead log. |
| Task Node | A subtask execution node in the parallel execution framework. Each Task Node receives and executes one subtask. |
| Task Tag | A classification identifier for a subtask. Subtasks with the same Task Tag must be executed in sequence. |
| Hold List | A linked list that each child process uses to schedule and execute replay subtasks. |

Parallel task execution framework

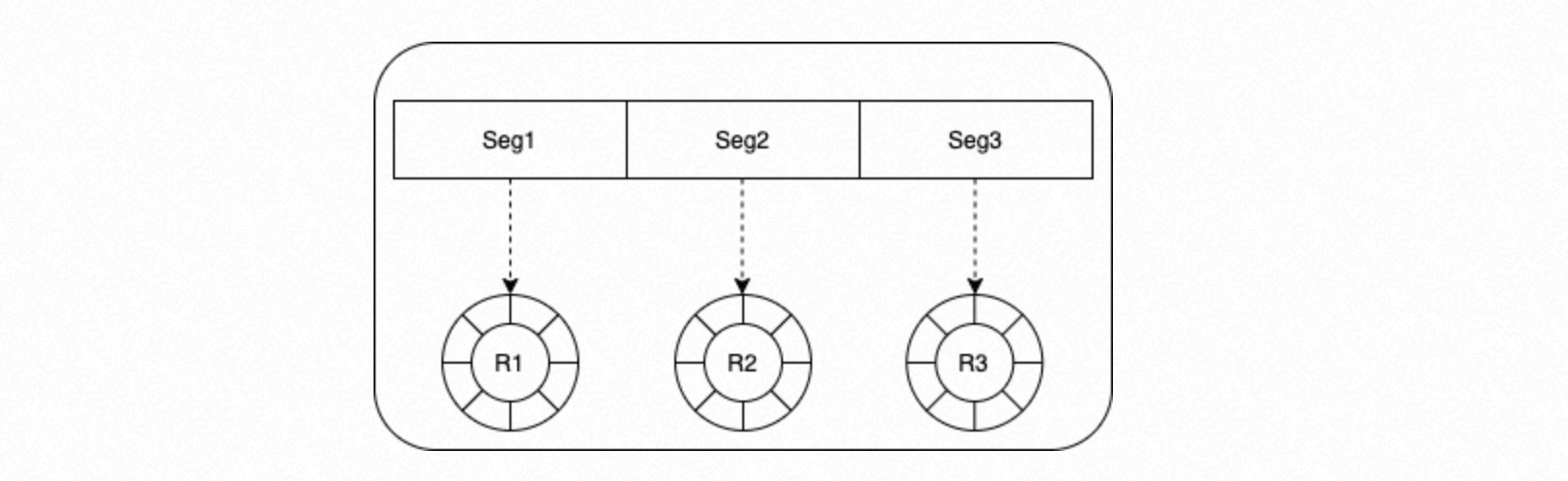

Shared memory is divided equally based on the number of concurrent processes. Each segment acts as a circular queue assigned to one process. The depth of each queue is configurable.
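
As a rough sketch of this layout, the following Python models one fixed-depth circular queue per process. It assumes ordinary in-process lists in place of shared memory, and the names CircularQueue and make_queues are hypothetical; the real framework also tracks task state per slot, which is omitted here.

```python
class CircularQueue:
    """Fixed-depth ring buffer standing in for one process's segment."""

    def __init__(self, depth):
        self.slots = [None] * depth
        self.head = 0    # index of the next slot to consume
        self.tail = 0    # index of the next slot to fill
        self.count = 0   # number of occupied slots

    def is_full(self):
        return self.count == len(self.slots)

    def push(self, task):
        """Add a task; return False if the queue is full."""
        if self.is_full():
            return False
        self.slots[self.tail] = task
        self.tail = (self.tail + 1) % len(self.slots)
        self.count += 1
        return True

    def pop(self):
        """Remove and return the oldest task, or None if empty."""
        if self.count == 0:
            return None
        task = self.slots[self.head]
        self.slots[self.head] = None
        self.head = (self.head + 1) % len(self.slots)
        self.count -= 1
        return task


def make_queues(num_processes, depth):
    """One equally sized queue per process, as in the equal split above."""
    return [CircularQueue(depth) for _ in range(num_processes)]
```

The depth argument plays the role of the configurable queue depth: a full queue rejects new tasks until a slot is freed.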

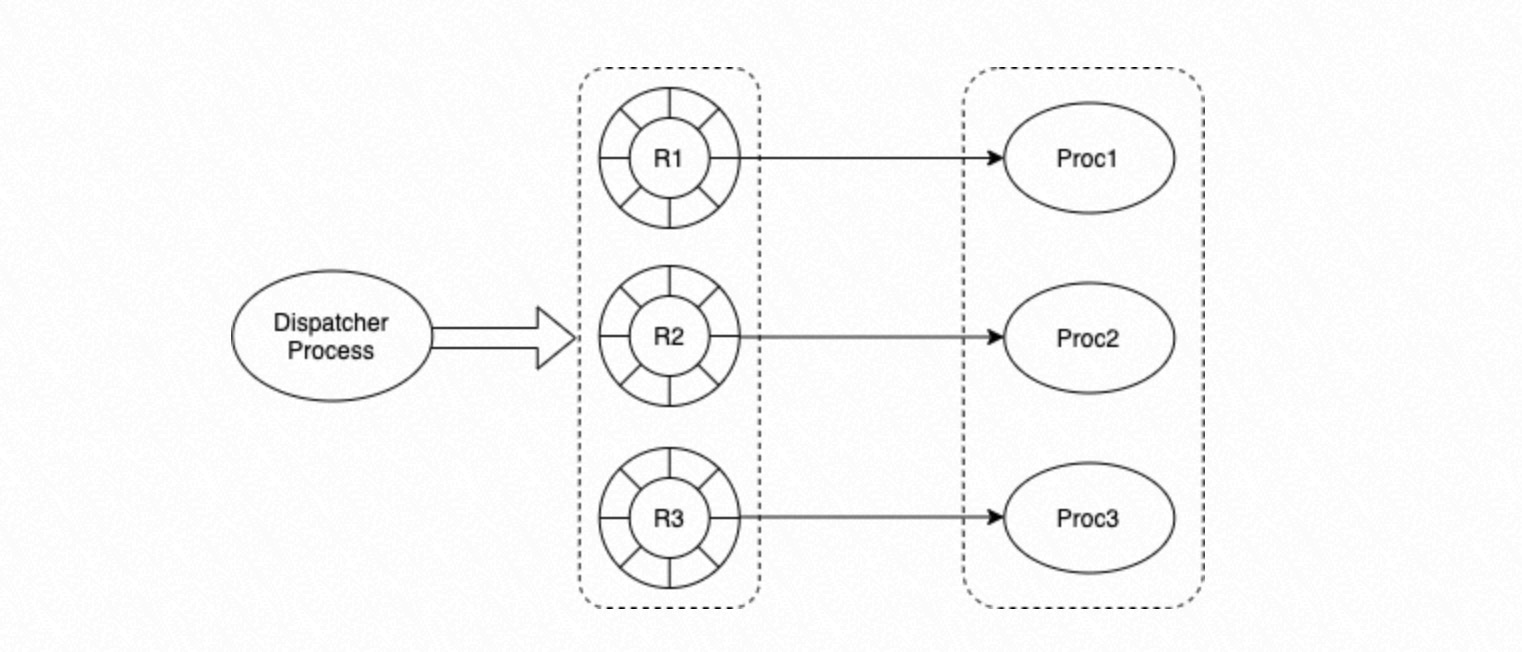

The framework has two roles:

Dispatcher process: Dispatches tasks to specific processes and removes completed tasks from queues.

Process group: Each process retrieves a task from its circular queue and executes it based on the task's state.

Task states

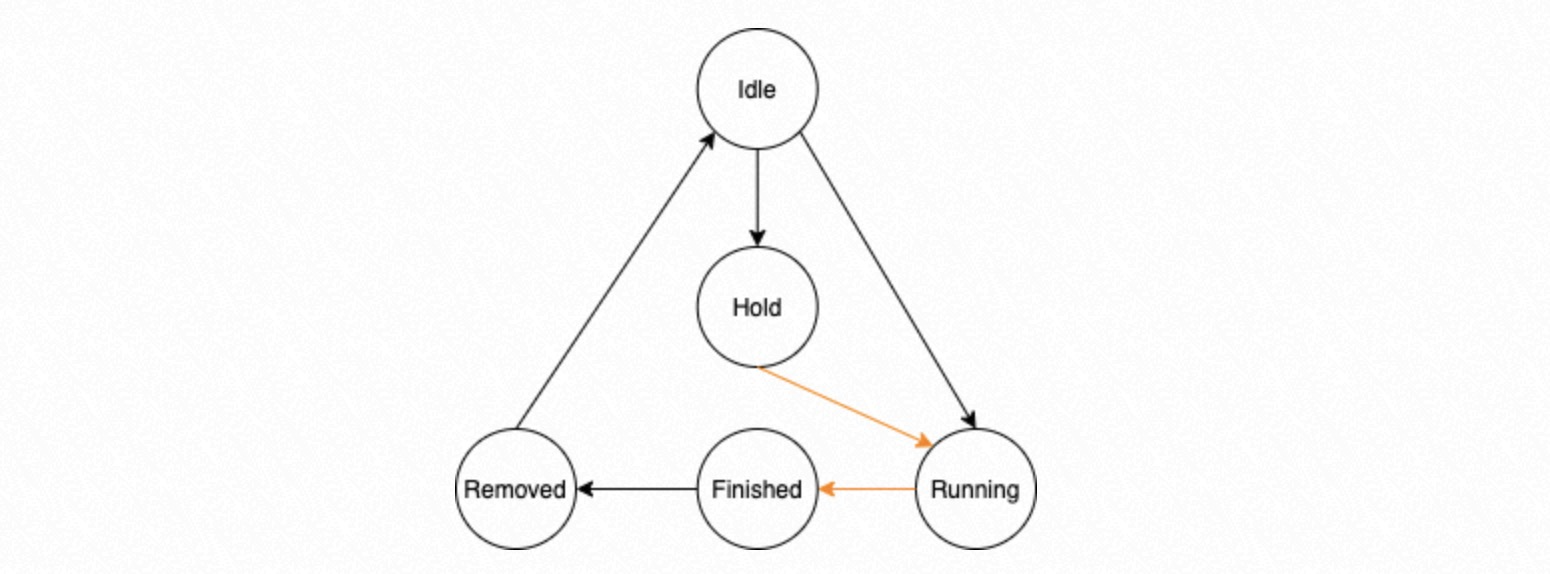

Each Task Node in a circular queue has one of five states:

| State | Description |
|---|---|
| Idle | The Task Node has no assigned task. |
| Running | The Task Node is assigned a task and is waiting or executing. |
| Hold | The task depends on a preceding task and must wait for it to finish. |
| Finished | All processes in the process group have completed the task. |
| Removed | The Dispatcher has confirmed that the task and all its prerequisites are Finished, and has deleted them from the management struct. This ensures dependent task results are processed in the correct order. |

In the state machine above, transitions along black lines are performed by the Dispatcher process; transitions along orange lines are performed by the parallel replay process group.

Dispatcher process: task scheduling and dependency management

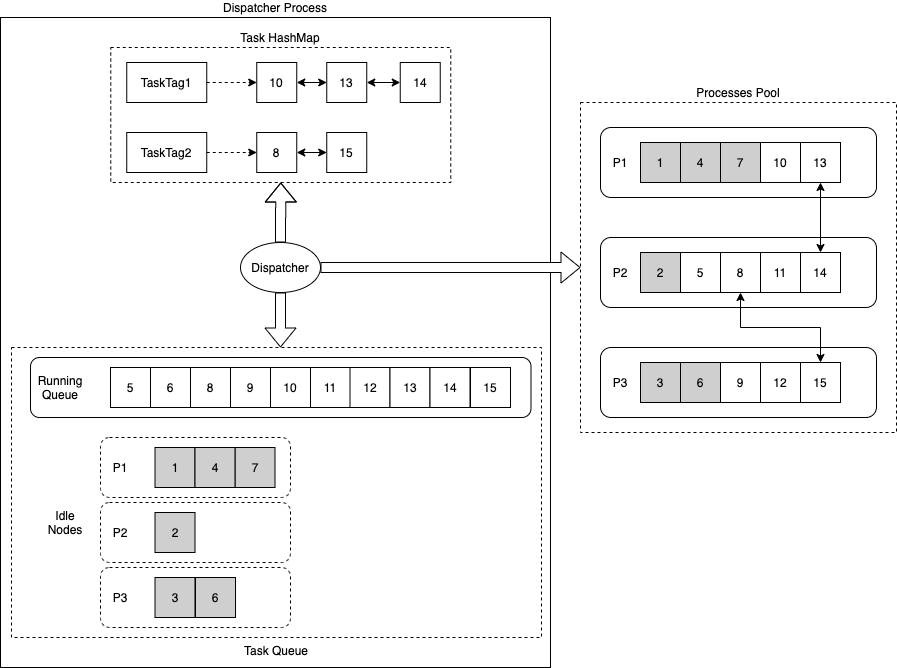

The Dispatcher uses three data structures:

Task HashMap: Records the hash mapping between Task Tags and their corresponding task execution lists. Each task has a Task Tag; tasks that share a dependency share the same Task Tag.

Task Running Queue: Records tasks currently being executed.

Task Idle Nodes: Records Task Nodes in the Idle state for each process in the process group.

The Dispatcher applies two scheduling policies:

Affinity scheduling: If a task with the same Task Tag is already running, assign the new task to the process handling the last task in that Tag's linked list. This keeps dependent tasks on the same process, reducing inter-process synchronization overhead.

Round-robin fallback: If the preferred process's queue is full, or no task with the same Task Tag is running, select the next process in the group and assign an Idle Task Node from its queue. This distributes tasks evenly across all processes.
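
The two policies can be sketched as follows. This is a simplified model under stated assumptions: a plain dictionary stands in for the Task HashMap, per-process counters stand in for the circular queues, and task completion (which would free slots and clear tags) is not modeled. The class and attribute names are hypothetical.

```python
class Dispatcher:
    """Toy dispatcher: affinity first, round-robin as the fallback."""

    def __init__(self, num_processes, queue_depth):
        self.queue_depth = queue_depth
        self.queue_len = [0] * num_processes  # tasks queued per process
        self.tag_to_process = {}   # Task Tag -> process of the tag's last task
        self.next_process = 0      # round-robin cursor

    def _has_room(self, process):
        return self.queue_len[process] < self.queue_depth

    def dispatch(self, tag):
        """Return the chosen process index, or None if every queue is full."""
        preferred = self.tag_to_process.get(tag)
        if preferred is not None and self._has_room(preferred):
            # Affinity: keep dependent tasks on the same process.
            chosen = preferred
        else:
            # Round-robin: scan from the cursor for a queue with room.
            chosen = None
            n = len(self.queue_len)
            for step in range(n):
                candidate = (self.next_process + step) % n
                if self._has_room(candidate):
                    chosen = candidate
                    self.next_process = (candidate + 1) % n
                    break
            if chosen is None:
                return None
        self.queue_len[chosen] += 1
        self.tag_to_process[tag] = chosen
        return chosen
```

With two processes and a queue depth of 2, dispatching tags "A", "B", "A", "A" places the first three tasks by affinity or round-robin, and the fourth "A" falls back to the other process because the preferred queue is full.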

Process group: parallel execution and retry

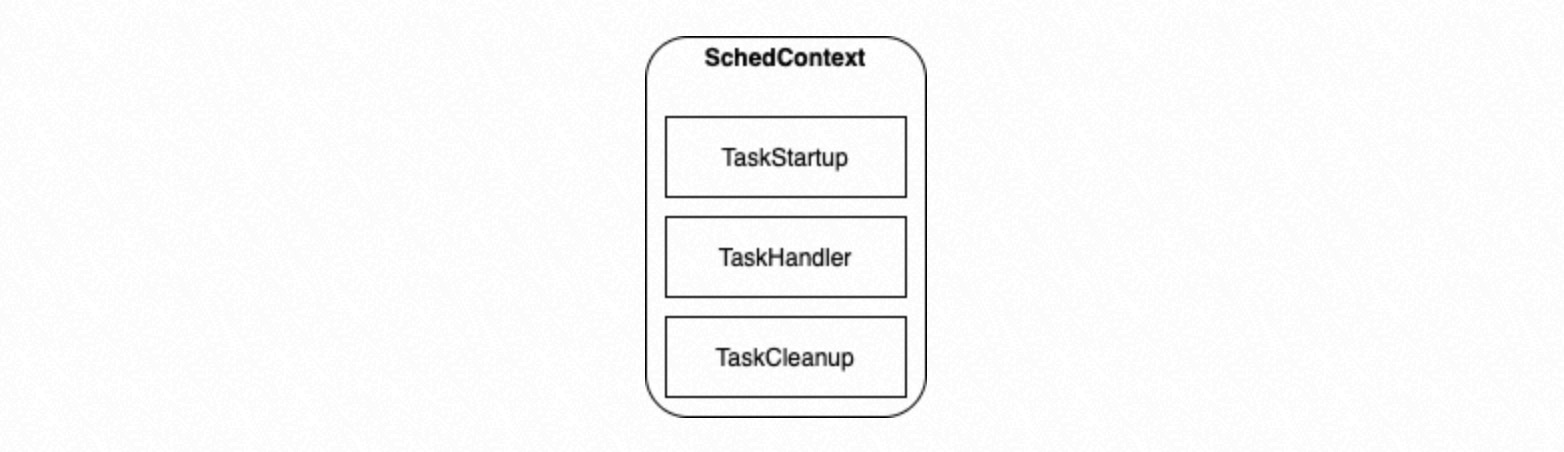

During process group initialization, SchedContext is configured with three function pointers:

| Function | Purpose |
|---|---|
| TaskStartup | Runs initialization before a process begins executing tasks. |
| TaskHandler | Executes a specific task based on the incoming Task Node. |
| TaskCleanup | Runs cleanup before a process exits. |
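
The role of the three callbacks can be illustrated with a small Python analogue. The real SchedContext is a structure holding function pointers; the dataclass, field names, and run_worker loop below are hypothetical stand-ins, and the assumption that TaskHandler reports success as a boolean is made only for this sketch.

```python
from dataclasses import dataclass
from typing import Any, Callable, Iterable, List


@dataclass
class SchedContext:
    task_startup: Callable[[], None]     # analogue of TaskStartup
    task_handler: Callable[[Any], bool]  # analogue of TaskHandler
    task_cleanup: Callable[[], None]     # analogue of TaskCleanup


def run_worker(ctx: SchedContext, tasks: Iterable[Any]) -> List[bool]:
    """Run startup once, handle each task, and always run cleanup."""
    ctx.task_startup()
    try:
        return [ctx.task_handler(task) for task in tasks]
    finally:
        ctx.task_cleanup()


log = []


def handle(task):
    log.append(f"handle {task}")
    return True


ctx = SchedContext(
    task_startup=lambda: log.append("startup"),
    task_handler=handle,
    task_cleanup=lambda: log.append("cleanup"),
)
results = run_worker(ctx, ["t1", "t2"])
```

Passing the callbacks in through a context object keeps the execution framework independent of what a task actually does, which is what allows the same framework to be applied to WAL replay.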

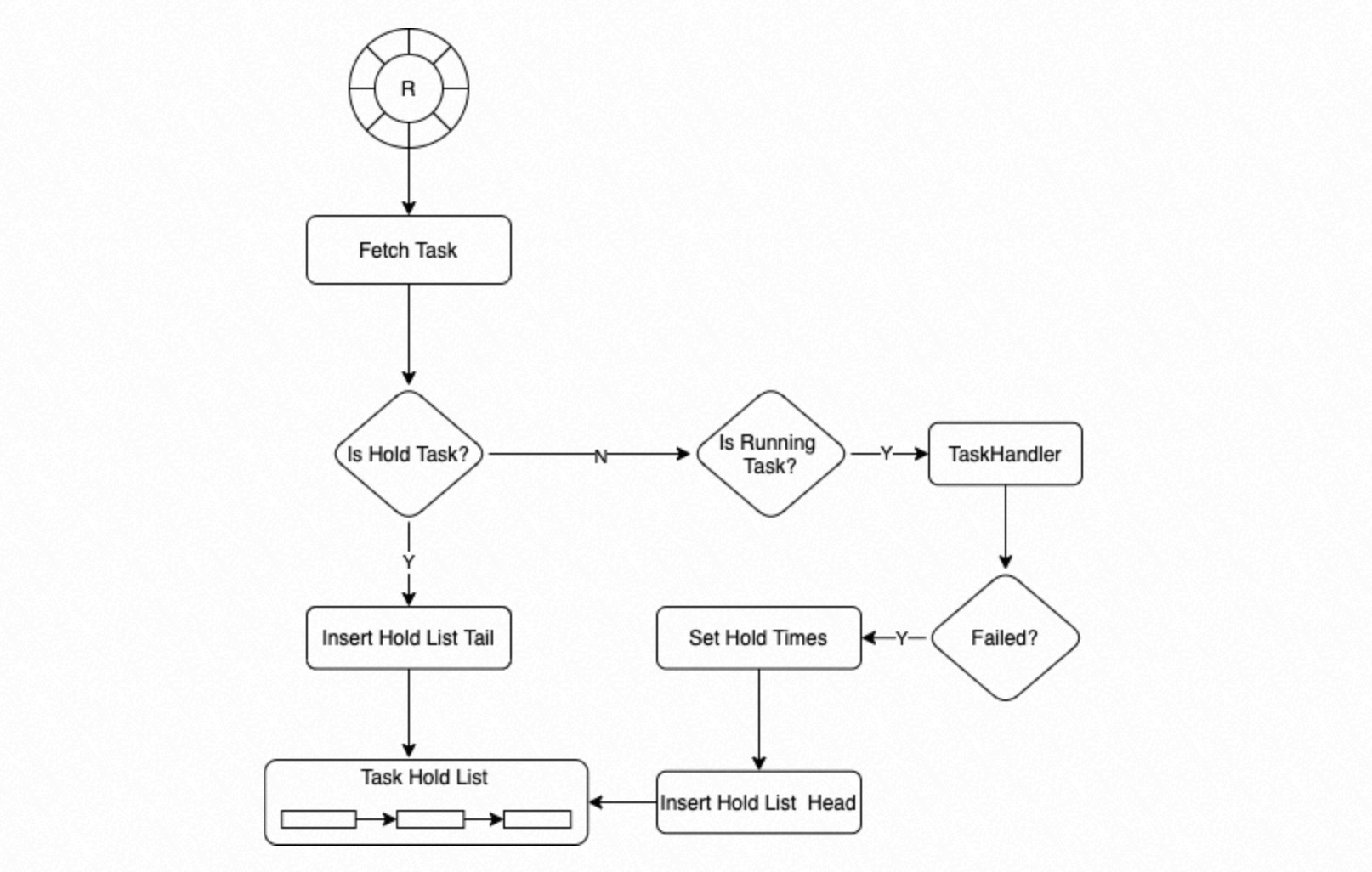

Each process retrieves a Task Node from its circular queue:

If the state is Hold, the process inserts the node at the tail of the Hold List.

If the state is Running, the process calls TaskHandler. If TaskHandler fails, the system sets a retry wait count of 3 for the Task Node and inserts it at the head of the Hold List.

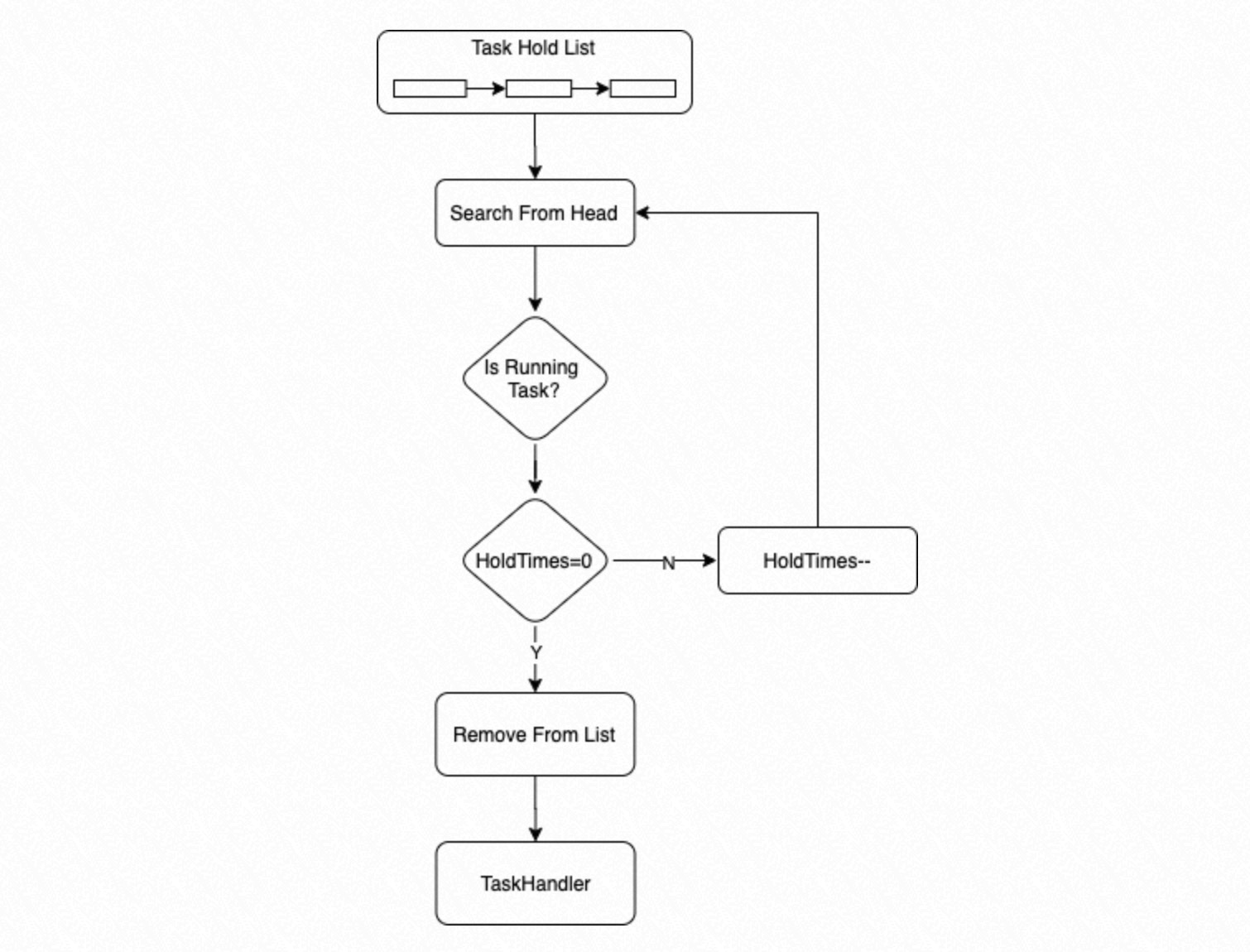

The process then searches the Hold List from the beginning for an executable task:

If a task's state is Running and its wait count is 0, the process executes it.

If the wait count is greater than 0, the process decrements it by 1 and moves on.
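
The queue intake and the Hold List scan described above can be sketched in Python. Several points are assumptions of this sketch rather than documented behavior: the value 3 set on failure is treated as the wait count checked during the scan, one scan executes every eligible task instead of stopping at the first, and a task that fails again during the scan has its wait count reset. All names are hypothetical.

```python
from collections import deque
from dataclasses import dataclass

RETRY_WAIT = 3  # value assigned to a Task Node after a failed TaskHandler call


@dataclass(eq=False)  # compare nodes by identity, not by field values
class TaskNode:
    name: str
    state: str          # "Hold" or "Running"
    wait_count: int = 0


def take_from_queue(node, hold_list, handler):
    """Process one Task Node retrieved from the circular queue."""
    if node.state == "Hold":
        hold_list.append(node)          # tail of the Hold List
    elif node.state == "Running" and not handler(node):
        node.wait_count = RETRY_WAIT
        hold_list.appendleft(node)      # head of the Hold List


def scan_hold_list(hold_list, handler):
    """Scan from the head; return the names of tasks that succeeded."""
    executed = []
    for node in list(hold_list):        # iterate over a snapshot
        if node.state != "Running":
            continue                    # still waiting on a predecessor
        if node.wait_count > 0:
            node.wait_count -= 1        # not yet due for a retry
            continue
        if handler(node):
            hold_list.remove(node)
            executed.append(node.name)
        else:
            node.wait_count = RETRY_WAIT  # assumed: wait again before retrying
    return executed
```

Under these assumptions, a task that fails once is skipped on the next three scans while its wait count drops from 3 to 0, and is retried on the fourth.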

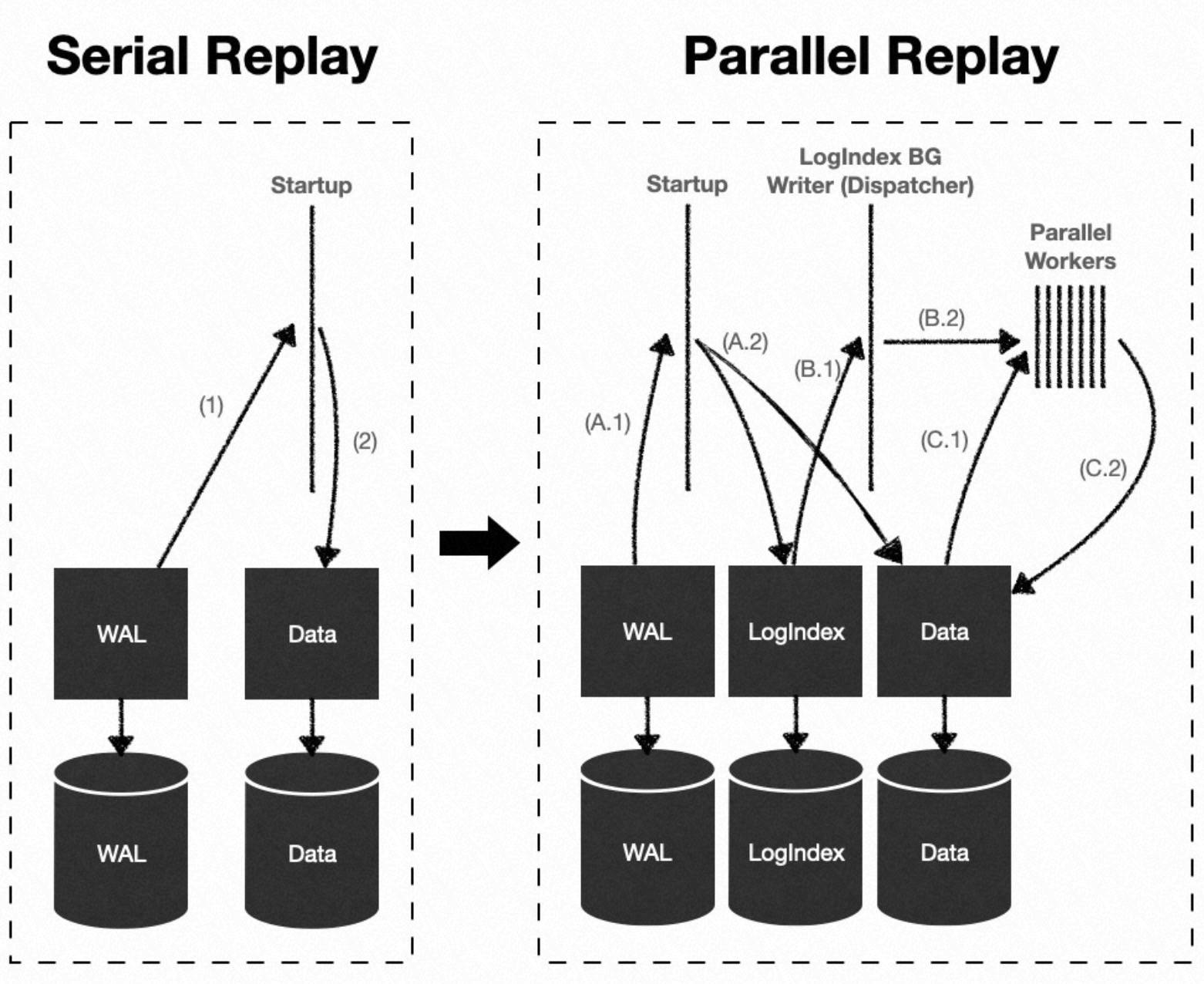

WAL parallel replay workflow

LogIndex data records the mapping between WAL records and the data blocks they modify, and supports retrieval by log sequence number (LSN). During continuous WAL replay on a standby node, PolarDB uses the parallel execution framework to distribute replay tasks across multiple processes.

The four processes involved and their roles:

| Process | Role |

|---|---|

| Startup process | Parses WAL records and builds LogIndex data without replaying. |

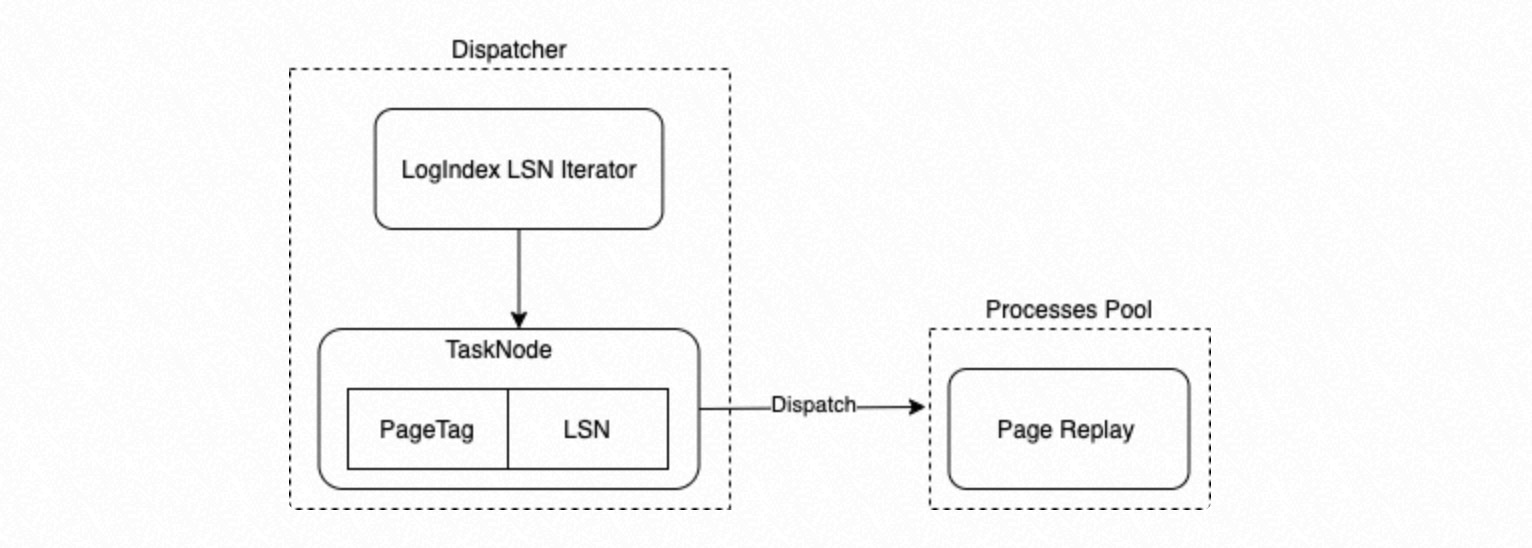

| LogIndex BGW replay process | Acts as the Dispatcher. Uses LSNs to retrieve LogIndex data, builds replay subtasks ({LSN → PageTag} mappings), and assigns them to the parallel replay process group. The PageTag serves as the Task Tag. |

| Parallel replay process group | Executes replay subtasks, each replaying a single WAL record on a data block. |

| Backend process | When reading a data block, uses the PageTag to retrieve LogIndex data, gets the linked list of LSNs for WAL records that modified the block, and replays the entire WAL record chain on that block. |

The Dispatcher enumerates PageTags and their corresponding LSNs in LogIndex insertion order to build {LSN → PageTag} Task Nodes, then dispatches them to the process group.
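
The two ways of reading LogIndex data described above can be contrasted in a short sketch. It assumes LogIndex data is available as a list of (PageTag, LSN) pairs in insertion order, with strings standing in for real PageTags; the function names and the dictionary layout of a Task Node are hypothetical.

```python
def build_task_nodes(logindex_entries):
    """Dispatcher view: one {LSN -> PageTag} Task Node per entry.

    Entries are visited in insertion order, and the PageTag doubles
    as the Task Tag so that tasks on the same page stay ordered.
    """
    return [
        {"lsn": lsn, "page_tag": page_tag, "task_tag": page_tag}
        for page_tag, lsn in logindex_entries
    ]


def lsn_chain(logindex_entries, page_tag):
    """Backend view: LSNs of the WAL records that modified one page."""
    return [lsn for tag, lsn in logindex_entries if tag == page_tag]


# A WAL record at LSN 100 modifies pages P1 and P2; later records
# modify P1 again (LSN 120) and P3 (LSN 130).
entries = [("P1", 100), ("P2", 100), ("P1", 120), ("P3", 130)]
nodes = build_task_nodes(entries)
chain = lsn_chain(entries, "P1")
```

The Dispatcher walks all entries to feed the process group, whereas a backend process only needs the chain for the single page it is reading.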

Enable WAL parallel replay

Add the following parameter to the postgresql.conf file on the standby node.

polar_enable_parallel_replay_standby_mode = ON

| Parameter | Type | Default | Description |
|---|---|---|---|
| polar_enable_parallel_replay_standby_mode | Boolean | OFF | Enables parallel WAL replay on the standby node. Set to ON to activate the feature. |