Elastic Parallel Query distributes analytical queries across multiple read-only nodes in parallel, enabling hybrid transactional and analytical processing (HTAP) capabilities on PolarDB for PostgreSQL (Compatible with Oracle). This topic explains how the feature works, how to configure it, and how to control parallelism at the system, session, and query level.

How it works

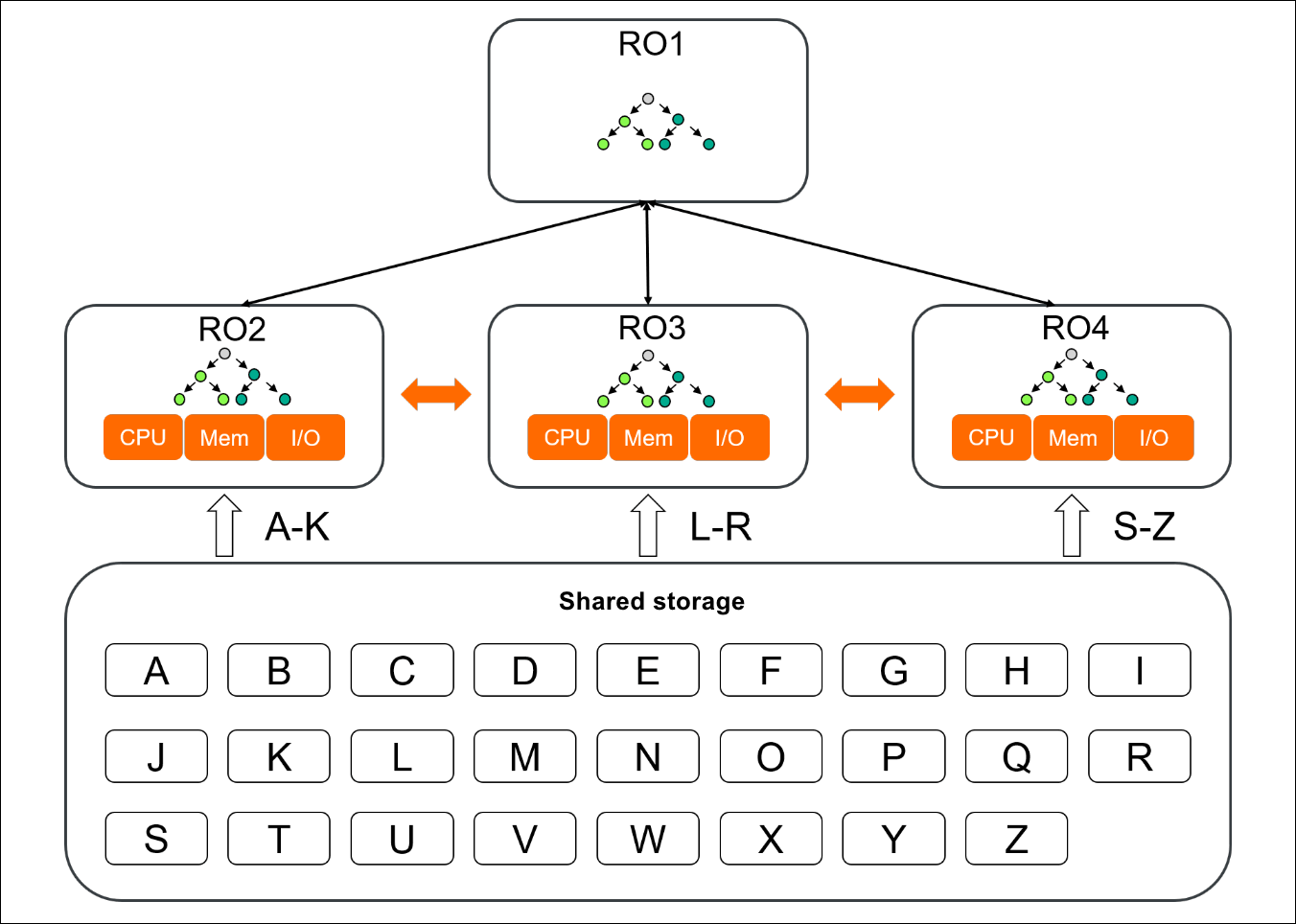

Elastic Parallel Query uses two types of nodes:

-

Query coordinator (QC) node: receives the query request, divides the execution plan into shards, routes shards to parallel execution (PX) nodes, and aggregates the results.

-

PX nodes: each runs an assigned shard, reads data blocks from the shared storage system Polar File System (PolarFS), and returns results to the QC node.

In the figure above, RO1 is the QC node and RO2, RO3, and RO4 are PX nodes. RO1 divides the execution plan into three shards and routes them. Each PX node reads from PolarFS and sends results back to RO1, which combines them into the final result.

Usage notes

Elastic Parallel Query is disabled by default and requires multiple read-only nodes. It is suitable only for analytical queries that are run infrequently.

Parameters

Configure the following parameters to enable and tune Elastic Parallel Query.

| Parameter | Description | Default |

|---|---|---|

polar_enable_px |

Enables or disables Elastic Parallel Query. Set to on to enable. |

off |

polar_px_dop_per_node |

Degree of parallelism (DoP) per session per node. Each node uses this many PX worker processes for a query. Set this to the number of CPU cores on your nodes for maximum throughput. | 1 |

polar_px_max_workers_number |

Maximum number of worker processes per node across all sessions. Caps the total parallelism regardless of DoP settings. | 30 |

polar_px_nodes |

Comma-separated list of read-only nodes to use for parallel execution. Leave blank to use all available read-only nodes. | (all nodes) |

polar_px_enable_replay_wait |

Enables strong consistency. When set to on, read-only nodes replay all WAL log records up to the moment the query is initiated before running the query. This ensures nodes read the latest DDL changes but reduces query performance. |

off |

px_workers |

Table-level parameter controlling whether Elastic Parallel Query applies to a specific table. Set to 1 to enable, -1 to disable, and 0 to use the default (not applied). |

0 |

polar_cluster_map |

Read-only parameter listing the names of all read-only nodes in the cluster. Updated automatically when nodes are added. Available only for clusters running minor kernel version 1.1.20 (released January 2022) or earlier. | N/A |

synchronous_commit |

Controls whether a transaction waits for WAL logs to be written to disk before returning a success message. Valid values: off, on, local, remote_write, remote_apply. In PX mode, this parameter is set to on. |

on |

Key considerations:

-

`polar_enable_px`: must be set to

onbefore any other configuration takes effect. Set this at the system level to enable the feature globally, or at the session level to limit it to specific workloads. -

`polar_px_dop_per_node`: setting this too high on nodes running concurrent sessions multiplies worker process usage. Each session can consume up to

polar_px_dop_per_nodeworkers per node, so total worker usage = DoP x number of active sessions. Make sure the total does not exceedpolar_px_max_workers_number. -

`polar_px_enable_replay_wait`: set to

ononly when your workload involves recent DDL changes (such asCREATE TABLE) that the read-only nodes may not have replayed yet. For read-heavy analytical workloads without recent DDL activity, leave this asoffto avoid replay overhead. -

`px_workers`: use this to limit Elastic Parallel Query to specific high-value tables rather than enabling it globally. This reduces unnecessary resource consumption on tables that do not benefit from parallelism.

Control parallelism at system, session, and query level

Elastic Parallel Query can be enabled and tuned at three levels. The level you choose depends on your use case:

-

System level: apply Elastic Parallel Query globally for all queries and all sessions. Use this when your cluster is dedicated to analytical workloads.

-

Session level: enable, disable, or force Elastic Parallel Query for the current connection. Use this for ad hoc analytical queries on a mixed-workload cluster.

-

Query level: override parallelism for a single query using hints. Use this for targeted optimization of specific queries without changing session or system defaults.

System level

Configure global Grand Unified Configuration (GUC) parameters to enable Elastic Parallel Query for all sessions.

postgres=# alter system set polar_enable_px=1;

ALTER SYSTEM

postgres=# alter system set polar_px_dop_per_node=1;

ALTER SYSTEM

postgres=# select pg_reload_conf();

pg_reload_conf

----------------

t

(1 row)

postgres=# \c postgres

You are now connected to database "postgres" as user "postgres".

postgres=# drop table if exists t1;

DROP TABLE

postgres=# select id into t1 from generate_series(1, 1000) as id order by id desc;

SELECT 1000

postgres=# alter table t1 set (px_workers=1);

ALTER TABLE

postgres=# explain (verbose, costs off) select * from t1 where id < 10;

QUERY PLAN

-------------------------------------------

PX Coordinator 2:1 (slice1; segments: 2)

Output: id

-> Partial Seq Scan on public.t1

Output: id

Filter: (t1.id < 10)

Optimizer: PolarDB PX Optimizer

(6 rows)The PX Coordinator node in the plan output confirms that Elastic Parallel Query is active. The segments: 2 value reflects the DoP times the number of PX nodes being used.

Session level

Control Elastic Parallel Query for the current session using the ALTER SESSION statement or GUC parameters. Changes apply only to the current connection.

ALTER SESSION syntax

ALTER SESSION ENABLE PARALLEL QUERY

ALTER SESSION DISABLE PARALLEL QUERY

ALTER SESSION FORCE PARALLEL QUERY [PARALLEL integer]-

ENABLE PARALLEL QUERY: allows hints and syntax to enable Elastic Parallel Query for this session. -

DISABLE PARALLEL QUERY: blocks Elastic Parallel Query for this session, even if hints specify it. -

FORCE PARALLEL QUERY [PARALLEL integer]: forces Elastic Parallel Query regardless of other settings. If you omitPARALLEL integer, the DoP defaults to the value ofpolar_px_dop_per_node.

Priority order when multiple settings conflict: query-level hint (highest) > FORCE PARALLEL > polar_px_dop_per_node (lowest).

After the current session is reconnected, Elastic Parallel Query is enabled for the session by default.

Example — enable for session:

--enable

postgres=# set polar_enable_px = false;

SET

postgres=# set polar_px_enable_hint = true;

SET

postgres=# alter session enable parallel query;

ALTER SESSION

postgres=# explain (verbose, costs off) select /*+ PARALLEL(4)*/ * from t1 where id < 10;

INFO: [HINTS] PX PARALLEL(4) accepted.

QUERY PLAN

-------------------------------------------

PX Coordinator 8:1 (slice1; segments: 8)

Output: id

-> Partial Seq Scan on public.t1

Output: id

Filter: (t1.id < 10)

Optimizer: PolarDB PX Optimizer

(6 rows)

--disable

postgres=# set polar_enable_px = false;

SET

postgres=# set polar_px_enable_hint = true;

SET

postgres=# alter session disable parallel query;

ALTER SESSION

postgres=# explain (verbose, costs off) select /*+ PARALLEL(4)*/ * from t1 where id < 10;

QUERY PLAN

------------------------

Seq Scan on public.t1

Output: id

Filter: (t1.id < 10)

(3 rows)

--force

postgres=# set polar_enable_px = false;

SET

postgres=# set polar_px_enable_hint = false;

SET

postgres=# alter session force parallel query;

ALTER SESSION

postgres=# explain (verbose, costs off) select * from t1 where id < 10;

QUERY PLAN

-------------------------------------------

PX Coordinator 2:1 (slice1; segments: 2)

Output: id

-> Partial Seq Scan on public.t1

Output: id

Filter: (t1.id < 10)

Optimizer: PolarDB PX Optimizer

(6 rows)

postgres=# alter session force parallel query parallel 2;

ALTER SESSION

postgres=# explain (verbose, costs off) select * from t1 where id < 10;

QUERY PLAN

-------------------------------------------

PX Coordinator 4:1 (slice1; segments: 4)

Output: id

-> Partial Seq Scan on public.t1

Output: id

Filter: (t1.id < 10)

Optimizer: PolarDB PX Optimizer

(6 rows)GUC parameters

Set session-level GUC parameters to enable or disable Elastic Parallel Query for the current connection.

postgres=# set polar_enable_px = true;

SET

postgres=# set polar_px_dop_per_node = 1;

SET

postgres=# explain (verbose, costs off) select * from t1 where id < 10;

QUERY PLAN

-------------------------------------------

PX Coordinator 2:1 (slice1; segments: 2)

Output: id

-> Partial Seq Scan on public.t1

Output: id

Filter: (t1.id < 10)

Optimizer: PolarDB PX Optimizer

(6 rows)Query level

Override parallelism for a single query using SQL hints. First enable hint support:

set polar_px_enable_hint = true; -- default: falseSupported hint syntax:

/*+ PARALLEL(DEFAULT) */ -- use the DoP from polar_px_dop_per_node

/*+ PARALLEL(integer) */ -- use the specified DoP

/*+ NO_PARALLEL(tablename) */ -- disable Elastic Parallel Query for the specified tableThese hints are compatible with Oracle hint syntax. Elastic Parallel Query supports only PARALLEL and NO_PARALLEL hints. When using multiple hints:

Only the first hint block takes effect when multiple hint blocks are chained (for example, /*+A*/ /*+B*/).

If multiple PARALLEL hints appear in a single block with the same DoP, one takes effect. If they specify different DoP values, none take effect.

If aPARALLELhint and aNO_PARALLELhint appear in the same block, theNO_PARALLELhint does not take effect.

Example:

postgres=# set polar_enable_px = false;

SET

postgres=# set polar_px_dop_per_node = 1;

SET

postgres=# set polar_px_enable_hint = true;

SET

postgres=# explain (verbose, costs off) select * from t1 where id < 10;

QUERY PLAN

------------------------

Seq Scan on public.t1

Output: id

Filter: (t1.id < 10)

(3 rows)

postgres=# explain (verbose, costs off) select /*+ PARALLEL(DEFAULT) */ * from t1 where id < 10;

QUERY PLAN

-------------------------------------------

PX Coordinator 2:1 (slice1; segments: 2)

Output: id

-> Partial Seq Scan on public.t1

Output: id

Filter: (t1.id < 10)

Optimizer: PolarDB PX Optimizer

(6 rows)

postgres=# explain (verbose, costs off) select /*+ PARALLEL(4) */ * from t1 where id < 10;

QUERY PLAN

-------------------------------------------

PX Coordinator 8:1 (slice1; segments: 8)

Output: id

-> Partial Seq Scan on public.t1

Output: id

Filter: (t1.id < 10)

Optimizer: PolarDB PX Optimizer

(6 rows)

postgres=# explain (verbose, costs off) select /*+ PARALLEL(0) */ * from t1 where id < 10;

QUERY PLAN

------------------------

Seq Scan on public.t1

Output: id

Filter: (t1.id < 10)

(3 rows)

postgres=# explain (verbose, costs off) select /*+ NO_PARALLEL(t1) */ * from t1 where id < 10;

QUERY PLAN

------------------------

Seq Scan on public.t1

Output: id

Filter: (t1.id < 10)

(3 rows)Combined behavior: system, session, and query settings

The following table shows how results are determined when system, session, and query settings interact.

System (polar_enable_px) |

Session | Query | Result |

|---|---|---|---|

on |

enable (DoP=X) |

No hint | Parallel, DoP=X |

on |

enable (DoP=X) |

PARALLEL(Y) |

Parallel, DoP=Y |

on |

enable (DoP=X) |

NO_PARALLEL |

Serial |

on |

disable (DoP=X) |

No hint | Serial |

on |

disable (DoP=X) |

PARALLEL(Y) |

Serial |

on |

disable (DoP=X) |

NO_PARALLEL |

Serial |

on |

FORCE PARALLEL Z |

No hint | Parallel, DoP=Z |

on |

FORCE PARALLEL Z |

PARALLEL(Y) |

Parallel, DoP=Y |

on |

FORCE PARALLEL Z |

NO_PARALLEL |

Serial |

off |

enable (DoP=X) |

No hint | Serial |

off |

enable (DoP=X) |

PARALLEL(Y) |

Parallel, DoP=Y |

off |

enable (DoP=X) |

NO_PARALLEL |

Serial |

off |

disable (DoP=X) |

No hint | Serial |

off |

disable (DoP=X) |

PARALLEL(Y) |

Serial |

off |

disable (DoP=X) |

NO_PARALLEL |

Serial |

off |

FORCE PARALLEL Z |

No hint | Parallel, DoP=Z |

off |

FORCE PARALLEL Z |

PARALLEL(Y) |

Parallel, DoP=Y |

off |

FORCE PARALLEL Z |

NO_PARALLEL |

Serial |

Example: enable Elastic Parallel Query for a table

The following example demonstrates the effect of Elastic Parallel Query on a single-table query.

Create a test table with one million rows:

CREATE TABLE test(id int);

INSERT INTO test SELECT generate_series(1,1000000);

EXPLAIN SELECT * FROM test;By default, Elastic Parallel Query is disabled and the planner uses a sequential scan:

QUERY PLAN

--------------------------------------------------------

Seq Scan on test (cost=0.00..35.50 rows=2550 width=4)

(1 row)Enable Elastic Parallel Query for the table and run the query again:

ALTER TABLE test SET (px_workers=1);

SET polar_enable_px=on;

EXPLAIN SELECT * FROM test;The plan now uses a PX Coordinator, confirming that parallel execution is active:

QUERY PLAN

-------------------------------------------------------------------------------

PX Coordinator 2:1 (slice1; segments: 2) (cost=0.00..431.00 rows=1 width=4)

-> Seq Scan on test (scan partial) (cost=0.00..431.00 rows=1 width=4)

Optimizer: PolarDB PX Optimizer

(3 rows)To query available read-only nodes and assign specific nodes for Elastic Parallel Query:

-- List all read-only nodes

SHOW polar_cluster_map;

polar_cluster_map

-------------------

node1,node2,node3

(1 row)

-- Use only node1 and node2

SET polar_px_nodes='node1,node2';

SHOW polar_px_nodes;

polar_px_nodes

----------------

node1,node2

(1 row)Performance data

The following results are from a test using five read-only nodes:

-

Full table scan (

SELECT COUNT(*)): Elastic Parallel Query is 60 times faster than single-node parallel execution. -

TPC-H queries: Elastic Parallel Query is 30 times faster than single-node parallel execution.

This test is based on the TPC-H benchmark but does not satisfy all TPC-H benchmark requirements. The results may not be directly comparable to published TPC-H benchmark results.