PolarDB for PostgreSQL (Compatible with Oracle) uses a shared-everything architecture that decouples compute from storage, letting you scale compute nodes independently without copying data.

How it works

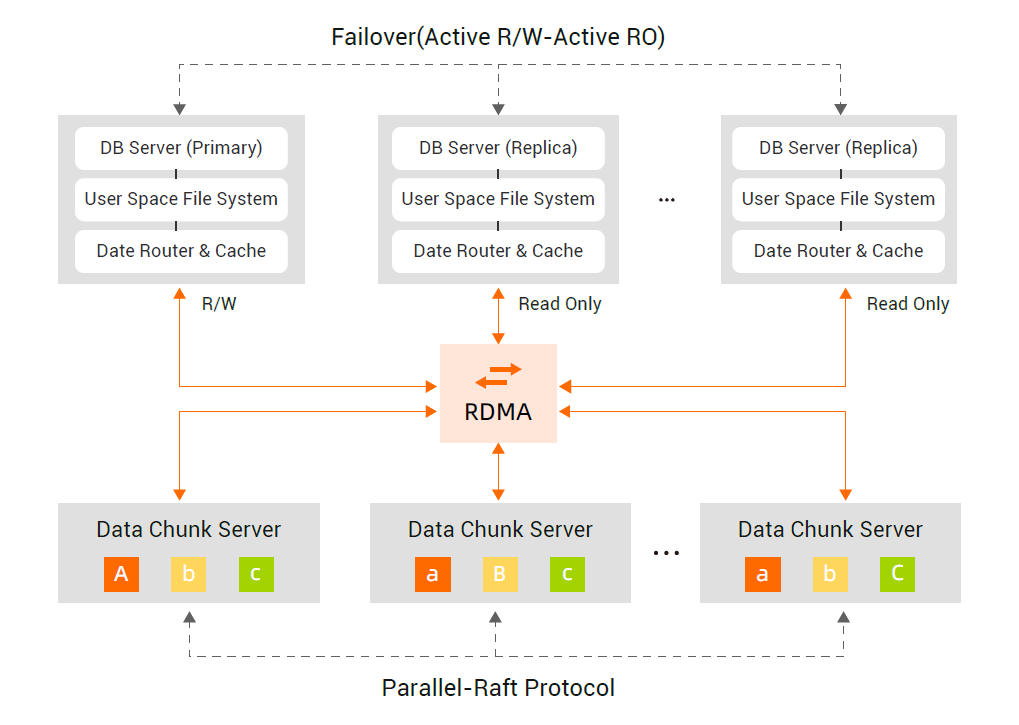

The architecture separates compute nodes from storage nodes. Database files and redo log files are stored in shared storage, so the primary node and all read-only nodes access the same data. Only metadata in the primary node's memory is synchronized to read-only nodes, which eliminates data copying and reduces network overhead. Multiversion Concurrency Control (MVCC) ensures read consistency across nodes.

In traditional database clusters, each replica holds a full copy of the data. Adding a replica requires synchronizing all incremental data, which increases latency as the cluster grows. With PolarDB's shared storage, adding a read-only node completes within 5 minutes and requires no data replication.
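The difference can be pictured with a toy model. The sketch below is illustrative only and is not PolarDB code: the class names (`SharedStorage`, `ComputeNode`, `Cluster`) and the `metadata_version` counter are hypothetical stand-ins for the shared volume and the synchronized in-memory metadata described above.

```python
from dataclasses import dataclass, field


@dataclass
class SharedStorage:
    """Toy stand-in for the shared volume holding data files and redo logs."""
    pages: dict = field(default_factory=dict)


@dataclass
class ComputeNode:
    name: str
    storage: SharedStorage      # a reference to the shared volume, not a copy
    metadata_version: int = 0   # hypothetical marker for synchronized metadata


class Cluster:
    def __init__(self):
        self.storage = SharedStorage()
        self.primary = ComputeNode("primary", self.storage)
        self.read_only = []

    def write(self, key, value):
        # Data is written once, to shared storage, through the primary node.
        self.storage.pages[key] = value
        self.primary.metadata_version += 1
        # Only the small metadata marker is propagated to read-only nodes.
        for node in self.read_only:
            node.metadata_version = self.primary.metadata_version

    def add_read_only_node(self, name):
        # Attaching a node copies no pages; it points at the same volume.
        node = ComputeNode(name, self.storage, self.primary.metadata_version)
        self.read_only.append(node)
        return node


cluster = Cluster()
cluster.write("row:1", "alice")
ro = cluster.add_read_only_node("ro-1")
cluster.write("row:2", "bob")
print(ro.storage is cluster.primary.storage)  # both nodes share one volume
```

In this model the cost of adding a node does not depend on how much data the cluster holds, which is the property the shared-storage design aims for.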

Architecture layers

| Layer | Component | Role |
| --- | --- | --- |
| Proxy | PolarProxy | Routes connections, balances load, and provides a unified endpoint |
| Compute | Primary node + read-only nodes | Parses SQL, runs queries, and manages transactions |
| Network | 25 Gbit/s Remote Direct Memory Access (RDMA) | Low-latency interconnect between compute and storage |
| Storage | Polar File System (PolarFS) | Distributed file system shared by all compute nodes |

Compute layer

A cluster supports up to 16 database nodes.

Primary node: Handles all read and write operations. Each cluster has one primary node.

Read-only node: Handles read operations only. A cluster supports up to 15 read-only nodes. Because read-only nodes connect to the same shared storage volume, a new read-only node is ready to serve traffic within 5 minutes with no data replication.
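As a quick check of the limits above, the following sketch validates a planned topology. The function name `validate_topology` and the constants are hypothetical and are not part of any PolarDB API; only the numbers come from the text.

```python
MAX_NODES = 16            # from the text: up to 16 database nodes per cluster
MAX_READ_ONLY_NODES = 15  # from the text: up to 15 read-only nodes


def validate_topology(primary_count: int, read_only_count: int) -> int:
    """Return the total node count, or raise ValueError if a limit is broken."""
    if primary_count != 1:
        raise ValueError("a cluster has exactly one primary node")
    if not 0 <= read_only_count <= MAX_READ_ONLY_NODES:
        raise ValueError(
            f"read-only nodes must be between 0 and {MAX_READ_ONLY_NODES}"
        )
    total = primary_count + read_only_count
    assert total <= MAX_NODES  # implied by the two checks above
    return total


print(validate_topology(1, 15))
```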

Compute nodes parse and optimize SQL statements, run parallel queries, and handle lock-free high-performance transactions. Memory state is synchronized across compute nodes using a high-throughput physical replication protocol.

Compute nodes also push filter and projection operators down to the storage layer through an intelligent interconnection protocol based on database semantics, reducing the volume of data transferred over the network.
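To see why pushdown reduces network traffic, consider a minimal sketch that counts how many values cross the network with and without it. This is a conceptual model, not the actual interconnection protocol: the sample rows, function names, and the "values shipped" metric are all assumptions made for illustration.

```python
# Hypothetical rows living in the storage layer.
ROWS = [
    {"id": 1, "region": "eu", "amount": 120, "note": "first"},
    {"id": 2, "region": "us", "amount": 75, "note": "second"},
    {"id": 3, "region": "eu", "amount": 310, "note": "third"},
    {"id": 4, "region": "ap", "amount": 42, "note": "fourth"},
]


def count_values(rows):
    """Crude proxy for network volume: number of column values shipped."""
    return sum(len(row) for row in rows)


def scan_without_pushdown(rows, predicate, columns):
    # Storage ships every full row; the compute node filters and projects.
    shipped = [dict(row) for row in rows]
    result = [{c: row[c] for c in columns} for row in shipped if predicate(row)]
    return result, count_values(shipped)


def scan_with_pushdown(rows, predicate, columns):
    # Storage applies the filter and projection, then ships only the result.
    shipped = [{c: row[c] for c in columns} for row in rows if predicate(row)]
    return shipped, count_values(shipped)


def is_eu(row):
    return row["region"] == "eu"


plain_result, plain_cost = scan_without_pushdown(ROWS, is_eu, ["id", "amount"])
pushed_result, pushed_cost = scan_with_pushdown(ROWS, is_eu, ["id", "amount"])
print(plain_cost, pushed_cost)
```

Both scans return the same rows; only the amount of data moved between the layers differs.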

Storage layer

Polar File System (PolarFS) is a distributed file system that Alibaba Cloud developed independently for database workloads. It uses a full user-space I/O stack with kernel bypass, delivering latency and throughput comparable to a local SSD. The distributed cluster architecture provides both high capacity and high performance.

Key storage facts:

Maximum capacity: 500 TB per instance

Elastic scaling: Storage expands online without interrupting your workload

Billing: Charged only for the capacity you use

Reliability: Built on storage technologies developed for large-scale cloud operations

For the design principles behind PolarFS, see *PolarFS: An Ultra-low Latency and Failure Resilient Distributed File System for Shared Storage Cloud Database* (VLDB 2018).

Network

Compute nodes and storage nodes communicate over a 25 Gbit/s RDMA network. The user-mode network protocol layer uses kernel bypass, which keeps transaction latency low and reduces the overhead of synchronizing state across compute nodes.

PolarProxy

PolarProxy is the built-in database proxy that sits between your application and the compute nodes. It provides a single endpoint, so you never need to update your connection string when nodes are added or removed, or when a failover occurs.

PolarProxy also manages traffic under load: it buffers and merges requests, reuses connections, and distributes load across nodes. This keeps the cluster stable in high-concurrency scenarios.
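The routing idea can be illustrated with a toy model. The sketch below is not how PolarProxy is implemented: the `ToyProxy` class, the round-robin policy, and the rule of classifying a statement by its first keyword are all simplifying assumptions. The only facts taken from this page are that writes must go to the primary node and that reads can be served by read-only nodes.

```python
import itertools


class ToyProxy:
    """Hypothetical single endpoint that hides the node list from clients."""

    def __init__(self, primary, read_only_nodes):
        self.primary = primary
        # With no read-only nodes, reads fall back to the primary.
        self._readers = itertools.cycle(list(read_only_nodes) or [primary])

    def route(self, sql):
        words = sql.split()
        is_read = bool(words) and words[0].upper() == "SELECT"
        if is_read:
            # Assumed policy: spread reads round-robin over read-only nodes.
            return next(self._readers)
        # Everything else is treated as a write and sent to the primary.
        return self.primary


proxy = ToyProxy("primary", ["ro-1", "ro-2"])
for statement in ["SELECT 1", "INSERT INTO t VALUES (1)", "SELECT 2"]:
    print(statement, "->", proxy.route(statement))
```

A real proxy also has to account for transactions, session state, and statements such as `SELECT ... FOR UPDATE`, none of which this sketch attempts to handle.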