By default, PolarDB for MySQL synchronizes redo logs from the primary node to read-only nodes through shared storage or over TCP. Under high write workloads, this path can become a bottleneck and degrade the consistency of read-only nodes. Remote Direct Memory Access (RDMA)-based log shipment replaces this path with direct memory-to-memory transfers that bypass both the TCP stack and the receiver's CPU. Compared with the default methods, RDMA-based log shipment doubles log shipment throughput (a 100% improvement) and reduces replication latency by more than 50%.

How it works

RDMA-based log shipment replaces shared-storage and TCP synchronization with direct memory writes between nodes. Traditional methods require the CPUs of both the sender and the receiver to participate (two-sided operations). RDMA instead uses one-sided READ and WRITE operations: the primary node writes directly into the memory of a remote read-only node by using the remote memory address and access key, without involving the receiver's CPU. As a result, RDMA-based log shipment does not consume compute resources on read-only nodes, even under heavy write pressure.
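To make the difference between one-sided and two-sided operations concrete, the following is a minimal Python simulation, not real RDMA code. It assumes a receiver that registers a buffer once and hands out a key. After that, the initiator reads or writes the buffer by (key, address) without any receiver-side handler running. The names `MemoryRegion`, `SimulatedNic`, `rdma_write`, and `rdma_read` are hypothetical stand-ins for what an RDMA NIC and verbs library provide. They are not PolarDB internals.

```python
import secrets
from dataclasses import dataclass, field


class RemoteAccessError(Exception):
    """Raised when a one-sided operation uses an unknown key or an out-of-range address."""


@dataclass
class MemoryRegion:
    """A buffer the receiver registers once; peers then access it without receiver involvement."""
    size: int
    buf: bytearray = field(init=False)
    rkey: int = field(init=False)

    def __post_init__(self) -> None:
        self.buf = bytearray(self.size)
        self.rkey = secrets.randbits(32)  # hypothetical remote key handed to peers


class SimulatedNic:
    """Stands in for the RDMA NIC: it checks (rkey, addr) and copies the bytes itself."""

    def __init__(self) -> None:
        self._regions: dict[int, MemoryRegion] = {}

    def register(self, region: MemoryRegion) -> int:
        self._regions[region.rkey] = region
        return region.rkey

    def _lookup(self, rkey: int, addr: int, length: int) -> MemoryRegion:
        region = self._regions.get(rkey)
        if region is None or addr < 0 or addr + length > region.size:
            raise RemoteAccessError("invalid rkey or address range")
        return region

    def rdma_write(self, rkey: int, addr: int, data: bytes) -> None:
        # One-sided WRITE: no receiver-side handler runs; the bytes simply appear.
        region = self._lookup(rkey, addr, len(data))
        region.buf[addr:addr + len(data)] = data

    def rdma_read(self, rkey: int, addr: int, length: int) -> bytes:
        # One-sided READ: the initiator pulls bytes without a receiver-side handler.
        region = self._lookup(rkey, addr, length)
        return bytes(region.buf[addr:addr + length])


if __name__ == "__main__":
    nic = SimulatedNic()
    ro_log_buffer = MemoryRegion(size=64)  # registered by the read-only node
    key = nic.register(ro_log_buffer)      # key shared with the primary node
    nic.rdma_write(key, 0, b"redo-record-1")
    print(nic.rdma_read(key, 0, 13))       # b'redo-record-1'
```

The read-only side does not call any method between `register` and the moment the data is visible. That absence of receiver-side calls is the property the paragraph above attributes to one-sided operations.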

The log transfer flow works as follows:

1. The redo log buffer of a read-only node acts as a remote image of the primary node's redo log buffer.

2. Before flushing its log buffer to disk, the primary node asynchronously writes the redo log to the remote read-only node's log buffer and synchronizes the write offset.

3. The read-only node reads redo logs from its local log buffer instead of fetching them from shared storage, which speeds up replication.
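The three-step flow can be sketched as follows. This is a simplified model under stated assumptions, not PolarDB's implementation. The primary copies newly appended redo bytes to the same offsets in the read-only node's buffer, and only then publishes the new offset, so the reader never sees bytes that have not arrived. The read-only node consumes redo from its own buffer up to the published offset. For brevity, shipping runs synchronously here, although the text says the primary performs it asynchronously. The sketch also does not model buffer wrap-around. The class and method names (`ReadOnlyLogBuffer`, `PrimaryNode`, `ship`, `flush`) are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ReadOnlyLogBuffer:
    """Read-only node's log buffer, kept as a remote image of the primary's buffer."""
    capacity: int
    data: bytearray = field(init=False)
    published_offset: int = 0  # synchronized by the primary after each write
    applied_offset: int = 0    # how far this node has consumed redo

    def __post_init__(self) -> None:
        self.data = bytearray(self.capacity)

    def read_new_redo(self) -> bytes:
        """Consume redo from the local buffer instead of from shared storage."""
        chunk = bytes(self.data[self.applied_offset:self.published_offset])
        self.applied_offset = self.published_offset
        return chunk


class PrimaryNode:
    def __init__(self, replica: ReadOnlyLogBuffer) -> None:
        self.log_buffer = bytearray()
        self.shipped_offset = 0
        self.flushed_offset = 0
        self.replica = replica
        self.storage = bytearray()  # stands in for shared storage

    def append(self, record: bytes) -> None:
        self.log_buffer += record

    def ship(self) -> None:
        end = len(self.log_buffer)
        if end > self.replica.capacity:
            raise BufferError("remote log buffer full (wrap-around not modeled)")
        # 1) Write new bytes at the same offsets in the remote image.
        self.replica.data[self.shipped_offset:end] = self.log_buffer[self.shipped_offset:end]
        # 2) Only then publish the offset, so the reader never sees unwritten bytes.
        self.replica.published_offset = end
        self.shipped_offset = end

    def flush(self) -> None:
        self.ship()  # ship to the read-only node before the disk flush
        self.storage += self.log_buffer[self.flushed_offset:]
        self.flushed_offset = len(self.log_buffer)


if __name__ == "__main__":
    ro = ReadOnlyLogBuffer(capacity=1024)
    primary = PrimaryNode(ro)
    primary.append(b"mtr-1;")
    primary.append(b"mtr-2;")
    primary.flush()
    print(ro.read_new_redo())  # b'mtr-1;mtr-2;'
```

Writing the data before the offset is the design choice that matters here. If the order were reversed, a reader could observe a published offset that covers bytes that have not been copied yet.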

Limits

The cluster must run PolarDB for MySQL 8.0.1 with revision version 8.0.1.1.33 or later.

RDMA-based log shipment cannot be enabled for a secondary cluster in a Global Database Network (GDN).

Enable RDMA-based log shipment

Set the loose_innodb_polar_log_rdma_transfer parameter to enable this feature.

| Parameter | Level | Description |
|---|---|---|
| loose_innodb_polar_log_rdma_transfer | Global | Enables or disables RDMA-based log shipment. Valid values: ON and OFF. Default value: OFF. |
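After the parameter is set, you may want to confirm the running value from a client. The following is a sketch under two assumptions. First, following the MySQL convention for `loose_`-prefixed options, the server exposes the setting as the system variable `innodb_polar_log_rdma_transfer`, without the prefix. Second, the third-party PyMySQL driver is installed. The helper names are hypothetical. The parsing function is kept separate from the connection so that it can be checked without a live cluster.

```python
# Assumption: the runtime variable name drops the "loose_" prefix of the parameter.
VARIABLE_NAME = "innodb_polar_log_rdma_transfer"
QUERY = f"SHOW GLOBAL VARIABLES LIKE '{VARIABLE_NAME}'"


def rdma_log_shipment_enabled(rows) -> bool:
    """Interpret (Variable_name, Value) rows returned by QUERY; missing means OFF."""
    values = {str(name).lower(): str(value).upper() for name, value in rows}
    return values.get(VARIABLE_NAME) == "ON"


def check_cluster(host: str, user: str, password: str) -> bool:
    """Connect to a cluster endpoint and report whether the feature is ON."""
    import pymysql  # third-party driver: pip install pymysql

    conn = pymysql.connect(host=host, user=user, password=password)
    try:
        with conn.cursor() as cur:
            cur.execute(QUERY)
            return rdma_log_shipment_enabled(cur.fetchall())
    finally:
        conn.close()
```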

Performance

Replication latency

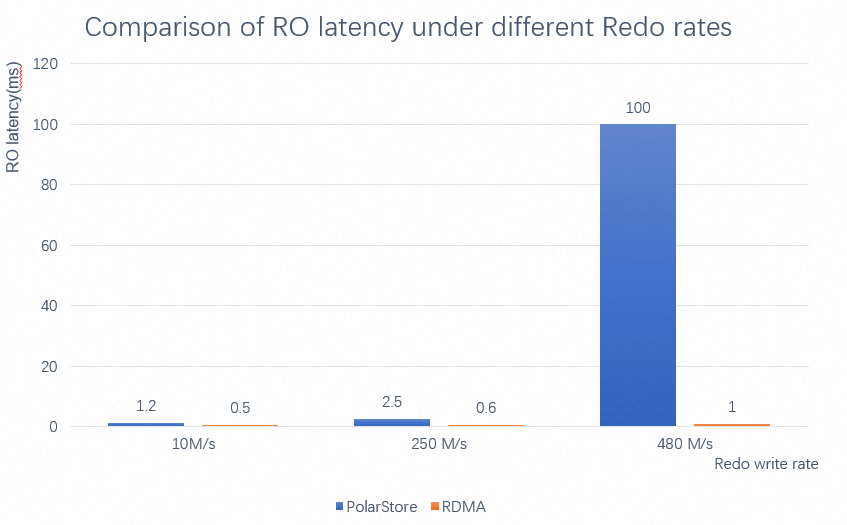

RDMA-based log shipment significantly reduces replication latency on read-only nodes in write-intensive scenarios. Because RDMA bypasses the receiver's CPU, latency stays low even as write pressure increases. TCP-based replication, by contrast, competes with the workload for compute resources on the read-only node.

The following test uses a PolarDB for MySQL 8.0 cluster with 32 CPU cores and 128 GB of memory, and 20 tables that each contain 2 million rows. The test measures replication latency at increasing redo write rates, with RDMA-based log shipment enabled and disabled.

As the redo write rate increases, replication latency with RDMA-based log shipment enabled stays substantially lower than with the default methods.

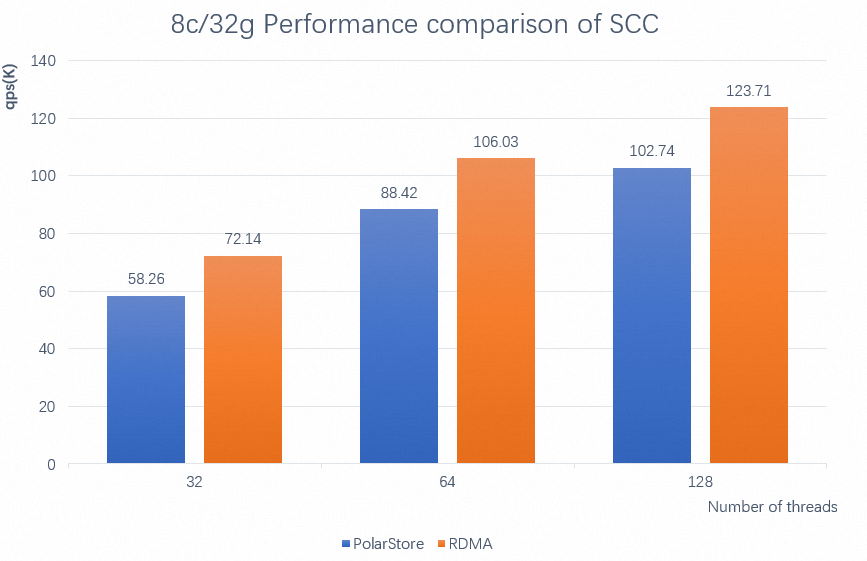

Strict Consistency Cluster (SCC) performance

When global consistency (high-performance mode) is enabled, replication latency on read-only nodes directly affects overall cluster throughput. RDMA reduces this latency without consuming the receiver's CPU, which improves the performance of a Strict Consistency Cluster (SCC), particularly under high write pressure.
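One way to see why replication latency limits throughput under strict consistency is a generic read-your-writes wait. A read on a read-only node blocks until that node has applied redo up to a required log sequence number (LSN). The longer the node lags, the longer each such read waits. The sketch below models only that wait. It does not describe PolarDB's internal consistency mechanism, which may differ, and the class and method names are hypothetical.

```python
import threading


class ReadOnlyNode:
    def __init__(self) -> None:
        self._applied_lsn = 0
        self._cond = threading.Condition()

    def apply_up_to(self, lsn: int) -> None:
        """Called by the replication path once redo up to `lsn` has been applied."""
        with self._cond:
            if lsn > self._applied_lsn:
                self._applied_lsn = lsn
                self._cond.notify_all()

    def consistent_read(self, required_lsn: int, timeout: float) -> bool:
        """Block until this node has applied at least `required_lsn`.

        Returns False if the timeout expires first.
        """
        with self._cond:
            return self._cond.wait_for(
                lambda: self._applied_lsn >= required_lsn, timeout=timeout
            )


if __name__ == "__main__":
    node = ReadOnlyNode()
    # Simulate redo up to LSN 100 arriving 50 ms later.
    threading.Timer(0.05, node.apply_up_to, args=(100,)).start()
    print(node.consistent_read(required_lsn=100, timeout=1.0))  # True
```

In this model, every millisecond of replication lag is a millisecond that a consistent read holds its session. Lower lag therefore lets the same number of threads complete more reads.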

The following test uses a PolarDB for MySQL 8.0 cluster with 8 CPU cores and 32 GB of memory, and 20 tables that each contain 2 million rows. The test measures SCC performance at different thread counts, with RDMA-based log shipment enabled and disabled.

Enabling RDMA-based log shipment improves SCC performance by approximately 20%.