This topic describes the three-layer architecture of PolarDB for MySQL, which uses Compute Express Link (CXL) to decouple compute, memory, and storage. The core innovations of this technology are detailed in the paper "Unlocking the Potential of CXL for Disaggregated Memory in Cloud-Native Databases," which received the Best Paper Award at SIGMOD 2025. PolarDB for MySQL uses PolarCXLMem to build a disaggregated memory system that connects high-performance memory pools directly to compute nodes. With this architecture, CXL memory can be loaded in seconds, and the maximum memory of a single node grows to 8 TB. In sysbench I/O-bound tests, the architecture improves performance by more than 100%. In addition, the proprietary PolarRecv technology, combined with the persistence of CXL memory, speeds up database crash recovery by more than 40 times. CXL-based memory pooling is also suitable for artificial intelligence (AI) training and inference scenarios.

The CXL memory extension feature is currently in canary release. To use this feature, or if you have any questions, submit a ticket.

Applicable scope

Cluster version: MySQL 8.0.2 with minor engine version 8.0.2.2.31.1 or later.

How it works

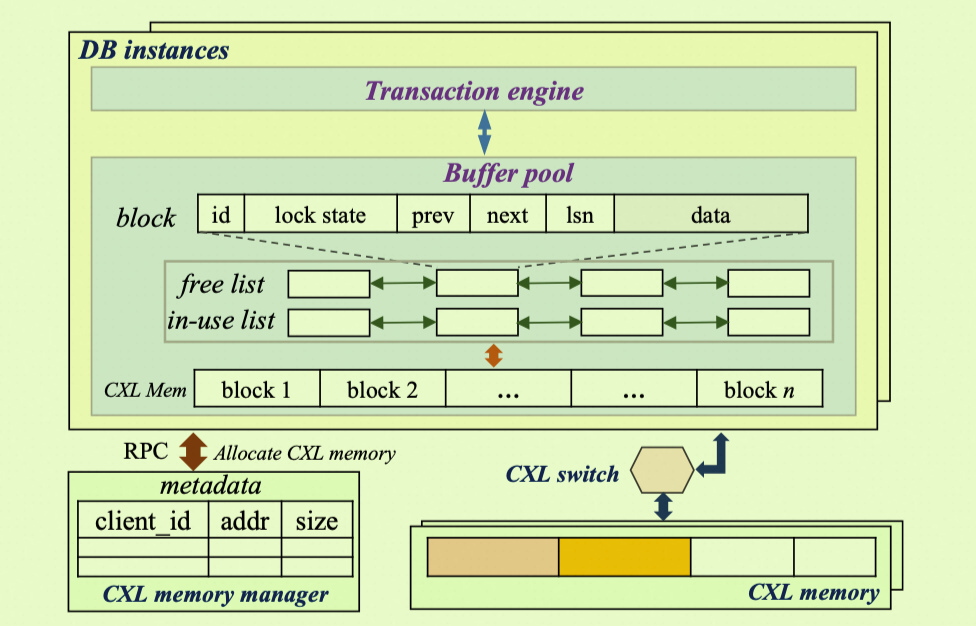

Traditional architectures make it difficult and costly to scale out memory resources. To address this pain point, PolarDB for MySQL uses CXL 2.0 technology to provide an innovative PolarCXLMem disaggregated memory architecture. This architecture uses a CXL memory pool to extend DRAM. The pool connects directly to the compute nodes, and the database engine can read and write data in CXL memory using native Load/Store instructions, just as it would access local memory.

Compared to traditional Remote Direct Memory Access (RDMA) solutions, the PolarCXLMem architecture does not require a complex network protocol stack or data copying. This avoids performance overhead and write amplification. It achieves true decoupling of compute and memory, offering a memory option with near-DRAM performance, lower cost, and higher capacity.
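The write-amplification point can be made concrete with a minimal sketch. It compares the bytes moved for a small in-page update when whole pages are copied with the bytes touched when the CPU accesses memory at cache-line granularity through Load/Store. The 16 KB page size (the InnoDB default), the 64-byte cache line, the aligned-update simplification, and all function names are assumptions for illustration. The sketch models data volume only, not latency or protocol overhead.

```python
import math

PAGE_SIZE = 16 * 1024  # assumption: InnoDB default page size, in bytes
CACHE_LINE = 64        # assumption: typical x86 cache-line size, in bytes


def bytes_moved_page_copy(modified_bytes: int, page_size: int = PAGE_SIZE) -> int:
    """Page-granular path: every touched page is transferred as a whole."""
    if modified_bytes <= 0:
        return 0
    return math.ceil(modified_bytes / page_size) * page_size


def bytes_moved_load_store(modified_bytes: int, cache_line: int = CACHE_LINE) -> int:
    """Load/Store path: only the touched cache lines are read or written.

    Simplification: the update is assumed to start on a cache-line boundary.
    """
    if modified_bytes <= 0:
        return 0
    return math.ceil(modified_bytes / cache_line) * cache_line


def amplification(moved_bytes: int, modified_bytes: int) -> float:
    """Ratio of bytes moved to bytes the application actually changed."""
    if modified_bytes <= 0:
        raise ValueError("modified_bytes must be positive")
    return moved_bytes / modified_bytes


if __name__ == "__main__":
    update = 200  # hypothetical size of a single row update, in bytes
    for label, moved in (
        ("page copy", bytes_moved_page_copy(update)),
        ("load/store", bytes_moved_load_store(update)),
    ):
        print(f"{label}: {moved} bytes moved, "
              f"amplification {amplification(moved, update):.2f}x")
```

Under these assumptions, a 200-byte update moves one full 16,384-byte page on the page-copy path but only four cache lines (256 bytes) on the Load/Store path.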

Unified and non-hierarchical memory design

Unlike the PolarDB three-layer decoupling (RDMA) solution, which accesses remote memory over an RDMA network, PolarCXLMem uses the CXL.mem protocol to directly map CXL memory devices to the host's physical address space. For the database kernel, DRAM and CXL memory form a single, non-hierarchical Buffer Pool. The CPU uses native Load/Store instructions to directly access CXL memory in the same way it accesses local DRAM. This avoids the performance overhead and write amplification caused by swapping pages between different memory levels.

Hardware-level cache coherency

The CXL.cache protocol ensures data consistency between the CPU cache and CXL memory at the hardware level. This eliminates the need for the database software to perform additional cache management, which simplifies the architecture and improves efficiency.

Recovery within seconds using persistent memory (PolarRecv)

The PolarRecv technology leverages the persistence feature of CXL memory. After a database crash, the Buffer Pool data held in CXL persistent memory does not need to be rebuilt by replaying redo logs. Instead, PolarRecv lets the database load the data directly from CXL memory, which reduces the recovery time objective (RTO) to seconds. In specific test scenarios, recovery is more than 40 times faster.

Benefits

Significant cost reduction: The cost per gigabyte of CXL memory is much lower than that of local DRAM, reducing your total database memory costs by 30% to 50%. Compute and memory resources can also scale elastically and independently on a pay-as-you-go basis, which prevents resource waste.

Overcome capacity limitations: CXL memory removes the memory slot limitations of a single physical server and increases the memory capacity of a single compute node to 8 TB. This lets you effectively handle analysis and query scenarios for very large datasets.

Near-DRAM performance: In various online transaction processing (OLTP) workloads, such as point queries, mixed reads, and mixed reads and writes, adding CXL memory improves cluster performance by 20% to 112%. Additionally, the proprietary PolarRecv technology, combined with the persistence of CXL memory, speeds up database crash recovery by more than 40 times.

Scenarios

Memory-intensive online transaction processing (OLTP): In scenarios such as social media, gaming, and e-commerce, core data such as user relationships, product information, and real-time orders must reside in memory to ensure low-latency access. You can use CXL memory to cost-effectively cache larger working sets in memory and reduce I/O overhead.

Large-scale data analysis and reporting (analytical processing, or AP): For services that require complex queries and real-time analysis of massive datasets, the large capacity of CXL memory can hold more data. This accelerates query processing and avoids frequent disk reads caused by insufficient memory.

Development and test environments: When you configure large memory specifications for development and test environments, CXL memory is a cost-effective choice. It lets you simulate large-memory production environments at a lower cost.

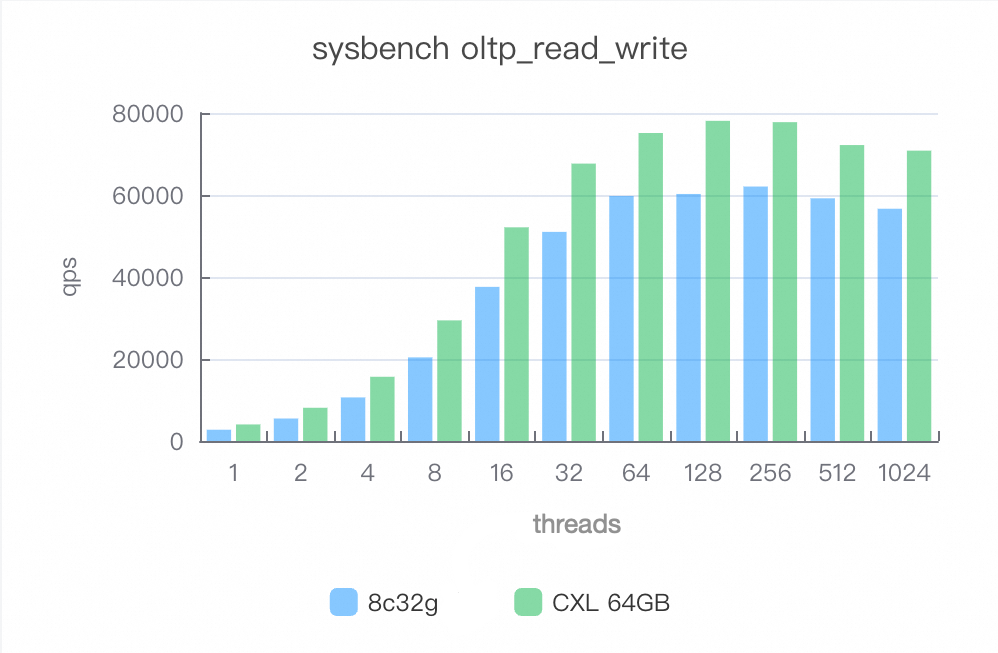

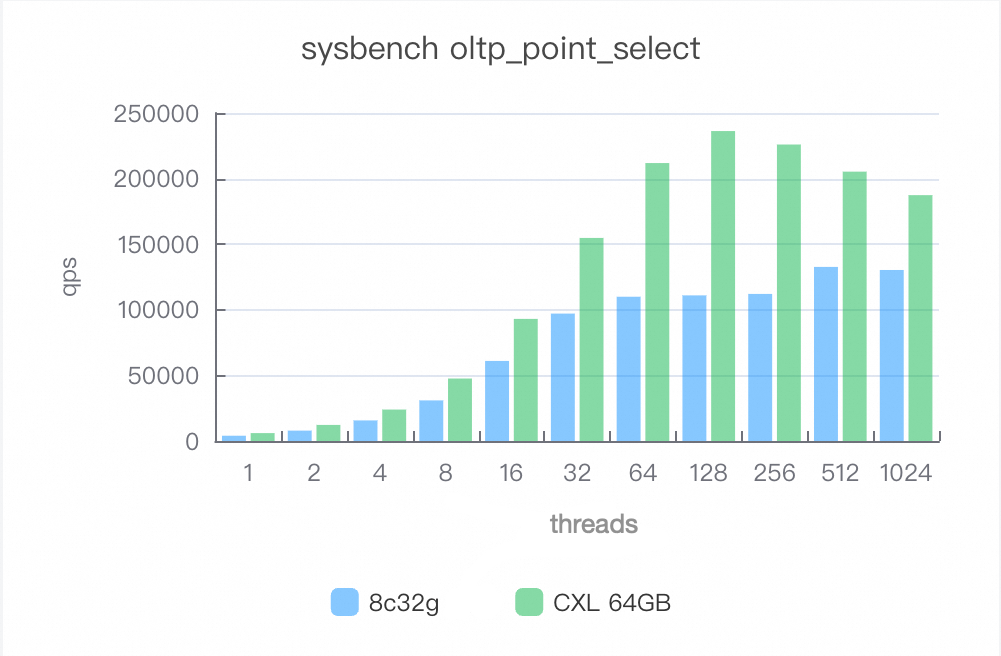

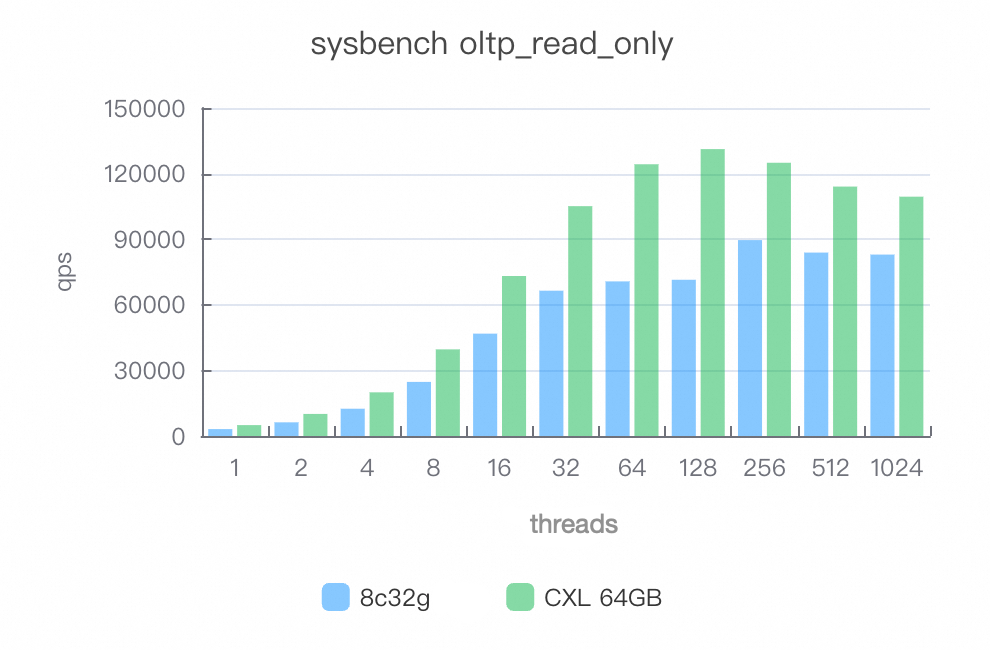

Performance test report

The following performance data is from a specific test environment and is for reference only. Actual performance may vary based on factors such as cluster specifications, workload, data patterns, and parameter settings.

Test environment

Cluster specifications: MySQL 8.0.2, Enterprise Edition, Dedicated, 8 cores, 32 GB of memory.

Test data: 40 tables, with 10,000,000 rows per table.
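A dataset of this shape could be described to sysbench roughly as follows. This is a minimal sketch, not the command line used for the report: the endpoint, credentials, database name, thread count, and run duration are placeholders that the report does not state, and the helper name `sysbench_argv` is hypothetical. Only the table count, the row count, and the three workload names come from this topic.

```python
import shlex

TABLES = 40                       # from the test data description
TABLE_SIZE = 10_000_000           # rows per table, from the test data description
TOTAL_ROWS = TABLES * TABLE_SIZE  # 400,000,000 rows in total

WORKLOADS = ("oltp_point_select", "oltp_read_only", "oltp_read_write")


def sysbench_argv(workload, command, host="<cluster-endpoint>", port=3306,
                  user="<user>", password="<password>", database="sbtest",
                  threads=64, seconds=300):
    """Build a sysbench argument list for one workload and one phase.

    threads, seconds, and all connection values are placeholders,
    not settings taken from the test report.
    """
    if workload not in WORKLOADS:
        raise ValueError(f"unknown workload: {workload}")
    if command not in ("prepare", "run", "cleanup"):
        raise ValueError(f"unknown command: {command}")
    argv = [
        "sysbench", workload,
        "--db-driver=mysql",
        f"--mysql-host={host}",
        f"--mysql-port={port}",
        f"--mysql-user={user}",
        f"--mysql-password={password}",
        f"--mysql-db={database}",
        f"--tables={TABLES}",
        f"--table-size={TABLE_SIZE}",
    ]
    if command == "run":
        # Load parameters only matter for the run phase.
        argv += [f"--threads={threads}", f"--time={seconds}", "--report-interval=10"]
    argv.append(command)
    return argv


if __name__ == "__main__":
    # Print the commands instead of executing them, so they can be reviewed first.
    print(shlex.join(sysbench_argv("oltp_point_select", "prepare")))
    for workload in WORKLOADS:
        print(shlex.join(sysbench_argv(workload, "run")))
```

Printing the commands rather than running them keeps the sketch safe to try without a cluster. Replace the placeholders before passing the list to a process runner.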

Solution comparison

Plan A (Baseline): 8 cores, 32 GB, with 32 GB of total available memory.

Plan B (CXL Extension): 8 cores, 32 GB + 64 GB of CXL memory, with 96 GB of total available memory.

This test compares the performance improvement that results from adding CXL memory to the base specifications. This comparison does not reflect the performance difference between CXL memory and an equivalent amount of pure DRAM. The main purpose of this test is to verify the impact of expanding memory capacity with CXL on database throughput.

Test results

After adding 64 GB of CXL memory, the overall cluster performance, measured in queries per second (QPS), increased by up to 112%. This is because a larger Buffer Pool can cache more data, which reduces disk I/O.

Point queries (oltp_point_select)

After the CXL memory pool is enabled, performance improves by 50% to 112%.

Mixed reads (oltp_read_only)

After the CXL memory pool is enabled, performance improves by 30% to 80%.

Mixed reads and writes (oltp_read_write)

After the CXL memory pool is enabled, performance improves by 20% to 50%.
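The percentages above are relative QPS gains over the baseline (Plan A). The following minimal sketch shows that arithmetic and checks whether a measurement from your own environment falls within the reported range. The function names are hypothetical, and the QPS values in the usage section are illustrative placeholders, not measured results.

```python
# Reported improvement ranges from this topic, in percent.
REPORTED_RANGES = {
    "oltp_point_select": (50, 112),
    "oltp_read_only": (30, 80),
    "oltp_read_write": (20, 50),
}


def improvement_pct(baseline_qps, cxl_qps):
    """Relative QPS gain of the CXL-extended cluster over the baseline, in percent."""
    if baseline_qps <= 0:
        raise ValueError("baseline_qps must be positive")
    return (cxl_qps - baseline_qps) * 100 / baseline_qps


def within_reported_range(workload, baseline_qps, cxl_qps):
    """Check whether a measured gain falls inside the range reported for a workload."""
    low, high = REPORTED_RANGES[workload]
    return low <= improvement_pct(baseline_qps, cxl_qps) <= high


if __name__ == "__main__":
    # Illustrative placeholder values only, not measurements.
    baseline, extended = 10_000, 21_200
    gain = improvement_pct(baseline, extended)
    print(f"gain: {gain:.0f}% ({extended / baseline:.2f}x the baseline QPS)")
    print(within_reported_range("oltp_point_select", baseline, extended))
```

A gain of 112% means that the CXL-extended cluster serves 2.12 times the baseline QPS, not 1.12 times.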