When binary logging is enabled, a large transaction can monopolize the binlog write queue and block all other transactions from committing. This causes a cascade of write latency, slow query surges, and connection spikes. PolarDB for MySQL provides optimized binlog writing for large transactions. This feature redirects large-transaction binlog data to a dedicated file, so other transactions can commit without waiting.

Supported versions

This feature requires PolarDB for MySQL Enterprise Edition or Standard Edition running one of the following database engine versions:

PolarDB for MySQL 8.0.1, revision version 8.0.1.1.42 or later

PolarDB for MySQL 8.0.2, revision version 8.0.2.2.25 or later

To check your cluster's engine version, see the "Query an engine version" section of Engine versions 5.6, 5.7, and 8.0.
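The version requirement above can be expressed as a small pre-flight check. The following is a minimal sketch, not an official tool: the function name `supports_large_trx_optimization` is hypothetical, and it assumes the revision version is available as a dotted string of integers such as `8.0.1.1.42`. How you obtain that string from your cluster is not covered here.

```python
# Minimum revision per engine series, taken from the "Supported versions" list.
MIN_REVISION = {
    (8, 0, 1): (8, 0, 1, 1, 42),
    (8, 0, 2): (8, 0, 2, 2, 25),
}


def supports_large_trx_optimization(revision: str) -> bool:
    """Return True if a dotted revision string meets the documented minimum.

    Hypothetical helper: assumes every component of the revision is an integer.
    """
    parts = tuple(int(p) for p in revision.strip().split("."))
    # The first three components select the engine series (8.0.1 or 8.0.2).
    minimum = MIN_REVISION.get(parts[:3])
    # Unknown series (for example 5.7) are treated as unsupported.
    return minimum is not None and parts >= minimum


if __name__ == "__main__":
    for rev in ("8.0.1.1.41", "8.0.1.1.42", "8.0.2.2.30", "5.7.1.0.30"):
        print(rev, supports_large_trx_optimization(rev))
```

Tuple comparison keeps the check simple: components are compared left to right as integers, so `8.0.2.2.100` correctly ranks above `8.0.2.2.25`, which a plain string comparison would get wrong.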

Use cases

Enable this feature when large transactions run alongside other transactions in the same cluster. It is suited for the following scenarios:

Mixed-workload clusters: Large batch writes or bulk data imports coexist with OLTP transactions.

Latency-sensitive workloads: You need to prevent large transaction commits from causing latency spikes or slow query surges for concurrent transactions.

Connection surge prevention: Large transaction commits are triggering application-level retries and connection count spikes.

How it works

At commit time, each transaction flushes its binlog data from its in-memory binlog cache to the shared binlog file. When multiple transactions commit concurrently, they queue up for this write. If one transaction (for example, one with 1 GB of binlog data) holds the queue for an extended period, every other transaction in the queue must wait. This produces a cascade of problems: slow queries surge, write locks are held longer, applications retry failed operations, and connection counts spike.

After you enable the feature, PolarDB for MySQL redirects the binlog data of any qualifying large transaction to a dedicated binlog file. This prevents the large transaction from blocking other transactions in the write queue, so concurrent transactions can commit without waiting.
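The queueing effect described above can be illustrated with a toy model. The sketch below is not how PolarDB for MySQL is implemented. It assumes a single serialized write queue, a fixed write throughput, and that a redirected transaction occupies no time in the shared queue. The names `Txn` and `queue_waits`, the 100 MB/s throughput, and the 512 MB threshold are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass

MB = 1024 ** 2
GB = 1024 ** 3


@dataclass
class Txn:
    name: str
    binlog_bytes: int


def queue_waits(txns, bytes_per_sec, threshold=None):
    """Return {name: seconds spent waiting before the transaction's own write starts}.

    Toy model: transactions write to the shared binlog one at a time, in order.
    If `threshold` is set, any transaction larger than it is treated as
    redirected to a dedicated file and adds no time to the shared queue
    (a simplifying assumption).
    """
    waits = {}
    clock = 0.0
    for txn in txns:
        waits[txn.name] = clock
        redirected = threshold is not None and txn.binlog_bytes > threshold
        if not redirected:
            clock += txn.binlog_bytes / bytes_per_sec
    return waits


if __name__ == "__main__":
    workload = [Txn("bulk_import", 1 * GB), Txn("order_1", 1 * MB), Txn("order_2", 1 * MB)]
    print("feature off:", queue_waits(workload, bytes_per_sec=100 * MB))
    print("feature on: ", queue_waits(workload, bytes_per_sec=100 * MB, threshold=512 * MB))
```

In this model, the two small transactions wait roughly ten seconds behind the 1 GB write when the feature is off, and almost no time when the large transaction is redirected. Real commit latency depends on storage throughput and concurrency, so treat the numbers as illustrative only.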

The dedicated binlog file has a standard header that includes FORMAT_DESCRIPTION_EVENT and PREVIOUS_GTIDS_LOG_EVENT. It also includes a special placeholder called IGNORABLE_LOG_EVENT, which downstream systems automatically ignore.

Enable optimized binlog writing

Set loose_enable_large_trx_optimization to ON to enable the feature. Use loose_binlog_large_trx_threshold_up to set the binlog size threshold that triggers the optimization.

Both parameters take effect immediately without a cluster restart.

For instructions on setting parameters, see Configure cluster and node parameters.

| Parameter | Scope | Default value | Valid values | Description |
|---|---|---|---|---|
| loose_enable_large_trx_optimization | Global | OFF | OFF, ON | Enables or disables optimized binlog writing for large transactions. |
| loose_binlog_large_trx_threshold_up | Global | 1 GB | 10 MB–300 GB | The binlog size threshold above which a transaction is written to a dedicated binlog file instead of the shared binlog file. |
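To make the interaction of the two parameters concrete, the following sketch checks a candidate threshold against the documented range and models which transactions would qualify. It is an illustration under assumptions, not the engine's logic: the helper names are hypothetical, sizes are expressed in bytes using binary units (1 MB = 1024 × 1024 bytes), and "above" is read as strictly greater than the threshold.

```python
MB = 1024 ** 2
GB = 1024 ** 3

# Range and default from the parameter table (binary units are an assumption).
MIN_THRESHOLD = 10 * MB
MAX_THRESHOLD = 300 * GB
DEFAULT_THRESHOLD = 1 * GB


def check_threshold(value_bytes: int) -> int:
    """Return value_bytes if it lies in the documented range, else raise ValueError."""
    if not MIN_THRESHOLD <= value_bytes <= MAX_THRESHOLD:
        raise ValueError(
            f"threshold {value_bytes} is outside {MIN_THRESHOLD}..{MAX_THRESHOLD} bytes"
        )
    return value_bytes


def is_redirected(binlog_bytes: int, enabled: bool,
                  threshold: int = DEFAULT_THRESHOLD) -> bool:
    """Model whether a transaction's binlog would go to a dedicated file.

    Assumes the feature switch must be ON and the size must strictly
    exceed the threshold.
    """
    return enabled and binlog_bytes > threshold


if __name__ == "__main__":
    print(check_threshold(64 * MB))
    print(is_redirected(2 * GB, enabled=True))
    print(is_redirected(2 * GB, enabled=False))
```

With the default threshold of 1 GB, only unusually large transactions qualify. A lower threshold redirects more transactions, so choose it based on the binlog sizes your batch jobs actually produce.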

Limitations

Downstream replication nodes cannot use database-level (per-database) multi-threaded replication when this feature is enabled. Do not set slave_parallel_workers>0 and slave_parallel_type='DATABASE' together. You can use slave_parallel_workers>0 and slave_parallel_type='LOGICAL_CLOCK' instead.
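The replication restriction above can be captured as a simple configuration check to run before enabling the feature. This is a minimal sketch: the function name `replica_settings_compatible` is hypothetical, and the two arguments are assumed to hold the values of `slave_parallel_workers` and `slave_parallel_type` that you have already read from the downstream node.

```python
def replica_settings_compatible(slave_parallel_workers: int,
                                slave_parallel_type: str) -> bool:
    """Return False for the one combination the limitation rules out.

    The combination is: more than zero parallel workers together with
    the DATABASE parallel type. The type is compared case-insensitively.
    """
    uses_database_parallelism = (
        slave_parallel_workers > 0
        and slave_parallel_type.strip().upper() == "DATABASE"
    )
    return not uses_database_parallelism


if __name__ == "__main__":
    print(replica_settings_compatible(4, "DATABASE"))
    print(replica_settings_compatible(4, "LOGICAL_CLOCK"))
    print(replica_settings_compatible(0, "DATABASE"))
```

Single-threaded replication (zero workers) passes the check regardless of the parallel type, because the limitation only concerns multi-threaded replication by database.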

The following additional limitation applies:

Checksum is not supported for the binlog files of large transactions.

Performance comparison

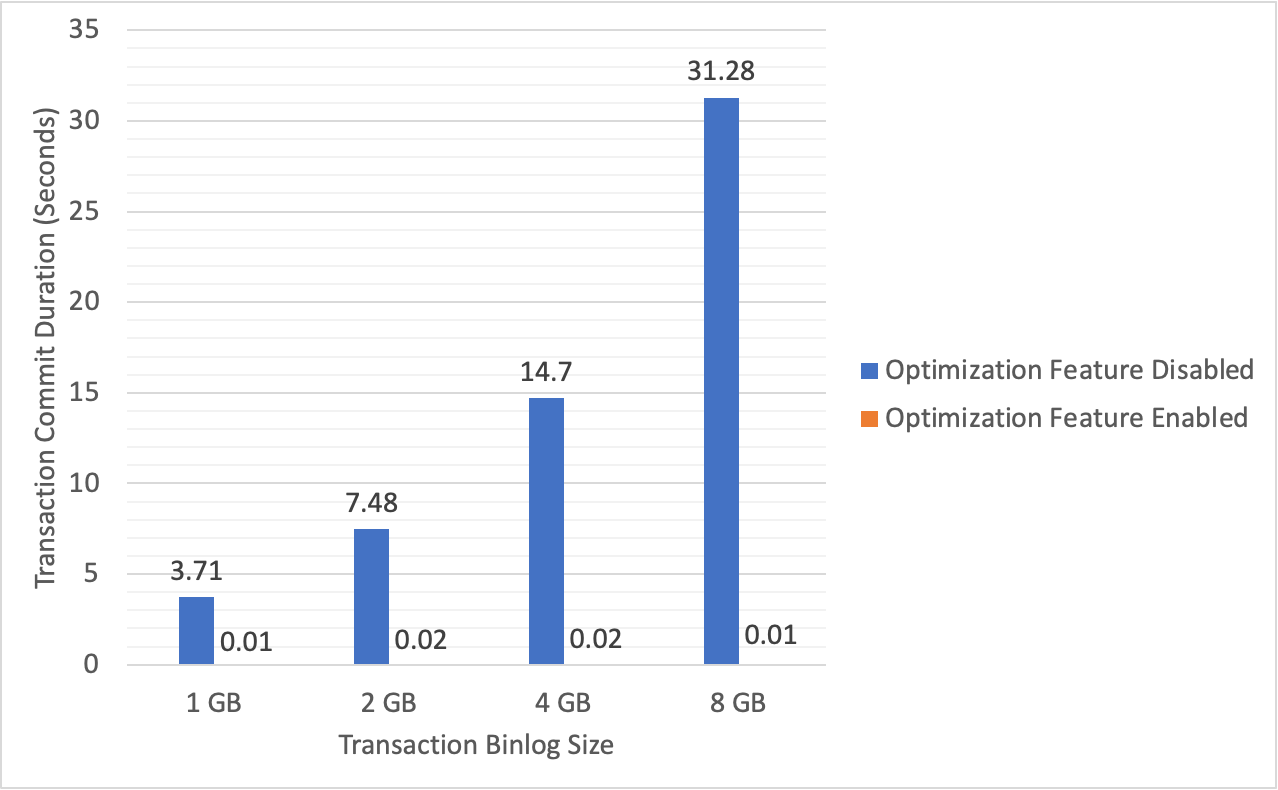

The following figure shows the time required to commit large transactions on a cluster using the PSL5 storage type, before and after enabling the feature.

After enabling the feature, commit time for large transactions drops substantially. This eliminates the heavy I/O load and prolonged write locks that large transaction commits previously caused.