DSW instances in public and dedicated resource groups have limited storage, and data is cleared after a set period. Mount a Dataset or storage path to expand storage, persist data, or share data across instances.

- Public resource group: Data is stored on a free cloud disk with 100 GiB of space. Data is cleared after you delete the instance or if the instance remains stopped for more than 15 days.

- Dedicated resource group: Data is stored on the instance system disk. Data is cleared when the instance is stopped or deleted.

- After 15-day cleanup: If your instance was stopped for more than 15 days, symbolic links to mounted storage (for example, from /home/admin/workspace to /mnt/data) are removed. To restore access:

  - Restart the instance.

  - Recreate the symbolic link manually:

        ln -s /mnt/data /home/admin/workspace/data

  - Or remount the dataset or storage path from the DSW console.
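The manual symbolic link step can also be scripted, for example from a notebook cell. The following is a minimal sketch using only the Python standard library; the helper name `ensure_symlink` is hypothetical and not part of any PAI tooling. It creates the link only when nothing already exists at the link path.

```python
import os


def ensure_symlink(target: str, link_path: str) -> bool:
    """Create link_path -> target if link_path is free; return True if created."""
    if os.path.islink(link_path) or os.path.exists(link_path):
        # Something (a link, file, or directory) already occupies the path;
        # leave it untouched rather than overwrite it.
        return False
    parent = os.path.dirname(link_path)
    if parent:
        os.makedirs(parent, exist_ok=True)
    os.symlink(target, link_path)
    return True
```

With the paths from the example above, the call would be `ensure_symlink("/mnt/data", "/home/admin/workspace/data")`. A broken link left at the link path is not replaced by this sketch; remove it first if that is what you need.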

Comparison: Dataset vs. storage path

Mount a Dataset for long-term storage and team collaboration. Mount a storage path directly for temporary tasks or quick storage expansion.

| Feature | Mount a dataset | Mount a storage path |
| --- | --- | --- |
| Cloud products | OSS, NAS, CPFS | OSS, NAS, CPFS |
| Version management | Supports version management and data acceleration. | Does not support version management. |
| Data sharing | Supports sharing across multiple instances. | Available only to the current instance. |
| Operational complexity | Requires creating and configuring a dataset. | Simple; requires only a path. |
| Use cases | Long-term storage, team collaboration, high security requirements. | Temporary tasks, rapid storage expansion. |

Comparison: Startup mounting vs. dynamic mounting

Two mounting methods are available: startup mounting and dynamic mounting.

- Startup mounting: Configured when you create an instance or change its configuration. Requires an instance restart to take effect.

- Dynamic mounting: Mounts storage from within a running instance by using the PAI SDK. Does not require an instance restart.

Limitations

- Unique path: Each dataset mount path must be unique.

- Write limit: Avoid frequent write operations in OSS mount directories. Frequent writes can degrade performance or cause operations to fail.

- Git limit: Git operations are not supported in OSS mount directories. Run Git commands in local directories or other non-mounted paths.
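One way to respect the write limit is to do the frequent small writes on local disk and copy the finished file into the mount directory once. The following is a minimal sketch of that pattern using only the standard library; the helper name `write_locally_then_copy` is hypothetical, and the mount directory is whatever path you configured.

```python
import os
import shutil
import tempfile


def write_locally_then_copy(filename: str, chunks, mount_dir: str) -> str:
    """Write all chunks to a local temp file, then copy it to mount_dir once."""
    with tempfile.TemporaryDirectory() as local_dir:
        local_path = os.path.join(local_dir, filename)
        with open(local_path, "w", encoding="utf-8") as f:
            for chunk in chunks:
                f.write(chunk)  # the many small writes touch local disk only
        os.makedirs(mount_dir, exist_ok=True)
        # A single copy operation against the mounted directory.
        return shutil.copy(local_path, os.path.join(mount_dir, filename))
```

The function returns the destination path. The same idea applies to Git: keep the repository in a local directory and copy only the resulting artifacts to the mount.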

Dynamic mounting limits

- Read-only limit: Dynamic mounting is read-only. It is suitable for fast mounting or temporary read-only access.

- Storage type restrictions: Dynamic mounting supports only OSS and NAS.

- Resource restrictions: Dynamic mounting does not support Lingjun resources.
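The storage type restriction can be checked before a mount call is attempted. The following is a small sketch that inspects only the URI scheme; the helper name `is_dynamic_mount_uri` is hypothetical and not part of the PAI SDK. Dataset IDs have no scheme, so this check does not cover them.

```python
from urllib.parse import urlparse

# Schemes taken from the limits above; CPFS is deliberately absent.
DYNAMIC_MOUNT_SCHEMES = {"oss", "nas"}


def is_dynamic_mount_uri(source: str) -> bool:
    """Return True if source is an oss:// or nas:// URI."""
    return urlparse(source).scheme in DYNAMIC_MOUNT_SCHEMES
```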

Mount at startup

Configure the Dataset Mounting or Storage Path Mounting parameters on the instance configuration page. Restart the instance to apply the configuration.

Mount a dataset

- Create a dataset

  Log in to the PAI console. Go to AI Asset Management > Dataset and create a custom or public dataset. For more information, see Create and manage datasets.

- Mount the dataset

  On the configuration page (which appears when you create a new DSW instance), find the Mount Dataset section. For an existing instance, click Change Settings to open this page. Click Custom Dataset, select the dataset you created, and enter a Mount Path.

Notes on mounting a custom dataset

- CPFS dataset: The DSW instance VPC must match the CPFS file system VPC. Mismatched VPCs cause instance creation to fail.

- NAS dataset: Configure the network and select a security group.

- Dedicated resource group: The first dataset must be of the NAS type. This dataset is mounted to both your specified path and the default DSW working directory at /home/admin/workspace.

Mount a storage path directly

This section uses OSS path mounting as an example.

- Create an OSS bucket

  Activate OSS and create a bucket.

  Important: The bucket region must match the PAI region. The bucket region cannot be changed after creation.

- Mount the OSS path

  On the DSW instance configuration page (opened when you create an instance or by clicking Change Settings for an existing instance), find Storage Path Mounting. Click OSS, select the OSS bucket path you created, and enter a Mount Path. Advanced Configurations is empty by default; configure it as needed. For more information, see Advanced mount configuration.

Dynamic mounting

Mount a Dataset or storage path using PAI SDK within a DSW instance without instance restart.

Note: Dynamic mounting is read-only, supports only OSS and NAS, and does not support Lingjun resources.

Prerequisites

- Install the PAI Python SDK. Open the DSW instance Terminal and run the following command. Python 3.8 or later is required. The version specifier is quoted so that the shell does not interpret >= as an output redirection.

      python -m pip install "pai>=0.4.11"

- Configure the SDK access key for PAI.

  - Method 1: Configure the DSW instance with the default PAI role or a custom RAM role. Open the instance configuration page and, at the bottom, click Show More to select the instance RAM role. For more information, see Associate a RAM role with a DSW instance.

  - Method 2: Configure access manually by using the command-line tool provided by the PAI Python SDK. Run the following command in Terminal to configure the access parameters. For an example, see Initialization.

        python -m pai.toolkit.config

Examples

The following examples mount storage without reconfiguring or restarting the DSW instance.

- Mount to the default path

  Data is mounted to the default mount path inside the instance. The default path for official pre-built images is /mnt/dynamic/.

      from pai.dsw import mount

      # Mount an OSS path
      mount_point = mount("oss://<YourBucketName>/Path/Data/Directory/")

      # Mount a dataset. The input parameter is the dataset ID.
      # mount_point = mount("d-m7rsmu350********")

- Mount to a specified path

  Dynamic mounting requires the data to be mounted to a specific path (or one of its subdirectories) within the container. Get this dynamic mount path by using the SDK API.

      from pai.dsw import mount, default_dynamic_mount_path

      # Get the default mount path of the instance
      default_path = default_dynamic_mount_path()

      mount_point = mount(
          "oss://<YourBucketName>/Path/Data/Directory",
          mount_point=default_path + "tmp/output/model",
      )

- Dynamically mount NAS

      from pai.dsw import mount, default_dynamic_mount_path

      # Get the default mount path of the instance
      default_path = default_dynamic_mount_path()

      # Mount NAS. The NAS endpoint and the instance must be in the same VPC.
      # Replace <region> with the region ID, such as cn-hangzhou.
      mount("nas://06ba748***-xxx.<region>.nas.aliyuncs.com/", default_path + "mynas3/")

- View all mount configurations in the instance

      from pai.dsw import list_dataset_configs

      print(list_dataset_configs())

- Unmount mounted data

      from pai.dsw import mount, unmount

      mount_point = mount("oss://<YourBucketName>/Path/Data/Directory/")

      # The input parameter is the mount path (the MountPath returned by list_dataset_configs).
      # After unmount runs, the change takes a few seconds to take effect.
      unmount(mount_point)
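The "Mount to a specified path" example builds its target by concatenating strings onto the default dynamic mount path. The following is a minimal sketch of a stricter way to build that target; the helper name `resolve_mount_point` is hypothetical and not part of the PAI SDK. It uses only the standard library and rejects any subdirectory that would resolve outside the default path.

```python
import posixpath


def resolve_mount_point(default_path: str, subdir: str) -> str:
    """Join subdir onto default_path and reject results outside default_path."""
    base = posixpath.normpath(default_path)
    candidate = posixpath.normpath(posixpath.join(base, subdir))
    # The candidate must be the base itself or sit strictly below it.
    if candidate != base and not candidate.startswith(base.rstrip("/") + "/"):
        raise ValueError(f"{subdir!r} resolves outside {default_path!r}")
    return candidate
```

The returned string could then be passed as the `mount_point` argument shown in the example, in place of the concatenated path.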

Advanced mount configuration

Adapt to different read/write scenarios (fast reads/writes, incremental writes, read-only access) and optimize read/write performance by setting advanced parameters when configuring a mount.

View mount configurations

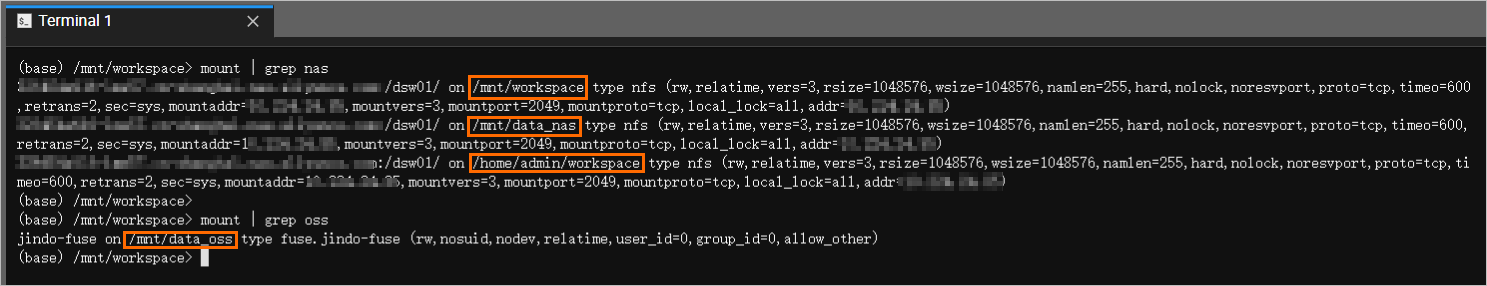

Open the DSW instance and, in Terminal, enter the following commands to verify the NAS and OSS dataset mounts.

    # View all mounts
    mount

    # Query NAS mount path
    mount | grep nas

    # Query OSS mount path
    mount | grep oss

Output similar to the following indicates a successful mount.

- The NAS dataset is mounted to the /mnt/data_nas, /mnt/data, and /home/admin/workspace directories. /mnt/data_nas is the mount path specified when creating the DSW instance, and the other two paths are the default working directories where the first NAS dataset is mounted. As long as your NAS volume and service run correctly, your data and code are stored persistently.

- The OSS dataset is mounted to the /mnt/data_oss directory in the DSW instance.
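The same check can be run from Python, for example inside a notebook. The following is a minimal sketch that mirrors `mount | grep <keyword>` by scanning text in the /proc/mounts format (device, mount point, file system type, options); the helper name `mount_points_matching` is hypothetical.

```python
def mount_points_matching(mounts_text: str, keyword: str) -> list:
    """Return mount points from /proc/mounts-style text whose line contains keyword."""
    points = []
    for line in mounts_text.splitlines():
        fields = line.split()
        # Fields: device, mount point, file system type, options, dump, pass.
        if len(fields) >= 2 and keyword in line:
            points.append(fields[1])
    return points
```

On a Linux instance, read the file and pass its contents, for example `mount_points_matching(open("/proc/mounts").read(), "nas")`. Like `grep`, the match is a plain substring test, so an unrelated line that happens to contain the keyword is also returned.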

FAQ

Q: Why are my mounted OSS files not showing up in the JupyterLab file browser?

The JupyterLab file browser displays the default working directory (/home/admin/workspace), but the OSS path was likely mounted to a different location (for example, /mnt/data).

Three ways to access your files:

- Use the absolute path in code: The files are already mounted successfully. In code, use the full mount path to access them, for example, open('/mnt/data/my_file.csv').

- Mount to a workspace subdirectory: To see the files in the UI, set the mount path to a subdirectory of the working directory when configuring the mount, such as /mnt/data/my_oss_data. After the mount completes, the OSS files appear in the my_oss_data folder in the file browser.

- Access via Terminal: In the DSW Terminal, use the cd /mnt/data command to enter the mount directory. Then use commands such as ls to view and manage files.

Q: Why do I get a "Transport endpoint is not connected" or "Input/output error" when accessing a mounted OSS path in DSW?

These errors indicate that the connection between the DSW instance and the OSS mount has been lost. Common causes:

- Insufficient RAM role permissions: The RAM role configured for the DSW instance may lack the permissions needed to access OSS. Ensure that the role (for example, AliyunPAIDLCAccessingOSSRole) is correctly assigned and has read/write permissions for the target bucket.

- Mount service crash (OOM): During intensive I/O operations (for example, reading many small files), the underlying mount service (ossfs or JindoFuse) can run out of memory and crash. Mitigate this by adjusting memory limits or disabling the metadata cache in the Advanced Configuration of the mount settings. For more information, see JindoFuse.

To restore the connection:

- For startup mounts: The simplest solution is to restart the DSW instance. The system automatically re-establishes the mount connection.

- For dynamic mounts: Run a remount command by using the PAI SDK in a notebook or terminal, without restarting the instance.
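On Linux, the two error messages in this question correspond to the error codes ENOTCONN ("Transport endpoint is not connected") and EIO ("Input/output error"). The following is a minimal sketch of a health check that a script could run before reading from a mount; the helper name `mount_is_healthy` is hypothetical, and what to do on failure (restart or remount) is left to the caller.

```python
import errno
import os

# Error codes behind the two messages discussed above.
LOST_MOUNT_ERRNOS = {errno.ENOTCONN, errno.EIO}


def mount_is_healthy(path: str) -> bool:
    """Return False if listing path fails with ENOTCONN or EIO."""
    try:
        os.listdir(path)
    except OSError as exc:
        if exc.errno in LOST_MOUNT_ERRNOS:
            return False
        raise  # other errors (missing path, permissions) are not mount loss
    return True
```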

Q: What storage can I mount in DSW, and is it possible to mount Alibaba Cloud Drive or MaxCompute tables?

Mount storage from OSS, NAS, and CPFS by creating a Dataset or by mounting a storage path directly. However, some services cannot be mounted as a file system:

- Alibaba Cloud Drive: Direct mounting is not supported. The recommended approach is to first upload the data to an OSS bucket and then mount that bucket in the DSW instance.

- MaxCompute tables: A MaxCompute table cannot be mounted as a directory. To access data in MaxCompute, use the appropriate SDK, such as PyODPS, within your DSW code. For more information, see Use PyODPS to read and write MaxCompute tables.

Q: Will code and data be lost if DSW instance is stopped or deleted? How to persist data and code?

Data stored on the local disk of a DSW instance is temporary and is eventually cleared:

- Public resource group: Data is cleared if the instance is deleted or remains stopped for more than 15 days.

- Dedicated resource group: Data is cleared as soon as the instance is stopped or deleted.

To ensure that work is not lost, use an externally mounted storage service.

- Persistence solution: Save all important files (including code, datasets, and models) to a mounted OSS or NAS path. Data stored in your own OSS or NAS is persistent and independent of the DSW instance lifecycle.

- Migration solution: To move work to a new DSW instance, mount the same OSS or NAS path that contains the persisted data. This is the most efficient migration method.
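The persistence solution amounts to copying anything that lives only on the instance disk into a mounted path. The following is a minimal sketch using the standard library; the helper name `backup_directory` is hypothetical, and the source and destination paths are whatever you use in your instance. It relies on the `dirs_exist_ok` parameter of `shutil.copytree`, which requires Python 3.8 or later.

```python
import shutil


def backup_directory(src_dir: str, mounted_dir: str) -> str:
    """Copy src_dir into mounted_dir, overwriting files that already exist."""
    # dirs_exist_ok=True lets repeated backups reuse the same destination.
    return shutil.copytree(src_dir, mounted_dir, dirs_exist_ok=True)
```

Because the copy rewrites every file on each run, this suits occasional backups rather than frequent ones, in line with the write limit for OSS mount directories.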

References

For more information, see DSW FAQ.