Pre-trained large language models (LLMs) usually need fine-tuning before they meet the requirements of a specific task. This guide covers fine-tuning strategies (SFT and DPO), parameter-efficient techniques (LoRA and QLoRA), and hyperparameter tuning to improve model performance.

Fine-tuning methods

SFT/DPO

Model Gallery supports supervised fine-tuning (SFT) and direct preference optimization (DPO). LLM training typically progresses through the following phases:

Pre-training (PT)

The initial training phase where the model learns grammar, logical reasoning, and common sense knowledge from large-scale corpora.

- Objective: Develop comprehensive language understanding, logical reasoning, and common sense knowledge.
- Example: Pre-trained models in Model Gallery (Qwen series, Llama series).

Supervised fine-tuning (SFT)

Refines the pre-trained model for specific scenarios such as conversation and question-answering.

- Objective: Improve output format and content quality.
- Scenario: Generating professional domain-specific answers (e.g., medical queries).
- Solution: Fine-tune with domain-specific Q&A datasets (e.g., medical literature).

Preference optimization (PO)

After supervised fine-tuning, models may generate grammatically correct but factually inaccurate or value-misaligned responses. Preference optimization further refines conversational abilities and aligns models with human values. Main approaches include Proximal Policy Optimization (PPO) using reinforcement learning, and Direct Preference Optimization (DPO). DPO uses an implicit reinforcement learning paradigm without explicit reward models, achieving more stable training than PPO.

Direct preference optimization (DPO)

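- DPO uses a trainable LLM and a reference model. The reference model is a pre-trained LLM with frozen parameters that guides training and keeps the trained model's outputs from deviating too far.
- Training data: Triplets (prompt, chosen, rejected), where chosen is the preferred outcome $y_w$ and rejected is the non-preferred outcome $y_l$ for the prompt $x$.
- The DPO loss function maximizes the probability of generating preferred outcomes relative to the reference model, while minimizing the probability of non-preferred outcomes:

$$
\mathcal{L}_{\mathrm{DPO}}(\pi_\theta; \pi_{\mathrm{ref}}) = -\mathbb{E}_{(x, y_w, y_l) \sim D}\left[\log \sigma\left(\beta \log \frac{\pi_\theta(y_w \mid x)}{\pi_{\mathrm{ref}}(y_w \mid x)} - \beta \log \frac{\pi_\theta(y_l \mid x)}{\pi_{\mathrm{ref}}(y_l \mid x)}\right)\right]
$$

- $\pi_\theta$, $\pi_{\mathrm{ref}}$: The trainable LLM and the frozen reference model.
- σ: Sigmoid function mapping results to (0, 1).
- β: Hyperparameter (typically 0.1–0.5) controlling loss function sensitivity.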
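The loss described above can be sketched for a single (prompt, chosen, rejected) triplet. This is a minimal illustration of the standard DPO formulation, not Model Gallery's implementation; the function names and the log-probability values are hypothetical.

```python
import math


def log_sigmoid(x: float) -> float:
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def dpo_loss(policy_chosen_logp: float, policy_rejected_logp: float,
             ref_chosen_logp: float, ref_rejected_logp: float,
             beta: float = 0.1) -> float:
    """DPO loss for one triplet, given sequence log-probabilities."""
    # How much more (or less) likely each response is under the
    # trainable model than under the frozen reference model.
    chosen_logratio = policy_chosen_logp - ref_chosen_logp
    rejected_logratio = policy_rejected_logp - ref_rejected_logp
    margin = beta * (chosen_logratio - rejected_logratio)
    return -log_sigmoid(margin)


# Hypothetical log-probabilities, for illustration only.
# At the start of training the policy equals the reference model,
# so the margin is 0 and the loss is log(2).
loss_at_start = dpo_loss(-12.0, -15.0, -12.0, -15.0)

# After training, the chosen response became more likely and the
# rejected response less likely relative to the reference model.
loss_improved = dpo_loss(-10.0, -18.0, -12.0, -15.0)

print(f"loss at start: {loss_at_start:.4f}")
print(f"loss after improvement: {loss_improved:.4f}")
```

The loss drops only when the gap between chosen and rejected grows relative to the reference model, which is how the reference model constrains training.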

PEFT: LoRA/QLoRA

Parameter-efficient fine-tuning (PEFT) updates only a small parameter subset while keeping the majority unchanged, achieving competitive performance with reduced data and compute requirements. Model Gallery supports full parameter fine-tuning and two efficient techniques: LoRA and QLoRA.

LoRA (Low-Rank Adaptation)

LoRA adds a low-rank bypass in parallel with a parameter matrix of the model. The bypass is the product of two matrices with dimensions m×r and r×n, where r ≪ m, n. During the forward pass, the input passes through both the original parameters and the LoRA bypass, and the two outputs are summed. During training, only the LoRA matrices are updated while the original parameters remain frozen, which significantly reduces the number of trainable parameters and the associated compute and memory overhead.
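The following pure-Python sketch shows the bypass on a tiny example and compares trainable parameter counts. The matrix names W, B, and A and the 4096×4096 layer size are assumptions chosen for illustration.

```python
def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def lora_forward(W, B, A, x):
    """Sum the frozen path W @ x and the low-rank bypass B @ (A @ x)."""
    base = matvec(W, x)                 # W is m x n and stays frozen
    bypass = matvec(B, matvec(A, x))    # B is m x r, A is r x n (trainable)
    return [p + q for p, q in zip(base, bypass)]


def lora_trainable_params(m, n, r):
    """Parameter count of the two LoRA matrices."""
    return m * r + r * n


# Tiny example with m=2, n=3, r=1.
W = [[1.0, 0.0, 2.0],
     [0.0, 1.0, 0.0]]
B = [[0.5],
     [1.0]]
A = [[1.0, 1.0, 0.0]]
x = [1.0, 2.0, 3.0]
y = lora_forward(W, B, A, x)
print(y)

# Hypothetical 4096 x 4096 weight matrix with rank 32.
full = 4096 * 4096
lora = lora_trainable_params(4096, 4096, 32)
print(f"full: {full}, LoRA: {lora}, ratio: {lora / full:.4%}")
```

With these assumed sizes, the bypass trains 1/64 of the parameters of the full matrix.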

QLoRA (Quantized LoRA)

QLoRA combines model quantization with LoRA. Beyond introducing LoRA bypasses, QLoRA quantizes models to 4-bit or 8-bit precision during loading. During computation, quantized parameters are dequantized to 16-bit for processing. This approach optimizes parameter storage and further reduces memory consumption compared to LoRA.
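To make the quantize-then-dequantize cycle concrete, here is a minimal sketch using symmetric absmax quantization. QLoRA itself uses a more refined 4-bit data type (NF4) with block-wise scaling, so the scheme below is a simplified stand-in; the weights and the 7-billion-parameter model size are hypothetical.

```python
def quantize_absmax(weights, bits=4):
    """Map floats to signed integer codes in [-qmax, qmax]."""
    qmax = 2 ** (bits - 1) - 1          # 7 for 4-bit, 127 for 8-bit
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float weights for computation."""
    return [c * scale for c in codes]


def weight_memory_gib(num_params, bits):
    """Memory for the weights alone, ignoring scales and activations."""
    return num_params * bits / 8 / 1024 ** 3


weights = [0.7, -0.32, 0.11, 0.0]
codes, scale = quantize_absmax(weights, bits=4)
restored = dequantize(codes, scale)
print(codes)
print(restored)

# Hypothetical 7-billion-parameter model.
for bits in (16, 8, 4):
    print(f"{bits}-bit weights: {weight_memory_gib(7_000_000_000, bits):.1f} GiB")
```

The restored weights differ from the originals by at most half a quantization step, which is the precision traded for the lower storage cost.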

Training method selection and data preparation

Select appropriate training methods based on your scenario requirements.

Full parameter training, LoRA, or QLoRA

- Complex tasks: Use full parameter training to leverage all model parameters for optimal performance.
- Simple tasks: Use LoRA or QLoRA for faster training with lower compute requirements.
- Limited data: LoRA or QLoRA helps prevent overfitting with smaller datasets.
- Limited resources: QLoRA further reduces GPU memory usage, though quantization/dequantization may increase training time.

Prepare training data

- Simple tasks: Large datasets are not required.
- SFT: Thousands of samples typically suffice. Data quality is more critical than quantity.

Hyperparameters

learning_rate

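Controls the magnitude of each parameter update per iteration. A value that is too high makes training unstable or divergent; a value that is too low slows convergence. Choose a value that converges stably without sacrificing training speed.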
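A toy example makes the trade-off visible. The sketch below runs plain gradient descent on f(w) = w², whose minimum is at w = 0; the three learning rates are arbitrary illustrative values, not recommendations for LLM training.

```python
def gradient_descent(learning_rate, steps=20, start=1.0):
    """Minimize f(w) = w^2 and return the final w."""
    w = start
    for _ in range(steps):
        grad = 2.0 * w                  # derivative of w^2
        w -= learning_rate * grad
    return w


too_small = gradient_descent(0.001)    # barely moves toward 0
balanced = gradient_descent(0.1)       # converges toward 0
too_large = gradient_descent(1.1)      # overshoots and diverges

print(f"lr=0.001 -> w={too_small:.4f}")
print(f"lr=0.1   -> w={balanced:.4f}")
print(f"lr=1.1   -> w={too_large:.4f}")
```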

num_train_epochs

Number of complete passes through the training dataset. For example, 10 epochs means processing the entire dataset 10 times.

Too few epochs cause underfitting; too many cause overfitting. Recommended range: 2–10 epochs. For small datasets, increase epochs to prevent underfitting. For large datasets, 2 epochs typically suffice. Lower learning rates require more epochs. Monitor validation accuracy and stop training when improvements plateau.
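The advice to stop when validation accuracy plateaus can be sketched as a simple patience rule. The function name, the `patience` and `min_delta` parameters, and the accuracy history are hypothetical; they are not Model Gallery settings.

```python
def epochs_until_plateau(val_accuracies, patience=2, min_delta=0.001):
    """Return the epoch at which training would stop.

    Stops after `patience` consecutive epochs without an improvement
    of more than `min_delta` over the best accuracy seen so far.
    """
    best = float("-inf")
    epochs_without_improvement = 0
    for epoch, accuracy in enumerate(val_accuracies, start=1):
        if accuracy > best + min_delta:
            best = accuracy
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch
    return len(val_accuracies)


# Hypothetical validation accuracy after each epoch.
history = [0.61, 0.70, 0.74, 0.745, 0.744, 0.745, 0.743]
stop_epoch = epochs_until_plateau(history)
print(f"stop after epoch {stop_epoch}")
```

Here accuracy stops improving after epoch 4, so the rule halts at epoch 6 rather than running all 7 epochs.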

per_device_train_batch_size

Number of training samples processed per iteration. The optimal batch size maximizes hardware utilization without compromising training effectiveness. This parameter specifies samples used per GPU core in a single training iteration.

-

Batch size primarily controls training speed rather than performance. Smaller batches increase gradient estimation variance and require more iterations for convergence. Larger batches reduce training duration.

-

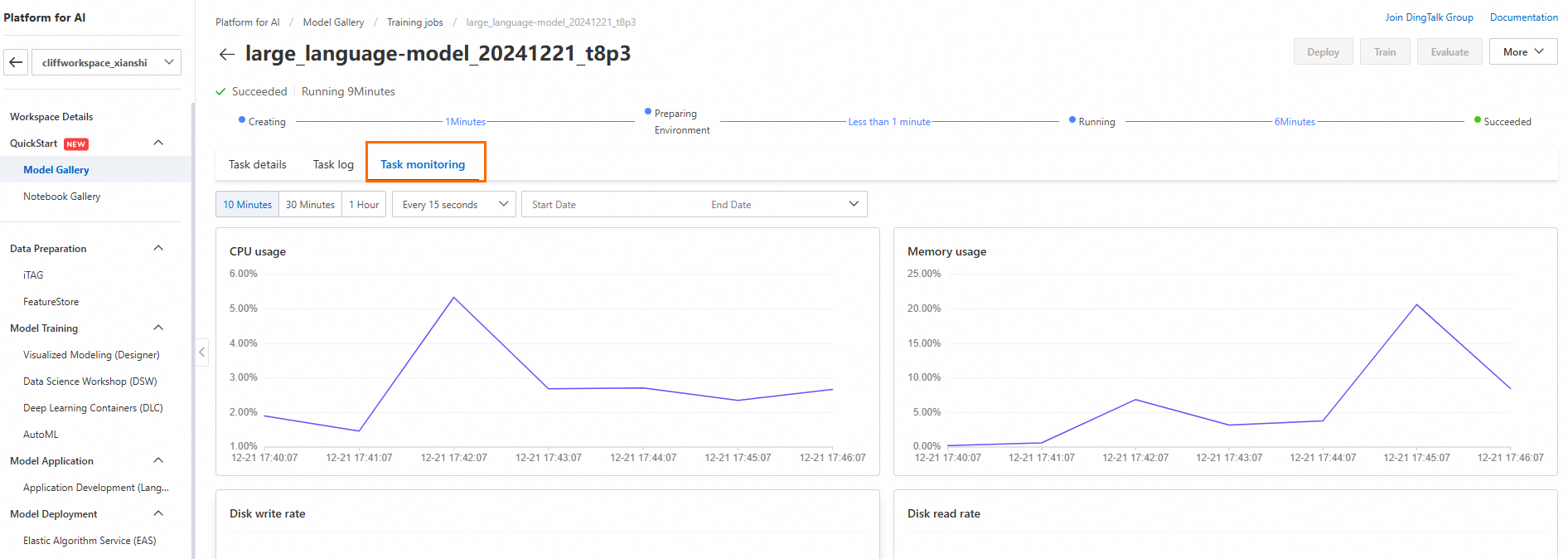

The optimal batch size is the maximum value hardware can accommodate. To find the largest batch size without GPU memory overflow: Navigate to Model Gallery > Job Management, select your training job, and view GPU memory usage on the Task monitoring tab.

-

Using more GPU cores with the same per_device_train_batch_size effectively increases total batch size.

seq_length

For LLMs, training data is processed by a tokenizer into token sequences. Sequence length specifies the token sequence length accepted per training sample. Data is truncated or padded to match this length.

Choose an appropriate sequence length based on the token length distribution of your training data. Token counts are typically similar across different tokenizers, so an estimate from one tokenizer is usually close enough. For example, use OpenAI's online tokenizer tool to estimate token sequence length.

For SFT, estimate sequence length for: system prompt + instruction + output. For DPO, estimate: system prompt + prompt + chosen and system prompt + prompt + rejected, selecting the larger value.

lora_dim/lora_rank

LoRA is applied within the Transformer's multi-head attention component. Research demonstrates:

- Adapting multiple weight matrices in multi-head attention is more effective than single matrix adaptation.
- Increasing rank doesn't guarantee better subspace coverage. Low-rank adaptation matrices often suffice.

The llm_deepspeed_peft algorithm in Model Gallery applies LoRA to all four weight matrix types within multi-head attention, with default rank 32.

lora_alpha

LoRA scaling coefficient. Higher values increase LoRA matrix impact (suitable for small datasets); lower values reduce impact (suitable for large datasets). Typically set to 0.5–2× the lora_dim value.
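In the original LoRA formulation, the bypass output is multiplied by lora_alpha divided by the rank. Assuming the training algorithm follows that convention (this guide does not state it), the recommended 0.5–2× range corresponds to the scale factors below.

```python
def lora_scale(lora_alpha, lora_rank):
    """Multiplier applied to the LoRA bypass output (assumed alpha / rank)."""
    return lora_alpha / lora_rank


rank = 32  # default rank mentioned in this guide
for ratio in (0.5, 1.0, 2.0):
    alpha = ratio * rank
    print(f"lora_alpha={alpha:g} -> scale={lora_scale(alpha, rank):g}")
```

Under this assumption, changing the rank without adjusting lora_alpha also changes the effective strength of the bypass.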

dpo_beta

Controls deviation from the reference model in DPO training (default: 0.1). Higher beta values enforce stricter alignment with the reference model. Not used in SFT training.

load_in_4bit/load_in_8bit

In QLoRA, these parameters specify loading the base model with 4-bit or 8-bit precision.

gradient_accumulation_steps

Larger batch sizes require more GPU memory and can cause out-of-memory (OOM) errors. Smaller batch sizes increase gradient estimation variance, which slows convergence. Gradient accumulation addresses both issues by accumulating gradients over multiple batches before updating the model. The effective batch size per GPU equals per_device_train_batch_size multiplied by gradient_accumulation_steps.
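The sketch below checks, on a one-parameter linear model, that averaging gradients over equally sized micro-batches gives the same gradient as one large batch. The data, the model, and the `num_devices` argument are illustrative assumptions.

```python
def mean_gradient(batch, w):
    """Gradient of the mean squared error of y = w * x over a batch."""
    return sum(2.0 * (w * x - y) * x for x, y in batch) / len(batch)


def accumulated_gradient(data, w, micro_batch_size):
    """Average micro-batch gradients before a single parameter update."""
    micro_batches = [data[i:i + micro_batch_size]
                     for i in range(0, len(data), micro_batch_size)]
    total = 0.0
    for micro_batch in micro_batches:
        # Each micro-batch contributes 1 / accumulation_steps of the update.
        total += mean_gradient(micro_batch, w) / len(micro_batches)
    return total


def effective_batch_size(per_device, accumulation_steps, num_devices=1):
    """Samples contributing to one parameter update."""
    return per_device * accumulation_steps * num_devices


data = [(1.0, 2.0), (2.0, 3.9), (3.0, 6.1), (4.0, 8.2),
        (5.0, 9.8), (6.0, 12.1), (7.0, 13.9), (8.0, 16.0)]
w = 1.5
full = mean_gradient(data, w)                               # batch of 8
accumulated = accumulated_gradient(data, w, micro_batch_size=2)  # 4 steps of 2
print(full, accumulated)
print(effective_batch_size(per_device=2, accumulation_steps=4))
```

Only one micro-batch needs to fit in GPU memory at a time, at the cost of more forward and backward passes per update.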

apply_chat_template

When set to true, the default chat template is automatically applied to the training data. To use a custom chat template, set this parameter to false and include the necessary special tokens in the training data manually. System prompts can be configured regardless of this setting.
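As an illustration of including special tokens manually, the sketch below formats one sample in the ChatML style used by Qwen chat models. Whether your model expects these exact tokens is an assumption to verify against its tokenizer configuration; the function name is hypothetical.

```python
def to_chatml(system_prompt, instruction, output):
    """Format one training sample with ChatML-style special tokens."""
    return (
        f"<|im_start|>system\n{system_prompt}<|im_end|>\n"
        f"<|im_start|>user\n{instruction}<|im_end|>\n"
        f"<|im_start|>assistant\n{output}<|im_end|>\n"
    )


text = to_chatml(
    "You are a friendly and professional customer service representative.",
    "How do I reset my password?",
    "Open Settings, choose Security, then select Reset password.",
)
print(text)
```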

system_prompt

Provides context to guide model responses. Example: "You are a friendly and professional customer service representative who solves user problems concisely, often using examples."