Trains image metric learning models using raw data with mainstream backbones such as ResNet and Vision Transformers.

Prerequisites

OSS is activated, and Machine Learning Studio is authorized to access OSS. For more information, see Activate OSS and Grant permissions.

Limitations

This component runs only on the DLC computing engine.

Supported models

Supported backbones: resnet50, resnet18, resnet34, resnet101, swint_tiny, swint_small, swint_base, vit_tiny, vit_small, vit_base, xcit_tiny, xcit_small, and xcit_base.

Configuration

- Input ports

| Port (left to right) | Data type | Recommended upstream component | Required |
| --- | --- | --- | --- |
| Training annotation file | OSS | | No |
| Evaluation annotation file | OSS | | No |

- Parameters

Tab

Parameter

Required

Description

Default value

Field Settings

Metric learning model type

Yes

Algorithm type for training. Valid values:

- Data-parallel metric learning
- Model-parallel metric learning

Data-parallel metric learning

OSS directory for training output

Yes

OSS directory where trained models are stored. Example:

oss://examplebucket/yun****/designer_test

None

Training annotation file path

No

Configure this parameter if no training annotation file is connected to the input port.

Note: If the file is configured in both places, the input port takes precedence.

OSS path where the training annotation file is stored. Example:

oss://examplebucket/yourfolder****/data/imagenet/meta/train_labeled.txt

Each line in train_labeled.txt must follow this format:

absolute path/image_name.jpg label_id

Important: Separate the image path and label_id with a space.

None

Validation annotation file path

No

Configure this parameter if no evaluation annotation file is connected to the input port.

Note: If the file is configured in both places, the input port takes precedence.

OSS path where the validation annotation file is stored. Example:

oss://examplebucket/yourfolder****/data/imagenet/meta/val_labeled.txt

Each line in val_labeled.txt must follow this format:

absolute path/image_name.jpg label_id

Important: Separate the image path and label_id with a space.

None

File of class name list

No

Enter the class names directly, or specify the OSS path of a file that contains the class names.

None

Data source format

Yes

Input data format. Valid values: ClsSourceImageList and ClsSourceItag.

ClsSourceImageList

OSS path of the pre-trained model

No

OSS path to a custom pre-trained model. If not set, PAI uses the default pre-trained model.

None

Parameter Settings

Metric learning backbone

Yes

Backbone model for metric learning. Valid values:

- resnet_50
- resnet_18
- resnet_34
- resnet_101
- swin_transformer_tiny
- swin_transformer_small
- swin_transformer_base

resnet50

Image resize size

Yes

Image size in pixels after resizing.

224

Backbone output feature dimension

Yes

Output feature dimension of the backbone. Must be an integer.

2048

Feature output dimension

Yes

Output feature dimension of the Neck. Must be an integer.

1536

Number of training classes

Yes

Number of output dimensions for metric learning.

None

Loss function

Yes

Loss function that evaluates the difference between predicted and actual values. Valid values:

- AMSoftmax (recommended parameters: margin 0.4, scale 30)
- ArcFaceLoss (recommended parameters: margin 28.6, scale 64)
- CosFaceLoss (recommended parameters: margin 0.35, scale 64)
- LargeMarginSoftmaxLoss (recommended parameters: margin 4, scale 1)
- SphereFaceLoss (recommended parameters: margin 4, scale 1)
- Model-parallel AMSoftmax, whose maximum number of classes scales with the number of GPUs
- Model-parallel Softmax, whose maximum number of classes scales with the number of GPUs

AMSoftmax (recommended parameters: margin 0.4, scale 30)

Loss function scale parameter

Yes

Set based on the selected loss function.

30

Loss function margin parameter

Yes

Set based on the selected loss function.

0.4

Loss function weight

No

Weight of the loss function. Balances optimization between the metric and classification objectives.

1.0

Optimizer

Yes

Optimizer for training. Valid values:

- SGD
- AdamW

SGD

Initial learning rate

Yes

Initial learning rate for training. Must be a floating-point number.

0.03

Training batch_size

Yes

Number of samples trained per iteration.

None

Total training epochs

Yes

Total number of training rounds on all samples.

200

Checkpoint saving frequency

No

Interval, in epochs, at which model checkpoints are saved. A value of 1 saves a checkpoint after each epoch.

10

Execution Tuning

Training data read threads

No

Number of processes for reading training data.

4

Enable half-precision

No

Enables half-precision for training to reduce memory usage.

None

Computing mode

Yes

Computing engine to run this component. Supported engines:

- Standalone DLC
- Distributed DLC

Standalone DLC

Workers

No

Number of concurrent worker processes during training. Configure this parameter when Computing mode is set to Distributed DLC.

1

GPU model

Yes

GPU specification for training.

8vCPU+60GB Mem+1xp100-ecs.gn5-c8g1.2xlarge
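To illustrate how the Loss function scale parameter and the Loss function margin parameter interact, the sketch below computes the textbook additive-margin softmax loss for a single sample in plain Python. It is an illustration only and not the component's implementation; the function name and the sample vectors are hypothetical. The RECOMMENDED table copies the margin and scale values listed for the Loss function parameter exactly as written, and the source does not state units for them.

```python
import math

# Recommended (margin, scale) pairs, copied from the Loss function row.
RECOMMENDED = {
    "AMSoftmax": (0.4, 30),
    "ArcFaceLoss": (28.6, 64),
    "CosFaceLoss": (0.35, 64),
    "LargeMarginSoftmaxLoss": (4, 1),
    "SphereFaceLoss": (4, 1),
}


def _normalize(vector):
    """Scale a vector to unit L2 norm."""
    norm = math.sqrt(sum(x * x for x in vector))
    return [x / norm for x in vector]


def am_softmax_loss(feature, class_weights, label, margin, scale):
    """Textbook additive-margin softmax loss for one sample (hypothetical helper)."""
    f = _normalize(feature)
    logits = []
    for idx, weight in enumerate(class_weights):
        # With both vectors normalized, the dot product is cos(theta).
        cosine = sum(a * b for a, b in zip(f, _normalize(weight)))
        if idx == label:
            cosine -= margin  # the margin penalizes only the true class
        logits.append(scale * cosine)
    # Numerically stable log-sum-exp, then cross-entropy for the true class.
    peak = max(logits)
    log_sum = peak + math.log(sum(math.exp(z - peak) for z in logits))
    return log_sum - logits[label]


margin, scale = RECOMMENDED["AMSoftmax"]
feature = [1.0, 0.2]                  # hypothetical embedding
weights = [[1.0, 0.0], [0.0, 1.0]]    # hypothetical class centers
print(am_softmax_loss(feature, weights, 0, margin, scale))
print(am_softmax_loss(feature, weights, 0, 0.0, scale))
```

In this formulation the margin lowers the true-class logit, so the loss with a positive margin is larger than the loss with a margin of 0 for the same sample. The scale multiplies every logit and so sharpens the softmax distribution.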


Example

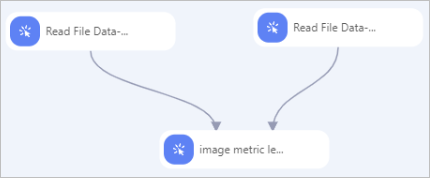

Build a workflow using the Image Metric Learning Training (raw) component as shown below. Configure components as follows:

Configure components as follows:

- Prepare and annotate data using the PAI iTAG module. For more information, see iTAG.
- Use Read OSS Data-4 and Read OSS Data-5 to read the training and validation annotation files. Set OSS Data Path for each component to the corresponding annotation file path.
- Connect both Read OSS Data components to Image Metric Learning Training (raw) and configure the parameters. For more information, see Configuration.
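The annotation files read in this workflow must use the line format described for the annotation file path parameters: one image path and one label_id per line, separated by a space. The following is a minimal sketch for checking that format before a run. It is not part of PAI: the function names and sample paths are hypothetical, and it assumes that label_id is an integer and that blank lines can be ignored.

```python
from typing import List, Tuple


def parse_annotation_line(line: str) -> Tuple[str, int]:
    """Split one annotation line into (image_path, label_id)."""
    # Split on the last space so that image paths containing spaces still parse.
    path, sep, label = line.strip().rpartition(" ")
    if not sep or not path:
        raise ValueError(f"expected '<image path> <label_id>', got: {line!r}")
    # Assumption: label_id is an integer; int() raises ValueError otherwise.
    return path, int(label)


def find_bad_lines(lines: List[str]) -> List[int]:
    """Return the 1-based numbers of lines that do not match the format."""
    bad = []
    for number, line in enumerate(lines, start=1):
        if not line.strip():
            continue  # assumption: blank lines are ignored
        try:
            parse_annotation_line(line)
        except ValueError:
            bad.append(number)
    return bad


# Hypothetical sample content; the last line is missing its label_id.
sample = [
    "/path/to/images/cat_001.jpg 0",
    "/path/to/images/dog_001.jpg 1",
    "/path/to/images/missing_label.jpg",
]
print(find_bad_lines(sample))
```

To check a real file, download it from OSS first and pass its lines to find_bad_lines, for example with `open(path, encoding="utf-8").read().splitlines()`.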