Create custom workflows in Designer to build, train, and deploy machine learning models visually. This guide demonstrates end-to-end model development by building a classification pipeline to predict heart disease.

Prerequisites

Activate PAI and create a workspace. For more information, see Activate and Create a Default Workspace.

Associate your workspace with MaxCompute resources. For more information, see Quick Start - Preparations.

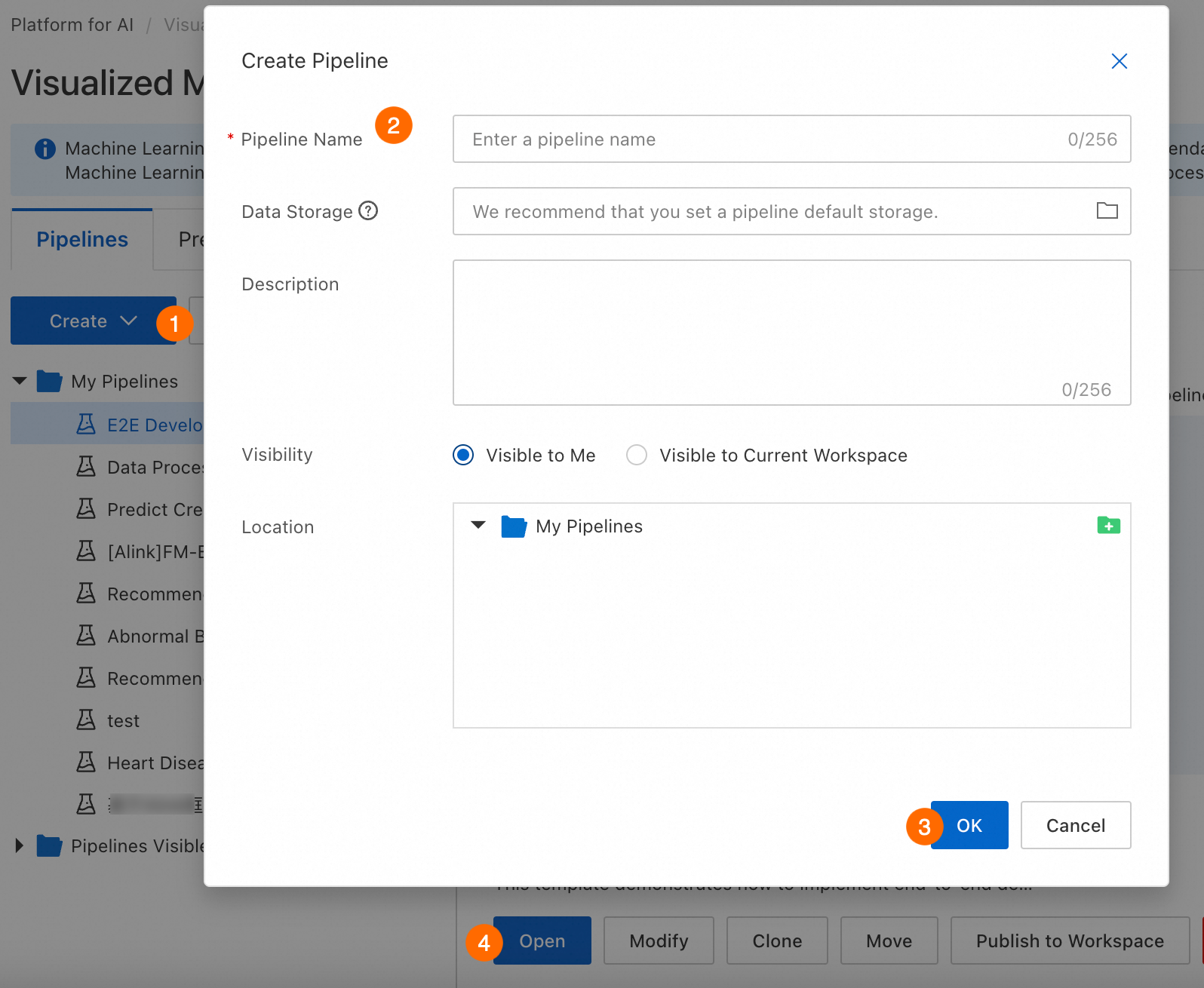

Step 1: Create a pipeline

Go to Machine Learning Designer, select a workspace, and open the Designer page. Then create and enter a new workflow.

Parameter | Description |

Workflow name | Custom workflow name. |

Workflow data storage | OSS Bucket storage path for temporary data and models generated during runtime. If not configured, uses default workspace storage. For each run, the system automatically creates a temporary folder at |

Visibility |

|

Step 2: Prepare and preprocess data

Before training a model, prepare a data source and preprocess it to meet model input requirements.

Prepare data

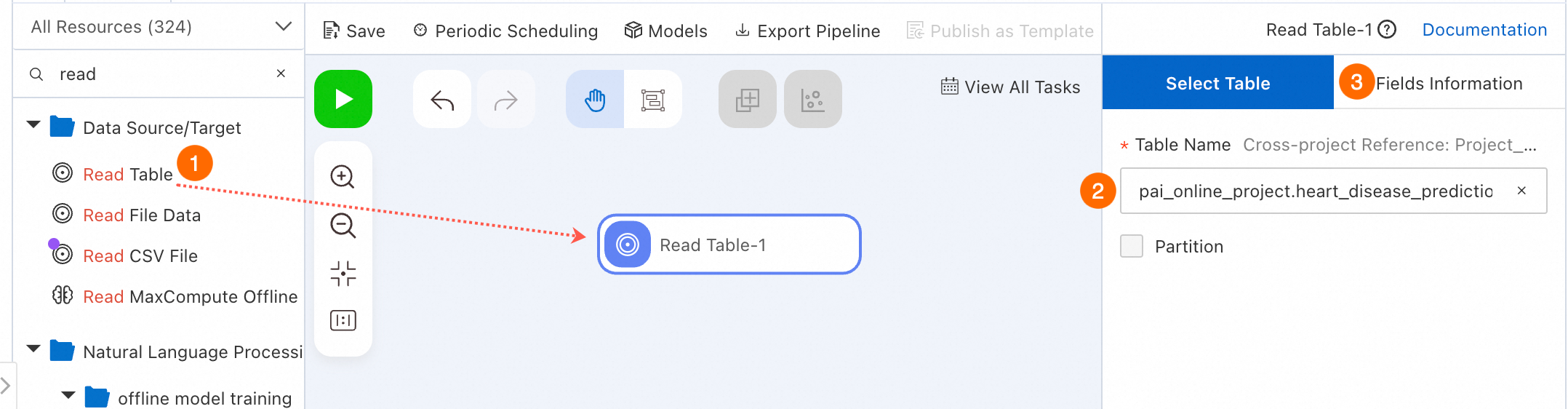

In a workflow, add Source/Target components to read data from sources such as MaxCompute and OSS. For more information, see the specific component documentation in Component Reference: Source/Target. This topic uses the Read Data Table component to read public heart disease sample data provided by PAI. For details about the dataset, see Heart Disease Data Set.

Select an appropriate Data Source/Target component to read data.

In the component list on the left, click Data Source/Target. Drag the Read Table component onto the canvas to read MaxCompute table data. A workflow node named Read Table-1 appears on the canvas.

Configure the source data table name on the node configuration page.

Select the Read Table-1 node on the canvas. In the Table Name field on the right-side node configuration page, enter the MaxCompute table name. Enter

pai_online_project.heart_disease_predictionto read the public heart disease case data table provided by PAI.Switch to the Fields Information tab on the right-side node configuration page to view field details of this public data.

Preprocess data

Heart disease prediction is a binary classification problem. The logistic regression model component requires DOUBLE or BIGINT input. This section converts and preprocesses heart disease case data for model training.

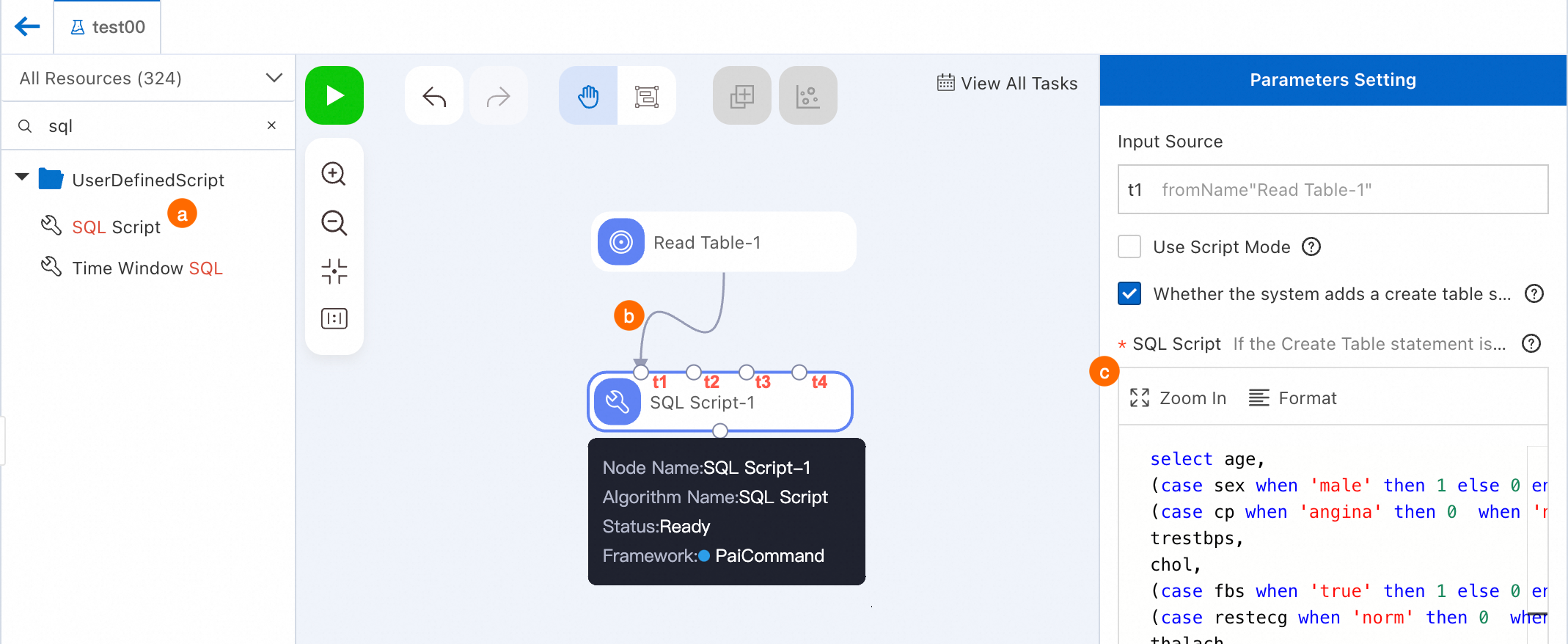

Preprocess data: Convert non-numeric fields to numeric types.

Search for the SQL Script component and drag it onto the canvas. A workflow node named SQL Script-1 appears on the canvas.

Connect the Read Table-1 node as the t1 input source for SQL Script-1 node.

Configure the node.

Click the SQL Script-1 node. On the right-side configuration page, enter the following code. Input Source in the right-side Parameters Setting panel is `t1`.

select age, (case sex when 'male' then 1 else 0 end) as sex, (case cp when 'angina' then 0 when 'notang' then 1 else 2 end) as cp, trestbps, chol, (case fbs when 'true' then 1 else 0 end) as fbs, (case restecg when 'norm' then 0 when 'abn' then 1 else 2 end) as restecg, thalach, (case exang when 'true' then 1 else 0 end) as exang, oldpeak, (case slop when 'up' then 0 when 'flat' then 1 else 2 end) as slop, ca, (case thal when 'norm' then 0 when 'fix' then 1 else 2 end) as thal, (case status when 'sick' then 1 else 0 end) as ifHealth from ${t1};Click Save in the upper-left corner of the canvas to save the workflow configuration.

Right-click the SQL Script-1 component. Click Run from Root Node To Here to debug and run this workflow.

The workflow runs each node in order. When a node runs successfully, a checkmark icon (

) appears in its upper-right corner.Note

) appears in its upper-right corner.NoteAlternatively, click the

(Run) icon in the upper-left corner of the canvas to run the entire workflow directly. For complex workflows, run specific nodes or parts of nodes to simplify debugging. If a run fails, right-click the corresponding node and select View Log to troubleshoot the cause.

(Run) icon in the upper-left corner of the canvas to run the entire workflow directly. For complex workflows, run specific nodes or parts of nodes to simplify debugging. If a run fails, right-click the corresponding node and select View Log to troubleshoot the cause.Upon successful execution, right-click the target node (for example, SQL Script-1) and select to verify correctness of the current node's output data.

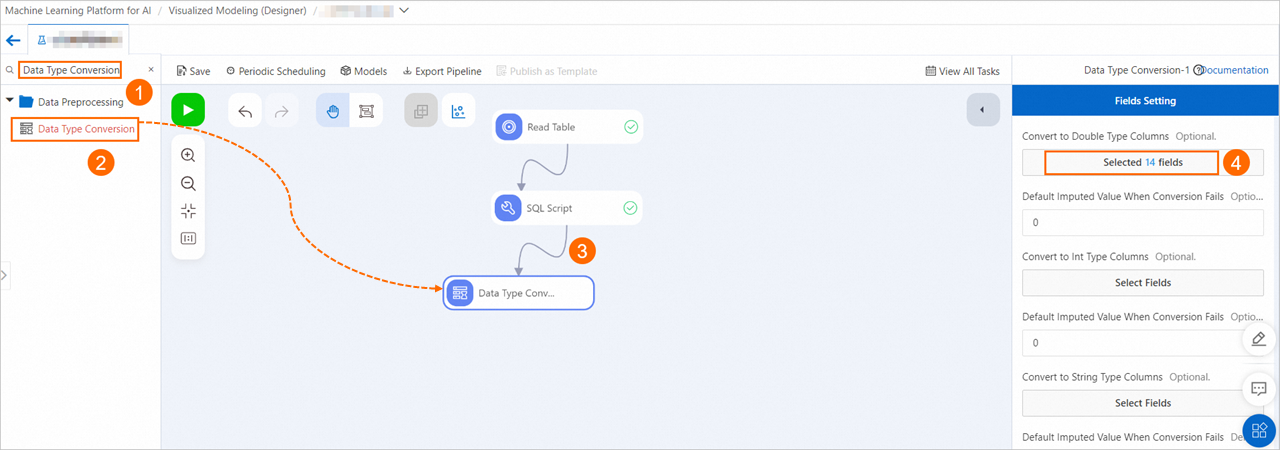

Preprocess data: Convert fields to DOUBLE type. This ensures processed fields meet input data requirements of the logistic regression model.

Following the previous step, drag the Data Type Conversion component and connect it as a descendant node of SQL Script-1 node. Click the node. On the Fields Setting tab, click Select Fields under Columns to convert to double type. Select all fields to convert them to DOUBLE type.

Preprocess data: Normalize the data. This converts the numerical range of each feature to 0–1, removing the impact of scale on results.

Following the previous step, drag the Normalization component and connect it as a descendant node of Data Type Conversion-1 node. Click the node. On the Fields Setting tab, select all fields.

Preprocess data: Split the data into training and prediction sets to prepare for subsequent model training and prediction.

Drag the Split component and connect it as a descendant node of Normalization-1 node. The split outputs two data tables.

The Split component splits data into a model training set and a model prediction set at a 4:1 ratio by default. Click the Split component and set Split Ratio on the Parameter Settings tab on the right. For more information about other parameters, see Split.

Right-click the Data Type Conversion-1 component. Click Run from Here to run the remaining nodes in the workflow.

Step 3: Train the model

Each sample in this example is either sick or healthy, making heart disease prediction a binary classification problem. This section uses the logistic regression binary classification component to build the heart disease prediction model.

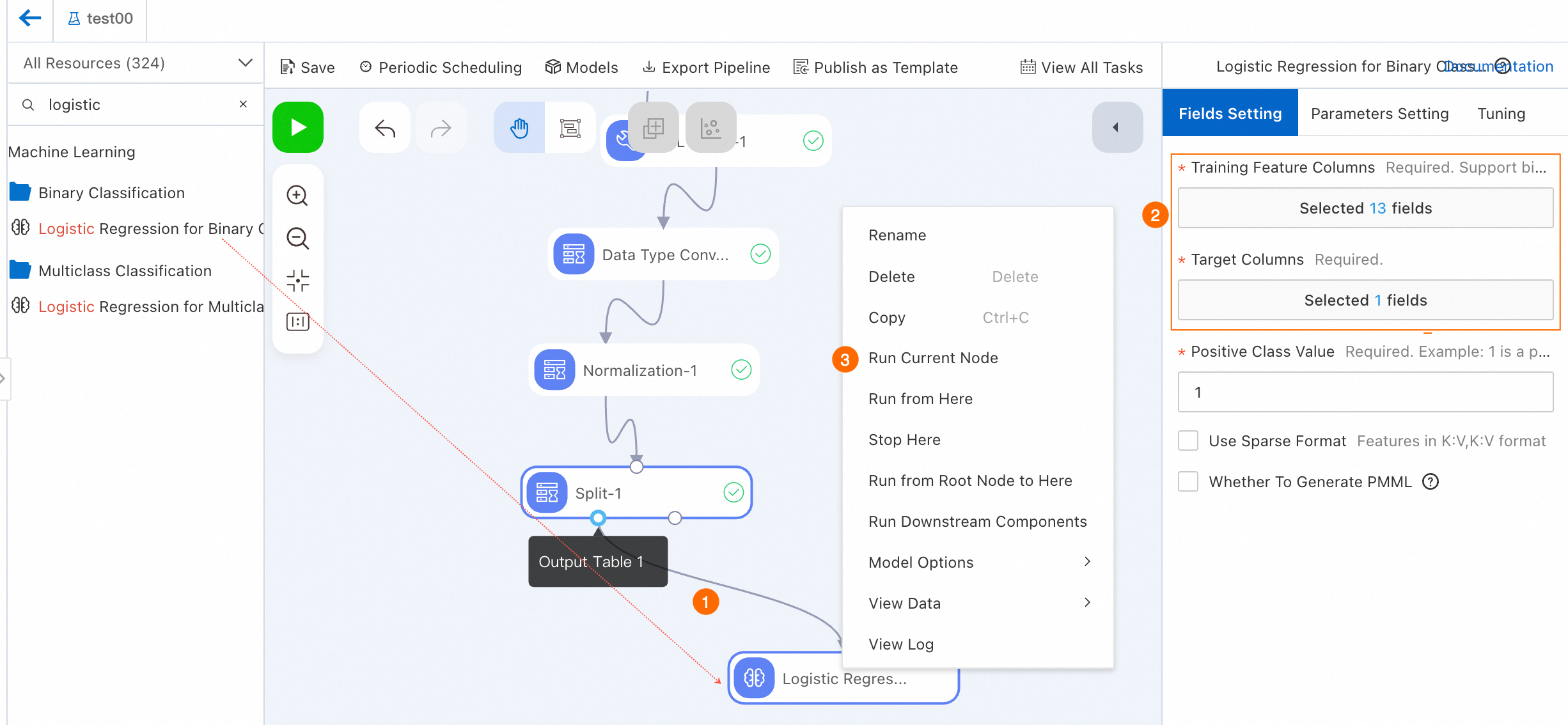

Drag the Binary Logistic Regression component and connect it as a descendant node of Output Table 1 from the Split-1 node.

Configure the node.

Click the Binary Logistic Regression-1 node, and in the Fields Setting tab on the right, set Target Column to ifhealth and set Training Feature Columns to all columns except Target Column. For more information, see Binary Logistic Regression.

NoteTo complete the next Step 6: Model Deployment (Optional), select the Logistic Regression Binary Classification node and check the Generate PMML check box on the right-side fields setting tab.

Run the node.

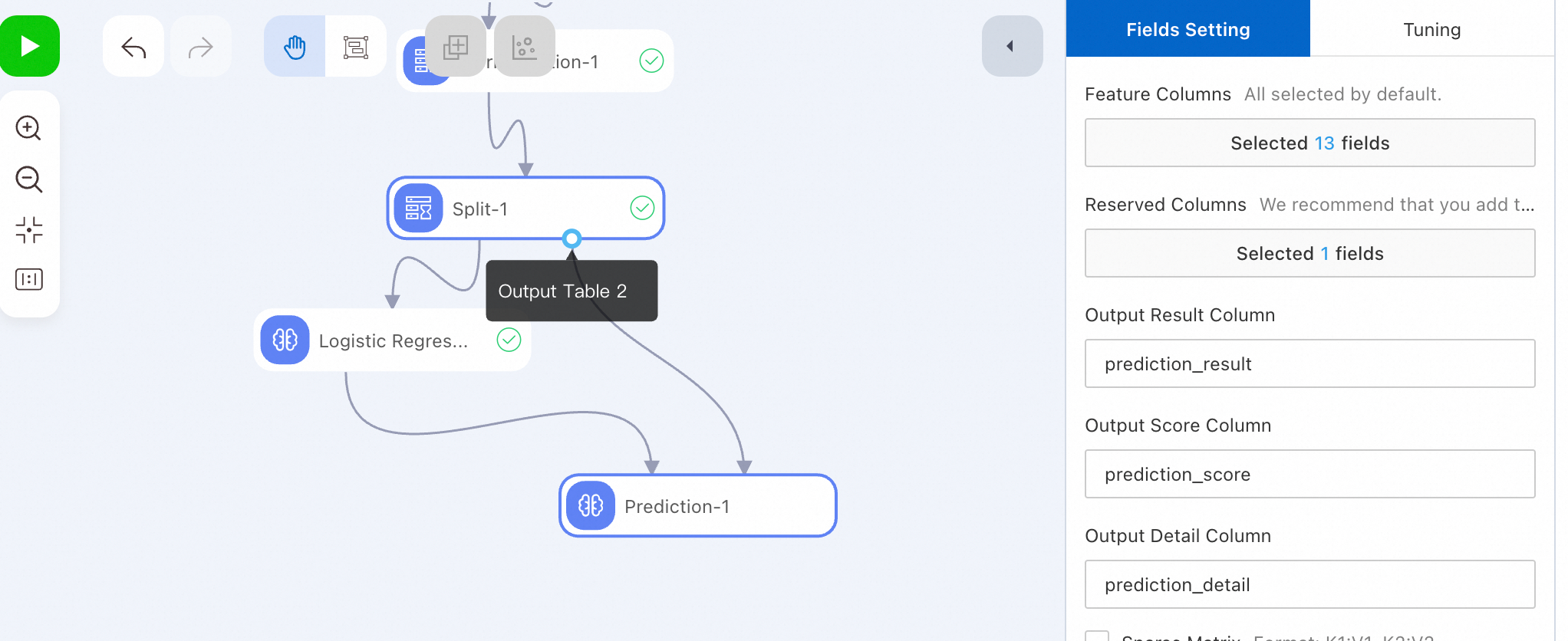

Step 4: Run model prediction

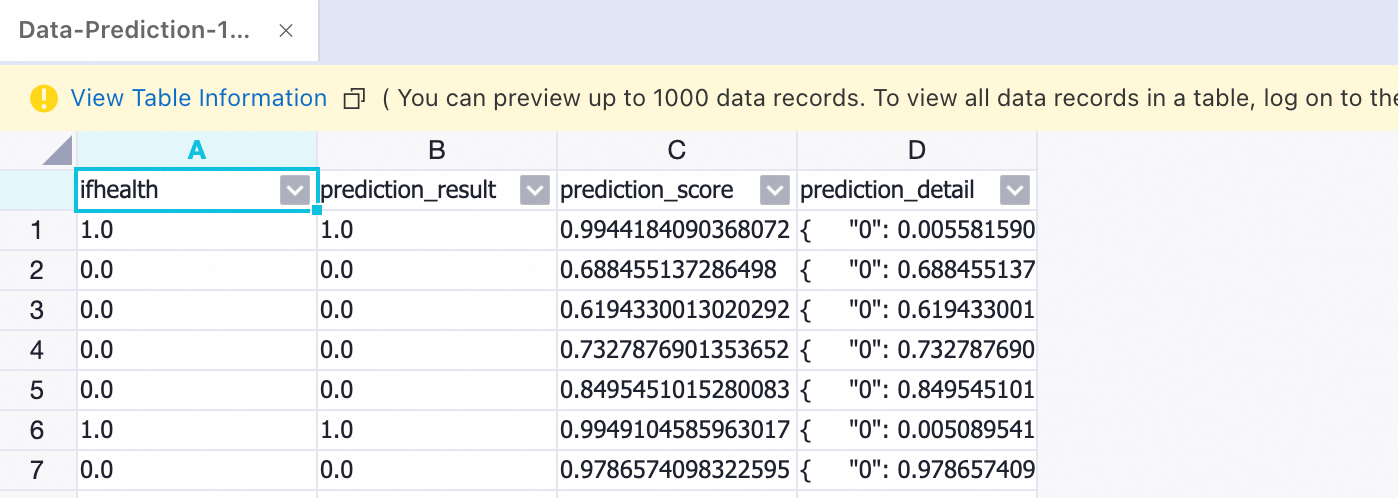

Run the prediction node and view the prediction results.

After a successful run, right-click the prediction node. Select View Data > Prediction Result Output Port to view the prediction data.

Step 5: Evaluate the model

Drag the Binary Classification Evaluation component and connect it as a descendant node of Prediction-1 node.

Click the Binary Classification Evaluation-1 node. On the right-side Fields Setting tab, set Original Label Column to ifhealth.

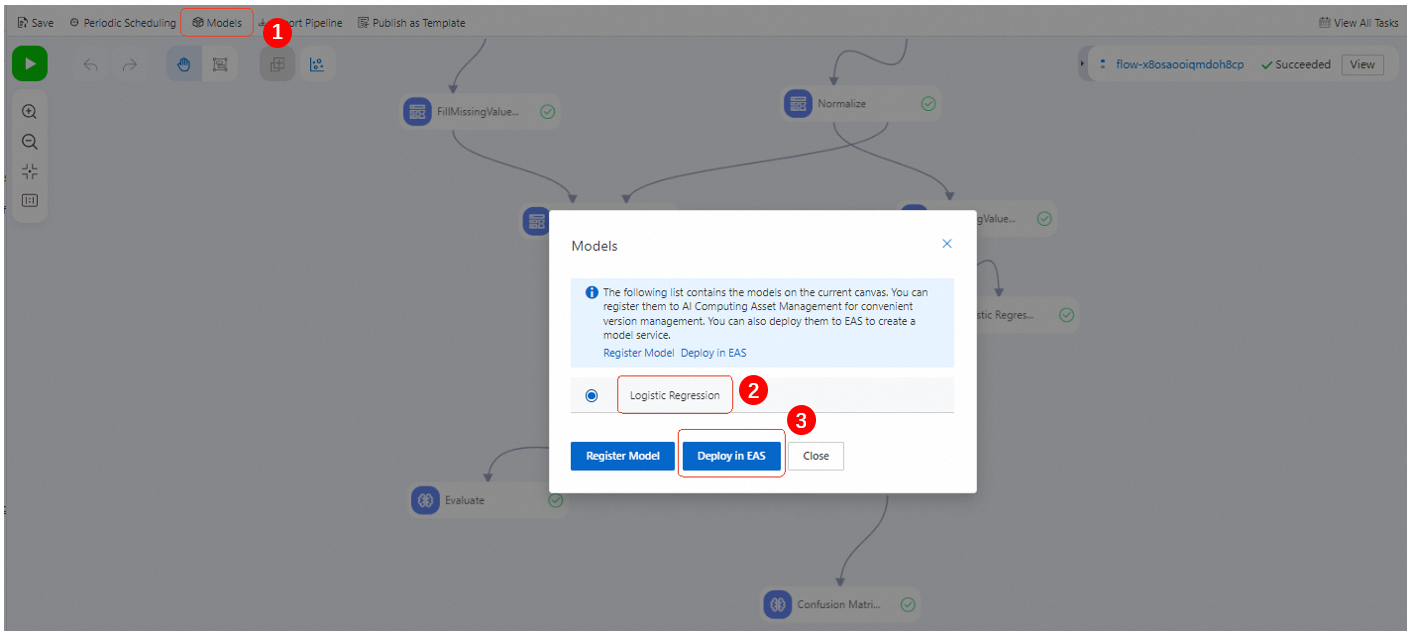

Step 6: Deploy the model (optional)

Designer and EAS integrate seamlessly. After completing offline training, offline prediction, and evaluation, deploy a single model to EAS to create an online model service.

After the workflow runs successfully, click Model List. Select the model to deploy and click Deploy to EAS.

Confirm the configuration parameters. For more information, see deploy a model as an online service.

On the EAS deployment page, Model File and Processor Type are configured by default. Configure other parameters as needed.

Click Deploy.

When Service Status changes from Creating to Running, model deployment is successful.

ImportantIf no longer using the deployed online model, click Stop in the Operation column to avoid unnecessary costs.

References

Designer provides rich workflow templates to quickly build models directly. For more information, see Template Workflows.

Use DataWorks to perform offline scheduling for offline workflows and periodically update models. For more information, see Using DataWorks to perform offline scheduling for Designer workflows.

Configure global variables in workflows and use them in both online workflows and DataWorks offline scheduling workflows to improve flexibility and efficiency. For more information, see Global variables.