This document describes how to use Deep Learning Containers (DLC) and Data Science Workshop (DSW) to run a standalone PyTorch transfer learning job whose training data is stored in a NAS file system.

Prerequisites

Create a General-purpose NAS file system in your target region. For more information, see Create a General-purpose NAS file system.

Limitations

This document applies only to general computing resources in public resource groups.

Create a dataset

- Go to the Datasets page.
  - Log on to the PAI console.
  - In the left-side navigation pane, click Workspaces. On the Workspaces page, click the name of the workspace that you want to manage.
  - In the left-side navigation pane, open the Datasets page.

- Create a basic dataset. Set Storage Class to General-purpose NAS file system.

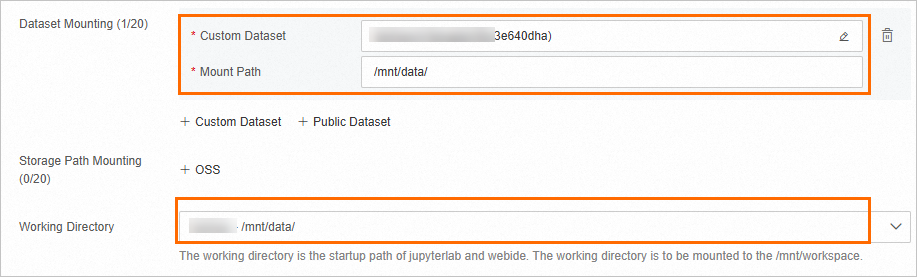

Create a DSW instance

Create a DSW instance and configure the following key parameters. For more information, see Create a DSW instance.

| Section | Parameter | Description |
| --- | --- | --- |
| Environment Context | Dataset Mount | Click Custom Dataset, select the created NAS dataset, and set the Mount Path. |
| Environment Context | Working Directory | Select the working directory. |
| Network Information | VPC Configuration | Not required for this procedure. |
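After the instance starts, a quick check from a notebook cell can confirm that the dataset directory is present and writable. The following is a minimal sketch, assuming the dataset is mounted at /mnt/workspace/ (the path used by the commands later in this document). `check_mount` is a hypothetical helper name and is not part of PAI.

```python
import os


def check_mount(path):
    """Return basic facts about a directory that is expected to hold a mounted dataset."""
    return {
        "exists": os.path.isdir(path),
        # ismount is True only when the path itself is a mount point.
        "is_mount_point": os.path.ismount(path),
        "writable": os.access(path, os.W_OK),
    }


if __name__ == "__main__":
    # Assumed mount path; adjust it to the Mount Path set for the instance.
    print(check_mount("/mnt/workspace/"))
```

If `exists` or `writable` is false, the later download steps would fail, so it may be worth rechecking the Dataset Mount setting first.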

Prepare training data

Download the public dataset used in this example to the DSW instance and decompress it.

-

Go to the development environment of a DSW instance.

-

Log on to the PAI console.

-

In the left-side navigation pane, click Workspaces. On the Workspaces page, click the name of the workspace that you want to manage.

-

In the upper-left corner of the page, select the region where you want to use PAI.

-

In the left-side navigation pane, choose .

-

Optional: On the Data Science Workshop (DSW) page, enter the name of a DSW instance or a keyword in the search box to search for the DSW instance.

-

Click Open in the Actions column of the instance.

-

-

In the menu bar, select Notebook.

-

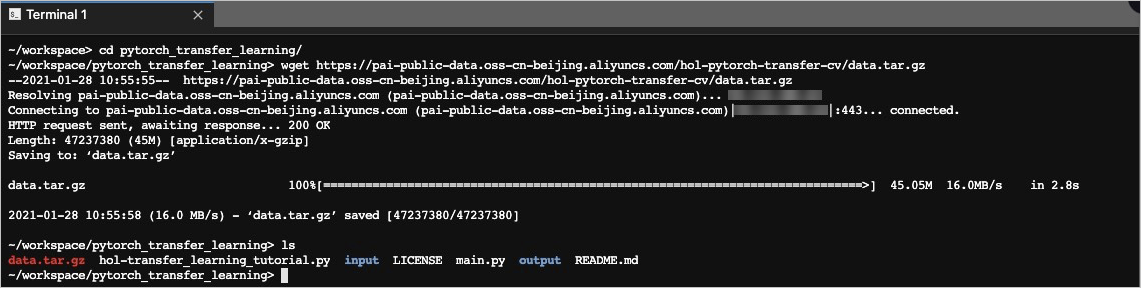

Download training data.

-

Click the

icon to create a folder named pytorch_transfer_learning.

icon to create a folder named pytorch_transfer_learning. -

Select Terminal to open the terminal.

-

Navigate to the folder and download the dataset.

cd /mnt/workspace/pytorch_transfer_learning/ wget https://pai-public-data.oss-cn-beijing.aliyuncs.com/hol-pytorch-transfer-cv/data.tar.gz

-

Decompress the downloaded dataset.

tar -xf ./data.tar.gz -

Navigate to the pytorch_transfer_learning directory in the Notebook tab, right-click the decompressed data folder (hymenoptera_data), select Rename, and rename it to input.

-
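The decompress and rename steps can also be scripted instead of done by hand. The following is a minimal sketch using only the Python standard library; `extract_and_rename` is a hypothetical helper name, and the sketch assumes the archive has already been downloaded to the pytorch_transfer_learning folder.

```python
import tarfile
from pathlib import Path


def extract_and_rename(archive, dest_dir, extracted_name, new_name):
    """Extract a .tar.gz archive into dest_dir and rename the extracted folder."""
    dest = Path(dest_dir)
    with tarfile.open(archive, "r:gz") as tar:
        # Only extract archives you trust; members can carry arbitrary paths.
        tar.extractall(dest)
    target = dest / new_name
    (dest / extracted_name).rename(target)
    return target


if __name__ == "__main__":
    # Paths follow the commands in this document; adjust them if your mount path differs.
    base = Path("/mnt/workspace/pytorch_transfer_learning")
    if (base / "data.tar.gz").exists():
        print(extract_and_rename(base / "data.tar.gz", base, "hymenoptera_data", "input"))
```

The rename fails if a non-empty input folder already exists, which avoids silently overwriting data from an earlier run.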

Prepare training code

-

Download training code to the

pytorch_transfer_learningfolder.cd /mnt/workspace/pytorch_transfer_learning/ wget https://pai-public-data.oss-cn-beijing.aliyuncs.com/hol-pytorch-transfer-cv/main.py -

Create an output folder to store the trained model.

mkdir output -

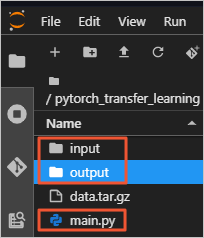

Verify that the folder contains the following files:

-

input: training data

-

main.py: training code

-

output: model storage location

-
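The folder check above can be automated before the job is submitted. The following is a minimal sketch; `missing_entries` and `EXPECTED` are hypothetical names, and the base path assumes the /mnt/workspace/ location used by the earlier commands.

```python
from pathlib import Path

# Expected entries from the list above: name -> whether it must be a directory.
EXPECTED = {"input": True, "main.py": False, "output": True}


def missing_entries(base_dir):
    """Return the expected entries that are absent or of the wrong type."""
    base = Path(base_dir)
    missing = []
    for name, is_dir in EXPECTED.items():
        path = base / name
        ok = path.is_dir() if is_dir else path.is_file()
        if not ok:
            missing.append(name)
    return missing


if __name__ == "__main__":
    # An empty list means the folder is ready for the job.
    print(missing_entries("/mnt/workspace/pytorch_transfer_learning"))
```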

Create a job

- Go to the Create Job page.
  - Log on to the PAI console, select the target region and workspace, and click Deep Learning Containers (DLC).
  - On the Deep Learning Containers (DLC) page, click Create Job.

- Configure the following parameters on the Create Job page:

  | Section | Parameter | Description |
  | --- | --- | --- |
  | Basic Information | Job Name | Specify the job name. |
  | Environment Context | Node Image | Select Alibaba Cloud Image and a PyTorch image. Example: pytorch-training:1.12-gpu-py39-cu113-ubuntu20.04 |
  | Environment Context | Dataset | Select the created NAS dataset. The start command in this example expects the dataset to be mounted at /mnt/data/. |
  | Environment Context | Start Command | Enter the following command to specify input and output paths: python /mnt/data/pytorch_transfer_learning/main.py -i /mnt/data/pytorch_transfer_learning/input -o /mnt/data/pytorch_transfer_learning/output |
  | Environment Context | Third-party Library Configuration | Enter the following third-party libraries: numpy==1.16.4 and absl-py==0.11.0 |
  | Environment Context | Code Configuration | Not required for this procedure. |
  | Resource Information | Resource Source | Select Public Resources. |
  | Resource Information | Framework | Select PyTorch. |
  | Resource Information | Job Resources | Select a resource specification. For example, under Resource Specification, select CPU > ecs.g6.xlarge, and set Nodes to 1. |

- Click OK to create the job.

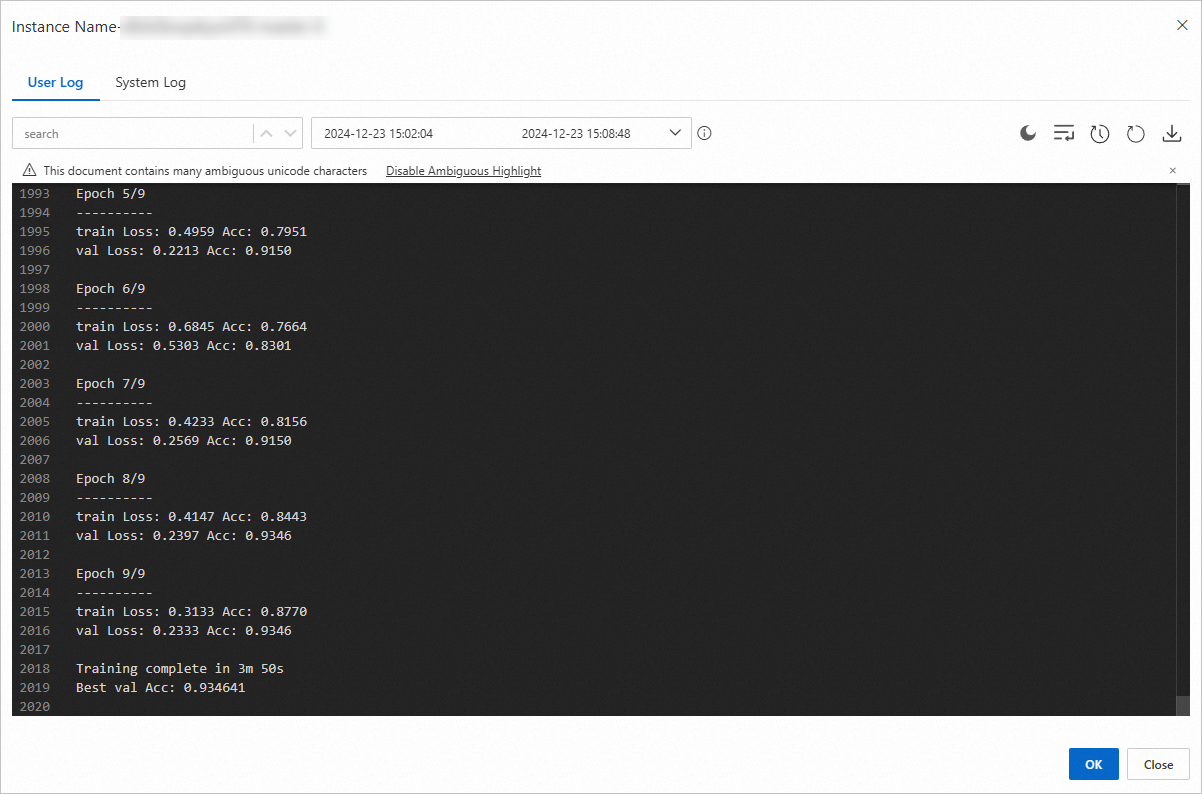
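The start command passes the input and output directories with the -i and -o flags. The contents of main.py are not shown in this document, so the following is only a sketch of how such a command could be assembled from the dataset mount root and how a script might read the two flags with argparse. `build_start_command`, `parse_args`, and the long option names `--input` and `--output` are assumptions made for illustration.

```python
import argparse
import shlex
from pathlib import PurePosixPath


def build_start_command(mount_root, project="pytorch_transfer_learning"):
    """Assemble the start command for a dataset mounted at mount_root."""
    base = PurePosixPath(mount_root) / project
    parts = [
        "python", str(base / "main.py"),
        "-i", str(base / "input"),
        "-o", str(base / "output"),
    ]
    return " ".join(shlex.quote(p) for p in parts)


def parse_args(argv=None):
    """Parse -i/-o the way a training script might (assumed, not the real main.py)."""
    parser = argparse.ArgumentParser(description="Transfer learning job (sketch)")
    parser.add_argument("-i", "--input", required=True, help="directory with training data")
    parser.add_argument("-o", "--output", required=True, help="directory for the trained model")
    return parser.parse_args(argv)


if __name__ == "__main__":
    command = build_start_command("/mnt/data")
    print(command)
    # Drop "python" and the script path, then parse the remaining flags.
    args = parse_args(shlex.split(command)[2:])
    print(args.input, args.output)
```

Building the command from the mount root makes it clear why the paths differ between the two products: the same NAS folder appears under /mnt/workspace/ in the DSW commands and under /mnt/data/ in the DLC start command.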

View job status

- On the Deep Learning Containers (DLC) page, click the job name to view details.
- View Basic Information and Resource Information on the job details page.
- In the Instance section, click Log in the Actions column to view training logs.

Example training logs: