Alibaba Cloud E-MapReduce (EMR) is the first big data system certified to run the TPC-DS 100 TB benchmark. This topic walks you through the complete process: setting up an EMR cluster, generating TPC-DS data on OSS-HDFS, and running all 99 SQL statements using either Apache Hive or Apache Spark.

Use cases

Big data performance evaluation: Use TPC-DS as a decision support benchmark when evaluating OSS-HDFS as Hadoop-compatible storage, especially for large-scale data analysis and query workloads.

Data lake architecture verification: Run TPC-DS in EMR clusters to get objective, standardized results for your OSS-based data lake—covering complex query processing, extract, transform, and load (ETL) jobs, and data warehouse performance.

Capacity planning: When scaling out a cluster, upgrading hardware, or adjusting storage policies, TPC-DS gives you a consistent yardstick to measure how performance changes with data volume and compute resources.

Cost-effectiveness analysis: Measure OSS-HDFS performance against TPC-DS to determine whether OSS-HDFS fits your workload and budget.

Background

TPC-DS is a standard benchmark designed and maintained by the Transaction Processing Performance Council (TPC). The official TPC-DS tools target standalone environments and are not suited for large-scale distributed clusters. To run TPC-DS on EMR, you need the following:

Hive TPC-DS benchmark testing tool: Developed by Hortonworks, this tool is customized for Hive and Spark in the Hadoop ecosystem. It supports generating and running TPC-DS and TPC-H SQL in a cluster environment.

EMR cluster version 5.15.1 or later: This version supports the Hortonworks Data Platform (HDP) 3 series, which corresponds to Hive 3.1.

Prerequisites

Before you begin, make sure you have:

An Alibaba Cloud account with permissions to create EMR clusters

An OSS bucket with OSS-HDFS enabled

SSH access to the emr-master node

Step 1: Create an EMR cluster and download the testing tool

Create an EMR cluster

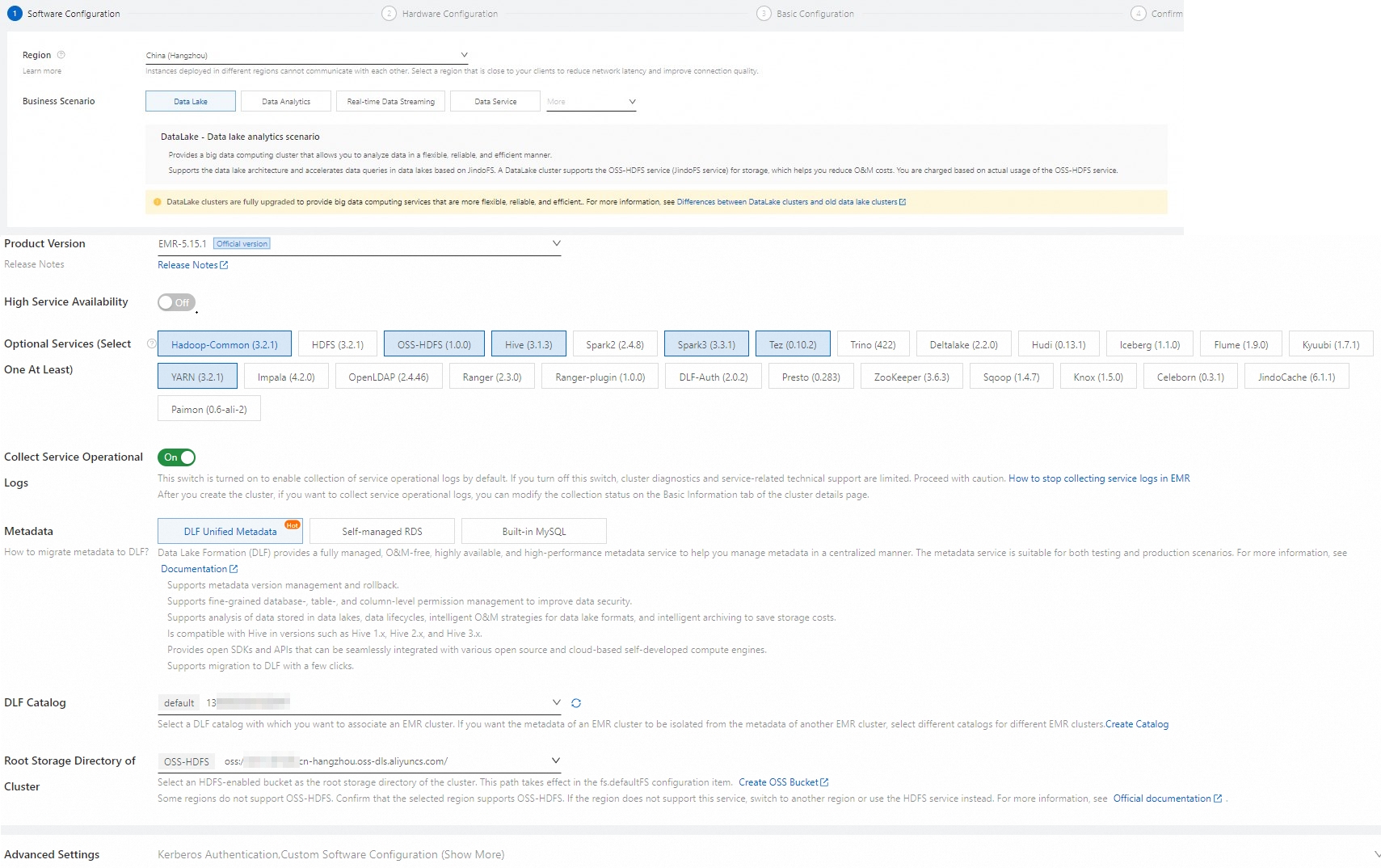

Create an EMR cluster of version 5.15.1 or later. Configure the following key parameters. For other parameters, see Create a cluster.

ImportantThe total data disk capacity of your core nodes must be more than three times the dataset size. For best query performance, use a big data or local SSD instance type for the emr-core node. A general-purpose instance with 4 vCPUs and 16 GiB of memory works for small-scale testing.

Category Parameter Value Software configurations Business Scenario New Data Lake Metadata DLF Unified Metadata Root Storage Directory of Cluster Select a bucket with OSS-HDFS enabled Hardware configurations Node Group Assign Public Network IP

Install Git and Maven

Log on to the emr-master node via SSH. For details, see Log on to a cluster.

Install Git:

sudo yum install -y gitInstall Apache Maven:

Download the latest binary archive (for example,

apache-maven-3.9.6-bin.tar.gz) from the Apache Maven Project page and upload it to the emr-master node.Extract the archive:

tar zxf apache-maven-3.9.6-bin.tar.gzConfigure environment variables:

cd apache-maven-3.9.6 export MAVEN_HOME=`pwd` export PATH=`pwd`/bin:$PATH

Download the Hive TPC-DS benchmark testing tool

Download the tool using one of the following methods:

Clone from GitHub (may be slow from regions in the Chinese mainland):

git clone https://github.com/hortonworks/hive-testbench.gitDownload the ZIP package: Download hive-testbench-hdp3.zip to your local machine, then upload it to the emr-master node.

Extract the ZIP package:

unzip hive-testbench-hdp3.zip

Step 2: Compile and package the data generator

(Optional) Configure the Alibaba Cloud Maven mirror to speed up compilation in regions in the Chinese mainland. With this mirror, compilation finishes in 2–3 minutes.

mkdir -p ~/.m2/ cp $MAVEN_HOME/conf/settings.xml ~/.m2/Add the following mirror entry to

~/.m2/settings.xml:<mirror> <id>aliyun</id> <mirrorOf>central</mirrorOf> <name>Nexus aliyun</name> <url>http://maven.aliyun.com/nexus/content/groups/public</url> </mirror>Switch to the tool directory:

cd hive-testbench-hdp3Download the tpcds-extern.patch file, upload it to the current directory, and copy it to the patches directory:

cp tpcds-extern.patch ./tpcds-gen/patches/all/Compile and package the data generator:

./tpcds-build.sh

Step 3: Generate and load data

TPC-DS uses a scale factor (SF) to control dataset size, measured in GB. SF=1 produces a 1 GB dataset, SF=100 produces 100 GB, and SF=1000 produces 1 TB.

This example uses SF=3 (3 GB) as a quick demonstration—data generation and loading takes approximately 40–50 minutes at this scale.

Core node total data disk capacity must exceed three times the dataset size. For SF=3, ensure you have at least 9 GB of total core node disk space.

Set the scale factor:

SF=3Check whether the target Hive database already exists:

hive -e "desc database tpcds_bin_partitioned_orc_$SF"If the database exists, drop it before proceeding. Leaving it in place causes errors in subsequent steps:

hive -e "drop database tpcds_bin_partitioned_orc_$SF cascade"Update the Hive service URL in the setup script. The default URL in

tpcds-setup.shpoints to a ZooKeeper-based discovery endpoint that does not match the EMR cluster configuration. Replace it with the direct endpoint:sed -i 's/localhost:2181\/;serviceDiscoveryMode=zooKeeper;zooKeeperNamespace=hiveserver2?tez.queue.name=default/master-1-1:10000\//' tpcds-setup.shThis changes the URL from

jdbc:hive2://localhost:2181/;serviceDiscoveryMode=zooKeeper;zooKeeperNamespace=hiveserver2?tez.queue.name=defaulttojdbc:hive2://master-1-1:10000/.Fix a Hive parameter compatibility issue. The parameter

hive.optimize.sort.dynamic.partition.threshold=0is not supported in Hive 2 or Hive 3 and causes job failures. Replace it with the supported equivalent:sed -i 's/hive.optimize.sort.dynamic.partition.threshold=0/hive.optimize.sort.dynamic.partition=true/' settings/*.sqlGenerate and load data. On completion, the TPC-DS data tables are created in the

tpcds_bin_partitioned_orc_$SFdatabase and the data files are saved to the root storage path of your OSS-HDFS bucket../tpcds-setup.sh $SFCollect Hive table statistics. Without statistics, Hive's query optimizer cannot choose optimal execution plans, which significantly degrades query performance. For SF=3, this step takes approximately 20–30 minutes.

hive -f ./hive-testbench-hdp3/ddl-tpcds/bin_partitioned/analyze.sql \ --hiveconf hive.execution.engine=tez \ --database tpcds_bin_partitioned_orc_$SF

Step 4: Run TPC-DS SQL statements

TPC-DS has 99 SQL queries. Run them using Hive or Spark.

Use Hive to run TPC-DS queries

All 99 Hive SQL files are in the sample-queries-tpcds directory. With SF=3, each query returns results within 5 minutes.

TPC-DS queries and data are randomly generated. Some SQL statements may return no records—this is expected.

Run a single query:

cd sample-queries-tpcds

hive --database tpcds_bin_partitioned_orc_$SF

set hive.execution.engine=tez;

source query10.sql;Run all 99 queries in sequence:

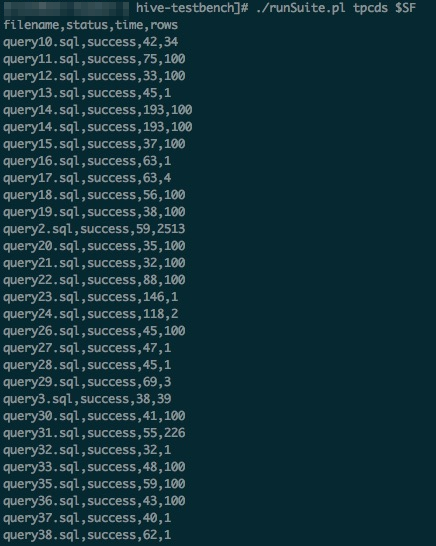

cd ~/hive-testbench-hdp3

echo 'set hive.execution.engine=tez;' > sample-queries-tpcds/testbench.settings

./runSuite.pl tpcds $SF

Use Spark to run TPC-DS queries

The tool provides sample Spark SQL files in the spark-queries-tpcds directory. This example uses Spark Beeline connected to Spark Thrift Server.

EMR Spark supports storing tables across multiple storage systems (HDFS and OSS) and storing metadata in DLF.

Collect Hive table statistics via Spark Beeline. Without statistics, the query optimizer cannot select optimal execution plans, which degrades query performance.

cd ~/hive-testbench-hdp3 spark-beeline -u jdbc:hive2://master-1-1:10001/tpcds_bin_partitioned_orc_$SF \ -f ./ddl-tpcds/bin_partitioned/analyze.sqlSwitch to the Spark SQL directory:

cd spark-queries-tpcds/Run a single query:

spark-beeline -u jdbc:hive2://master-1-1:10001/tpcds_bin_partitioned_orc_$SF -f q1.sqlRun all 99 queries in sequence:

ImportantIn

q30.sql, the column namec_last_review_date_skis incorrectly written asc_last_review_date. This causes query 30 to fail—this is a known issue in the tool.for q in `ls *.sql`; do spark-beeline -u jdbc:hive2://master-1-1:10001/tpcds_bin_partitioned_orc_$SF -f $q > $q.out done

FAQ

Spark SQL job submissions fail when running all 99 queries

The default memory allocation for Spark Thrift Server is too low for large datasets. If job submissions fail, increase the spark_thrift_daemon_memory parameter and restart Spark Thrift Server:

In the EMR console, click EMR on ECS in the left-side navigation pane.

In the top navigation bar, select the region where your cluster resides and select a resource group.

Find your cluster and click Services in the Actions column.

On the Services tab, find Spark3 and click Configure.

Search for

spark_thrift_daemon_memoryand increase its value based on your dataset size. Click Save, enter an execution reason in the dialog box, and click Save again.Restart Spark: choose More > Restart in the upper-right corner of the Spark service page, enter an execution reason, and click OK. In the Confirm dialog box, click OK.