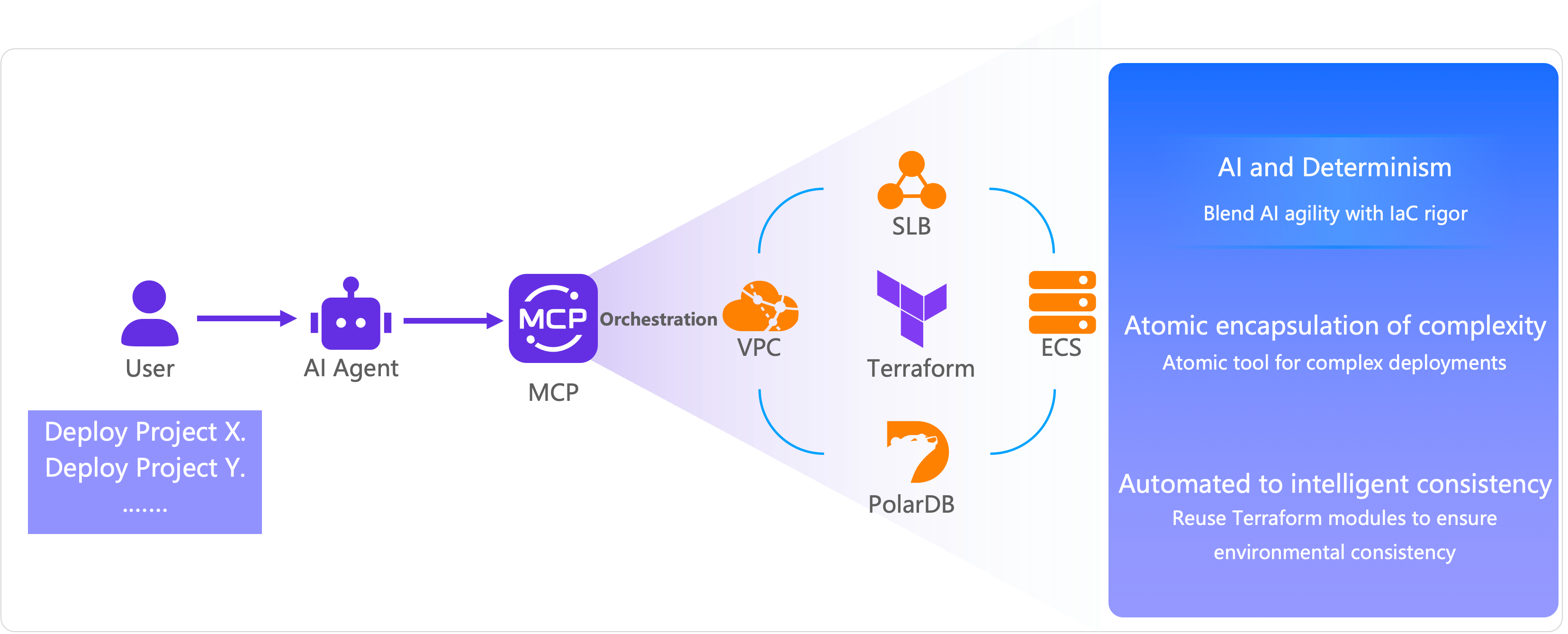

Terraform is an infrastructure as code (IaC) tool that lets you define and provision cloud resources using the HashiCorp Configuration Language (HCL). OpenAPI Model Context Protocol (MCP) Server integrates with Terraform, allowing you to create Terraform tools that AI agents can execute. This combines the flexibility of an AI agent with the deterministic orchestration of IaC.

Overview

MCP is an open standard that enables AI models to securely connect with external tools, applications, and data sources. OpenAPI MCP Server implements this protocol for Alibaba Cloud services, providing a bridge between AI agents and cloud infrastructure management.

How it works

When you create a Terraform tool in OpenAPI MCP Server, the system:

Stores your Terraform code as an executable tool.

Makes the tool available to AI agents through MCP.

Executes the Terraform code when an AI agent calls the tool.

Returns execution results or task status to the agent.

This ensures that AI agents use current Alibaba Cloud provider information and best practices, rather than potentially outdated training data.

What you can do

With Terraform tools in OpenAPI MCP Server, you can:

Create reusable Terraform configurations that AI agents can execute.

Automate infrastructure provisioning through natural language commands.

Manage cloud resources with deterministic, version-controlled configurations.

Combine AI flexibility with IaC reliability.

Prerequisites

Before you begin, ensure that you have:

An Alibaba Cloud account with appropriate permissions.

Access to the OpenAPI MCP Server page.

Basic understanding of Terraform and HCL syntax.

(Optional) An IDE client, such as AI Coding Assistant Lingma, configured to use OpenAPI MCP Server.

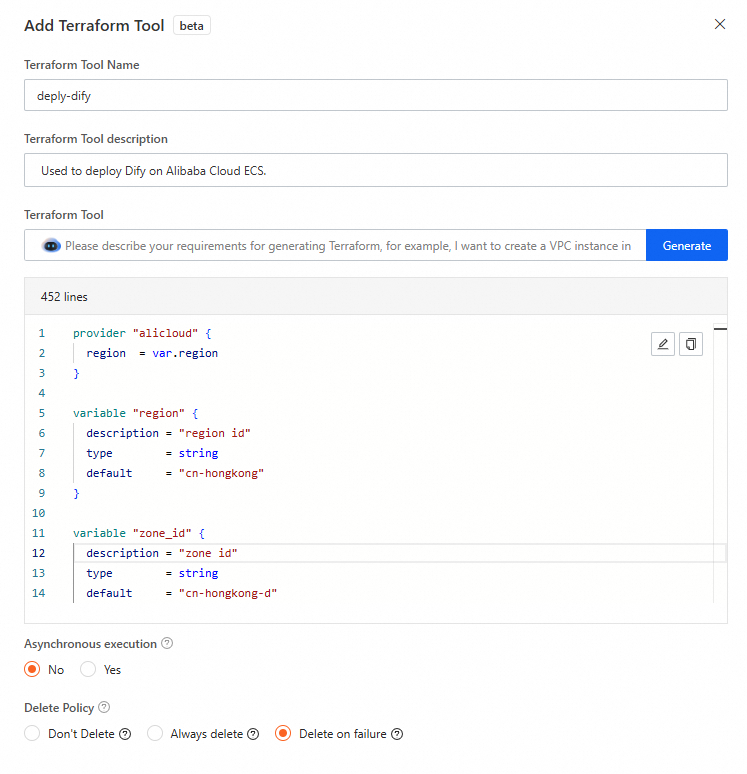

Create a Terraform tool

Go to the OpenAPI MCP Server page. On the Create an MCP Server tab, click Add Terraform Tools.

In the Add Terraform Tool panel, configure the following parameters.

| Parameter | Description |
| --- | --- |
| Terraform Tool Name | The name of the Terraform tool. |
| Terraform Tool Description | A description of the Terraform tool's function or any important notes. |
| Terraform Tool | The Terraform code for the tool. You can use the built-in Terraform AI assistant to generate the code or write it yourself. |
| Asynchronous Execution | The execution mode for the Terraform tool. Valid values:<br>No: The agent waits for the task to complete before returning the results. Use this mode for simple, fast-running configurations (typically completes in less than 30 seconds).<br>Yes: The agent call returns a `TaskId` immediately. OpenAPI MCP Server automatically adds the `QueryTerraformTaskStatus` system tool, which you can then call with the `TaskId` to check the task's status.<br>Note: We recommend selecting Yes for complex or long-running Terraform configurations to prevent model invocation timeouts. |
| Deletion Policy | The resource cleanup behavior after execution. Valid values:<br>Never Delete: Resources are not deleted after the task runs, regardless of success or failure. Use this option when you want to keep resources for further use or manual cleanup.<br>Always Delete: All created resources are immediately deleted after the task runs, regardless of success or failure. Use this option for temporary test environments.<br>Delete on Failure: Created resources are deleted only if the task fails. Use this option to clean up resources when deployment fails, while preserving successful deployments.<br>Note: If you select Never Delete or Delete on Failure, this tool cannot be used to release the resources later. Running the tool again will attempt to create a new set of resources. |

Write the Terraform code. This topic provides an example of Terraform code to deploy Dify for testing. For more Terraform examples, see Terraform tutorials.
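To give a sense of what such code can look like, the following is a minimal sketch and not the Dify example referenced in this topic. It only provisions an ECS instance with a public IP address and an open HTTP port; installing Dify on the instance is left out. The resource label `dify` matches the output example used later in this topic, but every other name, CIDR block, instance size, and image filter here is an illustrative assumption, and the selected zone may not offer the chosen instance type in every region.

```hcl
# Hypothetical sketch only: names, CIDR blocks, and sizes are assumptions.
terraform {
  required_providers {
    alicloud = {
      source = "aliyun/alicloud"
    }
  }
}

# Region ID to deploy into; cn-hongkong is China (Hong Kong).
variable "region" {
  type    = string
  default = "cn-hongkong"
}

provider "alicloud" {
  region = var.region
}

# Look up a zone, an instance type, and a system image instead of hard-coding IDs.
data "alicloud_zones" "default" {
  available_resource_creation = "VSwitch"
  available_disk_category     = "cloud_essd"
}

data "alicloud_instance_types" "default" {
  availability_zone = data.alicloud_zones.default.zones[0].id
  cpu_core_count    = 2
  memory_size       = 4
}

data "alicloud_images" "ubuntu" {
  name_regex  = "^ubuntu_[0-9]+_[0-9]+_x64*"
  most_recent = true
  owners      = "system"
}

resource "alicloud_vpc" "dify" {
  vpc_name   = "dify-test-vpc"
  cidr_block = "192.168.0.0/16"
}

resource "alicloud_vswitch" "dify" {
  vpc_id     = alicloud_vpc.dify.id
  cidr_block = "192.168.1.0/24"
  zone_id    = data.alicloud_zones.default.zones[0].id
}

resource "alicloud_security_group" "dify" {
  vpc_id = alicloud_vpc.dify.id
}

# Allow inbound HTTP so that a web page on the instance is reachable.
resource "alicloud_security_group_rule" "http" {
  type              = "ingress"
  ip_protocol       = "tcp"
  port_range        = "80/80"
  security_group_id = alicloud_security_group.dify.id
  cidr_ip           = "0.0.0.0/0"
}

resource "alicloud_instance" "dify" {
  instance_name        = "dify-test"
  instance_type        = data.alicloud_instance_types.default.instance_types[0].id
  image_id             = data.alicloud_images.ubuntu.images[0].id
  vswitch_id           = alicloud_vswitch.dify.id
  security_groups      = [alicloud_security_group.dify.id]
  system_disk_category = "cloud_essd"

  # A value greater than 0 assigns a public IP address to the instance.
  internet_max_bandwidth_out = 10
}
```

Using data sources rather than fixed IDs keeps the sketch portable across regions, at the cost of less predictable instance types and images. For a repeatable test environment you may prefer to pin both explicitly.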

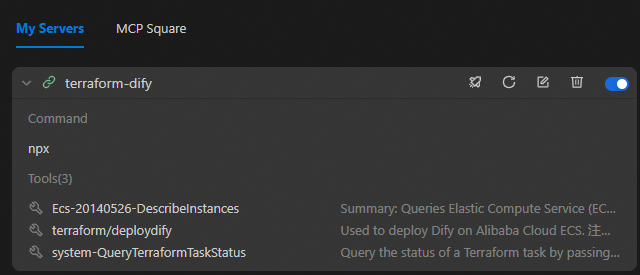

Test the Terraform tool on a client

This section describes how to test the Terraform tool in AI Coding Assistant Lingma.

Step 1: Configure OpenAPI MCP Server in Lingma

Configure OpenAPI MCP Server in Lingma. After the configuration is applied, information about the tool is displayed in Lingma.

Step 2: Run the Terraform tool

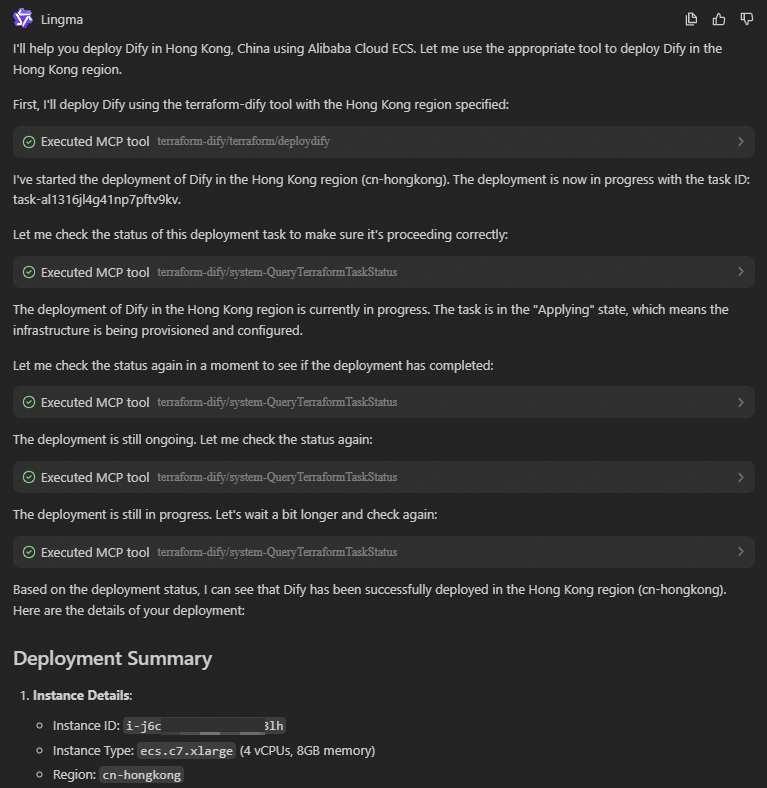

Enter a natural language command to run the Terraform tool through OpenAPI MCP Server. For example, enter "Help me deploy Dify in China (Hong Kong)".

The AI agent processes your request and calls the Terraform tool.

Step 3: Query task status (asynchronous execution)

If you set Asynchronous Execution to Yes:

The agent returns a `TaskId` immediately after calling the Terraform tool.

Use this `TaskId` to call the `QueryTerraformTaskStatus` system tool to query the task status.

Monitor the task status until the task completes or fails.

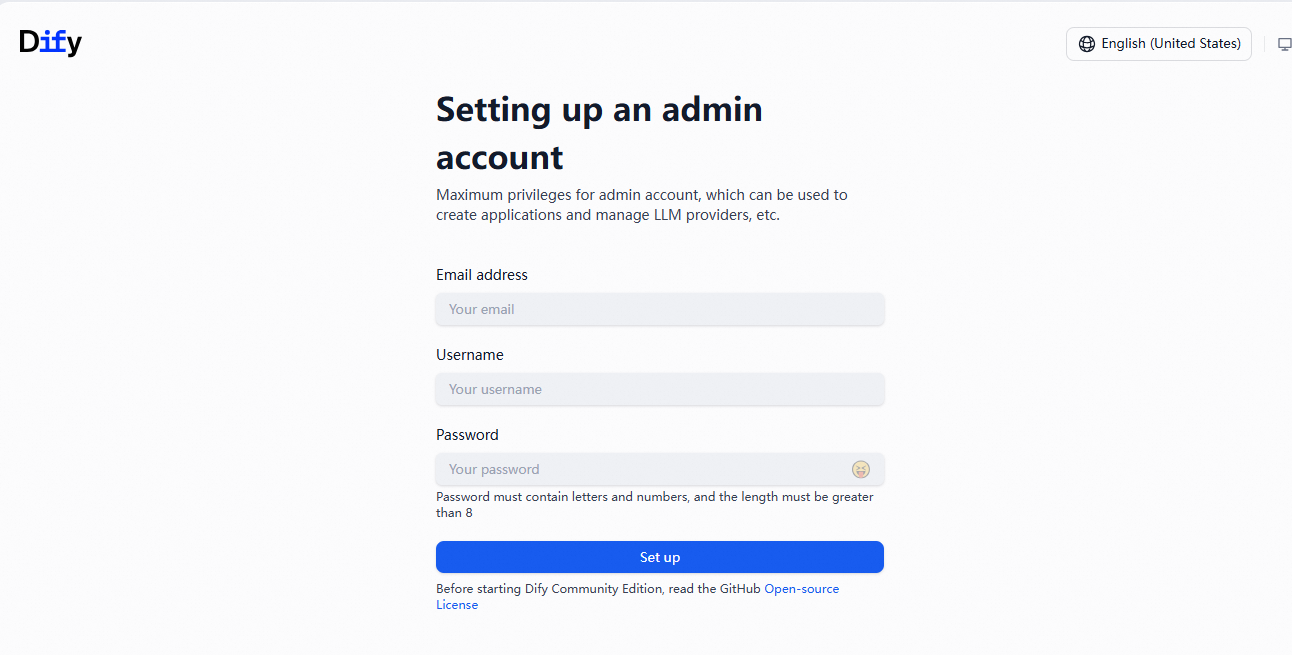

Step 4: Verify deployment

After the Terraform execution completes, obtain the public IP address of the created ECS instance:

Log on to the Elastic Compute Service (ECS) console. In the left-side navigation pane, choose .

Find the instance created by the Terraform tool.

Copy the public IP address from the instance details page.

Alternatively, you can add an output to your Terraform code to automatically return the public IP address:

    output "public_ip" {
      value = alicloud_instance.dify.public_ip
    }

In a browser, enter `http://<Public IP address>`. If you see the Dify setup page, the deployment is successful and the Terraform tool ran correctly.
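As a possible extension of the `public_ip` output in this step, the sketch below also returns a ready-to-open URL and the instance ID, which can make the result easier to act on and the instance easier to find in the ECS console. The output names `dify_url` and `instance_id` are illustrative. Only the `alicloud_instance.dify` resource reference comes from the example in this topic.

```hcl
# Hypothetical additional outputs; the names are assumptions.
output "dify_url" {
  description = "URL of the setup page, built from the instance's public IP address."
  value       = "http://${alicloud_instance.dify.public_ip}"
}

output "instance_id" {
  description = "ID of the ECS instance, for locating it in the ECS console."
  value       = alicloud_instance.dify.id
}
```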