OpenSearch Retrieval Engine Edition is a large-scale distributed search engine developed by Alibaba. It powers the search services for the entire Alibaba Group, including Taobao, Tmall, Cainiao, Youku, and e-commerce businesses outside China. It also supports the OpenSearch service on Alibaba Cloud. After years of development, OpenSearch Retrieval Engine Edition has evolved to meet business needs for high availability (HA), real-time performance, and low cost. It also includes a mature automated operations and maintenance (O&M) system. This system lets you easily build search services tailored to your business needs.

Service architecture

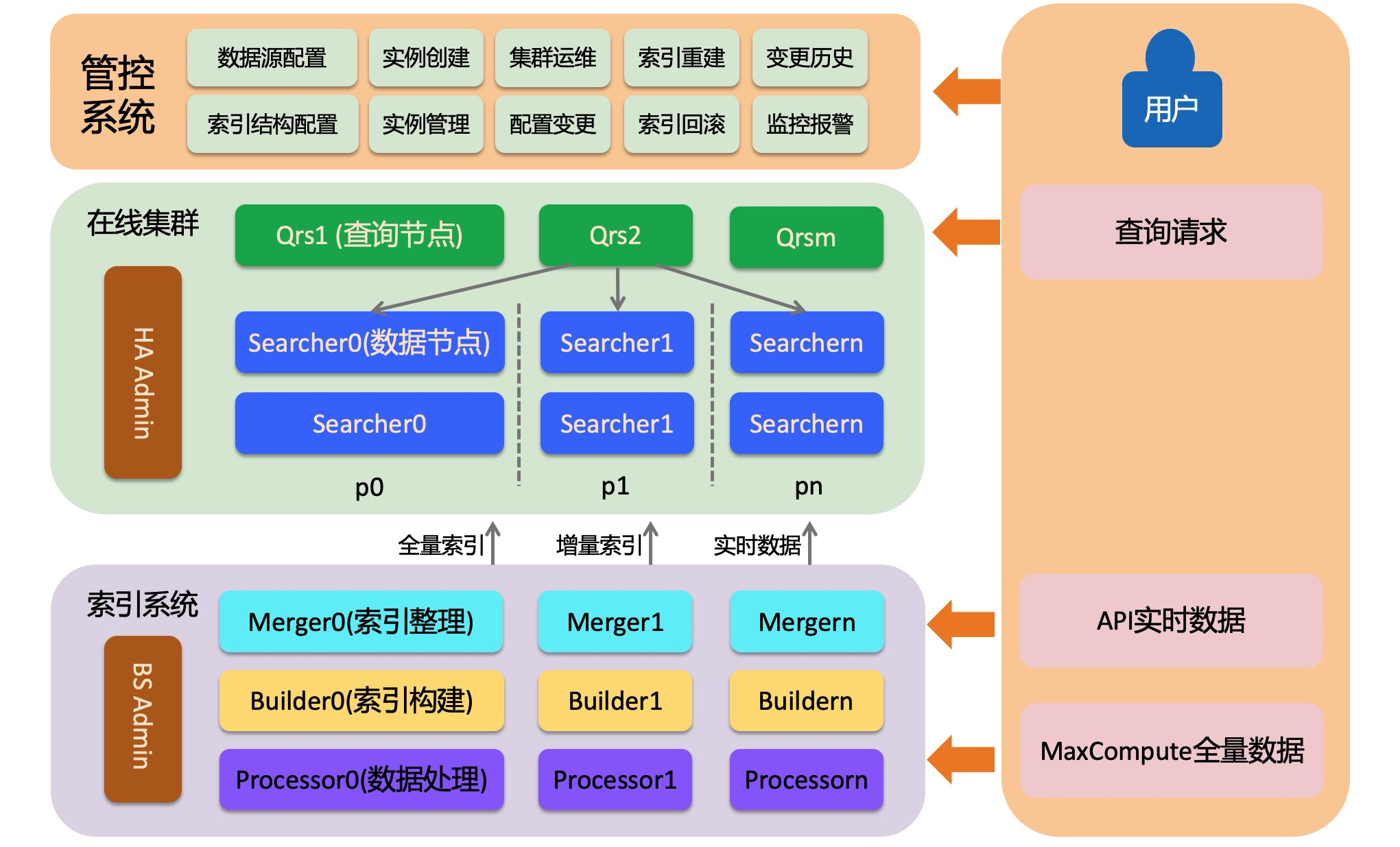

OpenSearch Retrieval Engine Edition consists of three main parts: an online system, an offline index building system, and a control system. The online system loads indexes and provides retrieval services. The offline index building system builds indexes from user data. This includes full indexes, batch incremental indexes, and real-time indexes. The control system provides automated O&M services and helps you create and manage clusters.

Online system

The online system is a distributed information retrieval system. It consists of three roles: HA Admin, Qrs, and Searcher. The following sections describe each role.

HA Admin

The HA Admin is the brain of the online system. Each physical cluster has at least one HA Admin. The HA Admin receives commands from the control system and sends O&M instructions to the Qrs and Searcher nodes. It also monitors the Qrs and Searcher nodes in real time. If a node's heartbeat is abnormal, the HA Admin automatically replaces the node.
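As a rough illustration of heartbeat-based monitoring, the sketch below flags nodes whose last heartbeat is older than a timeout. The timeout rule, the function name `find_unhealthy`, and the node names are assumptions made for this example; the source does not describe how the HA Admin decides that a heartbeat is abnormal.

```python
def find_unhealthy(last_heartbeat, now, timeout_seconds):
    """Return the nodes whose last heartbeat is older than timeout_seconds.

    last_heartbeat maps a node name to the time (in seconds) of its most
    recent heartbeat. Treating "abnormal" as "too old" is an assumption.
    """
    return sorted(
        node
        for node, seen_at in last_heartbeat.items()
        if now - seen_at > timeout_seconds
    )


# Hypothetical node names, used only for illustration.
heartbeats = {"qrs-0": 100.0, "searcher-0": 99.0, "searcher-1": 80.0}
to_replace = find_unhealthy(heartbeats, now=101.0, timeout_seconds=10.0)
```

In this sketch, only `searcher-1` exceeds the timeout, so it is the only candidate for replacement.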

Qrs (QRS worker)

Qrs is also known as a QRS worker or a query and result processing node. It parses, validates, or rewrites incoming query requests. It then forwards the parsed requests to the Searcher for execution. It collects and merges the results from the Searcher, processes the results, and returns them to the user. A QRS worker is a compute-optimized node. It does not load user data and usually does not require much memory. However, it can consume a large amount of memory if many documents are returned or if many statistical entries are generated. If the processing capacity of a QRS worker becomes a bottleneck, you can increase the number of backup nodes or upgrade the node specifications.
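The collect-and-merge step can be sketched as a k-way merge of per-Searcher result lists. This is a minimal illustration, not the engine's implementation: it assumes each Searcher returns hits already sorted by descending score, and the `(score, doc_id)` tuple layout and the name `merge_top_k` are hypothetical.

```python
import heapq
from itertools import islice


def merge_top_k(per_searcher_hits, k):
    """Merge hit lists from several Searchers into one global top-k list.

    Each input list holds (score, doc_id) tuples sorted by descending
    score. heapq.merge streams the inputs lazily, so only the first k
    merged hits are ever materialized.
    """
    merged = heapq.merge(*per_searcher_hits, key=lambda hit: hit[0], reverse=True)
    return list(islice(merged, k))


shard_a = [(0.9, "a1"), (0.4, "a2")]
shard_b = [(0.8, "b1"), (0.7, "b2")]
top3 = merge_top_k([shard_a, shard_b], k=3)
# top3 == [(0.9, "a1"), (0.8, "b1"), (0.7, "b2")]
```

The sketch also suggests why returning many documents raises memory use on a QRS worker: the merged list grows with `k` and with the size of each hit.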

Searcher (data node)

A Searcher loads user index data. It retrieves documents based on queries and performs operations such as filtering, statistics, and sorting. Indexes on a Searcher can be sharded. Sharding hashes the value of the sharding field to an integer between 0 and 65,535. This range is then divided into the number of shards specified during index building. For clusters with large data volumes or high query performance requirements, sharding can improve the processing performance of a single request. To increase the overall processing capacity of the cluster, for example, from 1,000 QPS to 10,000 QPS, you can scale out by adding more backups. Each backup holds a complete copy of the data, so a backup can consist of multiple Searcher nodes rather than a single node, and the shards in a backup must together cover the complete [0, 65,535] range.
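The range split described above can be sketched as follows. This is an illustration under stated assumptions: CRC32 stands in for the engine's hash function, which the source does not name, and the way a remainder is distributed across shards is a guess. The helper names are hypothetical.

```python
import zlib

HASH_SPACE = 65536  # hash values fall in [0, 65535]


def shard_ranges(shard_count):
    """Split [0, 65535] into shard_count contiguous, inclusive ranges."""
    if not 1 <= shard_count <= HASH_SPACE:
        raise ValueError("shard_count must be between 1 and 65536")
    base, extra = divmod(HASH_SPACE, shard_count)
    ranges = []
    start = 0
    for i in range(shard_count):
        # Assumption: the first `extra` shards take one extra slot each.
        size = base + (1 if i < extra else 0)
        ranges.append((start, start + size - 1))
        start += size
    return ranges


def hash_to_slot(key):
    """Map a sharding-field value to [0, 65535] (CRC32 is a stand-in)."""
    return zlib.crc32(key.encode("utf-8")) % HASH_SPACE


def shard_for(key, ranges):
    """Return the index of the shard whose range contains the key's slot."""
    slot = hash_to_slot(key)
    for index, (low, high) in enumerate(ranges):
        if low <= slot <= high:
            return index
    raise AssertionError("ranges do not cover the hash space")


two_shards = shard_ranges(2)
# two_shards == [(0, 32767), (32768, 65535)]
```

With two shards, the ranges match the `partition_0_32767` and `partition_32768_65535` directory names that appear in the index structure.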

Offline index building system

OpenSearch Retrieval Engine Edition is a search engine that uses read/write splitting. Data writes do not affect the online retrieval service. This ensures stable query services while supporting large-scale, real-time data writes. The index building system includes two main flows: full and incremental. In each flow, three roles (Processor, Builder, and Merger) are responsible for data processing and index building.

Full flow

Indexes in OpenSearch Retrieval Engine Edition support multiple versions. Each index version is built from raw data (API data sources are empty by default). The first build initiates a full data processing flow. This flow is a one-time job that processes the data to generate a full index. The generated full index is then switched to the online cluster to provide retrieval services. Subsequent incremental data updates are applied to the new full index. Currently, full jobs can only read full data from MaxCompute or Hadoop Distributed File System (HDFS) data sources.

Multiple index versions ensure stability during data modifications. When the index schema or data structure changes, a new index is generated through a full build and is completely isolated from the old version. This lets you promptly roll back if the changes introduce any issues.

Full index generation involves multiple stages, such as data processing, index building, and index merging. To increase the generation speed, you can set the concurrency for index processing at each stage.

Incremental flow

After a full index is generated, subsequent data updates are pushed through an API and handled by two processing pipelines. In the real-time pipeline, the data is processed by a Processor and pushed directly to a data node, which builds a real-time index in memory. In the incremental pipeline, the data is processed by a Builder and a Merger to create an incremental index, which is then applied to the data node through an incremental switch. During an incremental switch, the real-time index in memory is trimmed: data already included in the incremental index is deleted from the real-time index to reduce memory pressure on the data node.
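The cleanup that happens during an incremental switch can be sketched as a timestamp cut-off. The sketch assumes that every real-time document carries a timestamp and that the incremental index covers all documents up to and including its own timestamp. The class `RealtimeIndex` and its methods are hypothetical and exist only for this example.

```python
from dataclasses import dataclass, field


@dataclass
class RealtimeIndex:
    """Toy in-memory real-time index: doc_id -> (timestamp, document)."""

    docs: dict = field(default_factory=dict)

    def add(self, doc_id, timestamp, document):
        self.docs[doc_id] = (timestamp, document)

    def trim(self, incremental_timestamp):
        """Drop documents already covered by the incremental index.

        Assumption: a document is covered when its timestamp is less than
        or equal to the timestamp of the incremental index.
        """
        stale = [
            doc_id
            for doc_id, (timestamp, _) in self.docs.items()
            if timestamp <= incremental_timestamp
        ]
        for doc_id in stale:
            del self.docs[doc_id]
        return len(stale)


rt = RealtimeIndex()
rt.add("doc-1", 100, {"title": "old"})
rt.add("doc-2", 205, {"title": "new"})
removed = rt.trim(incremental_timestamp=200)  # doc-1 is now served by the incremental index
```

After the trim, only documents newer than the incremental index remain in memory.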

Each index table corresponds to an incremental flow, which is a long-running job. You can improve real-time data processing throughput by adjusting the concurrency of each role in the flow.

Processor

A processor handles raw documents. Its main tasks include tokenization or rewriting field content based on business logic. In an incremental flow, the processor is a long-running distributed service. You can adjust its concurrency through configuration to improve data processing capacity. The processor supports the configuration of multiple data processing plugins. This feature is not yet publicly available. If you need it, contact us.

Builder

A Builder builds an index from processed documents. The Builder is not a long-running job. It runs alternately with the Merger. After each data build, a dependent Merger task starts to organize the index. Only the organized index is switched to the data node.

Merger

A Merger consolidates and organizes the index data produced by the Builder. This makes the resulting index more orderly and compact. As data is updated, legacy indexes will contain many records marked for deletion. The Merger cleans up and merges this data according to a specified index merge policy.
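The effect of a merge can be sketched with a toy segment model. This is a simplified illustration, not the engine's merge policy: it assumes each segment stores documents by ID plus a set of IDs marked for deletion, and that newer segments override older ones. The `Segment` class and `merge_segments` function are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Segment:
    """Toy segment: documents by ID plus the IDs marked for deletion."""

    docs: dict
    deleted: set = field(default_factory=set)


def merge_segments(segments):
    """Merge segments (oldest first) into one segment with no deleted records."""
    live = {}
    for segment in segments:
        for doc_id, document in segment.docs.items():
            if doc_id not in segment.deleted:
                # A later segment overrides an earlier version of the same ID.
                live[doc_id] = document
    return Segment(docs=live)


# doc "1" was updated: the old copy is marked deleted, the new copy lives in a newer segment.
old = Segment(docs={"1": "v1", "2": "x"}, deleted={"1"})
new = Segment(docs={"1": "v2"})
merged = merge_segments([old, new])
```

The merged segment keeps one live copy of each document and carries no deletion records, which is what makes the resulting index more compact.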

Index structure of Retrieval Engine Edition

    |-- generation_0
        |-- partition_0_32767
            |-- index_format_version
            |-- index_partition_meta
            |-- schema.json
            |-- version.0
            |-- segment_0
                |-- segment_info
                |-- attribute
                |-- deletionmap
                |-- index
                |-- summary
            |-- segment_1
                |-- segment_info
                |-- attribute
                |-- deletionmap
                |-- index
                |-- summary
        |-- partition_32768_65535
            |-- index_format_version
            |-- index_partition_meta
            |-- schema.json
            |-- version.0
            |-- segment_0
                |-- segment_info
                |-- attribute
                |-- deletionmap
                |-- index
                |-- summary
            |-- segment_1
                |-- segment_info
                |-- attribute
                |-- deletionmap
                |-- index
                |-- summary

| Structure name | Description |
| --- | --- |
| generation | `generation_x` is an identifier that the engine uses to distinguish between different versions of a full index. |
| partition | A partition is the basic unit for a searcher to load an index. Too much data in one partition can degrade searcher performance. Online data is typically divided into multiple partitions to ensure the retrieval efficiency of each searcher. |
| schema.json | Index configuration file. It mainly records information such as fields, index, attribute, and summary. The engine uses this file to load the index. |
| version.0 | Version file. It mainly records the segments that the engine needs to load in the current partition and the timestamp of the latest document. During a real-time build, the engine filters legacy raw documents based on the timestamp of the incremental version. |
| segment | A segment is the basic unit of an index. A segment contains the inverted and forward index structures of a document. The index builder generates a segment with each dump. Multiple segments can be merged based on a merge policy. The available segments in a partition are specified in the version file. |
| segment_info | Segment information summary. It records the number of documents in the current segment, whether the segment has been merged, locator information, and the timestamp of the latest document. |
| index | Inverted index directory. |
| attribute | Forward index directory. |
| deletionmap | Deleted document records. |
| summary | Summary index directory. |
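The partition directory names encode the hash range that each partition holds. The sketch below parses names of the form `partition_<low>_<high>` and checks that a set of partitions covers the full [0, 65,535] range without gaps or overlaps. The helper names are hypothetical, and the check is an illustration rather than part of the engine.

```python
import re

PARTITION_NAME = re.compile(r"^partition_(\d+)_(\d+)$")


def parse_partition(name):
    """Return the inclusive (low, high) hash range encoded in a directory name."""
    match = PARTITION_NAME.match(name)
    if match is None:
        raise ValueError(f"not a partition directory name: {name!r}")
    low, high = int(match.group(1)), int(match.group(2))
    if low > high:
        raise ValueError(f"inverted range in {name!r}")
    return low, high


def covers_hash_space(names, low=0, high=65535):
    """Check that the partitions tile [low, high] with no gaps or overlaps."""
    expected_start = low
    for start, end in sorted(parse_partition(name) for name in names):
        if start != expected_start:
            return False
        expected_start = end + 1
    return expected_start == high + 1


complete = covers_hash_space(["partition_32768_65535", "partition_0_32767"])
```

For the two partitions in the structure above, the check passes; a generation with only `partition_0_32767` would fail it.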

Control system

The control system is an O&M platform for OpenSearch Retrieval Engine Edition instances. This platform significantly reduces O&M costs. For more information about this O&M platform, see the Retrieval Engine Edition product documentation.

Product features

Stable

The underlying layer of Retrieval Engine Edition is implemented in C++. After more than a decade of development, it has supported multiple core businesses and is proven to be highly stable. It is ideal for core search scenarios that require high stability.

Efficient

OpenSearch Retrieval Engine Edition is a distributed search engine. It efficiently supports the retrieval of massive amounts of data. It also supports real-time data updates that take effect in seconds. It is ideal for search scenarios that are sensitive to query latency and require real-time data.

Low cost

OpenSearch Retrieval Engine Edition supports multiple index compression policies. It also supports multi-value index loading. This allows it to meet user query needs at a lower cost.

Rich features

OpenSearch Retrieval Engine Edition supports various analyzer types, multiple index types, and a powerful query syntax. These features can effectively meet user retrieval needs. It also provides a plugin mechanism that lets you customize your business processing logic.

SQL query

OpenSearch Retrieval Engine Edition supports SQL query syntax and online multi-table joins. It provides a rich set of built-in User-Defined Functions (UDFs) and a UDF customization mechanism to meet the retrieval needs of different users. SQL Studio will soon be integrated into the O&M system to help you develop and test SQL queries.