This FAQ covers common questions about graceful start and shutdown in Microservices Engine (MSE) Microservices Governance, including low-traffic service prefetching, readiness probes, proactive notification, and troubleshooting during rolling deployments.

Quick diagnosis

| Symptom | Most likely cause | Solution |
|---|---|---|
| QPS surges at one point during prefetching | Old nodes taken offline before prefetch completes | Configure minReadySeconds or use batch deployment |
| QPS does not gradually increase | Microservices Governance not enabled for the consumer | Enable governance for the consumer |
| Traffic drops to zero during release | 55199/readiness not configured | Configure the MSE readiness probe |
| Traffic does not drop to zero after shutdown | Proactive notification not enabled, or traffic from non-microservices sources | Enable proactive notification or check traffic sources |
| Release time increases after enabling graceful start | Legacy "service prefetch before readiness probe" feature still active | Check and disable the legacy feature |
| MSE readiness probe keeps failing | Graceful start not enabled, governance probe not connected, or startup/liveness probe failures | Troubleshoot readiness probe failures |

Where did the advanced features go?

If you already use the new version of Microservices Governance, this section does not apply to you.

The advanced settings from the old version of Microservices Governance are hidden in the new version to simplify the experience. The following describes how each setting is handled.

Service registration before readiness probe

Still available and enabled by default. If it was previously disabled, re-enabling graceful start on the Graceful Online/Offline page automatically turns it on. Do not disable it -- it has no negative impact and prevents the risk of traffic dropping to zero during releases. For details, see What is 55199/readiness and why does traffic drop to zero without it?.

Service prefetching before readiness probe

No longer available for new configurations. This feature delayed the readiness probe to allow more time for prefetching, which prolonged overall release time. If you previously enabled it, it continues to work and has no negative impact -- do not disable it. For new deployments, follow the practices described in What is the best practice for low-traffic prefetching?.

How does low-traffic service prefetching work?

Low-traffic service prefetching gradually ramps up traffic to newly started provider nodes instead of sending full traffic immediately.

How it works:

A provider starts and registers with the service registry. The registration metadata includes the provider's startup time.

When a consumer selects a provider, Microservices Governance calculates each provider's weight as a percentage (0% to 100%) based on how long the provider has been running.

A newly started provider begins with a low weight, so the consumer calls it with low probability.

The weight gradually increases over time until it reaches 100%, at which point prefetching is complete and the node receives traffic normally.

Requirements:

Microservices Governance must be enabled for both consumers and providers.

Prefetching starts only after the application receives its first request and ends after the configured prefetching duration elapses. If no external requests arrive, prefetching does not begin.

The default prefetching duration is 120 seconds.

Prefetching requires completed service registration. If prefetching start events appear before registration events in the console, see Why do prefetching events appear before service registration events?.
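The weighting described above can be sketched in a few lines of Python. This is an illustration only, not the MSE implementation: it assumes the weight grows linearly with the provider's running time (the real curve may differ), and the names warmup_weight and pick_provider are hypothetical.

```python
import random

DEFAULT_WARMUP_SECONDS = 120  # default prefetching duration stated in this FAQ


def warmup_weight(uptime_seconds: float,
                  warmup_seconds: float = DEFAULT_WARMUP_SECONDS) -> float:
    """Return a provider's weight as a percentage in [0, 100].

    Assumption: the weight grows linearly with running time. The curve that
    Microservices Governance actually applies may be different.
    """
    if warmup_seconds <= 0:
        return 100.0
    fraction = min(max(uptime_seconds / warmup_seconds, 0.0), 1.0)
    return 100.0 * fraction


def pick_provider(uptimes: dict[str, float], rng: random.Random) -> str:
    """Pick one provider at random, proportionally to its current weight."""
    names = list(uptimes)
    weights = [warmup_weight(uptimes[name]) for name in names]
    if sum(weights) == 0:
        # Every provider is brand new: fall back to a uniform choice.
        return rng.choice(names)
    return rng.choices(names, weights=weights, k=1)[0]


if __name__ == "__main__":
    rng = random.Random(0)
    uptimes = {"old-node": 3600.0, "new-node": 12.0}  # seconds of running time
    calls = [pick_provider(uptimes, rng) for _ in range(10_000)]
    print(f"new-node weight: {warmup_weight(12.0):.0f}%")
    print(f"new-node share of calls: {calls.count('new-node') / len(calls):.1%}")
```

Because the selection happens on the consumer side, a consumer without Microservices Governance never applies these weights, which is why both sides must have governance enabled.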

Why is the prefetching data trend in the QPS curve not as expected?

Before reading this section, make sure you understand how low-traffic service prefetching works.

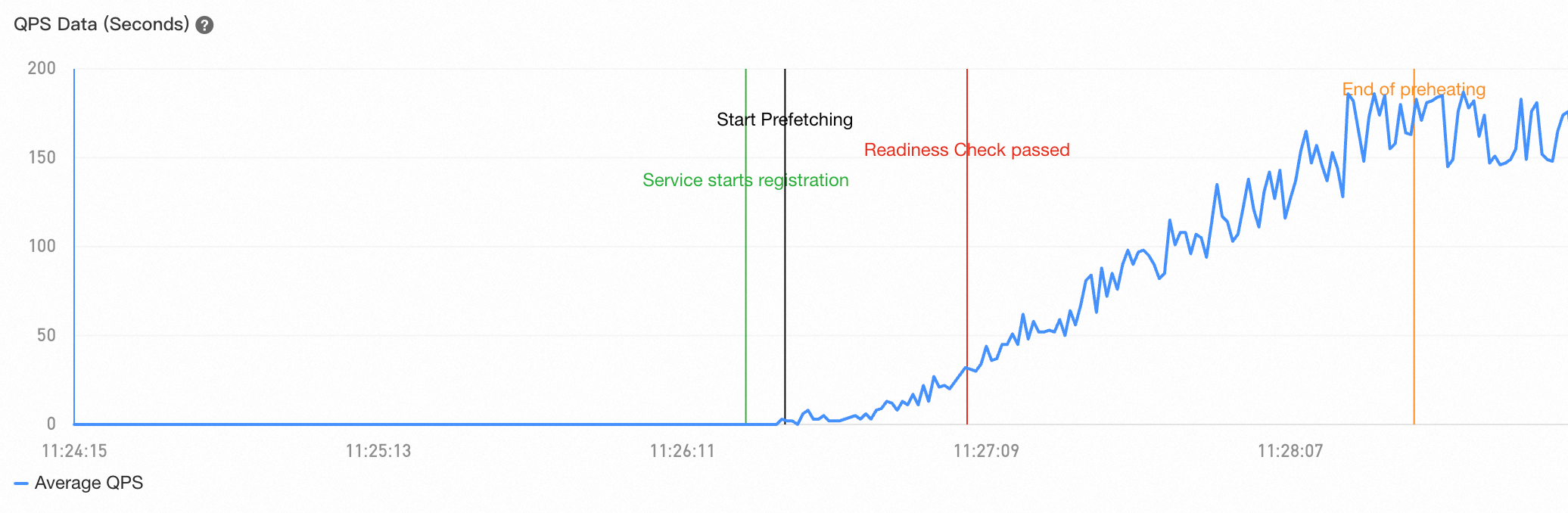

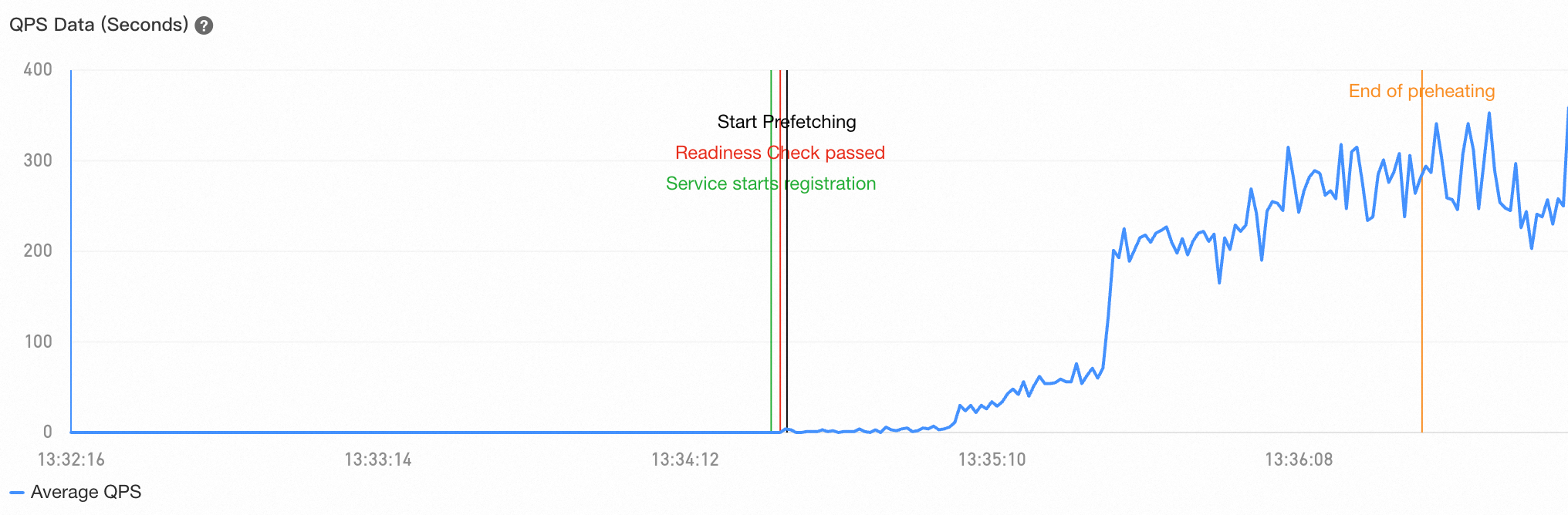

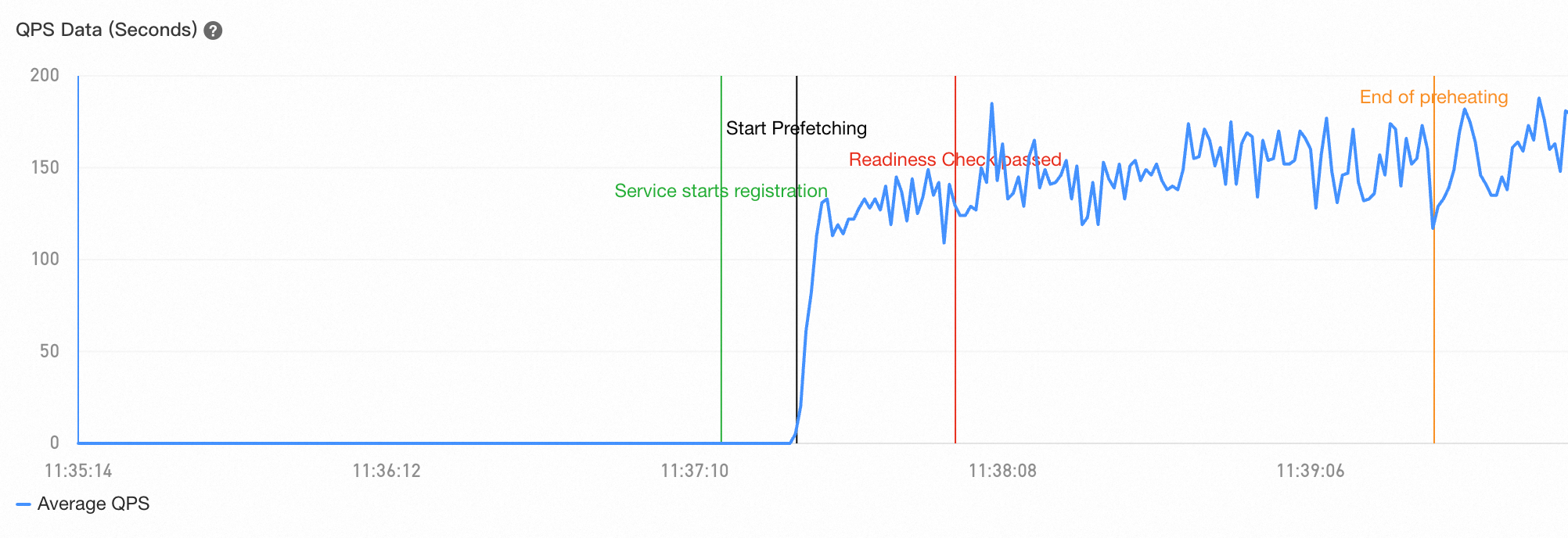

In normal cases, the Queries Per Second (QPS) curve for a prefetched service shows a gradual, smooth increase.

Two deviations from this pattern are common:

QPS surges at a single point

This typically happens during rolling deployments when old nodes go offline before new nodes finish prefetching. Once all old nodes are removed, the consumer has no choice but to route all traffic to the new nodes, causing a sudden QPS spike.

Fix: Follow the best practices for low-traffic prefetching to keep old nodes available until new nodes complete prefetching.
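The spike can be illustrated with a small calculation. The sketch below assumes that old nodes carry a weight of 100 and that consumers split calls in proportion to weight. The function name new_node_share is hypothetical.

```python
def new_node_share(new_weights: list[float], old_node_count: int) -> float:
    """Fraction of consumer calls that land on new nodes.

    new_weights: current prefetching weights of the new nodes, each in [0, 100].
    old_node_count: old nodes still registered, each assumed to have weight 100.
    """
    new_total = sum(new_weights)
    total = new_total + 100.0 * old_node_count
    if total == 0:
        # Only zero-weight new nodes remain, so they still receive every call.
        return 1.0 if new_weights else 0.0
    return new_total / total


if __name__ == "__main__":
    # Two new nodes at 10% weight while the old nodes are removed one at a time.
    for old_left in (2, 1, 0):
        share = new_node_share([10.0, 10.0], old_left)
        print(f"old nodes left: {old_left} -> new-node share: {share:.0%}")
```

As long as one old node remains, the new nodes receive only a small share. Once the last old node is removed, their share jumps to 100% regardless of their weight.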

QPS does not gradually increase

This usually means Microservices Governance is not enabled for the consumer. Without governance on the consumer side, the consumer cannot calculate provider weights and cannot perform gradual traffic ramping.

Fix: Enable Microservices Governance for the consumer. If traffic comes from an external source (such as a Java gateway) that does not have governance enabled, low-traffic prefetching is not supported for that traffic path.

What is the best practice for low-traffic prefetching?

During rolling deployments, prefetching often fails to complete before old nodes go offline. Use one of these approaches to fix this:

Set minimum ready time (recommended)

Configure .spec.minReadySeconds on your workload to a value greater than the prefetching duration. This tells Kubernetes to wait the specified time after a pod becomes ready before considering it available, which prevents the next rolling update step from proceeding until prefetching completes.

| Parameter | Description | Default |
|---|---|---|
| .spec.minReadySeconds | Minimum time (in seconds) a newly created pod must be ready without any container crashes before the pod is considered available | 0 (pod is available as soon as it is ready) |

If you use Container Service for Kubernetes (ACK), navigate to Container Platform > your application > More > Upgrade Policy > Rolling Upgrade > Minimum Ready Time (minReadySeconds).
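If you manage the workload outside the console, the same setting can be applied as a merge patch. The following sketch builds such a patch in Python. The 30-second margin and the function name min_ready_patch are assumptions chosen for illustration. Use the prefetching duration that is actually configured for your application.

```python
import json


def min_ready_patch(prefetch_seconds: int = 120, margin_seconds: int = 30) -> dict:
    """Build a merge patch that sets .spec.minReadySeconds above the prefetching duration.

    prefetch_seconds defaults to 120, the default prefetching duration in this FAQ.
    margin_seconds is an assumed safety margin, not a documented value.
    """
    if prefetch_seconds < 0 or margin_seconds <= 0:
        raise ValueError("prefetch_seconds must be >= 0 and margin_seconds must be > 0")
    return {"spec": {"minReadySeconds": prefetch_seconds + margin_seconds}}


if __name__ == "__main__":
    # Prints a JSON document suitable for a merge patch.
    print(json.dumps(min_ready_patch()))
```

The printed JSON can be passed to kubectl patch deployment <name> --type merge -p '<json>', where <name> is your Deployment.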

Use batch deployment (recommended)

Deploy workloads in batches using tools like OpenKruise. Set the interval between batches to be longer than the prefetching duration. Wait for each batch to finish prefetching before releasing the next batch.

Increase initialDelaySeconds (not recommended)

Increasing the initialDelaySeconds parameter delays the first readiness probe, but this approach has significant drawbacks:

The value must exceed the sum of the prefetching duration, delayed registration duration, and application startup time.

Application startup time varies as business logic evolves, making this value fragile.

Delaying the readiness probe may prevent newly started nodes from being added to Kubernetes service endpoints in time.

If the QPS curve still does not meet expectations after following these practices, verify that all traffic to the application comes from consumers with Microservices Governance enabled. Traffic from external load balancers or consumers without governance does not follow the prefetching curve.

What is 55199/readiness and why does traffic drop to zero without it?

55199/readiness is a built-in HTTP readiness probe endpoint provided by MSE Microservices Governance. It returns the following responses:

| Probe response | Condition |
|---|---|
| 500 (not ready) | The node has not completed service registration |
| 200 (ready) | The node has completed service registration |

Why this matters for zero-downtime deployments:

By default, Kubernetes does not take old pods offline until new pods are ready. When you configure the readiness probe to use 55199/readiness, a new pod becomes ready only after it completes service registration. This guarantees that old pods are not removed until new pods are registered with the service registry, so the registry always has available nodes.

Without 55199/readiness, old pods may be removed during a release before new pods register. This leaves no available nodes in the registry, causing all consumers to receive errors and traffic to drop to zero.

Strongly recommended: Enable graceful start and configure the 55199/readiness readiness probe for your application.

If your application's probe version is earlier than 4.1.10, configure the readiness check path as /health instead of /readiness. To check the probe version, go to MSE Console > Administration Center > Application Governance, click your application, and then select Node Details. The probe version is displayed on the right.
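The path rule above can be sketched as a small helper that builds the readinessProbe section of a container spec. It reads 55199/readiness as an HTTP GET on port 55199. The periodSeconds and failureThreshold values and the function names are assumptions for illustration. Versions are compared numerically because a plain string comparison would sort 4.1.9 after 4.1.10.

```python
import json

MSE_PROBE_PORT = 55199
PATH_CUTOVER_VERSION = (4, 1, 10)  # probe versions earlier than this use /health


def parse_version(version: str) -> tuple[int, ...]:
    """Turn '4.1.10' into (4, 1, 10). Raises ValueError on non-numeric parts."""
    return tuple(int(part) for part in version.strip().split("."))


def readiness_probe(probe_version: str) -> dict:
    """Build a readinessProbe fragment that targets the MSE endpoint."""
    is_current = parse_version(probe_version) >= PATH_CUTOVER_VERSION
    path = "/readiness" if is_current else "/health"
    return {
        "httpGet": {"path": path, "port": MSE_PROBE_PORT},
        "periodSeconds": 5,     # assumed value, tune for your workload
        "failureThreshold": 3,  # assumed value, tune for your workload
    }


if __name__ == "__main__":
    for version in ("4.1.9", "4.1.10"):
        print(version, json.dumps(readiness_probe(version)))
```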

Why do prefetching events appear before service registration events?

Prefetching starts when the application receives its first external request. However, the first request may not be a microservices call -- it could be a Kubernetes liveness probe check. In that case, the system reports a prefetching start event even though service registration has not completed.

Fix: Add the following environment variable to the provider to exclude specific paths from triggering prefetching:

    # Exclude paths /xxx and /yyy/zz from triggering the prefetch process
    profile_micro_service_record_warmup_ignored_path="/xxx,/yyy/zz"

Replace /xxx and /yyy/zz with your actual probe paths (for example, /healthz or /livez).

This parameter can also be set as a Java Virtual Machine (JVM) startup parameter.

The value does not support regular expressions.
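If you generate workload manifests programmatically, the variable can be built from a list of literal paths. The sketch below is a hypothetical helper, not part of MSE. The leading-slash check is an assumed sanity check, and the example paths are placeholders.

```python
ENV_NAME = "profile_micro_service_record_warmup_ignored_path"


def warmup_ignored_path_env(paths: list[str]) -> dict:
    """Build a container env entry that lists literal paths separated by commas.

    Regular expressions are not supported, so every path is passed through as-is.
    """
    cleaned = [path.strip() for path in paths if path.strip()]
    if not cleaned:
        raise ValueError("at least one path is required")
    for path in cleaned:
        if not path.startswith("/"):
            # Assumed sanity check: request paths normally start with '/'.
            raise ValueError(f"path must start with '/': {path!r}")
    return {"name": ENV_NAME, "value": ",".join(cleaned)}


if __name__ == "__main__":
    # Placeholder probe paths; replace them with the paths your probes call.
    print(warmup_ignored_path_env(["/healthz", "/livez"]))
```

The returned dictionary has the shape of one entry in the env list of a Kubernetes container spec.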

What is proactive notification and when should I enable it?

Proactive notification is a graceful shutdown feature that lets a Spring Cloud provider actively notify consumers when it goes offline, rather than waiting for consumers to discover the change through the registry. In Spring Cloud environments, consumers cache the provider node list locally. Even after receiving a notification from the registry, the local cache may not be refreshed immediately, which can cause the consumer to continue calling offline nodes.

How default graceful shutdown works:

When a provider that is going offline receives a request, it adds a special header to the response. The consumer reads this header and removes the provider from its list. This works well when the consumer sends frequent requests to the provider.

The problem it solves:

If a consumer does not send any requests to a provider during the grace period (approximately 30 seconds) before the provider goes offline, the consumer never sees the special header. It may then send a request after the provider shuts down, which results in an error.

When to enable it:

Enable proactive notification for providers that receive infrequent requests from consumers -- for example, services called at long intervals. Once enabled, the provider proactively sends a network request to notify consumers of its offline status, regardless of whether the consumer is sending requests at that time.

Proactive notification is disabled by default.
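The difference between the two mechanisms can be illustrated with a simplified timeline. The sketch below assumes that the consumer's local cache is not refreshed from the registry in time and that the grace period is exactly 30 seconds. The function name and the outcome labels are hypothetical.

```python
GRACE_PERIOD_SECONDS = 30.0  # approximate grace period mentioned in this FAQ


def consumer_outcome(request_times: list[float],
                     shutdown_start: float,
                     proactive_notification: bool = False,
                     grace_seconds: float = GRACE_PERIOD_SECONDS) -> str:
    """Classify what one consumer experiences when a provider shuts down.

    request_times: moments (in seconds) at which the consumer calls the provider.
    shutdown_start: moment at which the provider begins its graceful shutdown.
    """
    if proactive_notification:
        # The provider tells the consumer directly, whatever the call pattern.
        return "notified"
    stopped_at = shutdown_start + grace_seconds
    for moment in sorted(request_times):
        if moment < shutdown_start:
            continue  # normal call before the shutdown began
        if moment < stopped_at:
            return "header_seen"  # the response carried the offline header
        return "error"  # first call after shutdown began hit a stopped provider
    return "no_calls"


if __name__ == "__main__":
    print(consumer_outcome([0, 10, 20, 30], shutdown_start=15))
    print(consumer_outcome([0, 60], shutdown_start=15))
    print(consumer_outcome([0, 60], shutdown_start=15, proactive_notification=True))
```

A consumer that calls every 10 seconds sees the header during the grace period. A consumer that calls once a minute misses it unless proactive notification is enabled.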

Why does traffic not drop to zero after a graceful shutdown event?

In most cases, traffic drops to zero immediately after a graceful shutdown event. If it does not, check these common causes:

Traffic from non-microservices sources

The graceful shutdown solution handles only requests from microservice applications with Microservices Governance enabled. It does not apply to requests from external load balancers, local scripts, or scheduled tasks. For those traffic sources, configure shutdown handling through the infrastructure or framework directly.

Proactive notification not enabled

If the provider receives infrequent requests from consumers, enable proactive notification. See What is proactive notification and when should I enable it?.

Unsupported framework version

The graceful shutdown solution supports specific Java framework versions. If your application uses an unsupported version, upgrade to a supported version. For details, see Java frameworks supported by Microservices Governance.

Why does the release take longer after enabling graceful start and shutdown?

This is usually caused by the legacy "Complete service prefetch before passing the readiness probe" feature, which delays the readiness probe to allow more time for prefetching.

To check whether this feature is active:

Log on to the MSE console and select a region.

In the left navigation pane, choose Administration Center > Application Administration.

On the Application List page, click the target application and select Traffic Governance > Graceful Online/Offline.

Press F12 to open browser developer tools. In the Network tab, search for the GetLosslessRuleByApp request. Refresh the page if you do not see it.

In the response body, check the Related field under Data. If the value is true, this legacy feature is active.

To disable this feature, submit a ticket.
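If you copy the response body out of the developer tools, the check can be scripted. The sketch below assumes that the body is a JSON object with a Data object containing a boolean Related field, as described above. The sample body is hypothetical and omits every other field.

```python
import json


def legacy_prefetch_enabled(response_body: str) -> bool:
    """Return True if the copied GetLosslessRuleByApp response has Data.Related == true."""
    payload = json.loads(response_body)
    data = payload.get("Data") or {}
    # Only a JSON boolean true counts; a missing field is treated as inactive.
    return data.get("Related") is True


if __name__ == "__main__":
    sample_body = '{"Data": {"Related": true}}'  # hypothetical, trimmed response
    print(legacy_prefetch_enabled(sample_body))
```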

Why does the MSE readiness probe keep failing?

Kubernetes provides three types of probes, each with different behavior on failure:

| Probe type | Purpose | On failure |
|---|---|---|
| Startup probe | Checks whether the application starts successfully | Pod is restarted after reaching the failure threshold |
| Liveness probe | Checks whether the application is alive. Starts after the startup probe succeeds and runs throughout the pod lifecycle. | Pod is restarted after reaching the failure threshold |
| Readiness probe | Checks whether the application is ready to accept traffic. Starts after the startup probe succeeds and runs throughout the pod lifecycle. | Pod is marked as unready but not restarted |

When integrated with MSE Microservices Governance, the 55199/readiness endpoint serves as a readiness probe. It returns a success response only after the application completes service registration, which prevents Kubernetes from proceeding with the rolling update until new pods are fully registered. For more details, see Service registration status check.

Common causes of persistent readiness probe failures:

Graceful start is not enabled. The 55199/readiness endpoint works only when graceful start is active. Enable graceful start for the application.

The application is not connected to service governance. Check whether probe logs exist in the governance probe directory. In a Kubernetes environment, the default probe directory is /home/admin/.opt/AliyunJavaAgent or /home/admin/.opt/ArmsAgent. If no logs directory exists inside, the application failed to connect. Submit a ticket for assistance.

The pod keeps restarting due to startup or liveness probe failures. If the startup or liveness probe failure threshold is reached, Kubernetes restarts the pod, which prevents the readiness probe from ever succeeding. Check the pod's Kubernetes events for startup or liveness probe failure entries.
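The probe directory check can be scripted and run inside the container. The sketch below only tests whether a logs directory exists under each default probe directory named above. The function name is hypothetical, and an existing logs directory does not by itself prove that the connection is healthy.

```python
from pathlib import Path

# Default probe directories in a Kubernetes environment, as listed in this FAQ.
DEFAULT_PROBE_DIRS = (
    "/home/admin/.opt/AliyunJavaAgent",
    "/home/admin/.opt/ArmsAgent",
)


def probe_dirs_with_logs(candidates=DEFAULT_PROBE_DIRS) -> list[str]:
    """Return the probe directories that contain a logs subdirectory."""
    return [str(Path(d)) for d in candidates if (Path(d) / "logs").is_dir()]


if __name__ == "__main__":
    found = probe_dirs_with_logs()
    if found:
        print("probe logs directory found in:", ", ".join(found))
    else:
        print("no logs directory found; the application may not be connected")
```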