When input/output operations per second (IOPS) utilization reaches or approaches 100% on an ApsaraDB for MongoDB instance, the instance may respond slowly or become unavailable. This topic explains how to check IOPS utilization and resolve high IOPS issues.

Background information

To prevent hosts from competing for I/O resources, most cloud database providers use techniques such as control groups (cgroups) to isolate I/O resources and limit IOPS. The upper limit of IOPS varies based on instance specifications.

Monitoring limitations

The IOPS Usage and IOPS Usage (%) metrics cannot be displayed in the ApsaraDB for MongoDB console for the following instance types:

Standalone instances

Replica set instances that run MongoDB 4.2 and use cloud disks

Sharded cluster instances that run MongoDB 4.2 and use cloud disks

For these instances, both metrics appear as 0 on the Monitoring Data page. The value 0 does not reflect actual IOPS usage.

Check IOPS utilization

Use the following methods to find the IOPS limit and the current IOPS usage of your instance:

Log on to the ApsaraDB for MongoDB console. On the Basic Information page, locate the Specification Information section to find the maximum IOPS for your instance. For IOPS limits by instance type, see Instance types.

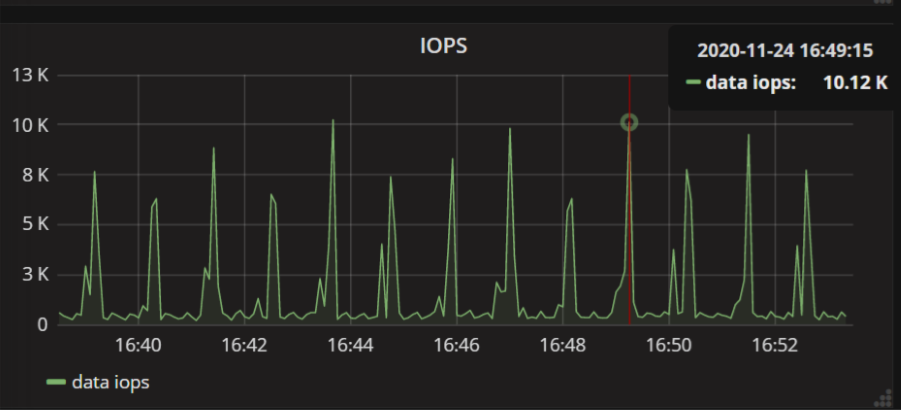

Log on to the ApsaraDB for MongoDB console. On the Monitoring Data page, review the IOPS Usage and IOPS Usage (%) metrics to determine current IOPS consumption. For metric definitions, see Monitoring items and metrics.
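The two values above can be combined into a utilization figure. The following is a minimal sketch, assuming that IOPS Usage (%) is the current IOPS divided by the maximum IOPS of the instance. The function names and the 90% warning threshold are hypothetical and are not part of the console or any ApsaraDB API.

```python
def iops_utilization_percent(current_iops: float, max_iops: float) -> float:
    """Return current IOPS as a percentage of the instance maximum."""
    if max_iops <= 0:
        raise ValueError("max_iops must be positive")
    return current_iops * 100.0 / max_iops


def is_near_limit(current_iops: float, max_iops: float,
                  threshold_percent: float = 90.0) -> bool:
    """Return True when utilization meets or exceeds the (assumed) threshold."""
    return iops_utilization_percent(current_iops, max_iops) >= threshold_percent
```

For example, an instance with a maximum of 8,000 IOPS that currently consumes 4,000 IOPS is at 50% utilization. For instance types that report 0 for both metrics, this calculation is not meaningful.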

Common triggers

High IOPS is typically caused by one of the following:

Insufficient memory: The cache is too small for the hot data set. A larger cache holds more hot data, which reduces the disk I/O required and lowers the probability of I/O bottlenecks. With a smaller cache, less hot data stays in memory and the system flushes dirty pages to disk more frequently, which increases I/O pressure and raises the probability of bottlenecks.

Parameter or configuration issues: Common misconfigurations include overly frequent flushing of journal logs or runtime logs, an improperly configured Write Concern, and invalid moveChunk operations on a sharded cluster instance.

Fix the immediate problem

Take the following actions to reduce IOPS immediately:

Control concurrent write and read threads

MongoDB is a multi-threaded application. High volumes of concurrent writes and complex queries can cause IOPS bottlenecks and continuous replication lag on secondary nodes. Limit the number of concurrent read and write threads that your application runs against the instance. If you need to scale write throughput horizontally, upgrade to a sharded cluster instance, which distributes data across shards.
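One way to cap concurrency on the application side is a fixed-size thread pool. The following is a minimal sketch that uses only the Python standard library. The function name `run_with_bounded_concurrency`, the `worker` callable, and the default of four workers are hypothetical. In a real application, `worker` would wrap the driver call that writes to or reads from the instance.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Iterable, List, TypeVar

T = TypeVar("T")
R = TypeVar("R")


def run_with_bounded_concurrency(tasks: Iterable[T],
                                 worker: Callable[[T], R],
                                 max_workers: int = 4) -> List[R]:
    """Run worker(task) for every task with at most max_workers threads at once."""
    if max_workers < 1:
        raise ValueError("max_workers must be at least 1")
    # The pool never runs more than max_workers calls concurrently,
    # which bounds the number of simultaneous database operations.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map returns results in the same order as the input tasks.
        return list(pool.map(worker, tasks))
```

A suitable value for `max_workers` depends on the instance specifications and the workload, so treat the default as a placeholder to tune against the IOPS metrics.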

Schedule batch operations during off-peak hours

Regular batch writes or bulk data persistence can spike IOPS to the instance maximum. If peak write loads exceed the current instance capacity, upgrade the instance configuration to meet peak write requirements. To smooth the write pattern and avoid simultaneous bursts, add a random time offset to the start time of each batch write operation.
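The random offset can be computed before each batch job starts. The following is a minimal sketch, assuming that each job can tolerate a start delay of up to `max_jitter_seconds`. The function name and parameters are hypothetical.

```python
import random
from typing import Optional


def jittered_delay_seconds(max_jitter_seconds: float,
                           rng: Optional[random.Random] = None) -> float:
    """Return a random delay in [0, max_jitter_seconds] to wait before a batch write."""
    if max_jitter_seconds < 0:
        raise ValueError("max_jitter_seconds must be non-negative")
    # A caller can pass a seeded generator to make the delays reproducible in tests.
    generator = rng if rng is not None else random.Random()
    return generator.uniform(0.0, max_jitter_seconds)
```

A job scheduled on the hour could call `time.sleep(jittered_delay_seconds(300.0))` before it writes, so that jobs that share the same schedule spread their writes over a five-minute window instead of starting at the same moment.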

Schedule O&M operations during off-peak hours

Operations and maintenance (O&M) tasks that heavily affect I/O performance include batch writes, updates, and deletes; index creation; compact operations on collections; and batch data exports. Perform these tasks during off-peak hours to avoid competing with production workloads.

Implement a long-term solution

Address the root cause with these strategies:

Right-size your instance

The ratio of hot data to cache size is difficult to predict in advance, so leave headroom: size your instance so that daily peak CPU utilization and daily peak IOPS utilization both stay within 50%.
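The 50% guideline translates into simple arithmetic. The following is a minimal sketch, assuming you already know the daily peak values from the monitoring metrics. The function names are hypothetical.

```python
def min_iops_limit(peak_iops: float,
                   target_utilization_percent: float = 50.0) -> float:
    """Return the smallest IOPS limit that keeps peak_iops within the target utilization."""
    if peak_iops < 0:
        raise ValueError("peak_iops must be non-negative")
    if not 0 < target_utilization_percent <= 100:
        raise ValueError("target_utilization_percent must be in (0, 100]")
    return peak_iops * 100.0 / target_utilization_percent


def within_target(peak_cpu_percent: float, peak_iops_percent: float,
                  target_percent: float = 50.0) -> bool:
    """Return True when both daily peaks stay within the target utilization."""
    return peak_cpu_percent <= target_percent and peak_iops_percent <= target_percent
```

For example, a workload that peaks at 3,000 IOPS needs an instance type with a maximum of at least 6,000 IOPS to stay within 50%.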

Optimize indexes

Full collection scans and poorly chosen indexes are common sources of high IOPS. Specific causes include:

Queries that scan entire collections consume large volumes of I/O.

Oversized indexes reduce the amount of hot data the WiredTiger cache can hold.

Every index on a collection must be updated on write, so each write operation requires more than one I/O when the collection has multiple indexes.

Create appropriate indexes to reduce unnecessary I/O and keep frequently accessed data in cache.
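To find queries that need an index, you can inspect the output of the MongoDB `explain` command for a `COLLSCAN` stage, which indicates a full collection scan. The following is a minimal sketch that checks an explain document that has already been loaded into a Python dictionary. It assumes the classic `queryPlanner.winningPlan` layout with `stage`, `inputStage`, and `inputStages` fields. Output from sharded clusters or newer query engines may nest the plan differently, and the function names are hypothetical.

```python
from typing import Any, Dict, Iterator


def iter_stages(plan: Dict[str, Any]) -> Iterator[str]:
    """Yield the stage name of a plan node and of all its nested input stages."""
    stage = plan.get("stage")
    if stage is not None:
        yield stage
    # Single-child stages such as FETCH nest their child under "inputStage".
    child = plan.get("inputStage")
    if isinstance(child, dict):
        yield from iter_stages(child)
    # Multi-child stages such as OR nest their children under "inputStages".
    for child in plan.get("inputStages", []):
        if isinstance(child, dict):
            yield from iter_stages(child)


def uses_collection_scan(explain_output: Dict[str, Any]) -> bool:
    """Return True when the winning plan contains a COLLSCAN stage."""
    winning_plan = explain_output.get("queryPlanner", {}).get("winningPlan", {})
    return "COLLSCAN" in iter_stages(winning_plan)
```

A query whose winning plan contains `IXSCAN` instead of `COLLSCAN` reads only the index entries and matching documents, which typically requires far less I/O than scanning the whole collection.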