Each sub-error below maps to a specific ODPS-0130071 message. Find the message that matches your error output, then follow the fix.

Error 1: Partition column count mismatch

Error message

ODPS-0130071:[m,n] Semantic analysis exception - the number of input partition columns (n) doesn't equal to table's partition columns (m)The destination table has m partition columns, but the INSERT statement specifies only n (where n < m). Specify all partition columns — either with static values or using dynamic partitioning.

Example

-- Table definition: two partition columns (sale_date, region)

create table if not exists mf_sale_detail

(

shop_name string,

customer_id string,

total_price double

)

partitioned by

(

sale_date string,

region string

);

-- Incorrect: no partition clause specified

insert overwrite table mf_sale_detail

values ('s1','c1',100.1),('s2','c2',100.2),('s3','c3',100.3);

FAILED: ODPS-0130071:[1,24] Semantic analysis exception - the number of input partition columns (0) doesn't equal to table's partition columns (2)

-- Correct: all partition columns specified with static values

insert overwrite table mf_sale_detail partition(sale_date = '2013', region = 'china')

values ('s1', 'c1', 100.1), ('s2', 'c2', 100.2), ('s3', 'c3', 100.3);

OK

-- Correct: dynamic partitioning (region value comes from the data)

insert overwrite table mf_sale_detail partition(sale_date = '2013', region)

values ('s1', 'c1', 100.1, 'china'), ('s2', 'c2', 100.2, 'china'), ('s3', 'c3', 100.3, 'china');

OKError 2: Non-equi JOIN condition without map join hint

Error message

ODPS-0130071:[m,n] Semantic analysis exception - expect equality expression (i.e., only use '=' and 'AND') for join condition without mapjoin hintMaxCompute SQL uses sort-merge join as the physical JOIN algorithm. Sort-merge join requires at least one equality expression (=) in the ON clause — it uses the equality columns to shuffle data between the left and right tables. A non-equi-only condition (for example, t1.value > t2.value) has no equality expression for shuffling, so the query fails.

Fix this with one of two approaches:

-

Add an equality expression to the ON clause. The non-equi predicate can stay as an additional filter.

-

**Add a

/*+mapjoin(t1)*/hint.** Map join broadcasts the smaller table to all workers and allows non-equi conditions. Use this only when the broadcast table is small — a non-equi join with no equality column can produce a very large result set and significantly slow down the query.

Example

-- Incorrect: ON clause has only a non-equi expression

odps>select t1. *

from src t1

join src t2

on t1.value > t2.value;

FAILED: ODPS-0130071:[4,4] Semantic analysis exception - expect equality expression (i.e., only use '=' and 'AND') for join condition without mapjoin hint

-- Correct: equality expression added; non-equi predicate kept as additional filter

odps>select t1. *

from src t1

join src t2

on t1.key = t2.key and t1.value > t2.value;

-- Correct: map join hint added (broadcast t1 to all workers)

odps>select /*+mapjoin(t1)*/ t1. *

from src t1

join src t2

on t1.value > t2.value;Error 3: INSERT INTO not supported on HASH CLUSTERED tables

Error message

ODPS-0130071:[m,n] Semantic analysis exception - insert into HASH CLUSTERED table/partition xxx is not current supportedINSERT INTO cannot write data to a HASH CLUSTERED table. Use one of these approaches:

-

Switch to INSERT OVERWRITE on the clustered table.

-

Write to a non-clustered table instead.

Example

-- Create a clustered table

odps>create table sale_detail_hashcluster

(

shop_name STRING,

total_price decimal,

customer_id BIGINT

)

clustered by(customer_id)

sorted by(customer_id)

into 1024 buckets;

-- Incorrect: INSERT INTO on a clustered table

odps>insert into sale_detail_hashcluster

values ('a', 123, 'id123');

FAILED: ODPS-0130071:[1,13] Semantic analysis exception - insert into HASH CLUSTERED table/partition meta.sale_detail_hashcluster is not current supported

-- Correct option 1: write to a non-clustered table

odps>create table sale_detail

(

shop_name STRING,

total_price decimal,

customer_id BIGINT

);

odps>insert into sale_detail

values ('a', 123, 'id123');

-- Correct option 2: use INSERT OVERWRITE on the clustered table

odps>insert overwrite table sale_detail_hashcluster

values ('a', 123, 'id123');Error 4: Column not in GROUP BY key

Error message

ODPS-0130071:[m,n] Semantic analysis exception - column reference xx.yy should appear in GROUP BY keyAfter a GROUP BY aggregation, only two kinds of columns can appear in the SELECT list:

-

Columns listed in the GROUP BY clause — output directly or passed through a non-aggregate function.

-

Columns not in the GROUP BY clause — must be wrapped in an aggregate function such as SUM, COUNT, AVG, or ANY_VALUE.

Apply an aggregate function to every column that is not part of the GROUP BY key. Use ANY_VALUE when you need one value from the group but do not care which row it comes from.

Example

-- Incorrect: column c is not in GROUP BY and has no aggregate function

odps> select a, sum(b), c

from values (1L, 2L, 3L) as t(a, b, c)

group by a;

FAILED: ODPS-0130071:[1,19] Semantic analysis exception - column reference t.c should appear in GROUP BY key

-- Correct: ANY_VALUE picks one value of c from each group

odps> select a, sum(b), any_value(c)

from values (1L, 2L, 3L) as t(a, b, c)

group by a;Error 5: Invalid partition value

Error message

ODPS-0130071:[m,n] Semantic analysis exception - Invalid partition value: 'xxx'The partition value does not meet MaxCompute requirements. Valid partition values must:

-

Be 1 to 128 bytes long

-

Start with a letter

-

Contain only letters, digits, or these special characters: spaces,

:,_,$,#,.,!,@ -

Not contain double-byte characters such as Chinese characters

Characters such as \t, \n, and / have undefined behavior and should not be used.

Change the partition value to one that meets these rules.

Example

-- Table with a partition column

odps> create table mc_test

(

a bigint

)

partitioned by

(

ds string

);

-- Incorrect: '${today}' is invalid for the partition field

odps> alter table mc_test add partition(ds = '${today}');

FAILED: ODPS-0130071:[1,40] Semantic analysis exception - Invalid partition value: '${today}'

-- Correct: use a plain date string

odps> alter table mc_test add partition(ds='20221206');Error 6: MSCK REPAIR TABLE used on a non-OSS external table

Error message

ODPS-0130071:[m,n] Semantic analysis exception - only oss external table support msck repair syntaxMSCK REPAIR TABLE only works with Object Storage Service (OSS) external tables. Running it against a regular MaxCompute table causes this error.

Create an OSS external table first, then run MSCK REPAIR TABLE against it. For details on creating OSS external tables, see Create an OSS external table.

Adding partitions to an OSS external table

Two methods are available for registering partitions after the table is created.

Method 1 (recommended for one-time bulk registration): Let MaxCompute auto-detect the OSS directory structure and register all matching partitions at once.

msck repair TABLE <mc_oss_extable_name> ADD partitions [ WITH properties (key:VALUE, key: VALUE ...)];This method scans the entire OSS directory on every run. Avoid using it for directories with more than 1,000 partitions or when new partitions are added frequently — repeated full scans generate a large number of metadata update requests and reduce efficiency. Use Method 2 in those cases.

Method 2 (recommended for incremental partition registration): Add partitions individually using ALTER TABLE. Use this method when partitions are added on a recurring schedule.

ALTER TABLE <mc_oss_extable_name>

ADD PARTITION (<col_name>=<col_value>)[

ADD PARTITION (<col_name>=<col_value>)...][location URL];The values of col_name and col_value must match the names of the OSS subdirectories that store the partition data. Add one ADD PARTITION clause per OSS subdirectory.![]()

Full example with MSCK REPAIR TABLE

The following example creates an OSS external table with two partition columns (pt1, pt2) and uses MSCK REPAIR TABLE to register partitions whose OSS directory names differ from the column names.

-

Create the OSS directory structure:

-

demo8/$pt1=1/$pt2=2/demo8-pt1.txt -

demo8/$pt1=3/$pt2=4/demo8-pt2.txt

-

-

Create the external table and register partitions:

-- Create the OSS external table CREATE EXTERNAL TABLE mf_oss_spe_pt (id int, name string) partitioned BY (pt1 string, pt2 string) stored AS TEXTFILE location "oss://oss-cn-beijing-internal.aliyuncs.com/mfoss*******/demo8/"; -- Register partitions; map MaxCompute column names to OSS directory prefixes MSCK REPAIR TABLE mf_oss_spe_pt ADD PARTITIONS WITH PROPERTIES ('odps.msck.partition.column.mapping'='pt1:$pt1,pt2:$pt2'); -- Query data from the registered partition SELECT * FROM mf_oss_spe_pt WHERE pt1=1 AND pt2=2; -- Result: +------------+------------+------------+------------+ | id | name | pt1 | pt2 | +------------+------------+------------+------------+ | 1 | kyle | 1 | 2 | | 2 | nicole | 1 | 2 | +------------+------------+------------+------------+ SELECT * FROM mf_oss_spe_pt WHERE pt1=3 AND pt2=4; +------------+------------+------------+------------+ | id | name | pt1 | pt2 | +------------+------------+------------+------------+ | 3 | john | 3 | 4 | | 4 | lily | 3 | 4 | +------------+------------+------------+------------+ -

If the MaxCompute column names do not match the OSS directory names, specify the OSS path explicitly using ALTER TABLE:

-- Mappings: pt1=8 maps to OSS directory 8; pt2=8 maps to OSS directory $pt2=8 ALTER TABLE mf_oss_spe_pt ADD PARTITION (pt1=8,pt2=8) location 'oss://oss-cn-beijing-internal.aliyuncs.com/mfosscostfee/demo8/8/$pt2=8/'; -- Disable commit mode, then insert and query SET odps.sql.unstructured.oss.commit.mode=false; INSERT INTO mf_oss_spe_pt PARTITION (pt1=8,pt2=8) VALUES (1,'tere'); SET odps.sql.unstructured.oss.commit.mode=false; SELECT * FROM mf_oss_spe_pt WHERE pt1=8 AND pt2=8; +------+------+-----+-----+ | id | name | pt1 | pt2 | +------+------+-----+-----+ | 1 | tere | 8 | 8 | +------+------+-----+-----+

Error example

-- Regular MaxCompute table (not an OSS external table)

odps> create table mc_test

(

a bigint

)

partitioned by

(

ds string

);

-- Incorrect: MSCK REPAIR TABLE does not work on regular tables

odps> msck table mc_test add partitions;

FAILED: ODPS-0130071:[1,12] Semantic analysis exception - only oss external table support msck repair syntaxError 7: Incompatible data type on INSERT

Error message

ODPS-0130071:[m,n] Semantic analysis exception - column xx in source has incompatible type yy with destination column zz, which has type tttThe data type of the value being inserted does not match the destination column's type and cannot be implicitly converted. Modify the INSERT statement so the inserted value's type matches the destination column's type.

Example

-- Table with a DATETIME column

odps> create table mc_test

(

a datetime

);

-- Incorrect: inserting a BIGINT value into a DATETIME column

odps> insert overwrite table mc_test

values (1L);

FAILED: ODPS-0130071:[2,9] Semantic analysis exception - column __value_col0 in source has incompatible type BIGINT with destination column a, which has type DATETIME

-- Correct: insert a DATETIME literal

odps> insert overwrite table mc_test

values (datetime '2022-12-06 14:23:45');Error 8: DATEDIFF parameter type mismatch

Error message

ODPS-0130071:[m,n] Semantic analysis exception - function datediff cannot match any overloaded functions with (STRING, STRING, STRING), candidates are BIGINT DATEDIFF(DATE arg0, DATE arg1, STRING arg2); BIGINT DATEDIFF(DATETIME arg0, DATETIME arg1, STRING arg2); BIGINT DATEDIFF(TIMESTAMP arg0, TIMESTAMP arg1, STRING arg2); INT DATEDIFF(DATE arg0, DATE arg1); INT DATEDIFF(STRING arg0, STRING arg1); INT DATEDIFF(TIMESTAMP arg0, TIMESTAMP arg1)The argument types passed to DATEDIFF do not match any of its supported signatures. This commonly happens when the MaxCompute V2.0 data type edition is enabled, because V2.0 disables implicit conversions between types.

DATEDIFF accepts the following signatures:

| Return type | Signature |

|---|---|

| BIGINT | DATEDIFF(DATE, DATE, STRING) |

| BIGINT | DATEDIFF(DATETIME, DATETIME, STRING) |

| BIGINT | DATEDIFF(TIMESTAMP, TIMESTAMP, STRING) |

| INT | DATEDIFF(DATE, DATE) |

| INT | DATEDIFF(STRING, STRING) |

| INT | DATEDIFF(TIMESTAMP, TIMESTAMP) |

Fix this with one of two approaches:

-

Cast the arguments to a type that matches one of the signatures above.

-

Disable the V2.0 data type edition by adding

set odps.sql.type.system.odps2=false;before your SQL statement and submitting both together. This re-enables implicit conversions.

Error 9: RAM role not found for OSS external table

Error message

ODPS-0130071:[1,1] Semantic analysis exception - external table checking failure, error message: java.lang.RuntimeException: {"RequestId":"A7BFAD2F-8982-547A-AB5E-93DAF5061FBD","HostId":"sts.aliyuncs.com","Code":"EntityNotExist.Role","Message":"The role not exists: acs:ram::xxxxxx:role/aliyunodpsdefaultrole. ","Recommend":"https://next.api.aliyun.com/troubleshoot?q=EntityNotExist.Role&product=Sts"}The RAM role specified in odps.properties.rolearn does not exist, so role authentication fails when MaxCompute tries to access Object Storage Service (OSS).

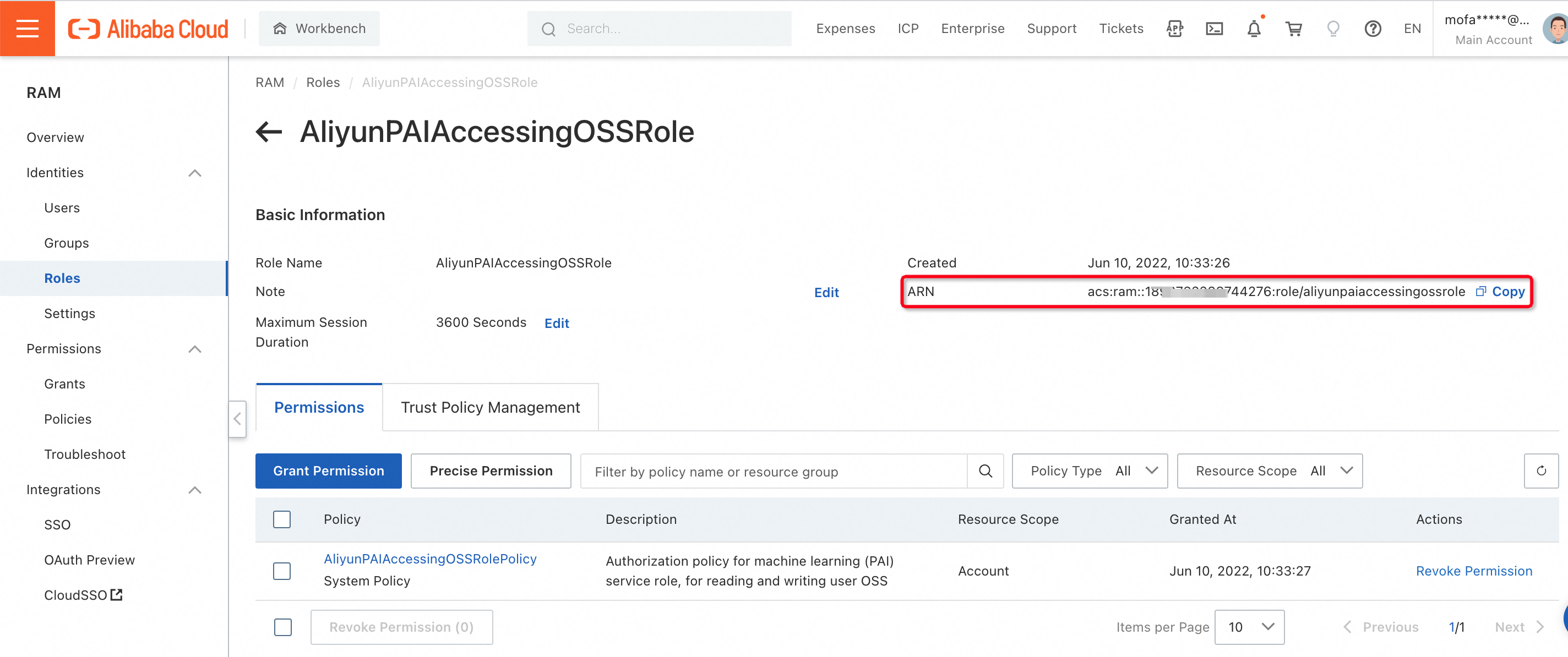

Set odps.properties.rolearn to the correct Alibaba Cloud Resource Name (ARN) of an existing RAM role. The ARN format is:

acs:ram::<UID>:role/<Role>-

UID: your 16-digit Alibaba Cloud account ID -

Role: the role name as configured in the Resource Access Management (RAM) console

Example

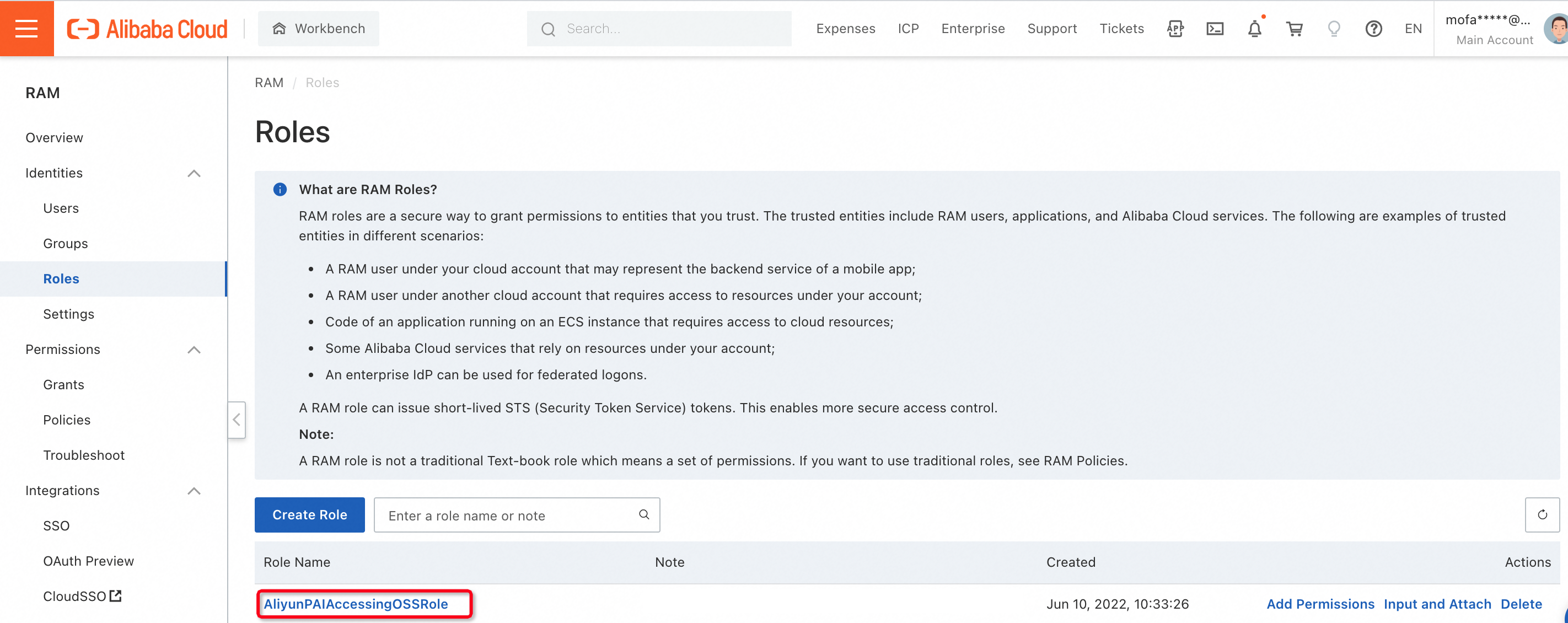

'odps.properties.rolearn'='acs:ram::189xxxxxxx76:role/aliyunpaiaccessingossrole'To find the ARN, open the RAM console, go to the Roles page, and click the role name to view its ARN.

Error 10: MAX_PT runtime exception

Error message

FAILED: ODPS-0130071:[33,26] Semantic analysis exception - encounter runtime exception while evaluating function MAX_PT, detailed message: nullThe partition that MAX_PT resolves to changed between query planning and execution, causing a data inconsistency error.

Two fixes are available:

-

Do not execute SQL statements in which MAX_PT is specified for new partitions.

-

If this error appears in a configuration task, configure the task to retry automatically on failure.

Error 11: Column cannot be resolved

Error message

ODPS-0130071:[73,12] Semantic analysis exception - column xxx cannot be resolvedThe column xxx does not exist in the referenced table. Check your SQL script and replace xxx with a valid column name.

Error 12: UDF evaluate function does not match annotation

Error message

FAILED: ODPS-0130071:[1,8] Semantic analysis exception - evaluate function in class test.MyPlus for user defined function my_plus does not match annotation bigint->bigintThe @annotate decorator on the user-defined function (UDF) class declares a different signature than the evaluate method's actual parameters. Update the UDF code so the annotation and the method signature agree.

Common mistakes in Python UDFs:

-

The annotation declares one input parameter, but

evaluatetakes two (plusself). -

The

evaluatemethod is missing theselfparameter.

Example

-- Incorrect: annotation says one input (bigint->bigint), but evaluate takes two args

from odps.udf import annotate

@annotate("bigint->bigint")

class MyPlus(object):

def evaluate(self, arg0, arg1):

if None in (arg0, arg1):

return None

return arg0 + arg1

-- Incorrect: evaluate is missing the self parameter

from odps.udf import annotate

@annotate("bigint,bigint->bigint")

class MyPlus(object):

def evaluate(arg0, arg1):

if None in (arg0, arg1):

return None

return arg0 + arg1

-- Correct: annotation matches evaluate signature; self is present

from odps.udf import annotate

@annotate("bigint,bigint->bigint")

class MyPlus(object):

def evaluate(self, arg0, arg1):

if None in (arg0, arg1):

return None

return arg0 + arg1Error 13: VPC not in whitelist for external table access

Error message

FAILED: ODPS-0130071:[0,0] Semantic analysis exception - physical plan generation failed: com.aliyun.odps.lot.cbo.plan.splitting.impl.vpc.AliHyperVpcRuntimeException: Vpc white list: , Vpc id: vpc-xxxx is not allowed to access. Contact project owner to set allowed accessed vpc id list.=MaxCompute cannot access the VPC-based service through the external table because the VPC is not in the allowed list. Add the VPC to the IP address whitelist and verify that MaxCompute can reach the service from a whitelisted IP address. For configuration steps, see the "Access over a VPC (dedicated connection)" section in Network connection process.

Error 14: Physical plan generation failed — service-linked role not assigned

Error message

FAILED: ODPS-0130071:[0,0] Semantic analysis exception - physical plan generation failed: com.aliyun.odps.common.table.na.NativeException: kNotFound:The role_arn you provide not exists in HOLO auth service. Please check carefully.The service-linked role (SLR) that MaxCompute uses to access the target Alibaba Cloud service has not been assigned to your account. Click RAM Quick Authorization to assign the SLR to your account.