ApsaraDB for HBase is a distributed database compatible with the standard HBase access protocol. MaxCompute supports Standard Edition and Performance-enhanced Edition clusters — not Serverless Edition.

By default, MaxCompute is not connected to the ApsaraDB for HBase service. After connecting MaxCompute to ApsaraDB for HBase over a virtual private cloud (VPC), you can create an HBase external table to link to an existing HBase table. This lets you read HBase data in MaxCompute for extract, transform, and load (ETL) processing and federated computing, or write MaxCompute data back to HBase.

ApsaraDB for HBase Performance-enhanced Edition is built on the Lindorm kernel. If you created your Performance-enhanced Edition instance in the Lindorm console, see Lindorm external tables instead. This topic covers HBase instances created directly in the ApsaraDB for HBase console.

Prerequisites

Before you begin, ensure that you have:

-

An ApsaraDB for HBase Standard Edition or Performance-enhanced Edition cluster with environment configuration complete.

-

Standard Edition: Quick start for ApsaraDB for HBase Standard Edition

-

Performance-enhanced Edition: Quick start for ApsaraDB for HBase Performance-enhanced Edition

-

-

A table created in the HBase cluster using an HBase client. This topic uses

mf_hbase_test_tas the example table, created with the following commands:# Create an HBase table with column family "f" create 'mf_hbase_test_t', 'f' # Insert a row: RowKey=1, column f:col1, value "hello" put 'mf_hbase_test_t', '1', 'f:col1', 'hello'For HBase Shell commands, see Use HBase Shell.

-

A MaxCompute project. See Create a MaxCompute project.

-

The MaxCompute client installed and configured. See Install and configure the MaxCompute client.

-

A VPC network connection established between MaxCompute and ApsaraDB for HBase, with the ApsaraDB for HBase whitelist configured. See Configure a whitelist and Network connection process.

Limitations

-

Supported regions: China (Beijing), China (Shanghai), China (Zhangjiakou), China (Ulanqab), China (Hangzhou), China (Shenzhen), China (Hong Kong), China East 2 Finance Cloud (Zone F), Japan (Tokyo), Singapore, Malaysia (Kuala Lumpur), Indonesia (Jakarta), Germany (Frankfurt), US (Silicon Valley), and US (Virginia). For VPC access details, see VPC access solution (leased line connection).

-

VPC access scope: After connecting, MaxCompute can only access the VPC specified by its VPC ID. To access a different region or another VPC in the same region, establish a separate connection between the VPCs.

-

HBase version: Only HBase 2.x clusters are supported.

-

Unsupported cluster types: EMR HBase clusters are not supported.

-

Unsupported properties: The

clusterproperty is not supported.

Syntax

Both editions use the same CREATE EXTERNAL TABLE statement structure. Enable Hive compatibility mode before running the statement. For Performance-enhanced Edition, also enable VPC network access and set the HBase version to lindorm.

-- Required for all clusters: enable Hive compatibility mode

set odps.sql.hive.compatible = true;

-- Required for Performance-enhanced Edition only:

-- set odps.sql.external.net.vpc=true;

-- set odps.sql.hbase.version=lindorm;

CREATE EXTERNAL TABLE IF NOT EXISTS <mc_hbase_external>

(

<col1_name> <data_type>,

<col2_name> <data_type>,

...

)

STORED BY 'org.apache.hadoop.hive.hbase.HBaseStorageHandler'

WITH SERDEPROPERTIES (

'hbase.columns.mapping'='<:key,col-family:col-name1,col-family:col-name2,...>'

)

TBLPROPERTIES (

'hbase.table.name'='<hbase_table_name>',

'hbase.zookeeper.quorum'='<VPC ip1|host1:port,VPC ip2|host2:port,...>',

-- Performance-enhanced Edition only:

'hbase.client.username'='<username>',

'hbase.client.password'='<password>',

-- Optional:

'hbase.zookeeper.property.clientPort'='<port>',

'mcfed.zookeeper.session.timeout'='<milliseconds>',

'hbase.client.retries.number'='<count>',

'networklink'='<networklink_name>'

);Table and column names are not case-sensitive and cannot be forced to a specific case.

Parameters

Required for all clusters

| Parameter | Description |

|---|---|

hbase.table.name |

Name of the HBase table to connect to. Create this table in advance using an HBase client. |

hbase.zookeeper.quorum |

ZooKeeper endpoint (Standard Edition) or Java API endpoint (Performance-enhanced Edition). Format: ip1|host1:port,ip2|host2:port,.... This field also supports IP address ranges and CIDR notation, for example: 192.0.2.1-192.0.2.10:9000,192.0.2.20:70050 or 192.0.2.20/16:2181. For a distributed data source, you must provide the IP addresses and ports for ZooKeeper (2181 or 30020), HMaster (16000), and RegionServer (16020). Because this field supports IP address ranges and IP mapping consumes resources, the number of IP addresses mapped at a time is limited to 2,000 by default. |

networklink |

Name of the MaxCompute VPC network connection for the VPC where the HBase instance is located. |

hbase.columns.mapping |

Column mappings between the external table and the HBase table. See Column mapping rules below. |

Required for Performance-enhanced Edition clusters only

| Parameter | Description |

|---|---|

hbase.client.username |

Cluster username. In the ApsaraDB for HBase console, go to Clusters, click the cluster name, then click Database Connection. Find Default Username in the Connection Information section. |

hbase.client.password |

Cluster password. Find Default Password in the same Connection Information section. |

Optional

| Parameter | Default | Description |

|---|---|---|

hbase.zookeeper.property.clientPort |

2181 (Standard Edition) / 30020 (Performance-enhanced Edition) | ZooKeeper port. |

mcfed.zookeeper.session.timeout |

— | ZooKeeper session timeout in milliseconds. Recommended value: 30000. |

hbase.client.retries.number |

— | Number of HBase client connection retries. |

Parameters prefixed withmcfed.zookeeperandhbaserelate to ZooKeeper configuration.

Column mapping rules

The hbase.columns.mapping value is a comma-delimited string that maps each external table column to an HBase column. Follow these rules:

-

One entry per column: The number of entries must match the number of columns in the external table. Do not include whitespace between entries — it will be treated as part of the column name.

-

RowKey must come first: The

:keyentry maps to the HBase RowKey and must be the first item in the mapping string. -

Column format: Each non-RowKey entry uses the format

column-family:column-name(for example,f:col1). -

One column family per job: Only one column family can be specified per job.

-

Fewer columns than HBase: External table columns with no matching HBase column are filled with

NULL. -

More columns than HBase: HBase columns with no mapping in the external table are ignored.

Create an HBase external table

This example creates an external table for mf_hbase_test_t (an HBase table with column family f, columns col1 and col2), queries it, and writes data back.

Step 1: Create the external table

Standard Edition

set odps.sql.hive.compatible = true;

CREATE EXTERNAL TABLE IF NOT EXISTS mf_hbase_test_t_ext

(

key INT,

col1 STRING,

col2 STRING

)

STORED BY 'org.apache.hadoop.hive.hbase.HBaseStorageHandler'

WITH SERDEPROPERTIES (

'hbase.columns.mapping'=':key,f:col1,f:col2'

)

TBLPROPERTIES (

'hbase.table.name'='mf_hbase_test_t',

'hbase.zookeeper.quorum'='hb-2zef****-master1-001.hbase.rds.aliyuncs.com,hb-2zef****-master2-001.hbase.rds.aliyuncs.com,hb-2zef****-master3-001.hbase.rds.aliyuncs.com',

'hbase.zookeeper.property.clientPort'='2181',

'networklink'='networklink4hbase'

);Performance-enhanced Edition

set odps.sql.external.net.vpc=true;

set odps.sql.hive.compatible = true;

set odps.sql.hbase.version=lindorm;

CREATE EXTERNAL TABLE mf_hbase_test_t_ext

(

key INT,

col1 STRING,

col2 STRING

)

STORED BY 'org.apache.hadoop.hive.hbase.HBaseStorageHandler'

WITH SERDEPROPERTIES (

'hbase.columns.mapping'=':key,f:col1,f:col2'

)

TBLPROPERTIES (

'hbase.table.name'='mf_hbase_test_t',

'hbase.zookeeper.quorum'='hb-2zef****-master1-001.hbase.rds.aliyuncs.com,hb-2zef****-master2-001.hbase.rds.aliyuncs.com,hb-2zef****-master3-001.hbase.rds.aliyuncs.com',

'hbase.zookeeper.property.clientPort'='2181',

'networklink'='networklink4hbase'

);Step 2: Query the HBase table

SELECT * FROM mf_hbase_test_t_ext;Expected result (the row inserted during setup):

+------+------------+------------+

| key | col1 | col2 |

+------+------------+------------+

| 1 | hello | NULL |

+------+------------+------------+col2 is NULL because no value was set for f:col2 in the HBase table.

Step 3: Write data to HBase

INSERT OVERWRITE TABLE mf_hbase_test_t_ext SELECT '2', 'hbase', 'mftestinsert';Step 4: Verify the written data

SELECT * FROM mf_hbase_test_t_ext;Expected result:

+------+------------+--------------------+

| key | col1 | col2 |

+------+------------+--------------------+

| 1 | hello | NULL |

| 2 | hbase | mftestinsert |

+------+------------+--------------------+Load data to HBase using bulk load

To import large amounts of data into HBase, you can use the HBase bulk load method. This method first generates HFiles, which are the underlying storage files for HBase, and then directly moves these HFiles to the HBase storage directory. Compared with calling the `HTable.put` online interface, this method is more efficient and has less impact on HBase operations because it bypasses the HBase RegionServer and Write-ahead Logging (WAL).

-

Log on to the MaxCompute client and run the

CREATE EXTERNAL TABLEstatement to create an external table associated with the schema of the table in HBase.The following shows the DDL statement:

set odps.sql.hive.compatible=true; -- Enable Hive compatibility mode. create external table if not exists <mc_hfile_hbase_external> ( <col1_name> <data_type>, <col2_name> <data_type> ) stored by 'org.apache.hadoop.hive.hbase.HBaseStorageHandler' -- The handler for the HBase data source. with serdeproperties ('hbase.table.name'='<hbase_table_name>', 'hbase.columns.mapping'='<:key,col-family:col-name1,col-family:col-name2,...>', 'odps.external.net.vpc'='true', 'odps.vpc.id'='<VPC ID>', 'odps.vpc.access.ips'='<VPC ip:port,VPC ipBegin-ipEnd:port|network_segment:port>', 'odps.vpc.region'='<RegionID>') location '<hbase://VPC ip:port>' tblproperties ( 'hbase.table.name'='<hbase_table_name>', 'hbase.columns.mapping'='<:key,col-family:col-name1,col-family:col-name2,...>', 'hfile.temp.path'='<hdfs://hbase-cluster/hfiletmp>', 'hadoop.user.name'='<admin>', 'mcfed.fs.defaultFS'='<hdfs://hbase-cluster>', 'mcfed.dfs.nameservices'='<hbase-cluster>', 'mcfed.dfs.ha.namenodes.hbase-cluster'='nn1,nn2', 'mcfed.dfs.namenode.rpc-address.hbase-cluster.nn1'='j63g09343.sqa.eu95:8020', 'mcfed.dfs.namenode.rpc-address.hbase-cluster.nn2'='j63g09355.sqa.eu95:8020', 'mcfed.dfs.client.failover.proxy.provider.hbase-cluster'='org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider', 'mcfed.zookeeper.session.timeout'='<value>', 'hbase.client.retries.number'='<value>', 'hbase.zookeeper.quorum'='<VPC ip1,VPC ip2...>', 'hbase.zookeeper.property.clientPort'='2181'tblproperties:

-

hfile.temp.path: The storage directory for temporary HFiles. Ensure that this directory is used only by MaxCompute to write HFiles. When you specify this configuration item, MaxCompute first writes data to this directory, and then runs the HBase BulkLoad command to import the HFiles into HBase.

-

hadoop.user.name: The user who writes HFiles in Hadoop Distributed File System (HDFS).

-

Configuration items that start with mcfed.fs.defaultFS and mcfed.dfs are related to HDFS High Availability (HA) configuration. For more information, see the DDL statement.

-

'hbase.zookeeper.quorum'='ip_address:port|ip_address_range:port|host_name': The IP address of the ZooKeeper for HBase. You can also set this to the ZooKeeper host name.

Sample command:

set odps.sql.hive.compatible=true; create external table if not exists test_hfile_hbase_external ( id string, cfa string, cfb string ) stored by 'org.apache.hadoop.hive.hbase.HBaseStorageHandler' with serdeproperties ('hbase.table.name'='htest', 'hbase.columns.mapping'=':key,cf:a,cf:b', 'odps.external.net.vpc'='true', 'odps.vpc.id'='vpc-uf630ldmi9l0rrr01****', 'odps.vpc.access.ips'='192.0.2.0-192.0.2.10:8020,192.0.2.15-192.0.2.20:2181', 'odps.vpc.region'='cn-shanghai') location 'hbase://192.0.2.0:2181' tblproperties ( 'hbase.table.name'='htest', 'hbase.columns.mapping'=':key,cf:a,cf:b', 'hfile.temp.path'='hdfs://hbase-cluster/hfiletmp', 'hadoop.user.name'='admin', 'mcfed.fs.defaultFS'='hdfs://hbase-cluster', 'mcfed.dfs.nameservices'='hbase-cluster', 'mcfed.dfs.ha.namenodes.hbase-cluster'='nn1,nn2', 'mcfed.dfs.namenode.rpc-address.hbase-cluster.nn1'='j63g09343.sqa.eu95:8020', 'mcfed.dfs.namenode.rpc-address.hbase-cluster.nn2'='j63g09355.sqa.eu95:8020', 'mcfed.dfs.client.failover.proxy.provider.hbase-cluster'='org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider', 'mcfed.zookeeper.session.timeout'='30', 'hbase.client.retries.number'='1', 'hbase.zookeeper.quorum'='j63g11301.sqa.eu95,j63g11306.sqa.eu95,j63g09367.sqa.eu95', 'hbase.zookeeper.property.clientPort'='2181'); -

-

Run the

INSERT OVERWRITEstatement to write data to the table in HBase and perform a global sort.-

To perform a global sort on the MaxCompute source table, you can use the range-clustered table mechanism of MaxCompute. When the optimizer generates an execution plan and detects that data is being written to an HBase table, it treats the table as a range-clustered table. The execution plan then performs a global sort on the source table. Sample command:

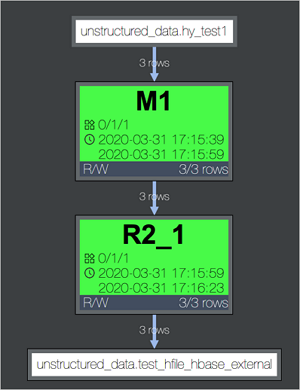

INSERT OVERWRITE TABLE test_hfile_hbase_external SELECT uuid(), a_name,a_age FROM hy_test1;The generated execution plan is shown in the following figure. In the M1 step, the data in the source table (hy_test1) is globally sorted by RowKey.

-

After you run the

INSERT OVERWRITEstatement, MaxCompute automatically writes the data to HDFS in HFile format. The temporary path for the HFiles is specified by the hfile.temp.path parameter.Each Fuxi instance corresponds to a region of the HBase external table, which is the R2_1 step in the preceding figure. In this step, the sorted data is written to HDFS in HFile format.

NoteWhen you write HFiles, note the following two limits:

-

The first column must be the RowKey, which is

:keyin hbase.columns.mapping. -

The remaining columns must belong to a single column family. This ensures that each instance writes to only one HFile.

-

-

Move the HFiles to the HBase storage directory and notify the HBase RegionServer to load them.

After the HFiles for all regions of the HBase external table are written to HDFS, MaxCompute automatically runs the bulk load operation. This operation moves the HFiles to the HBase storage directory and notifies the HBase RegionServer to load them.

-

-

Run the

SELECTstatement to verify the written data.Sample command:

select * from cluster_test_hbase_external;