This topic describes how to use Apache Hive to access LindormDFS.

Prerequisites

LindormDFS is activated for your Lindorm instance. For more information, see Activate LindormDFS.

Java Development Kits (JDKs) are installed on the compute engine nodes. The JDK version must be 1.8 or later.

Apache Derby is downloaded from the official website. Apache Derby V10.13.1.1 is used in this topic as an example.

The compressed Apache Hive package is downloaded from the official website. Apache Hive V2.3.7 is used in this topic as an example.

Configure Apache Derby

Decompress the Apache Derby package to the specified directory.

tar -zxvf db-derby-10.13.1.1-bin.tar.gz -C /usr/local/

Modify the /etc/profile configuration file to configure environment variables. Run the following command to open the /etc/profile file:

vim /etc/profile

Add the following information to the end of the file:

export DERBY_HOME=/usr/local/db-derby-10.13.1.1-bin
export CLASSPATH=$CLASSPATH:$DERBY_HOME/lib/derby.jar:$DERBY_HOME/lib/derbytools.jar

Save the file and run source /etc/profile to apply the variables to the current shell.

Create a directory to store the data.

mkdir $DERBY_HOME/data

Start the Apache Derby service.

nohup /usr/local/db-derby-10.13.1.1-bin/bin/startNetworkServer &

Configure Apache Hive

Decompress the Apache Hive package to the specified directory.

tar -zxvf apache-hive-2.3.7-bin.tar.gz -C /usr/local/

Modify the /etc/profile configuration file to configure environment variables. Run the following command to open the /etc/profile file:

vim /etc/profile

Add the following information to the end of the file:

export HIVE_HOME=/usr/local/apache-hive-2.3.7-bin

Save the file and run source /etc/profile to apply the change.
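The /etc/profile edits for Derby and Hive are easy to duplicate if you repeat these steps. If you prefer to script them, the following is a minimal sketch that is not part of the original procedure. append_once is a hypothetical helper that appends a line to a file only if the identical line is not already present. Writing to /etc/profile requires root privileges, and you still need to run source /etc/profile afterward.

```shell
#!/usr/bin/env bash
# append_once FILE LINE
# Hypothetical helper: appends LINE to FILE unless an identical line already exists.
append_once() {
  local file="$1" line="$2"
  # -q: quiet, -x: match the whole line, -F: treat LINE as a fixed string.
  # A missing FILE makes grep fail, so the line is appended and FILE is created.
  if ! grep -qxF -- "$line" "$file" 2>/dev/null; then
    printf '%s\n' "$line" >> "$file"
  fi
}

# Example usage (as root); commented out so that sourcing this sketch has no side effects:
# append_once /etc/profile 'export DERBY_HOME=/usr/local/db-derby-10.13.1.1-bin'
# append_once /etc/profile 'export CLASSPATH=$CLASSPATH:$DERBY_HOME/lib/derby.jar:$DERBY_HOME/lib/derbytools.jar'
# append_once /etc/profile 'export HIVE_HOME=/usr/local/apache-hive-2.3.7-bin'
# source /etc/profile
```

The lines are single-quoted so that $CLASSPATH and $DERBY_HOME are written to the file literally. They are expanded only when /etc/profile is sourced.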

Modify the hive-env.sh file. Run the following command to open the hive-env.sh file:

vim /usr/local/apache-hive-2.3.7-bin/conf/hive-env.sh

The following example shows the modified content of the file:

# The heap size of the jvm started by hive shell script can be controlled via:
export HADOOP_HEAPSIZE=1024

# Set HADOOP_HOME to point to a specific hadoop install directory
HADOOP_HOME=/usr/local/hadoop-2.7.3

# Hive Configuration Directory can be controlled by:
export HIVE_CONF_DIR=/usr/local/apache-hive-2.3.7-bin/conf

# Folder containing extra libraries required for hive compilation/execution can be controlled by:
export HIVE_AUX_JARS_PATH=/usr/local/apache-hive-2.3.7-bin/lib
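Before you continue, you may want to confirm that the paths set in hive-env.sh actually exist. The following sketch shows one way to do this. check_dirs is a hypothetical helper, not a Hive tool. It reports every listed variable that is unset or does not point to an existing directory. It requires bash because it uses indirect expansion (${!name}).

```shell
#!/usr/bin/env bash
# check_dirs NAME...
# Hypothetical helper: for each variable NAME, checks that $NAME is set and is an
# existing directory. Prints one line per problem and returns 1 if any check fails.
check_dirs() {
  local name dir status=0
  for name in "$@"; do
    dir="${!name}"                 # indirect expansion: value of the variable named $name
    if [ -z "$dir" ]; then
      echo "unset: $name"
      status=1
    elif [ ! -d "$dir" ]; then
      echo "missing: $name=$dir"
      status=1
    fi
  done
  return "$status"
}

# Example usage, assuming hive-env.sh is at the path used in this topic:
# source /usr/local/apache-hive-2.3.7-bin/conf/hive-env.sh
# check_dirs HADOOP_HOME HIVE_CONF_DIR HIVE_AUX_JARS_PATH
```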

Modify the hive-site.xml file. Run the following command to open the hive-site.xml file:

vim /usr/local/apache-hive-2.3.7-bin/conf/hive-site.xml

The following example shows the modified content of the file:

<configuration>
  <property>
    <name>hive.metastore.warehouse.dir</name>
    <value>/user/hive/warehouse</value>
    <description>location of default database for the warehouse</description>
  </property>
  <property>
    <name>hive.exec.scratchdir</name>
    <value>/tmp/hive</value>
    <description>HDFS root scratch dir for Hive jobs which gets created with write all (733) permission. For each connecting user, an HDFS scratch dir: ${hive.exec.scratchdir}/&lt;username&gt; is created, with ${hive.scratch.dir.permission}.</description>
  </property>
  <property>
    <name>hive.metastore.schema.verification</name>
    <value>false</value>
    <description>
      Enforce metastore schema version consistency.
      True: Verify that version information stored in metastore matches with one from Hive jars. Also disable automatic
            schema migration attempt. Users are required to manually migrate schema after Hive upgrade which ensures
            proper metastore schema migration. (Default)
      False: Warn if the version information stored in metastore doesn't match with one from in Hive jars.
    </description>
  </property>
  <property>
    <name>javax.jdo.option.ConnectionURL</name>
    <value>jdbc:derby://127.0.0.1:1527/metastore_db;create=true</value>
    <description>JDBC connect string for a JDBC metastore</description>
  </property>
  <property>
    <name>datanucleus.schema.autoCreateAll</name>
    <value>true</value>
  </property>
</configuration>
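You can extract a property from hive-site.xml to confirm the exact value Hive will read, including any stray whitespace. The following get_hive_prop function is a hypothetical helper sketched with awk. It assumes the simple layout used above: one name and one value per property element, with no comments or CDATA inside them. It is not a substitute for a real XML parser.

```shell
#!/usr/bin/env bash
# get_hive_prop FILE NAME
# Hypothetical helper: prints the <value> of the <property> whose <name> is NAME.
# Assumes simple hive-site.xml content; prints nothing if NAME is not found.
get_hive_prop() {
  awk -v want="$2" '
    BEGIN { RS = "<" }                      # each record now starts right after a "<"
    /^name>/ { found = (substr($0, 6) == want); next }   # "name>" is 5 characters
    /^value>/ && found { print substr($0, 7); exit }      # "value>" is 6 characters
  ' "$1"
}

# Example usage:
# get_hive_prop /usr/local/apache-hive-2.3.7-bin/conf/hive-site.xml javax.jdo.option.ConnectionURL
```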

Create a jpox.properties file. Run the following command to create and open the file:

vim /usr/local/apache-hive-2.3.7-bin/conf/jpox.properties

Add the following content to the file:

javax.jdo.PersistenceManagerFactoryClass = org.jpox.PersistenceManagerFactoryImpl
org.jpox.validateTables = false
org.jpox.validateColumns = false
org.jpox.validateConstraints = false
org.jpox.storeManagerType = rdbms
org.jpox.autoCreateSchema = true
org.jpox.autoStartMechanismMode = checked
org.jpox.transactionIsolation = read_committed
javax.jdo.option.DetachAllOnCommit = true
javax.jdo.option.NontransactionalRead = true
javax.jdo.option.ConnectionDriverName = org.apache.derby.jdbc.ClientDriver
javax.jdo.option.ConnectionURL = jdbc:derby://127.0.0.1:1527/metastore_db;create=true
javax.jdo.option.ConnectionUserName = APP
javax.jdo.option.ConnectionPassword = mine
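java.util.Properties keeps only the last definition of a key that appears more than once. A repeated key in a properties file therefore silently overrides the earlier value. The following dup_keys function is a hypothetical awk-based helper that lists such keys. It assumes the simple "key = value" (or "key: value") form used here. It does not handle whitespace-only separators, escaped characters in keys, or line continuations.

```shell
#!/usr/bin/env bash
# dup_keys FILE
# Hypothetical helper: prints each key that is defined more than once in a
# Java-style properties file. Blank lines and lines starting with # or ! are skipped.
dup_keys() {
  awk '
    /^[ \t]*$/    { next }                  # blank line
    /^[ \t]*[#!]/ { next }                  # comment line
    {
      key = $0
      sub(/^[ \t]+/, "", key)               # drop leading whitespace
      sub(/[ \t]*[=:].*$/, "", key)         # drop the separator and the value
      if (seen[key]++ == 1) print key       # report a key on its second occurrence only
    }
  ' "$1"
}

# Example usage:
# dup_keys /usr/local/apache-hive-2.3.7-bin/conf/jpox.properties
```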

Create the required directories for Apache Hive.

Check the existing directories in LindormDFS:

$HADOOP_HOME/bin/hadoop fs -ls /

If the /user/hive/warehouse and /tmp/hive paths specified in the hive-site.xml file do not exist, create them and grant users write permissions on them:

$HADOOP_HOME/bin/hadoop fs -mkdir -p /user/hive/warehouse
$HADOOP_HOME/bin/hadoop fs -mkdir -p /tmp/hive
$HADOOP_HOME/bin/hadoop fs -chmod 775 /user/hive/warehouse
$HADOOP_HOME/bin/hadoop fs -chmod 775 /tmp/hive

Modify the io.tmpdir directory. If the hive-site.xml file contains ${system:java.io.tmpdir} placeholders, each of them resolves to a local path. Create a local directory, such as /usr/local/apache-hive-2.3.7-bin/iotmp, and use this path to replace each placeholder:

mkdir /usr/local/apache-hive-2.3.7-bin/iotmp
chmod 777 /usr/local/apache-hive-2.3.7-bin/iotmp

Also replace ${system:user.name} with ${user.name}. For example, change the following property:

<property>
  <name>hive.exec.local.scratchdir</name>
  <value>/usr/local/apache-hive-2.3.7-bin/iotmp/${system:user.name}</value>
  <description>Local scratch space for Hive jobs</description>
</property>

to:

<property>
  <name>hive.exec.local.scratchdir</name>
  <value>/usr/local/apache-hive-2.3.7-bin/iotmp/${user.name}</value>
  <description>Local scratch space for Hive jobs</description>
</property>

Start the Apache Hive metastore and HiveServer2 services.

nohup /usr/local/apache-hive-2.3.7-bin/bin/hive --service metastore &
nohup /usr/local/apache-hive-2.3.7-bin/bin/hive --service hiveserver2 &

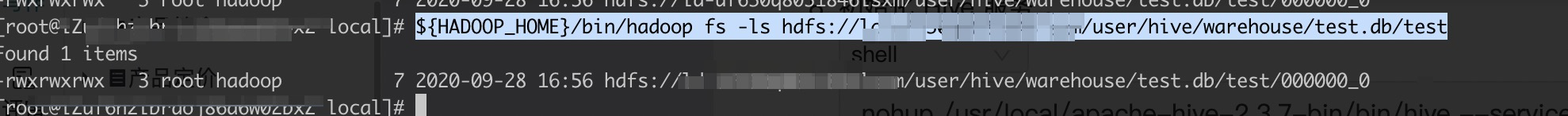
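Derby and the Hive services run in the background. A quick way to confirm that they are up is to check that their ports accept TCP connections. The following wait_for_port function is a hypothetical helper, not a Hive or Derby tool. It assumes bash (for the /dev/tcp pseudo-device) and the coreutils timeout command. Port 1527 comes from the ConnectionURL in hive-site.xml. Ports 9083 and 10000 are the default metastore and HiveServer2 ports and are assumed to be unchanged here.

```shell
#!/usr/bin/env bash
# wait_for_port HOST PORT [TIMEOUT_SECONDS]
# Hypothetical helper: tries a TCP connection about once per second until one
# succeeds (returns 0) or TIMEOUT_SECONDS attempts (default 60) fail (returns 1).
wait_for_port() {
  local host="$1" port="$2" limit="${3:-60}" i
  for (( i = 0; i < limit; i++ )); do
    # Opening /dev/tcp/HOST/PORT in a child bash attempts a TCP connect;
    # timeout caps each attempt at 1 second.
    if timeout 1 bash -c "exec 3<>/dev/tcp/$host/$port" 2>/dev/null; then
      return 0
    fi
    sleep 1
  done
  return 1
}

# Example usage after the services are started:
# for port in 1527 9083 10000; do
#   wait_for_port 127.0.0.1 "$port" 120 || echo "port $port is not listening"
# done
```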

Verify Apache Hive

Create a table in the Apache Hive shell.

create table test (f1 INT, f2 STRING);

Write data to the table.

insert into test values (1,'2222');

Check whether the data has been written to LindormDFS. The table is created in the default database, so its data is stored directly under the warehouse directory:

${HADOOP_HOME}/bin/hadoop fs -ls /user/hive/warehouse/test
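You can also run the verification non-interactively with hive -e, which executes the given statements and then exits. The following run_hive_smoke_test function is a hypothetical sketch. It uses create table if not exists so that it can be rerun, and the HIVE_BIN and HADOOP_BIN overrides are assumptions added for convenience. Pass the table directory in LindormDFS as the argument.

```shell
#!/usr/bin/env bash
# run_hive_smoke_test TABLE_DIR
# Hypothetical helper: creates the test table and inserts one row via `hive -e`,
# then lists TABLE_DIR. Prints the listing and returns 0 only if every step
# succeeds and the listing is non-empty; returns 2 if TABLE_DIR is missing.
run_hive_smoke_test() {
  local table_dir="$1"
  local hive="${HIVE_BIN:-$HIVE_HOME/bin/hive}"
  local hadoop="${HADOOP_BIN:-$HADOOP_HOME/bin/hadoop}"
  local listing

  [ -n "$table_dir" ] || { echo "usage: run_hive_smoke_test TABLE_DIR" >&2; return 2; }

  "$hive" -e "create table if not exists test (f1 INT, f2 STRING); insert into test values (1,'2222');" || return 1

  # `local listing` is declared above so that `|| return 1` sees the exit status of hadoop.
  listing="$("$hadoop" fs -ls "$table_dir")" || return 1
  [ -n "$listing" ] || return 1
  printf '%s\n' "$listing"
}

# Example usage for a table in the default database:
# run_hive_smoke_test /user/hive/warehouse/test
```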