This document describes how to use intelligent workflows for smart media processing. You can create modular workflows and customize the processing flow.

Scenario 1: Live stream translation

You can use an intelligent workflow to perform speech recognition on a live stream. The workflow generates real-time translations and sends the intermediate and final results for each sentence to your HTTP server through a callback.

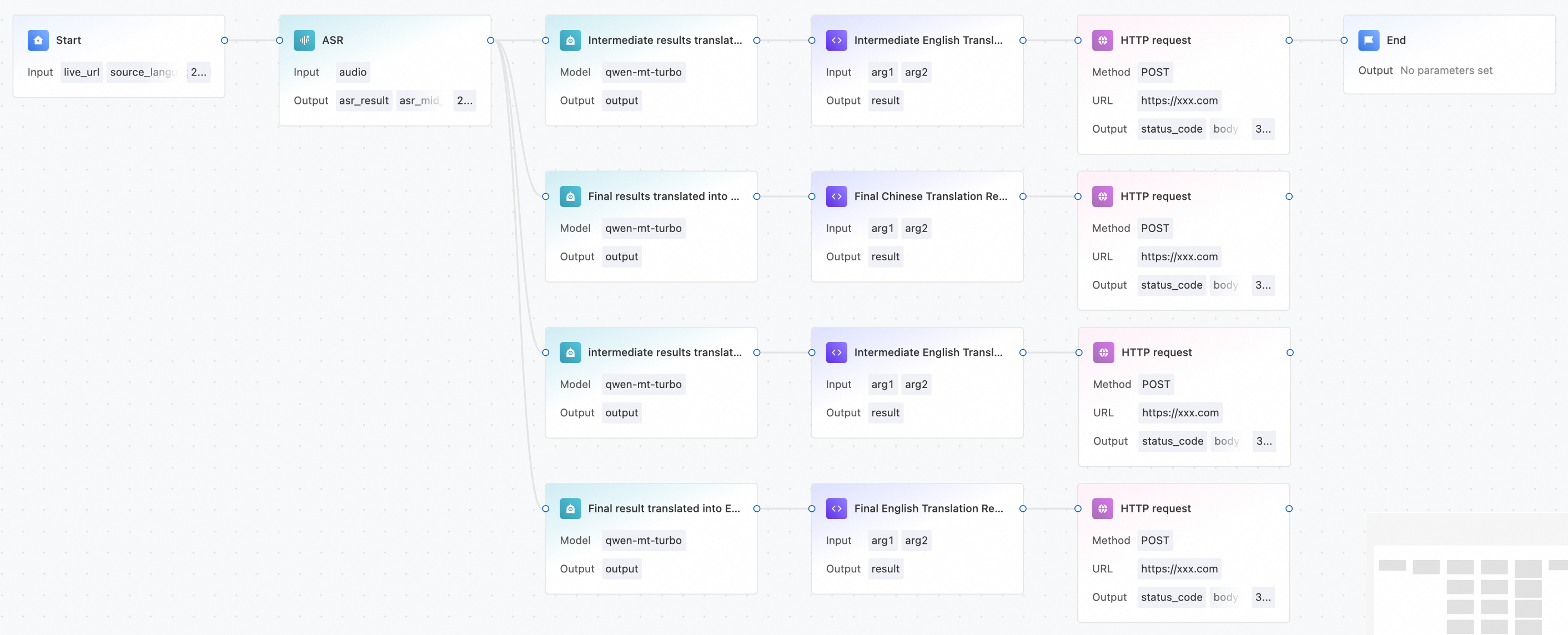

Overall topology configuration

The topology includes six nodes: Start, Automatic Speech Recognition (ASR), Large Language Model (LLM), Code Execution, HTTP Request, and End.

The node configurations are as follows:

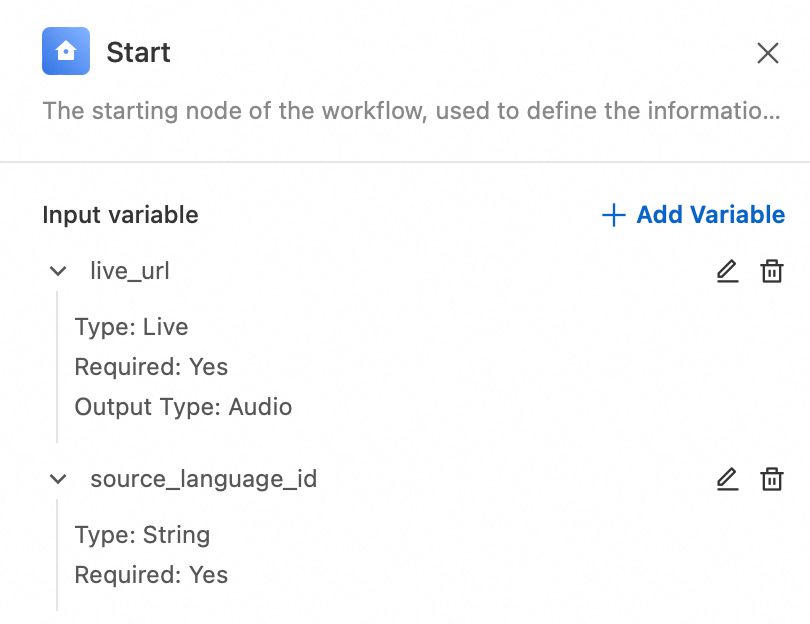

Start node

When you start the workflow, pass the following input parameters to the Start node:

```json
{
  "live_url": {
    "Url": "rtmp://test.com/test_app/test_stream?auth_key=test",
    "MaxIdleTime": 20
  },
  "source_language_id": "es"
}
```

| Parameter | Required | Description |
| --- | --- | --- |
| live_url | Yes | The live stream input. Pass it as an Object. The example above uses the Url and MaxIdleTime fields. |
| source_language_id | Yes | The source language. Select a value from the following list. |

Mandarin Chinese: zh

English: en

Spanish: es

Japanese: ja

Korean: ko

French: fr

Thai: th

Russian: ru

German: de

Guangxi dialect: guangxi

Portuguese: pt

Cantonese: yue

Traditional Cantonese: yue_hant

Minnan: minnan

Polish: pl

Italian: it

Ukrainian: uk

Dutch: nl

Arabic: ar

Indonesian: id

Turkish: tr

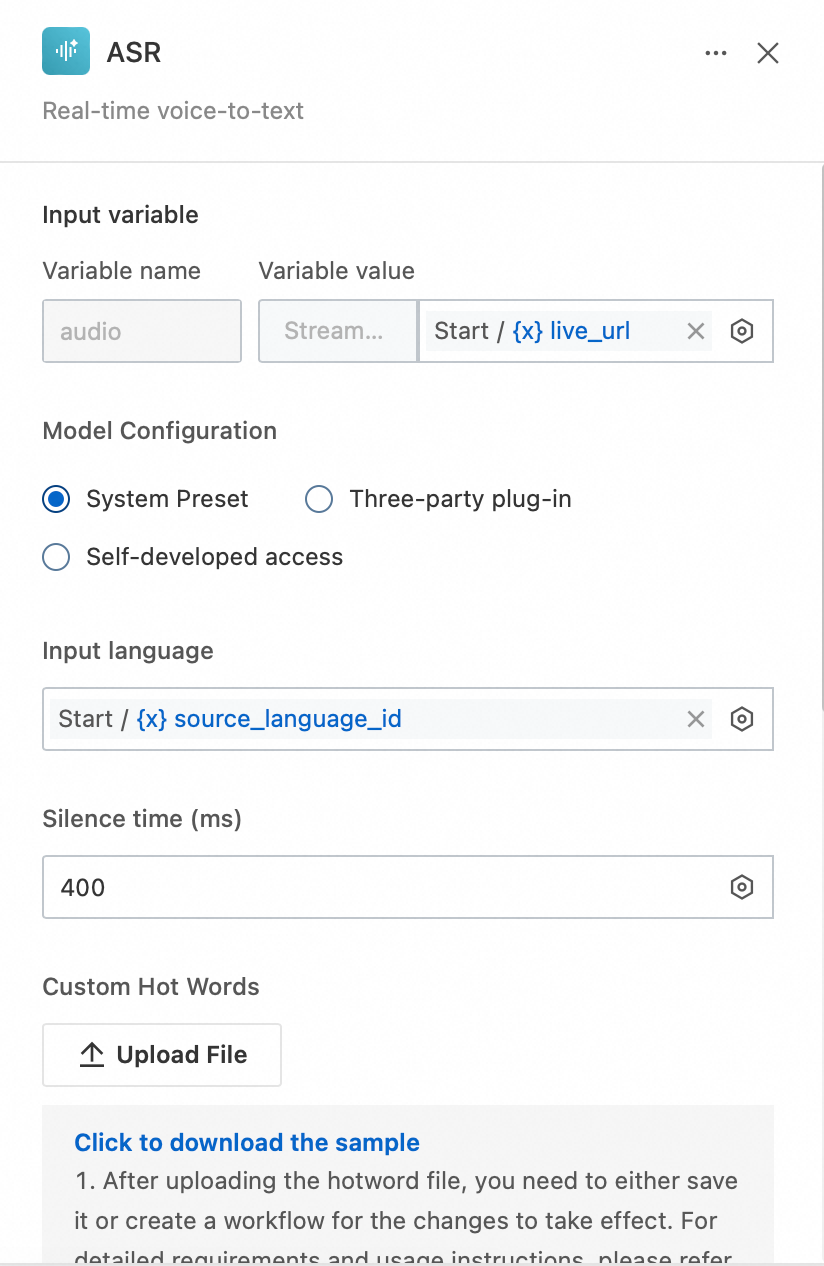

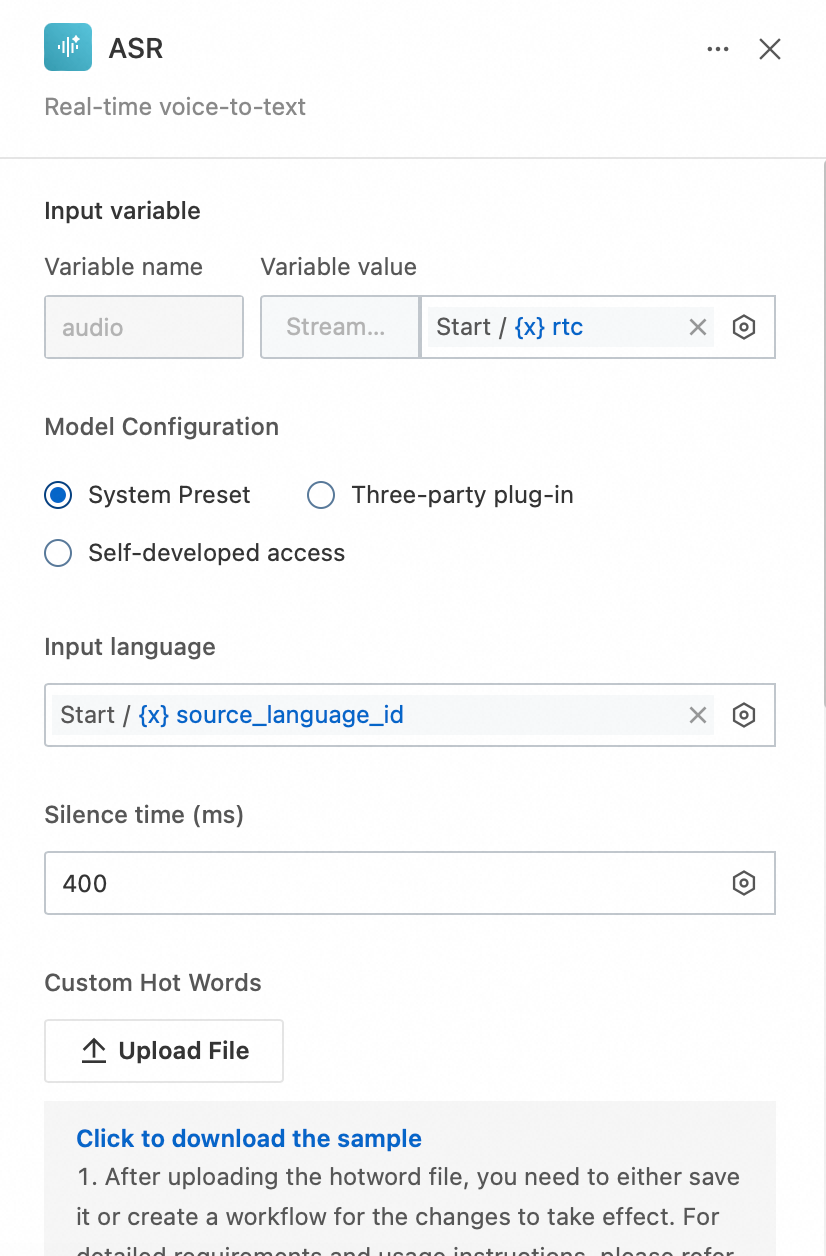

Vietnamese: viASR node

For the input variable, reference the live_url parameter of the Start node. For the input language, reference the source_language_id parameter of the Start node. You can leave the other parameters at their default values or configure them as needed.

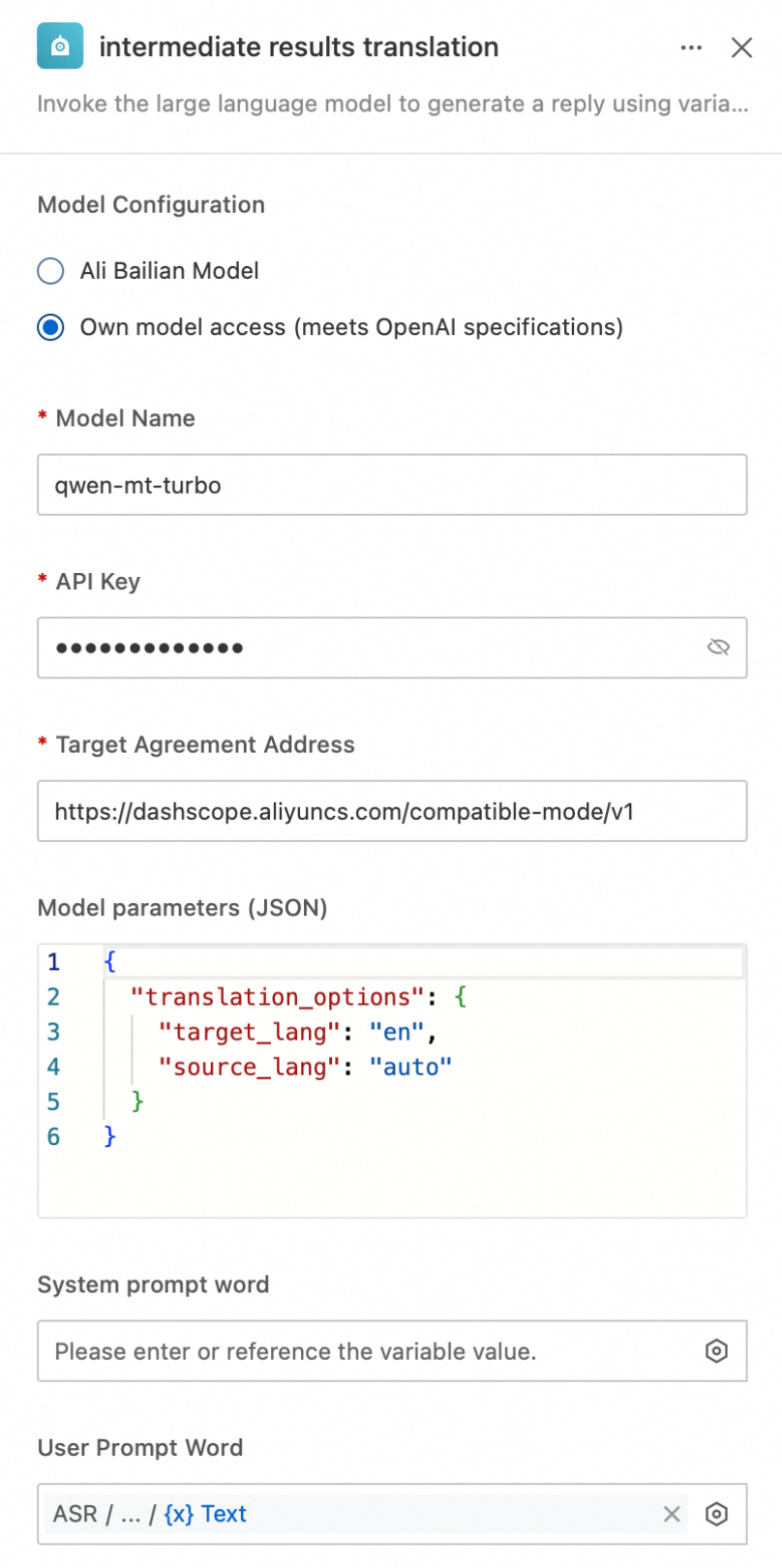
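Because the ASR node reads live_url and source_language_id directly from the Start node, it can help to check these inputs on your side before you start the workflow. The following minimal sketch checks the language code against the list in the Start node section and assembles the input in the shape of the example. The helper name `build_start_input` is hypothetical, and MaxIdleTime is passed through unchanged because its semantics are not described here.

```python
# Language codes accepted by source_language_id, copied from the Start node list.
SUPPORTED_LANGUAGES = {
    "zh", "en", "es", "ja", "ko", "fr", "th", "ru", "de", "guangxi", "pt",
    "yue", "yue_hant", "minnan", "pl", "it", "uk", "nl", "ar", "id", "tr", "vi",
}


def build_start_input(url: str, source_language_id: str, max_idle_time: int) -> dict:
    """Assemble the Start node input for live stream translation (hypothetical helper)."""
    if not url:
        raise ValueError("live_url.Url must not be empty")
    if source_language_id not in SUPPORTED_LANGUAGES:
        raise ValueError(f"unsupported source_language_id: {source_language_id!r}")
    return {
        # live_url is an Object; the field names follow the example above.
        "live_url": {"Url": url, "MaxIdleTime": max_idle_time},
        "source_language_id": source_language_id,
    }
```

Calling `build_start_input("rtmp://test.com/test_app/test_stream?auth_key=test", "es", 20)` returns the same structure as the Start node example, while a code such as `"xx"` raises a `ValueError` before the workflow is started.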

LLM node

This example shows how to configure the qwen-mt-turbo model using the Custom Model Integration (OpenAI-compliant) method. For more information about how to obtain an API key, see Obtain an API Key. In the model parameters, you must set the source language (which can be set to `auto`) and the target language. The user prompt can directly reference the intermediate or final results from the ASR node.

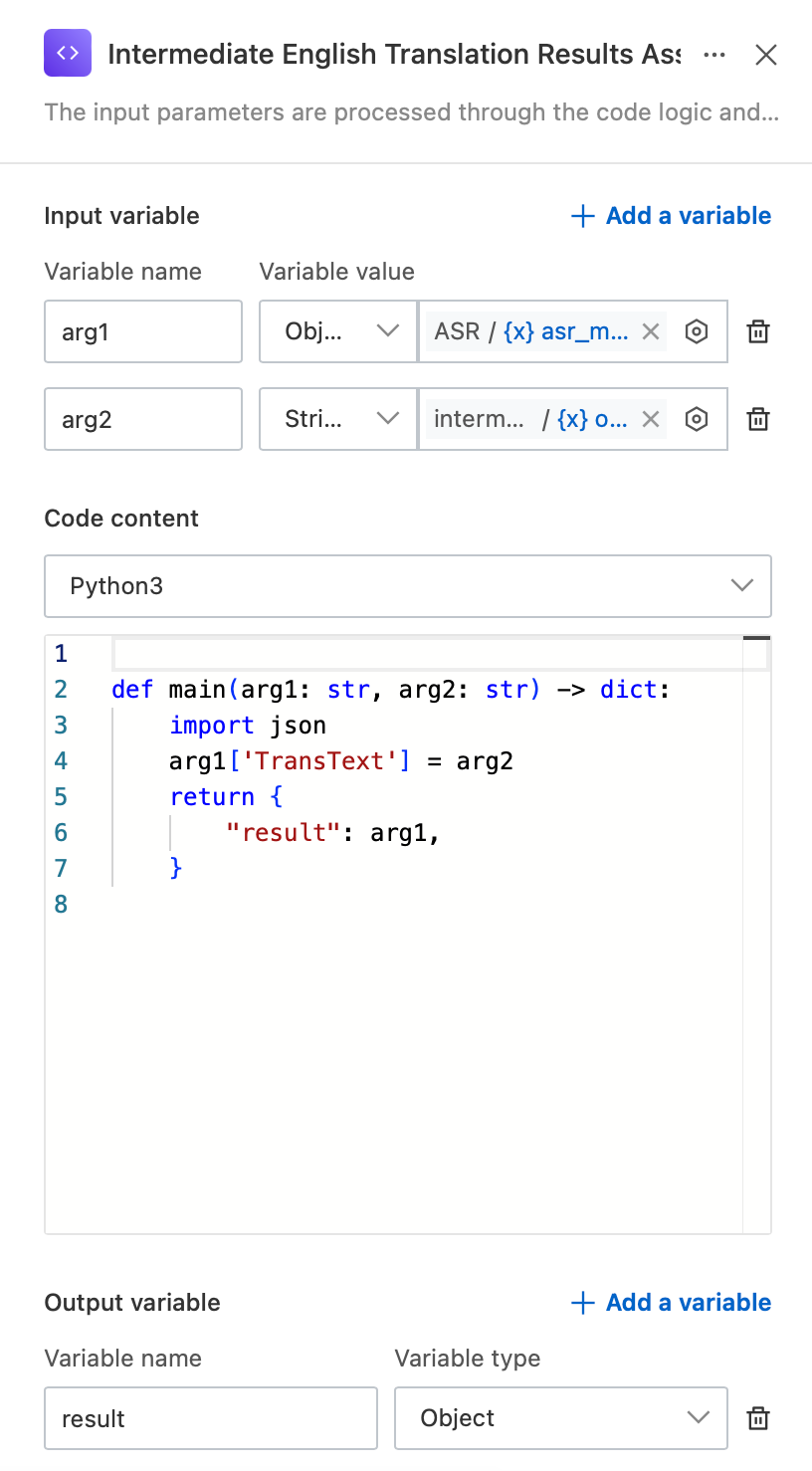
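With the OpenAI-compliant integration, the node presumably sends a standard chat completion request for each ASR sentence. The following minimal sketch builds such a request body. It assumes that qwen-mt-turbo reads the source and target languages from a `translation_options` object with `source_lang` and `target_lang` fields, and that the target language is given by its English name; verify these names and values against the current Qwen-MT model documentation. The function name is hypothetical, and the sketch only builds the payload without sending it.

```python
import json


def build_translation_request(asr_text: str, target_lang: str, source_lang: str = "auto") -> dict:
    """Build an OpenAI-compatible chat completion body for qwen-mt-turbo (hypothetical helper)."""
    if not asr_text.strip():
        raise ValueError("nothing to translate")
    return {
        "model": "qwen-mt-turbo",
        # The ASR sentence (intermediate or final) is used directly as the user prompt.
        "messages": [{"role": "user", "content": asr_text}],
        # ASSUMPTION: translation settings travel in a top-level translation_options object.
        "translation_options": {"source_lang": source_lang, "target_lang": target_lang},
    }


body = build_translation_request("Hola, ¿cómo estás?", target_lang="English")
print(json.dumps(body, ensure_ascii=False))
```

Leaving `source_lang` at `auto` matches the option mentioned above; set it explicitly when the stream language is known.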

Code execution node

To send each ASR result together with its LLM translation to your business server in a single callback, use a Python script in the Code Execution node to merge them: write the LLM output to the TransText field of the ASR result, and return the resulting JSON object as the callback data.

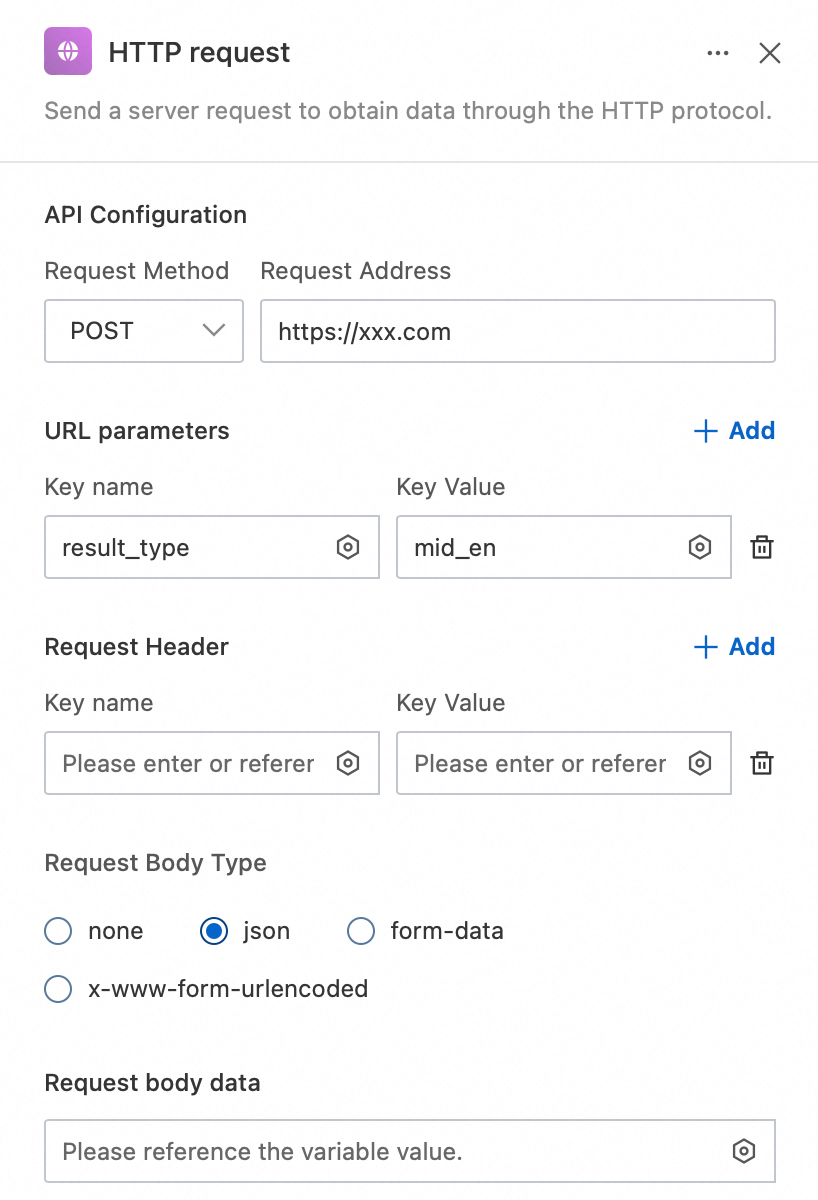
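The merge step can be sketched as follows. The entry-point name `main`, its parameters `asr_result` and `llm_output`, and the output key `callback_data` are hypothetical; they must match the input and output variables that you declare on the Code Execution node. Only the TransText field comes from this document.

```python
import copy
import json
from typing import Union


def main(asr_result: Union[str, dict], llm_output: str) -> dict:
    """Merge one ASR sentence result with its LLM translation (hypothetical signature)."""
    # The ASR result may arrive as a JSON string or as an already-parsed object;
    # copy the object so the caller's data is not modified.
    if isinstance(asr_result, str):
        result = json.loads(asr_result)
    else:
        result = copy.deepcopy(asr_result)
    # Attach the translation to the ASR result.
    result["TransText"] = llm_output.strip()
    # The returned object is used as the callback data.
    return {"callback_data": result}
```

For example, `main('{"Text": "Hola", "IsFinal": true}', " Hello ")` returns `{"callback_data": {"Text": "Hola", "IsFinal": True, "TransText": "Hello"}}`. The Text and IsFinal fields here are illustrative only, not the documented ASR output schema.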

HTTP request node

Configure the following settings:

- API configuration: the public address of your callback server.
- URL parameters: `result_type=mid_en`. The value identifies the callback type, and you can customize it.
- Request body type: JSON.
- Request body data: reference the JSON callback data output by the Code Execution node.
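On the receiving side, your callback server must accept a POST request whose query string carries `result_type` and whose body is the JSON callback data. The following minimal sketch uses the Python standard library `http.server` module. The handler name, the in-memory `received` list, and port 8080 are assumptions for illustration, and the sketch simply answers 200 because the response that the HTTP Request node expects is not specified here.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer
from urllib.parse import parse_qs, urlparse


class CallbackHandler(BaseHTTPRequestHandler):
    """Receive ASR and translation callbacks posted by the HTTP Request node."""

    # (result_type, payload) tuples, shared in memory for this sketch only.
    received = []

    def do_POST(self):
        # result_type is the custom URL parameter configured on the node, e.g. mid_en.
        query = parse_qs(urlparse(self.path).query)
        result_type = query.get("result_type", [""])[0]
        length = int(self.headers.get("Content-Length", 0))
        try:
            payload = json.loads(self.rfile.read(length) or b"{}")
        except ValueError:  # covers invalid JSON and invalid UTF-8
            self.send_error(400, "body is not valid JSON")
            return
        self.received.append((result_type, payload))
        body = b'{"ok": true}'
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # silence the default per-request logging


def serve(host: str = "0.0.0.0", port: int = 8080) -> None:
    """Run the callback server until interrupted."""
    HTTPServer((host, port), CallbackHandler).serve_forever()
```

Call `serve()` on a host reachable at the address entered in API configuration. A production server would typically add authentication and persistent storage instead of the in-memory list.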

Scenario 2: RTC caption recognition

You can use an intelligent workflow to perform ASR on a specified audio stream in a Real-Time Communication (RTC) channel. The recognition results are sent to the client through a DataChannel callback to display captions.

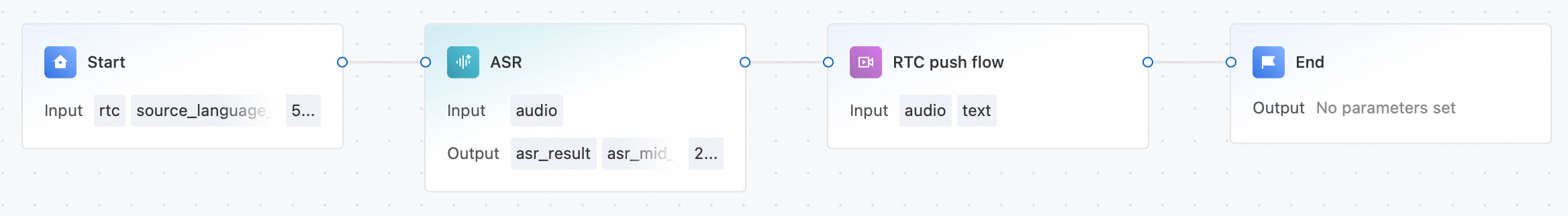

Overall topology configuration

The topology includes four nodes: Start, ASR, RTC Ingest, and End.

The node configurations are as follows:

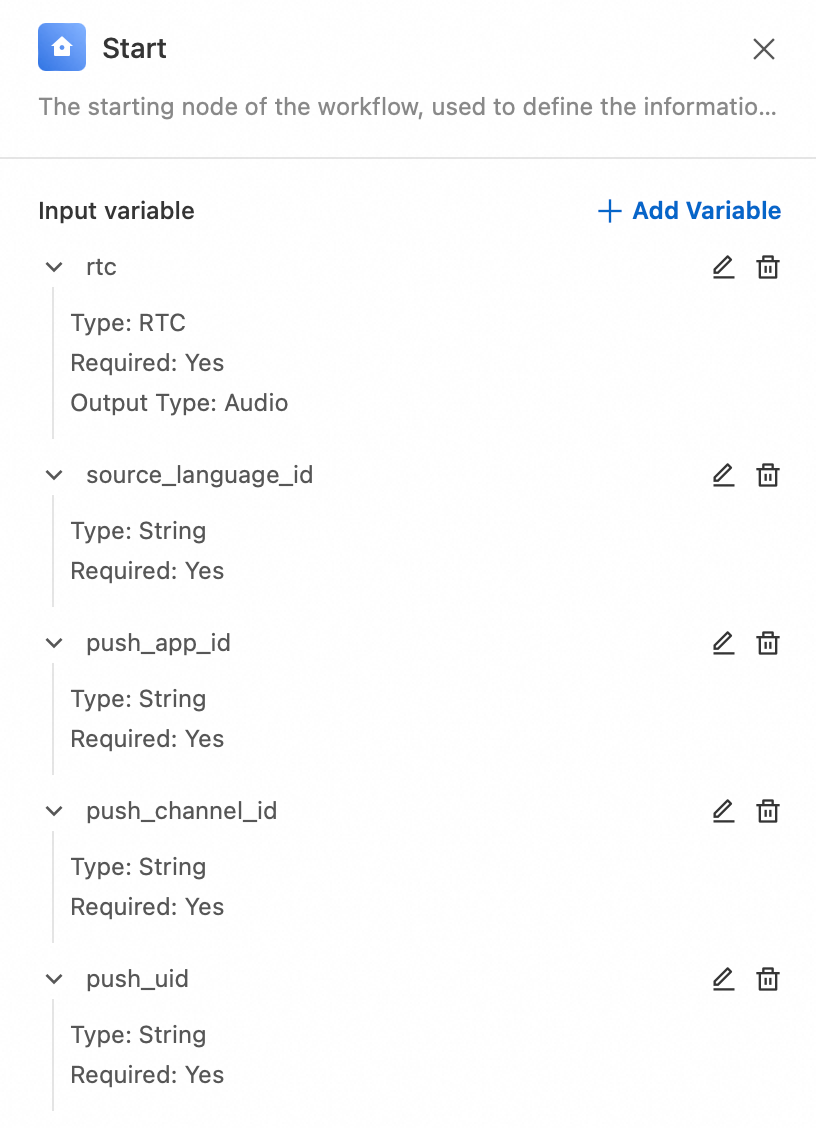

Start node

Variable descriptions:

- rtc: the RTC parameters passed when you start the workflow, namely AppId, ChannelId, and UserId. You must also select the output audio stream.
- source_language_id: the source language for recognition.
- push_app_id: the RTC AppId used for the DataChannel callback.
- push_channel_id: the RTC ChannelId used for the DataChannel callback.
- push_uid: the RTC UserId used for the DataChannel callback.
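The variables described above map directly onto the Start node input. The following minimal sketch assembles that input and rejects empty values; the helper name `build_caption_input` is hypothetical, and the output audio stream is not included because it is selected in the node configuration rather than passed as a variable.

```python
def build_caption_input(app_id: str, channel_id: str, user_id: str,
                        source_language_id: str, push_app_id: str,
                        push_channel_id: str, push_uid: str) -> dict:
    """Assemble the Start node input for RTC caption recognition (hypothetical helper)."""
    values = {
        "AppId": app_id, "ChannelId": channel_id, "UserId": user_id,
        "source_language_id": source_language_id, "push_app_id": push_app_id,
        "push_channel_id": push_channel_id, "push_uid": push_uid,
    }
    # Every variable in the example is populated, so treat empty strings as errors.
    missing = [name for name, value in values.items() if not value]
    if missing:
        raise ValueError(f"missing values: {', '.join(missing)}")
    return {
        # RTC parameters passed when the workflow starts.
        "rtc": {"AppId": app_id, "ChannelId": channel_id, "UserId": user_id},
        "source_language_id": source_language_id,
        # RTC identity used for the DataChannel callback.
        "push_app_id": push_app_id,
        "push_channel_id": push_channel_id,
        "push_uid": push_uid,
    }
```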

Variable example:

```json
{
  "rtc": {
    "AppId": "xxx",
    "ChannelId": "rtcaitest1",
    "UserId": "userA"
  },
  "source_language_id": "zh",
  "push_app_id": "app_id",
  "push_channel_id": "channel_id",
  "push_uid": "user_id"
}
```

ASR node

For the input variable, reference the audio stream of the rtc input of the Start node. For the input language, reference the source_language_id parameter of the Start node. You can leave the other parameters at their default values or customize them as needed.

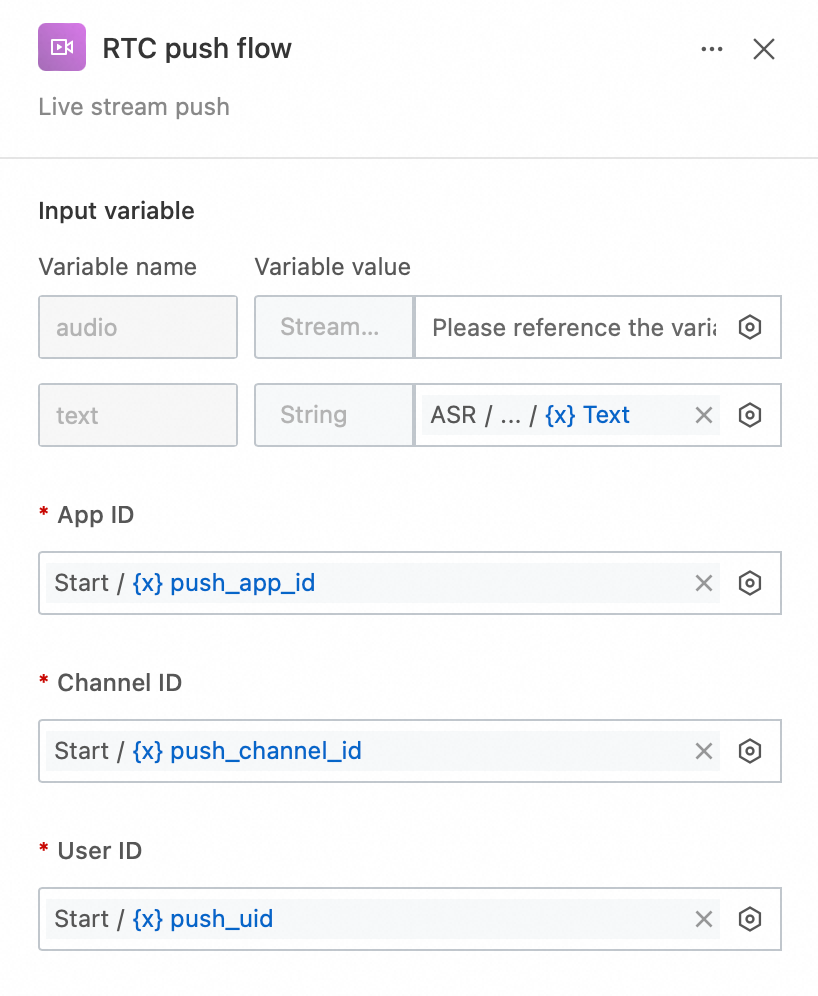

RTC ingest node

The text input variable must reference the output text of the ASR node. The App ID, channel ID, and user ID correspond to the push_app_id, push_channel_id, and push_uid variables of the Start node. Together they identify the RTC user through which the recognition results are ingested into the channel over the DataChannel.
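On the client, the DataChannel messages can be turned into on-screen captions. The message format is not described in this document, so the following minimal sketch assumes that each message is a UTF-8 JSON object with hypothetical fields `text` and `is_final`: intermediate results overwrite the current line, and final results are committed. Receiving the messages through the RTC SDK is out of scope; `on_message` is meant to be called from your SDK's DataChannel callback.

```python
import json
from dataclasses import dataclass, field
from typing import List


@dataclass
class CaptionView:
    """Client-side caption state fed by DataChannel messages.

    ASSUMPTION: each message is UTF-8 JSON with hypothetical fields
    "text" (recognized text) and "is_final" (sentence finished).
    """

    committed: List[str] = field(default_factory=list)
    pending: str = ""

    def on_message(self, raw: bytes) -> None:
        msg = json.loads(raw.decode("utf-8"))
        if msg.get("is_final"):
            # A final result replaces the in-progress text and is committed.
            self.committed.append(msg["text"])
            self.pending = ""
        else:
            # An intermediate result overwrites the previous partial sentence.
            self.pending = msg["text"]

    def render(self, max_lines: int = 2) -> str:
        """Return the most recent caption lines, in-progress text last."""
        lines = self.committed[-max_lines:] + ([self.pending] if self.pending else [])
        return "\n".join(lines[-max_lines:])
```

Keeping only the last few lines mirrors how captions are usually shown as a short rolling window; adjust `max_lines` to your layout.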