When most queries hit a small number of shards on one worker node, that node becomes a bottleneck while others stay idle. Shard-level replication places copies of those hot shards on underutilized worker nodes, distributing read traffic and increasing queries per second (QPS). The same feature also improves availability: if a shard becomes unavailable, queries can be retried on a replica on another worker node.

How it works

Data in a Hologres instance is distributed across shards. Each shard owns a distinct slice of the data, and all shards together form the complete dataset.

By default, each shard has one replica—the leader shard—which handles all write requests. When you increase replica_count to a value greater than 1, Hologres creates follower shards. Read queries are then spread across the leader and followers according to the routing policy you configure.

Follower shards are runtime replicas: they occupy memory on worker nodes but do not increase storage costs. Due to anti-affinity scheduling, only one replica per shard can run on a given worker node, so replica_count cannot exceed the number of worker nodes.

Queries routed to a follower shard may have an additional 10–20 ms of latency due to metadata synchronization between the leader and follower.

When to add shard replicas

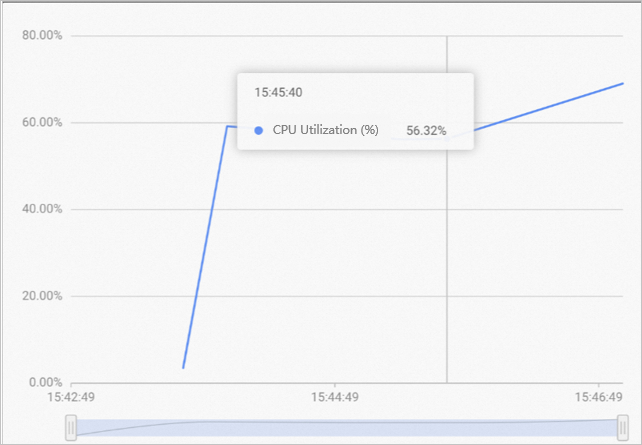

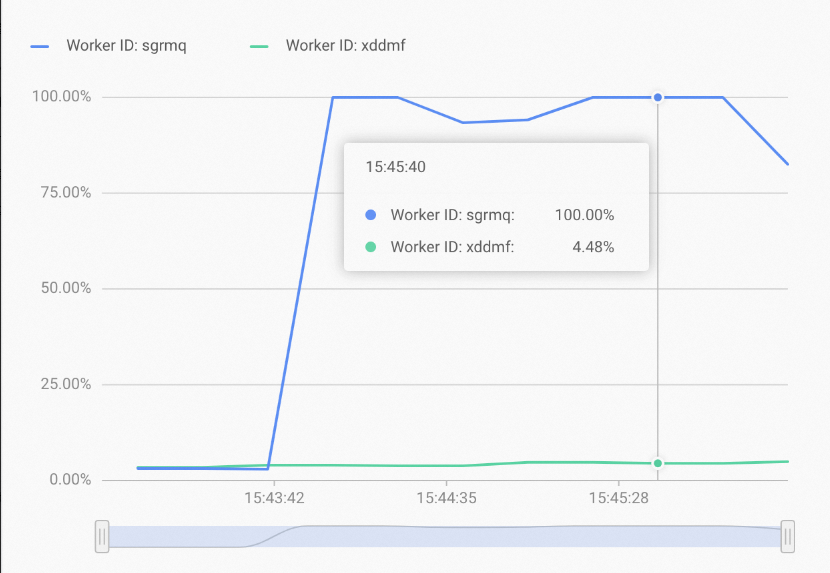

The clearest signal is uneven CPU utilization across worker nodes: the instance average appears moderate, but one worker node is overloaded while another is nearly idle.

This pattern indicates that most queries hit a small set of shards on one worker node. Adding replicas places copies of those shards on underutilized worker nodes, distributing the load and increasing QPS.

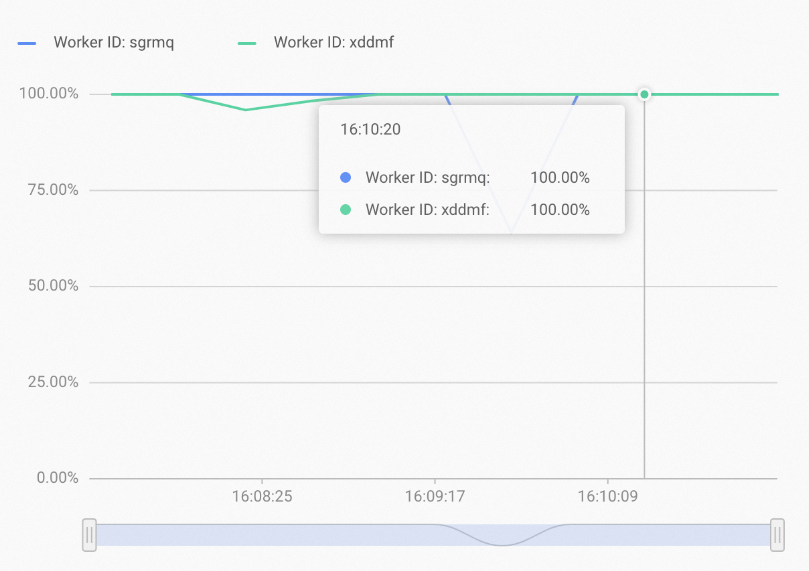

After rebalancing, resource utilization evens out across all worker nodes:

Shard metadata synchronization consumes resources. Add replicas only when the root cause is confirmed to be uneven query distribution, not general CPU pressure.

Replica count and shard count

Adding replicas without reducing shards wastes resources. To keep worker node load balanced, maintain this equation:

shard_count × replica_count = recommended shard count for your instance

For recommended shard counts by instance size, see Instance management.
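As a worked example with hypothetical numbers: if the recommended shard count for an instance is 40 and replica_count is 2, the table group should have 40 / 2 = 20 shards. To compare against the current settings, the sketch below reads both properties from hologres.hg_table_group_properties. It assumes the shard count is stored under the property key shard_count, which this topic does not confirm; the replica_count key is the one used in the queries under Configure shard replicas.

```sql
-- Read the current shard and replica settings for one table group.
-- Assumption: 'shard_count' is the property key that holds the shard count.
SELECT property_key, property_value
FROM hologres.hg_table_group_properties
WHERE tablegroup_name = '<table_group_name>'
  AND property_key IN ('shard_count', 'replica_count');
```

Multiplying the two returned values gives the left-hand side of the equation above.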

Limitations

| Limitation | Details |
|---|---|
| Minimum version for shard-level replication | Hologres V1.1 or later |
| Minimum version for high-availability (HA) mode | Hologres V1.3.45 or later |
| replica_count upper bound (V1.3.53 and later) | Cannot exceed the number of worker nodes; exceeding the limit returns an error |
| replica_count upper bound (before V1.3.53) | Must be less than or equal to the number of compute nodes |

Check the compute node count on the instance details page of the Hologres console.
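The worker nodes that currently host at least one shard can also be counted from SQL. The following is a minimal sketch, assuming that the hologres.hg_worker_info view (used under Verify replica distribution) returns one row per loaded shard replica and that worker_id is a text column. Worker nodes with no shard loaded would not be counted, so treat the console value as authoritative.

```sql
-- Count the distinct worker nodes that currently have shards loaded.
-- Assumption: worker_id is text; the filter drops both NULL and empty values,
-- which can appear before shard metadata has finished loading.
SELECT count(DISTINCT worker_id) AS worker_count
FROM hologres.hg_worker_info
WHERE worker_id <> '';
```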

To check your instance version, go to the instance details page in the Hologres console. If your version is V0.10 or earlier, see Instance upgrades for upgrade instructions, or join the Hologres DingTalk group to request an engineer-assisted upgrade. For more information, see How to get online support?

Configure shard replicas

Query table groups and current replica counts

List all table groups in the current database:

SELECT * FROM hologres.hg_table_group_properties;

Check the replica count for a specific table group:

SELECT property_value
FROM hologres.hg_table_group_properties
WHERE tablegroup_name = '<table_group_name>'
AND property_key = 'replica_count';

| Placeholder | Description |
|---|---|
| <table_group_name> | The name of the table group to query |

Enable shard-level replication

Set replica_count to a value greater than 1. A value of 2 is typical for most workloads.

-- Set replica count for a table group
CALL hg_set_table_group_property ('<table_group_name>', 'replica_count', '<replica_count>');

| Parameter | Description |
|---|---|
| <table_group_name> | The name of the table group to modify |
| <replica_count> | Number of replicas per shard. Must be less than or equal to the number of compute nodes. Typically set to 2. Default is 1 (replication disabled). |

Disable shard-level replication

Set replica_count back to 1:

-- Disable shard-level replication
CALL hg_set_table_group_property ('<table_group_name>', 'replica_count', '1');

Verify replica distribution

After enabling replication, confirm that each replica has been assigned to a worker node:

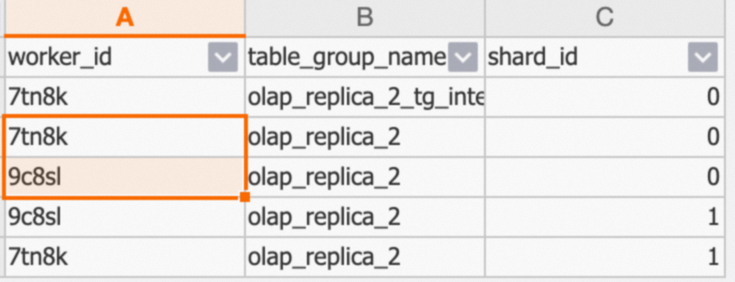

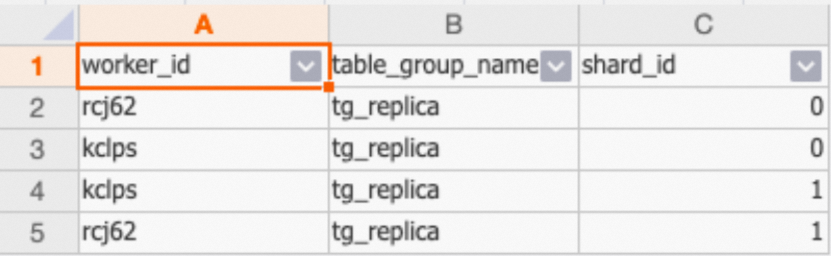

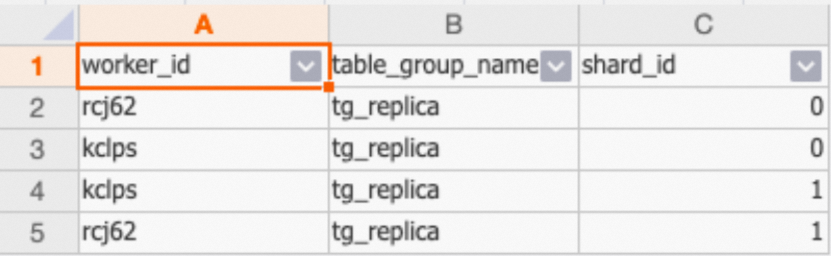

SELECT * FROM hologres.hg_worker_info;

Before a shard's metadata loads onto a worker node, the worker_id field for that worker may appear empty.

The result shows which shards are loaded on which worker nodes. In the example, Shard 0 and Shard 1 of the olap_replica_2 table group are distributed across the 7tn8k and 9c8sl worker nodes.

Configure query routing

Once replicas are in place, use the following parameters to control how queries are distributed. The parameters work at two levels of granularity:

Coarse control: hg_experimental_query_replica_mode sets the overall routing strategy for all queries.

Fine control: hg_experimental_query_replica_leader_weight and hg_experimental_query_replica_fixed_plan_ha_mode tune the behavior within that strategy, specifically for fixed-plan point queries.

Choose a routing strategy

Select the combination that matches your goal:

| Goal | hg_experimental_query_replica_mode | hg_experimental_query_replica_fixed_plan_ha_mode | Notes |
|---|---|---|---|
| Distribute load across all replicas (default) | leader_follower | any | Queries distributed by weight; default leader weight is 100 |
| Prefer leader, fall back on timeout | leader_follower | leader_first | Use for write-then-read workloads that require fresh data |
| Route only to leader | leader_only | N/A | Does not improve throughput or availability; use only for strict consistency requirements |
| Route only to followers | follower_only | N/A | Requires replica_count greater than 3 to have two or more follower shards |
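For example, the "Prefer leader, fall back on timeout" combination could be applied to the current session as follows. This is a sketch that only combines the parameters and values listed in the table; each parameter is described in its own section below.

```sql
-- Keep the default routing mode so that followers remain eligible as fallbacks.
SET hg_experimental_query_replica_mode = leader_follower;

-- Send fixed-plan point queries to the leader first; retry on a follower on timeout.
SET hg_experimental_query_replica_fixed_plan_ha_mode = leader_first;
```

To make the same choice persistent for a database, the ALTER DATABASE form shown for each parameter applies.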

hg_experimental_query_replica_mode

Controls which replicas receive queries.

| Value | Behavior | Default |
|---|---|---|
| leader_follower | Distributes queries across the leader and all followers based on hg_experimental_query_replica_leader_weight | Yes |
| leader_only | Routes all queries to the leader shard. Follower shards receive no traffic. | No |
| follower_only | Routes all queries to follower shards only. Requires replica_count greater than 3. | No |

-- Session-level
SET hg_experimental_query_replica_mode = leader_follower;

-- Database-level
ALTER DATABASE <database_name>
SET hg_experimental_query_replica_mode = leader_follower;

hg_experimental_query_replica_leader_weight

Sets the traffic weight of the leader shard relative to each follower shard. Each follower always has a fixed weight of 100. Applies when hg_experimental_query_replica_mode = leader_follower.

| Property | Value |
|---|---|
| Default | 100 |
| Min | 1 |
| Max | 10000 |

Example: With replica_count = 4 (1 leader + 3 followers) and the default leader weight of 100, all four replicas have equal weight (25% each). Set the leader weight lower to send more traffic to followers, or higher to concentrate traffic on the leader.
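To make the weighting concrete, the following sketch assumes that traffic is split in proportion to the weights, which is consistent with the 25% example above but is not spelled out in this topic. With replica_count = 4 and a hypothetical leader weight of 50, the leader would receive 50 / (50 + 3 × 100) of the traffic and each follower 100 / 350.

```sql
-- Hypothetical setting: halve the leader's weight for the current session.
SET hg_experimental_query_replica_leader_weight = 50;

-- Expected traffic share under the proportional-split assumption
-- (1 leader with weight 50, 3 followers with weight 100 each).
SELECT round(100.0 * 50  / (50 + 3 * 100), 1) AS leader_pct,
       round(100.0 * 100 / (50 + 3 * 100), 1) AS follower_pct;
-- leader_pct is about 14.3, follower_pct is about 28.6
```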

-- Session-level
SET hg_experimental_query_replica_leader_weight = 100;

-- Database-level
ALTER DATABASE <database_name>
SET hg_experimental_query_replica_leader_weight = 100;

hg_experimental_query_replica_fixed_plan_ha_mode

Applies only to point queries processed with fixed plans. Controls retry behavior when a shard is unavailable.

| Value | Behavior | When to use |
|---|---|---|
| any | Follows the same routing as hg_experimental_query_replica_mode | Default; use for general workloads |
| leader_first | Sends point queries to the leader first. If the leader times out, retries on a follower. Only effective when hg_experimental_query_replica_mode = leader_follower. | Write-then-read workloads where follower replication lag is unacceptable |
| off | No retry. Each query is routed only once. | Debugging or scenarios where you need to measure single-attempt latency without retry masking failures |

-- Session-level
SET hg_experimental_query_replica_fixed_plan_ha_mode = any;

-- Database-level
ALTER DATABASE <database_name>
SET hg_experimental_query_replica_fixed_plan_ha_mode = any;

hg_experimental_query_replica_fixed_plan_first_query_timeout_ms

The timeout threshold in milliseconds for the first attempt of a point query in HA mode. If no result is returned within this window, the system retries on another available shard replica. Applies only to point queries processed with fixed plans.

| Property | Value |
|---|---|
| Default | 60 ms |
| Min | 0 ms |
| Max | 10000 ms |

-- Session-level
SET hg_experimental_query_replica_fixed_plan_first_query_timeout_ms = 60;

-- Database-level
ALTER DATABASE <database_name>
SET hg_experimental_query_replica_fixed_plan_first_query_timeout_ms = 60;

Use cases

High throughput: distribute hot-data queries across worker nodes

Scenario: CPU utilization is uneven—one worker node is overloaded while others are idle—because most queries target a small number of shards.

Configuration:

Set replica_count to 2 for the affected table group:

-- Set replica count to 2 for the tg_replica table group
CALL hg_set_table_group_property ('tg_replica', 'replica_count', '2');

The default routing settings apply automatically:

hg_experimental_query_replica_mode = leader_follower

hg_experimental_query_replica_leader_weight = 100

Queries are now distributed evenly across all replicas, increasing QPS even for hot-data workloads.

Verify that replicas are loaded on each worker node:

SELECT * FROM hologres.hg_worker_info WHERE table_group_name = 'tg_replica';

The result should show one replica per worker node.

High availability: continue serving queries when a shard fails

Scenario: Queries fail when the shard they target becomes unavailable.

Configuration:

Set replica_count to 2 for the affected table group:

-- Set replica count to 2 for the tg_replica table group
CALL hg_set_table_group_property ('tg_replica', 'replica_count', '2');

The default HA settings apply automatically:

hg_experimental_query_replica_mode = leader_follower

hg_experimental_query_replica_fixed_plan_ha_mode = any

hg_experimental_query_replica_fixed_plan_first_query_timeout_ms = 60

With these defaults, the system behaves as follows when a shard becomes unavailable:

| Query type | Behavior |
|---|---|
| Online analytical processing (OLAP) queries | The master node periodically checks replica availability. Unavailable replicas are removed from routing within 15 seconds (5 s detection + 10 s FE node removal). When the replica recovers, the system adds it back automatically. |
| Fixed-plan point queries | A retry mechanism routes failed queries to a replica on another worker node. Queries complete successfully, though response time increases temporarily. |
| Write-then-read workloads | Set hg_experimental_query_replica_fixed_plan_ha_mode = leader_first to avoid follower replication lag. Queries go to the leader first; if the leader times out, they fall back to a follower. |

In high-availability mode, QPS is not improved for hot-data queries. To also improve throughput, combine this configuration with a higher replica_count.

Verify that replicas are loaded on each worker node:

SELECT * FROM hologres.hg_worker_info WHERE table_group_name = 'tg_replica';
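For a quicker read of the same view, the rows can be grouped per worker node. This sketch relies only on the worker_id and table_group_name columns mentioned in this topic and assumes that each row represents one shard replica loaded on a worker node.

```sql
-- Count shard replicas per worker node for the tg_replica table group.
-- Assumption: one row in hg_worker_info corresponds to one loaded shard replica.
SELECT worker_id, count(*) AS loaded_replicas
FROM hologres.hg_worker_info
WHERE table_group_name = 'tg_replica'
GROUP BY worker_id
ORDER BY worker_id;
```

Roughly equal counts across worker nodes suggest the replicas are spread evenly. A row with an empty worker_id points to shard metadata that has not finished loading.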

Troubleshooting

Queries are not routed to follower shards after configuration.

In Hologres versions earlier than V1.3, the Grand Unified Configuration (GUC) parameter hg_experimental_enable_read_replica controls whether follower shards can receive queries. Its default value is off.

Check the current value:

SHOW hg_experimental_enable_read_replica;

If the value is off, enable it at the database level:

ALTER DATABASE <database_name> SET hg_experimental_enable_read_replica = on;

Replace <database_name> with your actual database name.