Connect Hologres to Realtime Compute for Apache Flink and DataV to build an end-to-end real-time operations dashboard — from streaming data ingestion to live visualization — without moving data through intermediate storage.

By the end of this tutorial, you will have a working dashboard that displays e-commerce store metrics in real time:

Total store traffic and visits per store

Regional sales volume on an interactive map

Best-selling products by sales amount

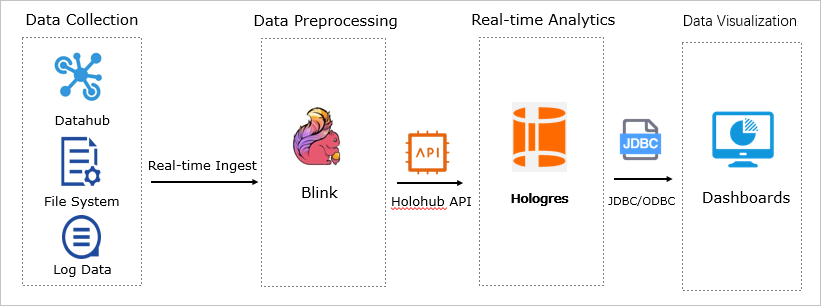

How it works

The pipeline has three stages:

Ingest: Realtime Compute for Apache Flink reads from a source connector and cleanses and aggregates the stream in real time.

Store and query: Flink writes the processed records to Hologres via the real-time data API. Hologres serves high-concurrency queries with response times measured in seconds.

Visualize: DataV connects directly to Hologres and renders the live dashboard.

Without fast ingestion, dashboards display stale data. Without low-latency queries, dashboards are slow to load. Hologres addresses both: it accepts high-throughput real-time writes and serves interactive queries in seconds.

Hologres is compatible with PostgreSQL, so business intelligence (BI) tools connect to it directly without a separate query layer.
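Because Hologres speaks the PostgreSQL protocol, a PostgreSQL client can usually be pointed at it with an ordinary connection URI. The sketch below only builds such a URI with the Python standard library. The `hologres_uri` helper and all sample values are hypothetical, and it assumes the endpoint is given as `host:port`, as in the example endpoint later in this tutorial.

```python
from urllib.parse import quote


def hologres_uri(user: str, password: str, endpoint: str, dbname: str) -> str:
    """Build a PostgreSQL-style connection URI for a Hologres endpoint.

    Credentials are percent-encoded so characters such as '@' or '/'
    do not break the URI structure.
    """
    return (
        f"postgresql://{quote(user, safe='')}:{quote(password, safe='')}"
        f"@{endpoint}/{quote(dbname, safe='')}"
    )


# Made-up values for illustration only.
print(hologres_uri("LTAI5tXxx", "p@ss/word", "example-host:80", "my_db"))
```

Pass the resulting string to whichever PostgreSQL driver or BI tool you use. Whether a given tool accepts a URI or separate host, port, and database fields depends on the tool.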

Prerequisites

Before you begin, make sure you have:

A Hologres instance with HoloWeb connected. See Connect to HoloWeb and execute queries.

Realtime Compute for Apache Flink activated. See Activate Realtime Compute for Apache Flink.

DataV activated. See Activate DataV Service.

Realtime Compute for Apache Flink and Hologres must be in the same region and use the same vSwitch and virtual private cloud (VPC).

Build the pipeline

Step 1: Create a data receiving table in Hologres

In HoloWeb, run the following SQL to create the public.order_details table. The table is column-oriented with sale_timestamp as the clustering and segment key, optimized for time-range queries.

BEGIN;

CREATE TABLE public.order_details (

"user_id" int8,

"user_name" text,

"item_id" int8,

"item_name" text,

"price" numeric(38,2),

"province" text,

"city" text,

"ip" text,

"longitude" text,

"latitude" text,

"sale_timestamp" timestamptz NOT NULL

);

CALL SET_TABLE_PROPERTY('public.order_details', 'orientation', 'column');

CALL SET_TABLE_PROPERTY('public.order_details', 'clustering_key', 'sale_timestamp:asc');

CALL SET_TABLE_PROPERTY('public.order_details', 'segment_key', 'sale_timestamp');

CALL SET_TABLE_PROPERTY('public.order_details', 'bitmap_columns', 'user_name,item_name,province,city,ip,longitude,latitude');

CALL SET_TABLE_PROPERTY('public.order_details', 'dictionary_encoding_columns','user_name:auto,item_name:auto,province:auto,city:auto,ip:auto,longitude:auto,latitude:auto');

CALL SET_TABLE_PROPERTY('public.order_details', 'time_to_live_in_seconds', '3153600000');

CALL SET_TABLE_PROPERTY('public.order_details', 'distribution_key', 'user_id');

CALL SET_TABLE_PROPERTY('public.order_details', 'storage_format', 'orc');

COMMIT;

For step-by-step instructions on running SQL in HoloWeb, see Connect to HoloWeb and execute queries.
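The `time_to_live_in_seconds` value in the DDL is easier to review when it is derived from a duration rather than typed as a raw number. As a small worked example, and assuming 365-day years, the value 3153600000 corresponds to 100 years. The `ttl_seconds` helper is hypothetical and not part of Hologres.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds


def ttl_seconds(years: int, days_per_year: int = 365) -> int:
    """Return a time-to-live in seconds for the given number of years."""
    return years * days_per_year * SECONDS_PER_DAY


# The tutorial's DDL uses 3153600000, which matches 100 years of 365 days.
print(ttl_seconds(100))  # 3153600000
```

To keep data for a shorter period, compute the value the same way and substitute it in the `SET_TABLE_PROPERTY` call.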

Step 2: Upload the source data connector to Flink

This tutorial generates order data directly from Flink using a custom connector, so no external data source is required.
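The internals of the ordergen connector are not described in this tutorial. As a rough picture of the kind of record that flows through the job, the sketch below builds one synthetic row with the same columns as public.order_details. The `make_order` helper and every sample value are made up for illustration and are not taken from ordergen.

```python
import random
from datetime import datetime, timezone
from decimal import Decimal

# Column order of public.order_details from Step 1.
COLUMNS = [
    "user_id", "user_name", "item_id", "item_name", "price",
    "province", "city", "ip", "longitude", "latitude", "sale_timestamp",
]

# Illustrative sample values only; not taken from the ordergen connector.
PLACES = [
    ("Zhejiang", "Hangzhou", "120.15", "30.28"),
    ("Shanghai", "Shanghai", "121.47", "31.23"),
]
ITEMS = [(1001, "keyboard"), (1002, "mouse"), (1003, "monitor")]


def make_order(rng: random.Random) -> dict:
    """Return one synthetic order keyed by the order_details column names."""
    user_id = rng.randint(1, 1000)
    item_id, item_name = rng.choice(ITEMS)
    province, city, longitude, latitude = rng.choice(PLACES)
    # Two decimal places, mirroring the numeric(38,2) price column.
    price = (Decimal(rng.randint(100, 99999)) / 100).quantize(Decimal("0.01"))
    return {
        "user_id": user_id,
        "user_name": f"user_{user_id}",
        "item_id": item_id,
        "item_name": item_name,
        "price": price,
        "province": province,
        "city": city,
        "ip": f"192.0.2.{rng.randint(1, 254)}",  # documentation address range
        "longitude": longitude,  # stored as text, as in the table definition
        "latitude": latitude,
        "sale_timestamp": datetime.now(timezone.utc),
    }


print(make_order(random.Random(42)))
```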

In the Realtime Compute for Apache Flink console, upload the ordergen JAR file as a custom connector.

For upload instructions, see Upload and use a custom connector.

To use a different data source (for example, DataHub or business logs), replace the ordergen connector with the appropriate source connector in Step 3.

Step 3: Create and deploy the Flink job

In the Realtime Compute for Apache Flink console, create an SQL draft with the following statements. For instructions, see Job development.

The job reads from the ordergen source connector and writes each record to Hologres in real time.

CREATE TEMPORARY TABLE source_table (

user_id BIGINT,

user_name VARCHAR,

item_id BIGINT,

item_name VARCHAR,

price NUMERIC(38, 2),

province VARCHAR,

city VARCHAR,

longitude VARCHAR,

latitude VARCHAR,

ip VARCHAR,

sale_timestamp TIMESTAMP

) WITH ('connector' = 'ordergen');

CREATE TEMPORARY TABLE hologres_sink (

user_id BIGINT,

user_name VARCHAR,

item_id BIGINT,

item_name VARCHAR,

price NUMERIC(38, 2),

province VARCHAR,

city VARCHAR,

longitude VARCHAR,

latitude VARCHAR,

ip VARCHAR,

sale_timestamp TIMESTAMP

) WITH (

'connector' = 'hologres',

'dbname' = '<holo_db>',

'tablename' = '<receive_table>',

'username' = '<uid>',

'password' = '<pid>',

'endpoint' = '<host>'

);

INSERT INTO hologres_sink

SELECT user_id, user_name, item_id, item_name, price,

province, city, longitude, latitude, ip, sale_timestamp

FROM source_table;

Replace the placeholders in the hologres_sink WITH clause:

| Parameter | Required | Description | Example |
|---|---|---|---|
| <holo_db> | Yes | Name of the Hologres database | my_db |
| <receive_table> | Yes | Name of the table created in Step 1 | public.order_details |
| <uid> | Yes | AccessKey ID of your Alibaba Cloud account | LTAI5tXxx |
| <pid> | Yes | AccessKey secret of your Alibaba Cloud account | xXxXxXx |
| <host> | Yes | VPC endpoint of the Hologres instance. Find it on the instance details page in the Hologres console under Network Information. | <instance-id>-cn-hangzhou.hologres.aliyuncs.com:80 |
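Filling in the placeholders by hand makes it easy to leave one behind. One way to guard against that, sketched here with a hypothetical `render_with_clause` helper, is to render the WITH clause from a dictionary and reject any value that still contains an angle-bracket placeholder. The option keys are the ones used in this tutorial. The endpoint value below is made up by filling the instance ID in the example pattern.

```python
import re

# Matches leftover template markers such as <holo_db> or <instance-id>.
PLACEHOLDER = re.compile(r"<[\w-]+>")


def render_with_clause(options: dict[str, str]) -> str:
    """Render connector options as a Flink SQL WITH clause."""
    lines = []
    for key, value in options.items():
        if PLACEHOLDER.search(value):
            raise ValueError(f"option {key!r} still holds a placeholder: {value}")
        escaped = value.replace("'", "''")  # escape quotes inside SQL string literals
        lines.append(f"  '{key}' = '{escaped}'")
    return "WITH (\n" + ",\n".join(lines) + "\n);"


# Example values from the table above; the endpoint is a made-up instance name.
print(render_with_clause({
    "connector": "hologres",
    "dbname": "my_db",
    "tablename": "public.order_details",
    "username": "LTAI5tXxx",
    "password": "xXxXxXx",
    "endpoint": "example-instance-cn-hangzhou.hologres.aliyuncs.com:80",
}))
```

In practice, read the AccessKey pair from a secret store or environment variables rather than hard-coding it in a script.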

Step 4: Start the Flink job

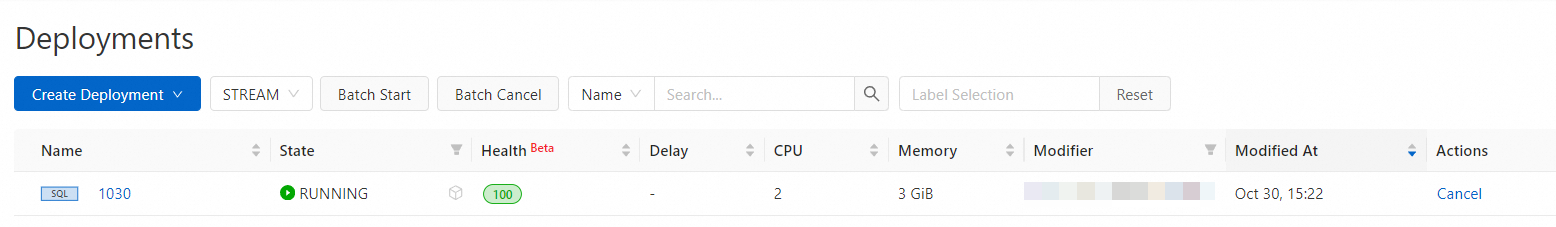

On the Job O&M page in the Realtime Compute for Apache Flink console, start the job and wait until its status changes to Running. For details, see Start a job.

Step 5: Verify real-time data in Hologres

In HoloWeb, run the following queries to confirm that data is flowing into order_details. Each query returns results within seconds.

-- Total GMV

SELECT SUM(price) AS "GMV" FROM order_details;

-- Unique visitors

SELECT COUNT(DISTINCT user_id) AS "UV" FROM order_details;

-- Visitors by city (top 100)

SELECT city AS "city", COUNT(DISTINCT user_id) AS "user_count"

FROM order_details

GROUP BY "city"

ORDER BY "user_count" DESC

LIMIT 100;

-- Top products by sales (top 100)

SELECT item_name AS "product", SUM(price) AS "sales_amount"

FROM order_details

GROUP BY "product"

ORDER BY "sales_amount" DESC

LIMIT 100;

-- GMV per day (top 100 days by GMV)

SELECT to_char(sale_timestamp, 'MM-DD') AS "date", SUM(price) AS "GMV"

FROM order_details

GROUP BY "date"

ORDER BY "GMV" DESC

LIMIT 100;

If the queries return rows, the pipeline is working. Proceed to connect DataV.
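To see what the GMV, UV, per-city, and per-product queries compute, the sketch below runs equivalent aggregations over four hand-made rows in an in-memory SQLite database. SQLite stands in for Hologres only so that the example runs locally. The rows are invented, the table is reduced to the columns these queries touch, and price is stored as a floating-point value here rather than numeric(38,2).

```python
import sqlite3

# (user_id, item_name, price, city): invented sample orders.
rows = [
    (1, "keyboard", 30.0, "Hangzhou"),
    (1, "mouse", 10.5, "Hangzhou"),
    (2, "keyboard", 30.0, "Shanghai"),
    (3, "monitor", 120.25, "Hangzhou"),
]

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE order_details "
    "(user_id INTEGER, item_name TEXT, price REAL, city TEXT)"
)
conn.executemany("INSERT INTO order_details VALUES (?, ?, ?, ?)", rows)

# Total GMV: the sum of all order prices.
gmv = conn.execute("SELECT SUM(price) FROM order_details").fetchone()[0]

# Unique visitors: user 1 placed two orders but counts once.
uv = conn.execute("SELECT COUNT(DISTINCT user_id) FROM order_details").fetchone()[0]

# Visitors by city, highest first.
by_city = conn.execute(
    "SELECT city, COUNT(DISTINCT user_id) AS user_count "
    "FROM order_details GROUP BY city ORDER BY user_count DESC LIMIT 100"
).fetchall()

# Products ranked by sales amount.
top_products = conn.execute(
    "SELECT item_name AS product, SUM(price) AS sales_amount "
    "FROM order_details GROUP BY item_name ORDER BY sales_amount DESC LIMIT 100"
).fetchall()

print(gmv)           # 190.75
print(uv)            # 3
print(by_city)       # [('Hangzhou', 2), ('Shanghai', 1)]
print(top_products)  # [('monitor', 120.25), ('keyboard', 60.0), ('mouse', 10.5)]
```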

Step 6: Build the DataV dashboard

Add Hologres as a data source

Go to the DataV console. In the navigation pane on the left, choose Data Preparation > Data Source.

On the Data Source page, click Add Data Source.

In the Add Data Source panel, configure the connection parameters for your Hologres instance.

Click OK.

Configure widgets and display the dashboard

Select widgets and bind each one to the Hologres data source using the queries from Step 5. For a full list of available widgets, see Components overview.

This tutorial uses four widget types:

Ticker board — displays total GMV and UV

Bar chart — ranks products by sales amount

Carousel — cycles through city visit counts

Basic flat map — plots transaction locations by latitude and longitude

After binding the data source, configure each widget's border, font, and colors. Add decorative elements as needed.

The completed dashboard layout:

Left panel: real-time product visits and city sales

Center (map): transaction locations, total sales, and total visits

Right panel: product sales proportion and ranking

Troubleshooting

No data appears in the Hologres table after starting the job

Cause: The Flink job cannot reach the Hologres endpoint.

Solution: Verify that Flink and Hologres are in the same region and use the same VPC and vSwitch. Confirm the endpoint value in the Flink SQL matches the VPC endpoint shown in the Hologres console under Network Information. Check the Flink TaskManager logs for connection errors.
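A quick way to rule out basic network problems is to test whether the endpoint accepts TCP connections. The following is a minimal sketch using only the Python standard library. It assumes the endpoint has the `host:port` form shown in Step 3 and that you run it from a machine inside the same VPC as the Hologres instance. The helper names are hypothetical.

```python
import socket


def split_endpoint(endpoint: str) -> tuple[str, int]:
    """Split 'host:port' into its parts, rejecting malformed values."""
    host, sep, port = endpoint.rpartition(":")
    if not sep or not host or not port.isdigit():
        raise ValueError(f"expected 'host:port', got {endpoint!r}")
    return host, int(port)


def can_connect(endpoint: str, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to the endpoint succeeds."""
    host, port = split_endpoint(endpoint)
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # covers DNS failures, refused connections, and timeouts
        return False
```

Call `can_connect` with the same endpoint string used in the Flink SQL. A `False` result points to a network or endpoint problem. A `True` result only shows that the port is reachable, so credentials and table names still need to be checked separately.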

The Flink job fails to start

Cause: The ordergen JAR was not uploaded or the connector name is misspelled in the SQL.

Solution: In the Flink console, confirm the JAR appears under custom connectors. Make sure the 'connector' = 'ordergen' value in the source_table WITH clause exactly matches the registered connector name.

DataV cannot connect to Hologres

Cause: The Hologres endpoint or credentials in the DataV data source configuration are incorrect.

Solution: Use the public endpoint if DataV does not share the same VPC as the Hologres instance, or use the VPC endpoint if they are in the same VPC. Verify the AccessKey ID and AccessKey secret are correct and have the necessary permissions.

What's next

To learn about Hologres table properties such as clustering key and distribution key, see the Hologres table design documentation.

To use a production data source instead of the ordergen connector, replace source_table with a DataHub or Kafka connector in the Flink SQL.

To add more widget types to your DataV dashboard, see Components overview.