This topic answers frequently asked questions about monitoring, alerting, and logs.

How do I check the monitoring service type used by a workspace?

What are the limitations of CloudMonitor alerts compared to ARMS?

How do I disable the Prometheus Service that is automatically enabled?

How do I configure monitoring and alerting for Flink job restarts?

How do I view, search, and analyze historical Flink operational logs?

How do I resolve the issue where logs from non-static methods are not output to SLS?

Data is written correctly, but the Flink job status overview shows 0 data. What should I do?

What should I do if a DataStream job has no delay, but the output curve shows a delay?

How do I check the monitoring service type used by a workspace?

You select the monitoring service type when you create a workspace. After the workspace is created, go to the page and click a job name. If the Alert Configuration tab is displayed, the workspace uses the pay-as-you-go Prometheus Service, which is part of Application Real-Time Monitoring Service (ARMS). If the tab is not displayed, the workspace uses the free CloudMonitor service. For more information about how to configure alerts for different service types, see Job monitoring and alerting.

What are the limitations of CloudMonitor alerts compared to ARMS?

Query analysis syntax is not supported.

Curves at the subtask granularity are not available. In scenarios with multiple sources and subtasks, metrics are shown only in aggregated form, which makes it difficult to quickly locate the cause of latency.

Metrics reported from custom instrumentation within user code cannot be viewed. This can make troubleshooting difficult.

How do I configure or add an alert contact?

When you use CloudMonitor or configure alerts in the ARMS console, you must add or configure contacts in the corresponding console. For more information about alert configuration, see Configure monitoring and alerting.

If your workspace uses the ARMS monitoring service and you configure metric or job failure alerts for a single job directly in the Realtime Compute for Apache Flink development console, add or configure alert contacts by following these steps.

Access the alert configuration page.

Log on to the Realtime Compute for Apache Flink management console. In the Operation column of the target workspace, click Console.

On the page, click the target job name.

Click the Alert Configuration tab.

On the Alert Rules tab, open the panel for creating a rule.

Configure or add an alert contact.

Add

Click Notification Recipient Management next to the Notification Recipient parameter to add contacts, DingTalk robots, and more. For more information about how to configure alerts for DingTalk robots, new webhooks, and Lark robots, see FAQ.

After you add a contact, if you use phone calls for alerts, ensure the recipient's phone number is verified. Otherwise, the alerts will not be delivered. If the Unverified label appears in the Phone column for the target contact on the Contacts tab, click the label to complete the verification.

Configure

For the Notification Recipient parameter, select the desired alert contacts. If the desired contact is not available, add it by following the steps above.

How do I disable the Prometheus Service that is automatically enabled?

If you selected the pay-as-you-go Prometheus Service (ARMS) when you created your workspace, the ARMS service is automatically enabled. If you no longer need to monitor Realtime Compute for Apache Flink, follow these steps to uninstall the Prometheus Service:

Uninstalling the Prometheus instance for a workspace stops monitoring data collection for that workspace, and the job monitoring data curves are lost. If a job becomes abnormal, you cannot determine when the abnormality started or receive monitoring alerts. Proceed with caution.

Log on to the Prometheus console.

In the navigation pane on the left, click Instance List to go to the instance list page.

From the Tag Filtering drop-down list, select the ID or name of the target workspace.

Find the instance with Instance Type set to Prometheus for Flink Serverless and click Uninstall in the Operation column.

In the dialog box that appears, click Confirm.

How do I find the job that triggered an alert?

Alert events contain the JobID and Deployment ID. However, the JobID changes after a job failover. Therefore, use the Deployment ID to find the specific job that reported an error. You can view the Deployment ID in one of the following ways.

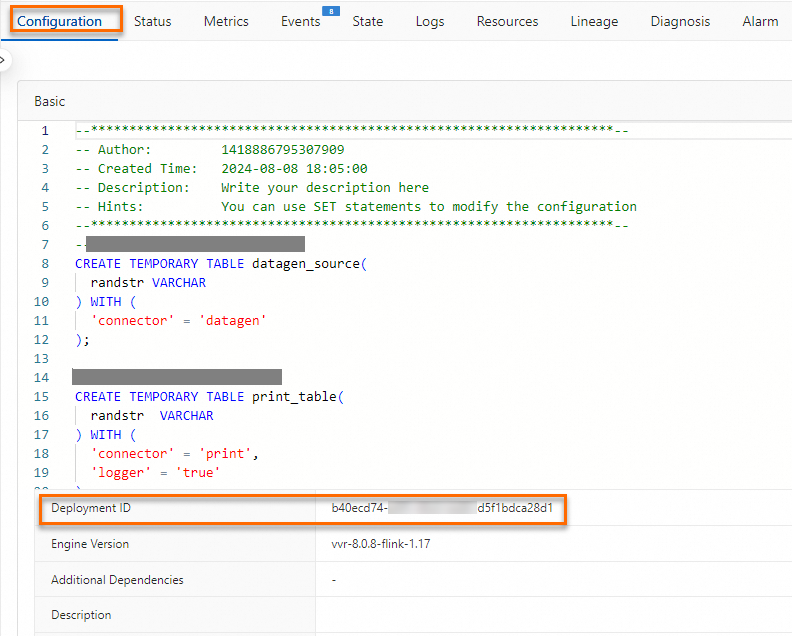

In the Realtime Compute for Apache Flink development console, on the Deployment Details tab, view the Deployment ID in the Basic Configuration section.

View the Deployment ID in the job's URL.

How do I configure monitoring and alerting for Flink job restarts?

The Realtime Compute for Apache Flink development console configures alert rules based on Flink metrics. Therefore, after a job failover, metric curves are not displayed, and alerts cannot be triggered. To handle critical events such as job restarts, you can configure a custom rule in the ARMS console based on the instantaneous growth rate of the `flink_jobmanager_job_numRestarts` metric. This allows alerting for job manager (JM) failover events. The following steps provide an example:

Log on to the Realtime Compute for Apache Flink management console.

In the Operation column of the target workspace, click to go to the Application Real-Time Monitoring Service (ARMS) console.

On the Alert Rules page, click Create Prometheus Alert Rule.

Set Detection Type to Custom PromQL and select the instance for alerting.

Write a custom Prometheus Query Language (PromQL) statement.

For example, the following statement queries `flink_jobmanager_job_numRestarts` over the last 1 minute and triggers an alert if the instantaneous rate of change is greater than 0:

irate(flink_jobmanager_job_numRestarts{jobId=~"$jobId",deploymentId=~"$deploymentId"}[1m]) > 0

Click Finish.
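To see why this rule fires on a restart, the following Java sketch models, in simplified form, how `irate(...[1m])` evaluates a counter such as `flink_jobmanager_job_numRestarts`: it takes the last two samples in the 1-minute window, treats a decrease as a counter reset, and divides the increase by the time between the two samples. The class name, sample timestamps, and values are hypothetical, and the sketch ignores PromQL details such as staleness handling. It is an illustration, not the Prometheus implementation.

```java
import java.util.List;
import java.util.stream.Collectors;

/**
 * Simplified model of PromQL irate() over a counter such as
 * flink_jobmanager_job_numRestarts. Not the Prometheus implementation.
 */
public class IrateSketch {

    /** One scraped sample: timestamp in milliseconds and counter value. */
    static final class Sample {
        final long timestampMs;
        final double value;

        Sample(long timestampMs, double value) {
            this.timestampMs = timestampMs;
            this.value = value;
        }
    }

    /**
     * Per-second rate computed from the last two samples in the window
     * (evalTimeMs - windowMs, evalTimeMs]. Samples are assumed to be sorted by
     * timestamp. Returns NaN when fewer than two samples are available, which
     * corresponds to PromQL returning no result.
     */
    static double irate(List<Sample> samples, long evalTimeMs, long windowMs) {
        List<Sample> inWindow = samples.stream()
                .filter(s -> s.timestampMs > evalTimeMs - windowMs && s.timestampMs <= evalTimeMs)
                .collect(Collectors.toList());
        if (inWindow.size() < 2) {
            return Double.NaN;
        }
        Sample previous = inWindow.get(inWindow.size() - 2);
        Sample last = inWindow.get(inWindow.size() - 1);
        double increase = last.value - previous.value;
        if (increase < 0) {
            // A decrease means the counter was reset, so count from zero.
            increase = last.value;
        }
        double seconds = (last.timestampMs - previous.timestampMs) / 1000.0;
        return seconds == 0 ? Double.NaN : increase / seconds;
    }

    public static void main(String[] args) {
        // Hypothetical samples scraped every 15 s; one restart happens between 30 s and 45 s.
        List<Sample> samples = List.of(
                new Sample(0, 2), new Sample(15_000, 2),
                new Sample(30_000, 2), new Sample(45_000, 3));
        double rate = irate(samples, 45_000, 60_000); // 60_000 ms models the [1m] window
        System.out.println("irate = " + rate + ", alert fires: " + (rate > 0));
    }
}
```

Because the restart counter only grows when the job restarts, any increase between the last two samples gives a positive rate, so the `> 0` condition is met. Once two consecutive samples are equal again, the rate drops back to 0.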

How do I set log level parameters for a single class?

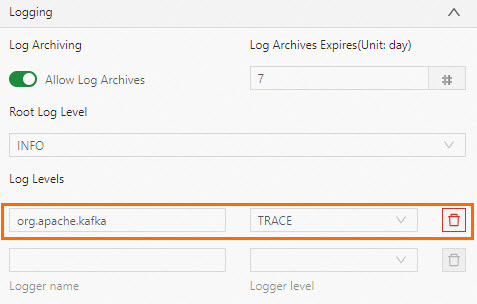

For example, to set Kafka connector-related parameters such as log4j.logger.org.apache.kafka.clients.consumer=trace (for a source table) and log4j.logger.org.apache.kafka.clients.producer=trace (for a sink table), you must configure them in Log Levels, not in Other Configuration.

How do I enable GC log parameters?

On the page, click the target job name. On the Deployment Details tab, in the Parameter Settings section, add the following code to Other Configuration and save it to apply the changes.

env.java.opts: >-
  -XX:+PrintGCDetails -XX:+PrintGCDateStamps -Xloggc:/flink/log/gc.log
  -XX:+UseGCLogFileRotation -XX:NumberOfGCLogFiles=2 -XX:GCLogFileSize=50M
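After saving, you may want to confirm that the options actually reached the JVM. The following Java sketch is one way to do this, assuming you can run code inside the target JVM (for example, from an operator of the job; that wiring is not shown). It reads the JVM input arguments through the standard `java.lang.management` API and reports which of the expected GC options are missing. The class `JvmOptionsCheck` and its method are hypothetical helpers, not part of Flink.

```java
import java.lang.management.ManagementFactory;
import java.util.List;
import java.util.stream.Collectors;

/**
 * Hypothetical helper: reports which expected JVM options are missing from the
 * arguments that the current JVM was started with.
 */
public class JvmOptionsCheck {

    /** Returns the entries of expected that do not appear in actual, in order. */
    static List<String> missingOptions(List<String> actual, List<String> expected) {
        return expected.stream()
                .filter(option -> !actual.contains(option))
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        // JVM input arguments of this process (excludes the main class and program arguments).
        List<String> actual = ManagementFactory.getRuntimeMXBean().getInputArguments();
        // The options configured through env.java.opts in this topic.
        List<String> expected = List.of(
                "-XX:+PrintGCDetails",
                "-XX:+PrintGCDateStamps",
                "-Xloggc:/flink/log/gc.log",
                "-XX:+UseGCLogFileRotation",
                "-XX:NumberOfGCLogFiles=2",
                "-XX:GCLogFileSize=50M");
        List<String> missing = missingOptions(actual, expected);
        System.out.println(missing.isEmpty()
                ? "All expected GC log options are present."
                : "Missing options: " + missing);
    }
}
```

Run on a local machine, the sketch simply reports every option as missing; the check is only meaningful inside the JobManager or TaskManager JVM of the job.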

A job fails to start after configuring logs for SLS

Problem details

After you modify the job to output logs to Simple Log Service (SLS), the job fails to start. The message "Job startup failed. Please retry." is displayed, and the following error is reported:

Unknown ApiException {exceptionType=com.ververica.platform.appmanager.controller.domain.TemplatesRenderException, exceptionMessage=Failed to render {userConfiguredLoggers={}, jobId=3fd090ea-81fc-4983-ace1-0e0e7b******, rootLoggerLogLevel=INFO, clusterName=f7dba7ec27****, deploymentId=41529785-ab12-405b-82a8-1b1d73******, namespace=flinktest-default, priorityClassName=flink-p5, deploymentName=test}} 029999 202312121531-8SHEUBJUJU

Cause

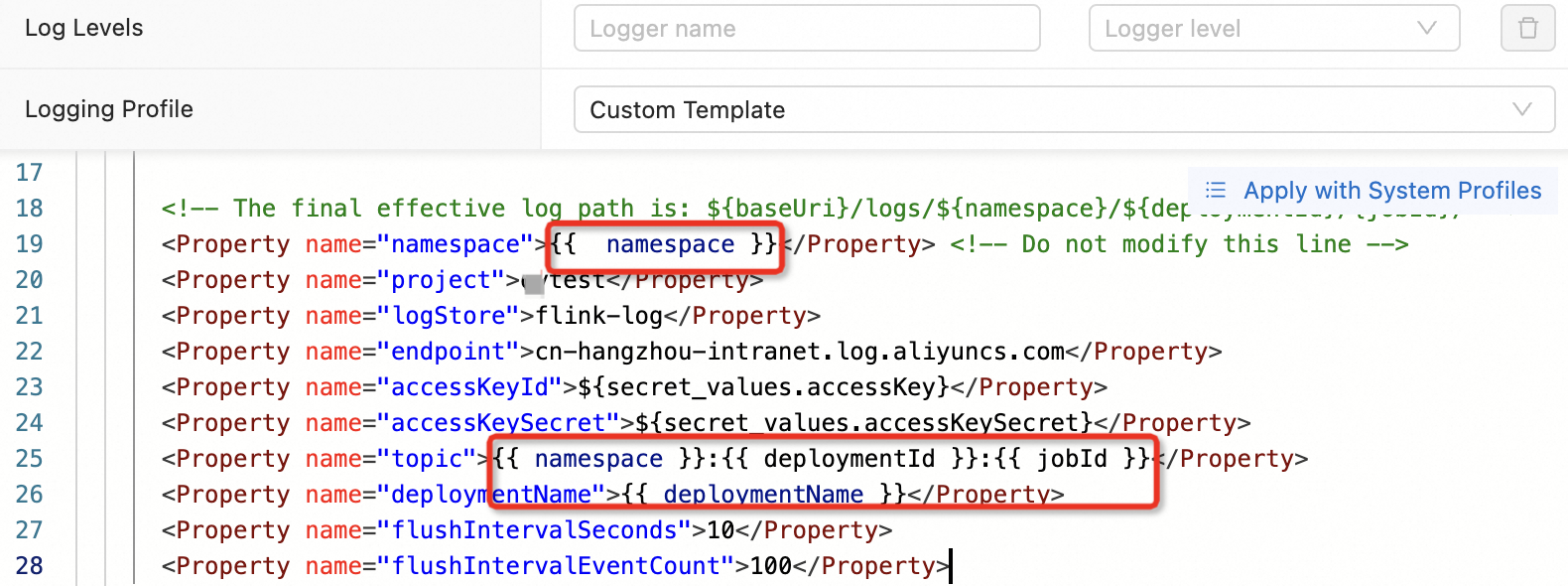

Twig variables in the log configuration, such as `namespace` or `deploymentId`, may have been modified when the job logs were configured. Check whether you changed any of these variables.

Solution

Reconfigure the settings by following the instructions in Configure job log output. Modify only the parameters described in the table in that topic as needed, and keep the Twig variables unchanged.

How do I view, search, and analyze historical Flink operational logs?

Realtime Compute for Apache Flink supports viewing and analyzing historical operational logs in the development console or in external storage.

View and analyze in the job log interface of the development console:

On the Deployment Details tab, the Log Archiving feature is enabled by default, and you can set the Log Archive Retention Period. The default retention period is 7 days, and the most recent 5 MB of operational logs are retained.

View and analyze in external storage:

You can configure job logs to be sent to external storage, such as OSS, SLS, or Kafka. You can also configure the log level for the output. For more information, see Configure job log output.

How do I resolve the issue where logs from non-static methods are not output to SLS?

Problem details

Due to the implementation logic of the SLS Logger Appender, logs from non-static methods are not output to SLS.

Solution

Declare the logger as a static final field by using the standard definition, where `xxx` is the enclosing class:

private static final Logger LOG = LoggerFactory.getLogger(xxx.class);

Data is written correctly, but the Flink job status overview shows 0 data. What should I do?

Problem details

If all operators of a job are in a single node, the job topology graph does not display the volume of data read or written, because the source only produces output and the sink only receives input.

Solution

To view the specific data traffic in the topology graph, you need to split the operators. Split the source and sink operators into independent operators and connect them separately to other operators to form a new topology. Then, you can view the data flow and traffic in the topology graph.

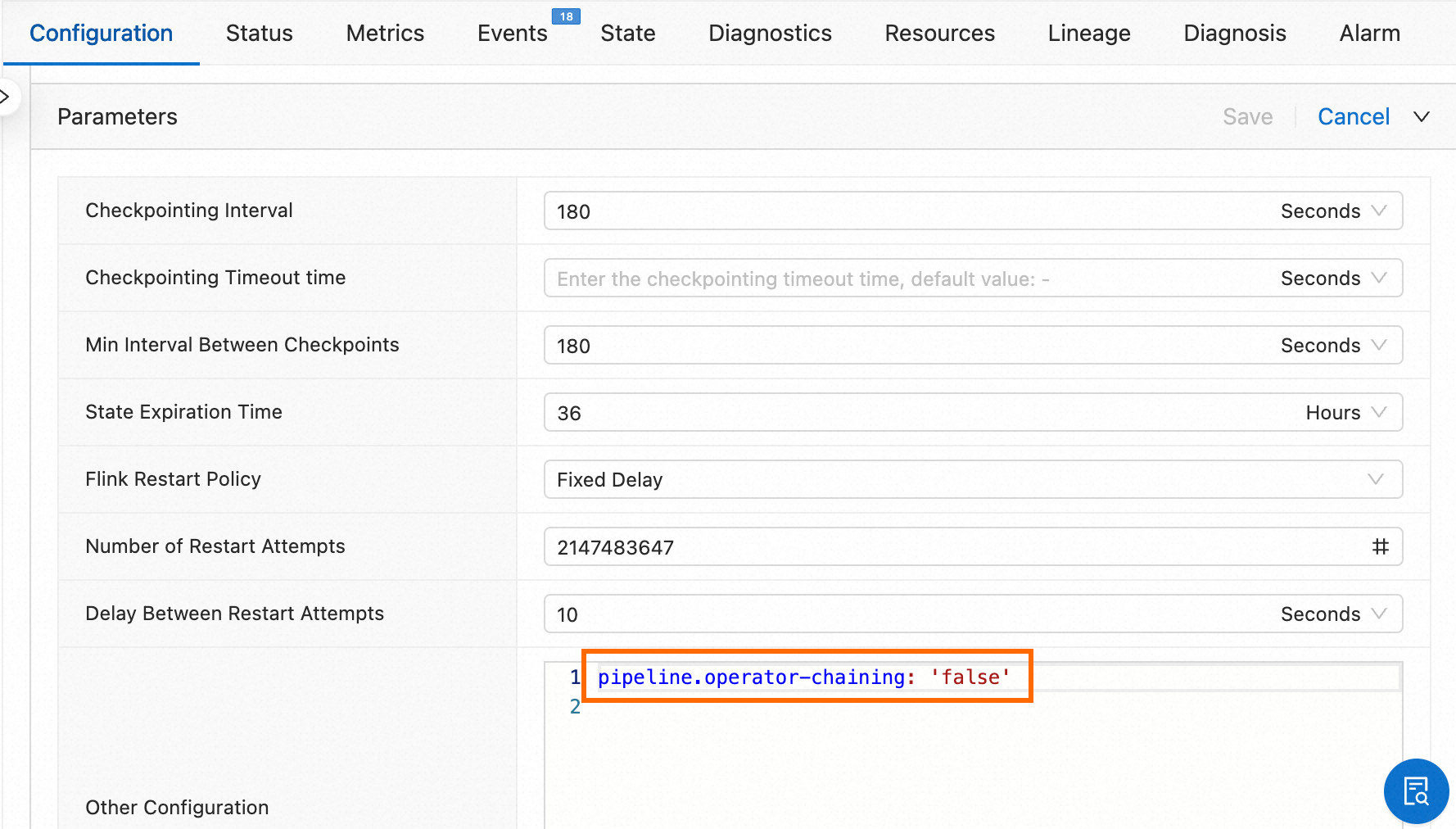

On the page, click the target job name. On the Deployment Details tab, in the Parameter Settings section, add the following operator splitting configuration to Other Configuration:

pipeline.operator-chaining: 'false'

What should I do if a DataStream job has no delay, but the output curve shows a delay?

Problem details

A source table continuously feeds data into Flink, and each partition of the Kafka physical table is also a continuous data stream. However, the `CurrentEmitEventTimeLag` and `CurrentFetchEventTimeLag` metrics for the DataStream job's Kafka source indicate a delay of 52 years.

Cause

The Kafka dependency used in the DataStream job is the community connector, not the Flink built-in connector. Community connectors do not implement the logic for reporting these metrics. As a result, curves for metrics such as input and output delay appear abnormal.
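This topic does not explain why the reported lag is about 52 years specifically. One plausible reading, stated here as an assumption rather than documented behavior, is that the lag is computed as the current time minus an event time that defaults to 0 (the Unix epoch) when the connector reports none, so the "lag" equals the time elapsed since 1970. The following Java sketch shows that arithmetic with a hypothetical observation time in mid-2022; the class name is also hypothetical.

```java
import java.time.Duration;
import java.time.Instant;

/**
 * Illustrates an ASSUMED cause of a ~52-year lag: lag = now - eventTime,
 * where eventTime defaults to 0 (the Unix epoch) because nothing was reported.
 */
public class EpochLagSketch {

    /** Lag between eventTime and now, in whole years of 365.25 days. */
    static long lagInYears(Instant now, Instant eventTime) {
        long days = Duration.between(eventTime, now).toDays();
        return (long) Math.floor(days / 365.25);
    }

    public static void main(String[] args) {
        Instant assumedEventTime = Instant.EPOCH; // assumption: unreported event time treated as 0
        Instant observedAt = Instant.parse("2022-06-01T00:00:00Z"); // hypothetical observation time
        // prints "Apparent lag: 52 years"
        System.out.println("Apparent lag: " + lagInYears(observedAt, assumedEventTime) + " years");
    }
}
```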

Solution

Use the dependency for the Flink built-in connector. For more information, see Maven Repository.

What should I do if a NullPointerException without a detailed stack trace is thrown in the TM logs of a DataStream job?

On the page, click the target job name. On the Deployment Details tab, in the Parameter Settings section, add the following code to Other Configuration.

env.java.opts: "-XX:-OmitStackTraceInFastThrow"
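The missing stack trace comes from a HotSpot JIT optimization: when a frequently executed method that throws an implicit exception, such as a NullPointerException, is compiled, the JVM may throw a preallocated exception instance that has no stack trace. `-XX:-OmitStackTraceInFastThrow` disables this behavior. The following Java sketch runs outside Flink and lets you observe the effect locally; the class name is hypothetical, and the number of empty traces depends on the JVM version and JIT heuristics.

```java
/**
 * Observes HotSpot's "fast throw" optimization: once the code that throws an
 * implicit NullPointerException is hot and JIT-compiled, the JVM may reuse a
 * preallocated exception whose stack trace is empty.
 */
public class FastThrowDemo {

    /** Dereferences its argument, so it throws an implicit NPE when s is null. */
    static int length(String s) {
        return s.length();
    }

    /** Calls length(null) the given number of times and records each stack trace depth. */
    static int[] traceDepths(int iterations) {
        int[] depths = new int[iterations];
        for (int i = 0; i < iterations; i++) {
            try {
                length(null);
            } catch (NullPointerException e) {
                depths[i] = e.getStackTrace().length;
            }
        }
        return depths;
    }

    public static void main(String[] args) {
        int[] depths = traceDepths(200_000);
        int empty = 0;
        for (int depth : depths) {
            if (depth == 0) {
                empty++;
            }
        }
        System.out.println("First trace depth: " + depths[0]
                + ", empty traces: " + empty + " of " + depths.length);
    }
}
```

Run it once with default options and once with `java -XX:-OmitStackTraceInFastThrow FastThrowDemo.java`. With the flag, the count of empty traces is expected to be 0.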