This topic answers common questions about draft development and deployment debugging in Realtime Compute for Apache Flink.

- When running DDL and DML statements together, why does validation fail?
- How do I use special characters in Entry Point Main Arguments?
- Why does my UDF JAR package fail to upload after multiple modifications?
- Why are fields misaligned when I use a POJO class as the return type of a UDTF?
- Why does Flink reject my table with "Invalid primary key. Column 'xxx' is nullable."?
- Why do JSON files open in the browser instead of downloading?

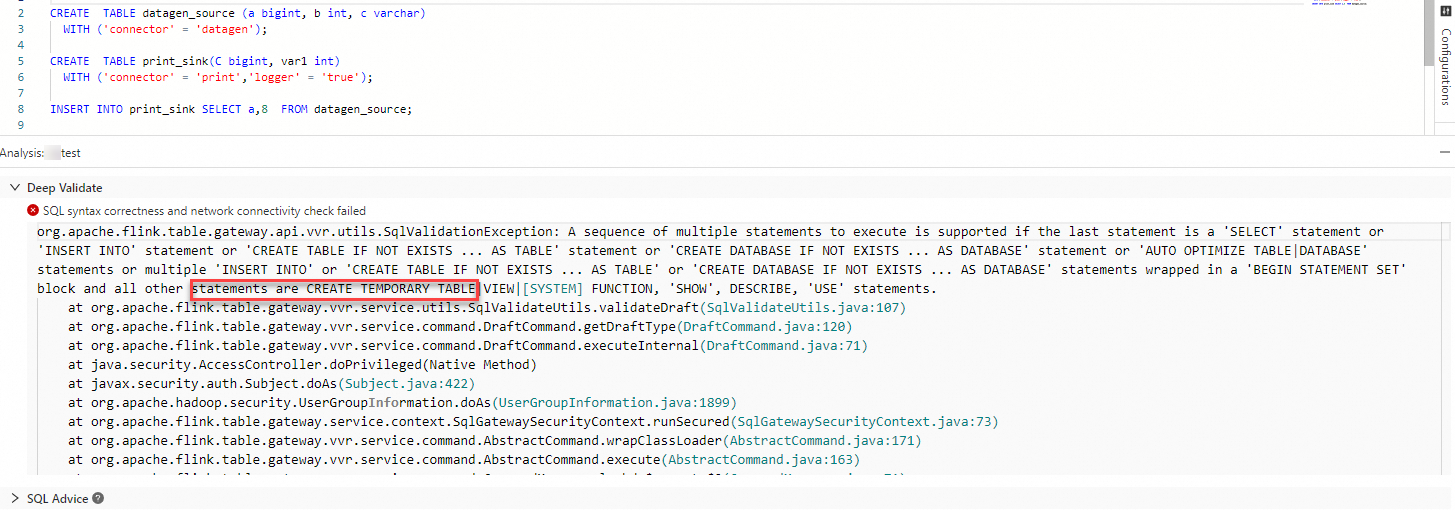

When running DDL and DML statements together, why does validation fail?

Use CREATE TEMPORARY TABLE instead of CREATE TABLE in your DDL statement. When a draft contains both DDL and DML statements, a CREATE TABLE statement causes a validation error when you click Validate.

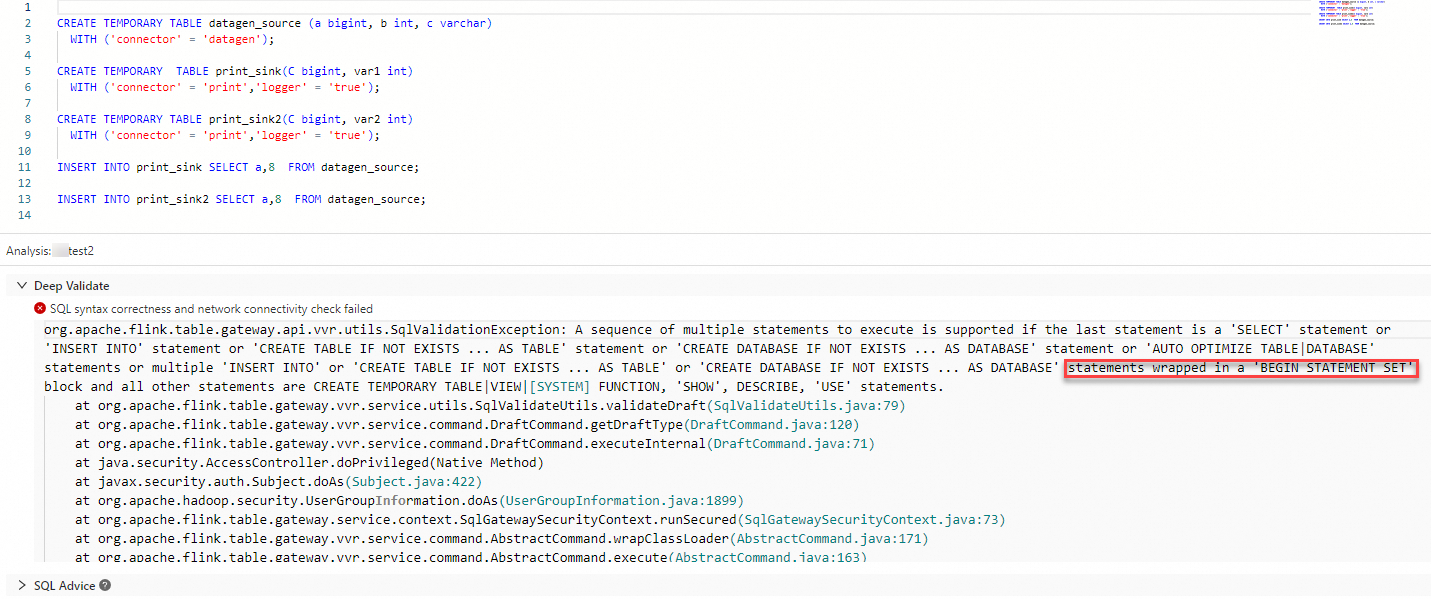

How do I write multiple INSERT INTO statements?

Place all INSERT INTO statements between `BEGIN STATEMENT SET;` and `END;` so that they form a single logical unit. Without this wrapper, clicking Validate raises an error.

For details on statement set syntax, see INSERT INTO.
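If you generate drafts from code, a small helper can apply the wrapper for you. The sketch below is plain Java with no Flink dependency; the class `StatementSetBuilder` and the table names `sink_a`, `sink_b`, and `src` are hypothetical and only illustrate the shape of a statement set.

```java
import java.util.List;

// Hypothetical helper: wraps INSERT INTO statements in a statement set so that
// a generated draft is validated as one logical unit.
class StatementSetBuilder {

    static String wrap(List<String> inserts) {
        StringBuilder sb = new StringBuilder("BEGIN STATEMENT SET;\n");
        for (String stmt : inserts) {
            String trimmed = stmt.trim();
            // Each INSERT INTO inside the set ends with its own semicolon.
            sb.append(trimmed.endsWith(";") ? trimmed : trimmed + ";").append('\n');
        }
        return sb.append("END;").toString();
    }

    public static void main(String[] args) {
        System.out.println(wrap(List.of(
                "INSERT INTO sink_a SELECT * FROM src",
                "INSERT INTO sink_b SELECT * FROM src;")));
    }
}
```

Each statement inside the set keeps its terminating semicolon, and the set as a whole closes with `END;`.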

How do I use special characters in Entry Point Main Arguments?

If the Entry Point Main Arguments value contains # (number sign) or $ (dollar sign), escaping the character with a backslash does not work: the special character is silently discarded.

Add the following configuration to the Other Configuration field in the Parameters section of the Configuration tab on the Deployments page:

```
env.java.opts: -Dconfig.disable-inline-comment=true
```

For details, see How do I configure custom running parameters for a job?
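The configuration name suggests that `#` is lost because it is read as the start of an inline comment. The sketch below is a simplified model of that behavior, not the actual Realtime Compute implementation: it assumes a parser that, like Flink's legacy `flink-conf.yaml` loader, cuts each line at the first `#`. It does not model the `$` case. The class `InlineCommentModel` and the argument `--filter` are hypothetical.

```java
// Simplified model (assumption): a configuration line parser that treats '#'
// as the start of an inline comment unless inline comments are disabled.
class InlineCommentModel {

    static String parseValue(String line, boolean disableInlineComment) {
        if (disableInlineComment) {
            // The whole value is kept, including '#'.
            return line.trim();
        }
        // Everything from the first '#' onward is treated as a comment.
        // A preceding backslash does not escape it.
        String[] parts = line.split("#", 2);
        return parts[0].trim();
    }

    public static void main(String[] args) {
        String arg = "--filter a\\#b"; // the literal text: --filter a\#b
        System.out.println(parseValue(arg, false));
        System.out.println(parseValue(arg, true));
    }
}
```

In this model, `--filter a\#b` becomes `--filter a\` because the backslash does not protect `#`, while the same input survives intact when inline comments are disabled.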

Why does my UDF JAR package fail to upload after multiple modifications?

The upload fails when the JAR package contains a class with the same name as a class in an already-uploaded JAR package, causing a user-defined function (UDF) conflict.

Two options to resolve this:

-

Delete and re-upload: Delete the existing JAR package from the console and upload the updated package.

-

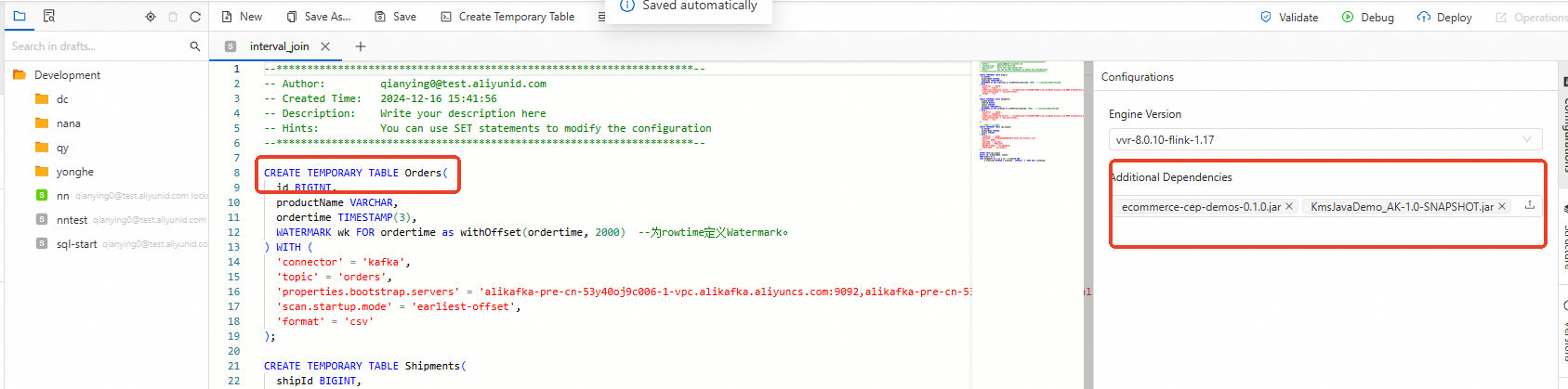

Declare as a temporary function: In the SQL editor, declare the UDF as a temporary function before uploading. Then click the Upload icon to upload the JAR package from the Additional Dependencies section on the Configurations tab of the ETL page.

CREATE TEMPORARY FUNCTION `cp_record_reduce` AS 'com.taobao.test.udf.blink.CPRecordReduceUDF';For details on registering UDFs, see Register a UDF.
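To find the clashing classes before you upload, you can compare the entries of the old and new JAR packages. This sketch uses only `java.util.jar.JarFile` from the JDK; the class name `DuplicateClassFinder` is hypothetical.

```java
import java.io.IOException;
import java.util.Collection;
import java.util.List;
import java.util.Set;
import java.util.TreeSet;
import java.util.jar.JarEntry;
import java.util.jar.JarFile;
import java.util.stream.Collectors;

// Sketch: list fully qualified class names that appear in both JAR files.
class DuplicateClassFinder {

    // Converts "com/foo/Bar.class" to "com.foo.Bar" and skips non-class entries.
    static Set<String> classNames(Collection<String> entryNames) {
        Set<String> names = new TreeSet<>();
        for (String entry : entryNames) {
            if (entry.endsWith(".class") && !entry.endsWith("module-info.class")) {
                String base = entry.substring(0, entry.length() - ".class".length());
                names.add(base.replace('/', '.'));
            }
        }
        return names;
    }

    // Classes present in both JARs, sorted by name.
    static Set<String> duplicates(Collection<String> entriesA, Collection<String> entriesB) {
        Set<String> common = classNames(entriesA);
        common.retainAll(classNames(entriesB));
        return common;
    }

    static List<String> entryNames(String jarPath) throws IOException {
        try (JarFile jar = new JarFile(jarPath)) {
            return jar.stream().map(JarEntry::getName).collect(Collectors.toList());
        }
    }

    public static void main(String[] args) throws IOException {
        if (args.length != 2) {
            System.err.println("Usage: DuplicateClassFinder <old.jar> <new.jar>");
            return;
        }
        for (String name : duplicates(entryNames(args[0]), entryNames(args[1]))) {
            System.out.println("Duplicate class: " + name);
        }
    }
}
```

For example, `java DuplicateClassFinder.java old-udf.jar new-udf.jar` (file names hypothetical, Java 11+ source-file mode) prints each class that both packages define.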

Why are fields misaligned when I use a POJO class as the return type of a UDTF?

When a Plain Old Java Object (POJO) class is used as the return type of a user-defined table-valued function (UDTF) and you explicitly alias the returned fields in SQL, the fields can be misaligned even though the data types appear consistent.

Root cause

The order of returned fields depends on whether the POJO has a parameterized constructor:

- With a parameterized constructor: Fields are returned in constructor parameter order.
- Without a parameterized constructor: Fields are returned in alphabetical order, not declaration order.

For example, given a POJO with fields declared as c (int), d (String), a (boolean), b (String) and no parameterized constructor, the actual return order is alphabetical: a (BOOLEAN), b (VARCHAR), c (INTEGER), d (VARCHAR). Aliasing the fields with AS T(c, d, a, b) in the SQL statement reassigns names by position, causing misalignment.
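The positional renaming can be reproduced in plain Java. The sketch below assumes, as described above, that fields of a POJO without a parameterized constructor are exposed in alphabetical order and that an alias list renames them by position. It uses reflection only to list and sort the public fields; it does not call Flink's type extraction. `AliasMisalignmentDemo` is a hypothetical name.

```java
import java.lang.reflect.Field;
import java.lang.reflect.Modifier;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Comparator;
import java.util.List;

// Simplified model: sort public fields by name, then pair them with aliases by position.
class AliasMisalignmentDemo {

    // Same field declarations as TestPojoWithoutConstructor.
    public static class TestPojoWithoutConstructor {
        public int c;
        public String d;
        public boolean a;
        public String b;
    }

    static List<String> describeAliases(Class<?> pojo, String... aliases) {
        Field[] fields = Arrays.stream(pojo.getFields())
                .filter(f -> !Modifier.isStatic(f.getModifiers()))
                .sorted(Comparator.comparing(Field::getName))
                .toArray(Field[]::new);
        List<String> result = new ArrayList<>();
        for (int i = 0; i < aliases.length && i < fields.length; i++) {
            // The alias at position i is given to the i-th field in alphabetical order.
            result.add(aliases[i] + " <- " + fields[i].getName()
                    + " (" + fields[i].getType().getSimpleName() + ")");
        }
        return result;
    }

    public static void main(String[] args) {
        describeAliases(TestPojoWithoutConstructor.class, "c", "d", "a", "b")
                .forEach(System.out::println);
    }
}
```

Running `main` prints `c <- a (boolean)`, `d <- b (String)`, `a <- c (int)`, and `b <- d (String)`, which matches the query schema in the validation error shown below.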

```java
package com.aliyun.example;

public class TestPojoWithoutConstructor {
    public int c;
    public String d;
    public boolean a;
    public String b;
}
```

```java
package com.aliyun.example;

import org.apache.flink.table.functions.TableFunction;

public class MyTableFuncPojoWithoutConstructor extends TableFunction<TestPojoWithoutConstructor> {
    private static final long serialVersionUID = 1L;

    public void eval(String str1, Integer i2) {
        TestPojoWithoutConstructor p = new TestPojoWithoutConstructor();
        p.d = str1 + "_d";
        p.c = i2 + 2;
        p.b = str1 + "_b";
        // Field a is not set, so it keeps its default value (false).
        collect(p);
    }
}
```

```sql
CREATE TEMPORARY FUNCTION MyTableFuncPojoWithoutConstructor AS 'com.aliyun.example.MyTableFuncPojoWithoutConstructor';

CREATE TEMPORARY TABLE src (
  id STRING,
  cnt INT
) WITH (
  'connector' = 'datagen'
);

CREATE TEMPORARY TABLE sink (
  f1 INT,
  f2 STRING,
  f3 BOOLEAN,
  f4 STRING
) WITH (
  'connector' = 'print'
);

INSERT INTO sink
SELECT T.* FROM src, LATERAL TABLE(MyTableFuncPojoWithoutConstructor(id, cnt)) AS T(c, d, a, b);
```

This produces the following validation error:

```
org.apache.flink.table.api.ValidationException: SQL validation failed. Column types of query result and sink for 'vvp.default.sink' do not match.
Cause: Sink column 'f1' at position 0 is of type INT but expression in the query is of type BOOLEAN NOT NULL.
Hint: You will need to rewrite or cast the expression.
Query schema: [c: BOOLEAN NOT NULL, d: STRING, a: INT NOT NULL, b: STRING]
Sink schema: [f1: INT, f2: STRING, f3: BOOLEAN, f4: STRING]
    at org.apache.flink.table.sqlserver.utils.FormatValidatorExceptionUtils.newValidationException(FormatValidatorExceptionUtils.java:41)
```

Fix

Choose one of the following approaches:

- Select fields by name instead of aliasing: If the POJO has no parameterized constructor, do not use a rename list. Select the fields by their actual names instead:

  ```sql
  -- Select by field name to avoid positional misalignment
  SELECT T.c, T.d, T.a, T.b
  FROM src, LATERAL TABLE(MyTableFuncPojoWithoutConstructor(id, cnt)) AS T;
  ```

- Add a parameterized constructor: Implement a constructor to explicitly control field order. The returned fields follow the constructor parameter order:

  ```java
  package com.aliyun.example;

  public class TestPojoWithConstructor {
      public int c;
      public String d;
      public boolean a;
      public String b;

      // Using specific fields order instead of alphabetical order
      public TestPojoWithConstructor(int c, String d, boolean a, String b) {
          this.c = c;
          this.d = d;
          this.a = a;
          this.b = b;
      }
  }
  ```

For information on packaging and registering UDTFs, see Overview and Register a deployment-level UDF.

How do I troubleshoot dependency conflicts?

Dependency conflicts in Realtime Compute for Apache Flink fall into two categories: explicit errors (Java exceptions) and silent failures (no exception, but unexpected behavior).

Explicit errors

If you see any of the following exceptions, a dependency conflict is a likely cause:

- `java.lang.AbstractMethodError`
- `java.lang.ClassNotFoundException`
- `java.lang.IllegalAccessError`
- `java.lang.IllegalAccessException`
- `java.lang.InstantiationError`
- `java.lang.InstantiationException`
- `java.lang.reflect.InvocationTargetException`
- `java.lang.NoClassDefFoundError`
- `java.lang.NoSuchFieldError`
- `java.lang.NoSuchFieldException`
- `java.lang.NoSuchMethodError`
- `java.lang.NoSuchMethodException`

Silent failures

Some dependency conflicts produce no exception but cause unexpected behavior:

- Logs not generated or Log4j config not taking effect: The JAR package likely contains a bundled Log4j configuration that overrides the runtime configuration. Check whether your JAR includes a Log4j configuration file and, if so, configure Maven exclusions to remove it. If you use multiple Log4j versions, use `maven-shade-plugin` to relocate the Log4j classes.
- Remote procedure call (RPC) failures: By default, Akka RPC errors caused by dependency conflicts are not logged. Enable debug logging to surface them. A typical symptom is that TaskManagers repeatedly report `Cannot allocate the requested resources. Trying to allocate ResourceProfile{xxx}` while the JobManager log stops at `Registering TaskManager with ResourceID xxx`, with no further output until `NoResourceAvailableException` appears. After you enable debug logging, the underlying `InvocationTargetException` becomes visible.
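To check for the first symptom, you can list Log4j configuration files bundled at the root of your JAR. The file-name patterns in this sketch (names starting with `log4j` and ending in `.properties`, `.xml`, `.yaml`, `.yml`, or `.json`) are an assumption meant to cover common Log4j 1.x and 2.x names; `Log4jConfigScanner` is a hypothetical name.

```java
import java.io.IOException;
import java.util.ArrayList;
import java.util.Collection;
import java.util.List;
import java.util.jar.JarEntry;
import java.util.jar.JarFile;
import java.util.stream.Collectors;

// Sketch: report Log4j configuration files at the root of a JAR.
class Log4jConfigScanner {

    // Assumed config file extensions; extend if your build uses others.
    private static final List<String> EXTENSIONS =
            List.of(".properties", ".xml", ".yaml", ".yml", ".json");

    static List<String> findLog4jConfigs(Collection<String> entryNames) {
        List<String> hits = new ArrayList<>();
        for (String name : entryNames) {
            boolean atRoot = !name.contains("/");
            boolean looksLikeLog4j = name.startsWith("log4j");
            boolean configExtension = EXTENSIONS.stream().anyMatch(name::endsWith);
            if (atRoot && looksLikeLog4j && configExtension) {
                hits.add(name);
            }
        }
        return hits;
    }

    public static void main(String[] args) throws IOException {
        if (args.length != 1) {
            System.err.println("Usage: Log4jConfigScanner <job.jar>");
            return;
        }
        try (JarFile jar = new JarFile(args[0])) {
            List<String> names = jar.stream().map(JarEntry::getName).collect(Collectors.toList());
            findLog4jConfigs(names).forEach(n -> System.out.println("Bundled Log4j config: " + n));
        }
    }
}
```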

Identify the conflict

Run these commands to locate the conflicting dependency:

```shell
# View the contents of your JAR
jar tf foo.jar

# Inspect the full dependency tree
mvn dependency:tree
```

You can also review your pom.xml for unnecessary dependencies that should not be bundled.
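When an exception names a specific class, it also helps to know which JAR actually supplied that class at runtime. This sketch uses the JDK's `ProtectionDomain` and `CodeSource` APIs; `ClassOrigin` is a hypothetical name. Calling `ClassOrigin.locate(...)` from your job code and logging the result shows the origin inside the deployment; the `main` method only resolves classes on its own classpath.

```java
import java.security.CodeSource;

// Sketch: report the location (JAR or directory) a class was loaded from.
class ClassOrigin {

    static String locate(Class<?> clazz) {
        CodeSource source = clazz.getProtectionDomain().getCodeSource();
        if (source == null || source.getLocation() == null) {
            // Classes loaded by the JDK bootstrap loader have no code source.
            return "<bootstrap>";
        }
        return source.getLocation().toString();
    }

    public static void main(String[] args) throws ClassNotFoundException {
        for (String name : args) {
            System.out.println(name + " -> " + locate(Class.forName(name)));
        }
    }
}
```

If two runs of the same job report different locations for a class, or the location is not the JAR you expected, that class is a strong candidate for the conflict.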

Fix the conflict

Set Flink and Hadoop dependencies to `provided` scope

The runtime environment already provides Flink and Hadoop dependencies. Bundling them in your JAR causes conflicts. Set scope to provided for these dependencies:

- DataStream Java

  ```xml
  <dependency>
    <groupId>org.apache.flink</groupId>
    <artifactId>flink-streaming-java_2.11</artifactId>
    <version>${flink.version}</version>
    <scope>provided</scope>
  </dependency>
  ```

- DataStream Scala

  ```xml
  <dependency>
    <groupId>org.apache.flink</groupId>
    <artifactId>flink-streaming-scala_2.11</artifactId>
    <version>${flink.version}</version>
    <scope>provided</scope>
  </dependency>
  ```

- DataSet Java

  ```xml
  <dependency>
    <groupId>org.apache.flink</groupId>
    <artifactId>flink-java</artifactId>
    <version>${flink.version}</version>
    <scope>provided</scope>
  </dependency>
  ```

- DataSet Scala

  ```xml
  <dependency>
    <groupId>org.apache.flink</groupId>
    <artifactId>flink-scala_2.11</artifactId>
    <version>${flink.version}</version>
    <scope>provided</scope>
  </dependency>
  ```

Include connector dependencies with `compile` scope

Connector dependencies are not provided by the runtime and must be bundled. The default Maven scope is compile. The following example uses the Kafka connector:

```xml
<dependency>
  <groupId>org.apache.flink</groupId>
  <artifactId>flink-connector-kafka_2.11</artifactId>
  <version>${flink.version}</version>
</dependency>
```

Exclude indirect Hadoop dependencies

Avoid bundling Hadoop, Log4j, or Realtime Compute for Apache Flink dependencies in your JAR. Handle them based on how they enter your build:

- For direct dependencies, set `scope` to `provided`:

  ```xml
  <dependency>
    <groupId>org.apache.hadoop</groupId>
    <artifactId>hadoop-common</artifactId>
    <scope>provided</scope>
  </dependency>
  ```

- For indirect dependencies, configure `<exclusions>`:

  ```xml
  <dependency>
    <groupId>foo</groupId>
    <artifactId>bar</artifactId>
    <exclusions>
      <exclusion>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-common</artifactId>
      </exclusion>
    </exclusions>
  </dependency>
  ```

Why does my UDTF throw `Could not parse type at position 50: <IDENTIFIER> expected but was <KEYWORD>. Input type string: ROW<...>`?

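The `@DataTypeHint` annotation in your UDTF uses a field name that Flink's type parser reads as a keyword instead of an identifier, so the type string fails to parse during SQL validation. In the error below, position 50 of the type string is where the field `to` starts. Once that field is fixed, other names in the same string, such as `time`, can fail the same way because they are also type keywords.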

Full error:

```
Caused by: org.apache.flink.table.api.ValidationException: Could not parse type at position 50: <IDENTIFIER> expected but was <KEYWORD>. Input type string: ROW<resultId String,pointRange String,from String,to String,type String,pointScope String,userId String,point String,triggerSource String,time String,uuid String>
```

Two options to fix this:

- Rename the field to a name that is not a keyword. For example, rename the `to` field.
- Wrap the field name in backticks (`` ` ``) in the `@DataTypeHint` annotation:

  ```java
  @FunctionHint(
      output = @DataTypeHint("ROW<resultId String,pointRange String,`from` String,`to` String,`type` String,...>"))
  ```
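If you build type strings in code, quoting every field name avoids having to know which names the parser treats as keywords. The helper below is a hypothetical sketch; it assumes that, as in Flink SQL, a backtick inside a quoted identifier is escaped by doubling it.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.StringJoiner;

// Hypothetical helper: builds a ROW<...> type string with every field name in backticks.
class RowTypeStrings {

    static String row(Map<String, String> fields) {
        StringJoiner joiner = new StringJoiner(", ", "ROW<", ">");
        for (Map.Entry<String, String> field : fields.entrySet()) {
            // Assumed escaping rule: a literal backtick is written as two backticks.
            String quoted = "`" + field.getKey().replace("`", "``") + "`";
            joiner.add(quoted + " " + field.getValue());
        }
        return joiner.toString();
    }

    public static void main(String[] args) {
        Map<String, String> fields = new LinkedHashMap<>(); // keeps declaration order
        fields.put("resultId", "STRING");
        fields.put("to", "STRING");
        fields.put("time", "STRING");
        System.out.println(row(fields));
    }
}
```

For these three fields, `main` prints ``ROW<`resultId` STRING, `to` STRING, `time` STRING>``, which you can use as the value of `@DataTypeHint`.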

Why does Flink reject my table with "Invalid primary key. Column 'xxx' is nullable."?

Flink enforces strict primary key semantics: all primary key columns must be explicitly declared as NOT NULL. Even if the column's data contains no null values at runtime, Flink rejects the DDL during parsing if the column definition allows NULL (for example, INT NULL).

Recreate the table and declare each primary key column as NOT NULL:

```sql
CREATE TABLE my_table (
  id INT NOT NULL,
  name STRING,
  PRIMARY KEY (id) NOT ENFORCED
) WITH (...);
```

Why do JSON files open in the browser instead of downloading?

When you download a JSON file from the Artifacts page, it opens in a browser tab instead of saving to disk. This happens because the file in Object Storage Service (OSS) is missing the Content-Disposition: attachment HTTP response header. Without this header, browsers render the content directly.

Two options to fix this:

- Re-upload the file: This bug was fixed for files uploaded from May 2025 onwards. Re-uploading the file applies the fix automatically.
- Update the OSS object metadata manually: Add the following header to the object's metadata. For details, see Manage object metadata.
  - Header name: `Content-Disposition`
  - Header value: `attachment`
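To confirm whether an object already returns the header, you can inspect its response headers. This Java 11+ sketch sends an HTTP HEAD request with `java.net.http.HttpClient`; the object URL passed as the first argument is a placeholder and is assumed to allow HEAD requests (for example, a public-read object). `ContentDispositionCheck` is a hypothetical name.

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.util.Locale;
import java.util.Optional;

// Sketch: check whether an object URL returns Content-Disposition: attachment.
class ContentDispositionCheck {

    // True if the header value tells the browser to download instead of render.
    static boolean isAttachment(Optional<String> headerValue) {
        return headerValue
                .map(v -> v.trim().toLowerCase(Locale.ROOT).startsWith("attachment"))
                .orElse(false);
    }

    public static void main(String[] args) throws IOException, InterruptedException {
        if (args.length != 1) {
            System.err.println("Usage: ContentDispositionCheck <object-url>");
            return;
        }
        HttpRequest request = HttpRequest.newBuilder(URI.create(args[0]))
                .method("HEAD", HttpRequest.BodyPublishers.noBody())
                .build();
        HttpResponse<Void> response = HttpClient.newHttpClient()
                .send(request, HttpResponse.BodyHandlers.discarding());
        Optional<String> header = response.headers().firstValue("Content-Disposition");
        System.out.println("Content-Disposition: " + header.orElse("<missing>"));
        System.out.println(isAttachment(header)
                ? "The browser should download the file."
                : "The browser may render the file inline.");
    }
}
```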