This topic answers frequently asked questions about data synchronization in Realtime Compute for Apache Flink.

How do I handle JSON schema changes when JSON-formatted messages are sent from Kafka to Hologres via Flink?

Traditionally, handling a schema change requires canceling the deployment, modifying the code and the Hologres table schema, redeploying, and restarting. This delays data delivery and is error-prone. Realtime Compute for Apache Flink avoids these steps with three built-in optimizations:

- Self-adaptive schema evolution: When the JSON schema changes, Flink automatically synchronizes the schema change to Hologres without requiring you to cancel the deployment or modify the code.
- Type inference for Kafka JSON messages: Flink automatically infers data types, so you do not need to declare them in the DDL statement of the Kafka table.
- Recursive JSON expansion: Nested fields are automatically expanded. For example, `{"nested": {"col": true}}` is expanded into `nested.col`.

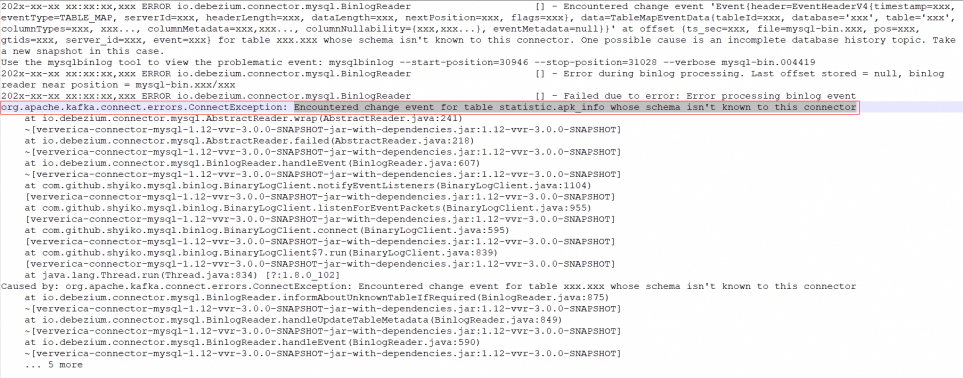
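To make the three optimizations concrete, the following Python sketch mimics them on a single message: it flattens nested fields into dotted column names, infers a type for each field, and reports fields that are missing from a known schema, which is the point at which schema evolution would add columns. This is not Flink's implementation. The function names, the SQL type names, and the type-mapping rules are assumptions chosen for illustration.

```python
import json
from typing import Any, Dict


def flatten(record: Dict[str, Any], prefix: str = "") -> Dict[str, Any]:
    """Recursively expand nested objects into dot-separated column names."""
    columns: Dict[str, Any] = {}
    for key, value in record.items():
        name = f"{prefix}{key}"
        if isinstance(value, dict):
            # Nested object: recurse, using "parent." as the prefix.
            columns.update(flatten(value, prefix=name + "."))
        else:
            columns[name] = value
    return columns


def infer_type(value: Any) -> str:
    """Map a JSON value to a hypothetical SQL type name (illustrative rules only)."""
    # bool must be checked before int because bool is a subclass of int in Python.
    if isinstance(value, bool):
        return "BOOLEAN"
    if isinstance(value, int):
        return "BIGINT"
    if isinstance(value, float):
        return "DOUBLE"
    # Strings, arrays, and nulls fall back to a string type in this sketch.
    return "STRING"


def new_columns(message: str, known_schema: Dict[str, str]) -> Dict[str, str]:
    """Return flattened columns in the message that the known schema lacks."""
    columns = flatten(json.loads(message))
    return {
        name: infer_type(value)
        for name, value in columns.items()
        if name not in known_schema
    }


if __name__ == "__main__":
    schema = {"id": "BIGINT"}
    msg = '{"id": 1, "nested": {"col": true}}'
    print(new_columns(msg, schema))  # {'nested.col': 'BOOLEAN'}
```

In this sketch, a non-empty result from `new_columns` corresponds to the situation in which the sink table would need new columns.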

How do I fix the "Encountered change event for table xxx.xxx whose schema isn't known to this connector" error?

This error occurs when you use CREATE DATABASE AS (CDAS) or CREATE TABLE AS (CTAS) to synchronize new tables.

There are three common causes, each with a corresponding fix:

- Insufficient database permissions: The database account that is configured for the connector in the Flink job does not have permissions to access the required databases.

  Solution: Grant permissions on all databases that the Flink job uses. The required permissions typically include reading, writing, and updating data, creating tables, and creating and updating table schemas. For details, see the topic for the connector that you use.

- `'debezium.snapshot.mode'='never'` is configured: Debezium reads from the beginning of the binary log, but the table schema recorded at that point no longer matches the current table schema.

  Solution: Configure `'debezium.inconsistent.schema.handling.mode' = 'warn'` instead of `'debezium.snapshot.mode'='never'`.

- A schema change that Debezium cannot handle, such as `DEFAULT (now())`.

  Solution: Check the WARN log entries of `io.debezium.connector.mysql.MySqlSchema` to identify the specific change that causes the issue.

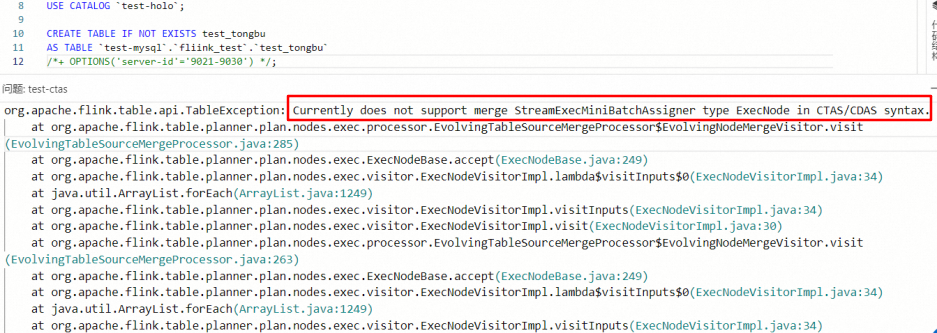
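If the logs are long, a small filter can isolate the relevant entries. The following Python sketch prints the lines of a plain-text log file that contain both the `io.debezium.connector.mysql.MySqlSchema` logger name and the `WARN` level. It assumes that the level appears as a standalone `WARN` token in each line; adjust the match if your log layout differs. The script name, function name, and file path are hypothetical.

```python
import sys
from typing import Iterable, List

# Logger name from this FAQ; the layout of each log line is an assumption.
SCHEMA_LOGGER = "io.debezium.connector.mysql.MySqlSchema"


def schema_warnings(lines: Iterable[str]) -> List[str]:
    """Return WARN-level lines written by the Debezium MySqlSchema logger."""
    matches: List[str] = []
    for line in lines:
        # Assumes the log level appears as a standalone "WARN" token in the line.
        if SCHEMA_LOGGER in line and "WARN" in line.split():
            matches.append(line.rstrip("\n"))
    return matches


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage (hypothetical file name): python find_schema_warnings.py taskmanager.log
    with open(sys.argv[1], encoding="utf-8", errors="replace") as log_file:
        for match in schema_warnings(log_file):
            print(match)
```

The matching lines still have to be read manually to find the unsupported change, such as a `DEFAULT (now())` clause.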

How do I fix the "Currently does not support merge StreamExecMiniBatchAssigner type ExecNode in CTAS/CDAS syntax" error?

This error occurs when you deploy or start an SQL deployment that contains CTAS or CDAS statements while `'table.exec.mini-batch.enabled' = 'true'` is configured. CTAS and CDAS statements do not support MiniBatch, so remove the MiniBatch configuration or disable MiniBatch by using one of the following methods, depending on whether the draft has been deployed.

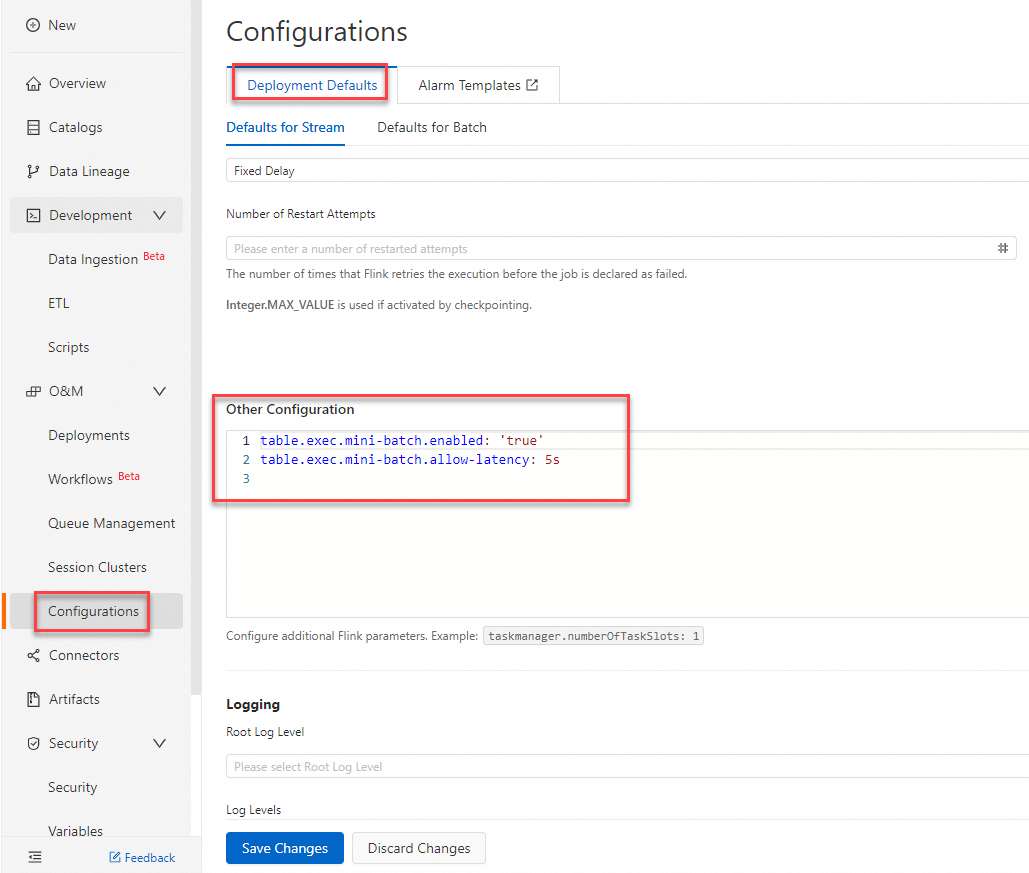
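To catch this combination before you deploy, you can check a draft ahead of time. The following Python sketch flags SQL text that contains a CTAS or CDAS statement when the configuration sets `table.exec.mini-batch.enabled` to `true`. The regular expression is a heuristic that assumes the `AS TABLE`, `AS SELECT`, and `AS DATABASE` forms and is not a SQL parser. Passing the configuration as a plain dictionary, and the function and table names, are assumptions for illustration.

```python
import re
from typing import Dict

# Heuristic match for CTAS ("CREATE TABLE ... AS TABLE | AS SELECT") and
# CDAS ("CREATE DATABASE ... AS DATABASE") statements within one statement.
CTAS_OR_CDAS = re.compile(
    r"\bCREATE\s+(?:TABLE|DATABASE)\b[^;]*?\bAS\s+(?:TABLE|DATABASE|SELECT)\b",
    re.IGNORECASE,
)


def has_minibatch_conflict(sql: str, config: Dict[str, str]) -> bool:
    """Return True if the SQL uses CTAS/CDAS while MiniBatch is enabled in config."""
    # MiniBatch is treated as disabled when the option is absent.
    enabled = config.get("table.exec.mini-batch.enabled", "false")
    minibatch_on = enabled.strip().lower() == "true"
    return minibatch_on and CTAS_OR_CDAS.search(sql) is not None


if __name__ == "__main__":
    draft = "CREATE TABLE IF NOT EXISTS sink_db.orders AS TABLE src_db.orders;"
    print(has_minibatch_conflict(draft, {"table.exec.mini-batch.enabled": "true"}))  # True
```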

If the SQL draft has not been deployed:

- In the left-side navigation pane of the development console, choose O&M > Configurations.
- On the Configurations pane, select the Deployment Defaults tab.
- In the Other Configuration section, remove the MiniBatch configurations or set `table.exec.mini-batch.enabled` to `false`.
- Click Save Changes.
- Deploy the draft again.

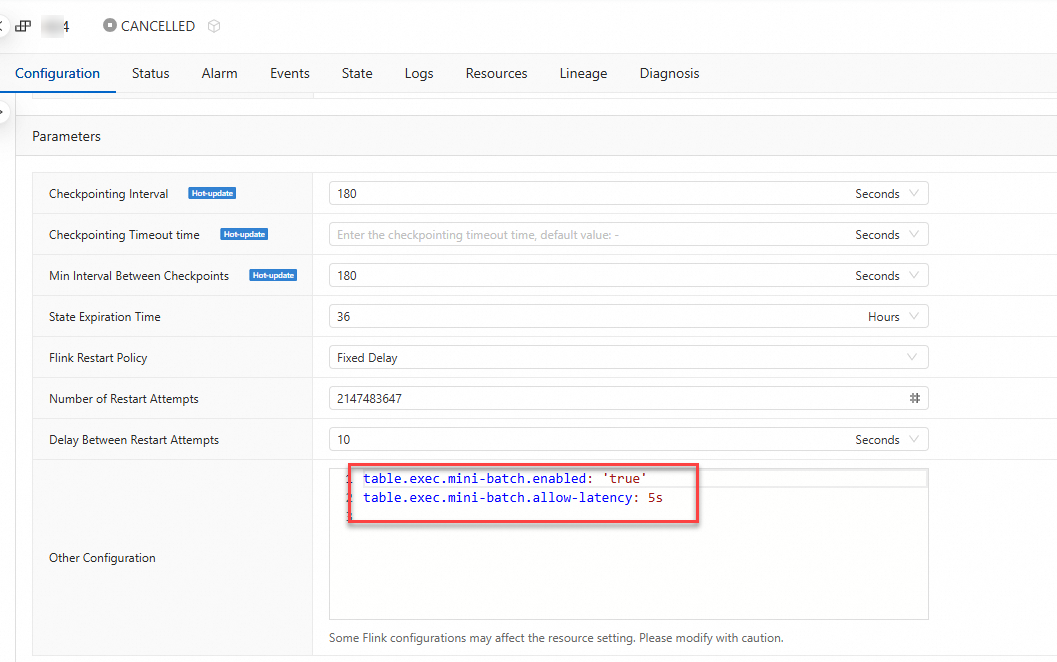

If the SQL draft has already been deployed (by skipping validation):

- In the left-side navigation pane of the development console, choose O&M > Deployments.
- Click the name of your SQL deployment.
- Select the Configuration tab.
- In the Parameters section, click Edit.
- In the Other Configuration field, remove the MiniBatch configurations or set `table.exec.mini-batch.enabled` to `false`.
- Restart the deployment.
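If you keep the Other Configuration content in version control or manage many deployments, the manual edit in both procedures can be scripted. The following Python sketch removes every `table.exec.mini-batch.*` entry from a block of configuration text. It assumes one `key: value` pair per line, which is an assumption about the field's format; the function name is hypothetical, and pasting the result back into the console remains a manual step.

```python
from typing import List

MINIBATCH_PREFIX = "table.exec.mini-batch."


def strip_minibatch(other_config: str) -> str:
    """Drop MiniBatch entries from "key: value" configuration text."""
    kept: List[str] = []
    for line in other_config.splitlines():
        key = line.split(":", 1)[0].strip()
        # Remove every table.exec.mini-batch.* option; keep all other lines unchanged.
        if key.startswith(MINIBATCH_PREFIX):
            continue
        kept.append(line)
    return "\n".join(kept)


if __name__ == "__main__":
    text = (
        "table.exec.mini-batch.enabled: true\n"
        "table.exec.mini-batch.allow-latency: 2s\n"
        "table.exec.mini-batch.size: 5000\n"
        "parallelism.default: 4"
    )
    print(strip_minibatch(text))  # parallelism.default: 4
```

Removing the entries, rather than rewriting `table.exec.mini-batch.enabled` to `false`, matches the first option in the steps and leaves MiniBatch at its default, disabled state.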