When you debug a Realtime Compute for Apache Flink program that uses connectors in IntelliJ IDEA, the default connector JAR omits runtime classes. As a result, the program fails at runtime with a ClassNotFoundException, for example:

    Caused by: java.lang.ClassNotFoundException: com.alibaba.ververica.connectors.odps.newsource.split.OdpsSourceSplitSerializer
        at java.net.URLClassLoader.findClass(URLClassLoader.java:387)
        at java.lang.ClassLoader.loadClass(ClassLoader.java:418)
        at sun.misc.Launcher$AppClassLoader.loadClass(Launcher.java:355)
        at java.lang.ClassLoader.loadClass(ClassLoader.java:351)

To fix this, add the connector uber JAR to the classpath and configure the ClassLoader JAR in IntelliJ IDEA.

Before you begin

- Remove `pipeline.classpaths` before deploying to the cloud. This configuration is for local debugging only. Leaving it in the compiled JAR causes errors when you upload to Realtime Compute for Apache Flink.

- MaxCompute connector on framework versions older than `1.17-vvr-8.0.11-1`: Use the `1.17-vvr-8.0.11-1` uber JAR for local debugging. When you build the JAR for cloud deployment, include an older version of the connector uber JAR and remove any connector options that are only supported by newer framework versions.

- MySQL connector: In addition to the steps below, configure Maven dependencies as described in Debug MySQL DataStream.

- Network connectivity: Your Flink application must reach the upstream and downstream systems. Either run those services locally on the same network, or verify that Flink can reach your cloud services over the Internet and add your device's public IP address to those services' whitelists.
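Before you start a debug session, a plain TCP probe run from the same machine can confirm the network connectivity requirement above. The following is a minimal sketch that uses only the JDK. The class name `ReachabilityCheck`, the method `canConnect`, and the default host and port are hypothetical placeholders, not part of Flink or any connector. A successful TCP connection shows only that the port is reachable; it does not prove that whitelists or credentials are configured correctly.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

// Hypothetical helper for illustration; not part of Flink or any connector.
class ReachabilityCheck {

    /** Returns true if a TCP connection to host:port succeeds within timeoutMillis. */
    static boolean canConnect(String host, int port, int timeoutMillis) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMillis);
            return true;
        } catch (IOException e) {
            // Covers refused connections, timeouts, and hosts that cannot be resolved.
            return false;
        }
    }

    public static void main(String[] args) {
        // Placeholder endpoint: pass the host and port of your own upstream or downstream service.
        String host = args.length > 0 ? args[0] : "localhost";
        int port = args.length > 1 ? Integer.parseInt(args[1]) : 3306;
        System.out.println(host + ":" + port + " reachable: " + canConnect(host, port, 3000));
    }
}
```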

Step 1: Add the connector uber JAR

The default connector JAR is a thin JAR that excludes third-party runtime classes. The uber JAR bundles all runtime dependencies, which is what you need for local debugging.

Download the connector uber JAR from the Maven central repository. For example, if you are using the MaxCompute connector at version `1.17-vvr-8.0.11-1`, download `ververica-connector-odps-1.17-vvr-8.0.11-1-uber.jar` from the directory for that version.
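To confirm that a downloaded JAR actually bundles the class named in the exception, you can look for the matching entry in the archive. This is a minimal sketch using the JDK's `java.util.jar` API. The class name `JarClassCheck` and the method `containsClass` are hypothetical, and the check only looks for a `.class` entry; it does not verify that the class loads correctly.

```java
import java.io.IOException;
import java.util.jar.JarFile;

// Hypothetical helper for illustration; not part of Flink or any connector.
class JarClassCheck {

    /** Returns true if the JAR has a .class entry for the fully qualified class name. */
    static boolean containsClass(String jarPath, String className) throws IOException {
        // com.example.Foo is stored in the archive as com/example/Foo.class
        String entryName = className.replace('.', '/') + ".class";
        try (JarFile jar = new JarFile(jarPath)) {
            return jar.getEntry(entryName) != null;
        }
    }

    public static void main(String[] args) throws IOException {
        if (args.length != 2) {
            System.err.println("usage: JarClassCheck <path-to-jar> <fully.qualified.ClassName>");
            return;
        }
        System.out.println(args[1] + " present: " + containsClass(args[0], args[1]));
    }
}
```

For the example at the top of this page, the second argument would be `com.alibaba.ververica.connectors.odps.newsource.split.OdpsSourceSplitSerializer`.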

After downloading, set the `pipeline.classpaths` parameter to the local path of the uber JAR when you obtain the execution environment.

| Path format | Example |
|---|---|
| Single JAR | `file:///path/to/a-uber.jar` |
| Multiple JARs (semicolon-separated) | `file:///path/to/a-uber.jar;file:///path/to/b-uber.jar` |
| Windows path (include the drive letter) | `file:///D:/path/to/a-uber.jar;file:///E:/path/to/b-uber.jar` |
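The formats in the table can also be produced from plain local paths instead of being typed by hand. The sketch below is one way to do that with simple string handling. `PipelineClasspaths`, `toFileUrl`, and `join` are hypothetical names, and the code assumes absolute paths without characters that need URL encoding, such as spaces.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper for illustration; not part of Flink or any connector.
class PipelineClasspaths {

    /** Converts one absolute local path into a file URL in the formats shown in the table. */
    static String toFileUrl(String absolutePath) {
        String normalized = absolutePath.replace('\\', '/');
        // Unix paths already start with "/"; Windows paths start with a drive letter.
        return normalized.startsWith("/") ? "file://" + normalized : "file:///" + normalized;
    }

    /** Joins the file URLs of all given paths with semicolons. */
    static String join(String... absolutePaths) {
        List<String> urls = new ArrayList<>();
        for (String path : absolutePaths) {
            urls.add(toFileUrl(path));
        }
        return String.join(";", urls);
    }

    public static void main(String[] args) {
        System.out.println(join("/path/to/a-uber.jar", "/path/to/b-uber.jar"));
        System.out.println(join("D:\\path\\to\\a-uber.jar", "E:/path/to/b-uber.jar"));
    }
}
```

The returned string is intended to be used as the value of `pipeline.classpaths`.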

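For a DataStream program:

    import org.apache.flink.configuration.Configuration;
    import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

    // Local debugging only: point Flink at the downloaded uber JAR.
    Configuration conf = new Configuration();
    conf.setString("pipeline.classpaths", "file://<absolute-path-to-uber-jar>");
    StreamExecutionEnvironment env =
        StreamExecutionEnvironment.getExecutionEnvironment(conf);

For a Table API program: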

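    import org.apache.flink.configuration.Configuration;
    import org.apache.flink.table.api.EnvironmentSettings;
    import org.apache.flink.table.api.TableEnvironment;

    Configuration conf = new Configuration();
    conf.setString("pipeline.classpaths", "file://<absolute-path-to-uber-jar>");
    EnvironmentSettings envSettings =
        EnvironmentSettings.newInstance().withConfiguration(conf).build();
    TableEnvironment tEnv = TableEnvironment.create(envSettings);

Replace `<absolute-path-to-uber-jar>` with the local path of the downloaded uber JAR. The resulting value must match one of the formats in the table above; on Windows, that means `file:///` followed by the drive letter.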

Step 2: Configure the ClassLoader JAR in IntelliJ IDEA

The ClassLoader JAR enables Flink to load the connector's runtime classes during local execution. Download the JAR that matches your Ververica Runtime (VVR) version:

| VVR version | Download |
|---|---|
| VVR 6.x | ververica-classloader-1.15-vvr-6.0-SNAPSHOT.jar |
| VVR 8.x | ververica-classloader-1.17-vvr-8.0-SNAPSHOT.jar |
| VVR 11.x | ververica-classloader-1.20-vvr-11.2-SNAPSHOT.jar |

After downloading, add the JAR to the run configuration in IntelliJ IDEA:

-

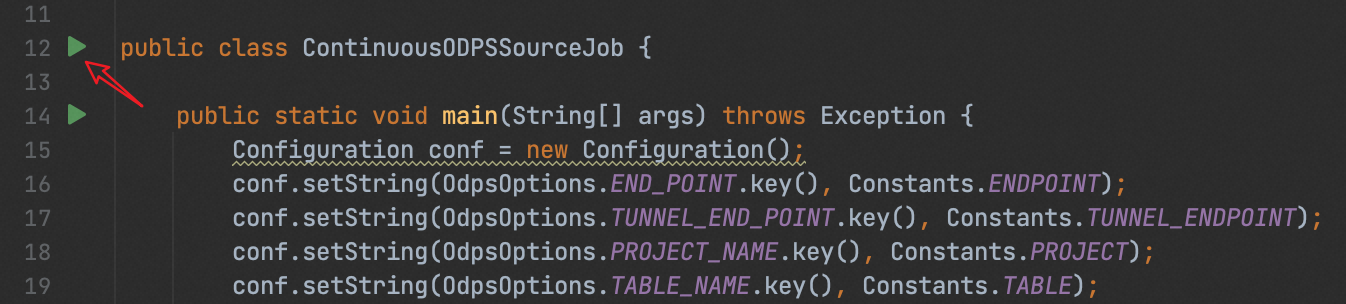

Open the program file in IntelliJ IDEA.

-

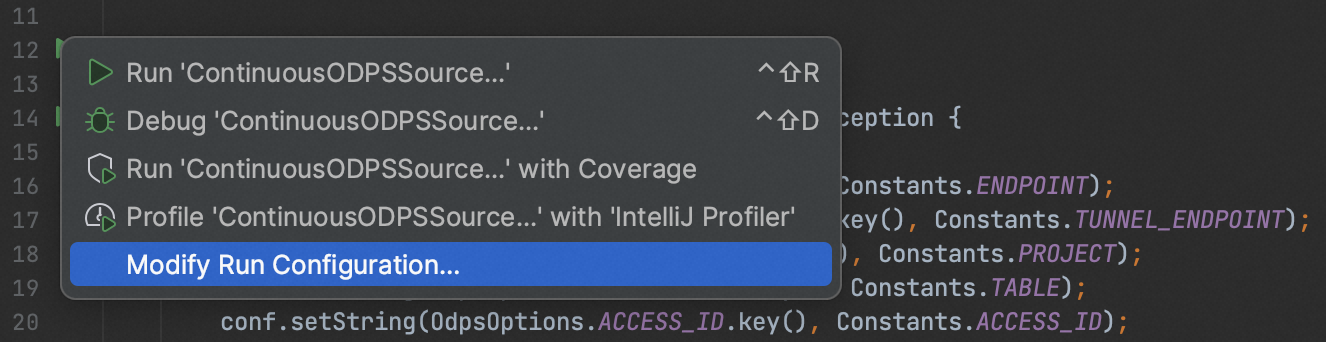

Click the green icon to the left of the entry class to expand the menu.

-

Select Modify Run Configuration....

-

Click Modify options.

-

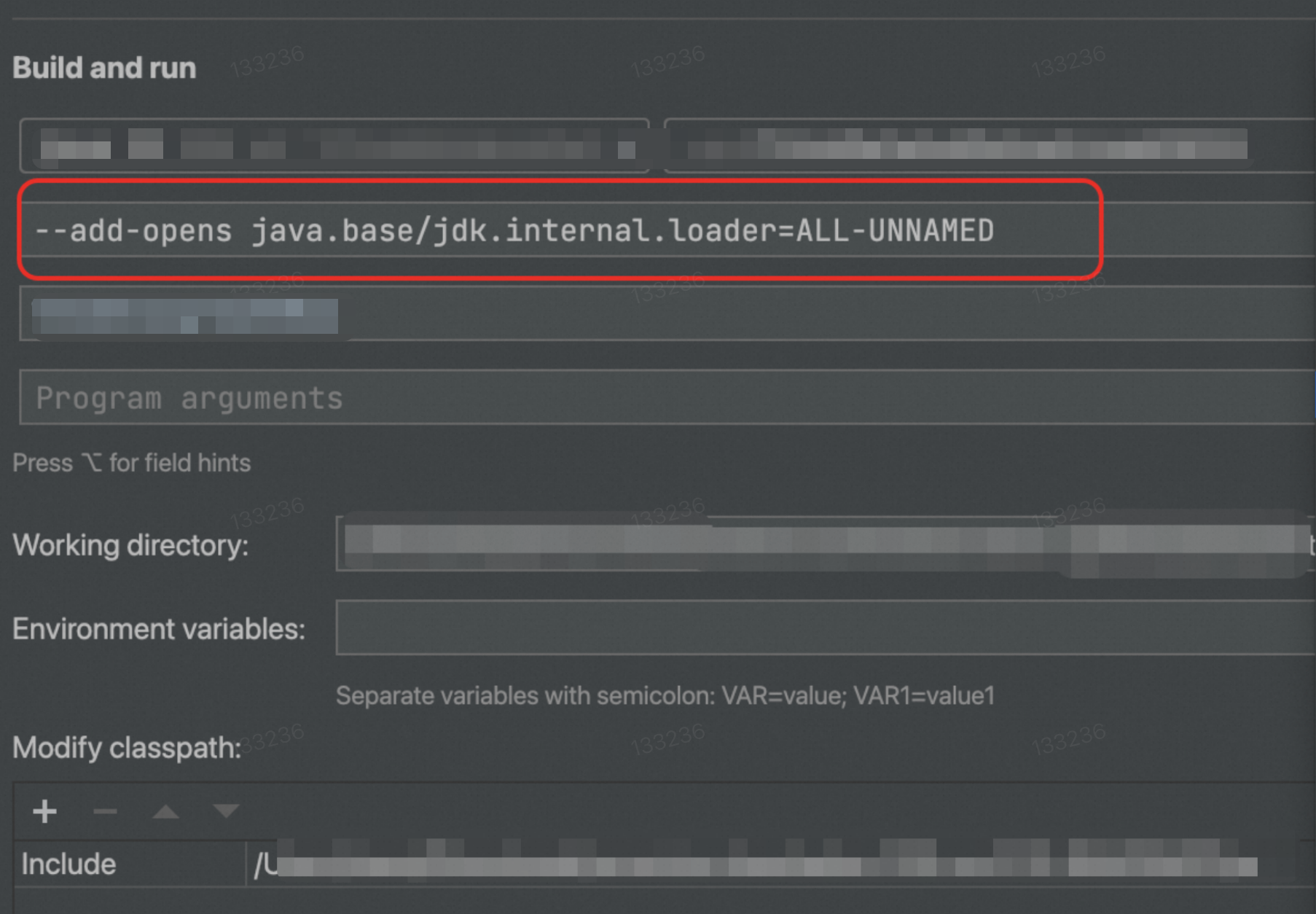

In the Add Run Options drop-down list, select Modify classpath under the Java section. The Modify classpath section appears.

-

In the Modify classpath section, click +, choose Include, and select the downloaded ClassLoader JAR.

-

(Required for VVR 11.1 and later) Add

--add-opens java.base/jdk.internal.loader=ALL-UNNAMEDas a JVM option.

-

Save the configuration.

If an error indicates missing common Flink classes, click Modify options and select Add dependencies with "provided" scope to classpath.
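If you are unsure whether the `--add-opens` JVM option from the steps above took effect, the Java module API can report it at runtime. The following is a minimal sketch that requires Java 9 or later. `AddOpensCheck` and `isOpenToThisModule` are hypothetical names, and the sketch assumes it runs from the classpath (the unnamed module) under the same run configuration as your job.

```java
// Hypothetical helper for illustration; not part of Flink or any connector.
// Requires Java 9 or later because it uses java.lang.Module.
class AddOpensCheck {

    /** Returns true if java.base has opened the given package to this class's module. */
    static boolean isOpenToThisModule(String packageName) {
        Module javaBase = Object.class.getModule();
        Module caller = AddOpensCheck.class.getModule();
        return javaBase.isOpen(packageName, caller);
    }

    public static void main(String[] args) {
        System.out.println(
            "jdk.internal.loader opened: " + isOpenToThisModule("jdk.internal.loader"));
    }
}
```

When the option is set for the run configuration, the program is expected to report that the package is opened; otherwise the option was probably not applied to that configuration.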

Debug Table API jobs (VVR 11.1 and later)

Starting with VVR 11.1, Realtime Compute for Apache Flink connectors are no longer fully compatible with Apache Flink's flink-table-common package. Running Table API jobs may produce:

    java.lang.ClassNotFoundException: org.apache.flink.table.factories.OptionUpgradabaleTableFactory

To fix this, update your `pom.xml`: replace the `org.apache.flink:flink-table-common` dependency with `com.alibaba.ververica:flink-table-common`, and set its version to the one that matches your VVR version.
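After you change the dependency and reload the Maven project, a class lookup can confirm that the missing class is now visible. This is a minimal sketch; `FactoryClassCheck` and `isOnClasspath` are hypothetical names, and the result is meaningful only if you run it in the same module and run configuration as the job.

```java
// Hypothetical helper for illustration; not part of Flink or any connector.
class FactoryClassCheck {

    /** Returns true if the class can be located by this class's class loader. */
    static boolean isOnClasspath(String className) {
        try {
            // initialize=false: only locate the class, do not run its static initializers.
            Class.forName(className, false, FactoryClassCheck.class.getClassLoader());
            return true;
        } catch (ClassNotFoundException | LinkageError e) {
            return false;
        }
    }

    public static void main(String[] args) {
        // Class name copied from the exception message above.
        String name = "org.apache.flink.table.factories.OptionUpgradabaleTableFactory";
        System.out.println(name + " on classpath: " + isOnClasspath(name));
    }
}
```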