Apache Flink Change Data Capture (CDC) connectors are open-source connectors released under the Apache License 2.0. This topic explains which CDC connectors are available, how to use them in SQL and JAR deployments, and how to rename a connector to avoid name conflicts.

The open-source CDC connectors have a different service level agreement (SLA) from the commercially released CDC connectors in Realtime Compute for Apache Flink. If you encounter issues such as configuration failure, deployment failure, or data loss, find solutions in the open-source community. Alibaba Cloud does not provide technical support for these connectors, and you are responsible for guaranteeing the SLA.

Available CDC connectors

The following connectors are built into Realtime Compute for Apache Flink with full commercial support. Use these instead of the open-source equivalents when available:

| Connector | Documentation |
|---|---|
| MySQL CDC | MySQL CDC connector |
| PostgreSQL CDC | PostgreSQL CDC connector |
| MongoDB CDC connector (public preview) | MongoDB CDC connector |

The following connectors are available only as open-source CDC connectors for Apache Flink. They are not commercially released or supported in Realtime Compute for Apache Flink, and you must manage them independently:

| Connector | Documentation |
|---|---|
| OceanBase CDC | OceanBase CDC |
| Oracle CDC | Oracle CDC |
| SQL Server CDC | SQL Server CDC |
| TiDB CDC | TiDB CDC |
| Db2 CDC | Db2 CDC |
| Vitess CDC | Vitess CDC |

If the default name of an open-source CDC connector conflicts with a built-in connector or an existing custom connector in Realtime Compute for Apache Flink, rename it to avoid conflicts. For the SQL Server CDC and Db2 CDC connectors, rename the connector in the community source and repackage it. For example, rename sqlserver-cdc to sqlserver-cdc-test. For instructions, see Rename a connector.

Version mappings between CDC connectors for Apache Flink and VVR

Select the CDC connector release version that matches your Ververica Runtime (VVR) version.

| VVR version | CDC connector release version |
|---|---|
| vvr-4.0.0-flink-1.13 to vvr-4.0.6-flink-1.13 | release-1.4 |
| vvr-4.0.7-flink-1.13 to vvr-4.0.9-flink-1.13 | release-2.0 |
| vvr-4.0.10-flink-1.13 to vvr-4.0.12-flink-1.13 | release-2.1 |
| vvr-4.0.13-flink-1.13 to vvr-4.0.14-flink-1.13 | release-2.2 |
| vvr-4.0.15-flink-1.13 to vvr-6.0.2-flink-1.15 | release-2.3 |
| vvr-6.0.2-flink-1.15 to vvr-8.0.5-flink-1.17 | release-2.4 |
| vvr-8.0.1-flink-1.17 to vvr-8.0.7-flink-1.17 | release-3.0 |
| vvr-8.0.11-flink-1.17 to vvr-11.1-jdk11-flink-1.20 | release-3.4 |

Use an Apache Flink CDC connector

SQL deployments

-

Go to the Apache Flink CDC connectors page and select the desired release version.

NoteUse the latest stable version. To avoid compatibility issues, match the CDC release version to your VVR version. See Version mappings between CDC connectors for Apache Flink and VVR.

-

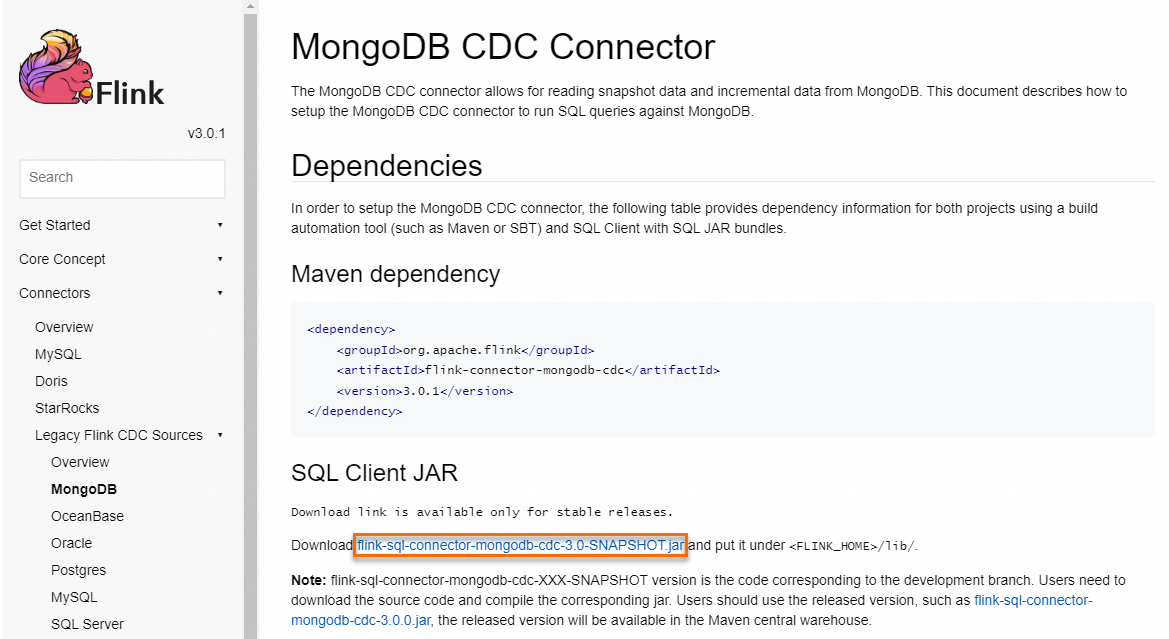

In the left-side table of contents, click Connectors and select the connector you want. On the connector page, go to the SQL Client JAR section and click the download link to get the JAR file.

NoteYou can also download the JAR from the Maven repository.

-

Log on to the Realtime Compute for Apache Flink management console.

-

Click Console in the Actions column of your workspace. The development console opens.

-

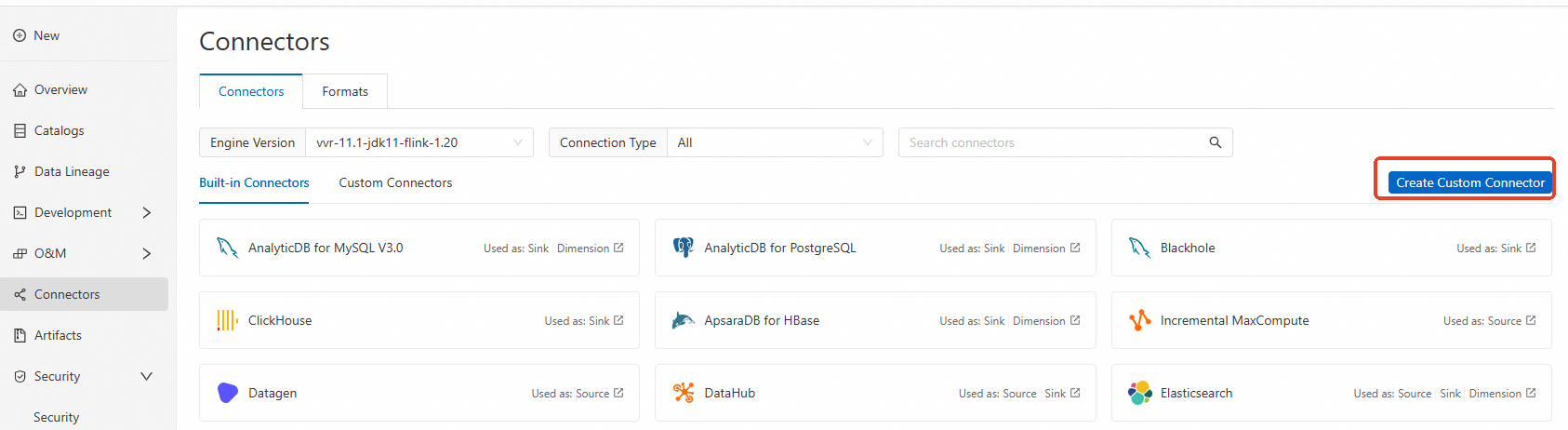

In the left-side navigation pane, click Connectors.

-

On the Connectors page, click Create Custom Connector.

-

In the dialog box, upload the JAR file you downloaded in step 2. For details, see Manage custom connectors.

-

Write your SQL job and set the

connectoroption to the name of the CDC connector. For the options supported by each connector, see CDC connectors for Apache Flink.
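As an illustration of the last step, the following sketch shows what a SQL job that reads from a custom Oracle CDC connector might look like. The table and column names, host, credentials, and the print sink are placeholders. The WITH options follow the community Oracle CDC documentation, and option names can differ between releases, so verify them against the documentation for the release you uploaded.

```sql
-- Hypothetical source table backed by the uploaded open-source Oracle CDC connector.
CREATE TEMPORARY TABLE products_source (
  ID INT NOT NULL,
  NAME STRING,
  DESCRIPTION STRING,
  WEIGHT DECIMAL(10, 3),
  PRIMARY KEY (ID) NOT ENFORCED
) WITH (
  'connector' = 'oracle-cdc',        -- must match the name of the custom connector
  'hostname' = '<oracle-host>',      -- placeholder
  'port' = '1521',
  'username' = '<user>',             -- placeholder
  'password' = '<password>',         -- placeholder
  'database-name' = '<database>',    -- placeholder
  'schema-name' = '<schema>',        -- placeholder
  'table-name' = 'PRODUCTS'
);

-- Placeholder sink that prints the change stream to the TaskManager output.
CREATE TEMPORARY TABLE products_print (
  ID INT,
  NAME STRING,
  DESCRIPTION STRING,
  WEIGHT DECIMAL(10, 3)
) WITH (
  'connector' = 'print'
);

INSERT INTO products_print
SELECT ID, NAME, DESCRIPTION, WEIGHT FROM products_source;
```

If the job fails to find the connector at startup, a mismatch between the connector value and the name registered for the custom connector is a likely cause.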

JAR deployments

Before using an open-source CDC connector in a JAR deployment, understand the difference between the two artifact types:

| Artifact | Contents | When to use |
|---|---|---|
| flink-connector-xxx | Connector code only; dependencies must be declared separately | When you need fine-grained dependency control |
| flink-sql-connector-xxx | All dependencies bundled into a single JAR | When creating a custom connector in the development console |

Add the Maven dependency

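Declare the following dependency in your pom.xml file. The com.ververica group ID applies to releases up to release-3.0; from release-3.1 onward, the community publishes the connectors under the org.apache.flink group ID, so check the coordinates for the release you use: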

    <dependency>
        <groupId>com.ververica</groupId>
        <artifactId>flink-connector-${Name of the desired connector}-cdc</artifactId>
        <version>${Version of the connector for Apache Flink}</version>
    </dependency>

The Maven repository contains only release versions, not snapshot versions. To use a snapshot version, clone the GitHub repository and compile the JAR locally.

Use the connector in code

Import the connector's implementation class in your code and use it as described in that connector's documentation.

Rename a connector

Rename a connector when its default name conflicts with a built-in connector or an existing custom connector. The following example shows how to rename the SQL Server CDC connector from sqlserver-cdc to sqlserver-cdc-test.

1. Clone the GitHub repository and switch to the branch for the version you want to use.

2. Change the factory identifier in the SQL Server CDC connector's factory class (com.ververica.cdc.connectors.sqlserver.table.SqlServerTableFactory):

       @Override
       public String factoryIdentifier() {
           return "sqlserver-cdc-test";
       }

3. Compile and package the flink-sql-connector-sqlserver-cdc submodule.

4. In the development console, go to the left-side navigation pane, click Connectors, then click Create Custom Connector. Upload the JAR file packaged in step 3. For details, see Manage custom connectors.

5. In your SQL job, set the connector parameter to sqlserver-cdc-test.
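For illustration, a source table that uses the renamed connector might be declared as follows. Only the connector value changes as a result of the rename. The table, columns, and connection values are placeholders, and the remaining option names are assumed from the community SQL Server CDC documentation for recent releases, so check them against the release you built.

```sql
-- Hypothetical source table that uses the renamed SQL Server CDC connector.
CREATE TEMPORARY TABLE orders_source (
  id INT,
  order_date DATE,
  purchaser INT,
  quantity INT,
  product_id INT,
  PRIMARY KEY (id) NOT ENFORCED
) WITH (
  'connector' = 'sqlserver-cdc-test',  -- the new factory identifier
  'hostname' = '<sqlserver-host>',     -- placeholder
  'port' = '1433',
  'username' = '<user>',               -- placeholder
  'password' = '<password>',           -- placeholder
  'database-name' = '<database>',      -- placeholder
  'table-name' = 'dbo.orders'          -- placeholder; format may vary by release
);
```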