Overview

This feature is currently in invitational preview. To use it, fill out the invitational preview request form.

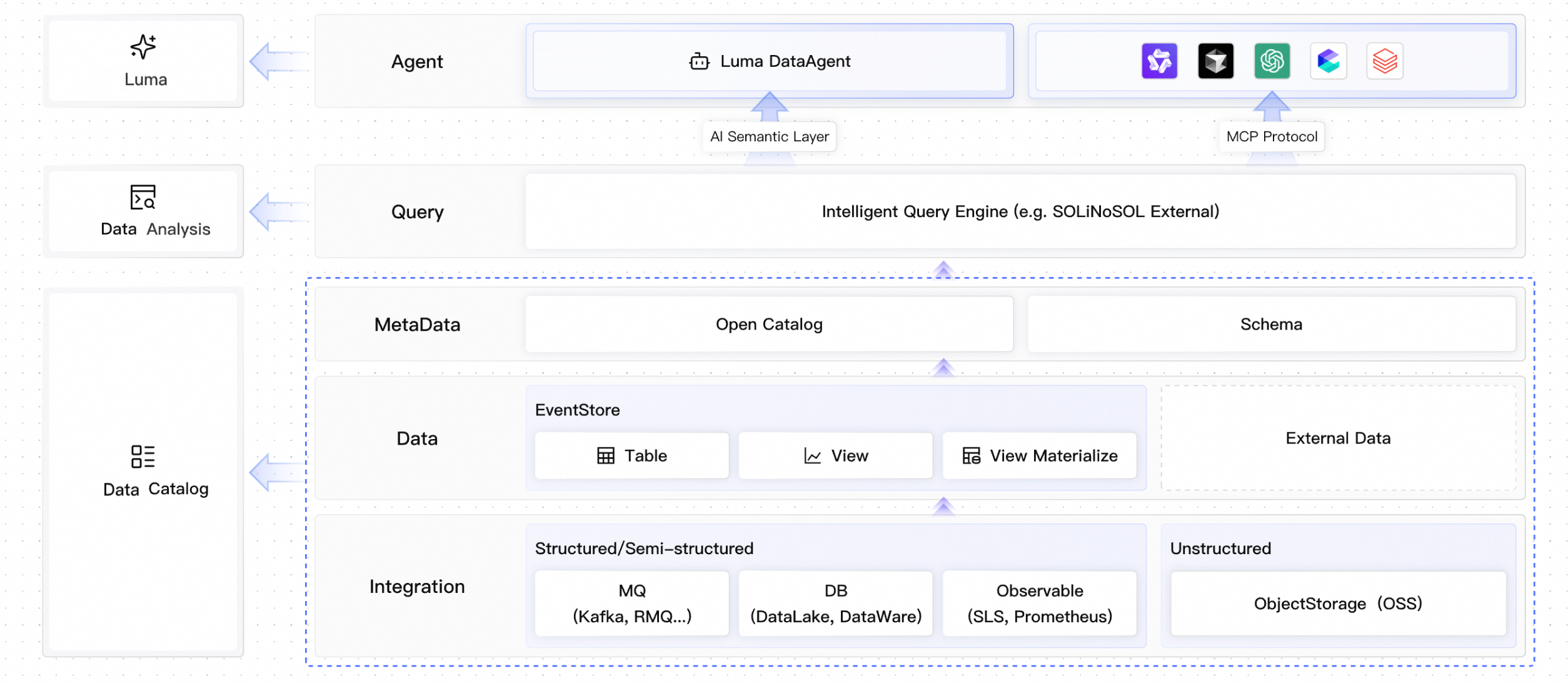

EventHouse is EventBridge’s cloud-native event lakehouse. It handles storage, governance, and intelligent analytics for event data.

The EventBridge event bus handles event routing and delivery. EventHouse builds on it to address what happens to event data after it is stored. EventHouse unifies structured, semi-structured, and unstructured data from message queues (such as Kafka and RocketMQ), relational databases (such as MySQL), and object storage (such as OSS) into a standard event model. With its built-in Open Catalog and AI semantic layer, EventHouse manages heterogeneous data sources through Zero-ETL and supports real-time analysis through SQL queries or AI agents.

Core components

EventHouse consists of three core components. Each component works independently, and the three work closely together:

| Component | Location | Core capabilities |
| --- | --- | --- |
| Metadata management center | Data catalog | Multi-source metadata registration, schema evolution management, data lineage tracking, fine-grained access control |
| Compute engine layer | Data analysis | Unified stream and batch SQL, federated query, materialized view, real-time anomaly detection |
| AI analytics layer | Data intelligence (Luma) | AI semantic layer, MCP protocol integration, autonomous DataAgent analysis, natural language querying |

Core value

Zero-ETL (seamless data integration)

Map external data sources (such as RDS and OSS) directly. Run federated queries without moving data into EventHouse. This reduces data latency and storage cost.

Unified governance

Use Open Catalog to manage metadata and track lineage, including for “dark data” from message queues that lack schema definitions, and break down data silos.

Agentic analytics

EventHouse natively integrates MCP (Model Context Protocol), so AI agents can understand event data structures and run analytics from natural language questions.

Data catalog

The data catalog is EventHouse’s metadata management center. It manages metadata, schema definitions, access permissions, and data lineage for all connected data sources.

Unified metadata management

Multi-source mapping: The catalog automatically discovers and registers metadata from data sources such as Kafka, RocketMQ, and RDS.

Schema evolution: The catalog automatically infers and manages schema versions for event data. When upstream fields change, it maintains compatibility so that downstream analysis tasks do not break.

Data lineage tracking: The catalog tracks events across their full lifecycle, from production (producer) through storage (eventstore) to analysis, to support troubleshooting and impact assessment.
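The compatibility idea behind schema evolution can be sketched as follows. This is a minimal illustration, not EventHouse's implementation: it assumes flat JSON payloads, a hypothetical type vocabulary (`long`, `string`, and so on), and an assumed rule that new fields may be added while existing fields must keep their type. `infer_schema`, `is_backward_compatible`, and `SchemaRegistry` are hypothetical names.

```python
import json
from typing import Any


def type_name(value: Any) -> str:
    """Map a decoded JSON value to a simple column type name (hypothetical vocabulary)."""
    if isinstance(value, bool):  # check bool before int: bool is a subclass of int
        return "boolean"
    if isinstance(value, int):
        return "long"
    if isinstance(value, float):
        return "double"
    if isinstance(value, str):
        return "string"
    if value is None:
        return "null"
    return "json"  # nested objects and arrays are kept as opaque JSON


def infer_schema(events: list[str]) -> dict[str, str]:
    """Infer a flat field -> type mapping from JSON event payloads."""
    schema: dict[str, str] = {}
    for raw in events:
        for name, value in json.loads(raw).items():
            seen, new = schema.get(name), type_name(value)
            if seen is None or seen == "null":
                schema[name] = new
            elif new not in ("null", seen):
                schema[name] = "json"  # assumed rule: conflicting types widen to JSON
    return schema


def is_backward_compatible(old: dict[str, str], new: dict[str, str]) -> bool:
    """Assumed rule: fields may be added, but existing fields must keep their type."""
    return all(new.get(name) == kind for name, kind in old.items())


class SchemaRegistry:
    """Keeps an ordered list of schema versions for one event source."""

    def __init__(self) -> None:
        self.versions: list[dict[str, str]] = []

    def register(self, schema: dict[str, str]) -> int:
        """Store the schema if it is new and compatible; return the current version number."""
        if self.versions:
            latest = self.versions[-1]
            if schema == latest:
                return len(self.versions)
            if not is_backward_compatible(latest, schema):
                raise ValueError(f"incompatible schema change: {latest} -> {schema}")
        self.versions.append(schema)
        return len(self.versions)
```

Under this rule, adding a `region` field produces version 2, while changing `amount` from a number to a string (and dropping `region`) is rejected before it can break downstream queries.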

Open ecosystem compatibility

Open Catalog supports open table formats such as Iceberg, Hudi, and Delta Lake, so you avoid vendor lock-in and can choose your compute engine freely.

Permissions and security

The catalog provides fine-grained access control lists (ACLs) at the database, table, and column levels.
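A hierarchical check is one common way to evaluate database-, table-, and column-level grants. The sketch below assumes that a grant on an enclosing scope covers everything beneath it; the `Acl` class, its methods, and the principal names are hypothetical, and EventHouse's real permission model and API may differ.

```python
from dataclasses import dataclass, field


@dataclass
class Acl:
    """Hypothetical ACL store: principal -> set of granted scope paths."""
    grants: dict[str, set[tuple[str, ...]]] = field(default_factory=dict)

    def grant(self, principal: str, *path: str) -> None:
        """Grant read access on (database,), (database, table), or (database, table, column)."""
        if not 1 <= len(path) <= 3:
            raise ValueError("path must be database[, table[, column]]")
        self.grants.setdefault(principal, set()).add(path)

    def can_read(self, principal: str, db: str, table: str, column: str) -> bool:
        held = self.grants.get(principal, set())
        # A grant on the database or table also covers every column beneath it.
        return any(scope in held for scope in ((db,), (db, table), (db, table, column)))

    def readable_columns(self, principal: str, db: str, table: str, columns: list[str]) -> list[str]:
        """Column-level filtering, for example to hide sensitive fields from a query result."""
        return [c for c in columns if self.can_read(principal, db, table, c)]


acl = Acl()
acl.grant("analyst", "shop", "orders", "order_id")  # column-level grants
acl.grant("analyst", "shop", "orders", "amount")
acl.grant("admin", "shop")                          # database-level grant
print(acl.readable_columns("analyst", "shop", "orders", ["order_id", "amount", "phone"]))
```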

Scenario: Unified data view

In e-commerce, order data may be split between RocketMQ (real-time stream) and MySQL (persistence). Use the catalog to create a unified view that logically joins the real-time order stream in RocketMQ with user information tables in the database. You can query this view directly without knowing where the data is physically stored.
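Conceptually, such a view evaluates a stream-to-table join at query time. In this minimal sketch, an in-memory list stands in for the RocketMQ order topic and a dict stands in for the MySQL user table; the function, field, and sample names are hypothetical and do not reflect how EventHouse defines views.

```python
from typing import Iterable, Iterator


def unified_order_view(orders: Iterable[dict], users: dict[str, dict]) -> Iterator[dict]:
    """Left-join each order event with its user row, lazily, as a view would at query time."""
    for order in orders:
        user = users.get(order["user_id"], {})  # unknown users yield None columns
        yield {**order, "user_name": user.get("name"), "city": user.get("city")}


# Stand-ins: a list for the RocketMQ order stream, a dict for the MySQL user table.
order_stream = [
    {"order_id": "o1", "user_id": "u1", "amount": 30},
    {"order_id": "o2", "user_id": "u2", "amount": 12},
    {"order_id": "o3", "user_id": "u9", "amount": 5},
]
user_table = {"u1": {"name": "Alice", "city": "Beijing"}, "u2": {"name": "Bob", "city": "Shanghai"}}

# Query the view without caring where each column physically lives.
beijing_orders = [
    row["order_id"]
    for row in unified_order_view(order_stream, user_table)
    if row["city"] == "Beijing"
]
print(beijing_orders)  # ['o1']
```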

Data analysis

Data analysis is EventHouse’s compute engine layer. It delivers high-performance SQL queries, stream processing, and federated query capabilities.

Intelligent query engine

Multimodal querying: Supports three query modes: SQL (structured), NoSQL (document-based), and External (queries against external data sources).

Unified stream and batch: Use the same SQL syntax to query historical archived data (batch) and real-time event streams (streaming).

Materialized view: Precompute and cache the results of frequent queries in materialized views for millisecond-level response times.
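The two ideas above can be combined in a small sketch: a materialized view whose single update function is fed both by a replay of archived events (batch) and by live events (streaming), so reads return precomputed results instead of scanning raw data. This is an illustration under assumed names (`StatusTotalsView`, `status`, `amount`), not EventHouse's engine.

```python
from collections import defaultdict
from typing import Iterable


class StatusTotalsView:
    """Hypothetical materialized view: order count and amount per status, kept up to date."""

    def __init__(self) -> None:
        self.totals = defaultdict(lambda: {"count": 0, "amount": 0})

    def apply(self, event: dict) -> None:
        """Fold one event into the precomputed totals (shared by batch and stream paths)."""
        row = self.totals[event["status"]]
        row["count"] += 1
        row["amount"] += event["amount"]

    def backfill(self, history: Iterable[dict]) -> None:
        """Batch path: replay archived events through the same update logic."""
        for event in history:
            self.apply(event)

    def query(self, status: str) -> dict:
        """Read the precomputed result instead of scanning raw events."""
        return dict(self.totals.get(status, {"count": 0, "amount": 0}))


view = StatusTotalsView()
view.backfill([{"status": "paid", "amount": 10}, {"status": "failed", "amount": 3}])
view.apply({"status": "paid", "amount": 5})  # streaming path: a newly arrived event
print(view.query("paid"))
```

Keeping one `apply` function for both paths is what makes the batch and streaming results agree by construction.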

Federated query

Cross-source joint analysis: Without migrating data, use a SQL `JOIN` to link internal EventHouse tables directly with external sources such as OSS log files or RDS dimension tables.

Predicate pushdown: Push filter conditions down to the data source so that only the necessary data is pulled, improving query efficiency.
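The benefit of predicate pushdown is easiest to see by counting rows that cross the network. In this sketch, `ExternalSource` is a hypothetical stand-in for an external table (for example an RDS table) that can optionally apply a filter before shipping rows; it does not model any real connector.

```python
from typing import Callable, Iterator, Optional

Row = dict
Predicate = Callable[[Row], bool]


class ExternalSource:
    """Hypothetical external table that counts the rows it ships to the engine."""

    def __init__(self, rows: list[Row]) -> None:
        self.rows = rows
        self.rows_transferred = 0

    def scan(self, pushed: Optional[Predicate] = None) -> Iterator[Row]:
        for row in self.rows:
            # With pushdown, the filter runs at the source, before any transfer.
            if pushed is None or pushed(row):
                self.rows_transferred += 1
                yield row


def run_query(source: ExternalSource, predicate: Predicate, pushdown: bool) -> list[Row]:
    if pushdown:
        return list(source.scan(predicate))
    # Without pushdown, every row is shipped and then filtered by the engine.
    return [row for row in source.scan() if predicate(row)]


rows = [{"id": i, "region": "Beijing" if i % 4 == 0 else "Hangzhou"} for i in range(100)]
in_beijing = lambda row: row["region"] == "Beijing"

full_scan, pushed_down = ExternalSource(rows), ExternalSource(rows)
result_a = run_query(full_scan, in_beijing, pushdown=False)
result_b = run_query(pushed_down, in_beijing, pushdown=True)
print(full_scan.rows_transferred, pushed_down.rows_transferred)
```

Both queries return the same 25 rows, but only the pushed-down version avoids transferring the 75 rows that the filter discards.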

Real-time anomaly detection

Use built-in time window functions (Tumble, Hop, Session) to compute real-time metrics such as transaction success rate and latency distribution.
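The three window types are commonly defined as follows in stream SQL engines such as Flink SQL: a tumbling window has a fixed size with no overlap, a hopping window has a fixed size and advances by a smaller slide (so windows overlap), and a session window closes after a gap with no events. The functions below sketch how an event timestamp (in seconds) is assigned to windows; they illustrate the semantics only and are not EventHouse's function signatures.

```python
from collections import defaultdict


def tumble(ts: int, size: int) -> tuple[int, int]:
    """The single fixed, non-overlapping window [start, end) that contains ts."""
    start = ts - ts % size
    return (start, start + size)


def hop(ts: int, size: int, slide: int) -> list[tuple[int, int]]:
    """All windows of length `size`, starting every `slide` seconds, that contain ts."""
    windows = []
    start = ts - ts % slide  # latest window start at or before ts
    while start > ts - size:
        windows.append((start, start + size))
        start -= slide
    return sorted(windows)


def sessions(timestamps: list[int], gap: int) -> list[tuple[int, int]]:
    """Group timestamps into sessions; a session closes after `gap` seconds of inactivity."""
    result: list[tuple[int, int]] = []
    for ts in sorted(timestamps):
        if result and ts < result[-1][1]:
            result[-1] = (result[-1][0], ts + gap)  # extend the open session
        else:
            result.append((ts, ts + gap))  # start a new session
    return result


def success_rate_per_window(events: list[tuple[int, bool]], size: int) -> dict:
    """Transaction success rate per tumbling window, from (timestamp, succeeded) pairs."""
    counts = defaultdict(lambda: [0, 0])  # window -> [succeeded, total]
    for ts, ok in events:
        c = counts[tumble(ts, size)]
        c[0] += ok
        c[1] += 1
    return {w: s / t for w, (s, t) in sorted(counts.items())}


print(hop(65, size=60, slide=30))
print(sessions([0, 10, 50, 55, 200], gap=30))
```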

Combine the analysis with a rules engine: when results cross a threshold (for example, “more than 100 failed orders in one minute”), an alert event is triggered automatically.
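A threshold rule like the one above amounts to counting matching events per one-minute tumbling window and emitting an alert for every window whose count exceeds the limit. In this sketch, the `Rule` structure and the alert fields are hypothetical; the actual rule and alert event formats are not described here.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Rule:
    name: str
    threshold: int  # alert when the count in a window is strictly greater than this
    window_seconds: int = 60


def evaluate(rule: Rule, failed_order_timestamps: list[int]) -> list[dict]:
    """Return one hypothetical alert event per window whose failure count exceeds the threshold."""
    counts = Counter(ts - ts % rule.window_seconds for ts in failed_order_timestamps)
    alerts = []
    for start in sorted(counts):
        if counts[start] > rule.threshold:
            alerts.append({
                "rule": rule.name,
                "window_start": start,
                "window_end": start + rule.window_seconds,
                "failed_orders": counts[start],
            })
    return alerts


rule = Rule(name="failed-orders-per-minute", threshold=100)
# 101 failures in the first minute, exactly 100 in the second (which does not exceed 100).
timestamps = [i % 60 for i in range(101)] + [60 + i % 60 for i in range(100)]
print(evaluate(rule, timestamps))
```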

Technical advantages

| Feature | Description |
| --- | --- |
| Storage-compute separation | Store data on low-cost object storage. Scale compute resources elastically to handle traffic spikes. |
| High compression ratio | Apply columnar compression optimized for event data (JSON/CloudEvents). Reduce storage costs by over 50% compared to traditional databases. |
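Columnar compression works by storing each field's values together, where repeated values (event types, statuses) compress well. The sketch below transposes JSON events into columns, dictionary-encodes each column, and compresses it with `zlib`. It is a generic illustration with hypothetical function names, not EventHouse's storage format, and it does not reproduce the savings figure in the table above.

```python
import json
import zlib


def to_columns(events: list[dict]) -> dict[str, list]:
    """Transpose row-oriented events into one list of values per field."""
    fields = sorted({key for event in events for key in event})
    return {f: [event.get(f) for event in events] for f in fields}


def compress_columnar(events: list[dict]) -> dict[str, bytes]:
    """Dictionary-encode each column, then compress it independently."""
    blobs = {}
    for name, values in to_columns(events).items():
        dictionary = sorted(set(map(json.dumps, values)))  # distinct values as JSON text
        code_of = {v: i for i, v in enumerate(dictionary)}
        codes = [code_of[json.dumps(v)] for v in values]  # small integer codes per row
        payload = json.dumps({"dict": dictionary, "codes": codes}).encode()
        blobs[name] = zlib.compress(payload, 9)
    return blobs


def decompress_columnar(blobs: dict[str, bytes]) -> list[dict]:
    """Decode each column and transpose back into row-oriented events."""
    columns = {}
    for name, blob in blobs.items():
        payload = json.loads(zlib.decompress(blob))
        columns[name] = [json.loads(payload["dict"][c]) for c in payload["codes"]]
    n = len(next(iter(columns.values()), []))
    return [{name: col[i] for name, col in columns.items()} for i in range(n)]


events = [
    {"type": "order.paid", "status": "success" if i % 10 else "failed", "amount": i % 50}
    for i in range(1000)
]
row_size = len(zlib.compress(json.dumps(events).encode(), 9))
col_size = sum(len(blob) for blob in compress_columnar(events).values())
print(row_size, col_size)
```

Printing both sizes lets you compare the two layouts on your own sample; the actual ratio depends heavily on the data.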

Data intelligence (Luma)

Luma is EventHouse’s AI analytics layer. Using an AI semantic layer and the Model Context Protocol (MCP), it enables large language models (LLMs) to understand and analyze event data directly.

DataAgent

Luma includes a built-in DataAgent that autonomously runs a “sense–plan–act” loop. For example:

Sense: Detect an unusual drop in transaction volume.

Plan: Decide to query payment gateway logs and database connection pool status.

Act: Generate SQL for correlation analysis and output a root cause report.
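The control flow of that loop can be sketched in a few functions. This is a rule-based stand-in, not the DataAgent: a real agent would use an LLM to plan and to write SQL, whereas here `plan` is a fixed mapping, the SQL templates and table names (`payment_gateway_log`, `db_pool_metrics`) are hypothetical, and `run_sql` is any caller-supplied function that executes a query.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Observation:
    metric: str
    current: float
    baseline: float


# Hypothetical SQL the agent could generate for each follow-up check (MySQL-style syntax assumed).
SQL_TEMPLATES = {
    "payment_gateway_errors": "SELECT COUNT(*) FROM payment_gateway_log "
                              "WHERE level = 'ERROR' AND ts > NOW() - INTERVAL 10 MINUTE",
    "db_pool_active_connections": "SELECT MAX(active) FROM db_pool_metrics "
                                  "WHERE ts > NOW() - INTERVAL 10 MINUTE",
}


def sense(obs: Observation, drop_ratio: float = 0.3) -> bool:
    """Sense: flag an unusual drop, here more than drop_ratio below the baseline."""
    return obs.current < obs.baseline * (1 - drop_ratio)


def plan(obs: Observation) -> list[str]:
    """Plan: pick follow-up checks. A real agent would ask an LLM; this is a fixed mapping."""
    if obs.metric == "transaction_volume":
        return ["payment_gateway_errors", "db_pool_active_connections"]
    return []


def act(steps: list[str], run_sql: Callable[[str], float], limits: dict[str, float]) -> list[str]:
    """Act: run the generated SQL and report every check that exceeds its limit."""
    report = []
    for step in steps:
        value = run_sql(SQL_TEMPLATES[step])
        if value > limits[step]:
            report.append(f"{step}={value} exceeds limit {limits[step]}")
    return report


def run_loop(obs: Observation, run_sql: Callable[[str], float], limits: dict[str, float]) -> list[str]:
    """One iteration of sense-plan-act; returns the root-cause findings, if any."""
    return act(plan(obs), run_sql, limits) if sense(obs) else []
```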

AI semantic layer

Traditional database fields (such as col_1 and status_code) carry no business meaning for AI models. Luma lets you add business descriptions, synonyms, and calculation logic to fields in the catalog, and uses this semantic information to improve Text-to-SQL accuracy.

Example: Ask in natural language, “Show me payment-failed orders from Beijing yesterday.” Luma automatically generates the matching SQL and returns the results.
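To make the role of semantic metadata concrete, the sketch below attaches descriptions, synonyms, and value mappings to opaque columns, renders them as schema context of the kind a Text-to-SQL model could receive alongside the question, and shows which columns a question touches. Luma's actual metadata format is not documented here: `FieldSemantics`, the table name `orders`, and the stored codes `BJ` and `3` are invented for illustration, and the keyword matching is a toy stand-in for an LLM.

```python
from dataclasses import dataclass, field


@dataclass
class FieldSemantics:
    column: str
    description: str
    synonyms: list[str] = field(default_factory=list)
    values: dict[str, str] = field(default_factory=dict)  # business term -> stored code


ORDERS = [
    FieldSemantics("col_1", "City where the order was placed", ["city", "region"], {"beijing": "BJ"}),
    FieldSemantics("status_code", "Payment status of the order", ["payment", "paid", "failed"],
                   {"payment-failed": "3"}),
    FieldSemantics("created_at", "Order creation time", ["yesterday", "date", "time"]),
]


def schema_context(table: str, fields: list[FieldSemantics]) -> str:
    """Render business meaning for each column as text an LLM can use as context."""
    lines = [f"Table {table}:"]
    for f in fields:
        line = f"- {f.column}: {f.description}; synonyms: {', '.join(f.synonyms)}"
        if f.values:
            line += "; values: " + ", ".join(f"{k}={v}" for k, v in f.values.items())
        lines.append(line)
    return "\n".join(lines)


def referenced_columns(question: str, fields: list[FieldSemantics]) -> list[str]:
    """Toy matcher: columns whose synonyms or business terms appear in the question."""
    q = question.lower()
    return [f.column for f in fields
            if any(s in q for s in f.synonyms) or any(term in q for term in f.values)]


question = "Show me payment-failed orders from Beijing yesterday."
print(schema_context("orders", ORDERS))
print(referenced_columns(question, ORDERS))
```

Without the annotations, nothing links “Beijing” to `col_1` or “payment-failed” to a `status_code` value, which is the gap a semantic layer is meant to close.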

Scenario: E-commerce risk control

An operator asks, “Were there any abnormal order-brushing behaviors in the last 30 minutes?”

The Luma agent uses MCP to fetch catalog information and identifies the `Transaction_Table` and `User_Behavior_Log` tables.

The agent automatically generates a correlated SQL query (with time windows, IP aggregation, and device fingerprint analysis) and runs it in the EventHouse analysis engine.

The agent returns a list of UserIDs suspected of order brushing and generates a risk report using the knowledge base.
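The scenario does not show the generated SQL, and the schemas of `Transaction_Table` and `User_Behavior_Log` are not given. The in-memory sketch below shows one plausible version of the same logic: keep events from the last 30 minutes, aggregate user accounts by IP and by device fingerprint, and flag accounts that share an IP or device with unusually many others. The `Txn` fields and both thresholds are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Txn:
    user_id: str
    ip: str
    device_fp: str  # device fingerprint
    ts: int         # epoch seconds


def suspected_brushers(txns: list[Txn], now: int, window: int = 1800,
                       max_users_per_ip: int = 3, max_users_per_device: int = 2) -> list[str]:
    """Flag users who share an IP or device with too many other accounts in the window."""
    recent = [t for t in txns if now - window <= t.ts <= now]  # time-window filter
    users_by_ip: dict[str, set[str]] = defaultdict(set)
    users_by_device: dict[str, set[str]] = defaultdict(set)
    for t in recent:
        users_by_ip[t.ip].add(t.user_id)          # IP aggregation
        users_by_device[t.device_fp].add(t.user_id)  # device fingerprint aggregation
    suspects: set[str] = set()
    for users in users_by_ip.values():
        if len(users) > max_users_per_ip:
            suspects |= users
    for users in users_by_device.values():
        if len(users) > max_users_per_device:
            suspects |= users
    return sorted(suspects)


now = 10_000
txns = [Txn(f"u{i}", "1.1.1.1", f"fp{i}", 9_500) for i in range(5)]   # many accounts, one IP
txns += [Txn(f"d{i}", f"2.2.2.{i}", "fp-x", 9_000) for i in range(3)]  # many accounts, one device
txns += [Txn("normal", "3.3.3.3", "fp-n", 9_900)]
txns += [Txn("old", "1.1.1.1", "fp-old", now - 4_000)]                 # outside the 30-minute window
print(suspected_brushers(txns, now))
```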

MCP protocol integration

EventHouse natively supports MCP (Model Context Protocol). Any MCP-compatible AI agent, such as one built with LangChain or Dify or a custom agent, can connect to EventHouse:

Tool-based querying: Query capabilities are wrapped as MCP tools, which agents invoke based on user intent.

Context awareness: Agents use data schemas as context to produce more accurate analysis results.

MCP protocol integration is not yet available. Watch product updates for the release date.
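Because EventHouse's MCP integration is not yet available, the sketch below shows only the generic shape of tool-based querying as defined by the MCP specification: JSON-RPC 2.0 messages with the `tools/list` and `tools/call` methods, tools described by `name`, `description`, and `inputSchema`, and results returned as `content` items with an `isError` flag. It omits the `initialize` handshake and the transport (stdio or HTTP) that a real server, typically built with an official MCP SDK, would handle. The `run_sql` tool name is hypothetical, and an in-memory SQLite database stands in for event tables.

```python
import json
import sqlite3

TOOLS = [{
    "name": "run_sql",  # hypothetical tool name
    "description": "Run a read-only SQL query against event tables and return rows as JSON.",
    "inputSchema": {
        "type": "object",
        "properties": {"sql": {"type": "string"}},
        "required": ["sql"],
    },
}]


def _reply(rid, result=None, error=None) -> str:
    """Build a JSON-RPC 2.0 response carrying either a result or an error."""
    msg = {"jsonrpc": "2.0", "id": rid}
    if error is not None:
        msg["error"] = error
    else:
        msg["result"] = result
    return json.dumps(msg)


def _text(text: str, is_error: bool) -> dict:
    return {"content": [{"type": "text", "text": text}], "isError": is_error}


def handle(message: str, db: sqlite3.Connection) -> str:
    """Dispatch one JSON-RPC request for the tools/list and tools/call methods."""
    req = json.loads(message)
    rid, method, params = req.get("id"), req.get("method"), req.get("params", {})
    if method == "tools/list":
        return _reply(rid, {"tools": TOOLS})
    if method != "tools/call":
        return _reply(rid, error={"code": -32601, "message": f"Method not found: {method}"})
    if params.get("name") != "run_sql":
        return _reply(rid, error={"code": -32602, "message": f"Unknown tool: {params.get('name')}"})
    sql = params.get("arguments", {}).get("sql", "")
    # Naive read-only guard for the sketch; not a real security boundary.
    if not sql.lstrip().lower().startswith("select"):
        return _reply(rid, _text("Only SELECT statements are allowed.", True))
    try:
        rows = db.execute(sql).fetchall()
    except sqlite3.Error as exc:
        return _reply(rid, _text(f"Query failed: {exc}", True))
    return _reply(rid, _text(json.dumps(rows), False))
```

An agent first calls `tools/list` to learn that `run_sql` exists and what arguments it takes, then sends `tools/call` with the SQL it wrote for the user's question.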