Use the Logs page in the Elasticsearch console to search Logstash cluster logs by keyword and time range. Logs help you identify cluster issues and perform day-to-day O&M.

Prerequisites

Before you begin, ensure that you have:

A running Logstash cluster on Alibaba Cloud Elasticsearch

Access to the Elasticsearch console

Log types

Logstash exposes four log types. The following table describes each type, its default collection status, and when to use it.

| Log type | Description | Default status | When to use |

|---|---|---|---|

| Cluster log | Records the operational status of each node and pipeline activity in the cluster. Pipeline activity includes network connectivity between a source and a destination, pipeline creation and configuration changes, and pipeline errors. | Enabled | Start here when errors occur in your business. Check cluster logs alongside monitoring data to troubleshoot performance and configuration issues. |

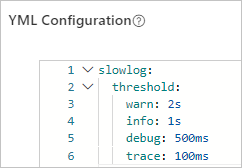

| Slow log | Records pipeline events that exceed a configured time threshold. | Enabled | Investigate slow data write operations. Common causes: insufficient resources on the pipeline source or destination, or Pipeline Batch Size and Pipeline Workers values that are too small. |

| GC log | Records garbage collection (GC) events triggered by JVM heap memory usage, including Old GC, Concurrent Mark Sweep (CMS) GC, Full GC, and Minor GC. | Enabled | Diagnose performance bottlenecks by checking whether GC operations are slow or frequent. |

| Debug log | Records the output data of a Logstash pipeline. Requires installing the logstash-output-file_extend plug-in and configuring the file_extend parameter in the pipeline output. | Disabled | Inspect pipeline output data or debug pipeline configurations without checking the destination directly. |

Do not remove the slow log collection configuration from the YML file. It is required to locate Logstash issues. For details, see Configure a YML file.

Query logs

Go to the Logstash Clusters page of the Alibaba Cloud Elasticsearch console.

Select the region where the cluster resides from the top navigation bar.

On the Logstash Clusters page, find the cluster and click its ID.

In the left-side navigation pane, click Logs.

On the Cluster Log, Slow Log, GC Log, or Debug Log tab, enter a query string, select a start time and end time, and then click Search.

Query rules:

Logs from the previous seven days are searchable.

Results are sorted by time in descending order by default.

Queries use Lucene query string syntax.

AND must be uppercase.

If you omit the end time, the current system time is used. If you omit the start time, it defaults to one hour before the end time.

Example: To find cluster logs where the log level is INFO, the node IP is 172.16.xx.xx, and the content contains the word running, use:

host:172.16.xx.xx AND level:info AND content:running
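The query rules above can be sketched in a few lines of Python. This is an illustration only and not part of the console or any Alibaba Cloud SDK: the helper names `build_query` and `resolve_time_range` are hypothetical, and raising an error for a start time outside the seven-day window is a choice made for this sketch, not documented console behavior.

```python
from datetime import datetime, timedelta
from typing import Optional, Tuple

RETENTION = timedelta(days=7)        # logs from the previous seven days are searchable
DEFAULT_WINDOW = timedelta(hours=1)  # default start time: one hour before the end time


def build_query(**fields: str) -> str:
    """Join field:value terms with an uppercase AND (hypothetical helper)."""
    return " AND ".join(f"{name}:{value}" for name, value in fields.items())


def resolve_time_range(
    start: Optional[datetime] = None,
    end: Optional[datetime] = None,
    now: Optional[datetime] = None,
) -> Tuple[datetime, datetime]:
    """Apply the documented defaults for an omitted start or end time."""
    now = now or datetime.now()
    end = end or now                       # omitted end time: current system time
    start = start or end - DEFAULT_WINDOW  # omitted start time: one hour before end
    if start > end:
        raise ValueError("start time must not be later than end time")
    if start < now - RETENTION:
        # Assumption of this sketch: reject ranges older than the searchable window.
        raise ValueError("only logs from the previous seven days are searchable")
    return start, end


query = build_query(host="172.16.xx.xx", level="info", content="running")
print(query)  # host:172.16.xx.xx AND level:info AND content:running
```

Calling `resolve_time_range()` with no arguments returns the one-hour window that ends at the current time, which matches the defaults the Logs page applies when both time fields are left empty.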

Log fields

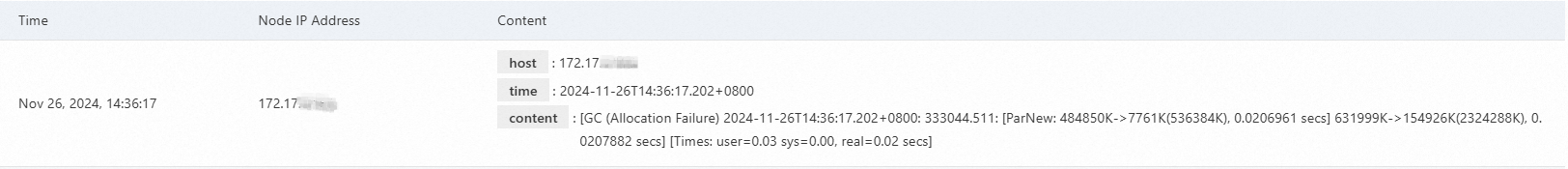

Each log entry on the Cluster Log tab contains three columns.

| Field | Description |
|---|---|
| Time | The time when the log was generated. |
| Node IP Address | The IP address of the node that generated the log. |
| Content | The full log details. Contains the following sub-fields: level (log level: TRACE, DEBUG, INFO, WARN, or ERROR; not present in GC logs), host (node IP address), time (log generation time), and content (log message). |
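If you copy log entries out of the console for offline analysis, the sub-fields of Content map naturally onto a small record type. The following is a minimal sketch under stated assumptions: the `LogEntry` class and `at_least` function are hypothetical names, the sample entries are invented for illustration, and the severity order TRACE < DEBUG < INFO < WARN < ERROR follows the usual logging convention rather than anything the console specifies.

```python
from dataclasses import dataclass
from typing import List, Optional

# Assumed severity order, lowest to highest.
LEVELS = ("TRACE", "DEBUG", "INFO", "WARN", "ERROR")


@dataclass
class LogEntry:
    host: str                    # node IP address
    time: str                    # log generation time, kept as the raw string
    content: str                 # log message
    level: Optional[str] = None  # not present in GC logs


def at_least(entries: List[LogEntry], minimum: str) -> List[LogEntry]:
    """Keep entries at or above `minimum`; entries without a level are skipped."""
    threshold = LEVELS.index(minimum.upper())
    return [
        e for e in entries
        if e.level is not None and LEVELS.index(e.level.upper()) >= threshold
    ]


# Invented sample entries; the time values are placeholders.
entries = [
    LogEntry(host="172.16.xx.xx", time="t1", content="Pipeline started", level="info"),
    LogEntry(host="172.16.xx.xx", time="t2", content="Connection refused", level="error"),
    LogEntry(host="172.16.xx.xx", time="t3", content="GC pause"),  # GC log: no level
]
print([e.content for e in at_least(entries, "WARN")])  # ['Connection refused']
```

Making `level` optional mirrors the table: GC log entries carry host, time, and content but no log level, so a level-based filter has to skip them rather than fail.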

The following screenshot shows an example GC log entry.

The following screenshot shows an example slow log entry.

Enable debug log collection

Debug log collection is disabled by default. To enable it:

Install the logstash-output-file_extend plug-in. For details, see Install and remove a plug-in.

Configure the file_extend parameter in the output section of the pipeline configuration. For details, see Use configuration files to manage pipelines.

After you complete both steps, pipeline output data appears on the Debug Log tab.

For a guided walkthrough of the pipeline configuration debugging feature, see Use the pipeline configuration debugging feature.