You can use DuckDB sessions for interactive DuckDB SQL development and take advantage of DuckDB's lightweight, efficient data analysis.

Background

DuckDB is a lightweight, high-performance embedded analytical database engine optimized for Online Analytical Processing (OLAP) use cases.

Features

Embedded architecture: DuckDB does not require a separate server. It embeds directly into applications as a library, similar to SQLite, and supports both in-memory and on-disk modes.

Columnar storage: Data is stored by column, which optimizes performance for aggregate queries and scans.

Vectorized execution: It processes data in batches (vectors) rather than one row at a time. This reduces per-row CPU overhead and lets the engine use Single Instruction, Multiple Data (SIMD) instructions.

Standards compliance: It supports the SQL-92 and SQL:2011 standards, including Common Table Expressions (CTEs), window functions, and subqueries, and it adds extended JOIN types such as ASOF JOIN.

Direct reads of multiple formats: It can query CSV, Parquet, and JSON files directly, without importing them first.

Zero-copy integration: It converts data to and from in-memory structures such as Pandas DataFrames and Arrow tables, avoiding data migration overhead.

Federated query: You can use the httpfs extension to access remote files, such as those on S3, and use other extensions to connect to external databases such as PostgreSQL for federated queries.
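The columnar storage and vectorized execution items above can be illustrated with a toy, pure-Python sketch. It is a conceptual model only, not how DuckDB is implemented: plain lists stand in for column vectors, no SIMD is involved, and the batch size of 2048 is an assumption that mirrors DuckDB's default vector size. The helper names are hypothetical.

```python
from typing import Any, Dict, Iterable, List, Sequence

# Assumed to mirror DuckDB's default vector size; used here only for illustration.
VECTOR_SIZE = 2048


def to_columnar(rows: Iterable[Dict[str, Any]]) -> Dict[str, List[Any]]:
    """Pivot row-oriented records into one list per column (a columnar layout)."""
    columns: Dict[str, List[Any]] = {}
    for row in rows:
        for name, value in row.items():
            columns.setdefault(name, []).append(value)
    return columns


def vectorized_sum(column: Sequence[float], vector_size: int = VECTOR_SIZE) -> float:
    """Aggregate one column batch by batch instead of row by row."""
    total = 0.0
    for start in range(0, len(column), vector_size):
        batch = column[start:start + vector_size]
        # One call handles a whole batch; a real engine would run a tight,
        # SIMD-friendly loop over the batch here.
        total += sum(batch)
    return total


def count_batches(n_values: int, vector_size: int = VECTOR_SIZE) -> int:
    """Number of vectors needed to cover n_values (ceiling division)."""
    return (n_values + vector_size - 1) // vector_size


# Hypothetical data: 10,000 rows with three columns.
rows = [{"id": i, "amount": float(i % 7), "label": f"row{i}"} for i in range(10_000)]
columns = to_columnar(rows)

# The aggregate reads only the "amount" column; "id" and "label" are never scanned.
print(vectorized_sum(columns["amount"]))      # 29994.0
print(count_batches(len(columns["amount"])))  # 5
```

The point of the columnar layout is visible in the last step: an aggregate over one column touches one contiguous list, and the batch loop amortizes per-call overhead across up to 2048 values at a time.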

Use cases

Interactive analysis: It can quickly process datasets ranging from gigabytes (GB) to terabytes (TB), making it an alternative to Pandas or Excel for large-scale data.

Edge computing: It can be deployed on edge devices to perform local data analysis.

Data science: It seamlessly integrates with the Python and R ecosystems and can be used as a data preprocessing engine for machine learning (ML).

Real-time OLAP: It supports analytical workloads that require both frequent updates and complex queries.

Limitations

DuckDB sessions are supported only on engine versions esr-4.8.0 and later in the esr-4.x series, and esr-3.7.0 and later in the esr-3.x series.
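One way to read this rule is as a per-series minimum version. The sketch below encodes that reading. The function names are hypothetical, the regular expression assumes engine labels begin with the esr-X.Y.Z form (any trailing text is ignored), and any series other than esr-3.x and esr-4.x is treated as unsupported because the rule does not mention it.

```python
import re
from typing import Optional, Tuple

# Minimum version per ESR major series, as stated in the limitation above.
MIN_VERSION_BY_SERIES = {4: (4, 8, 0), 3: (3, 7, 0)}

# Assumption: engine labels contain "esr-<major>.<minor>.<patch>".
_VERSION_RE = re.compile(r"esr-(\d+)\.(\d+)\.(\d+)")


def parse_esr_version(label: str) -> Optional[Tuple[int, int, int]]:
    """Extract (major, minor, patch) from an engine label, or None if absent."""
    match = _VERSION_RE.search(label)
    if match is None:
        return None
    major, minor, patch = (int(part) for part in match.groups())
    return (major, minor, patch)


def supports_duckdb_session(label: str) -> bool:
    """Return True if the engine version meets its series' minimum."""
    version = parse_esr_version(label)
    if version is None:
        return False
    minimum = MIN_VERSION_BY_SERIES.get(version[0])
    if minimum is None:
        return False  # series not named in the limitation; treated as unsupported
    # Tuple comparison orders versions numerically: (4, 10, 0) > (4, 8, 0).
    return version >= minimum


if __name__ == "__main__":
    for label in ["esr-4.8.0", "esr-4.7.9", "esr-3.7.0", "esr-3.6.1"]:
        print(label, supports_duckdb_session(label))
```

Comparing integer tuples instead of raw strings avoids the common mistake of treating "esr-4.10.0" as older than "esr-4.8.0".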

Create a DuckDB session

Log on to the EMR Console.

In the left-side navigation pane, choose EMR Serverless > Spark.

On the Spark page, click the name of the target workspace.

On the EMR Serverless Spark page, in the left-side navigation pane, click Session Management.

On the Session Management page, click the DuckDB Session tab.

On the DuckDB session list page, click Create DuckDB Session.

In the Create DuckDB Session dialog box, configure the following parameters.

Important: We recommend that you set the maximum concurrency of the selected deployment queue to at least the amount of resources that the DuckDB session requires. The required amount is displayed in the console.

Parameter

Description

Name

The name of the new DuckDB session. The name must be 1 to 64 characters long and can contain Chinese characters, letters, digits, underscores (_), and hyphens (-). The session name must be unique within the same workspace.

Deployment Queue

Select a resource queue for the DuckDB session. You can select only queues that are ready. If a resource queue is not available, create one on the Resource Queue Management page. For more information, see Manage Resource Queues.

Engine Version

Select the engine version for the DuckDB session. For information about the supported versions, see Release notes.

Auto Stop

The idle time after which the session stops automatically. If you enable this feature, the system automatically stops the session to save resources when it is idle for the specified period. This feature is enabled by default, with a default period of 45 minutes.

Network Connection

If your DuckDB job needs to access data sources or external services within a Virtual Private Cloud (VPC), you must configure a network connection. Select the name of an existing network connection from the drop-down list. For more information, see Add Network Connection.

Cores

The number of CPU cores for DuckDB. The default value is 2.

Memory

The amount of memory for DuckDB. The default value is 8 GB.

MemoryOverhead

The amount of overhead memory for DuckDB. The default value is max(384 MB, 10% × Memory).

Spark Configurations

Enter the Spark configurations, with each property name and its value separated by a space. For example: spark.sql.catalog.paimon.metastore dlf

Click Create.
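The parameter defaults and constraints above can be summarized in a small Python model. This is a hypothetical client-side sketch, not part of any EMR SDK or console API: the class and function names are illustrative, the Chinese-character range (U+4E00 to U+9FFF) is an assumption about which characters the console accepts, and 1 GB is taken as 1,024 MB.

```python
import re
from dataclasses import dataclass
from typing import Set

# Assumption: "Chinese characters" is approximated by the CJK Unified Ideographs block.
NAME_PATTERN = re.compile(r"[\u4e00-\u9fffA-Za-z0-9_-]{1,64}")


def validate_session_name(name: str, existing_names: Set[str]) -> None:
    """Raise ValueError if the name breaks the documented naming rules."""
    if NAME_PATTERN.fullmatch(name) is None:
        raise ValueError(f"invalid session name: {name!r}")
    if name in existing_names:
        raise ValueError(f"session name already used in this workspace: {name!r}")


@dataclass
class DuckDBSessionConfig:
    name: str
    cores: int = 2                  # documented default
    memory_mb: int = 8 * 1024       # documented default of 8 GB
    auto_stop_enabled: bool = True  # Auto Stop is enabled by default
    auto_stop_minutes: int = 45     # documented default idle period

    def memory_overhead_mb(self) -> float:
        """Default MemoryOverhead: max(384 MB, 10% x Memory)."""
        return max(384.0, self.memory_mb / 10)

    def should_auto_stop(self, idle_minutes: float) -> bool:
        """True once the session has been idle for the configured period."""
        return self.auto_stop_enabled and idle_minutes >= self.auto_stop_minutes


if __name__ == "__main__":
    validate_session_name("duckdb_dev-01", existing_names={"etl-session"})
    cfg = DuckDBSessionConfig(name="duckdb_dev-01")
    print(cfg.memory_overhead_mb())  # 819.2 (10% of 8,192 MB exceeds 384 MB)
    print(DuckDBSessionConfig(name="small", memory_mb=2048).memory_overhead_mb())  # 384.0
```

With the default 8 GB of memory, 10% (819.2 MB) is larger than the 384 MB floor, so the floor only applies to sessions with less than 3,840 MB of memory.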

After the session is created, you can view it on the DuckDB session list page. When the session status is Running, you can use it for DuckDB SQL development.

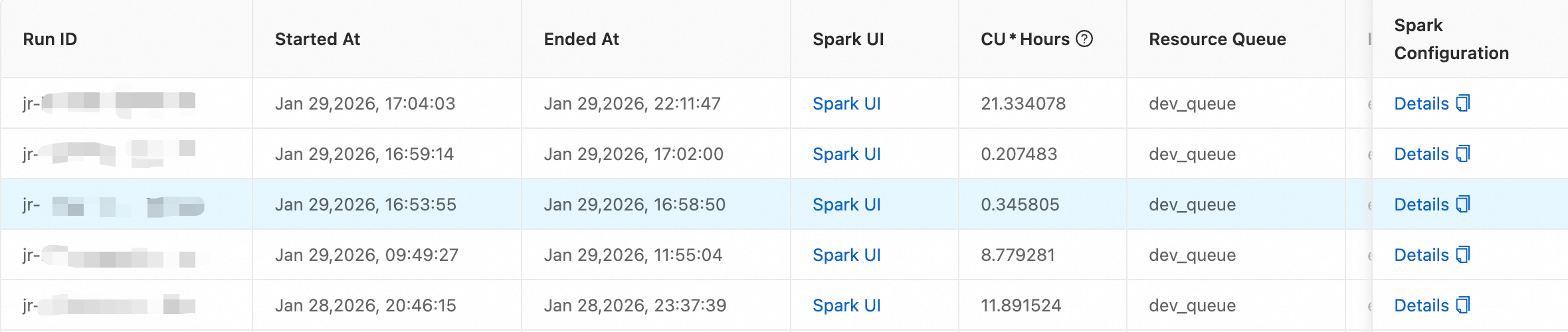

View run records

After a data development job completes, you can view its run records on the Session Management page.

On the session list page, click the session name.

Click the Run Records tab.

On this page, you can view detailed run information for the job, including the run ID, start time, and Spark UI.