In a Hadoop cluster, the master node manages job scheduling, monitoring, and termination across the cluster. To run a job, log on to the master node over SSH and submit it from there.

Prerequisites

Before you begin, make sure you have:

- An EMR on ECS cluster. For details, see Create a cluster.

- A network connection from your local machine to the master node. To get one, turn on the Public Network switch when you create the cluster. Alternatively, after the cluster is created, assign a static public IP address or an Elastic IP Address (EIP) to the master node's ECS instance in the ECS console. For details, see Elastic IP Address.

- Port 22 open in the security group associated with the cluster.
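Before attempting to log on, it can help to confirm that port 22 on the master node is actually reachable from your machine. The following is a minimal sketch, not part of the EMR tooling: `port_open` is a hypothetical helper name, and the sketch assumes that bash and the coreutils `timeout` command are available locally.

```shell
# Hypothetical helper (not an EMR command): succeeds if a TCP connection to
# HOST:PORT can be opened within WAIT_SECONDS, and fails otherwise.
# Assumes bash (for its /dev/tcp pseudo-device) and coreutils `timeout`.
port_open() {
  local host="$1" port="$2" wait_seconds="${3:-5}"
  # The inner bash tries to open the socket on file descriptor 3; the
  # descriptor is closed automatically when that bash process exits.
  timeout "$wait_seconds" bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$host" "$port" 2>/dev/null
}
```

For example, `port_open 203.0.113.10 22 && echo reachable` prints `reachable` only if the connection succeeds. The address is a placeholder from the documentation range; substitute the public IP address of your master node. A failure usually points to a missing public IP address or a security group rule that does not allow port 22.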

Submit a Spark job

1. Log on to the master node using SSH. For details, see Log on to a cluster.

2. Run the following command to submit a Spark job. This example uses Spark 3.1.1 and runs the SparkPi estimation:

       spark-submit \
         --class org.apache.spark.examples.SparkPi \
         --master yarn \
         --deploy-mode client \
         --driver-memory 512m \
         --num-executors 1 \
         --executor-memory 1g \
         --executor-cores 2 \
         /opt/apps/SPARK3/spark-current/examples/jars/spark-examples_2.12-3.1.1.jar 10

Key parameters:

| Parameter | Description |
| --- | --- |
| `--class` | The main class to run. `org.apache.spark.examples.SparkPi` is a built-in example that estimates the value of Pi. |
| `--master yarn` | Submits the job to YARN for resource management. |
| `--deploy-mode client` | Runs the driver on the master node. Use `cluster` to run the driver on a worker node instead. |
| `--driver-memory` | Memory allocated to the driver process. |
| `--num-executors` | Number of executor processes to launch. |
| `--executor-memory` | Memory allocated to each executor. |
| `--executor-cores` | Number of CPU cores per executor. |

Note: `spark-examples_2.12-3.1.1.jar` is the example JAR file bundled with Spark 3.1.1. To verify the JAR path on your cluster, log on to the cluster and run:

    ls /opt/apps/SPARK3/spark-current/examples/jars
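Because the JAR file name embeds the Scala and Spark versions, a hard-coded name can break on a cluster that runs a different Spark release. As a sketch under that assumption, the wrapper below looks up the example JAR in the directory used above and assembles the same `spark-submit` command. The names `submit_spark_pi`, `EXAMPLES_DIR`, and `DRY_RUN` are hypothetical and introduced only for illustration; the trailing argument is the number of partitions that SparkPi splits its sampling into.

```shell
# Directory that holds the bundled example JARs. The default is the path
# used in this topic; override EXAMPLES_DIR if your cluster differs.
EXAMPLES_DIR="${EXAMPLES_DIR:-/opt/apps/SPARK3/spark-current/examples/jars}"

# Hypothetical wrapper: submit_spark_pi [deploy_mode] [partitions]
# Set DRY_RUN=1 to print the command instead of running it.
submit_spark_pi() {
  local deploy_mode="${1:-client}" partitions="${2:-10}" jar="" candidate

  # Use the first spark-examples JAR found, whatever its version suffix.
  for candidate in "$EXAMPLES_DIR"/spark-examples_*.jar; do
    if [ -e "$candidate" ]; then
      jar="$candidate"
      break
    fi
  done
  if [ -z "$jar" ]; then
    echo "No spark-examples JAR found in $EXAMPLES_DIR" >&2
    return 1
  fi

  # Reuse the positional parameters to hold the full command line.
  set -- spark-submit \
    --class org.apache.spark.examples.SparkPi \
    --master yarn \
    --deploy-mode "$deploy_mode" \
    --driver-memory 512m \
    --num-executors 1 \
    --executor-memory 1g \
    --executor-cores 2 \
    "$jar" "$partitions"

  if [ "${DRY_RUN:-0}" = "1" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}
```

Running `DRY_RUN=1 submit_spark_pi cluster 20` prints the assembled command with `--deploy-mode cluster` so that you can review it before submitting; dropping `DRY_RUN=1` runs it.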

View job execution records

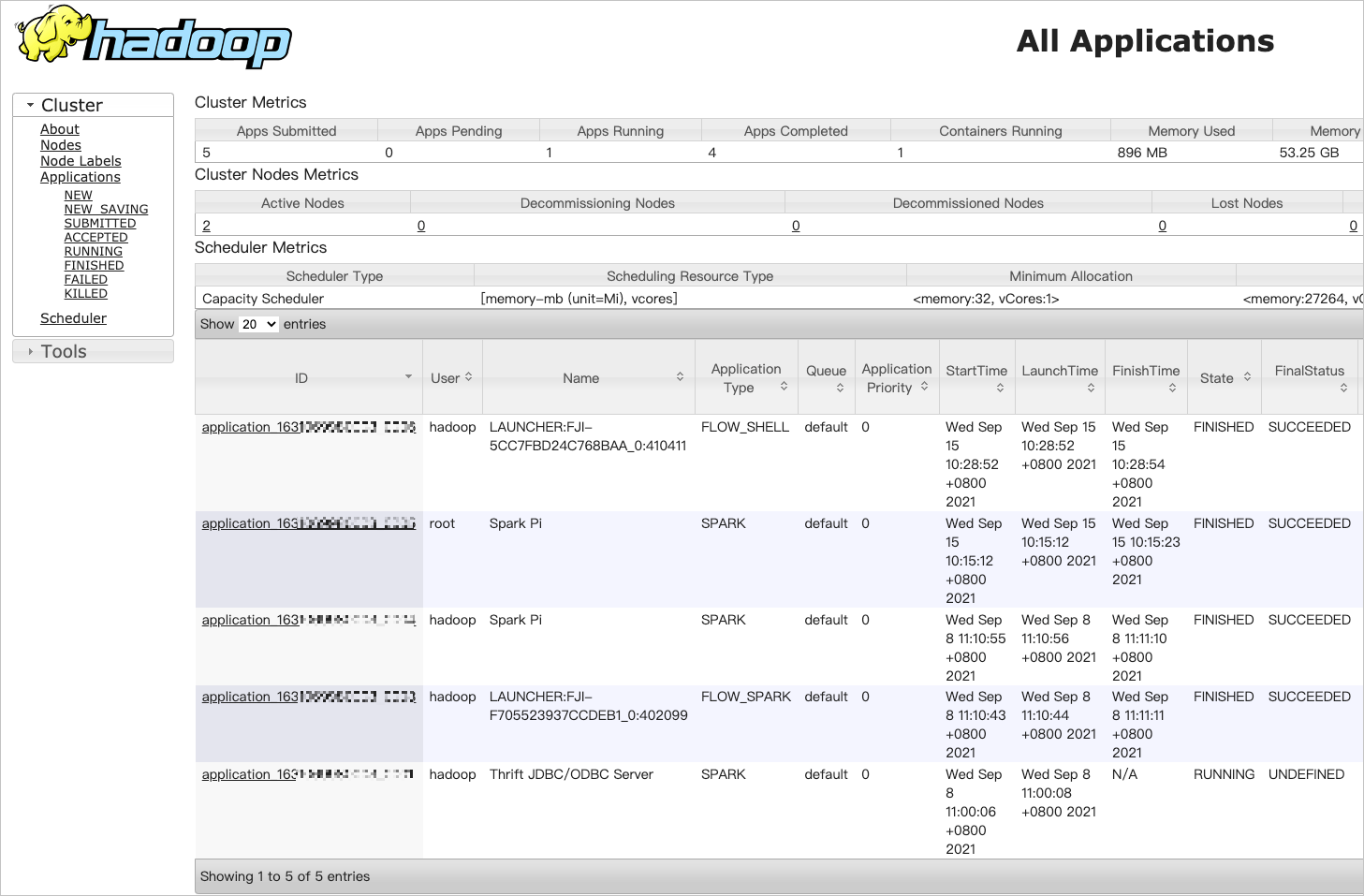

After submitting a job, view its execution status in the YARN web UI.

1. Enable port 8443 in the security group. For details, see Manage security groups.

2. Add a Knox user. For details, see OpenLDAP user management. Note the Knox account username and password. You will need them to authenticate.

3. On the EMR on ECS page, click Cluster Services in the row of the target cluster.

4. Click the Access Links and Ports tab.

5. In the YARN UI row, click the public link. Use the Knox credentials from step 2 to log on.

6. On the All Applications page, click the target job ID to view execution details.
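If you are still logged on to the master node, the job can usually also be inspected from the command line with the standard YARN CLI. The sketch below is a convenience under stated assumptions, not an EMR feature: `extract_app_id` and `show_app` are hypothetical helper names, and the approach assumes that the output of `spark-submit` contains the YARN application ID.

```shell
# Hypothetical helper: read log text on stdin and print the first YARN
# application ID found (format: application_<clusterTimestamp>_<sequence>).
extract_app_id() {
  grep -oE 'application_[0-9]+_[0-9]+' | head -n 1
}

# Hypothetical helper: print the status report for one application, then its
# aggregated logs. The logs are typically available only after the job has
# finished and only if YARN log aggregation is enabled.
show_app() {
  local app_id="$1"
  yarn application -status "$app_id"
  yarn logs -applicationId "$app_id"
}
```

One way to use these helpers is to save the submission output, for example by appending `2>&1 | tee submit.log` to the `spark-submit` command, and then run `show_app "$(extract_app_id < submit.log)"`. To list every application with its ID, `yarn application -list -appStates ALL` serves a similar purpose to the All Applications page.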