SSL encrypts data in transit between producers, consumers, and brokers in a Dataflow cluster, protecting against eavesdropping and tampering.

By default, SSL is disabled for Kafka clusters. E-MapReduce (EMR) supports two methods to enable it: using the default EMR certificate (faster to set up) or providing a custom certificate.

Prerequisites

Before you begin, ensure that you have:

- A Dataflow cluster created in the EMR console with Kafka selected as a service. See Create a Dataflow Kafka cluster.

Step 1: Enable SSL

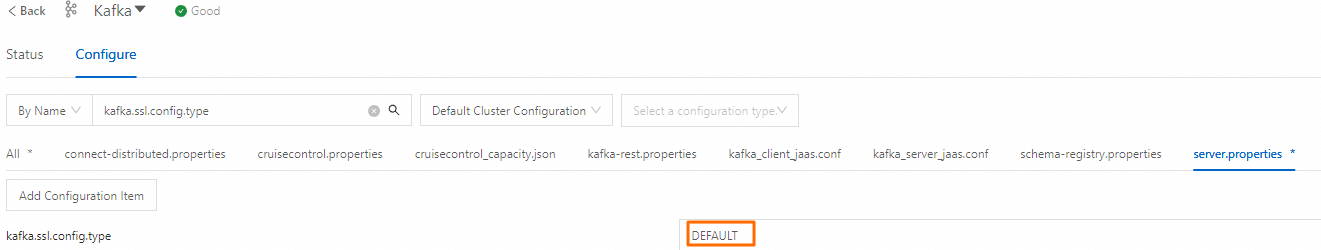

EMR controls SSL configuration through the kafka.ssl.config.type item in server.properties. Set it to DEFAULT to use the EMR-managed certificate, or CUSTOM to supply your own.

Method 1: Use the default certificate

- Go to the Configure tab of the Kafka service page.
  - Log on to the EMR console. In the left-side navigation pane, click EMR on ECS.
  - In the top navigation bar, select the region where your cluster resides and select a resource group.
  - On the EMR on ECS page, find your cluster and click Services in the Actions column.
  - On the Services tab, find the Kafka service and click Configure.
- On the Configure tab, click the server.properties tab. Change the value of kafka.ssl.config.type to DEFAULT.
- Click Save. In the dialog box that appears, enter an execution reason and click Save.
- Restart the Kafka service: choose More > Restart in the upper-right corner. Enter an execution reason, click OK, then click OK in the Confirm dialog box.

Method 2: Use a custom certificate

Use this method when you need to supply your own keystore and truststore files for compliance or organizational PKI requirements.

- Go to the Configure tab of the Kafka service page.
  - Log on to the EMR console. In the left-side navigation pane, click EMR on ECS.
  - In the top navigation bar, select the region where your cluster resides and select a resource group.
  - On the EMR on ECS page, find your cluster and click Services in the Actions column.
  - On the Services tab, find the Kafka service and click Configure.
- On the Configure tab, click the server.properties tab. Change the value of kafka.ssl.config.type to CUSTOM.
- Click Save. In the dialog box that appears, enter an execution reason and click Save.
- Configure the SSL-related items (all except listeners) based on your requirements:

  | Configuration item | Description |
  | --- | --- |
  | ssl.keystore.location | Path to the keystore file, which holds the broker's own identity certificate |
  | ssl.keystore.password | Password to unlock the keystore |
  | ssl.key.password | Password for the private key within the keystore |
  | ssl.keystore.type | Keystore file format (for example, JKS) |
  | ssl.truststore.location | Path to the truststore file, which holds the certificates the broker trusts |
  | ssl.truststore.password | Password to unlock the truststore |
  | ssl.truststore.type | Truststore file format (for example, JKS) |

- Restart the Kafka service: choose More > Restart in the upper-right corner. Enter an execution reason, click OK, then click OK in the Confirm dialog box.
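
A wrong keystore or truststore path is an easy mistake to make in CUSTOM mode, so it may help to check the files on a broker node before you restart the service. The following is a minimal sketch, not an EMR tool: the function names `prop_value` and `check_store_files` and the file `planned-ssl.properties` are hypothetical, and the sketch assumes you keep a local `key=value` copy of the values you plan to enter in the console.

```shell
#!/usr/bin/env bash
# Sketch: confirm that the keystore and truststore files you plan to
# reference in CUSTOM mode exist and are readable on this node.
# All names below are illustrative assumptions, not part of EMR or Kafka.
set -u

# Print the value of one key from a key=value properties file
# (if the key appears more than once, the last occurrence wins).
prop_value() {
  local file="$1" key="$2"
  sed -n "s|^${key}=||p" "$file" | tail -n 1
}

# Return 0 only if both store locations point to readable regular files.
check_store_files() {
  local file="$1" key path status=0
  for key in ssl.keystore.location ssl.truststore.location; do
    path="$(prop_value "$file" "$key")"
    if [ -n "$path" ] && [ -f "$path" ] && [ -r "$path" ]; then
      echo "ok       $key -> $path"
    else
      echo "missing  $key -> ${path:-<not set>}" >&2
      status=1
    fi
  done
  return "$status"
}

# Usage (planned-ssl.properties is a hypothetical local copy of your values):
#   check_store_files planned-ssl.properties
```

The check only covers file presence and read permission for the current user. It does not validate passwords or certificate contents, and the Kafka service may run under a different user than the one you log on with.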

Step 2: Access Kafka over SSL

To connect to an SSL-enabled Kafka cluster, configure a client properties file with the minimum required settings: security.protocol, ssl.truststore.location, and ssl.truststore.password.
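
As an illustration of the three minimum settings, the sketch below writes a minimal client properties file and then confirms that each setting is present. The file name `client-ssl.properties`, the function `missing_ssl_keys`, and the `<truststore_password>` placeholder are assumptions made for this example. The truststore path is the EMR default location used later in this topic.

```shell
#!/usr/bin/env bash
# Sketch: write a minimal SSL client properties file and verify that the
# three minimum settings are present. Names and password are placeholders.
set -eu

props_file="${PROPS_FILE:-client-ssl.properties}"   # hypothetical file name

# Quoted heredoc delimiter: the content is written literally, no expansion.
cat > "$props_file" <<'EOF'
security.protocol=SSL
ssl.truststore.location=/var/taihao-security/ssl/ssl/truststore
ssl.truststore.password=<truststore_password>
EOF

# Print every required key that has no "key=..." line in the given file.
missing_ssl_keys() {
  local file="$1" key
  for key in security.protocol ssl.truststore.location ssl.truststore.password; do
    grep -q "^${key}=" "$file" || echo "$key"
  done
}

missing="$(missing_ssl_keys "$props_file")"
if [ -z "$missing" ]; then
  echo "OK: all minimum SSL settings present in $props_file"
else
  echo "Missing settings: $missing" >&2
  exit 1
fi
```

Replace the password placeholder with the real truststore password before you use the file with a Kafka client.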

The following example uses the Kafka built-in producer and consumer tools to verify the SSL connection from the cluster master node.

- Log on to the master node of your cluster over SSH. See Log on to a cluster.
- Create ssl.properties with the following content:

  ```
  security.protocol=SSL
  ssl.truststore.location=/var/taihao-security/ssl/ssl/truststore
  ssl.truststore.password=${password}
  ssl.keystore.location=/var/taihao-security/ssl/ssl/keystore
  ssl.keystore.password=${password}
  ssl.endpoint.identification.algorithm=
  ```

  On the Configure tab of the Kafka service page in the EMR console, you can view the values of the preceding configuration items.

  Note: To run jobs outside the Kafka cluster, copy the truststore and keystore files from /var/taihao-security/ssl/ssl/ on a cluster node to your runtime environment, then update the paths in ssl.properties accordingly.

- Create a test topic:

  ```
  kafka-topics.sh --partitions 10 --replication-factor 2 --bootstrap-server core-1-1:9092 --topic test --create --command-config ssl.properties
  ```

- Produce test messages:

  ```
  export IP=<your_InnerIP>
  kafka-producer-perf-test.sh --topic test --num-records 123456 --throughput 10000 --record-size 1024 --producer-props bootstrap.servers=${IP}:9092 --producer.config ssl.properties
  ```

- Consume the test messages:

  ```
  export IP=<your_InnerIP>
  kafka-consumer-perf-test.sh --broker-list ${IP}:9092 --messages 100000000 --topic test --consumer.config ssl.properties
  ```

  Replace <your_InnerIP> with the internal IP address of the master-1-1 node.

What's next

To add user identity verification on top of SSL encryption, configure Simple Authentication and Security Layer (SASL) for your cluster. See Log on to a Kafka cluster by using SASL.