This tutorial shows you how to load the official ClickHouse sample dataset into an Alibaba Cloud E-MapReduce (EMR) ClickHouse cluster, using a distributed table with rand() to spread writes randomly across all core nodes.

Prerequisites

Before you begin, ensure that you have:

-

A ClickHouse cluster created in EMR. For details, see Create a ClickHouse cluster.

-

SSH access to the cluster. For details, see Log on to a cluster.

Step 1: Connect to the cluster

Log on to the ClickHouse cluster via SSH, then start the ClickHouse client on a core node:

clickhouse-client -h core-1-1 -mcore-1-1 is an example core node name. If your cluster has multiple core nodes, connect to any one of them. To find your node names, go to the Nodes tab of your cluster in the E-MapReduce console.

Step 2: Create the database

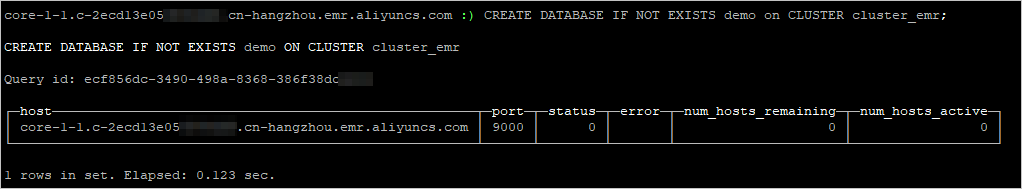

Create a database across all nodes using the ON CLUSTER clause. The ON CLUSTER clause applies the DDL statement to every node in the specified cluster at once, so you only need to run it once:

CREATE DATABASE IF NOT EXISTS demo ON CLUSTER cluster_emr;The output confirms that the database was created on each node:

Step 3: Create a replicated table

Create a local replicated table on every node. A replicated table stores actual data and keeps it consistent across all replicas using the ReplicatedMergeTree engine:

CREATE TABLE demo.hits_local ON CLUSTER cluster_emr

(

`WatchID` UInt64,

`JavaEnable` UInt8,

`Title` String,

`GoodEvent` Int16,

`EventTime` DateTime,

`EventDate` Date,

`CounterID` UInt32,

`ClientIP` UInt32,

`ClientIP6` FixedString(16),

`RegionID` UInt32,

`UserID` UInt64,

`CounterClass` Int8,

`OS` UInt8,

`UserAgent` UInt8,

`URL` String,

`Referer` String,

`URLDomain` String,

`RefererDomain` String,

`Refresh` UInt8,

`IsRobot` UInt8,

`RefererCategories` Array(UInt16),

`URLCategories` Array(UInt16),

`URLRegions` Array(UInt32),

`RefererRegions` Array(UInt32),

`ResolutionWidth` UInt16,

`ResolutionHeight` UInt16,

`ResolutionDepth` UInt8,

`FlashMajor` UInt8,

`FlashMinor` UInt8,

`FlashMinor2` String,

`NetMajor` UInt8,

`NetMinor` UInt8,

`UserAgentMajor` UInt16,

`UserAgentMinor` FixedString(2),

`CookieEnable` UInt8,

`JavascriptEnable` UInt8,

`IsMobile` UInt8,

`MobilePhone` UInt8,

`MobilePhoneModel` String,

`Params` String,

`IPNetworkID` UInt32,

`TraficSourceID` Int8,

`SearchEngineID` UInt16,

`SearchPhrase` String,

`AdvEngineID` UInt8,

`IsArtifical` UInt8,

`WindowClientWidth` UInt16,

`WindowClientHeight` UInt16,

`ClientTimeZone` Int16,

`ClientEventTime` DateTime,

`SilverlightVersion1` UInt8,

`SilverlightVersion2` UInt8,

`SilverlightVersion3` UInt32,

`SilverlightVersion4` UInt16,

`PageCharset` String,

`CodeVersion` UInt32,

`IsLink` UInt8,

`IsDownload` UInt8,

`IsNotBounce` UInt8,

`FUniqID` UInt64,

`HID` UInt32,

`IsOldCounter` UInt8,

`IsEvent` UInt8,

`IsParameter` UInt8,

`DontCountHits` UInt8,

`WithHash` UInt8,

`HitColor` FixedString(1),

`UTCEventTime` DateTime,

`Age` UInt8,

`Sex` UInt8,

`Income` UInt8,

`Interests` UInt16,

`Robotness` UInt8,

`GeneralInterests` Array(UInt16),

`RemoteIP` UInt32,

`RemoteIP6` FixedString(16),

`WindowName` Int32,

`OpenerName` Int32,

`HistoryLength` Int16,

`BrowserLanguage` FixedString(2),

`BrowserCountry` FixedString(2),

`SocialNetwork` String,

`SocialAction` String,

`HTTPError` UInt16,

`SendTiming` Int32,

`DNSTiming` Int32,

`ConnectTiming` Int32,

`ResponseStartTiming` Int32,

`ResponseEndTiming` Int32,

`FetchTiming` Int32,

`RedirectTiming` Int32,

`DOMInteractiveTiming` Int32,

`DOMContentLoadedTiming` Int32,

`DOMCompleteTiming` Int32,

`LoadEventStartTiming` Int32,

`LoadEventEndTiming` Int32,

`NSToDOMContentLoadedTiming` Int32,

`FirstPaintTiming` Int32,

`RedirectCount` Int8,

`SocialSourceNetworkID` UInt8,

`SocialSourcePage` String,

`ParamPrice` Int64,

`ParamOrderID` String,

`ParamCurrency` FixedString(3),

`ParamCurrencyID` UInt16,

`GoalsReached` Array(UInt32),

`OpenstatServiceName` String,

`OpenstatCampaignID` String,

`OpenstatAdID` String,

`OpenstatSourceID` String,

`UTMSource` String,

`UTMMedium` String,

`UTMCampaign` String,

`UTMContent` String,

`UTMTerm` String,

`FromTag` String,

`HasGCLID` UInt8,

`RefererHash` UInt64,

`URLHash` UInt64,

`CLID` UInt32,

`YCLID` UInt64,

`ShareService` String,

`ShareURL` String,

`ShareTitle` String,

`ParsedParams` Nested(Key1 String,Key2 String,Key3 String,Key4 String,Key5 String,ValueDouble Float64),

`IslandID` FixedString(16),

`RequestNum` UInt32,

`RequestTry` UInt8

)

ENGINE = ReplicatedMergeTree('/clickhouse/tables/{shard}/{database}/hits_local', '{replica}')

PARTITION BY toYYYYMM(EventDate)

ORDER BY (CounterID, EventDate, intHash32(UserID))

SAMPLE BY intHash32(UserID);The {shard} and {replica} placeholders are macros that EMR automatically generates for each ClickHouse cluster node. They map to each node's shard number and replica name, so you can use them directly without modification.

Step 4: Create a distributed table

Create a distributed table as a query layer over the local replicated tables. A distributed table does not store data — it routes queries and writes across the underlying local tables on each node. The rand() function distributes incoming writes randomly across all nodes:

CREATE TABLE demo.hits_all ON CLUSTER cluster_emr AS demo.hits_local

ENGINE = Distributed(cluster_emr, demo, hits_local, rand());Step 5: Download the sample dataset

Exit the ClickHouse client, then download the official ClickHouse sample dataset:

curl https://datasets.clickhouse.com/hits/tsv/hits_v1.tsv.xz | unxz --threads=`nproc` > hits_v1.tsvStep 6: Import data

From the directory where hits_v1.tsv is stored, insert the data into the distributed table:

clickhouse-client -h core-1-1 --query "INSERT INTO demo.hits_all FORMAT TSV" --max_insert_block_size=100000 < hits_v1.tsvData is written to the distributed table and distributed randomly to the local tables on each node.

Step 7: Verify the data distribution

Restart the ClickHouse client, then check the total row count across the cluster:

clickhouse-client -h core-1-1 -mQuery the distributed table to confirm all rows were imported:

SELECT count(*) FROM demo.hits_all;Because writes are distributed randomly, each node holds a different share of the total rows. To check the local row count on core-1-1:

SELECT count(*) FROM demo.hits_local;To check the local row count on another node, open a new client session connected to that node:

clickhouse-client -h core-1-2 -mThen run the same query:

SELECT count(*) FROM demo.hits_local;The local counts across all nodes differ, but their sum equals the total returned by demo.hits_all.

What's next

-

ClickHouse documentation — learn more about the

ReplicatedMergeTreeengine, theDistributedengine, and query optimization.