Use this guide to diagnose and resolve common Hive job failures on E-MapReduce (EMR), including out-of-memory (OOM) errors, Hive Metastore failures, User-Defined Function (UDF) conflicts, engine compatibility issues, and known open source defects.

Locate the root cause

Before investigating specific errors, identify which logs contain the failure details.

Hive client logs — check these first for syntax errors and client-side exceptions:

Hive CLI jobs: /tmp/hive/$USER/hive.log or /tmp/$USER/hive.log on the cluster or gateway node

Hive Beeline or Java Database Connectivity (JDBC) jobs: the HiveServer2 log directory, typically /var/log/emr/hive or /mnt/disk1/log/hive
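Once a log has been copied to a file, a short script can point to the relevant section of this guide. The following is a minimal sketch, not an official tool: the signature strings and section titles are taken from this guide, while the function name match_sections and the table layout are hypothetical. More specific signatures are listed before generic ones.

```python
# Error signatures quoted in this guide, mapped to the section that covers them.
# match_sections is a hypothetical helper name, not part of Hive or EMR.
SIGNATURES = [
    ("MapTask$MapOutputBuffer.init",
     "OOM error caused by an oversized sort buffer"),
    ("GroupByOperator.updateAggregations",
     "OOM error caused by GROUP BY statements"),
    ("Container killed by YARN for exceeding memory limits",
     "Container killed by YARN for exceeding memory limits"),
    ("java.lang.OutOfMemoryError",
     "OOM error due to insufficient container memory"),
    ("SocketTimeoutException: Read timeout",
     "Dropping a large partitioned table times out"),
    ("could not be cleaned up",
     "INSERT OVERWRITE into a dynamic partition fails with a directory cleanup error"),
    ("java.net.UnknownHostException",
     "java.net.UnknownHostException when reading or deleting a Hive table"),
    ("Was expecting dummy store operator",
     "Hive throws an IllegalStateException on Tez"),
    ("Too many counters",
     'Error: "Too many counters: 121 max=120"'),
]


def match_sections(log_text):
    """Return the guide sections whose signature appears in log_text."""
    found = []
    for needle, section in SIGNATURES:
        if needle in log_text and section not in found:
            found.append(section)
    return found


sample = (
    "Error running child: java.lang.OutOfMemoryError: Java heap space\n"
    "  at org.apache.hadoop.mapred.MapTask$MapOutputBuffer.init(MapTask.java:986)"
)
for title in match_sections(sample):
    print(title)
```

A log can match more than one entry. Treat the first match as the most specific candidate and the later ones as fallbacks.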

YARN application logs — check these for task-level failures:

yarn logs -applicationId application_xxx_xxx -appOwner userName

Memory-related issues

OOM error due to insufficient container memory

Error: java.lang.OutOfMemoryError: GC overhead limit exceeded or java.lang.OutOfMemoryError: Java heap space

Increase the container memory for the execution engine running your Hive job.

Hive on MapReduce (MR)

On the Configure tab of the YARN service page in the EMR console, click the mapred-site.xml tab and increase the mapper and reducer memory:

mapreduce.map.memory.mb=4096

mapreduce.reduce.memory.mb=4096

Also update the JVM heap size to 80% of the container memory:

mapreduce.map.java.opts=-Xmx3276m

mapreduce.reduce.java.opts=-Xmx3276m

Keep all other existing options in the java.opts parameters unchanged.

Hive on Tez

If the Tez container memory is insufficient, click the hive-site.xml tab on the Configure tab of the Hive service page and increase hive.tez.container.size:

hive.tez.container.size=4096

If the Tez ApplicationMaster memory is insufficient, click the tez-site.xml tab on the Configure tab of the Tez service page and increase tez.am.resource.memory.mb:

tez.am.resource.memory.mb=4096

Hive on Spark

On the Configure tab of the Spark service page, click the spark-defaults.conf tab and increase spark.executor.memory:

spark.executor.memory=4g

Container killed by YARN for exceeding memory limits

Error: Container killed by YARN for exceeding memory limits

Cause: The total memory used by the task — including JVM heap memory, JVM off-heap memory, and child process memory — exceeds what the job requested from YARN. The most common cause is a mismatch between the JVM heap size and the YARN container memory. For example, if the Map task JVM heap is set to 4 GB (mapreduce.map.java.opts=-Xmx4g) but the YARN container memory is only 3 GB (mapreduce.map.memory.mb=3072), the YARN NodeManager kills the container.

Fix:

Hive on MR: Increase mapreduce.map.memory.mb and mapreduce.reduce.memory.mb to at least 1.25x the corresponding -Xmx values.

Hive on Spark: Increase spark.executor.memoryOverhead to at least 25% of spark.executor.memory.
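The sizing rules in this guide (heap at 80% of the container, container at least 1.25x the -Xmx value, overhead at least 25% of executor memory) are simple arithmetic. The following sketch shows one way to compute them. The function names are hypothetical, and the percentages are the ones stated in this guide, not values read from a cluster.

```python
import math


def heap_opt_for_container(container_mb, heap_percent=80):
    """Return a -Xmx option sized to heap_percent of the container memory."""
    return "-Xmx%dm" % (container_mb * heap_percent // 100)


def min_container_mb(xmx_mb, factor=1.25):
    """Smallest container size (MB) that is at least factor x the heap."""
    return math.ceil(xmx_mb * factor)


def min_overhead_mb(executor_memory_mb, fraction=0.25):
    """Smallest spark.executor.memoryOverhead (MB) for the given executor memory."""
    return math.ceil(executor_memory_mb * fraction)


def container_fits_heap(container_mb, xmx_mb, factor=1.25):
    """True if the container leaves the recommended margin above the heap."""
    return container_mb >= xmx_mb * factor


# Values from this guide: a 4096 MB container, and the 4 GB heap / 3 GB
# container mismatch described above.
print(heap_opt_for_container(4096))       # -Xmx3276m
print(container_fits_heap(3072, 4096))    # False
print(min_container_mb(4096))             # 5120
print(min_overhead_mb(4096))              # 1024
```

The 80% and 1.25x rules express the same margin from opposite directions, since 1 / 0.8 = 1.25.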

OOM error caused by an oversized sort buffer

Error:

Error running child: java.lang.OutOfMemoryError: Java heap space

at org.apache.hadoop.mapred.MapTask$MapOutputBuffer.init(MapTask.java:986)

Cause: The sort buffer is too large relative to the container memory. For example, if the container is allocated 1,300 MB but the sort buffer is set to 1,024 MB, there is not enough memory left for the task to run.

Fix: Either increase the container memory or reduce the sort buffer size:

Hive on Tez: tez.runtime.io.sort.mb

Hive on MR: mapreduce.task.io.sort.mb
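To see why the example above fails, it helps to estimate how much heap remains after the sort buffer is allocated. This sketch assumes the heap is 80% of the container, as recommended earlier in this guide. The actual heap depends on the -Xmx value in use, and the function name is hypothetical.

```python
def sort_buffer_headroom_mb(container_mb, sort_mb, heap_percent=80):
    """Estimate heap (MB) left for the task after the sort buffer is allocated.

    Assumes the JVM heap is heap_percent of the container memory.
    """
    heap_mb = container_mb * heap_percent // 100
    return heap_mb - sort_mb


# Example from this guide: 1,300 MB container, 1,024 MB sort buffer.
print(sort_buffer_headroom_mb(1300, 1024))  # 16

# After raising the container to 4096 MB with the same sort buffer.
print(sort_buffer_headroom_mb(4096, 1024))  # 2252
```

A headroom near zero or below zero suggests that either the container is too small or the sort buffer is too large for the task.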

OOM error caused by GROUP BY statements

Error:

java.lang.OutOfMemoryError: GC overhead limit exceeded

at org.apache.hadoop.hive.ql.exec.GroupByOperator.updateAggregations(GroupByOperator.java:611)

Cause: The hash tables generated by GROUP BY statements consume excessive memory.

Fix: Use any combination of the following approaches:

Reduce the input split size to 128 MB or smaller to increase parallelism. Set mapreduce.input.fileinputformat.split.maxsize to 134217728 (128 MB) or 67108864 (64 MB).

Increase the concurrency of mappers and reducers.

Increase the container memory. See OOM error due to insufficient container memory.
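The split size is specified in bytes, so it is easy to mistype. The sketch below converts megabytes to the byte values used above and gives a rough estimate of how many splits an input produces. The estimate is an assumption for illustration only: the real split count also depends on file boundaries and the input format. The function names are hypothetical.

```python
MB = 1024 * 1024


def split_size_bytes(size_mb):
    """Convert a split size in MB to the byte value the parameter expects."""
    return size_mb * MB


def estimated_splits(input_bytes, split_bytes):
    """Rough upper-level estimate: input size divided by split size, rounded up."""
    return -(-input_bytes // split_bytes)


print(split_size_bytes(128))  # 134217728
print(split_size_bytes(64))   # 67108864

# Hypothetical 10 GB input: halving the split size doubles the split count.
ten_gb = 10 * 1024 * MB
print(estimated_splits(ten_gb, split_size_bytes(128)))  # 80
print(estimated_splits(ten_gb, split_size_bytes(64)))   # 160
```

More splits mean each mapper builds a smaller hash table, which is why reducing the split size relieves memory pressure in GROUP BY.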

OOM error when reading Snappy-compressed files

Cause: Standard Snappy files written by services such as Simple Log Service (SLS) use a different format from Hadoop Snappy files. EMR defaults to Hadoop Snappy format, which causes an OOM error when processing standard Snappy files.

Fix: Set the following parameter for the Hive job:

set io.compression.codec.snappy.native=true;

Hive Metastore issues

Dropping a large partitioned table times out

Error:

FAILED: Execution Error, return code 1 from org.apache.hadoop.hive.ql.exec.DDLTask.

org.apache.thrift.transport.TTransportException: java.net.SocketTimeoutException: Read timeout

Cause: The table has too many partitions. The drop operation takes longer than the Hive client's socket timeout for connecting to the Hive Metastore.

Fix:

On the Configure tab of the Hive service page in the EMR console, click the hive-site.xml tab and increase the Hive client socket timeout:

hive.metastore.client.socket.timeout=1200s

Drop partitions in batches using a condition-based statement. Repeat as needed:

ALTER TABLE [TableName] DROP IF EXISTS PARTITION (ds<='20220720');
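When a table has many partitions, the batch statements can be generated rather than written by hand. The following sketch assumes a string partition column named ds in yyyymmdd format, as in the statement above. The table name, date range, and 30-day step are hypothetical and should be adjusted so that each batch finishes within the socket timeout.

```python
from datetime import date, timedelta


def batch_drop_statements(table, first_day, last_day, step_days=30):
    """Build ALTER TABLE statements whose ds cutoff advances step_days at a time.

    Each statement drops every partition up to its cutoff, so running them in
    order removes the partitions in increments instead of all at once.
    """
    template = "ALTER TABLE %s DROP IF EXISTS PARTITION (ds<='%s');"
    statements = []
    cutoff = first_day + timedelta(days=step_days - 1)
    while cutoff < last_day:
        statements.append(template % (table, cutoff.strftime("%Y%m%d")))
        cutoff += timedelta(days=step_days)
    # Final statement uses the requested end date exactly.
    statements.append(template % (table, last_day.strftime("%Y%m%d")))
    return statements


# Hypothetical table and date range.
for stmt in batch_drop_statements("my_tbl", date(2022, 5, 1), date(2022, 7, 20)):
    print(stmt)
```

Review the generated statements before running them, because the comparison on ds is a string comparison and only orders correctly for zero-padded yyyymmdd values.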

INSERT OVERWRITE into a dynamic partition fails with a directory cleanup error

Error: When running INSERT OVERWRITE into a dynamic partition, the following error appears in the HiveServer2 logs:

Error in query: org.apache.hadoop.hive.ql.metadata.HiveException: Directory oss://xxxx could not be cleaned up.;

Cause: The metadata records a partition, but the corresponding directory does not exist in the storage system. The cleanup step fails because of this inconsistency.

Fix: Resolve the metadata inconsistency and run the job again.

java.net.UnknownHostException when reading or deleting a Hive table

Error: java.lang.IllegalArgumentException: java.net.UnknownHostException: emr-header-1.xxx

Cause: If the database was created using Data Lake Formation (DLF) or a unified metastore in the old EMR console, its initial path points to the Hadoop Distributed File System (HDFS) of the original cluster — for example, hdfs://master-1-1.xxx:9000/user/hive/warehouse/test.db or hdfs://emr-header-1.cluster-xxx:9000/user/hive/warehouse/test.db. When you access that database from a cluster in the new EMR console, the new cluster cannot connect to the old cluster's HDFS. If the old cluster has been released, the connection fails with UnknownHostException.

Fix: Choose the approach that matches your data situation.

If the data is temporary or no longer needed: Change the table location to an Object Storage Service (OSS) path and then drop the table or database.

-- Drop a table

ALTER TABLE test_tbl SET LOCATION 'oss://bucket/not/exists';

DROP TABLE test_tbl;

-- Drop a partition

ALTER TABLE test_pt_tbl PARTITION (pt=xxx) SET LOCATION 'oss://bucket/not/exists';

ALTER TABLE test_pt_tbl DROP PARTITION (pt=xxx);

-- Drop a database

ALTER DATABASE test_db SET LOCATION 'oss://bucket/not/exists';

DROP DATABASE test_db;

If the data is valid and must be preserved: Migrate the data from HDFS to OSS and recreate the table.

hadoop fs -cp hdfs://emr-header-1.xxx/old/path oss://bucket/new/path

hive -e "CREATE TABLE new_tbl LIKE old_tbl LOCATION 'oss://bucket/new/path'"

UDF and third-party package issues

Conflict caused by a third-party package in the Hive lib directory

Cause: A third-party JAR was placed in $HIVE_HOME/lib, or the original Hive JAR was replaced.

Fix:

If a third-party package was added to $HIVE_HOME/lib, remove it.

If the original Hive JAR was replaced, restore it.

Hive fails to use the reflect function

Cause: Ranger authentication is enabled and reflect is on the blocklist.

Fix: Remove reflect from the UDF blocklist in hive-site.xml:

hive.server2.builtin.udf.blacklist=empty_blacklist

Slow job caused by a custom UDF

Cause: If a job runs slowly with no error output, the bottleneck is often a poorly performing custom UDF.

Fix: Capture a thread dump of the Hive task to identify the slow UDF, then optimize its implementation.

Exception when parsing the grouping() function

Error: grouping() requires at least 2 argument, got 1

Cause: This is a known open source Hive bug. Hive parses the grouping() function in a case-sensitive manner. Using lowercase grouping() causes an argument parsing failure.

Fix: Replace grouping() with GROUPING() in the SQL statement.

Engine compatibility issues

Different query results when running Hive on Spark due to time zone mismatch

Symptom: Timestamp-related queries return different results on Hive and on Spark, because Hive uses UTC while Spark uses the local time zone by default.

Fix: Set the Spark session time zone to UTC. Add the following to the Spark SQL session or to the Spark configuration file:

spark.sql.session.timeZone=UTC

Known Hive version defects

Hive jobs run slowly on Spark when dynamic resource allocation is enabled

Cause: Due to a known open source Hive defect, setting spark.dynamicAllocation.enabled to true when connecting to Spark via Beeline causes the shuffle partition count to default to 1, so the shuffle stage runs with almost no parallelism.

Fix: Disable dynamic resource allocation for Hive on Spark, or run the job on Tez instead.

spark.dynamicAllocation.enabled=false

Tez throws a RuntimeException when hive.optimize.dynamic.partition.hashjoin is set to true

Error:

java.lang.RuntimeException: java.lang.RuntimeException: cannot find field _col1 from [0:key, 1:value]

at org.apache.hadoop.hive.ql.exec.tez.TezProcessor.initializeAndRunProcessor(TezProcessor.java:296)

Cause: Known open source Hive defect.

Fix: Set hive.optimize.dynamic.partition.hashjoin to false:

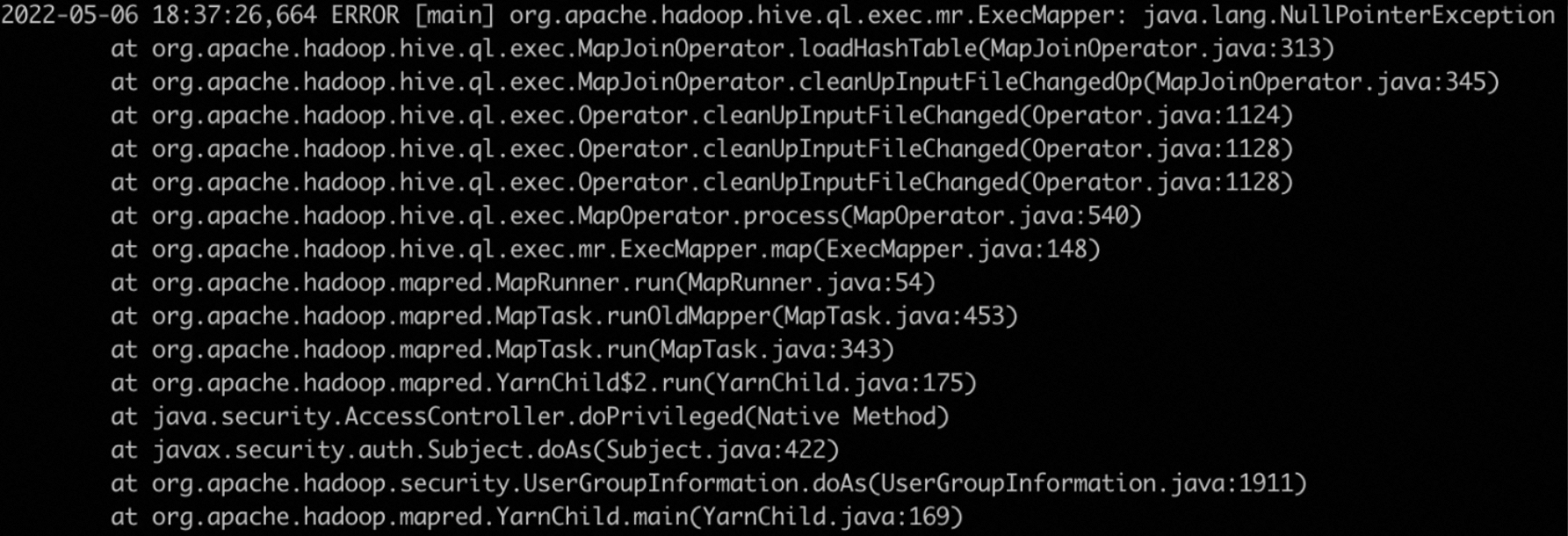

hive.optimize.dynamic.partition.hashjoin=false

MapJoinOperator throws a NullPointerException

Error:

Cause: hive.auto.convert.join.noconditionaltask is set to true.

Fix: Set hive.auto.convert.join.noconditionaltask to false:

hive.auto.convert.join.noconditionaltask=false

Hive throws an IllegalStateException on Tez

Error:

java.lang.RuntimeException: java.lang.IllegalStateException: Was expecting dummy store operator but found: FS[17]

at org.apache.hadoop.hive.ql.exec.tez.TezProcessor.initializeAndRunProcessor(TezProcessor.java:296)

at org.apache.hadoop.hive.ql.exec.tez.TezProcessor.run(TezProcessor.java:250)

Cause: Known open source Hive defect triggered when tez.am.container.reuse.enabled is set to true.

Fix: Disable container reuse for the Hive job:

set tez.am.container.reuse.enabled=false;

Other issues

SELECT COUNT(1) returns 0

Cause: Hive uses cached table statistics to answer COUNT(1) queries. If the statistics are stale or inaccurate, the result can be 0.

Fix: Use either approach:

Bypass statistics for the query:

hive.compute.query.using.stats=false

Refresh the table statistics:

ANALYZE TABLE <table_name> COMPUTE STATISTICS;

Error when submitting a Hive job on a self-managed ECS instance

Hive jobs must be submitted from an EMR gateway cluster or by using EMR-CLI, not from a self-managed Elastic Compute Service (ECS) instance. For setup instructions, see Use EMR-CLI to deploy a gateway.

Job failure caused by data skew

Symptoms:

Shuffle data exhausts available disk space

Some tasks take significantly longer than others

OOM errors occur in specific tasks or containers

Fix:

Enable skew join optimization:

set hive.optimize.skewjoin=true;

Increase the concurrency of mappers and reducers.

Increase the container memory. See OOM error due to insufficient container memory.
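To confirm the "some tasks take significantly longer than others" symptom before changing settings, task durations can be compared against the median. This is a minimal sketch under stated assumptions: the durations are collected by hand (for example from the job's task list), and the 3x threshold and the function name are hypothetical choices, not values defined by Hive or YARN.

```python
from statistics import median


def skewed_tasks(durations_s, ratio=3.0):
    """Return indexes of tasks whose duration exceeds ratio x the median."""
    if not durations_s:
        return []
    mid = median(durations_s)
    if mid <= 0:
        return []
    return [i for i, d in enumerate(durations_s) if d > ratio * mid]


# Hypothetical reducer durations in seconds: one task is far slower.
durations = [60, 62, 58, 61, 600]
print(skewed_tasks(durations))  # [4]
```

If the same few tasks stand out across runs, the keys they process are likely skewed, and the skew join setting above is worth trying first.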

Error: "Too many counters: 121 max=120"

Symptom: This error occurs when running a Hive SQL job on Tez or MR.

Cause: The job's counter count (121) exceeds the default maximum (120).

Fix: On the Configure tab of the YARN service page in the EMR console, search for mapreduce.job.counters.max, increase its value, and resubmit the Hive job. If the job was submitted via Beeline or JDBC, restart HiveServer2 after updating the configuration.