Use a self-hosted RDS for MySQL database or an ApsaraDB RDS for MySQL database as the Hive metastore backend for E-MapReduce (EMR) DataLake, Custom, and Hadoop clusters. Unlike the default embedded metastore, an external database persists metadata across cluster lifecycles and can be shared across multiple clusters.

Prerequisites

Before you begin, ensure that you have:

An ApsaraDB RDS for MySQL instance. For more information, see Create an ApsaraDB RDS for MySQL instance.

Network connectivity between the EMR cluster's virtual private cloud (VPC) and the RDS instance. See Network requirements below.

Network requirements

Before creating the EMR cluster, confirm that the two resources can reach each other over the private network.

Same VPC

When the EMR cluster and the RDS for MySQL instance are in the same VPC, they can communicate over the private network without additional network configuration. Add the IPv4 CIDR block of the VPC where the EMR cluster will be created to the RDS for MySQL whitelist. After the whitelist is updated, the two resources can connect.

Different VPCs

Establish connectivity first using a VPC peering connection or another network method. For more information, see Use VPC peering connections for private network communication between VPCs. Then add the IPv4 CIDR block of the EMR cluster's VPC to the RDS for MySQL whitelist.
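A whitelist entry is a CIDR block, so you may want to confirm that the private IP address of an EMR node actually falls inside the block you added. The following Bash sketch performs only that arithmetic check locally. It does not query RDS or test real connectivity. The helper names `ip_to_int` and `ip_in_cidr` and the example addresses are hypothetical.

```bash
#!/usr/bin/env bash
# Sketch: check whether an IPv4 address falls inside a whitelist entry.
# ip_to_int and ip_in_cidr are hypothetical helpers, not EMR or RDS tools.

# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  local a b c d
  IFS=. read -r a b c d <<< "$1"
  # 10# forces base 10 so octets such as 08 are not parsed as octal.
  echo $(( (10#$a << 24) | (10#$b << 16) | (10#$c << 8) | 10#$d ))
}

# Return 0 if the IP in $1 is inside the entry in $2, 1 otherwise.
# A bare IP address in $2 is treated as a /32 entry.
ip_in_cidr() {
  local ip=$1 cidr=$2
  [[ $cidr == */* ]] || cidr="$cidr/32"
  local net=${cidr%/*} prefix=${cidr#*/}
  # Build the netmask; Bash arithmetic is 64-bit, so mask back to 32 bits.
  local mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  (( ($(ip_to_int "$ip") & mask) == ($(ip_to_int "$net") & mask) ))
}

# Example: is an EMR node at 192.168.10.5 covered by the entry 192.168.0.0/16?
if ip_in_cidr 192.168.10.5 192.168.0.0/16; then
  echo "covered by whitelist entry"
else
  echo "not covered"
fi
```

A passing check only shows that the address is inside the entry. The VPC peering or other network path still has to be in place for traffic to reach the RDS instance.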

Step 1: Prepare a metadatabase

Create a database in the RDS for MySQL instance. For more information, see Create a database.

Create a standard account and grant it read and write permissions on the database. For more information, see Create an account.

Save the username and password — you will enter them when creating the cluster in Step 2.

Obtain the internal endpoint of the RDS instance:

Add the IPv4 CIDR block of the VPC where the EMR cluster will be created to the RDS for MySQL whitelist. For more information, see Configure an IP address whitelist.

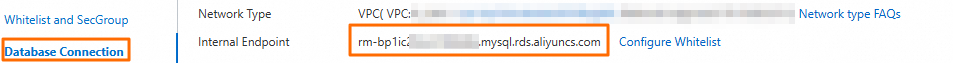

On the instance details page, click Database Connection in the left-side navigation pane.

On the Database Connection page, click the internal endpoint address to copy it.

Save the internal endpoint — you will use it to build the connection URL in Step 2.

Step 2: Create a cluster

When creating the cluster, go to the Software Configuration step and configure the metastore connection. For all other parameters, see Create a cluster.

The parameters differ by cluster type:

DataLake and Custom clusters

The Metadata parameter is available only when you select the HDFS or OSS-HDFS, YARN, and Hive services.

| Parameter | Value |
|---|---|
| Metadata | Select Self-managed RDS. |
| javax.jdo.option.ConnectionURL | Enter the JDBC connection URL in the format `jdbc:mysql://rm-xxxxxx.mysql.rds.aliyuncs.com/<Database name>`, where `rm-xxxxxx.mysql.rds.aliyuncs.com` is the internal endpoint from Step 1 and `<Database name>` is the name of the database you created. |
| javax.jdo.option.ConnectionUserName | Enter the username from Step 1. |
| javax.jdo.option.ConnectionPassword | Enter the password from Step 1. |

Hadoop clusters

| Parameter | Value |
|---|---|
| RDS Endpoint | Enter the JDBC connection URL in the format `jdbc:mysql://rm-xxxxxx.mysql.rds.aliyuncs.com/<Database name>`, where `rm-xxxxxx.mysql.rds.aliyuncs.com` is the internal endpoint from Step 1 and `<Database name>` is the name of the database you created. |
| RDS Username | Enter the username from Step 1. |
| RDS Password | Enter the password from Step 1. |
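Both cluster types use the same URL format. The Bash sketch below assembles the URL from the two values saved in Step 1 and rejects empty or slash-containing input. `build_jdbc_url` and the database name `hivemeta` are hypothetical, and the endpoint is the placeholder used in the tables. Like the documented format, the URL omits a port.

```bash
#!/usr/bin/env bash
# Sketch: build the value for javax.jdo.option.ConnectionURL (DataLake and Custom
# clusters) or RDS Endpoint (Hadoop clusters). build_jdbc_url is hypothetical.
build_jdbc_url() {
  local endpoint=$1 db=$2
  if [[ -z $endpoint || -z $db ]]; then
    echo "usage: build_jdbc_url <internal-endpoint> <database-name>" >&2
    return 1
  fi
  # A slash would split the URL into the wrong host and database parts.
  if [[ $endpoint == */* || $db == */* ]]; then
    echo "error: endpoint and database name must not contain '/'" >&2
    return 1
  fi
  printf 'jdbc:mysql://%s/%s\n' "$endpoint" "$db"
}

# Placeholder endpoint; hivemeta is a hypothetical database name.
build_jdbc_url rm-xxxxxx.mysql.rds.aliyuncs.com hivemeta
```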

(Optional) Step 3: Initialize the Metastore service

Skip this step if you are using a DataLake or Custom cluster — those cluster types initialize the Hive metadatabase automatically during creation.

Initialize the Metastore service if either of the following applies:

You created a Hadoop cluster running EMR V3.38.X or earlier, EMR V4.9.X or earlier, or EMR V5.4.X or earlier.

You changed the metadata storage of an existing cluster to an RDS for MySQL database.
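The two conditions, combined with the note that DataLake and Custom clusters skip this step, can be expressed as a small Bash check. This is a sketch under stated assumptions: `needs_metastore_init` is a hypothetical helper, version strings are assumed to look like `3.38.1` or `V3.38.1`, and versions outside the V3, V4, and V5 ranges listed above return a separate status (2) rather than a guess.

```bash
#!/usr/bin/env bash
# Sketch of the Step 3 decision rule. needs_metastore_init is hypothetical.
# Usage: needs_metastore_init <Hadoop|DataLake|Custom> <EMR version> <yes|no>
#   The third argument says whether the metadata storage of an existing
#   cluster was changed to RDS for MySQL.
# Returns 0 if initialization is needed, 1 if not, 2 if the version is
# outside the ranges this rule describes.
needs_metastore_init() {
  local cluster_type=$1 version=${2#[Vv]} changed=$3
  local major minor rest
  # DataLake and Custom clusters initialize the metadatabase during creation.
  [[ $cluster_type == Hadoop ]] || return 1
  # An existing cluster whose metadata storage was switched needs initialization.
  [[ $changed == yes ]] && return 0
  IFS=. read -r major minor rest <<< "$version"
  [[ $major =~ ^[0-9]+$ && $minor =~ ^[0-9]+$ ]] || return 2
  case $major in
    3) (( 10#$minor <= 38 )) ;;   # V3.38.X or earlier
    4) (( 10#$minor <= 9 )) ;;    # V4.9.X or earlier
    5) (( 10#$minor <= 4 )) ;;    # V5.4.X or earlier
    *) return 2 ;;                # not covered by the documented rule
  esac
}

if needs_metastore_init Hadoop V3.38.1 no; then
  echo "initialize the Metastore service"
else
  echo "skip Step 3"
fi
```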

Before initialization, the HiveMetaStore and HiveServer2 components of Hive and the ThriftServer component of Spark may be in an abnormal state. They recover automatically after initialization completes.

To initialize the Metastore service:

Log on to the master node of the cluster over SSH. For more information, see Log on to a cluster.

Switch to the hadoop user:

su - hadoop

Initialize the Metastore service:

schematool -initSchema -dbType mysql

After the command completes, the RDS for MySQL database is ready to use as the Hive metadatabase.
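If you script this step, a few guard checks make failures easier to diagnose. The Bash sketch below wraps the same `schematool -initSchema -dbType mysql` command. It first checks that it runs as the hadoop user and that `schematool` is on the PATH, and then runs `schematool -info -dbType mysql` to print the connection and schema details. `init_hive_metastore` is a hypothetical helper, and the sketch assumes that `schematool` picks up the connection settings configured for the cluster in Step 2.

```bash
#!/usr/bin/env bash
# Sketch: initialize the Hive metastore schema with basic guard checks.
# init_hive_metastore is hypothetical; call it on the master node after `su - hadoop`.
init_hive_metastore() {
  # Step 3 runs schematool as the hadoop user.
  if [[ $(id -un) != hadoop ]]; then
    echo "error: switch to the hadoop user first (su - hadoop)" >&2
    return 1
  fi
  if ! command -v schematool >/dev/null 2>&1; then
    echo "error: schematool not found in PATH" >&2
    return 1
  fi
  # Create the Hive metastore tables in the RDS for MySQL database.
  if ! schematool -initSchema -dbType mysql; then
    echo "error: schema initialization failed" >&2
    return 1
  fi
  # Print connection and schema version details to confirm the result.
  schematool -info -dbType mysql
}

# Usage on the master node, as the hadoop user:
#   init_hive_metastore
```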