When cluster utilization stays low for an extended period, you can remove nodes from a Core or Task node group to reduce costs.

Pay-as-you-go Task node groups can be scaled in directly from the EMR console. For all other node group types — pay-as-you-go Core, subscription Task, and subscription Core — follow the steps in this topic.

Limitations

- HDFS data loss risk: If the number of Core nodes equals the number of Hadoop Distributed File System (HDFS) replicas, do not scale in the Core node group. After the removal, HDFS would have fewer DataNodes than replicas and could not keep every block fully replicated.

- Old-version HA clusters: If your cluster is an old-version high availability (HA) Hadoop cluster with 2 master nodes, do not remove the emr-worker-1 node, because ZooKeeper is deployed on that node.
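Before you scale in the Core node group, you can check the first limitation from the master node. The following shell sketch is a pair of hypothetical helpers, not EMR tools. It assumes that the default dfs.replication value applies to your data (individual files can override it) and that `hdfs dfsadmin -report -live` prints a summary line of the form `Live datanodes (N):`.

```shell
#!/usr/bin/env bash
# Hypothetical pre-check helpers for the HDFS replica limitation.

# live_datanode_count: read `hdfs dfsadmin -report -live` output on stdin
# and print N from the first "Live datanodes (N):" line.
live_datanode_count() {
  sed -n 's/^Live datanodes (\([0-9][0-9]*\)):.*/\1/p' | head -n 1
}

# safe_to_remove_core REPLICATION LIVE [COUNT]
# Succeeds only if removing COUNT Core nodes (default: 1) still leaves at
# least REPLICATION live DataNodes, so every block can keep all its replicas.
safe_to_remove_core() {
  local replication=$1 live=$2 count=${3:-1}
  [ $((live - count)) -ge "$replication" ]
}
```

For example, as the hdfs user: `safe_to_remove_core "$(hdfs getconf -confKey dfs.replication)" "$(hdfs dfsadmin -report -live | live_datanode_count)" && echo "OK to remove one Core node"`. When the live DataNode count equals the replication factor, the check fails, which matches the limitation above.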

Usage notes

- This operation cannot be rolled back. Components cannot be recovered after they are unpublished.

- Evaluate the impact on your workloads before you proceed. Skipping this assessment can cause job scheduling failures and data security risks.

How it works

Manually shrinking a node group is a three-stage process:

- Select a node to remove: identify underutilized nodes by using YARN metrics or the YARN web UI.

- Unpublish components on the node: safely decommission each service component deployed on the node. This step drains in-flight data and migrates it to the remaining nodes before the node is removed.

- Release the ECS instance: after all components are unpublished, release the corresponding ECS instance from the ECS console.

Skipping the second stage (unpublishing components) can interrupt running jobs and put data at risk.

Prerequisites

Before you begin, ensure that you have:

- Access to the EMR console and SSH access to the master node

- (If you use a RAM user) The AliyunECSFullAccess policy attached to the RAM user, which is required to release ECS instances

Step 1: Identify a node to remove

Select a node based on resource utilization. Use one of the following methods.

Method 1: EMR console monitoring

- Go to the Metric Monitoring page.

- On the YARN-Queues dashboard, check the AvailableVCores metric. A consistently high value means that many CPU cores are idle, so consider scaling in the Core or Task node group.

- On the YARN-NodeManagers dashboard, check the AvailableGB metric for each node. A node with persistently high available memory is a good candidate for removal.

Check other metrics in the context of your workload before making a decision.

Method 2: YARN web UI

- Open the YARN ResourceManager web UI and check overall queue resource utilization. Underutilized queues indicate spare capacity that you can scale in.

- Go to the Nodes page. Sort nodes by Nodes Address and identify the node with the largest amount of available memory. That node is a good candidate for removal.

For old-version Hadoop clusters, note the following restrictions:

- Non-HA clusters: do not remove emr-worker-1 or emr-worker-2.

- HA clusters with 2 master nodes: do not remove emr-worker-1.
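If you prefer the command line to the Nodes page, the YARN ResourceManager REST API endpoint /ws/v1/cluster/nodes returns per-node fields such as nodeHostName and availMemoryMB. The following shell sketch picks the node with the most available memory and skips hostnames that match the restrictions above. The function name and the PROTECTED_HOSTS_REGEX variable are hypothetical. By default the sketch protects both emr-worker-1 and emr-worker-2 (the stricter non-HA rule), so adjust the pattern for your cluster type.

```shell
#!/usr/bin/env bash
# Hypothetical helper: pick_scale_in_candidate
# Reads "HOSTNAME AVAIL_MEMORY_MB" lines on stdin and prints the host with the
# most available memory, skipping hosts that match PROTECTED_HOSTS_REGEX.
# The default protects emr-worker-1 and emr-worker-2 (old-version non-HA rule);
# on an HA cluster with 2 master nodes, only emr-worker-1 must be protected.
PROTECTED_HOSTS_REGEX=${PROTECTED_HOSTS_REGEX:-'^emr-worker-[12]$|^emr-worker-[12][.]'}

pick_scale_in_candidate() {
  awk -v protected="$PROTECTED_HOSTS_REGEX" '
    $1 ~ protected { next }
    NF >= 2 && (best == "" || $2 + 0 > max) { best = $1; max = $2 + 0 }
    END { if (best != "") print best }'
}
```

For example, assuming jq is installed and the ResourceManager web UI listens on its default port 8088: `curl -s "http://<ResourceManager-host>:8088/ws/v1/cluster/nodes" | jq -r '.nodes.node[] | select(.state == "RUNNING") | "\(.nodeHostName) \(.availMemoryMB)"' | pick_scale_in_candidate`. Treat the result as a candidate only, and confirm it against the metrics in Method 1 before you continue.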

Step 2: View components deployed on the node

Before unpublishing components, check which ones are deployed on the target node. Go to the Nodes page in the EMR console.

Unpublish each of the following components if they are present on the target node:

| Component | Service | Action |
|---|---|---|
| NodeManager | YARN | Unpublish NodeManager (YARN) |
| DataNode | HDFS | Unpublish DataNode (HDFS) |
| Backend (BE) | StarRocks | Unpublish Backend (StarRocks) |
| HRegionServer | HBase | Unpublish HRegionServer (HBase) |
| DataNode | HBase-HDFS | Unpublish DataNode (HBase-HDFS) |
| JindoStorageService | SmartData (Hadoop clusters) | Unpublish JindoStorageService (SmartData) |

Unpublish NodeManager (YARN)

- Go to the Status tab of the YARN service page.
  - Log on to the EMR console. In the left-side navigation pane, click EMR on ECS.
  - In the top navigation bar, select your region and resource group.
  - On the EMR on ECS page, find the target cluster and click Services in the Actions column.
  - On the Cluster Services page, click Status in the YARN service area.

- Unpublish the NodeManager component on the target node.
  - In the Components list, click > Unpublish in the Actions column for NodeManager.
  - In the dialog box, set Execution Scope to Specific Machine, enter an Execution Reason, and click OK.
  - Click OK to confirm.

- Click Operation History in the upper-right corner to track progress.

Unpublish DataNode (HDFS)

- Log on to the master node via SSH. For details, see Log on to a cluster.

- Switch to the hdfs user and list all NameNodes:

  ```shell
  sudo su - hdfs
  hdfs haadmin -getAllServiceState
  ```

- Log on to a NameNode via SSH and add the hostname of the target node to the dfs.exclude file. Add one node at a time.
  - Hadoop clusters:

    ```shell
    touch /etc/ecm/hadoop-conf/dfs.exclude
    vim /etc/ecm/hadoop-conf/dfs.exclude
    ```

    In vim, press o to insert a new line and enter the hostname:

    ```text
    emr-worker-3.cluster-xxxxx
    emr-worker-4.cluster-xxxxx
    ```

  - Non-legacy Hadoop clusters:

    ```shell
    touch /etc/taihao-apps/hdfs-conf/dfs.exclude
    vim /etc/taihao-apps/hdfs-conf/dfs.exclude
    ```

    In vim, press o to insert a new line and enter the hostname:

    ```text
    core-1-3.c-0894dxxxxxxxxx
    core-1-4.c-0894dxxxxxxxxx
    ```

- On the NameNode, switch to the hdfs user and refresh the node list. HDFS then begins decommissioning the DataNode automatically.

  ```shell
  sudo su - hdfs
  hdfs dfsadmin -refreshNodes
  ```

- Verify that decommissioning is complete:

  ```shell
  hdfs dfsadmin -report
  ```

  When the status shows Decommissioned, all data has been migrated to other nodes and the DataNode is unpublished.
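Editing dfs.exclude in vim and rereading the report by hand works for a single node. As an alternative, the following shell sketch appends a hostname to the exclude file only if it is not already listed, and extracts one node's Decommission Status from the `hdfs dfsadmin -report` output. Both function names are hypothetical, and the parser assumes that the report prints `Hostname:` and `Decommission Status :` lines for each DataNode.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for the DataNode (HDFS) steps above.

# add_exclude_host FILE HOST: append HOST to FILE unless it is already listed.
# grep -x matches whole lines and -F treats the dots in hostnames literally.
add_exclude_host() {
  local file=$1 host=$2
  touch "$file" || return 1
  grep -qxF "$host" "$file" || printf '%s\n' "$host" >> "$file"
}

# decommission_status HOST: read `hdfs dfsadmin -report` output on stdin and
# print the Decommission Status value of HOST (prints nothing if HOST is absent).
decommission_status() {
  awk -v target="$1" '
    /^Hostname: / { current = $2 }
    /^Decommission Status :/ && current == target {
      sub(/^Decommission Status : */, "")
      print
      exit
    }'
}
```

For example, on the NameNode as the hdfs user, run `add_exclude_host /etc/ecm/hadoop-conf/dfs.exclude emr-worker-3.cluster-xxxxx` (use the path for your cluster type), then `hdfs dfsadmin -refreshNodes`, and wait with `until hdfs dfsadmin -report | decommission_status emr-worker-3.cluster-xxxxx | grep -qx Decommissioned; do sleep 60; done`.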

Unpublish Backend (StarRocks)

- Log on to the cluster and connect to StarRocks by using a client. For details, see Quick Start.

- Decommission the Backend (BE) node gracefully:

  ```sql
  ALTER SYSTEM DECOMMISSION backend "be_ip:be_heartbeat_service_port";
  ```

  Replace the placeholders as needed:

  | Placeholder | Description | Where to find it |
  |---|---|---|
  | be_ip | Internal IP address of the BE node | Nodes page in the EMR console |
  | be_heartbeat_service_port | Heartbeat port of the BE node (default: 9050) | Run show backends; |

  If DECOMMISSION is slow, force-remove the BE node by using DROP.

  Important: Only use DROP if the system has three complete replicas.

  ```sql
  ALTER SYSTEM DROP backend "be_ip:be_heartbeat_service_port";
  ```

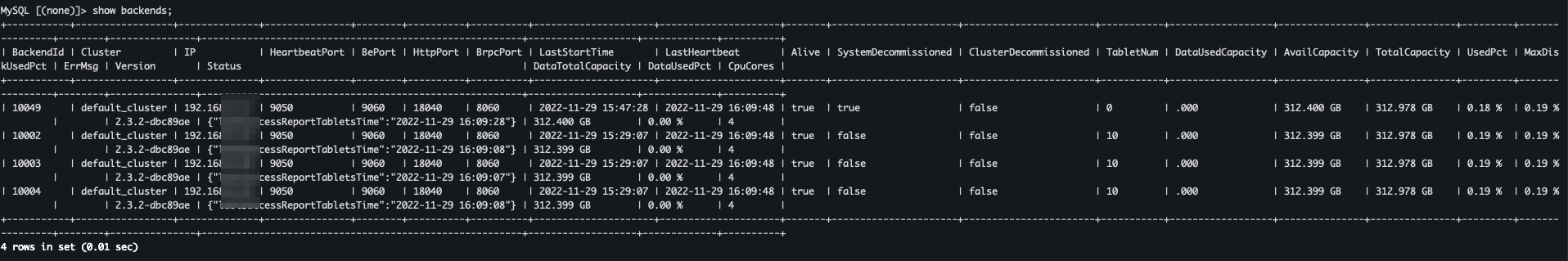

- Check the removal status:

  ```sql
  show backends;
  ```

  - SystemDecommissioned = true: the node is being removed.
  - TabletNum = 0: metadata cleanup is complete.
  - Node no longer listed: removal is successful.
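To check the removal status from a script instead of reading the full show backends output, you can request the vertical (`\G`) format and evaluate the fields listed above for one BE node. The following shell sketch is hypothetical. It assumes that the output contains IP, SystemDecommissioned, and TabletNum fields (column names can differ between StarRocks versions), and it maps them to the states described above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: be_removal_state BE_IP
# Reads `SHOW BACKENDS\G` output on stdin and prints one of:
#   active           SystemDecommissioned is not true
#   decommissioning  SystemDecommissioned = true, tablets still present
#   tablets-drained  SystemDecommissioned = true and TabletNum = 0
#   removed          no row with this IP (the BE is no longer listed)
be_removal_state() {
  awk -v target="$1" '
    # Classify the row collected so far, then reset for the next row.
    function flush() {
      if (ip == target) {
        found = 1
        if (decom == "true" && tablets == "0") state = "tablets-drained"
        else if (decom == "true") state = "decommissioning"
        else state = "active"
      }
      ip = ""; decom = ""; tablets = ""
    }
    # mysql prints a "*** N. row ***" line before each row in vertical format.
    /^\*+ [0-9]+\. row \*+$/ { flush(); next }
    {
      line = $0
      sub(/^ +/, "", line)
      i = index(line, ": ")
      if (i == 0) next
      key = substr(line, 1, i - 1)
      val = substr(line, i + 2)
      if (key == "IP") ip = val
      else if (key == "SystemDecommissioned") decom = val
      else if (key == "TabletNum") tablets = val
    }
    END { flush(); print (found ? state : "removed") }'
}
```

For example, assuming the FE query port is the default 9030: `mysql -h <fe_host> -P 9030 -u root -p -e 'SHOW BACKENDS\G' | be_removal_state <be_ip>`. Repeat the check until it prints removed.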

Unpublish HRegionServer (HBase)

- Go to the Status tab of the HBase service page.
  - Log on to the EMR console. In the left-side navigation pane, click EMR on ECS.
  - In the top navigation bar, select your region and resource group.
  - On the EMR on ECS page, find the target cluster and click Services in the Actions column.
  - On the Cluster Services page, click Status in the HBase service area.

- Stop the HRegionServer component on the target node.
  - In the Components section, click Stop in the Actions column for HRegionServer.
  - In the dialog box, set Execution Scope to Specific Machine, enter an Execution Reason, and click OK.
  - Click OK to confirm.

- Click Operation History in the upper-right corner to track progress.

Unpublish DataNode (HBase-HDFS)

- Log on to the master node via SSH. For details, see Log on to a cluster.

- Switch to the hdfs user and set the environment variable for HBase-HDFS:

  ```shell
  sudo su - hdfs
  export HADOOP_CONF_DIR=/etc/taihao-apps/hdfs-conf/namenode
  ```

- Check the NameNode status:

  ```shell
  hdfs dfsadmin -report
  ```

- Log on to a NameNode via SSH and add the hostname of the target node to the dfs.exclude file. Add one node at a time.

  ```shell
  touch /etc/taihao-apps/hdfs-conf/dfs.exclude
  vim /etc/taihao-apps/hdfs-conf/dfs.exclude
  ```

  In vim, press o to insert a new line and enter the hostname:

  ```text
  core-1-3.c-0894dxxxxxxxxx
  core-1-4.c-0894dxxxxxxxxx
  ```

- On the NameNode, switch to the hdfs user, set the environment variable, and refresh the node list:

  ```shell
  sudo su - hdfs
  export HADOOP_CONF_DIR=/etc/taihao-apps/hdfs-conf/namenode
  hdfs dfsadmin -refreshNodes
  ```

- In the same shell, with HADOOP_CONF_DIR still set, verify that decommissioning is complete:

  ```shell
  hdfs dfsadmin -report
  ```

  When the status shows Decommissioned, the DataNode is unpublished.
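Because the HBase-HDFS commands above depend on HADOOP_CONF_DIR, running them in the same shell as other HDFS commands can point those commands at the wrong NameNode. The following shell sketch scopes the variable to a subshell and combines the exclude-file update with the refresh. The function names and the HBASE_HDFS_CONF_DIR and HBASE_HDFS_EXCLUDE variables are hypothetical; their defaults are the paths used in this section.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for decommissioning a DataNode of the HBase-HDFS service.
# Defaults are the paths used in this section; override them if yours differ.
HBASE_HDFS_CONF_DIR=${HBASE_HDFS_CONF_DIR:-/etc/taihao-apps/hdfs-conf/namenode}
HBASE_HDFS_EXCLUDE=${HBASE_HDFS_EXCLUDE:-/etc/taihao-apps/hdfs-conf/dfs.exclude}

# hbase_hdfs ARGS...: run `hdfs ARGS...` against the HBase-HDFS NameNode.
# The export happens in a subshell, so the caller's HADOOP_CONF_DIR is unchanged.
hbase_hdfs() {
  ( export HADOOP_CONF_DIR="$HBASE_HDFS_CONF_DIR"; hdfs "$@" )
}

# hbase_hdfs_decommission HOST: add HOST to the exclude file once, then ask
# the NameNode to reread it, which starts decommissioning that DataNode.
hbase_hdfs_decommission() {
  local host=$1
  touch "$HBASE_HDFS_EXCLUDE" || return 1
  grep -qxF "$host" "$HBASE_HDFS_EXCLUDE" ||
    printf '%s\n' "$host" >> "$HBASE_HDFS_EXCLUDE"
  hbase_hdfs dfsadmin -refreshNodes
}
```

For example, on the NameNode as the hdfs user, run `hbase_hdfs_decommission core-1-3.c-0894dxxxxxxxxx` and then check progress with `hbase_hdfs dfsadmin -report`.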

Unpublish JindoStorageService (SmartData)

- Go to the Status tab of the SmartData service page.
  - Log on to the EMR console. In the left-side navigation pane, click EMR on ECS.
  - In the top navigation bar, select your region and resource group.
  - On the EMR on ECS page, find the target cluster and click Services in the Actions column.
  - On the Cluster Services page, click Status in the SmartData service area.

- Unpublish the JindoStorageService component on the target node.
  - In the Components list, click > Unpublish in the Actions column for JindoStorageService.
  - In the dialog box, set Execution Scope to Specific Machine, enter an Execution Reason, and click OK.
  - Click OK to confirm.

- Click Operation History in the upper-right corner to track progress.

Step 3: Release the node

To remove a node from an EMR cluster, release its corresponding ECS instance from the ECS console. If you perform this step as a RAM user, the AliyunECSFullAccess policy must be attached to that RAM user.

- Go to the Nodes page.
  - Log on to the EMR console. In the left-side navigation pane, click EMR on ECS.
  - In the top navigation bar, select your region and resource group.
  - On the EMR on ECS page, find the target cluster and click Nodes in the Actions column.

- On the Nodes page, click the ECS instance ID of the node to release. You are redirected to the ECS console.

- Release the instance. For details, see Release an instance.

What's next

- To scale in a Task node group that uses pay-as-you-go or preemptible instances directly from the console, see Scale in a cluster.

- To add nodes to a Core or Task node group, see Scale out a cluster.

- To automatically adjust cluster capacity based on workload, see Auto Scaling.