The Professional Mode of Bots allows you to set conditions in rules for fields such as IP, Referer, and User-Agent to filter access requests. You can monitor, require a slider, or block requests that match these conditions. This section provides examples of fields and how to configure rules.

The examples are for reference only. Configure Bot policies based on your needs.

User-Agent

User-Agent is an important HTTP request header for identifying the access device's operating system, browser type, and version. By setting User-Agent blacklist and whitelist rules, you can control access sources and enhance the security of your acceleration services.

Example

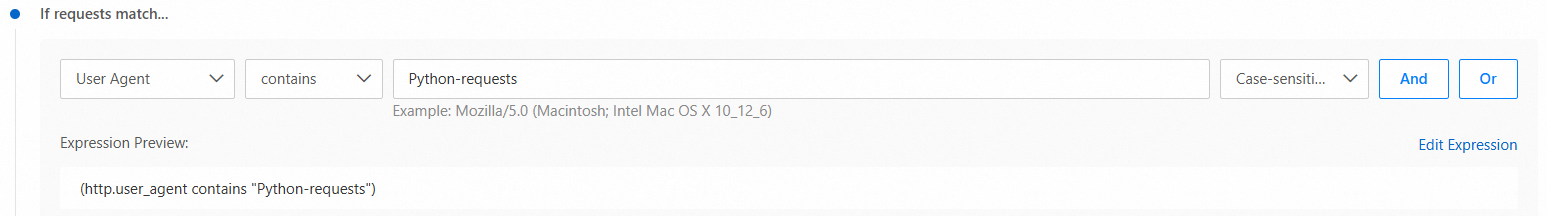

Company A's business resources were targeted by malicious crawlers, leading to a sudden increase in domain bandwidth costs. Analysis showed that these crawler requests used a User-Agent containing Python-requests. To block these requests, they set the following rule conditions:

In the If requests match... area, set the match field to User-Agent, the match operator to contains, and the match value to Python-requests.

In the Then execute... area, turn on the Fake Spider Blocking switch to block search engine crawlers that match the conditions.
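The effect of a contains condition on the User-Agent field can be illustrated with a minimal sketch. This is not how ESA implements the rule. The function name `user_agent_contains` and the sample header values are hypothetical, and the case-insensitive comparison is an assumption made here because the Python requests library sends a lowercase `python-requests/<version>` value.

```python
def user_agent_contains(headers: dict[str, str], needle: str) -> bool:
    """Return True when the User-Agent header contains `needle`."""
    # HTTP header names are case-insensitive, so normalize the keys first.
    normalized = {name.lower(): value for name, value in headers.items()}
    user_agent = normalized.get("user-agent", "")
    # Assumption: the comparison ignores case. Check the product's
    # match operator documentation for the actual behavior.
    return needle.lower() in user_agent.lower()


# Hypothetical sample requests.
crawler = {"User-Agent": "python-requests/2.31.0"}
browser = {"User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"}

print(user_agent_contains(crawler, "Python-requests"))
print(user_agent_contains(browser, "Python-requests"))
```

A request with no User-Agent header does not match in this sketch, because the lookup falls back to an empty string.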

Static request

A static request is a request from a client (such as a browser) for a static file, like audio, video, or image, that is already stored on the server and does not require dynamic processing.
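To make the distinction concrete, the sketch below classifies a URL as static by its file extension. This is only one plausible heuristic, and the document does not state how ESA decides whether a request is static. The extension list and the function name `looks_static` are assumptions chosen for illustration.

```python
from pathlib import PurePosixPath
from urllib.parse import urlsplit

# Hypothetical list of audio, video, and image extensions.
STATIC_EXTENSIONS = {".jpg", ".jpeg", ".png", ".gif", ".webp", ".mp3", ".mp4"}


def looks_static(url: str) -> bool:
    """Guess whether a URL points at a static file, based on its extension."""
    # Use only the path so that query strings do not affect the result.
    path = urlsplit(url).path
    return PurePosixPath(path).suffix.lower() in STATIC_EXTENSIONS


print(looks_static("https://example.com/img/shoe.PNG?w=200"))
print(looks_static("https://example.com/api/price?id=7"))
```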

When the match field is set to Serves Static Resources, the "on" icon means the policies are applied to static requests, while the "off" icon means the policies apply to non-static requests.

Example

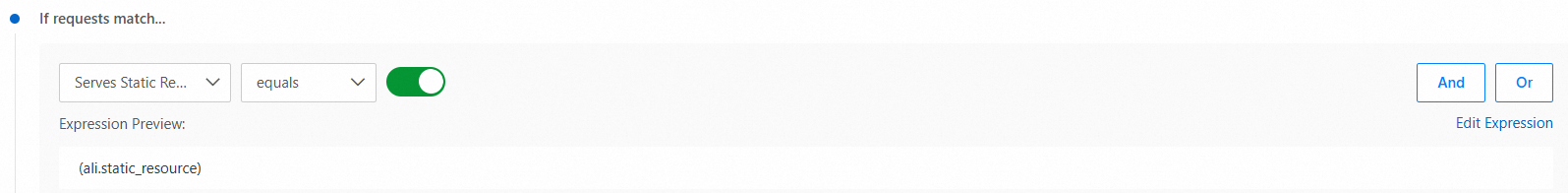

An e-commerce platform found that many bots, disguised as normal users, were making frequent requests for product images. This caused a sudden rise in ESA bandwidth costs. To address this, they set a mitigation policy that can accurately distinguish between legitimate users and malicious bots requesting static resources.

In the If requests match... area, set the match field to Serves Static Resources, the match operator to equals, and set the switch to on.

In the Then execute... area, configure mitigation policies such as Legitimate Bot Management, Bot Characteristic Detection, and Bot Behavior Detection.

JavaScript detection

JavaScript detection works by adding a small, invisible JavaScript snippet to responses for HTML page requests. Requests from non-browser tools that cannot run JavaScript will be blocked. Requests that pass JavaScript detection are allowed to continue.

When the match field is set to JavaScript Verified, the "on" icon means the policies are applied to requests that have passed JavaScript detection, while the "off" icon means the policies apply to requests that have not passed JavaScript detection.

Example

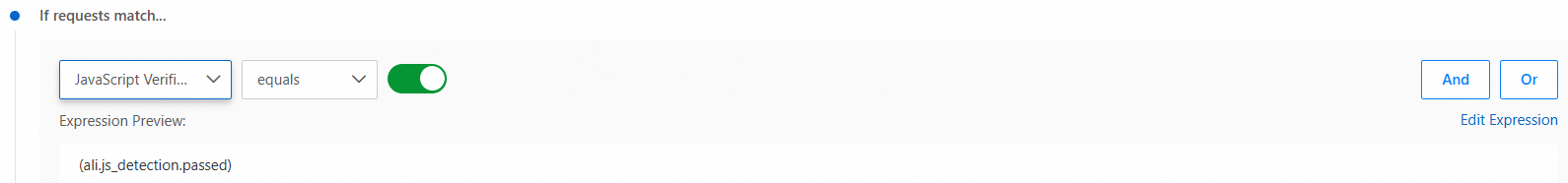

Company B has enabled JavaScript Detection on its website. To bypass Bot management checks for approved search engine crawlers, they need to add these crawlers to the whitelist after they pass JavaScript detection.

In the If requests match... area, set the match field to JavaScript Verified, the match operator to equals, and set the switch to on.

In the Then execute... area, click Legitimate Bot Management and then click Configure on the right to select the search engines that you want to add to the whitelist.

JA3/JA4 fingerprint

JA3 and JA4 are technical fingerprints used to identify SSL/TLS clients. JA3 creates a unique MD5 hash by analyzing the Client Hello packet during the TLS handshake. JA4 supports more protocols and uses a readable, modular string format. This improves both anti-forgery measures and flexibility.
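As a rough illustration of how a JA3 value is derived, the sketch below follows the publicly documented JA3 method: five Client Hello fields are written as decimal numbers, values within a field are joined with `-`, the fields are joined with `,`, and the resulting string is hashed with MD5. The function names and the sample field values are hypothetical, and the sketch assumes the caller has already parsed the Client Hello and removed GREASE values, which JA3 ignores.

```python
import hashlib


def ja3_string(version, ciphers, extensions, curves, point_formats) -> str:
    """Build the JA3 input string from already-parsed Client Hello fields."""
    fields = [ciphers, extensions, curves, point_formats]
    # Values inside a field are joined with "-", fields are joined with ",".
    joined = ["-".join(str(value) for value in field) for field in fields]
    return ",".join([str(version)] + joined)


def ja3_hash(version, ciphers, extensions, curves, point_formats) -> str:
    """Return the JA3 fingerprint: the MD5 hex digest of the JA3 string."""
    raw = ja3_string(version, ciphers, extensions, curves, point_formats)
    return hashlib.md5(raw.encode("ascii")).hexdigest()


# Hypothetical field values, for illustration only.
print(ja3_string(771, [4865, 4866], [0, 10, 11], [29, 23], [0]))
# prints "771,4865-4866,0-10-11,29-23,0"
print(ja3_hash(771, [4865, 4866], [0, 10, 11], [29, 23], [0]))
```

Because the hash depends on how the client's TLS library builds its Client Hello rather than on any header the client chooses to send, changing the User-Agent string alone does not change the fingerprint.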

Example

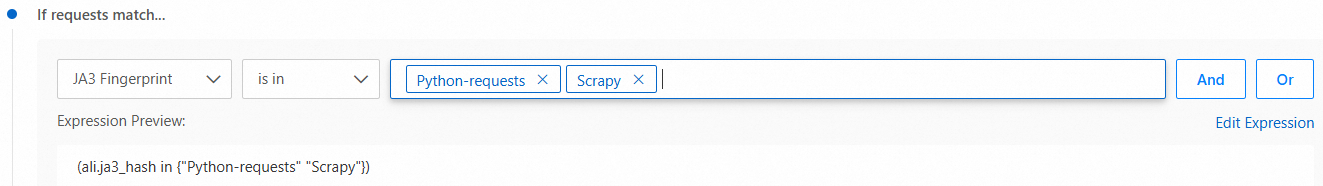

An e-commerce platform's API provides product price and inventory queries. They noticed unusual traffic that increased bandwidth usage. Analysis showed that attackers were using Python crawlers disguised as browsers to access the API, causing data breaches and performance issues. To prevent this, they configured a JA3 fingerprint blacklist rule in Professional Mode to block requests matching known malicious fingerprints, such as fingerprints from Python-requests and Scrapy.

In the If requests match... area, set the match field to JA3 Fingerprint, the match operator to is in, and the match values to Python-requests and Scrapy.

In the Then execute... area, turn on the Fake Spider Blocking switch to quickly block crawlers that match the conditions.
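The is in operator behaves like a set membership test. The sketch below shows the idea under stated assumptions: the two hashes are placeholders rather than real fingerprints of Python-requests or Scrapy, and the names `BLOCKED_JA3` and `is_blocked` are hypothetical.

```python
# Placeholder values only. Replace them with fingerprints observed in
# your own traffic analysis.
BLOCKED_JA3 = {
    "0" * 32,
    "f" * 32,
}


def is_blocked(ja3: str, blocklist: set[str] = BLOCKED_JA3) -> bool:
    """Return True when the request's JA3 fingerprint is in the blocklist."""
    # Normalize so that surrounding spaces or uppercase hex still match.
    return ja3.strip().lower() in blocklist


print(is_blocked("F" * 32))
print(is_blocked("a" * 32))
```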

Related topics

For more information on rules, see Match fields, Match operators, and Match values.