This guide walks you through creating a DataLake cluster on E-MapReduce (EMR) and running a WordCount job — the canonical MapReduce example for counting word frequencies in large text datasets.

What you'll do

| Step | Action | Where |
|---|---|---|
| 1 | Create a DataLake cluster | EMR console |
| 2 | Prepare and upload input data | SSH terminal |
| 3 | Submit a WordCount job | SSH terminal |
| 4 | View the job results | SSH terminal + YARN web UI |
| 5 (optional) | Release the cluster | EMR console |

Before you begin

Make sure that you have:

- An Alibaba Cloud account that has completed real-name verification
- The default EMR and Elastic Compute Service (ECS) roles assigned to your account. For more information, see Assign roles to an Alibaba Cloud account.

The cluster you create in this guide runs in a live environment and incurs charges. Use pay-as-you-go billing so you can release the cluster when you're done and avoid ongoing costs.

Precautions

The runtime environment of the code is managed and configured by the owner of the environment.

Step 1: Create a cluster

- Log on to the EMR console. In the left-side navigation pane, click EMR on ECS.
- In the top navigation bar, select a region and a resource group.

  You cannot change the region after the cluster is created.

- Click Create Cluster and configure the following parameters.

  OSS-HDFS must be available in your target region. For supported regions, see Enable OSS-HDFS and grant access permissions. You can select the OSS-HDFS service when you create a DataLake cluster in the new data lake scenario, a Dataflow cluster, a DataServing cluster, or a custom cluster of EMR V5.12.1, EMR V3.46.1, or a later minor version of either.

Software configuration

| Parameter | Example value | Notes |
|---|---|---|
| Region | China (Hangzhou) | Cannot be changed after creation |
| Business Scenario | Data Lake | EMR pre-configures components and services for this scenario |
| Product Version | EMR-5.18.1 | Select the latest version |
| High Service Availability | Off | Distributes master nodes across hardware to reduce failure risk. Leave off for testing |
| Optional Services | Hadoop-Common, OSS-HDFS, YARN, Hive, Spark3, Tez, Knox, OpenLDAP | Select Knox and OpenLDAP to access service web UIs |
| Collect Service Operational Logs | On (default) | Used only for cluster diagnostics. Disabling it limits health checks and technical support. After you create the cluster, you can change the Collection Status of Service Operational Logs parameter on the Basic Information tab. For how to disable log collection and what it affects, see How do I stop collection of service operational logs? |
| Metadata | Built-in MySQL | Suitable for testing only. For production, use Self-managed RDS or DLF Unified Metadata |
| Root Storage Directory of Cluster | `oss://******.cn-hangzhou.oss-dls.aliyuncs.com` | Required when you select the OSS-HDFS service |

Hardware configuration

| Parameter | Example value | Notes |
|---|---|---|
| Billing Method | Pay-as-you-go | Use pay-as-you-go for testing. Switch to subscription for production |
| Zone | Zone I | Cannot be changed after creation |
| VPC | `vpc_Hangzhou/vpc-bp1f4epmkvncimpgs****` | Select a virtual private cloud (VPC) in the current region, or click Create VPC |
| vSwitch | `vsw_i/vsw-bp1e2f5fhaplp0g6p****` | Select a vSwitch in the specified zone |
| Default Security Group | `sg_seurity/sg-bp1ddw7sm2risw****` | Do not use an advanced security group created in the ECS console |
| Node Group | Enable Assign Public Network IP for the master node group | For more information, see Select hardware specifications and network configurations |

Basic configuration

| Parameter | Example value | Notes |
|---|---|---|
| Cluster Name | Emr-DataLake | 1–64 characters; letters, digits, hyphens (-), and underscores (_) only |
| Identity Credentials | Password | To use passwordless authentication, select Key Pair instead. See Manage SSH key pairs |
| Password / Confirm Password | Custom password | Save this password. You need it to log on to the cluster |

- Click Next: Confirm and follow the on-screen instructions to finish.

The cluster is ready when its status changes to Running. For a full parameter reference, see Create a cluster.
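The Cluster Name rule listed in the parameters above can be checked locally before you fill in the form. The following is a minimal sketch: `valid_cluster_name` is a hypothetical helper, and it encodes only the rule stated in this guide (1 to 64 characters drawn from letters, digits, hyphens, and underscores), not the console's full validation logic.

```shell
#!/bin/sh
# Hypothetical helper: checks a proposed cluster name against the rule stated
# in this guide (1-64 characters; letters, digits, hyphens, underscores only).
# The EMR console's own validation may differ.
valid_cluster_name() {
  name=$1
  len=${#name}
  # Reject empty names and names longer than 64 characters.
  if [ "$len" -lt 1 ] || [ "$len" -gt 64 ]; then
    return 1
  fi
  # Reject any character outside A-Z, a-z, 0-9, '_', and '-'.
  case $name in
    *[!A-Za-z0-9_-]*) return 1 ;;
  esac
  return 0
}

for candidate in Emr-DataLake "emr datalake"; do
  if valid_cluster_name "$candidate"; then
    echo "valid:   $candidate"
  else
    echo "invalid: $candidate"
  fi
done
```

With the two sample names, the first passes and the second fails because it contains a space.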

Step 2: Prepare data

This step runs in an SSH terminal on the cluster master node.

- Log on to your cluster over SSH. For instructions, see Log on to a cluster.

- Create a text file named `wordcount.txt` with the following content to use as input for the WordCount job:

  ```
  hello world
  hello wordcount
  ```

- Upload the file to OSS-HDFS. In the following commands, replace `<yourBucketname>` with your OSS bucket name and `cn-hangzhou` with your region ID.

  You can upload input data to Hadoop Distributed File System (HDFS), OSS, or OSS-HDFS. This example uses OSS-HDFS. To upload to OSS instead, see Simple upload.

  - Create an `input` directory in your OSS-HDFS bucket:

    ```shell
    hadoop fs -mkdir oss://<yourBucketname>.cn-hangzhou.oss-dls.aliyuncs.com/input/
    ```

  - Upload `wordcount.txt` to the `input` directory:

    ```shell
    hadoop fs -put wordcount.txt oss://<yourBucketname>.cn-hangzhou.oss-dls.aliyuncs.com/input/
    ```
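The data-preparation steps above can be collected into one script. This is a minimal sketch under stated assumptions: `BUCKET` and `REGION` are placeholder variables you set yourself (`examplebucket` is hypothetical), `oss_hdfs_uri` is a hypothetical helper that only builds the `oss://<bucket>.<region>.oss-dls.aliyuncs.com/<path>` format used throughout this guide, and the Hadoop commands run only when `hadoop` is on the PATH, as it is on the master node. It uses `-mkdir -p` and `-put -f` so that a rerun does not fail when the directory or file already exists.

```shell
#!/bin/sh
# Placeholders: set BUCKET and REGION (or export them) before running.
BUCKET="${BUCKET:-examplebucket}"
REGION="${REGION:-cn-hangzhou}"

# Hypothetical helper: builds an OSS-HDFS URI in the format this guide uses,
# oss://<bucket>.<region>.oss-dls.aliyuncs.com/<path>
oss_hdfs_uri() {
  printf 'oss://%s.%s.oss-dls.aliyuncs.com/%s\n' "$BUCKET" "$REGION" "$1"
}

# Write the two-line sample input file.
printf 'hello world\nhello wordcount\n' > wordcount.txt

upload_input() {
  # -p: no error if the directory already exists.
  hadoop fs -mkdir -p "$(oss_hdfs_uri input/)"
  # -f: overwrite wordcount.txt if it was uploaded before.
  hadoop fs -put -f wordcount.txt "$(oss_hdfs_uri input/)"
  # List the directory to confirm the upload.
  hadoop fs -ls "$(oss_hdfs_uri input/)"
}

# Run the Hadoop commands only where the hadoop client is installed.
if command -v hadoop >/dev/null 2>&1; then
  upload_input
else
  echo "hadoop not found on PATH; skipping upload" >&2
fi
```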

Step 3: Submit a job

Run the following command in your SSH terminal to submit the WordCount job. Replace `<yourBucketname>` with your bucket name and `cn-hangzhou` with your region ID.

```shell
hadoop jar /opt/apps/HDFS/hadoop-3.2.1-1.2.16-alinux3/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.2.1.jar \
  wordcount \
  -D mapreduce.job.reduces=1 \
  "oss://<yourBucketname>.cn-hangzhou.oss-dls.aliyuncs.com/input/wordcount.txt" \
  "oss://<yourBucketname>.cn-hangzhou.oss-dls.aliyuncs.com/output/"
```

| Parameter | Description |
|---|---|
| `/opt/apps/.../hadoop-mapreduce-examples-3.2.1.jar` | Built-in JAR that contains sample MapReduce programs. The version suffix matches your cluster: 3.2.1 for EMR V5.X clusters and 2.8.5 for EMR V3.X clusters. Check the exact path on your cluster |
| `-D mapreduce.job.reduces=1` | Runs the job with a single reducer so that it writes one output file (part-r-00000). Without this option, the job uses the cluster's default number of reducers and can write several output files (part-r-00000, part-r-00001, and so on) |
| `oss://...input/wordcount.txt` | Input path: the file you uploaded in Step 2 |
| `oss://...output/` | Output path where the job writes its results. This directory must not already exist; otherwise, the job fails |
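Because the job fails when the output directory already exists, running the command above a second time requires removing the previous output first. The following sketch wraps Steps 3 and 4 for repeated runs. Assumptions: `BUCKET` and `REGION` are placeholders you set yourself (`examplebucket` is hypothetical); `find_examples_jar` and `run_wordcount` are hypothetical helpers; the JAR is located with a glob on the assumption that the directory layout matches the path in the command above, so verify the result on your cluster; and the `_SUCCESS` check relies on the default behavior of Hadoop's `FileOutputCommitter`.

```shell
#!/bin/sh
# Placeholders: set BUCKET and REGION (or export them) before running.
BUCKET="${BUCKET:-examplebucket}"
REGION="${REGION:-cn-hangzhou}"
BASE="oss://${BUCKET}.${REGION}.oss-dls.aliyuncs.com"
INPUT="${BASE}/input/wordcount.txt"
OUTPUT="${BASE}/output/"

# Hypothetical helper. Assumption: the examples JAR lives under
# <root>/<hadoop build>/share/hadoop/mapreduce/, as in the command above.
# The glob accepts any version suffix (3.2.1, 2.8.5, ...).
find_examples_jar() {
  root="${1:-/opt/apps/HDFS}"
  for jar in "$root"/*/share/hadoop/mapreduce/hadoop-mapreduce-examples-*.jar; do
    if [ -f "$jar" ]; then
      echo "$jar"
      return 0
    fi
  done
  return 1
}

run_wordcount() {
  jar=$(find_examples_jar) || { echo "examples JAR not found" >&2; return 1; }
  # The job fails at submission if the output directory exists, so remove it.
  # -f suppresses the error when there is nothing to delete.
  hadoop fs -rm -r -f "$OUTPUT"
  hadoop jar "$jar" wordcount -D mapreduce.job.reduces=1 "$INPUT" "$OUTPUT" || return 1
  # By default, FileOutputCommitter writes an empty _SUCCESS file on success.
  if hadoop fs -test -e "${OUTPUT}_SUCCESS"; then
    hadoop fs -cat "${OUTPUT}part-r-00000"
  else
    echo "no _SUCCESS marker under $OUTPUT" >&2
    return 1
  fi
}

# Submit only where the hadoop client is installed (for example, the master node).
if command -v hadoop >/dev/null 2>&1; then
  run_wordcount
fi
```

Deleting the output directory before each run trades safety for convenience; if you want to keep earlier results, point `OUTPUT` at a new directory instead.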

Step 4: View results

View the job output

Run the following command in your SSH terminal to read the output file:

```shell
hadoop fs -cat oss://<yourBucketname>.cn-hangzhou.oss-dls.aliyuncs.com/output/part-r-00000
```

The output lists each word and its count:

```
hello 2
wordcount 1
world 1
```

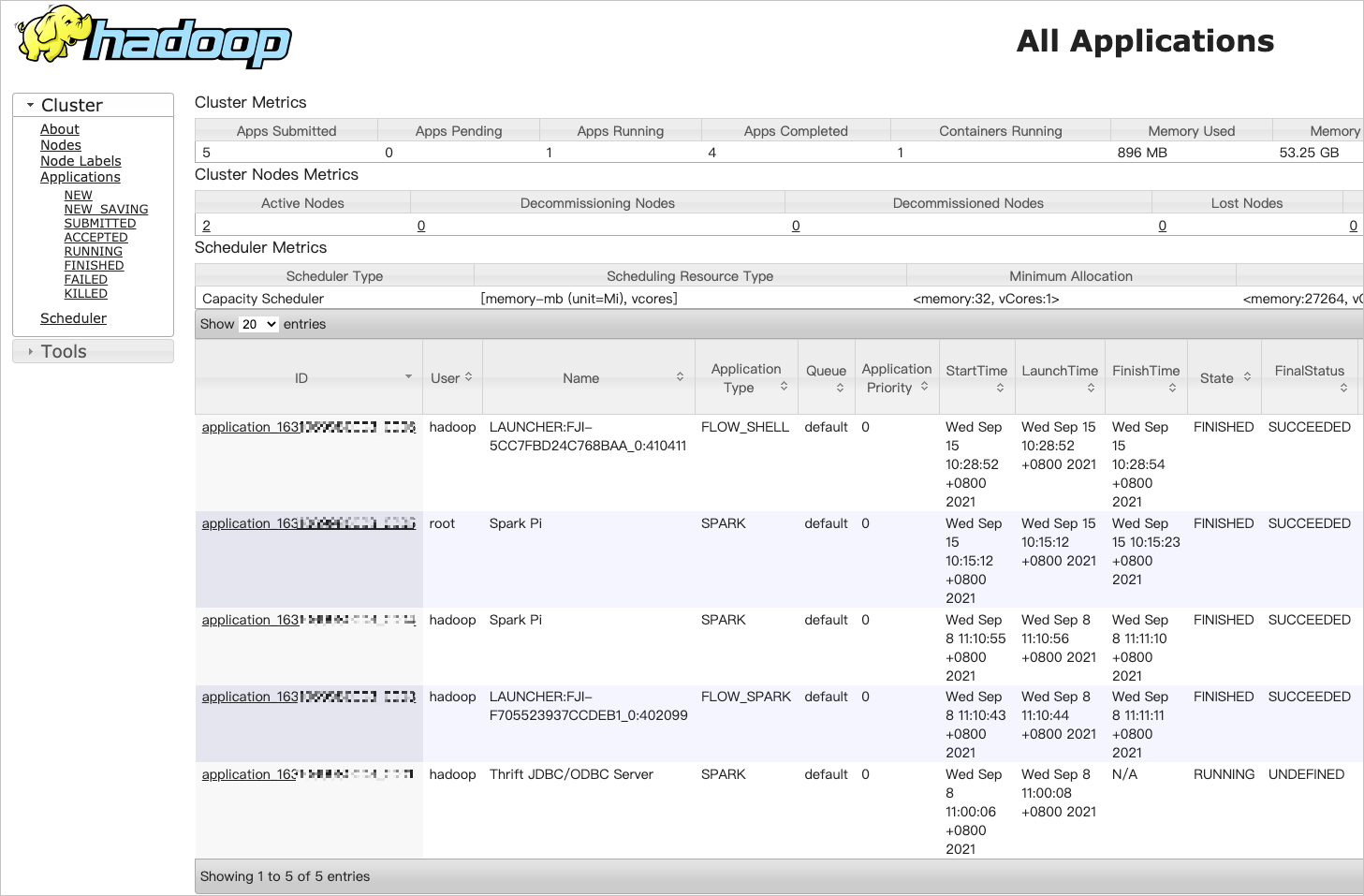
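To see what the job computes, the same count can be approximated on a single machine with standard Unix tools: `tr` splits the text into one word per line (roughly the map phase, since the Hadoop WordCount example also tokenizes on whitespace), `sort` in the C locale groups identical words in byte order (roughly the shuffle and sort phase), and `uniq -c` counts each group (roughly the reduce phase). This is an illustration only, not how Hadoop runs the job; `local_wordcount` is a hypothetical helper, and the input is the two-line sample file from Step 2.

```shell
#!/bin/sh
# Hypothetical helper: counts words read from standard input and prints
# "word<TAB>count" lines sorted by word, similar in shape to part-r-00000.
local_wordcount() {
  # Map: split on whitespace, one word per line; drop any empty line.
  LC_ALL=C tr -s '[:space:]' '\n' \
    | grep -v '^$' \
    | LC_ALL=C sort \
    | uniq -c \
    | awk '{ printf "%s\t%s\n", $2, $1 }'
  # Shuffle/sort: sort groups equal words. Reduce: uniq -c counts each group.
}

# The sample input from Step 2.
printf 'hello world\nhello wordcount\n' | local_wordcount
```

For the sample input, this prints `hello 2`, `wordcount 1`, and `world 1` (tab-separated), the same counts the job writes.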

View job details in the YARN web UI

YARN tracks job status, task details, logs, and resource usage. To access the YARN web UI:

- Open port 8443 in the cluster's security group. For instructions, see Manage security groups.
- Add a Knox user. For instructions, see Manage OpenLDAP users.
- In the EMR console, go to EMR on ECS, find your cluster, and click Services in the Actions column.
- On the page that appears, click the Access Links and Ports tab.
- In the Knox Proxy Address column for YARN UI, click the Internet link. Log on with the credentials of the Knox user that you added earlier in this procedure.
- On the All Applications page, click an application ID to view the job's details.
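If you cannot open port 8443, much of the same information is available from the YARN command line on the master node. The sketch below uses the standard `yarn application` and `yarn logs` subcommands; `list_finished_apps` and `show_app` are hypothetical helpers, and reading logs with `yarn logs` assumes that log aggregation is enabled on the cluster.

```shell
#!/bin/sh
# List finished applications, including the WordCount job, with their IDs.
list_finished_apps() {
  yarn application -list -appStates FINISHED
}

# Hypothetical helper: show the status and aggregated logs of one application.
# Usage: show_app application_<clusterTimestamp>_<sequence>
show_app() {
  case $1 in
    application_*) ;;
    *) echo "usage: show_app <application ID>" >&2; return 2 ;;
  esac
  yarn application -status "$1"
  yarn logs -applicationId "$1"
}

# Query YARN only where the yarn client is installed (for example, the master node).
if command -v yarn >/dev/null 2>&1; then
  list_finished_apps
fi
```

Copy an ID from the list output and pass it to `show_app` to inspect that job.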

Step 5: Release the cluster (optional)

Release the cluster when you no longer need it to stop incurring charges.

- Pay-as-you-go clusters can be released at any time. Subscription clusters can only be released after they expire.
- The cluster must be in the Initializing, Running, or Idle state before you can release it.

On release, EMR forcibly terminates all running jobs and releases all ECS instances. Most clusters release in seconds; large clusters take no more than 5 minutes.

To release a cluster:

- In the EMR console, go to EMR on ECS, find your cluster, move the pointer over the More icon, and select Release. Alternatively, click the cluster name, and in the upper-right corner of the Basic Information tab, choose All Operations > Release.
- In the Release Cluster dialog box, click OK.

What's next

- Paths of frequently used files: find commonly referenced file paths in EMR
- List of operations by function: API reference for cluster and service management
- FAQ: answers to common EMR questions