This topic describes how to run LAMMPS in an Elastic High Performance Computing (E-HPC) cluster to perform a manufacturing simulation based on the 3d Lennard-Jones melt model and visualize the simulation result.

Background information

Large-scale Atomic/Molecular Massively Parallel Simulator (LAMMPS) is a classical molecular dynamics program. It has potentials for solid-state materials (metals, semiconductors), soft matter (biomolecules, polymers), and coarse-grained or mesoscopic systems.

High-performance, flexible E-HPC clusters support the analysis of complex engineering and mechanics problems. You can use them to run large numbers of simulations and to optimize product structure and performance. A growing number of software packages are used for manufacturing simulation.

Preparations

Create an E-HPC cluster. For more information, see Create a cluster by using the wizard.

The following parameters are used in this example.

Hardware settings: Set Deploy Mode to Standard. Specify two management nodes, one compute node, and one logon node. For the compute node, select an instance type that has 32 or more vCPUs, for example, ecs.c7.8xlarge.

Note: This example is designed for an application with relatively low computing requirements. Adjust the number of compute nodes based on the computing requirements of your application.

Software settings: Deploy a CentOS 7.6 public image and the PBS scheduler, and turn on VNC.

Create a cluster user. For more information, see Create a user.

The user is used to log on to the cluster, compile software, and submit jobs. The following settings are used in this example:

Username: testuser

User group: sudo permission group

Install software. For more information, see Install software.

Install the following software:

lammps-openmpi V31Mar17

openmpi V1.10.7

VMD V1.9.3

Step 1: Connect to the cluster

Connect to the cluster by using one of the following methods. This example uses testuser as the username. After you connect to the cluster, you are automatically placed in the /home/testuser directory.

Use an E-HPC client to log on to a cluster

This method requires a cluster that uses the PBS scheduler. Make sure that you have downloaded and installed an E-HPC client and deployed the environment that the client requires. For more information, see Deploy an environment for an E-HPC client.

Start and log on to your E-HPC client.

In the left-side navigation pane, click Session Management.

In the upper-right corner of the Session Management page, click terminal to open the Terminal window.

Use the E-HPC console to log on to a cluster

Log on to the E-HPC console.

In the upper-left corner of the top navigation bar, select a region.

In the left-side navigation pane, click Cluster.

On the Cluster page, find the cluster and click Connect.

In the Connect panel, enter a username and a password, and click Connect via SSH.

Step 2: Submit a job

Run the following command to create a sample file named lj.in:

    vim lj.in

The sample file:

    # 3d Lennard-Jones melt
    variable x index 1
    variable y index 1
    variable z index 1
    variable xx equal 20*$x
    variable yy equal 20*$y
    variable zz equal 20*$z
    units lj
    atom_style atomic
    lattice fcc 0.8442
    region box block 0 ${xx} 0 ${yy} 0 ${zz}
    create_box 1 box
    create_atoms 1 box
    mass 1 1.0
    velocity all create 1.44 87287 loop geom
    pair_style lj/cut 2.5
    pair_coeff 1 1 1.0 1.0 2.5
    neighbor 0.3 bin
    neigh_modify delay 0 every 20 check no
    fix 1 all nve
    dump 1 all xyz 100 sample.xyz
    run 10000

Run the following command to create a job script file named lammps.pbs:

    vim lammps.pbs

The sample script:

Note: In this example, the job runs 32 Message Passing Interface (MPI) processes on one compute node that has 32 vCPUs. Set ncpus and mpiprocs in the script based on the actual vCPU count of the compute node that you use. With the values in this script, the compute node must have at least 32 vCPUs.

    #!/bin/sh
    #PBS -l select=1:ncpus=32:mpiprocs=32
    #PBS -j oe
    # The environment variables on which the module command depends.
    export MODULEPATH=/opt/ehpcmodulefiles/
    module load lammps-openmpi/31Mar17
    module load openmpi/1.10.7
    echo "run at the beginning"
    # Use the actual path of the lj.in file.
    mpirun lmp -in ./lj.in

Run the following command to submit the job:

    qsub lammps.pbs

The following output is returned. 0.scheduler is the ID of the submitted job.

    0.scheduler
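If you prefer not to edit the files interactively in vim, the two files from this step can also be written with shell here-documents and the job submitted in one pass. This is a minimal sketch, assuming it is run from the directory that should hold the files (for example, /home/testuser). NCPUS is a hypothetical variable introduced only for this sketch (not part of E-HPC or PBS); it defaults to 32 and fills in ncpus and mpiprocs so that you can match your compute node. The lj.in here-document uses a quoted delimiter ('EOF') so that the shell writes LAMMPS variables such as ${xx} literally instead of expanding them.

```shell
#!/bin/sh
# Sketch: create lj.in and lammps.pbs without an editor, then submit the job.
# Assumption: run from the directory that should hold the files, e.g. /home/testuser.
# NCPUS is a hypothetical helper variable, not part of E-HPC or PBS.
set -e

NCPUS="${NCPUS:-32}"   # vCPUs on the compute node; 32 matches this example

# Quoted delimiter: $x, ${xx}, and so on are written literally for LAMMPS to expand.
cat > lj.in <<'EOF'
# 3d Lennard-Jones melt
variable x index 1
variable y index 1
variable z index 1
variable xx equal 20*$x
variable yy equal 20*$y
variable zz equal 20*$z
units lj
atom_style atomic
lattice fcc 0.8442
region box block 0 ${xx} 0 ${yy} 0 ${zz}
create_box 1 box
create_atoms 1 box
mass 1 1.0
velocity all create 1.44 87287 loop geom
pair_style lj/cut 2.5
pair_coeff 1 1 1.0 1.0 2.5
neighbor 0.3 bin
neigh_modify delay 0 every 20 check no
fix 1 all nve
dump 1 all xyz 100 sample.xyz
run 10000
EOF

# Unquoted delimiter: only ${NCPUS} is expanded; the script has no other $ references.
cat > lammps.pbs <<EOF
#!/bin/sh
#PBS -l select=1:ncpus=${NCPUS}:mpiprocs=${NCPUS}
#PBS -j oe
export MODULEPATH=/opt/ehpcmodulefiles/
module load lammps-openmpi/31Mar17
module load openmpi/1.10.7
echo "run at the beginning"
mpirun lmp -in ./lj.in
EOF

# Submit only if the PBS client is available on this node.
if command -v qsub >/dev/null 2>&1; then
    qsub lammps.pbs
else
    echo "qsub not found: files written, job not submitted" >&2
fi
```

For example, saving the sketch under a hypothetical name such as create_and_submit.sh and running `NCPUS=64 sh create_and_submit.sh` would request 64 MPI processes, which only makes sense on a compute node with at least 64 vCPUs.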

Step 3: View the job result

Run the following command to view the result of the job:

    cat lammps.pbs.o0

Note: If you do not specify a standard output path for the job, the scheduler determines the name and location of the output file. By default, the output file is stored in the /home/<username>/ directory. In this example, the output file is /home/testuser/lammps.pbs.o0.

The following is an example of the expected output:

    ......
    Per MPI rank memory allocation (min/avg/max) = 3.777 | 3.801 | 3.818 Mbytes
    Step Temp E_pair E_mol TotEng Press
           0         1.44   -6.7733681            0   -4.6134356   -5.0197073
       10000   0.69814375   -5.6683212            0   -4.6211383   0.75227555
    Loop time of 9.81493 on 32 procs for 10000 steps with 32000 atoms
    Performance: 440145.641 tau/day, 1018.856 timesteps/s
    97.0% CPU use with 32 MPI tasks x no OpenMP threads
    MPI task timing breakdown:
    Section |  min time  |  avg time  |  max time  |%varavg| %total
    ---------------------------------------------------------------
    Pair    | 6.0055     | 6.1975     | 6.3645     |   4.0 | 63.14
    Neigh   | 0.90095    | 0.91322    | 0.92938    |   0.9 |  9.30
    Comm    | 2.1457     | 2.3105     | 2.4945     |   6.9 | 23.54
    Output  | 0.16934    | 0.1998     | 0.23357    |   4.3 |  2.04
    Modify  | 0.1259     | 0.13028    | 0.13602    |   0.8 |  1.33
    Other   |            | 0.06364    |            |       |  0.65
    Nlocal:    1000 ave 1022 max 986 min
    Histogram: 5 3 6 3 4 4 2 2 1 2
    Nghost:    2705.62 ave 2733 max 2668 min
    Histogram: 1 1 0 3 7 5 4 5 4 2
    Neighs:    37505 ave 38906 max 36560 min
    Histogram: 7 3 2 4 5 2 3 3 2 1
    Total # of neighbors = 1200161
    Ave neighs/atom = 37.505
    Neighbor list builds = 500
    Dangerous builds not checked
    Total wall time: 0:00:10

Use VNC to view the result of the job.

Enable VNC.

Note: Make sure that the security group to which the cluster belongs allows the ports required by VNC. If you use the console, port 12016 is opened automatically. If you use the client, you must open the ports manually. Port 12017 allows only one user to open the VNC Viewer window. If multiple users need to open VNC Viewer windows, open one port for each user, starting from port 12017.

Use the client

In the left-side navigation pane, click Session Management.

In the upper-right corner of the Session Management page, click VNC to open VNC Viewer.

Use the console

In the left-side navigation pane of the E-HPC console, click Cluster.

On the Cluster page, select a cluster. Choose More > VNC.

Use VNC to remotely connect to a visualization service. For more information, see Use VNC to manage a visualization service.

On the cloud desktop of the visualization service, choose Application > System Tools > Terminal.

Run the

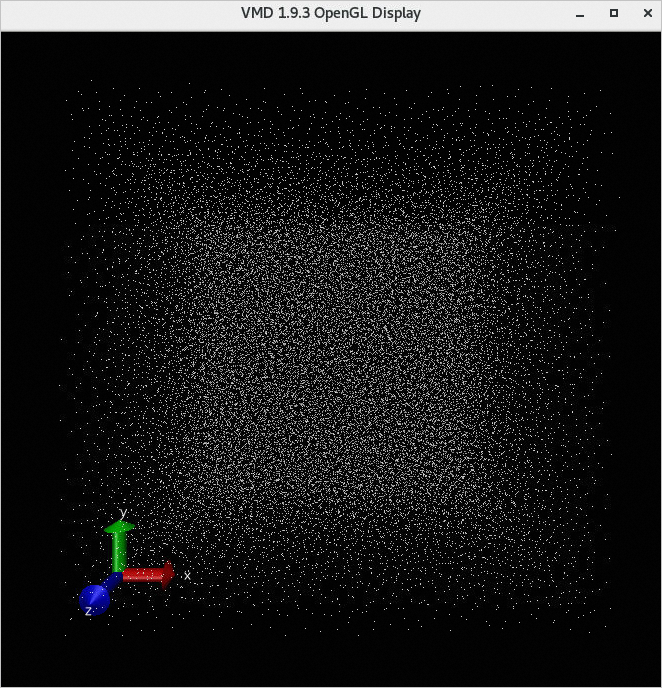

/opt/vmd/1.9.3/vmdto open Visual Molecular Dynamics (VMD).On the VMD Main page, choose File > New Molecule....

Click the Browse... button and select the sample.xyz file.

Note: The sample.xyz file is stored in the /home/testuser/ directory, that is, its full path is /home/testuser/sample.xyz.

Click Load. You can view the result of the job in the VMD 1.9.3 OpenGL Display window.
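To check the trajectory from the terminal before or after loading it into VMD, you can count its frames with awk. This is a minimal sketch, assuming the standard XYZ layout written by the dump xyz command in lj.in: each frame is an atom-count line, a comment line, and then one line per atom. The function name count_xyz_frames is hypothetical and not part of LAMMPS or VMD.

```shell
#!/bin/sh
# count_xyz_frames FILE
# Hypothetical helper: prints "frames=<n> atoms=<m>", where <m> is the atom
# count of the first frame. Assumes each frame is laid out as:
#   line 1: number of atoms N
#   line 2: comment line
#   next N lines: one atom per line
count_xyz_frames() {
    awk '
        # Ignore blank lines that fall between frames.
        remaining == 0 && NF == 0 { next }
        # Start of a frame: read N, then skip the comment line plus N atom lines.
        remaining == 0 {
            natoms = $1 + 0
            if (frames == 0) first = natoms
            frames++
            remaining = natoms + 1
            next
        }
        { remaining-- }
        END { printf "frames=%d atoms=%d\n", frames, first }
    ' "$1"
}
```

Usage: `count_xyz_frames /home/testuser/sample.xyz`. With the lj.in settings in this topic, a snapshot is dumped every 100 steps over a 10,000-step run (steps 0, 100, ..., 10000), so the result should be frames=101. The atom count should be 32000, that is, 4 atoms per fcc unit cell in a 20 x 20 x 20 box, which agrees with "with 32000 atoms" in the job output.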