This topic describes how to use an E-HPC cluster to run BWA, GATK, and Samtools for gene sequencing.

Background information

The rapid development of gene sequencing technology in life sciences has led to an exponential increase in the amount of gene sequence data. Processing this large volume of data requires massive data processing and parallel computing for tasks such as homology searches, alignments, and mutation detection. High Performance Computing (HPC) provides the powerful computing power needed for these tasks. HPC uses various schedulers to improve concurrency and GPUs to accelerate computation.

This topic uses the classic Whole Genome Sequencing (WGS) workflow as an example. It demonstrates the general process for human WGS using the GATK software. In a real-world bioinformatics analysis, you must adjust the process based on your specific needs. For example, you might need to add quality control and filtering for mutation detection.

For gene sequencing, you can use BWA to build an index and align records. Then, you can use Samtools to sort the alignment records. Finally, you can use GATK to remove duplicate sequences, recalibrate base quality scores, and detect mutations.

BWA (Burrows-Wheeler Alignment Tool) is a software that maps DNA sequences to a reference genome, such as the human genome.

GATK (The Genome Analysis Toolkit) is a software toolkit for analyzing second-generation resequencing data. It is primarily used to remove duplicate sequences, recalibrate base quality scores, and detect mutations.

Samtools is a tool for processing SAM and BAM formats. It lets you view binary files, convert file formats, and sort and merge files. It also uses information from flags and tags in SAM files to summarize alignment results.

Preparations

Create an E-HPC cluster. For more information, see Create a Standard Edition cluster.

The following table shows an example of the cluster configuration.

Configuration item

Configuration

Series

Standard Edition

Deployment mode

Public cloud cluster

Cluster type

SLURM

Node configuration

Includes one control plane node, one logon node, and one compute node with the following specifications:

Control plane node: Uses the ecs.c7.large instance type, which has 2 vCPUs and 4 GiB of memory.

Logon node: Uses the ecs.c7.large instance type, which has 2 vCPUs and 4 GiB of memory.

Compute node: Uses the ecs.g7.16xlarge instance type, which has 64 vCPUs and 256 GiB of memory.

Cluster image

CentOS 7.6 public image

Create a cluster user. For more information, see User management.

A cluster user is used to log on to the cluster to compile software, submit jobs, and perform other operations. The following example shows the user that is created for this topic:

Username: testuser

User group: sudo permission group

Install the software.

The following table provides details about the software to install.

Software Name

Version

Download URL

BWA

4.2.0.0

GATK

0.7.17

Samtools

1.14

Step 1: Connect to the E-HPC cluster

Connect to the E-HPC cluster. For more information, see Connect to a cluster.

Run the following command to create a job execution directory. This topic uses

/home/data/humanas an example.NoteTo ensure that you have enough disk space to download and process data, create the job execution directory in the

/homeshared storage directory that is mounted on the cluster.sudo mkdir -p /home/data/human

Step 2: Download and preprocess the data

Run the following command to create and open a job script file named

data_pre.slurm.sudo vim data_pre.slurmThe script is as follows:

#!/bin/bash -l #SBATCH -J data_pre #SBATCH --partition comp #SBATCH -N 1 #SBATCH -n 1 #SBATCH --output=data_pre.log cd /home/data/human/ # Download and decompress the human reference genome and two human gene sequencing samples. wget https://public-ehpc-package.oss-cn-hangzhou.aliyuncs.com/lifescience/b37_human_g1k_v37.fasta wget https://public-ehpc-package.oss-cn-hangzhou.aliyuncs.com/lifescience/b37_1000G_phase1.indels.b37.vcf wget https://public-ehpc-package.oss-cn-hangzhou.aliyuncs.com/lifescience/b37_Mills_and_1000G_gold_standard.indels.b37.vcf wget https://public-ehpc-package.oss-cn-hangzhou.aliyuncs.com/lifescience/b37_dbsnp_138.b37.vcf.zip unzip b37_dbsnp_138.b37.vcf.zip wget https://public-ehpc-package.oss-cn-hangzhou.aliyuncs.com/lifescience/gatk-examples_example1_NA20318_ERR250971_1.filt.fastq.gz gunzip gatk-examples_example1_NA20318_ERR250971_1.filt.fastq.gz wget https://public-ehpc-package.oss-cn-hangzhou.aliyuncs.com/lifescience/gatk-examples_example1_NA20318_ERR250971_2.filt.fastq.gz gunzip gatk-examples_example1_NA20318_ERR250971_2.filt.fastq.gz # Compress the files using bgzip. bgzip -f b37_1000G_phase1.indels.b37.vcf bgzip -f b37_Mills_and_1000G_gold_standard.indels.b37.vcf bgzip -f b37_dbsnp_138.b37.vcf # Build an index using tabix. tabix -p vcf b37_1000G_phase1.indels.b37.vcf.gz tabix -p vcf b37_Mills_and_1000G_gold_standard.indels.b37.vcf.gz tabix -p vcf b37_dbsnp_138.b37.vcf.gzRun the following command to submit the job.

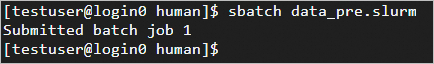

sbatch data_pre.slurmThe following output indicates that the job was submitted successfully.

Run the following command to check the job status.

squeueAfter the job is complete, run the following command to view the results in the

data_pre.logfile.cat data_pre.log

Step 3: Build the reference genome index

Run the following command to create and open a job script file named

bwa_index.slurm.sudo vim bwa_index.slurmThe script is as follows:

#!/bin/bash -l #SBATCH -J bwa_index #SBATCH --partition comp #SBATCH -N 1 #SBATCH -n 1 #SBATCH --output=bwa_index.log cd /home/data/human/ # Build the index database of the reference genome for BWA alignment. time bwa index b37_human_g1k_v37.fasta # Create an index for the FASTA sequence format. samtools faidx b37_human_g1k_v37.fastaRun the following command to submit the job.

sbatch --dependency=afterok:jobid1 bwa_index.slurmRun the following command to check the job status.

squeueAfter the job is complete, run the following command to view the results in the

bwa_index.logfile.cat bwa_index.log

Step 4: Run the gene sequencing analysis

Run the following command to create and open a job script file named

wgs_main_process.slurm.sudo vim wgs_main_process.slurmThe script is as follows:

ImportantIn line 6,

#SBATCH -w compute000, replacecompute000with the name of your compute node.#!/bin/bash -l #SBATCH -J wgs_main_process #SBATCH --partition comp #SBATCH -N 1 #SBATCH -n 64 #SBATCH -w compute000 #SBATCH --output=wgs_main_process.log cd /home/data/human/ # Align the sample sequencing data reads with the human reference genome and convert the output file to BAM format to save disk space. time bwa mem -t 64 \ -R '@RG\tID:ehpc\tPL:illumina\tLB:library\tSM:b37' \ b37_human_g1k_v37.fasta \ gatk-examples_example1_NA20318_ERR250971_1.filt.fastq \ gatk-examples_example1_NA20318_ERR250971_2.filt.fastq | samtools view -S -b - > ERR250971.bam # Sort the alignment records in ascending order. time samtools sort -@ 64 -O bam -o ERR250971.sorted.bam ERR250971.bam # Remove duplicate reads generated by PCR amplification of the DNA sequence. time gatk MarkDuplicates \ -I ERR250971.sorted.bam -O ERR250971.markdup.bam \ -M ERR250971.sorted.markdup_metrics.txt # Build an index for the markdup sequencing BAM file. samtools index ERR250971.markdup.bam # Generate a dict file for the reference genome. time gatk CreateSequenceDictionary \ -R b37_human_g1k_v37.fasta \ -O b37_human_g1k_v37.dict # Use the existing SNP and indel databases to build a model and create a recalibration table. time gatk BaseRecalibrator \ -R b37_human_g1k_v37.fasta \ -I ERR250971.markdup.bam \ --known-sites b37_1000G_phase1.indels.b37.vcf.gz \ --known-sites b37_Mills_and_1000G_gold_standard.indels.b37.vcf.gz \ --known-sites b37_dbsnp_138.b37.vcf.gz -O ERR250971.BQSR.table # Adjust the original base quality scores. time gatk ApplyBQSR \ --bqsr-recal-file ERR250971.BQSR.table \ -R b37_human_g1k_v37.fasta \ -I ERR250971.markdup.bam \ -O ERR250971.BQSR.bam # Build an index for the BQSR sequencing BAM file. time samtools index ERR250971.BQSR.bam # Output the mutation detection results to a VCF file. time gatk HaplotypeCaller \ -R b37_human_g1k_v37.fasta \ -I ERR250971.BQSR.bam \ -O ERR250971.HC.vcf.gzRun the following command to submit the job.

sbatch --dependency=afterok:jobid2 wgs_main_process.slurmRun the following command to check the job status.

squeueAfter the job is complete, run the following command to view the results in the

wgs_main_process.logfile.cat wgs_main_process.log

Sequencing time

The compute node used in this topic is ecs.g7.16xlarge. This is for demonstration purposes only. The entire whole genome sequencing process takes about 6 to 7 hours. The following table lists the approximate time for each step for your reference. These times are not benchmarks for optimal performance.

Process | Function | Runtime (min) |

bwa index | Build index | 54 |

bwa mem | Align | 25 |

samtools sort | Sort | 2 |

gatk MarkDuplicates | Remove duplicates | 13 |

gatk CreateSequenceDictionary | Create dictionary | 0.15 |

gatk BaseRecalibrator | Building a calibration table | 40 |

gatk ApplyBQSR | Recalibrate | 21 |

gatk HaplotypeCaller | Detect mutations | 211 |