MaxCompute external projects map to Data Lake Formation (DLF) catalogs, giving MaxCompute real-time read/write access to Paimon-format tables stored in DLF-managed Object Storage Service (OSS). Permission management is delegated to DLF, and catalog-level mapping enables multi-engine collaboration on shared data lake metadata.

Paimon_DLF external projects are currently in the invitational preview stage.

Limitations

- Only Paimon-format tables stored in DLF-managed OSS are supported.
- Writing to Dynamic Bucket tables is not supported.
- Writing to Cross Partition tables is not supported.

How permissions work

Accessing Paimon_DLF data from MaxCompute requires satisfying two independent permission layers. Both must be configured — missing either one causes access errors.

| Layer | What it controls | Where it is managed |
|---|---|---|
| Control plane | Console operations: creating an external project and attaching a DLF catalog | RAM console |
| Data plane | SQL operations: reading and writing Paimon tables, managing schemas and tables in the attached DLF catalog | DLF console |

MaxCompute passes the current task executor's identity to DLF when reading or writing data. Each system then enforces its own permission scope independently.

Set up the integration

| Step | Action | Purpose |
|---|---|---|
| 1 | Grant permissions | Authorize cloud resource access, activate DLF, and create a service-linked role |
| 2 | Create a Paimon_DLF external data source | Define the connection between MaxCompute and DLF |
| 3 | Create an external project | Map a DLF catalog to a MaxCompute project |
| 4 | Query and write data using SQL | Read and write Paimon tables in DLF-managed OSS |

Step 1: Grant permissions

The following table shows which account type performs each task in this step.

| Task | Performed by |
|---|---|
| Grant RAM policies to a RAM user | Alibaba Cloud account owner |
| Authorize cloud resource access in the DLF console | Alibaba Cloud account owner |
| Activate DLF | Alibaba Cloud account owner |
| Create the MaxCompute service-linked role | Alibaba Cloud account owner |
| Grant DLF data permissions to RAM users | Data lake administrator (DLF console) |

Grant RAM policies to a RAM user

If you are using a Resource Access Management (RAM) user, attach the following policies. For instructions, see Grant permissions to a RAM user.

| Policy | Required for |
|---|---|
| AliyunRAMFullAccess | Must be granted by the Alibaba Cloud account owner if the RAM user lacks it |
| AliyunMaxComputeFullAccess | Creating external data sources and external projects |
| AliyunDLFReadOnlyAccess | Creating external projects; grants the List permission on the DLF catalog |

Authorize cloud resource access and activate DLF

- Log on to the DLF console and select your region in the upper-left corner.
- To the right of "Permissions to access cloud resources are granted.", click Authorize.
- To the right of "DLF is activated.", click Activate.

MaxCompute and DLF must be in the same region.

Create a service-linked role for MaxCompute

MaxCompute uses the AliyunServiceRoleForMaxComputeLakehouse service-linked role to access DLF data. Create this role in the RAM console:

- Log on to the RAM console.
- In the left navigation pane, choose Identities > Roles.
- On the Roles page, click Create Role.
- In the upper-right corner, click Create Service Linked Role.
- Set Select Service to AliyunServiceRoleForMaxComputeLakehouse, then click Create Service Linked Role. If a message indicates the role already exists, it is already authorized. No further action is needed.

Grant DLF data permissions to RAM users

Data plane permissions are managed in the DLF console. Log on to the DLF console and grant permissions to RAM users who need to read or write Paimon tables.

Step 2: Create a Paimon_DLF external data source

- Log on to the MaxCompute console and select your region.
- In the left navigation pane, choose Manage Configurations > External Data Source.
- Click Create External Data Source.
- Configure the following parameters:

  | Parameter | Required | Description |
  |---|---|---|
  | External Data Source Type | Required | Select Paimon_DLF |
  | External Data Source Name | Required | Starts with a letter; lowercase letters, underscores, and digits only; maximum 128 characters. Example: paimon_dlf |
  | Description | Optional | Custom description |
  | Region | Required | Defaults to the current region |
  | Authentication And Authorization | Required | Defaults to Alibaba Cloud RAM role |
  | Service-linked Role | Required | Auto-generated |
  | Endpoint | Required | Auto-generated. For the China (Hangzhou) region: cn-hangzhou-intranet.dlf.aliyuncs.com |
  | Foreign Server Supplemental Properties | Optional | Additional attributes for the external data source |

- Click OK.
- On the External Data Source page, find the new data source and click Details in the Actions column to verify the configuration.
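The naming rule for External Data Source Name can be checked before opening the console. The following is a minimal sketch in Python, not part of any MaxCompute SDK; the function name `is_valid_data_source_name` is hypothetical, and the regular expression reflects one assumed reading of the rule (the first character is a lowercase letter, and the total length is 1 to 128 characters).

```python
import re

# Assumed reading of the documented rule: starts with a (lowercase) letter,
# then lowercase letters, digits, or underscores, at most 128 characters total.
_DATA_SOURCE_NAME = re.compile(r"[a-z][a-z0-9_]{0,127}")


def is_valid_data_source_name(name: str) -> bool:
    """Return True if `name` satisfies the assumed naming rule."""
    return _DATA_SOURCE_NAME.fullmatch(name) is not None


print(is_valid_data_source_name("paimon_dlf"))   # True
print(is_valid_data_source_name("Paimon_DLF"))   # False (uppercase letters)
```

The console remains the authority on what it accepts; a local check like this only catches obvious violations early.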

Step 3: Create an external project

- Log on to the MaxCompute console and select your region.
- In the left navigation pane, choose Manage Configurations > Projects.
- On the External Project tab, click Create Project.
- Configure the following parameters and click OK:

  | Parameter | Required | Description |
  |---|---|---|
  | Project Type | Required | Defaults to External Project |
  | Region | Required | Defaults to the current region; cannot be changed |
  | Project Name (Globally Unique) | Required | Starts with a letter; letters, digits, and underscores only; 3–28 characters |
  | MaxCompute Foreign Server Type | Optional | Defaults to Paimon_DLF |
  | MaxCompute Foreign Server | Optional | Use Existing to select a previously created external data source, or Create Foreign Server to create a new one |
  | MaxCompute Foreign Server Name | Required | Select from existing data sources, or enter the name of the new one |
  | Data Catalog | Required | The DLF data catalog to attach |
  | Billing Method | Required | Subscription or Pay-as-you-go |
  | Default Quota | Required | Select an existing quota |
  | Description | Optional | Custom description |
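The Project Name rule combines a length bound with character restrictions, so it helps to see which part a candidate name violates. The sketch below is illustrative only: `project_name_problems` is a hypothetical helper, "letters" is assumed to mean ASCII letters, and global uniqueness cannot be checked locally.

```python
import string
from typing import List

# Assumption: "letters" means ASCII letters in either case.
_ALLOWED = set(string.ascii_letters + string.digits + "_")


def project_name_problems(name: str) -> List[str]:
    """List every part of the documented naming rule that `name` violates."""
    problems = []
    if not 3 <= len(name) <= 28:
        problems.append("length must be 3 to 28 characters")
    if not name or name[0] not in string.ascii_letters:
        problems.append("must start with a letter")
    if any(ch not in _ALLOWED for ch in name):
        problems.append("only letters, digits, and underscores are allowed")
    return problems


print(project_name_problems("lake_proj_01"))  # []
print(project_name_problems("my-project"))    # ['only letters, digits, and underscores are allowed']
```

An empty list means the name passes the format rule; the console still decides whether the name is globally unique.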

Step 4: Query and write data using SQL

Select a connection tool to log on to the external project, then use the following SQL commands.

List schemas

-- Enable schema syntax at the session level.

SET odps.namespace.schema=true;

SHOW schemas;

-- Sample output:

-- ID = 20250919****am4qb

-- default

-- system

-- OK

List tables in a schema

-- Replace <schema_name> with the schema name shown in the output above.

USE schema <schema_name>;

SHOW tables;

-- Sample output:

-- ID = 20250919****am4qb

-- acs:ram::<uid>:root emp

-- OK

Create a schema

-- Replace <schema_name> with your schema name.

CREATE schema <schema_name>;

Create a table and insert data

When writing TIMESTAMP data to a Paimon source table, the data is truncated based on its precision: precision 0–3 truncates to 3 decimal places, precision 4–6 truncates to 6 decimal places, and precision 7–9 truncates to 9 decimal places.
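The truncation rule maps each precision to one of three widths. The sketch below works through it in Python on a timestamp string; it is an illustration of the rule as stated, not MaxCompute or Paimon code. The function names are hypothetical, and the precision is assumed to be the declared precision of the target TIMESTAMP column.

```python
def stored_fraction_digits(precision: int) -> int:
    """Map a precision (0-9) to the number of fractional digits kept."""
    if not 0 <= precision <= 9:
        raise ValueError("precision must be between 0 and 9")
    if precision <= 3:
        return 3
    if precision <= 6:
        return 6
    return 9


def truncate_fraction(timestamp_text: str, precision: int) -> str:
    """Cut the fractional seconds to the kept width, without rounding."""
    digits = stored_fraction_digits(precision)
    whole, dot, fraction = timestamp_text.partition(".")
    return whole + dot + fraction[:digits]


print(truncate_fraction("2025-09-19 10:15:30.123456789", 2))  # 2025-09-19 10:15:30.123
print(truncate_fraction("2025-09-19 10:15:30.123456789", 5))  # 2025-09-19 10:15:30.123456
```

Digits beyond the kept width are dropped rather than rounded, so a value written with nanosecond detail to a precision 0–3 column loses everything past milliseconds.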

Command format:

-- Create a table.

CREATE TABLE [IF NOT EXISTS] <table_name>

(

<col_name> <data_type>,

...

)

[COMMENT <table_comment>]

[PARTITIONED BY (<col_name> <data_type>, ...)]

;

-- Insert data.

INSERT {INTO|OVERWRITE} TABLE <table_name> [PARTITION (<pt_spec>)] [(<col_name> [,<col_name> ...])]

<select_statement>

FROM <from_statement>

Example that creates a table and inserts rows:

CREATE TABLE schema_table(id int, name string);

INSERT INTO schema_table VALUES (101, 'Zhang San'), (102, 'Li Si');

-- Verify the data.

SELECT * FROM schema_table;

-- Result:

-- +------------+------------+

-- | id | name |

-- +------------+------------+

-- | 101 | Zhang San |

-- | 102 | Li Si |

-- +------------+------------+

If you are logged on as a RAM user, creating tables and inserting data requires DLF catalog permissions. See Data authorization management.

Read and write to the default schema

USE schema default;

SHOW tables;

-- Sample output:

-- ID = 20250919*******yg5

-- acs:ram::<uid>:root emp

-- acs:ram::<uid>:root emp_detail

-- acs:ram::<uid>:root test_table

-- OK

-- Read data.

SELECT * FROM test_table;

-- Result:

-- +------------+------------+

-- | id | name |

-- +------------+------------+

-- | 101 | Zhang San |

-- | 102 | Li Si |

-- +------------+------------+

-- Write data and verify.

INSERT INTO test_table VALUES (103, 'Wang Wu');

SELECT * FROM test_table;

-- Result:

-- +------------+------------+

-- | id | name |

-- +------------+------------+

-- | 101 | Zhang San |

-- | 102 | Li Si |

-- | 103 | Wang Wu |

-- +------------+------------+

Troubleshooting

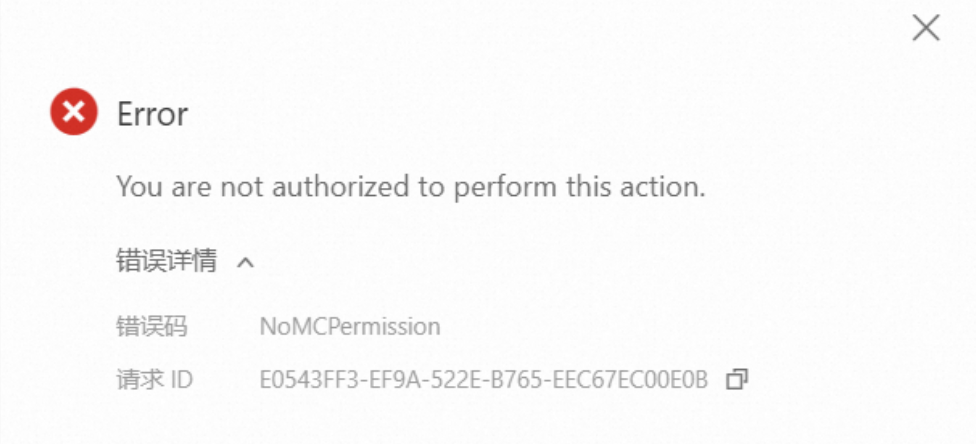

"You are not authorized to perform this action" when creating an external project

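The RAM user lacks the required control plane permissions. Check that the policies listed in Grant RAM policies to a RAM user are attached, in particular AliyunMaxComputeFullAccess and AliyunDLFReadOnlyAccess, which are required for creating external projects.

"Forbidden: User acs:ram::<uid>:user/** doesn't have privilege LIST on DATABASE default" when running SHOW TABLES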

The RAM user lacks data plane permissions on the DLF catalog.

- Log on to the DLF console. In the left navigation pane, click System And Security. On the Access Control tab, refresh the page and check whether the RAM user appears.
- If the user exists, click Roles and grant the required permissions to the RAM user.
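The two errors above correspond to the two permission layers, so the error text indicates which console to check. The following Python sketch shows one way to triage a message; the helper names are hypothetical, and the patterns are derived only from the two messages quoted in this section, so other message formats are reported as unknown.

```python
import re
from typing import Optional, Tuple

# Pattern taken from the DLF error quoted above; other formats may exist.
_DLF_DENIED = re.compile(r"doesn['’]t have privilege (\w+) on (\w+) (\S+)")
_RAM_DENIED = "You are not authorized to perform this action"


def permission_layer(message: str) -> str:
    """Guess which permission layer rejected the request."""
    if _DLF_DENIED.search(message):
        return "data plane (DLF console)"
    if _RAM_DENIED in message:
        return "control plane (RAM console)"
    return "unknown"


def missing_dlf_privilege(message: str) -> Optional[Tuple[str, ...]]:
    """Extract (privilege, object type, object name) from a DLF error."""
    match = _DLF_DENIED.search(message)
    return match.groups() if match else None


error = ("Forbidden: User acs:ram::<uid>:user/** doesn't have "
         "privilege LIST on DATABASE default")
print(permission_layer(error))       # data plane (DLF console)
print(missing_dlf_privilege(error))  # ('LIST', 'DATABASE', 'default')
```

The extracted privilege and object name indicate what to grant to the RAM user in the DLF console.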