Each DataWorks task instance running on the E-MapReduce (EMR) compute engine contains multiple EMR jobs that run in sequence. When one job fails or stays in a RUNNING state longer than expected, it blocks the entire task instance and its downstream instances. The engine O&M page gives you visibility into each individual EMR job so you can identify, stop, and remove problematic jobs before they cascade.

Limits

- Engine O&M is supported only for EMR jobs. To get O&M data, submit a ticket to upgrade your EMR execution package.

- Engine Maintenance appears in the left-side navigation pane of Operation Center only after you register an EMR cluster to your DataWorks workspace.

- If you use an exclusive resource group for scheduling, submit a ticket to upgrade its configurations. Without the upgrade, specific fields display as hyphens (-) on the engine O&M page.

Usage notes

YARN applications can be reused across EMR services. When an application is reused, the same job ID (application ID) appears for that job regardless of which DataWorks service triggered it.

- The engine O&M page shows only the first application ID generated when an EMR job runs in DataWorks.

- After a DataWorks task instance finishes, whether it succeeds or fails, the corresponding YARN application may still be in the RUNNING state.

Example: The kyuubi.engine.share.level parameter defaults to USER for EMR Kyuubi, which means that all sessions of the same user share one engine. All jobs that a user triggers on that engine share the same application ID. If you run an EMR Kyuubi task in DataStudio and later analyze the same task in DataAnalysis, no new application ID is generated; the ID generated for the DataStudio run is reused.

Additionally, kyuubi.session.engine.idle.timeout controls how long an idle engine is kept alive. With kyuubi.session.engine.idle.timeout=PT30M, the YARN application can stay in the RUNNING state for up to 30 minutes after the last job finishes. View Kyuubi parameter settings on the EMR on ECS page.
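The timeout value uses the ISO-8601 duration notation (PT30M is 30 minutes). The following is a minimal sketch, not part of DataWorks or Kyuubi, that estimates when an idle engine's YARN application would leave the RUNNING state. The function names are hypothetical, and the parser handles only the hour, minute, and second components.

```python
import re
from datetime import datetime, timedelta

# Matches the time-only subset of ISO-8601 durations, for example PT30M or PT1H30M.
_DURATION = re.compile(r"^PT(?:(\d+)H)?(?:(\d+)M)?(?:(\d+)S)?$")


def parse_idle_timeout(value: str) -> timedelta:
    """Convert a duration such as 'PT30M' into a timedelta (hypothetical helper)."""
    match = _DURATION.match(value)
    if not match or not any(match.groups()):
        raise ValueError(f"unsupported duration: {value!r}")
    hours, minutes, seconds = (int(g) if g else 0 for g in match.groups())
    return timedelta(hours=hours, minutes=minutes, seconds=seconds)


def expected_engine_exit(last_job_finished: datetime, idle_timeout: str) -> datetime:
    """Estimate when the idle engine's YARN application stops being RUNNING."""
    return last_job_finished + parse_idle_timeout(idle_timeout)


finished = datetime(2024, 1, 1, 10, 0, 0)
print(expected_engine_exit(finished, "PT30M"))  # 2024-01-01 10:30:00
```

If the application is still RUNNING well after this estimated time, the job is more likely stuck than idle.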

Prerequisites

Before you begin, ensure that you have:

- An EMR cluster registered to your DataWorks workspace. See Register an EMR cluster to DataWorks.

- Relevant EMR tasks run in DataWorks. See Usage notes for development of EMR nodes in DataWorks.

Go to the engine O&M page

- Log on to the DataWorks console. In the top navigation bar, select the target region. In the left-side navigation pane, choose Data Development and O&M > Operation Center.

- Select the target workspace from the drop-down list and click Go to Operation Center.

- In the left-side navigation pane of the Operation Center page, choose Other > Engine Maintenance > E-MapReduce.

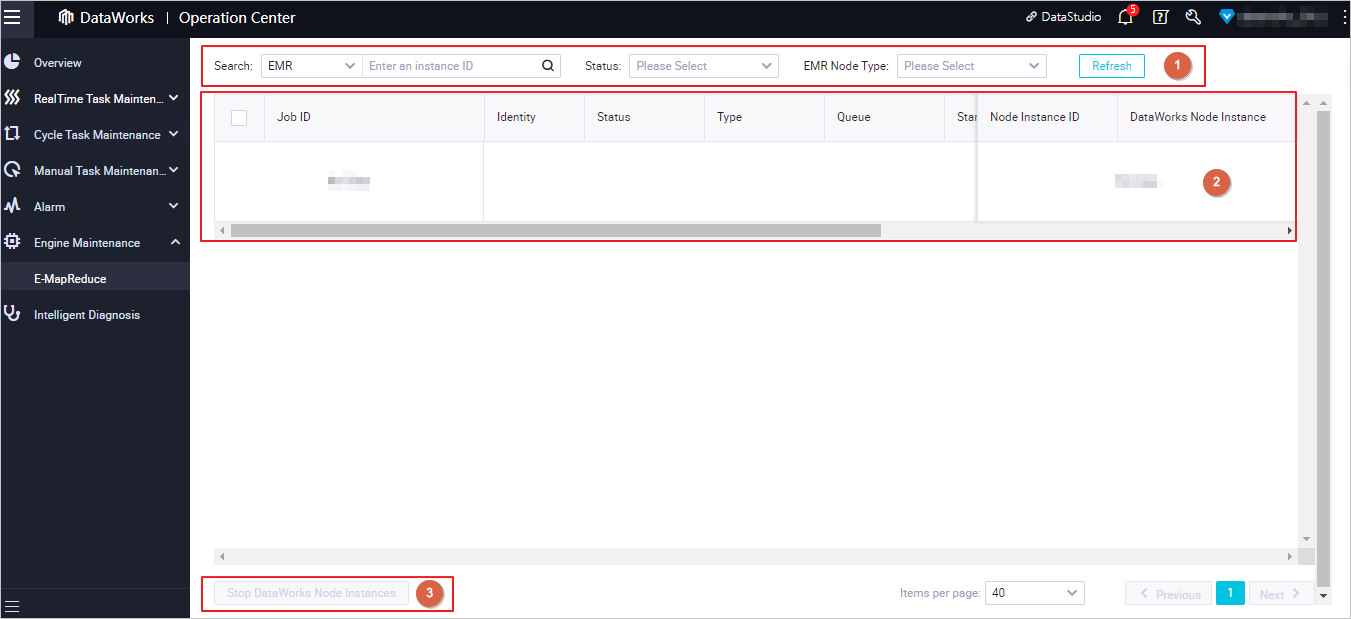

View and search for EMR jobs

The engine O&M page lists all EMR jobs created across DataWorks workspaces in the current region.

By default, the page shows data from the previous three days. Use the filters at the top of the page (Area 1) to narrow results by job ID, job type, DataWorks instance ID, and other conditions.

Searching by job ID or DataWorks instance ID queries only instances from the previous seven days. The DataWorks Instance ID field accepts only IDs of instances run in Operation Center.

Each job row (Area 2) shows the following details:

| Field | Description |
|---|---|
| Job ID | The unique identifier for the EMR job. Click it to go to the job details page. |
| Job status | The current state of the job. See Job statuses. |
| Running duration | How long the job has been running or took to complete. |
| Job source | The DataWorks service that triggered the job (for example, DataStudio or DataAnalysis). |
| Node Instance ID | The DataWorks task instance the job belongs to. Jobs that start at different times are treated as belonging to different task instances, even if they share the same node. Tasks triggered by Data Quality, DataStudio, or DataAnalysis show hyphens (-) in this column. |
| Queue usage (%) | The percentage of YARN queue resources allocated to this job. |
| Start time / End time | Sortable columns for viewing job execution sequence and duration. |
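To make the search windows and the job fields concrete, here is a small sketch that models a job row and applies the three-day default view and the seven-day ID search described above. It is an illustration only: the class, the field names, and the choice to measure the window from the job's start time are assumptions, not a DataWorks API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass
class EmrJobRow:
    """Hypothetical model of one row on the engine O&M page."""
    job_id: str                      # YARN application ID
    status: str
    source: str                      # for example "DataStudio" or "DataAnalysis"
    node_instance_id: Optional[str]  # None where the page shows a hyphen (-)
    start_time: datetime


DEFAULT_WINDOW = timedelta(days=3)    # default list view
ID_SEARCH_WINDOW = timedelta(days=7)  # search by job ID or DataWorks instance ID


def search(rows: List[EmrJobRow], now: datetime,
           job_id: Optional[str] = None,
           node_instance_id: Optional[str] = None) -> List[EmrJobRow]:
    """Return matching rows, newest first (window measured from start_time by assumption)."""
    by_id = job_id is not None or node_instance_id is not None
    window = ID_SEARCH_WINDOW if by_id else DEFAULT_WINDOW
    matches = [
        r for r in rows
        if now - r.start_time <= window
        and (job_id is None or r.job_id == job_id)
        and (node_instance_id is None or r.node_instance_id == node_instance_id)
    ]
    return sorted(matches, key=lambda r: r.start_time, reverse=True)


# Sample values are made up for the example.
now = datetime(2024, 1, 10, 12, 0)
rows = [
    EmrJobRow("application_1_0001", "RUNNING", "DataStudio", None, now - timedelta(days=1)),
    EmrJobRow("application_1_0002", "FAILED", "Scheduled run", "1001", now - timedelta(days=5)),
]
print([r.job_id for r in search(rows, now)])                           # ['application_1_0001']
print([r.job_id for r in search(rows, now, node_instance_id="1001")])  # ['application_1_0002']
```

The five-day-old job is missing from the default view but is found when searching by instance ID, which matches the difference between the two windows.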

Job statuses

A job's final status reflects whether the job itself completed successfully. The statuses, in lifecycle order, are:

| Status | Meaning |
|---|---|
| NEW | The job was just created. |
| NEW_SAVING | The job is being saved. |
| SUBMITTED | The job has been submitted for execution. |
| ACCEPTED | The scheduling system approved the execution request. |
| RUNNING | The job is actively running. |
| FINISHED | The job finished running. |
| SUCCESSED | The job completed successfully. |
| FAILED | The job failed. Investigate immediately to prevent the failure from blocking the task instance and its downstream instances. |
| KILLED | The job was stopped by a user or administrator. |
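If you post-process status values in your own tooling, an enumeration keeps the names and their lifecycle order in one place. The sketch below mirrors the table; the grouping into terminal and stoppable statuses is an assumption made for illustration, based on the rule that only running instances can be stopped.

```python
from enum import Enum


class JobStatus(Enum):
    """Job statuses in the lifecycle order listed in the table."""
    NEW = "NEW"
    NEW_SAVING = "NEW_SAVING"
    SUBMITTED = "SUBMITTED"
    ACCEPTED = "ACCEPTED"
    RUNNING = "RUNNING"
    FINISHED = "FINISHED"
    SUCCESSED = "SUCCESSED"  # spelled as shown in the table
    FAILED = "FAILED"
    KILLED = "KILLED"


# Assumed grouping: statuses after which the job no longer changes state.
TERMINAL = {JobStatus.FINISHED, JobStatus.SUCCESSED, JobStatus.FAILED, JobStatus.KILLED}


def is_terminal(status: JobStatus) -> bool:
    """True if the job has stopped running, successfully or not."""
    return status in TERMINAL


def can_stop(status: JobStatus) -> bool:
    """Only jobs in the RUNNING state can be stopped."""
    return status is JobStatus.RUNNING


print(can_stop(JobStatus("RUNNING")))    # True
print(is_terminal(JobStatus("KILLED")))  # True
```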

Only EMR jobs of the MapReduce and Spark types are visible on this page.

Respond to job issues

Stop a stuck or long-running job

If a job stays in the RUNNING state longer than expected — for example, because of an internal error that prevents automatic termination — stop it to free up resources and unblock other jobs.

Only the workspace administrator, users with the O&M role, and task owners can stop task instances. Stopping a running job sets the entire DataWorks task instance to FAILED and blocks its downstream instances. This applies even when the task instance contains multiple EMR jobs and you terminate only one of them. Proceed with caution.

- To stop a single job, find it in the list and click Terminate Running in the Actions column.

- To stop multiple jobs at once, select the jobs and click Stop DataWorks Node Instances in the lower-left corner of the page.

Only instances in the running state can be stopped.
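The effect of stopping one job can be pictured with a toy model. The following sketch is not DataWorks code: the classes, the WAITING and BLOCKED labels, and the function names are hypothetical. It only illustrates that terminating a single running job fails the whole task instance and blocks everything downstream.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class EmrJob:
    job_id: str
    status: str = "RUNNING"


@dataclass
class TaskInstance:
    name: str
    jobs: List[EmrJob] = field(default_factory=list)
    downstream: List["TaskInstance"] = field(default_factory=list)
    status: str = "RUNNING"


def _block(instances: List[TaskInstance]) -> None:
    """Mark every downstream instance, at any depth, as blocked."""
    for inst in instances:
        inst.status = "BLOCKED"  # hypothetical label for a blocked instance
        _block(inst.downstream)


def terminate_job(instance: TaskInstance, job_id: str) -> None:
    """Stop one running job; the owning task instance fails as a whole."""
    job = next((j for j in instance.jobs if j.job_id == job_id), None)
    if job is None:
        raise KeyError(job_id)
    if job.status != "RUNNING":
        raise ValueError("only running jobs can be stopped")
    job.status = "KILLED"
    instance.status = "FAILED"
    _block(instance.downstream)


report = TaskInstance("daily_report", status="WAITING")
etl = TaskInstance(
    "daily_etl",
    jobs=[EmrJob("application_1_0001", "FINISHED"), EmrJob("application_1_0002")],
    downstream=[report],
)
terminate_job(etl, "application_1_0002")
print(etl.status, report.status)  # FAILED BLOCKED
```

Because the impact reaches past the job you stop, check which task instance a job belongs to and what depends on it before you terminate it.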

Investigate and recover from a failed job

When a job is in the FAILED state, take the following steps to identify the cause and resume execution:

- Click the job ID or Node Instance ID to open the details page and start troubleshooting.

- Alternatively, click the service button in the Actions column (for example, DataStudio) to go directly to the service page where the job was triggered.

Access permissions for service pages:

- DataAnalysis: Only file owners can view SQL query files.

- DataStudio: All workspace developers can view the instance. Only the user who triggered it can view the running history.