Data Integration in DataWorks provides the MongoDB Writer plugin, which writes data read from other data sources into MongoDB. This topic walks you through an offline sync from MaxCompute to MongoDB using a batch synchronization node.

Prerequisites

Before you begin, ensure that you have:

- An activated DataWorks instance with a MaxCompute data source configured.
- An exclusive resource group for Data Integration. For setup instructions, see Use an exclusive resource group for Data Integration. A general-purpose resource group also works; for details, see Use a Serverless resource group.

Prepare sample data tables

Prepare the MaxCompute source table

- Create a partitioned table named test_write_mongo with the partition field pt.

CREATE TABLE IF NOT EXISTS test_write_mongo(
    id STRING,
    col_string STRING,
    col_int int,
    col_bigint bigint,
    col_decimal decimal,
    col_date DATETIME,
    col_boolean boolean,
    col_array string
)
PARTITIONED BY (pt STRING)
LIFECYCLE 10;

- Add a partition with the value 20230215.

insert into test_write_mongo partition (pt='20230215')
values ('11','name11',1,111,1.22,cast('2023-02-15 15:01:01' as datetime),true,'1,2,3');

- Verify the table.

SELECT * FROM test_write_mongo WHERE pt='20230215';

Prepare the MongoDB destination collection

This example uses ApsaraDB for MongoDB. Create a collection named test_write_mongo.

db.createCollection('test_write_mongo')

Configure the offline sync task

Step 1: Add a MongoDB data source

Add a MongoDB data source and verify network connectivity between the data source and the exclusive resource group for Data Integration. For details, see Configure a MongoDB data source.

Step 2: Create and configure an offline sync node

In DataStudio, create an offline sync node and configure the source and destination. For parameters not covered below, use the default values. For a full parameter reference, see Configure a sync task in the codeless UI.

Configure network connectivity

Select the MongoDB and MaxCompute data sources you created. Select the exclusive resource group for Data Integration you configured. Then test the network connectivity.

Select data sources

Select the MaxCompute partitioned table as the source and the MongoDB collection as the destination.

The following parameters control write behavior:

| Parameter | Value | Behavior |
|---|---|---|
| Write mode (overwrite) | No (default) | Inserts each record as a new document. |
| Write mode (overwrite) | Yes | Overwrites documents that match the business primary key. You must specify a business primary key in this mode. |
| Business primary key | A single field name | The field used to match documents for overwrite. Only one field can be specified. This is usually the MongoDB primary key (_id). |
| Pre-import statements | JSON object | Runs before data is written. See Pre-import statement format below. |

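If Write mode is set to Yes and you specify a field other than _id as the business primary key, the following runtime error may occur: After applying the update, the (immutable) field '_id' was found to have been altered to _id: "2". This happens when the written data contains records whose _id is inconsistent with the replaceKey (the business primary key): a record matches an existing document on the business primary key but carries a different _id value, and MongoDB does not allow the _id of an existing document to change. For details, see Error: After applying the update, the (immutable) field '_id' was found to have been altered to _id: "2".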
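To make the two write modes and the _id error concrete, the following JavaScript sketch models them against an in-memory array instead of a real collection. It is a hypothetical model, not the plugin's implementation: `writeRecords`, `overwrite`, and `replaceKey` are illustrative names, and it assumes that overwrite mode replaces the whole matched document and inserts the record when no document matches. The one behavior it borrows from MongoDB itself is that a replacement may not change the _id of the matched document.

```javascript
// Hypothetical in-memory model of the two write modes described above.
// NOT the MongoDB Writer implementation; it only mirrors MongoDB's rule
// that a replacement may not change the _id of the matched document.

let nextId = 1;

// Insert mode ("No"): every record becomes a new document.
// Assumption: records without _id get a generated one, as MongoDB would.
function insertRecord(collection, record) {
  const doc = { ...record };
  if (doc._id === undefined) {
    doc._id = `auto-${nextId++}`;
  }
  collection.push(doc);
}

// Overwrite mode ("Yes"): replace the document whose replaceKey matches.
// Assumption: a record that matches nothing is inserted (upsert-like).
function overwriteRecord(collection, record, replaceKey) {
  if (replaceKey === undefined) {
    throw new Error("overwrite mode requires a business primary key");
  }
  const index = collection.findIndex((doc) => doc[replaceKey] === record[replaceKey]);
  if (index === -1) {
    insertRecord(collection, record);
    return;
  }
  const existing = collection[index];
  if (record._id !== undefined && record._id !== existing._id) {
    // Same condition that makes MongoDB reject the replacement.
    throw new Error(
      `After applying the update, the (immutable) field '_id' was found to have been altered to _id: "${record._id}"`
    );
  }
  // A replacement without _id keeps the stored _id.
  collection[index] = { ...record, _id: existing._id };
}

function writeRecords(collection, records, { overwrite = false, replaceKey } = {}) {
  for (const record of records) {
    if (overwrite) {
      overwriteRecord(collection, record, replaceKey);
    } else {
      insertRecord(collection, record);
    }
  }
  return collection;
}

const coll = [{ _id: "1", id: "11", col_string: "old" }];

// Record matches on id and has no _id: the stored _id "1" is kept.
writeRecords(coll, [{ id: "11", col_string: "new" }], { overwrite: true, replaceKey: "id" });

// Record matches on id but carries _id "2": rejected, like the error above.
try {
  writeRecords(coll, [{ _id: "2", id: "11", col_string: "x" }], { overwrite: true, replaceKey: "id" });
} catch (err) {
  console.log(err.message);
}
```

Using _id itself as the business primary key avoids the mismatch case entirely, because a record can then only match a document with the same _id.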

Pre-import statement format

The pre-import statement is a JSON object with a required type field. Valid values are remove and drop. The value must be lowercase.

| type | json field | Description |
|---|---|---|
| remove | Required. Standard MongoDB query syntax. See Query Documents. | Deletes documents that match the query. |
| drop | Not required | Drops the collection before writing. |
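The rules for the pre-import statement can be expressed as a small validator plus an in-memory model of what each statement does. This JavaScript sketch is illustrative only: `validatePreImport` and `applyPreImport` are hypothetical names, the sketch takes the json query as a JavaScript object for simplicity, and its query matching supports plain equality on top-level fields only, not the full MongoDB query language.

```javascript
// Hypothetical validator for a pre-import statement: "type" is required
// and must be the lowercase string "remove" or "drop"; "remove" also
// requires a "json" query (modeled here as a plain object).
function validatePreImport(stmt) {
  if (stmt === null || typeof stmt !== "object") {
    throw new Error("pre-import statement must be a JSON object");
  }
  if (stmt.type !== "remove" && stmt.type !== "drop") {
    // Rejects a missing type and uppercase variants such as "REMOVE".
    throw new Error(`invalid type: ${JSON.stringify(stmt.type)}`);
  }
  if (stmt.type === "remove" && (stmt.json === null || typeof stmt.json !== "object")) {
    throw new Error('type "remove" requires a "json" query');
  }
  return stmt;
}

// In-memory model of the effect on a collection (an array of documents).
// Simplification: the query supports top-level equality only.
function applyPreImport(collection, stmt) {
  validatePreImport(stmt);
  if (stmt.type === "drop") {
    return []; // the whole collection is discarded before writing
  }
  const matches = (doc) =>
    Object.entries(stmt.json).every(([field, value]) => doc[field] === value);
  return collection.filter((doc) => !matches(doc)); // "remove" deletes matches
}

const docs = [
  { _id: 1, col_string: "name10" },
  { _id: 2, col_string: "name11" },
];
// Only the document with _id 1 remains.
console.log(applyPreImport(docs, { type: "remove", json: { col_string: "name11" } }));
console.log(applyPreImport(docs, { type: "drop" }).length); // 0
```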

Configure field mapping

For MongoDB data sources, same-row mapping is used by default. To specify field types manually, click the edit icon and edit the field configuration. The following example maps each column in the source table to a MongoDB field type:

{"name":"id","type":"string"}
{"name":"col_string","type":"string"}
{"name":"col_int","type":"long"}
{"name":"col_bigint","type":"long"}
{"name":"col_decimal","type":"double"}
{"name":"col_date","type":"date"}
{"name":"col_boolean","type":"bool"}
{"name":"col_array","type":"array","splitter":","}

After you save the configuration, the field mapping is shown in the interface.
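Before pasting a column configuration, it can help to check it against the rules this topic states: the supported types listed in the appendix, and a splitter for array fields. The JavaScript sketch below does that. `checkColumns` is a hypothetical helper, and two of its rules are assumptions rather than documented plugin behavior: type names are compared case-insensitively, and duplicate field names are reported as mistakes.

```javascript
// Hypothetical lint for a MongoDB Writer column list. Assumptions: type
// names are compared case-insensitively, and duplicate field names are
// treated as mistakes. The supported types come from the appendix.
const SUPPORTED_TYPES = new Set(["int", "long", "double", "string", "bool", "date", "array"]);

function checkColumns(columns) {
  const problems = [];
  const seen = new Set();
  columns.forEach((col, i) => {
    if (typeof col.name !== "string" || col.name === "") {
      problems.push(`column ${i}: missing name`);
      return;
    }
    if (seen.has(col.name)) {
      problems.push(`column ${i}: duplicate name "${col.name}"`);
    }
    seen.add(col.name);
    const type = String(col.type).toLowerCase();
    if (!SUPPORTED_TYPES.has(type)) {
      problems.push(`column ${i}: unsupported type "${col.type}"`);
    } else if (type === "array" && typeof col.splitter !== "string") {
      problems.push(`column ${i}: array type needs a "splitter"`);
    }
  });
  return problems; // an empty array means no problems were found
}

// The column configuration from this section.
const columns = [
  { name: "id", type: "string" },
  { name: "col_string", type: "string" },
  { name: "col_int", type: "long" },
  { name: "col_bigint", type: "long" },
  { name: "col_decimal", type: "double" },
  { name: "col_date", type: "date" },
  { name: "col_boolean", type: "bool" },
  { name: "col_array", type: "array", splitter: "," },
];
console.log(checkColumns(columns)); // []
```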

Step 3: Submit and publish the sync node

If you are using a standard mode workspace and want to schedule this task periodically, submit and publish the sync node to the production environment. For details, see Publish a task.

Step 4: Run the node and verify the results

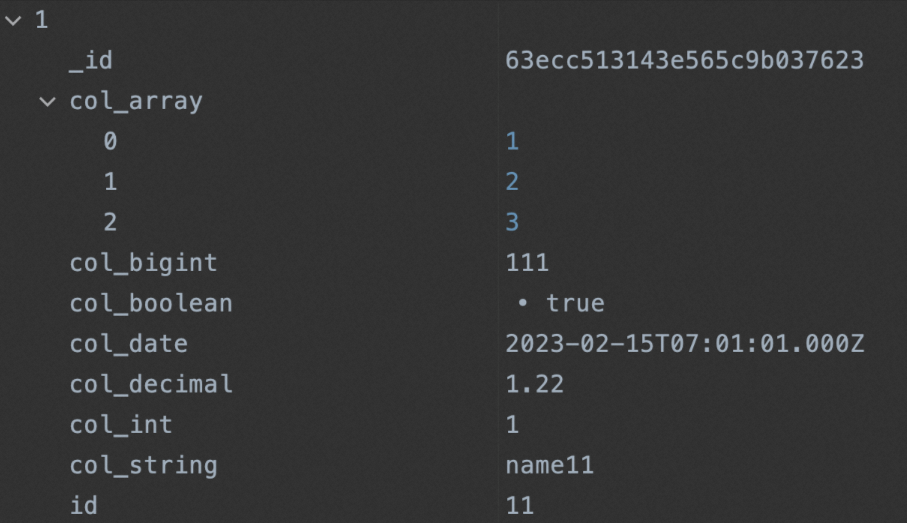

Run the sync node. After it completes successfully, verify the synchronized data in the MongoDB collection.

Appendix: Data type conversion

Supported types

The MongoDB Writer plugin supports the following types: INT, LONG, DOUBLE, STRING, BOOL, DATE, and ARRAY.

ARRAY type

Set type to array and configure the splitter property to split a delimited string into a MongoDB array.

| Input (source) | Configuration | Output (MongoDB) |
|---|---|---|
| a,b,c | "type":"array","splitter":"," | ["a","b","c"] |
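The splitter conversion amounts to splitting the source string on a delimiter. The JavaScript sketch below shows that behavior under stated assumptions: `toMongoArray` is a hypothetical name, the split is on the literal delimiter (JavaScript's `String.prototype.split` with a string argument does not treat it as a regular expression), elements are neither trimmed nor converted to numbers, and null values are passed through unchanged. How the plugin itself handles nulls, whitespace, or special characters in the splitter is not covered by this topic.

```javascript
// Hypothetical model of "type":"array" with a "splitter": split the source
// string on the literal delimiter. Elements stay strings and are not
// trimmed; null/undefined is passed through (an assumption of this sketch).
function toMongoArray(value, splitter) {
  if (value === null || value === undefined) {
    return value;
  }
  return String(value).split(splitter);
}

console.log(toMongoArray("a,b,c", ",")); // [ 'a', 'b', 'c' ]
console.log(toMongoArray("1,2,3", ",")); // [ '1', '2', '3' ] (the sample col_array value)
```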