DataWorks Data Integration provides the MongoDB Reader plugin to read data from MongoDB and synchronize it with other data sources. This tutorial walks you through syncing data from MongoDB to MaxCompute using an offline sync node.

In this tutorial, you will:

-

Prepare a MongoDB collection and a MaxCompute partitioned table with sample data

-

Add a MongoDB data source and configure network connectivity

-

Create and configure an offline sync node in DataStudio

-

Submit, publish, and run the node to verify the sync result

Prerequisites

Before you begin, make sure you have:

-

A DataWorks workspace with a MaxCompute data source configured

-

An exclusive resource group for Data Integration (must be purchased and configured). For more information, see Use an exclusive resource group for Data Integration

-

(Optional) A General-purpose resource group (Serverless resource group) as an alternative. For more information, see Use a Serverless resource group

Prepare sample data

Prepare a MongoDB collection and a MaxCompute partitioned table before configuring the sync task.

Step 1: Prepare a MongoDB collection

This tutorial uses ApsaraDB for MongoDB as an example.

-

Create a collection named

di_mongodb_conf_test.db.createCollection('di_mongodb_conf_test') -

Insert the sample data into the collection.

db.di_mongodb_conf_test.insertOne({ 'col_string':'mock string value', 'col_int32':NumberInt("1"), 'col_int32_min':NumberInt("-2147483648"), 'col_int32_max':NumberInt("2147483647"), 'col_int64':NumberLong("1234567890123456"), 'col_int64_min':NumberLong("-9223372036854775807"), 'col_int64_max':NumberLong("9223372036854775807"), 'col_decimal':NumberDecimal("9999999.4999999999"), 'col_double':9999999.99, 'col_boolean':true, 'col_timestamp':ISODate(), 'col_date':new Date(), 'col_array_to_json':['a','b'], 'col_array_to_join':['a','b'], 'col_doc':{ 'key_string':'mock string value', 'key_int32':NumberInt("1"), 'key_int32_min':NumberInt("-2147483648"), 'key_int32_max':NumberInt("2147483647"), 'key_int64':NumberLong("1234567890123456"), 'key_int64_min':NumberLong("-9223372036854775807"), 'key_int64_max':NumberLong("9223372036854775807"), 'key_decimal':NumberDecimal("9999999.4999999999"), 'key_double':9999999.99, 'key_boolean':true, 'key_timestamp':ISODate(), 'key_date':new Date(), 'key_array_to_json':['a','b'], 'key_array_to_join':['a','b'], }, 'col_extra_1':'this is extra 1', 'col_extra_2':'this is extra 2', }) -

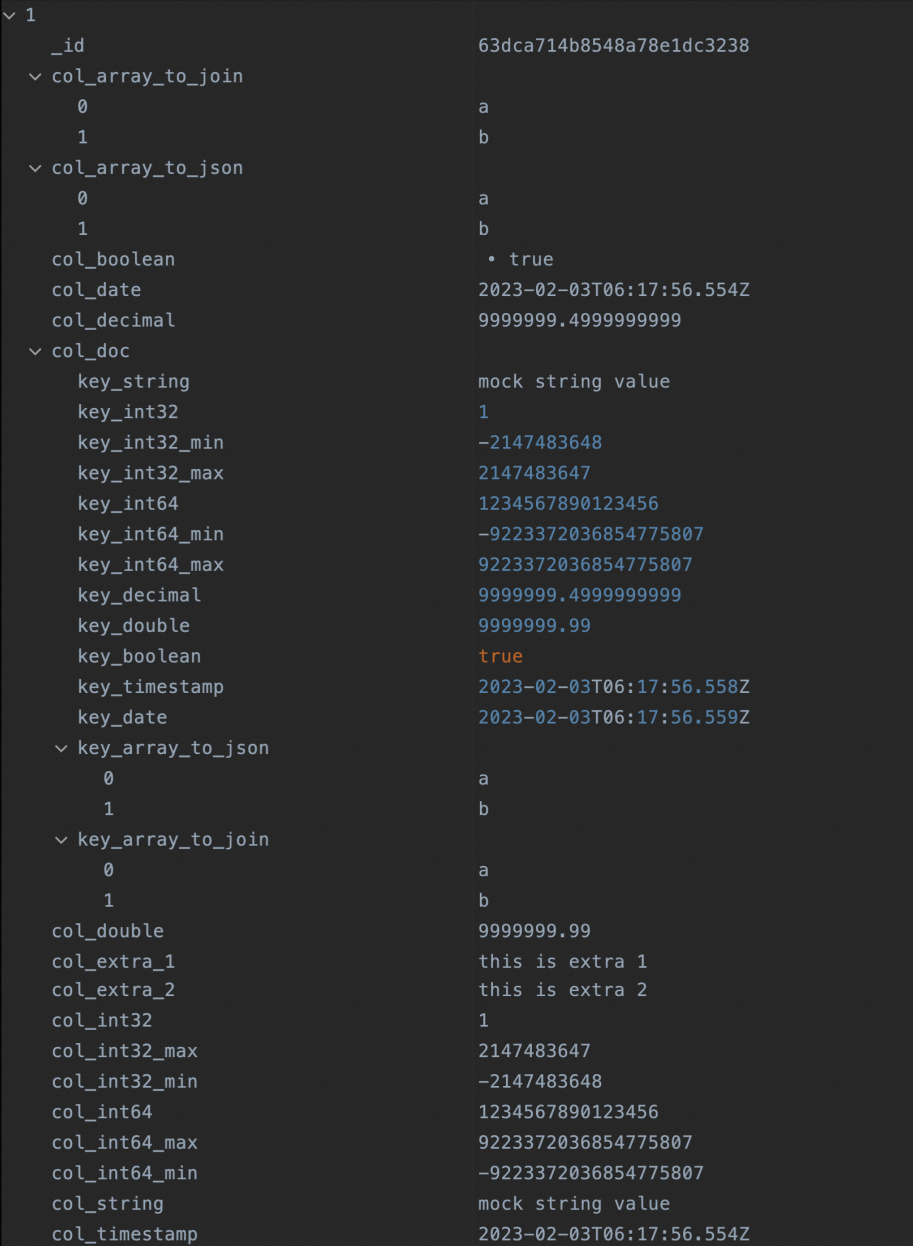

Verify the inserted data.

db.getCollection("di_mongodb_conf_test").find({})The query result is shown in the following figure:

Step 2: Prepare a MaxCompute table

-

Create a partitioned table named

di_mongodb_conf_testwith the partition fieldpt.CREATE TABLE IF NOT EXISTS di_mongodb_conf_test ( `id` STRING ,`col_string` STRING ,`col_int32` INT ,`col_int32_min` INT ,`col_int32_max` INT ,`col_int64` BIGINT ,`col_int64_min` BIGINT ,`col_int64_max` BIGINT ,`col_decimal` DECIMAL(38,18) ,`col_double` DOUBLE ,`col_boolean` BOOLEAN ,`col_timestamp` TIMESTAMP ,`col_date` DATE ,`col_array_to_json` STRING ,`col_array_to_join` STRING ,`key_string` STRING ,`key_int32` INT ,`key_int32_min` INT ,`key_int32_max` INT ,`key_int64` BIGINT ,`key_int64_min` BIGINT ,`key_int64_max` BIGINT ,`key_decimal` DECIMAL(38,18) ,`key_double` DOUBLE ,`key_boolean` BOOLEAN ,`key_timestamp` TIMESTAMP ,`key_date` DATE ,`key_array_to_json` STRING ,`key_array_to_join` STRING ,`col_doc` STRING ,`col_combine` STRING ) PARTITIONED BY ( pt STRING ) LIFECYCLE 36500 ; -

Add a partition with the value

20230202.ALTER TABLE di_mongodb_conf_test ADD IF NOT EXISTS PARTITION (pt='20230202'); -

Verify the partition was created.

SELECT * FROM di_mongodb_conf_test WHERE pt='20230202';

Configure the offline sync task

Step 1: Add a MongoDB data source

Add a MongoDB data source and verify network connectivity between the data source and the exclusive resource group for Data Integration. For more information, see Configure a MongoDB data source.

Step 2: Create and configure an offline sync node

In DataWorks DataStudio, create an offline sync node, then configure the source, destination, and field mapping. The steps below cover the key configuration points. For parameters not mentioned here, use the default values. For more information, see Configure a task in the codeless UI.

-

Configure network connectivity. Select the MongoDB and MaxCompute data sources and the exclusive resource group, then test connectivity.

-

Select data sources. Select the MongoDB collection and the MaxCompute partitioned table prepared in the previous steps.

-

Configure field mapping. For MongoDB data sources, Same-line Mapping is used by default. To manually specify fields, click the

icon and enter the field definitions. The example below covers all field types used in this tutorial.

icon and enter the field definitions. The example below covers all field types used in this tutorial.{"name":"_id","type":"string"} {"name":"col_string","type":"string"} {"name":"col_int32","type":"long"} {"name":"col_int32_min","type":"long"} {"name":"col_int32_max","type":"long"} {"name":"col_int64","type":"long"} {"name":"col_int64_min","type":"long"} {"name":"col_int64_max","type":"long"} {"name":"col_decimal","type":"double"} {"name":"col_double","type":"double"} {"name":"col_boolean","type":"boolean"} {"name":"col_timestamp","type":"date"} {"name":"col_date","type":"date"} {"name":"col_array_to_json","type":"string"} {"name":"col_array_to_join","type":"array","splitter":","} {"name":"col_doc.key_string","type":"document.string"} {"name":"col_doc.key_int32","type":"document.long"} {"name":"col_doc.key_int32_min","type":"document.long"} {"name":"col_doc.key_int32_max","type":"document.long"} {"name":"col_doc.key_int64","type":"document.long"} {"name":"col_doc.key_int64_min","type":"document.long"} {"name":"col_doc.key_int64_max","type":"document.long"} {"name":"col_doc.key_decimal","type":"document.double"} {"name":"col_doc.key_double","type":"document.double"} {"name":"col_doc.key_boolean","type":"document.boolean"} {"name":"col_doc.key_timestamp","type":"document.date"} {"name":"col_doc.key_date","type":"document.date"} {"name":"col_doc.key_array_to_json","type":"document"} {"name":"col_doc.key_array_to_join","type":"document.array","splitter":","} {"name":"col_doc","type":"string"} {"name":"col_combine","type":"combine"}After saving the manual edits, the interface displays the source-to-destination field mapping.

Step 3: Submit and publish the node

If you use a standard mode workspace and want the task to run on a schedule in the production environment, submit and publish the node. For more information, see Publish a task.

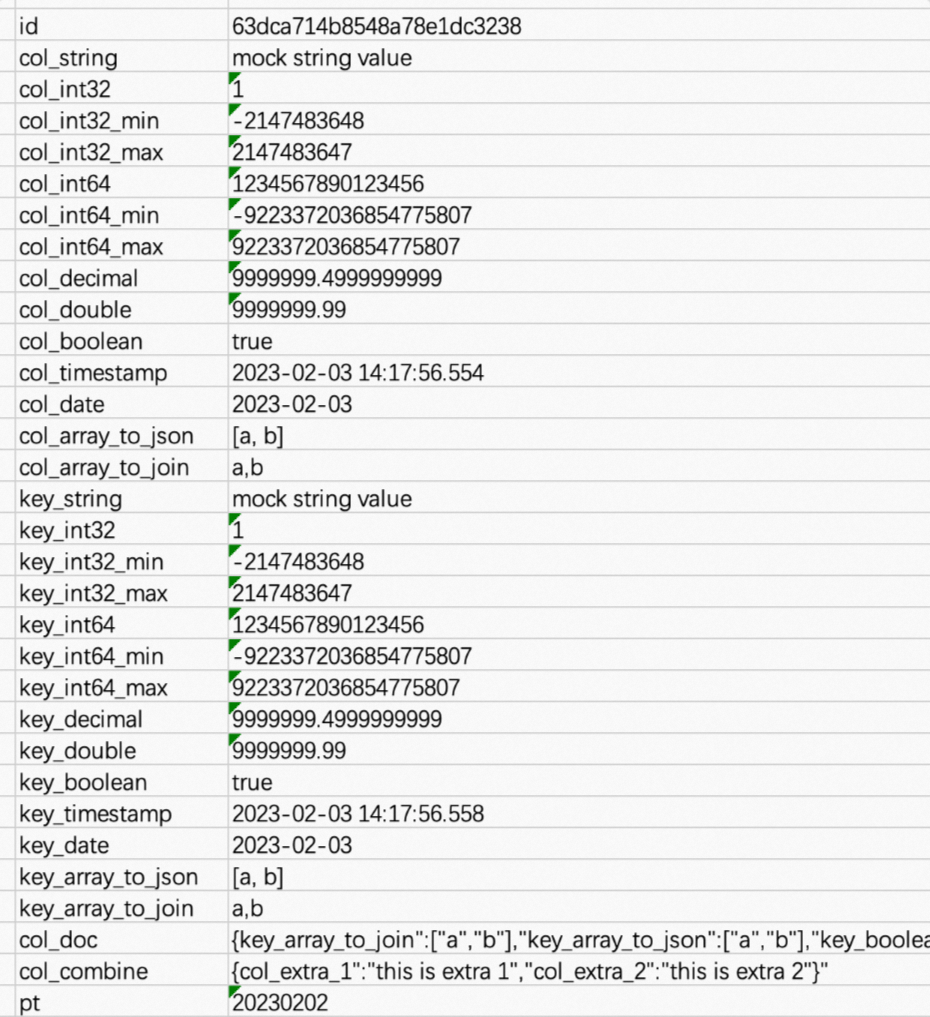

Step 4: Run the node and verify the result

Run the offline sync node. After it completes successfully, the synchronized data is available in the MaxCompute table.

-

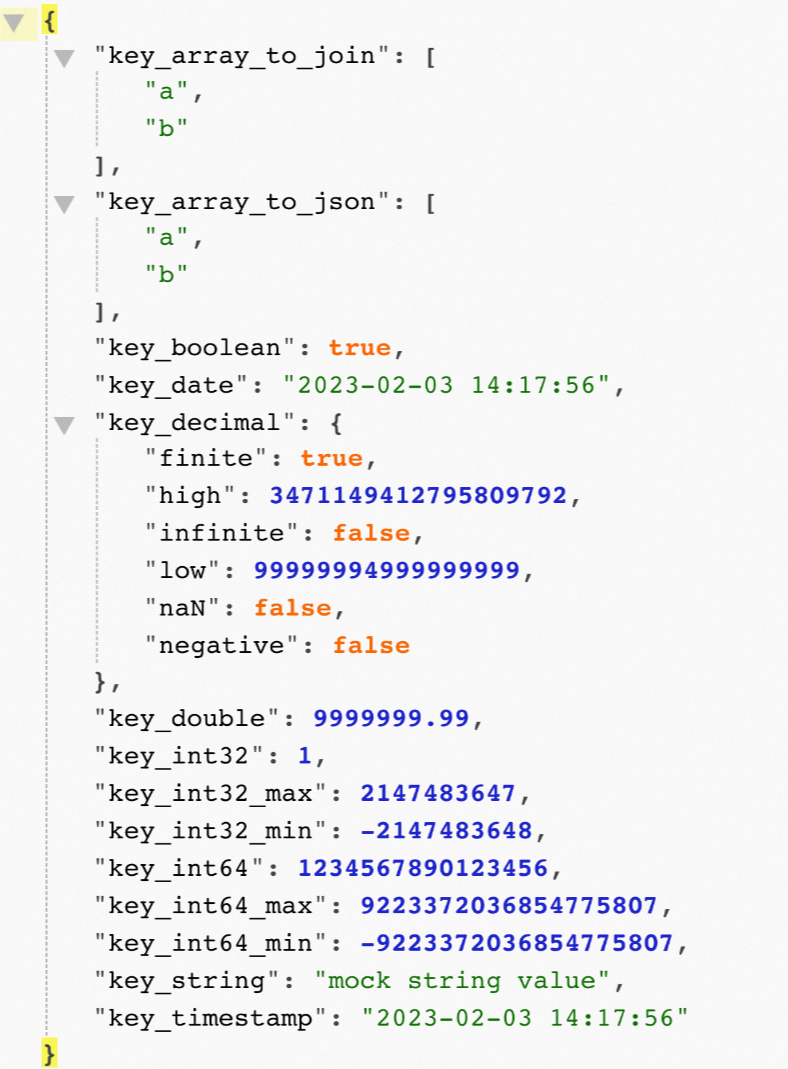

The content of the

col_docfield is shown in the following figure.

-

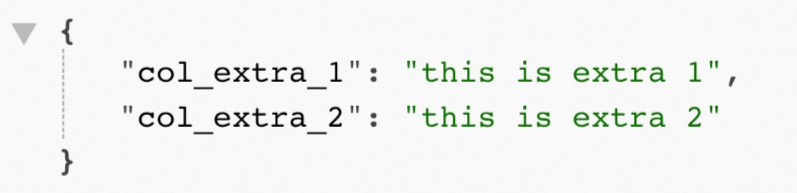

The content of the

col_combinefield is shown in the following figure.

For details on the Decimal type output, see Appendix 2: Output of the Decimal type in a document.

Appendix 1: Data format transformation reference

The MongoDB Reader plugin handles arrays, nested documents, and complex types through specific type settings in the field mapping configuration. The following table summarizes all supported transformations.

| Scenario | Raw MongoDB data | Field mapping configuration | Output to MaxCompute |

|---|---|---|---|

| Array to JSON string | {"col_array_to_json": ["a", "b"]} |

{"name":"col_array_to_json","type":"string"} — set type to string to serialize the array as JSON |

[a, b] |

| Array to concatenated string | {"col_array_to_join": ["a", "b"]} |

{"name":"col_array_to_join","type":"array","splitter":","} — set type to array and specify a splitter; elements are joined using the splitter |

a,b |

| Read a field from a nested document | {"col_doc": {"key_string": "mock string value"}} |

{"name":"col_doc.key_string","type":"document.string"} — use dot notation in name to specify the field path within the document |

mock string value |

| Serialize an entire nested document to JSON | {"col_doc": {"key_string": "mock string value", "key_int32": 1}} |

{"name":"col_doc","type":"string"} — set type to string to serialize the entire document as JSON |

{"key_string":"mockstringvalue","key_int32":1} |

| Serialize remaining fields to JSON | {"col_1": "value1", "col_2": "value2", "col_3": "value3", "col_4": "value4"} |

{"name":"col_combine","type":"combine"} — all fields not explicitly mapped are serialized to JSON |

{"col_3":"value3","col_4":"value4"} |

Appendix 2: Output of the Decimal type in a document

When a document is serialized to JSON format, a Decimal128 field is output as a JSON object by default:

{

"key_decimal":

{

"finite": true,

"high": 3471149412795809792,

"infinite": false,

"low": 99999994999999999,

"naN": false,

"negative": false

}

}To output the value as a number type, follow these steps:

-

In the offline sync task editor, click Convert to Script to switch to the code editor.

-

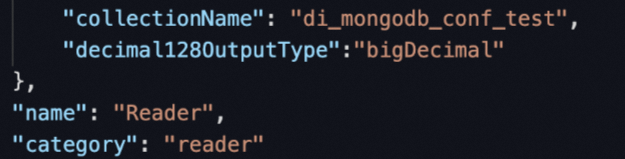

In the Reader configuration, add the

decimal128OutputTypeparameter and set its value tobigDecimal.

-

Rerun the task. The Decimal128 field is now output as a numeric string:

{ "key_decimal": "9999999.4999999999" }