Data Push lets you query data from a data source using SQL and automatically send the results to a webhook or email on a schedule. In a few steps, you write the query, compose the message, configure when and where to send it, and publish.

Overview

Data Push lets you create a push task where you can write SQL queries to define the data scope and compose the message content as Rich Text or tables. You can configure a Scheduled Task to periodically push data to a destination webhook or email address.

Supported data sources and channels

Data sources:

- MySQL (compatible with StarRocks and Doris)
- PostgreSQL (compatible with Snowflake and Amazon Redshift)
- Hologres
- MaxCompute (ODPS)
- ClickHouse

Push channels: DingTalk, Lark, WeCom, Teams, and Email.

Limitations

- Each SELECT statement returns a maximum of 10,000 rows.
- Payload size and rate limits vary by destination:
  - DingTalk: 20 KB
  - Lark: 20 KB; images must be smaller than 10 MB
  - WeCom: 20 messages per minute per bot
  - Teams: 28 KB
  - Email: one email body per push task; for other limits, see your email service provider's SMTP restrictions
- Data Push is available in the following regions: China (Hangzhou), China (Shanghai), China (Beijing), China (Shenzhen), China (Chengdu), China (Hong Kong), Singapore, Japan (Tokyo), US (Silicon Valley), US (Virginia), and Germany (Frankfurt).
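As a rough pre-flight check, you can measure a message against the size limits listed above before you configure it. The sketch below assumes that 1 KB means 1,024 bytes and that the limit applies to the UTF-8 encoded message text; neither detail is stated in this document, and `fits_payload_limit` is a hypothetical helper, not part of Data Push.

```python
# Size limits taken from the Limitations list, in bytes.
# Assumption: 1 KB = 1024 bytes.
PAYLOAD_LIMIT_BYTES = {
    "DingTalk": 20 * 1024,
    "Lark": 20 * 1024,
    "Teams": 28 * 1024,
}


def fits_payload_limit(message: str, channel: str) -> bool:
    """Return True if the UTF-8 encoded message is within the channel's limit."""
    limit = PAYLOAD_LIMIT_BYTES[channel]
    return len(message.encode("utf-8")) <= limit


# A report of 900 identical table rows, checked against two channels.
report = "| department | revenue |\n" * 900
print("DingTalk:", fits_payload_limit(report, "DingTalk"))
print("Teams:", fits_payload_limit(report, "Teams"))
```

Measuring encoded bytes rather than characters matters for non-ASCII content, where one character can occupy several bytes.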

Prerequisites

Before you begin, make sure you have:

- A data source created in DataWorks. See Manage data sources.
- Public network access enabled for the resource group. See Network connectivity solutions.

Step 1: Create a push task

-

Log on to the DataWorks console and switch to the region where your data source is located.

-

In the left-side navigation pane, choose Data Analysis and Service > DataService Studio. Select your workspace and click Go to DataService Studio.

-

In DataService Studio, choose Service Development > Data Push in the left-side navigation pane.

-

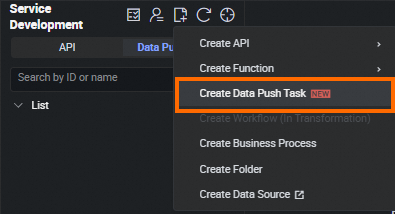

On the Data Push page, click the

icon, select Create Data Push Task, enter a name, and click OK.

icon, select Create Data Push Task, enter a name, and click OK.

Step 2: Configure the push task

Prepare sample data (optional)

The following sections walk through an example that pushes daily sales totals per department — and the day-over-day change — to a specified channel. The example uses a MaxCompute table named sales.

To follow along, create the sales table in your environment. For details on creating a MaxCompute table, see Create and use a MaxCompute table.

    CREATE TABLE IF NOT EXISTS sales (
        id BIGINT COMMENT 'Unique identifier',
        department STRING COMMENT 'Department name',
        revenue DOUBLE COMMENT 'Revenue amount'
    ) PARTITIONED BY (ds STRING);

    -- Insert sample data into partitions
    INSERT INTO TABLE sales PARTITION(ds='20240101')(id, department, revenue) VALUES (1, 'Dept. 1', 12000.00);
    INSERT INTO TABLE sales PARTITION(ds='20240101')(id, department, revenue) VALUES (2, 'Dept. 2', 21000.00);
    INSERT INTO TABLE sales PARTITION(ds='20240101')(id, department, revenue) VALUES (3, 'Dept. 3', 5000.00);
    INSERT INTO TABLE sales PARTITION(ds='20240102')(id, department, revenue) VALUES (1, 'Dept. 1', 11000.00);
    INSERT INTO TABLE sales PARTITION(ds='20240102')(id, department, revenue) VALUES (2, 'Dept. 2', 20000.00);
    INSERT INTO TABLE sales PARTITION(ds='20240102')(id, department, revenue) VALUES (3, 'Dept. 3', 10000.00);

Select a data source

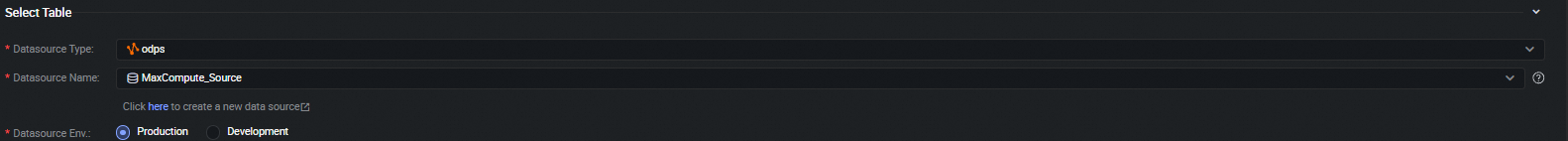

Select the Data source type, Data source name, and Data source env. If you're following the example, select the environment where you created the sales table.

For supported data source types, see Supported data sources and channels.

Write the query SQL

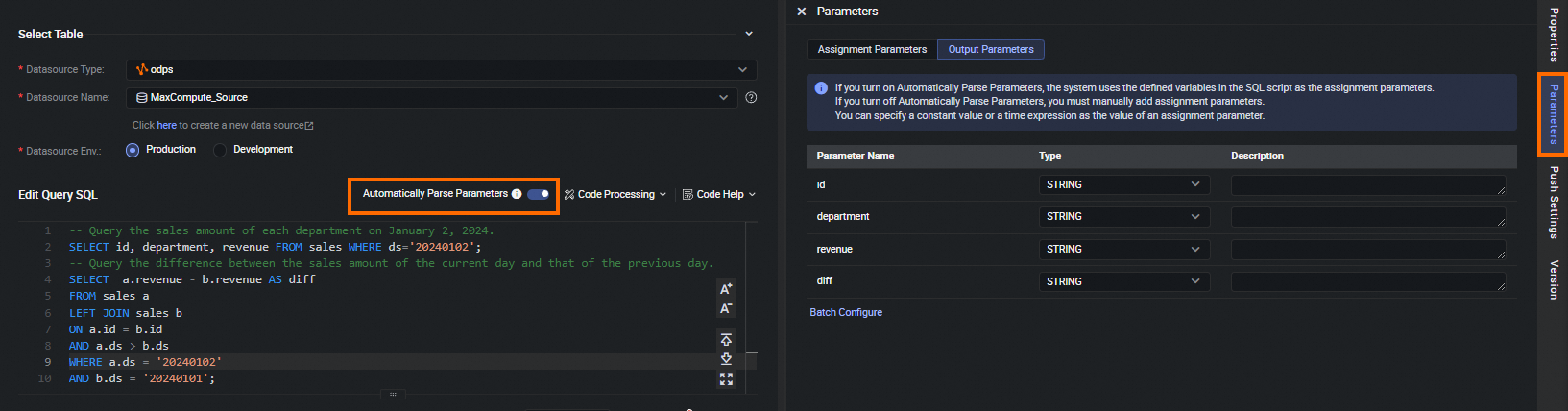

In the Edit Query SQL section, write the SQL queries that define the data to push.

Static query example — retrieve sales data for a specific date:

-- Sales amount per department on 2024-01-02

SELECT id, department, revenue FROM sales WHERE ds='20240102';

-- Day-over-day revenue change

SELECT a.revenue - b.revenue AS diff

FROM sales a

LEFT JOIN sales b ON a.id = b.id AND a.ds > b.ds

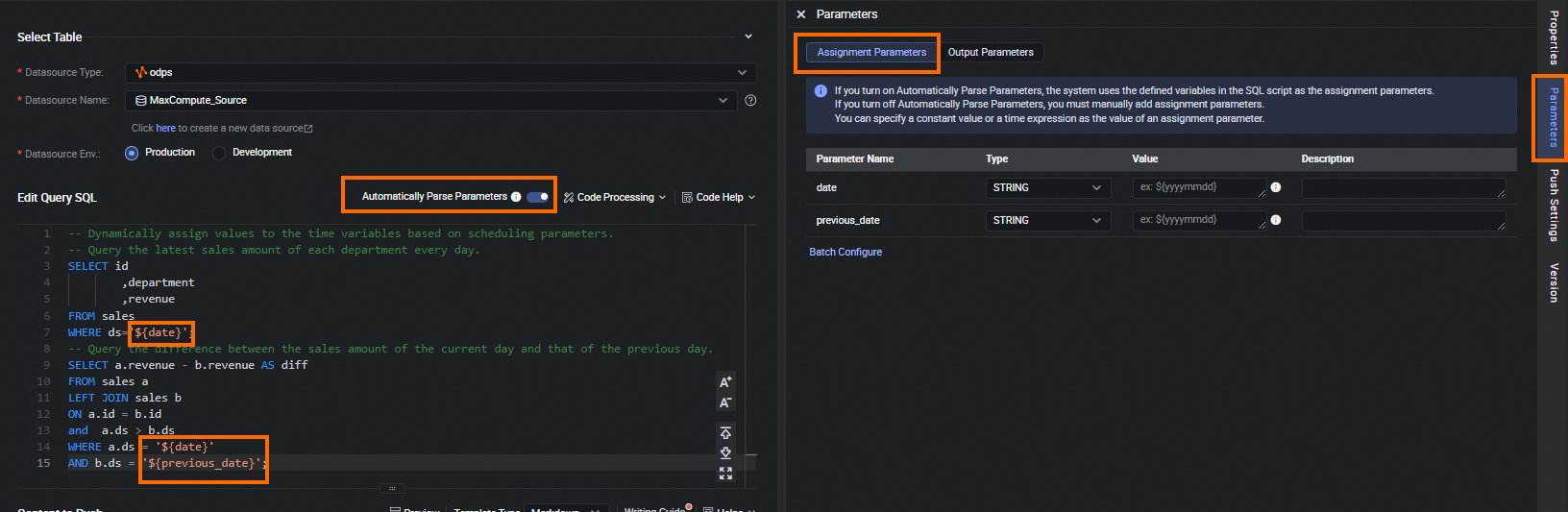

WHERE a.ds = '20240102' AND b.ds = '20240101';After you write the SQL, the query result fields are automatically added to Parameters > Output Parameters. If parsing fails or the results are incorrect, turn off Automatically Parse Parameters and add parameters manually.
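To see what the day-over-day query returns for the sample data, you can run it locally. The sketch below uses an in-memory SQLite database as a stand-in for MaxCompute, so `ds` is an ordinary column rather than a partition. The `department` column and the `ORDER BY` clause are additions for readability and are not part of the original query.

```python
import sqlite3

# In-memory SQLite stand-in for the MaxCompute sales table (ds is a plain column).
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE sales (id INTEGER, department TEXT, revenue REAL, ds TEXT)"
)
conn.executemany(
    "INSERT INTO sales VALUES (?, ?, ?, ?)",
    [
        (1, "Dept. 1", 12000.0, "20240101"),
        (2, "Dept. 2", 21000.0, "20240101"),
        (3, "Dept. 3", 5000.0, "20240101"),
        (1, "Dept. 1", 11000.0, "20240102"),
        (2, "Dept. 2", 20000.0, "20240102"),
        (3, "Dept. 3", 10000.0, "20240102"),
    ],
)

# Same join as the static example, with department added for readability.
DIFF_SQL = """
SELECT a.department, a.revenue - b.revenue AS diff
FROM sales a
LEFT JOIN sales b ON a.id = b.id AND a.ds > b.ds
WHERE a.ds = '20240102' AND b.ds = '20240101'
ORDER BY a.id
"""

diffs = conn.execute(DIFF_SQL).fetchall()
for department, diff in diffs:
    print(department, diff)
```

Because the WHERE clause filters on `b.ds`, rows without a match on the previous day are dropped, so the LEFT JOIN behaves like an inner join here.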

Dynamic query example — use scheduling parameters to run the same query daily:

Use ${variable_name} syntax to define variables in SQL. These become Assignment Parameters, which you can assign a constant value or a scheduling parameter's date and time expression.

    -- Sales amount per department, dynamically assigned date
    SELECT id, department, revenue FROM sales WHERE ds='${date}';

    -- Day-over-day change using scheduling parameters
    SELECT a.revenue - b.revenue AS diff
    FROM sales a
    LEFT JOIN sales b ON a.id = b.id AND a.ds > b.ds
    WHERE a.ds = '${date}' AND b.ds = '${previous_date}';
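To check what a dynamic query looks like once its variables are assigned, you can reproduce the substitution locally. The sketch below uses Python's `string.Template`, which happens to share the `${name}` placeholder syntax. It only mimics the substitution; it is not how Data Push resolves Assignment Parameters, and `render_query` is a hypothetical helper.

```python
from datetime import date, timedelta
from string import Template

# The day-over-day query from the dynamic example, as a Template.
QUERY = Template(
    "SELECT a.revenue - b.revenue AS diff "
    "FROM sales a "
    "LEFT JOIN sales b ON a.id = b.id AND a.ds > b.ds "
    "WHERE a.ds = '${date}' AND b.ds = '${previous_date}'"
)


def render_query(run_day: date) -> str:
    """Fill ${date} and ${previous_date} with yyyymmdd strings for run_day."""
    previous_day = run_day - timedelta(days=1)
    return QUERY.substitute(
        date=run_day.strftime("%Y%m%d"),
        previous_date=previous_day.strftime("%Y%m%d"),
    )


print(render_query(date(2024, 1, 2)))
```

Deriving the previous day with `timedelta` instead of string arithmetic keeps month and year boundaries correct.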

Segmented queries — for large tables, use the Next Token method to query data in segments. Click Code Help > Code Template > Next Token in the code editor toolbar for usage instructions.
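The Next Token code template is not reproduced in this document. As a general illustration of token-based segmentation, the sketch below pages through a table by remembering the last `id` returned and passing it back as the token for the next segment. It runs against an in-memory SQLite table and assumes that `id` is a unique, positive integer; `fetch_segment` and `fetch_all` are hypothetical names, and the actual template may work differently.

```python
import sqlite3
from typing import Iterator, List, Optional, Tuple

Row = Tuple[int, str, float]


def fetch_segment(
    conn: sqlite3.Connection, next_token: Optional[int], page_size: int
) -> Tuple[List[Row], Optional[int]]:
    """Return one segment of rows plus the token for the following segment."""
    last_id = 0 if next_token is None else next_token  # assumes ids start above 0
    rows = conn.execute(
        "SELECT id, department, revenue FROM sales "
        "WHERE id > ? ORDER BY id LIMIT ?",
        (last_id, page_size),
    ).fetchall()
    # A full segment may have more rows behind it; a short one is the last.
    token = rows[-1][0] if len(rows) == page_size else None
    return rows, token


def fetch_all(conn: sqlite3.Connection, page_size: int) -> Iterator[Row]:
    """Iterate over every row, one segment at a time."""
    token: Optional[int] = None
    while True:
        rows, token = fetch_segment(conn, token, page_size)
        yield from rows
        if token is None:
            break


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (id INTEGER, department TEXT, revenue REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?, ?)",
    [(i, f"Dept. {i}", 1000.0 * i) for i in range(1, 6)],
)
for row in fetch_all(conn, page_size=2):
    print(row)
```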

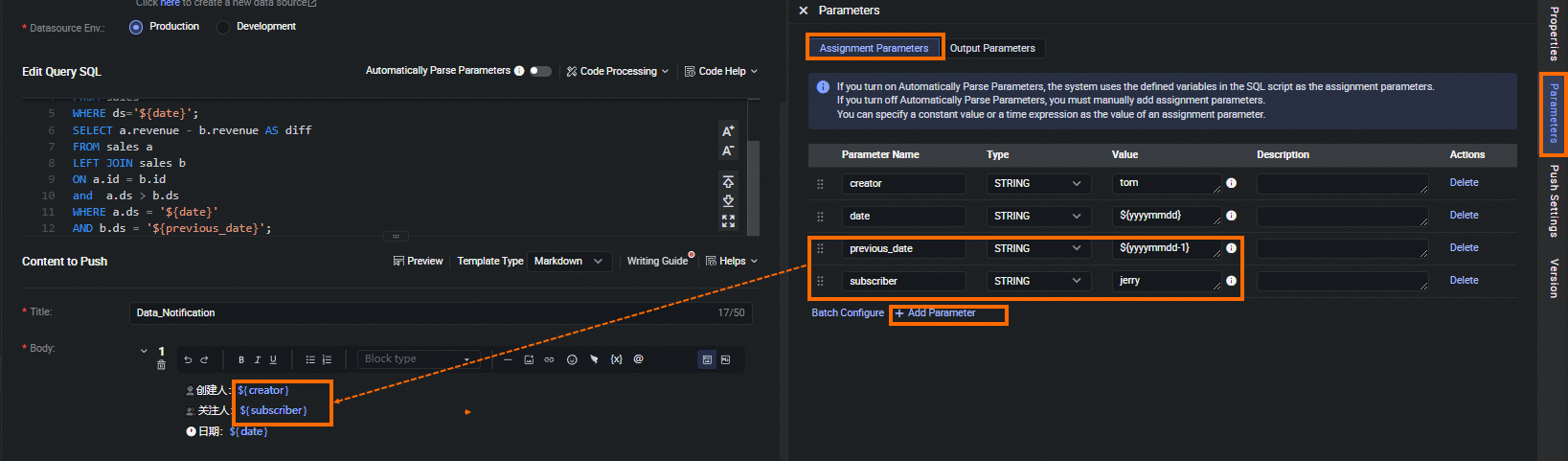

Compose the push content

In the Content to Push section, set a message title in the Title field, then click Add to choose the content type: Markdown, Table, or Email Body.

Click Preview in the toolbar to see how the message will look before sending.

If the destination is an email, Markdown and Table content is sent as attachments. Table content is also rendered and displayed in the email body.

If the destination is a webhook, Markdown and Table content appears as the message body. Email Body content is hidden.

Markdown content

Embed parameters in Markdown using ${parameter_name} syntax:

- Assignment Parameters: assign a constant or a scheduling date/time expression in Parameters > Assignment Parameters.
- Output Parameters: column names or aliases from your SQL query (for example, A and B from SELECT A, B FROM table). These are replaced with query results when the task runs.

You can also:

- @mention members in Lark: By default, Markdown mode uses Rich Text. To use <at id="all" /> or <at email="username@example.com" /> tags, click the icon to switch to Markdown source mode.
- Add images or insert DingTalk Emojis.

Table content

Click Add Column to add columns and associate Parameters with them.

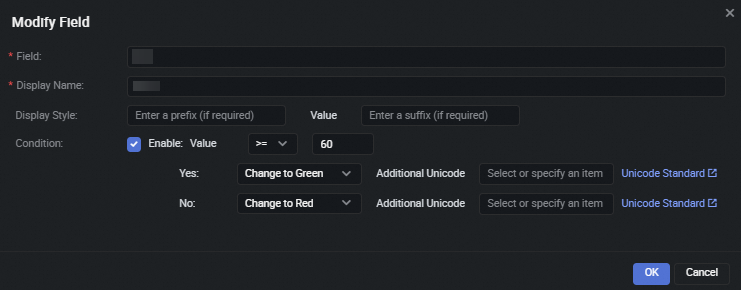

When the destination is a Lark webhook, click the icon next to a column to open Modify Field and customize the display:

| Setting | Description |
|---|---|
| Field | Switch to a different Output Parameters field |
| Display Name | The column header displayed in the pushed table |
| Display Style | Add a fixed prefix or suffix to the value |
| Condition | Compare the value against a configured threshold; customize the display color and add Unicode characters based on whether the condition is met |
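The combined effect of Display Style and Condition on a single cell can be pictured as follows. This is only an illustration: `format_cell` is a hypothetical function, the comparison is assumed to be "greater than or equal to", the markers are arbitrary Unicode characters, and display color is left out.

```python
from typing import Optional


def format_cell(
    value: float,
    prefix: str = "",
    suffix: str = "",
    threshold: Optional[float] = None,
    met_mark: str = " ▲",
    unmet_mark: str = " ▼",
) -> str:
    """Apply a fixed prefix/suffix, then append a marker based on the threshold."""
    text = f"{prefix}{value:.2f}{suffix}"
    if threshold is None:
        return text  # no Condition configured
    return text + (met_mark if value >= threshold else unmet_mark)


# Day-over-day differences from the sample data, compared against 0.
for diff in (-1000.0, 5000.0):
    print(format_cell(diff, suffix=" USD", threshold=0.0))
```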

Table rendering support by channel:

- DingTalk: Supports Markdown tables and Data Push's built-in tables. However, it does not support the Display Style and Condition settings. The DingTalk mobile app does not support displaying tables.
- Lark: Supports both Markdown tables and Data Push's built-in tables, and can render them.
- WeCom: Supports pushing Markdown tables but does not render them.
- Teams mobile app: Supports Markdown tables and can render them.
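For channels that render Markdown tables, the table text can be produced from query rows with a few lines of code. The sketch below shows one way to do that; `to_markdown_table` is a hypothetical helper, and the output is plain pipe-table Markdown rather than Data Push's built-in table format.

```python
from typing import Iterable, Sequence


def to_markdown_table(headers: Sequence[str], rows: Iterable[Sequence[object]]) -> str:
    """Render headers and rows as a Markdown pipe table."""

    def escape(cell: object) -> str:
        # A literal pipe would otherwise be read as a column separator.
        return str(cell).replace("|", "\\|")

    lines = [
        "| " + " | ".join(escape(h) for h in headers) + " |",
        "|" + "|".join("---" for _ in headers) + "|",
    ]
    for row in rows:
        lines.append("| " + " | ".join(escape(c) for c in row) + " |")
    return "\n".join(lines)


print(
    to_markdown_table(
        ["department", "revenue"],
        [("Dept. 1", 11000.0), ("Dept. 2", 20000.0), ("Dept. 3", 10000.0)],
    )
)
```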

Email body

Each push task supports only one email body. The email body is rendered only when the destination is an email address — it is hidden for webhook destinations.

Step 3: Configure push settings

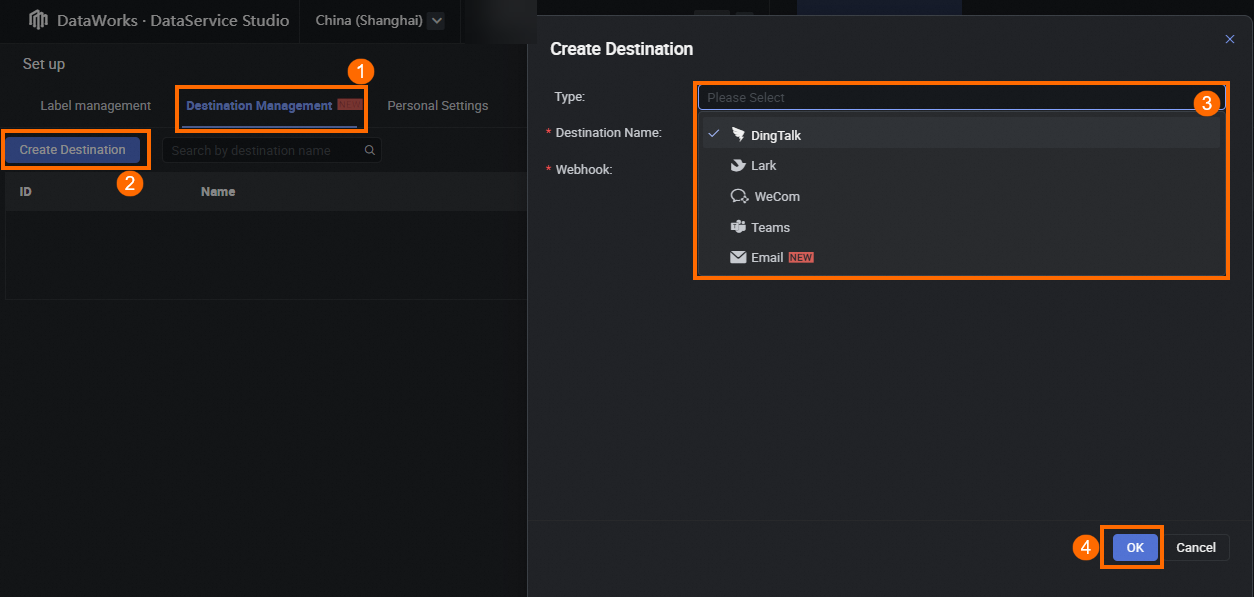

Before configuring push settings, create a destination. In DataService Studio, click the icon in the lower-left corner to open the settings panel, switch to the Destination Management tab, and click Create Destination.

Create a webhook destination

Configure the following parameters:

| Parameter | Description |
|---|---|
| Type | Select the channel: DingTalk, Lark, WeCom, or Teams |
| Destination Name | A custom name for this destination |
| Webhook | The webhook URL for the selected channel |

To get a Lark bot webhook URL, see Configure a Lark Webhook trigger.

To get a Teams webhook URL, see Create Incoming Webhooks using Microsoft Teams workflow.

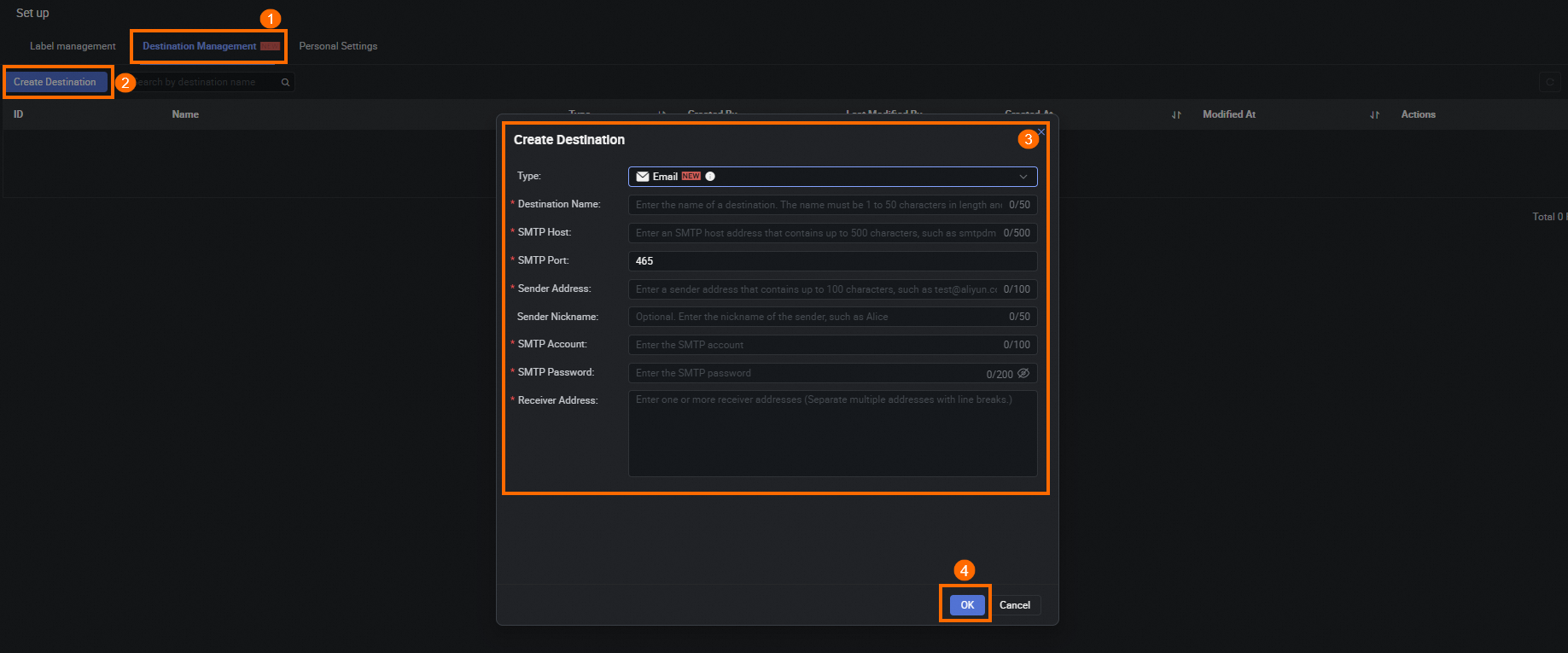

Create an email destination

Configure the following parameters:

| Parameter | Description |
|---|---|
| Type | Select Email |
| Destination Name | A custom name for this destination |
| SMTP Host | The email server address |
| SMTP Port | The email server port (default: 465, which can be modified) |
| Sender Address | The sender email address |
| SMTP Account | The full email account name |
| SMTP Password | The password for the email account |
| Receiver Address | The destination email address |
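Before you enter these values, it can help to confirm that the SMTP account works outside DataWorks. The sketch below builds a test message and defines a sender that uses Python's standard `smtplib`. It assumes the server accepts implicit TLS on port 465, which is the usual convention for that port; a provider that expects STARTTLS on another port needs `smtplib.SMTP` with `starttls()` instead. The function names and addresses are placeholders.

```python
import smtplib
from email.message import EmailMessage


def build_message(sender: str, receiver: str, subject: str, body: str) -> EmailMessage:
    """Create a plain-text test email."""
    msg = EmailMessage()
    msg["From"] = sender
    msg["To"] = receiver
    msg["Subject"] = subject
    msg.set_content(body)
    return msg


def send_test_email(
    host: str, port: int, account: str, password: str, msg: EmailMessage
) -> None:
    """Send msg over implicit TLS (assumed for port 465)."""
    with smtplib.SMTP_SSL(host, port, timeout=30) as server:
        server.login(account, password)
        server.send_message(msg)


# Building the message needs no network access; sending is left to the caller.
message = build_message(
    "sender@example.com", "receiver@example.com", "Data Push SMTP check", "test"
)
print(message["Subject"])
```

To send the message, call `send_test_email` with the same SMTP Host, SMTP Port, SMTP Account, and SMTP Password that you plan to enter in the destination.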

Push settings

Click Push Settings on the right to configure the schedule, resource group, and destination.

Schedule configuration:

| Schedule | Behavior | Example |
|---|---|---|
| Month | Runs on specified days of the month at a set time | 1st of every month at 08:00 |
| Week | Runs on specified days of the week at a set time | Every Monday at 09:00 |
| Day | Runs every day at a set time | Every day at 08:00 |
| Hour | Runs at a set hourly interval, or at specified hours and minutes | Every hour from 00:00 to 23:59; or at 00:10 and 01:10 daily |
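To reason about when a Week schedule fires next, the following sketch computes the next occurrence of a weekday and time after a given moment. It is a plain date calculation, not the DataWorks scheduler: `next_weekly_run` is a hypothetical function, time zones are ignored, and a run scheduled for exactly the current moment is assumed to roll over to the following week.

```python
from datetime import datetime, timedelta


def next_weekly_run(now: datetime, weekday: int, hour: int, minute: int) -> datetime:
    """Return the next run strictly after now.

    weekday follows datetime.weekday(): Monday is 0, Sunday is 6.
    """
    candidate = now.replace(hour=hour, minute=minute, second=0, microsecond=0)
    candidate += timedelta(days=(weekday - now.weekday()) % 7)
    if candidate <= now:
        candidate += timedelta(days=7)  # this week's slot has already passed
    return candidate


# "Every Monday at 09:00", evaluated on Tuesday 2024-01-02 at 10:00.
print(next_weekly_run(datetime(2024, 1, 2, 10, 0), weekday=0, hour=9, minute=0))
```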

Other settings:

- Timeout definition: Limits task duration. With the System Default setting, the timeout is dynamically adjusted between 3 and 7 days. Set a Custom timeout (for example, 1 hour) to terminate the task if it runs longer than that.
- Effective Date: Sets the date range during which the task is automatically scheduled. Select Permanent to run without a date limit. If you set a specific range (for example, 2024-01-01 to 2024-12-31), the task only runs within that period.
- Resource Group for Scheduling: Assign an exclusive resource group for scheduling or a serverless resource group (general-purpose). See Manage resource groups.
- Destination: Select from the destinations you created in Destination Management.

When pushing to a DingTalk webhook, add keywords in the bot's Security Settings > Custom Keywords section. The push content must contain at least one of these keywords for the push to succeed.
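A quick way to catch a missing keyword before a test push is to check the composed content against the bot's keyword list. The sketch below assumes a case-sensitive substring match, which may not be exactly how DingTalk evaluates keywords; `has_required_keyword` is a hypothetical helper.

```python
from typing import Iterable


def has_required_keyword(content: str, keywords: Iterable[str]) -> bool:
    """Return True if content contains at least one non-empty keyword."""
    return any(bool(keyword) and keyword in content for keyword in keywords)


title_and_body = "Daily sales report\n| department | revenue |"
print(has_required_keyword(title_and_body, ["sales", "alert"]))
```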

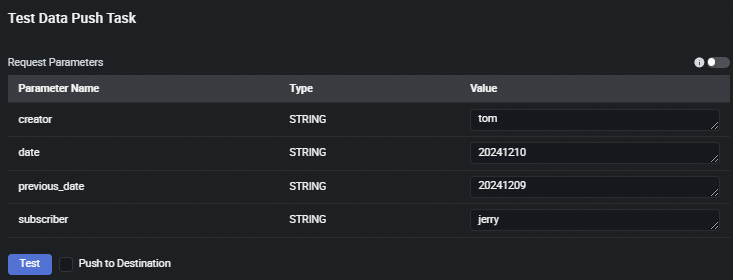

Step 4: Test the push task

- Click Save in the toolbar to save the current configuration.
- Click Test to run a test in the development environment. For variables, assign constant values manually during testing.

A push task must pass a test in the development environment before you can submit and publish it.

Testing tip: Start by testing with a dedicated test webhook or a test email address. Once the output looks correct, update the destination to your actual recipients before publishing.

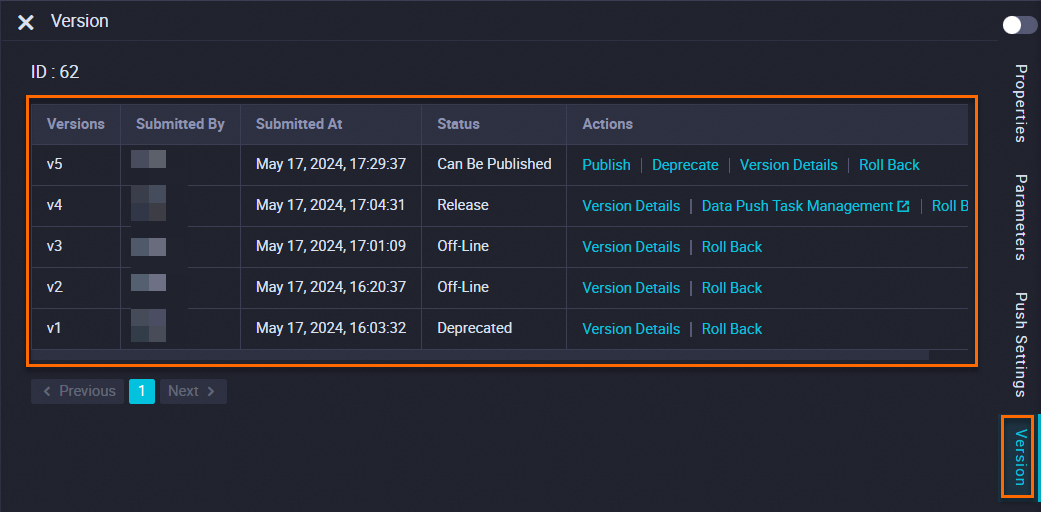

Step 5: Publish the push task

Submit and publish

- After the test passes, click Submit. Unsubmitted tasks remain as drafts and do not generate a new version.
- Submitting creates a new version. On the Version panel, find the version marked Can Be Published and click Publish. The task is published and runs on its configured schedule.

Version panel actions:

| Task status | Available actions | Description |
|---|---|---|
| Published | Data Push Task Management | Go to the task management page to view details |
| Can Be Published | Publish | Publish this version |
| Can Be Published | Deprecate | Mark this version as deprecated |
| Off-Line, Deprecated | Version Details | View the configuration and push content for this version |
| Off-Line, Deprecated | Roll back | Roll back to this version |

Version Details and Roll back are available for tasks in all statuses.

Manage published tasks

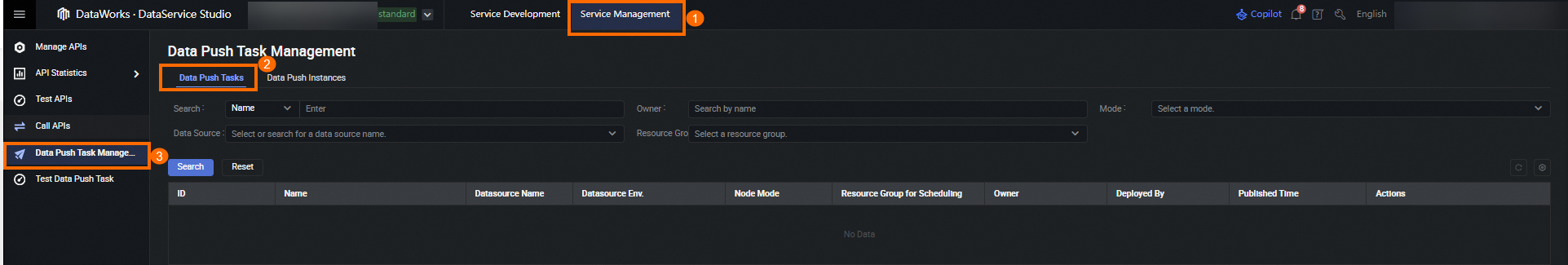

After publishing, go to the Data Push Tasks list by clicking Data Push Task Management in the Actions column of the Version panel, or by navigating to Service Management > Data Push Task Management.

The list shows all published tasks with their ID, Name, data source name, Data source env., Node Mode, Resource Group for Scheduling, Owner, publisher, and Published Time.

| Action | Description |
|---|---|
| Unpublish | Take the task offline |
| Test | Go to the Test Data Push Task page for this task |

Click the icon in the Name column to go to the Version Details page.

Test a published task

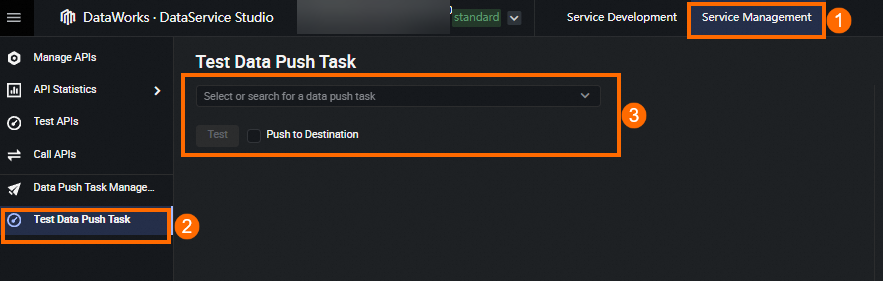

To verify that a published task pushes data correctly, go to the Test Data Push Task page:

- Option 1: Choose Service Management > Test Data Push Task.
- Option 2: Choose Service Management > Data Push Task Management > Data Push Tasks, then click Test in the Actions column.