The ODPS Spark node in DataWorks lets you schedule Spark on MaxCompute offline jobs and integrate them with other node types in a workflow. Spark on MaxCompute is compatible with open-source Spark and runs on top of MaxCompute's unified computing resource and data permission system. When run as offline jobs in DataWorks, Spark on MaxCompute jobs execute in cluster mode.

Jobs can be written in Java, Scala, or Python. For information about run modes, see Runtime modes.

Limitations

- If submission fails for an ODPS Spark node that uses Spark 3.x, purchase and use a Serverless Resource Group. For more information, see Use serverless resource groups.
- ODPS Spark nodes cannot be run directly from the Data Development page. To run a job, go to Operation Center and trigger a Data Backfill instance.

Prerequisites

Before you begin, ensure that you have completed the setup for your development language.

Java/Scala

- A Linux or Windows development environment. For setup instructions, see Set up a Linux development environment or Set up a Windows development environment.
- Spark on MaxCompute code developed locally. Use the sample project template to get started.
- The packaged JAR uploaded to DataWorks as a MaxCompute resource. See Create and use MaxCompute resources.

Python (default environment)

- A Python resource containing your PySpark code, uploaded to DataWorks. See Create and use MaxCompute resources and Develop a Spark on MaxCompute application by using PySpark.

The default Python environment has limited support for third-party packages. If your job requires additional dependencies, use a custom Python environment (see below), or switch to the PyODPS 2 node or PyODPS 3 node.

Python (custom environment)

- A local Python environment configured per the PySpark Python version and dependency support requirements.
- The environment compressed into a ZIP package and uploaded to DataWorks as a MaxCompute resource. This package provides the execution environment for the job. See Create and use MaxCompute resources.
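
The compression step can be scripted. The following is a minimal sketch that uses only the Python standard library to zip a local environment directory. The function name `zip_environment` and the directory name `python-env` are hypothetical, and the layout that the runtime expects inside the archive is an assumption here; confirm it against the PySpark Python version and dependency support requirements.

```python
import shutil
from pathlib import Path


def zip_environment(env_dir, archive_stem):
    """Compress env_dir into <archive_stem>.zip and return the archive path.

    Both arguments are illustrative; use the directory and file name
    that your own project requires.
    """
    env_path = Path(env_dir).resolve()
    if not env_path.is_dir():
        raise FileNotFoundError("environment directory not found: %s" % env_path)
    # root_dir is the parent and base_dir is the folder name, so the archive
    # keeps the top-level folder (for example python-env/bin/python).
    archive = shutil.make_archive(
        str(Path(archive_stem).resolve()),
        "zip",
        root_dir=str(env_path.parent),
        base_dir=env_path.name,
    )
    return Path(archive)


# Example call (hypothetical names):
# zip_environment("python-env", "python-env")  # writes python-env.zip
```

The resulting ZIP file is what you upload to DataWorks as a MaxCompute resource.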

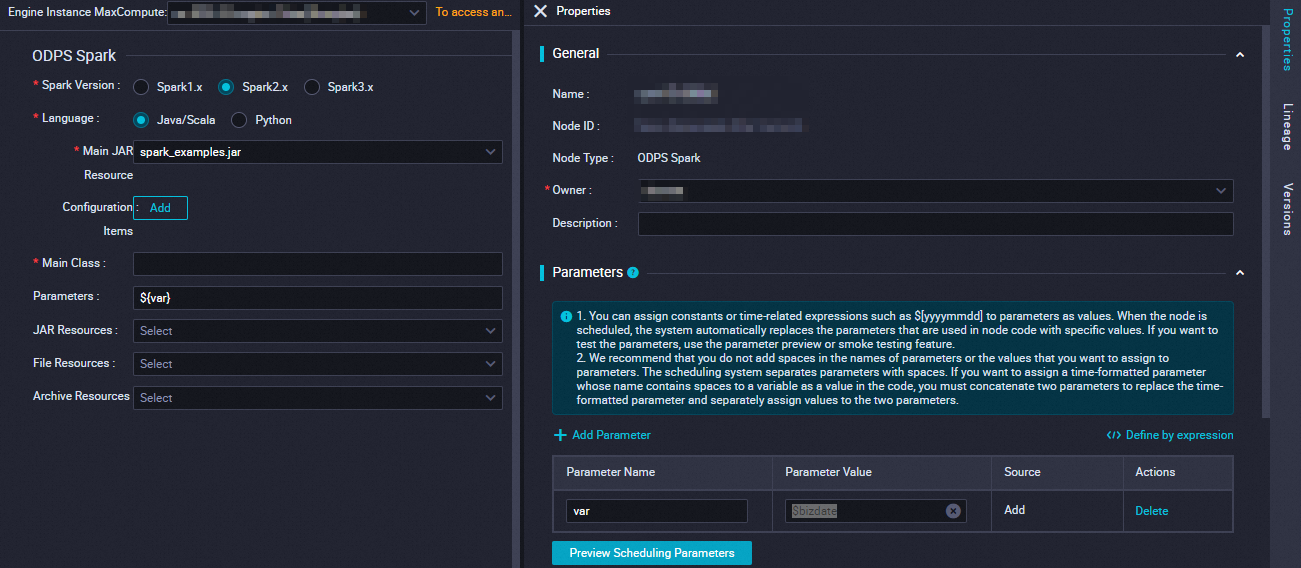

Configure the node

DataWorks runs Spark on MaxCompute offline jobs in cluster mode. In this mode, you must specify a custom program entry point (main). The job terminates when the main method completes and returns a success or failure status.
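
For a Python job, the entry point is the script itself. The sketch below shows one way to structure it so that the script ends with an explicit status. It assumes that a non-zero exit code is reported as a failure, and the two positional arguments (`bizdate` and `table`) are hypothetical stand-ins for whatever the job actually receives.

```python
import sys


def parse_args(argv):
    """Return (bizdate, table) from the space-separated job arguments.

    The two positional arguments are hypothetical examples.
    """
    if len(argv) != 2:
        raise ValueError("expected: <bizdate> <table>")
    bizdate, table = argv
    return bizdate, table


def run_job(bizdate, table):
    # Imported here so that argument handling can be tested
    # without a Spark installation.
    from pyspark.sql import SparkSession

    spark = SparkSession.builder.appName("entry point sketch").getOrCreate()
    try:
        # Placeholder for the real job logic.
        print("processing %s for %s" % (table, bizdate))
    finally:
        spark.stop()


def main(argv):
    """Return 0 on success and 1 on failure."""
    try:
        bizdate, table = parse_args(argv)
        run_job(bizdate, table)
    except Exception as exc:
        print("job failed: %s" % exc, file=sys.stderr)
        return 1
    return 0


# In the resource file, finish with the usual guard:
# if __name__ == "__main__":
#     sys.exit(main(sys.argv[1:]))
```

Keeping argument parsing separate from the Spark work makes it possible to check the parsing locally before the script is uploaded.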

Do not upload the spark-defaults.conf file. Instead, add each of its settings as a separate Configuration Item on the ODPS Spark node.
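
If you already have a spark-defaults.conf file, a small script can list the settings to enter one by one. This is a sketch only: `parse_spark_defaults` is a hypothetical helper, the sample values are illustrative, and it assumes that keys and values are separated by whitespace or `=`.

```python
import re


def parse_spark_defaults(text):
    """Return (key, value) pairs from spark-defaults.conf content.

    Blank lines and lines starting with '#' are skipped. Keys and values
    may be separated by whitespace or '='.
    """
    items = []
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        # Split once, at the first '=' or run of whitespace.
        parts = re.split(r"\s*=\s*|\s+", line, maxsplit=1)
        key = parts[0]
        value = parts[1] if len(parts) == 2 else ""
        items.append((key, value))
    return items


SAMPLE = """\
# Resource settings (values are illustrative)
spark.executor.instances 2
spark.executor.memory 4g
spark.hadoop.odps.runtime.end.point = <your-runtime-endpoint>
"""

for key, value in parse_spark_defaults(SAMPLE):
    print("%s = %s" % (key, value))
```

Each printed pair corresponds to one Configuration Item on the node.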

| Parameter | Description | spark-submit equivalent |
|---|---|---|
| Spark version | Available options: Spark 1.x, Spark 2.x, Spark 3.x. If submission fails for a Spark 3.x node, purchase and use a Serverless Resource Group. | N/A (UI only) |
| Language | Select Java/Scala or Python based on the development language of your job. | N/A (UI only) |
| Select main resource | The main JAR resource or Python resource for the job. Upload and commit the resource file to DataWorks before selecting it. See Create and use MaxCompute resources. | app jar or Python file |
| Configuration Item | Configuration items for submitting the job. The following items are auto-configured from the MaxCompute project and do not need to be set unless you want to override them: spark.hadoop.odps.access.id, spark.hadoop.odps.access.key, and spark.hadoop.odps.end.point. Add each setting from spark-defaults.conf separately here, for example, the number of executor instances, memory, and spark.hadoop.odps.runtime.end.point. | --conf PROP=VALUE |
| Main Class | The main class name. Required for Java/Scala jobs only. | --class CLASS_NAME |
| Arguments | Arguments for the job, separated by spaces. Supports scheduling parameters in the format ${variable_name}. After setting an argument, assign its value in Scheduling Configuration > Parameters. For supported formats, see Supported formats for scheduling parameters. | [app arguments] |
| Select other resources | Additional resources for the job. Upload and commit resource files to DataWorks before selecting them. See Create and use MaxCompute resources. Options: JAR resources (--jars JARS, Java/Scala only), Python resources (--py-files PY_FILES, Python only), file resources (--files FILES), archive resources (--archives ARCHIVES, ZIP format only). | Varies by resource type |
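
To make the mapping concrete, the sketch below assembles the spark-submit command line that corresponds to a set of node settings. DataWorks submits the job for you, so this is purely illustrative: `spark_submit_equivalent` is a hypothetical helper, and the resource names and values in the example are made up.

```python
import shlex


def spark_submit_equivalent(main_resource, conf=None, main_class=None,
                            arguments="", jars=(), py_files=(), files=(),
                            archives=()):
    """Build the spark-submit argument list that mirrors the node fields."""
    cmd = ["spark-submit"]
    # Configuration Item -> --conf PROP=VALUE
    for key, value in (conf or {}).items():
        cmd += ["--conf", "%s=%s" % (key, value)]
    # Main Class -> --class CLASS_NAME (Java/Scala only)
    if main_class:
        cmd += ["--class", main_class]
    # Select other resources -> one comma-separated flag per resource type
    for flag, resources in (("--jars", jars), ("--py-files", py_files),
                            ("--files", files), ("--archives", archives)):
        if resources:
            cmd += [flag, ",".join(resources)]
    # Select main resource -> app jar or Python file
    cmd.append(main_resource)
    # Arguments -> space-separated application arguments
    cmd += arguments.split()
    return cmd


cmd = spark_submit_equivalent(
    "spark_is_number.py",
    conf={"spark.executor.instances": "2", "spark.executor.memory": "4g"},
    arguments="20240101 my_table",
)
print(shlex.join(cmd))
```

Reading the command from left to right gives the same order as the rows in the table: configuration items, main class, other resources, main resource, then arguments.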

Simple example

This example walks through checking whether a string is numeric using a PySpark job. You will:

- Create a Python resource with the PySpark script.
- Configure an ODPS Spark node to run the script.
- Trigger the job via Data Backfill in Operation Center.
- View the results in the run log.

Step 1: Create the Python resource

- On the Data Development page, create a Python resource named spark_is_number.py. For more information, see Create and use MaxCompute resources.

      # -*- coding: utf-8 -*-
      import sys
      from pyspark.sql import SparkSession

      try:
          # for python 2
          reload(sys)
          sys.setdefaultencoding('utf8')
      except:
          # python 3 not needed
          pass

      if __name__ == '__main__':
          spark = SparkSession.builder\
              .appName("spark sql")\
              .config("spark.sql.broadcastTimeout", 20 * 60)\
              .config("spark.sql.crossJoin.enabled", True)\
              .config("odps.exec.dynamic.partition.mode", "nonstrict")\
              .config("spark.sql.catalogImplementation", "odps")\
              .getOrCreate()

      def is_number(s):
          try:
              float(s)
              return True
          except ValueError:
              pass

          try:
              import unicodedata
              unicodedata.numeric(s)
              return True
          except (TypeError, ValueError):
              pass

          return False

      print(is_number('foo'))
      print(is_number('1'))
      print(is_number('1.3'))
      print(is_number('-1.37'))
      print(is_number('1e3'))

- Save and commit the resource.
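
The second `try` block in `is_number` accepts single characters that carry a Unicode numeric value but are not valid `float` literals. The standalone sketch below repeats the function without the Spark session so that this branch can be tried locally. The sample inputs are illustrative and are not part of the script above.

```python
import unicodedata


def is_number(s):
    """Same logic as in spark_is_number.py, without the Spark session."""
    try:
        float(s)
        return True
    except ValueError:
        pass
    try:
        # Succeeds only for exactly one character with a numeric value.
        unicodedata.numeric(s)
        return True
    except (TypeError, ValueError):
        pass
    return False


for sample in ["1e3", "½", "四", "四五", "foo"]:
    print(repr(sample), is_number(sample))
```

Under Python 3, `"½"` and `"四"` pass through the fallback, while `"四五"` is rejected because `unicodedata.numeric` takes a single character.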

Step 2: Configure the ODPS Spark node

In the ODPS Spark node, configure the following parameters, then save and commit the node.

| Parameter | Value |
|---|---|
| Spark version | Spark 2.x |
| Language | Python |
| Select main Python resource | spark_is_number.py |

Step 3: Run the job

Go to Operation Center for the development environment and run a Data Backfill job. For detailed instructions, see Data backfill instance O&M.

Step 4: View the results

After the Data Backfill instance completes successfully, go to its tracking URL in the run log to view the output:

    False
    True
    True
    True
    True

Advanced examples

For more examples covering different use cases:

What's next

- Scheduling: Configure rerun settings and scheduling dependencies so the node runs periodically. See Overview of task scheduling properties.
- Task debugging: Test and verify the node's code logic. See Task debugging process.
- Task deployment: Deploy all nodes after development is complete. Deployed nodes run on their configured schedule. See Deploy tasks.
- Diagnose Spark jobs: Use the Logview tool and Spark web UI to verify correct submission and execution. See Diagnose Spark Jobs.