In this tutorial, you build a data pipeline that pulls user behavior data from two sources — website access logs (via HttpFile) and basic user information (via MySQL) — syncs the data to an Object Storage Service (OSS) bucket, and creates E-MapReduce (EMR) Hive external tables to query the results. By the end, you have a scheduled workflow in DataWorks that runs automatically every day at 00:30.

Prerequisites

Before you begin, ensure that you have:

-

A prepared DataWorks environment. For details, see Prepare environments.

-

A security group rule added in the Elastic Compute Service (ECS) console for network connectivity. The rule must use port

10000of the ECS instance to connect to DataWorks, with Authorization Object set to the CIDR block of the vSwitch associated with your resource group. For details, see Add a security group rule.

Step 1: Add data sources

Add three data sources to your DataWorks workspace:

| Data source | Type | Purpose |

|---|---|---|

user_behavior_analysis_httpfile |

HttpFile | Read website access logs |

user_behavior_analysis_mysql |

MySQL | Read basic user information |

test_g |

OSS | Store the synced data |

Add the HttpFile data source

-

Log on to the DataWorks console. In the top navigation bar, select your region. In the left-side navigation pane, choose More > Management Center. Select your workspace from the drop-down list and click Go to Management Center.

-

In the left-side navigation pane of the SettingCenter page, click Data Sources.

-

In the upper-left corner of the Data Sources page, click Add Data Source. In the dialog box, click HttpFile.

-

On the Add HttpFile Data Source page, configure the following parameters:

Parameter Value Data Source Name user_behavior_analysis_httpfileData Source Description Used as the source of a batch synchronization task to access website access log test data. Read-only. URL https://dataworks-workshop-2024.oss-cn-shanghai.aliyuncs.com(same for development and production environments) -

Find the resource group you want to use. Click Test Network Connectivity in both the Connection Status (Development Environment) and Connection Status (Production Environment) columns, then confirm that Connected appears in both columns.

ImportantAt least one resource group must show Connected. Otherwise, you cannot use the codeless UI to configure a data synchronization task for this data source.

-

Click Complete Creation.

Add the MySQL data source

-

In the left-side navigation pane of the SettingCenter page, click Data Sources. In the upper-left corner, click Add Data Source.

-

In the dialog box, select MySQL.

-

On the Add MySQL Data Source page, configure the following parameters:

Parameter Value Data Source Name user_behavior_analysis_mysqlData Source Description Used as the source of a batch synchronization task to access basic user information test data. Read-only. Configuration Mode Connection String Mode Host IP Address rm-bp1z69dodhh85z9qa.mysql.rds.aliyuncs.comPort Number 3306Database Name workshopUsername workshopPassword workshop#2017Authentication Method No Authentication -

Find the resource group you want to use. Click Test Network Connectivity in both the Connection Status (Development Environment) and Connection Status (Production Environment) columns, then confirm that Connected appears in both columns.

-

Click Complete Creation.

Add the OSS data source

The MySQL data and the HttpFile access logs are both synced to a private OSS data source.

-

In the left-side navigation pane of the SettingCenter page, click Data Sources. In the upper-left corner, click Add Data Source.

-

In the dialog box, select OSS.

-

On the Add OSS Data Source page, configure the following parameters:

ImportantThe AccessKey secret is displayed only when you create it. Store it securely. If the AccessKey pair is leaked or lost, delete it and create a new AccessKey pair.

Parameter Value Data Source Name test_gData Source Description The description of the data source. Access Mode AccessKey Mode AccessKey ID Your AccessKey ID. Go to the AccessKey page to copy it. AccessKey Secret Your AccessKey secret. Endpoint http://oss-cn-shanghai-internal.aliyuncs.comBucket dw-emr-demo(the OSS bucket you created during environment preparation) -

Find the resource group you want to use. Click Test Network Connectivity in both the Connection Status (Development Environment) and Connection Status (Production Environment) columns, then confirm that Connected appears in both columns.

At least one resource group must show Connected. Otherwise, you cannot use the codeless UI to configure a data synchronization task for this data source.

-

Click Complete Creation.

Step 2: Create the workflow

-

On the Data Sources page, click the

icon in the upper-left corner and choose All Products > Data Development And Task Operation > DataStudio.

icon in the upper-left corner and choose All Products > Data Development And Task Operation > DataStudio. -

In the Scheduled Workflow pane, right-click Business Flow and select Create Workflow.

-

In the Create Workflow dialog box, set Workflow Name to

workshop_emrand click Create. -

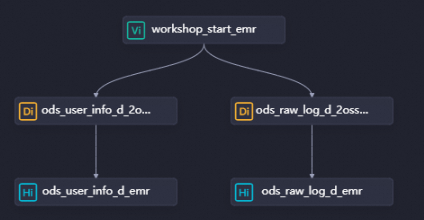

Double-click the new workflow to open the configuration tab. Create three nodes on the canvas:

-

Click Create Node and drag Zero-Load Node (in the General section) to the canvas. In the dialog box, set Name to

workshop_start_emrand click Confirm. -

Click Create Node and drag Offline synchronization (in the Data Integration section) to the canvas. Create two nodes named

ods_raw_log_d_2oss_emrandods_user_info_d_2oss_emr. These nodes sync the HttpFile access logs and MySQL user information to OSS.

-

-

Draw dependency lines from

workshop_start_emrto both batch synchronization nodes, making it the ancestor node of both.

Step 3: Configure the nodes

Configure the initial node

The zero-load node workshop_start_emr controls when the workflow runs. It triggers the entire workflow daily at 00:30.

-

In the Scheduled Workflow pane, double-click the

workshop_start_emrnode. In the right-side navigation pane, click Properties. -

Configure the scheduling properties:

Section Setting Schedule Set the scheduling time to 00:30. Set Rerun to Allow Regardless of Running Status — this lets you re-run the workflow manually without waiting for it to finish or fail.Scheduling dependencies Configure workshop_start_emras a descendant of the workspace root node (named in theWorkspace name_rootformat). The root node triggers this workflow to run. -

Click the

icon to save.

icon to save.

Configure the batch synchronization nodes

Sync MySQL user information to OSS

-

On the DataStudio page, double-click the

ods_user_info_d_2oss_emrnode. -

Set the network connections between your resource group and the data sources: After completing the connection configuration, click Next and finish the connectivity test.

Parameter Value Source MySQL / user_behavior_analysis_mysqlResource Group Your serverless resource group Destination OSS / test_g -

Configure the synchronization settings:

Parameter Value Source > Table ods_user_info_dSource > Split key uid(primary key; only INTEGER fields are supported)Destination > Text type textDestination > Object Name (Path Included) ods_user_info_d/user_${bizdate}/user_${bizdate}.txt—ods_user_info_dis the folder you created in the OSS bucket;${bizdate}resolves to the previous day's date.Destination > Column Delimiter | -

Click Properties in the right-side navigation pane and configure the scheduling settings:

Section Setting Scheduling Parameter Click Add Parameter. Set Parameter Name to bizdateand Parameter Value to$[yyyymmdd-1]. This passes the previous day's date to the sync task at run time.Schedule Set Rerun to Allow Regardless of Running Status. Dependencies Make sure the output table is set to this node. The output table name follows the Workspace name.Node nameformat. -

Click the

icon to save.

icon to save.

Sync HttpFile access logs to OSS

-

On the DataStudio page, double-click the

ods_raw_log_d_2oss_emrnode. -

Set the network connections between your resource group and the data sources: After completing the connection configuration, click Next and finish the connectivity test.

Parameter Value Source HttpFile / user_behavior_analysis_httpfileResource Group Your serverless resource group Destination OSS / test_g -

Configure the synchronization settings:

Parameter Value Source > File Path /user_log.txtSource > Text type textSource > Column Delimiter |Source > Compression format None Source > Skip Header No Destination > Text type textDestination > Object Name (Path Included) ods_raw_log_d/log_${bizdate}/log_${bizdate}.txt—ods_raw_log_dis the folder you created in the OSS bucket;${bizdate}resolves to the previous day's date.Destination > Column Delimiter | -

Click Properties in the right-side navigation pane and configure the scheduling settings:

Section Setting Scheduling Parameter Click Add Parameter. Set Parameter Name to bizdateand Parameter Value to$[yyyymmdd-1]. This passes the previous day's date to the sync task at run time.Schedule Set Rerun to Allow Regardless of Running Status. Dependencies Make sure the output table is set to this node. The output table name follows the Workspace name.Node nameformat. -

Click the

icon to save.

icon to save.

Create EMR Hive tables

Create two EMR Hive external tables — ods_user_info_d_emr and ods_raw_log_d_emr — that point to the synced data in OSS. These tables let you query the synchronized data directly.

-

In the Scheduled Workflow pane, click the

workshop_emrworkflow, right-click EMR, and then choose Create Node > EMR Hive. -

Create two nodes:

ods_user_info_d_emrandods_raw_log_d_emr. Then draw dependency lines on the workflow configuration tab so both EMR Hive nodes depend on the corresponding batch synchronization nodes.

-

Configure the

ods_user_info_d_emrnode:-

Double-click the node. On the configuration tab, enter the following SQL:

CREATE EXTERNAL TABLE IF NOT EXISTS ods_user_info_d_emr ( `uid` STRING COMMENT 'The user ID', `gender` STRING COMMENT 'The gender', `age_range` STRING COMMENT 'The age range', `zodiac` STRING COMMENT 'The zodiac sign' ) PARTITIONED BY ( dt STRING ) ROW FORMAT delimited fields terminated by '|' LOCATION 'oss://dw-emr-demo/ods_user_info_d/'; ALTER TABLE ods_user_info_d_emr ADD IF NOT EXISTS PARTITION (dt='${bizdate}') LOCATION 'oss://dw-emr-demo/ods_user_info_d/user_${bizdate}/';NoteThe

LOCATIONpath matches the Object Name (Path Included) value you set in theods_user_info_d_2oss_emrsynchronization node.dw-emr-demois the OSS bucket you created during environment preparation. -

Click Properties and configure the scheduling settings:

Section Setting Scheduling Parameter Set Parameter Name to bizdate, Parameter Value to$[yyyymmdd-1].Schedule Set Rerun to Allow Regardless of Running Status. Dependencies Set the output table to this node. The output table name follows the Workspace name.Node nameformat.Resource Group Select your serverless resource group. -

Click the

icon to save and run the node.

icon to save and run the node.

-

-

Configure the

ods_raw_log_d_emrnode:-

Double-click the node. On the configuration tab, enter the following SQL:

-- Create the table used to store website access logs. CREATE EXTERNAL TABLE IF NOT EXISTS ods_raw_log_d_emr ( `col` STRING ) PARTITIONED BY ( dt STRING ); ALTER TABLE ods_raw_log_d_emr ADD IF NOT EXISTS PARTITION (dt='${bizdate}') LOCATION 'oss://dw-emr-demo/ods_raw_log_d/log_${bizdate}/';NoteThe

LOCATIONpath matches the Object Name (Path Included) value you set in theods_raw_log_d_2oss_emrsynchronization node.dw-emr-demois the OSS bucket you created during environment preparation. -

Click Properties and configure the scheduling settings:

Section Setting Scheduling Parameter Set Parameter Name to bizdate, Parameter Value to$[yyyymmdd-1].Schedule Set Rerun to Allow Regardless of Running Status. Dependencies Set the output table to this node. The output table name follows the Workspace name.Node nameformat.Resource Group Select your serverless resource group. -

Click the

icon to save and run the node.

icon to save and run the node.

-

Step 4: Run the workflow and verify results

Run the workflow

On the DataStudio page, double-click the workshop_emr workflow under Business Flow. On the workflow configuration tab, click the ![]() icon in the toolbar to run all nodes in dependency order.

icon in the toolbar to run all nodes in dependency order.

Check node status

-

Nodes in the

state are running normally.

state are running normally. -

If a node shows

and the error

and the error "java.net.ConnectException: Connection timed out (Connection timed out)"appears, add a security group rule in the ECS console. Use port10000of your ECS instance to connect to DataWorks, and set Authorization Object to the CIDR block of the vSwitch associated with your resource group. To find the vSwitch CIDR block, go to the Resource Groups page, find your resource group, click Network Settings, and check the VPC Binding tab. For details, see Add a security group rule.

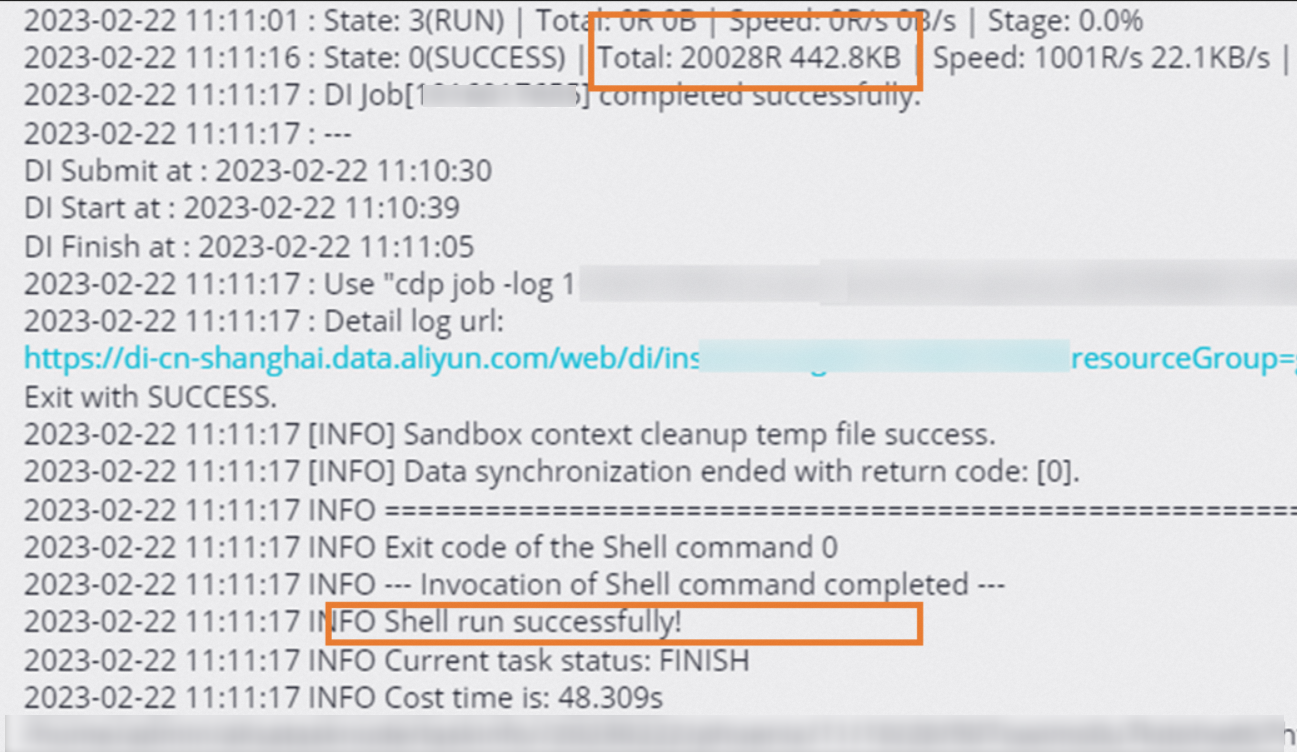

Verify the sync results

To confirm data was written successfully, right-click the ods_user_info_d_emr or ods_raw_log_d_emr node and select View Logs. If the sync completed, the logs show a result similar to:

Then create an ad hoc query to count the imported records.

-

In the left-side navigation pane of the DataStudio page, click the

icon. In the Ad Hoc Query pane, right-click Ad Hoc Query and choose Create Node > EMR Hive.

icon. In the Ad Hoc Query pane, right-click Ad Hoc Query and choose Create Node > EMR Hive. -

Run the following queries to confirm the record counts. Replace

Data timestampwith the actual partition date — if the workflow ran on November 7, 2019, the data timestamp is20191106(one day before).SELECT * FROM ods_user_info_d_emr WHERE dt = Data timestamp; SELECT * FROM ods_raw_log_d_emr WHERE dt = Data timestamp;

What's next

With data collection complete, proceed to the next tutorial to compute and analyze the synced data. See Process data.